Anthropic Accidentally Leaks Entire Claude Code Source Code Online

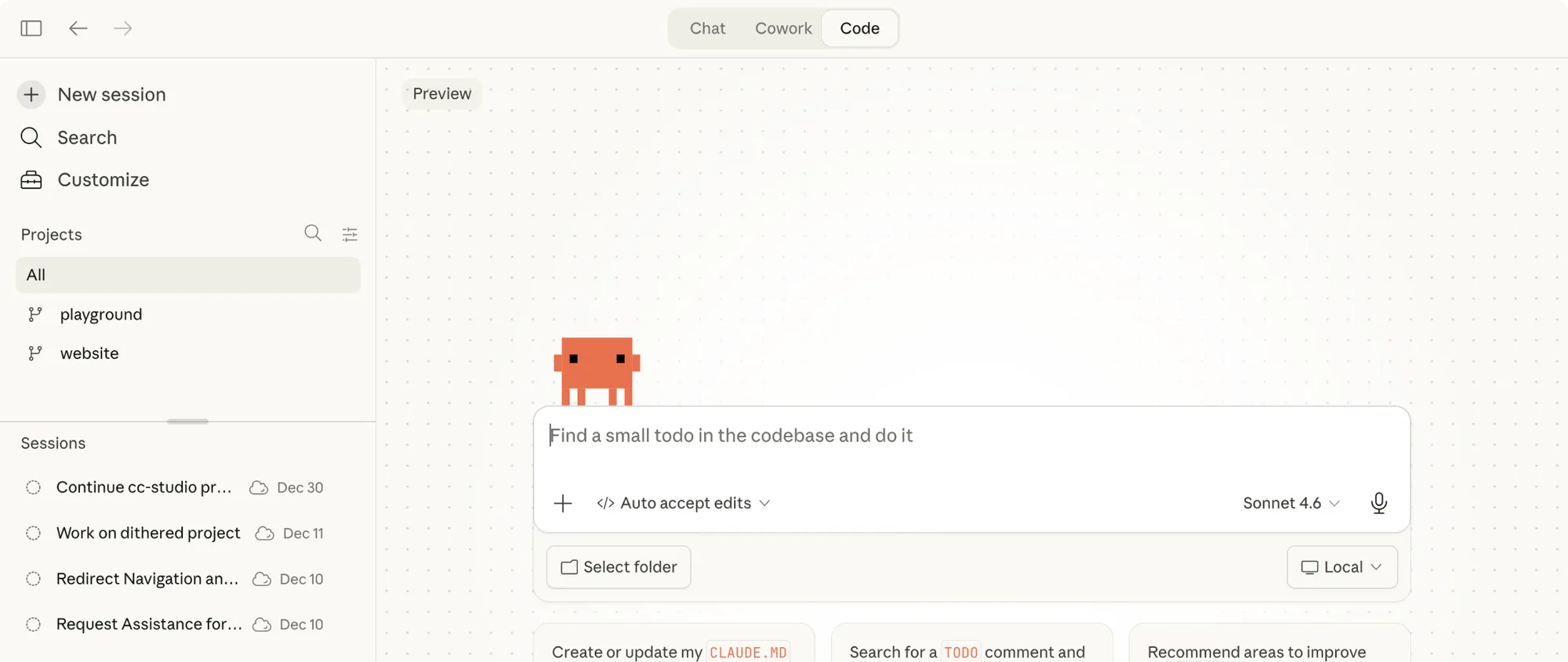

At the end of March 2026, Anthropic made an embarrassing mistake: the complete source code of its AI tool Claude Code accidentally ended up on the internet. The culprit was a single file that was misconfigured when the program was published. We explain here in plain terms exactly what happened, what the code reveals, and what it means.

Anthropic publishes Claude Code as a package in the npm registry, a public directory for software packages. When a new version was uploaded, a source map was accidentally included. This is a technical auxiliary file that is not normally intended for the public. The file was 59.8 megabytes in size and contained the complete, readable original code of the tool.

A security researcher named Chaofan Shou discovered the problem and made it public. Within a few hours, complete copies of the code were circulating on GitHub. Anthropic responded quickly, removed the affected version, and replaced it with a cleaned-up variant. However, the company was no longer able to undo the damage.

Professional software is usually heavily compressed and obfuscated before release so that it runs faster and is harder to read. Source maps are files that can reverse this process. They show what the original, easily readable code looked like. For developers they are useful during testing, but they have no place in finished products.
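For readers who want to see why a source map can expose original code, here is a minimal sketch in Python. It assumes the widely used version 3 source map format, in which a JSON file lists the original file paths under `sources` and may embed their full text under `sourcesContent`. The function name `extract_sources` and the tiny example map are hypothetical and only serve as an illustration; they are not taken from the leaked package.

```python
import json


def extract_sources(source_map_text: str) -> dict[str, str]:
    """Return {original path: original source text} from a v3 source map.

    Only files whose text is embedded in "sourcesContent" can be recovered.
    """
    source_map = json.loads(source_map_text)
    paths = source_map.get("sources", [])
    # "sourcesContent" is optional; treat a missing or null field as empty.
    contents = source_map.get("sourcesContent") or []

    recovered = {}
    for path, content in zip(paths, contents):
        if content is not None:  # individual entries may be null
            recovered[path] = content
    return recovered


# Hypothetical, tiny source map used purely for illustration.
example_map = json.dumps({
    "version": 3,
    "file": "cli.min.js",
    "sources": ["src/greet.ts"],
    "sourcesContent": [
        "export function greet(name: string) {\n"
        "  return `Hello, ${name}`;\n"
        "}\n"
    ],
    "names": [],
    "mappings": "",
})

recovered = extract_sources(example_map)
for path, text in recovered.items():
    print(f"--- {path} ---")
    print(text)
```

If the embedded text is present, no decoding of the compressed program is needed at all: the readable original simply sits in the file as plain text. A source map without `sourcesContent` only points to file names and positions and reveals far less.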

The leaked material comprises around 1,900 files with over 512,000 lines of code. A look inside reveals some interesting details.

A section of the code referred to as "Undercover Mode" has attracted particular attention. According to its description, it would allow Claude Code to contribute to public open-source projects without disclosing its AI origin.

This raises serious questions. Open-source communities are built on transparency and mutual trust. If AI contributions are not identifiable as such, this trust is undermined. At the same time, there is a risk that other companies will develop similar features while being less careful in doing so.

For people currently using Claude Code, there is no immediate risk based on what is known so far. According to Anthropic, no access credentials or personal data were compromised. The company described the incident as human error, not a hacker attack.

The medium-term risk lies elsewhere: anyone who knows the code can target future versions of the tool more precisely. So far, Anthropic has not commented beyond an initial confirmation of the incident. The case is a striking illustration of how a single small mistake when publishing software can have far-reaching consequences.