Anthropic quietly nerfed Claude Code's 1-hour cache, and your token budget is paying the price

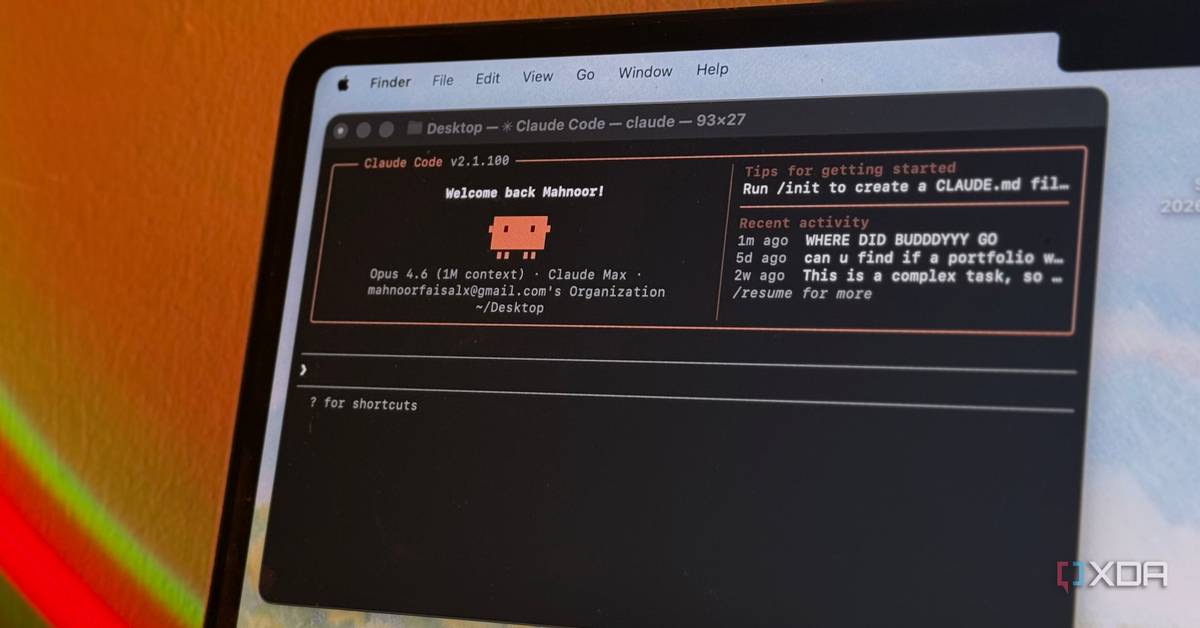

Claude Code has become the default agentic coding tool for a lot of developers, and for good reason. It understands a codebase, calls tools, edits files, and can plan multi-step tasks with very little handholding. I know many who use it daily, and for those using a Claude subscription, it's the only permissible coding harness.

But something changed over the past couple of weeks, and if you've been burning through your token quota faster than you used to, as many users say they are, there's a likely culprit. Beginning in early April, Anthropic switched Claude Code's default prompt cache time-to-live from one hour down to five minutes, and the switch landed for different users on different days. There wasn't an announcement; the docs were quietly updated to say that five-minute caching is now the default in the API.

For what it's worth, I've mostly rolled my eyes at the doom and gloom claiming that Opus is getting dumber and that usage limits are getting worse. After all, you could verify that Opus 4.6, for example, was performing more or less the same on independent benchmarks that were run every single day. The caching issue, however, is a very real problem. I can see the fingerprints of the change inside my own Claude Code instance: my data shows the switch came on April 17th, with subagents switching over in late March. Another XDA writer still has an hour-long cache, so it's clear that the change hasn't rolled out to everyone yet.

You can find the switch in your session logs

All of your conversations contain this data

Claude Code writes session logs to "~/.claude/projects/" as JSONL files, and every response from the API includes a "usage.cache_creation" object with two relevant fields: "ephemeral_5m_input_tokens" and "ephemeral_1h_input_tokens". Only one of those is ever non-zero on any given turn, so it's easy to tell which tier your session is hitting, and Anthropic can't hide this number because it's part of the public API spec.
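As a minimal sketch of that check, the Python below classifies a single log line by tier. The two field names come from the logs described above; where exactly the "cache_creation" object sits inside each JSONL record is an assumption, so the helper searches for the key at any depth instead of relying on a fixed path. The function names and the sample line are hypothetical.

```python
import json
from typing import Iterator, List, Optional


def find_cache_creation(node) -> Iterator[dict]:
    """Yield every dict stored under a "cache_creation" key, at any depth."""
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "cache_creation" and isinstance(value, dict):
                yield value
            else:
                yield from find_cache_creation(value)
    elif isinstance(node, list):
        for item in node:
            yield from find_cache_creation(item)


def cache_tier(cache_creation: dict) -> Optional[str]:
    """Return "5m", "1h", "mixed", or None when no cache write was recorded."""
    five = cache_creation.get("ephemeral_5m_input_tokens", 0) or 0
    hour = cache_creation.get("ephemeral_1h_input_tokens", 0) or 0
    if five and hour:
        return "mixed"
    if five:
        return "5m"
    if hour:
        return "1h"
    return None


def tiers_in_line(line: str) -> List[Optional[str]]:
    """Classify every cache_creation object found in one JSONL line."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError:
        return []  # skip lines that are not valid JSON
    return [cache_tier(c) for c in find_cache_creation(record)]


# Hypothetical log line; the nesting is an assumption and real records
# carry many more fields.
sample = json.dumps({
    "message": {"usage": {"cache_creation": {
        "ephemeral_5m_input_tokens": 1200,
        "ephemeral_1h_input_tokens": 0,
    }}}
})
print(tiers_in_line(sample))  # ['5m']
```

Searching by key name keeps the sketch usable even if the record layout differs from the one assumed in the sample.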

One user on the r/ClaudeAI subreddit ran the numbers across 1,140 sessions in their local conversation database, plotted the tier distribution by date, and built a chart that shows the problem in a way that's hard to argue against. From March 1st through to April 1st, their logs showed 100% usage of the "ephemeral_1h" cache type. On April 2nd, between roughly 06:23 and 06:55 UTC, there's a mix, with 491 turns on five minutes and 644 turns on one hour. Finally, from April 3rd to the present day, it's 100% the "ephemeral_5m" cache type. It's clear a switch was flipped around this time.

To Anthropic's credit, it did eventually respond... sort of. It was on a single GitHub issue on April 12th, almost ten days after this Reddit user reported that the switch was flipped on their end. The next day, Boris Cherny, creator of Claude Code, replied to a query on social media about it, stating that a one-hour cache has been implemented in some places for subscribers, though he didn't mention where those places are, while also framing a five-minute cache as the true default. It's possible Anthropic replied elsewhere, too, but it doesn't really matter; the messaging around this feature has been terrible, and only the users who notice the change get it confirmed to them, not everyone who uses the tool.

In that GitHub thread that was opened in the Claude Code repository, Jarred Sumner from Anthropic said that the shorter cache window actually makes Claude Code cheaper in aggregate, because "a meaningful share of Claude Code's requests are one-shot calls where the cached context is used once and not revisited." That framing might hold for someone who opens Claude Code to ask a single question and then closes it. For anyone doing long-context agentic work in a large codebase, it doesn't.

As for Cherny's post, the framing is technically defensible, but it has a convenient rhetorical property: it lets the downgrade be described as a revert to default rather than as taking something away. For a user who had consistent hour-long cache behavior for weeks or months and then suddenly didn't, the distinction between a downgrade and a revert doesn't really matter, as the service they were receiving got worse on a specific date without any notice. Users have also been posting charts showing clean 1h-to-5m transitions, but nobody is posting the reverse. In a genuine A/B test with gates flipping in both directions, you'd expect roughly symmetric observations.

This one-way drift is more consistent with a treatment group being wound down than with heuristics being iterated on, even if Cherny's point that an hour-long cache isn't universally better is fair on its own terms. However, saying that five minutes was always the default isn't exactly a satisfying explanation, even if Claude Code's own defaults will use a five-minute cache unless told otherwise by Anthropic's servers. When Anthropic's servers consistently tell it to use an hour-long cache, overriding that "default" behavior, the hour-long cache becomes the default in practice.

Every cache bust is a full rebuild, and it adds up fast

You use way more tokens without a cache in place

The whole point of prompt caching is that you pay once to "write" your context into a cache, at a premium over the base input price. Then, subsequent turns read from that cache at a small fraction of the base input price. To give you an idea of just how much cheaper it is, here are the costs for Anthropic's newly-released Claude Opus 4.7:

| Model | Base Input Tokens | 5m Cache Writes | 1h Cache Writes | Cache Hits & Refreshes | Output Tokens |
| --- | --- | --- | --- | --- | --- |
| Claude Opus 4.7 | $5 / MTok | $6.25 / MTok | $10 / MTok | $0.50 / MTok | $25 / MTok |

In other words, you spend a small bit more to write to the cache, but then you spend a whole lot less for every token that's read from the cache instead of being freshly input again. This is also why other coding harnesses have to get caching right for a lot of different providers, as otherwise, it drives up inference costs for the user and adds to server load for the company providing it.

If you're a developer calling Anthropic's API directly, the 5-minute write is the cheaper of the two tiers, and you can pick whichever suits your traffic. If you're someone with a Claude Code subscription, or you're using the API but you just want to use Claude Code anyway, you don't get a choice, and you've been downgraded from an hour-long cache to just five minutes. This means that when the cache expires, your next turn has to rebuild the entire context from scratch at the write rate, not the read rate. That's a cache bust.
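To put a rough number on a single cache bust, here is a small worked example using the Claude Opus 4.7 prices listed above. The 200,000-token context size is a hypothetical figure chosen for illustration, and the helper name is made up.

```python
# Prices in USD per million tokens, taken from the Opus 4.7 pricing above.
WRITE_5M = 6.25
WRITE_1H = 10.00
CACHE_READ = 0.50


def cost_usd(tokens: int, price_per_mtok: float) -> float:
    """Cost of processing `tokens` input tokens at a given per-MTok price."""
    return tokens / 1_000_000 * price_per_mtok


context_tokens = 200_000  # hypothetical conversation size

hit = cost_usd(context_tokens, CACHE_READ)    # cache still warm
bust_5m = cost_usd(context_tokens, WRITE_5M)  # expired, rewritten at 5m rate
bust_1h = cost_usd(context_tokens, WRITE_1H)  # expired, rewritten at 1h rate

print(f"cache hit:       ${hit:.2f}")
print(f"bust (5m write): ${bust_5m:.2f}")
print(f"bust (1h write): ${bust_1h:.2f}")
print(f"a 5m bust costs {bust_5m / hit:.1f}x a cache hit")
```

Under these assumptions, a turn that would have cost about ten cents as a cache read costs $1.25 after a bust, so whether a turn hits or misses the cache matters far more than which write tier is in use.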

Before April 2, the Reddit user we talked about averaged 39 cache busts per day and triggered $6.28 in costs as a result. After April 2, it was 199 busts per day and $15.54 in daily cost. The per-bust cost actually went down, because 1-hour writes cost more than 5-minute ones, but the bust frequency multiplied by a factor of five, and that's enough to overwhelm the per-unit savings. Their projected monthly difference from this one change came out to $277.80.
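The arithmetic behind those figures can be reproduced in a few lines. The daily numbers are the ones quoted above; the 30-day month is an assumption, chosen because it reproduces the $277.80 projection.

```python
# Daily figures quoted from the Reddit user's analysis.
busts_before, cost_before = 39, 6.28
busts_after, cost_after = 199, 15.54

per_bust_before = cost_before / busts_before   # average cost of one bust, before
per_bust_after = cost_after / busts_after      # average cost of one bust, after
frequency_ratio = busts_after / busts_before   # how much more often busts occur

DAYS_PER_MONTH = 30  # assumption: matches the quoted monthly projection
monthly_difference = (cost_after - cost_before) * DAYS_PER_MONTH

print(f"per-bust cost before: ${per_bust_before:.3f}")
print(f"per-bust cost after:  ${per_bust_after:.3f}")
print(f"bust frequency ratio: {frequency_ratio:.2f}x")
print(f"monthly difference:   ${monthly_difference:.2f}")
```

The per-bust cost roughly halves, from about 16 cents to about 8 cents, but busts become about 5.1 times more frequent, which is why the daily total still rises.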

And it's not just API users who are feeling the pain. On subscription plans, users get a "usage" meter that shows how much they've used in a given five-hour window, and how much they have left for the week. If you're busting the cache frequently, you'll be consuming your quota at a significantly higher rate. Plus, with Opus now enabling a million-token context by default, taking a five-minute break from a conversation with, say, half a million tokens will consume all 500,000 tokens in one go when you return to the chat.

If you want to get around that particular pain point, you can go back to a smaller context window by exporting "CLAUDE_CODE_DISABLE_1M_CONTEXT=1" in your .bashrc or .zshrc. This caps your context at 200k instead of the full million. Claude Code will also warn you by default if you're resuming a big conversation with the "/resume" command and will suggest compacting it first, but otherwise, there's no indication that going away and coming back a few minutes later to the same Claude Code instance will significantly drain your usage.

What really caught my eye about this is what happens to your conversation when it comes to sub-agents and backgrounded tasks. Claude Code backgrounds long tool calls and agent dispatches, which are exactly the kind of operations that often take more than five minutes to complete. If such a task returns after the five-minute window has passed, your cache has expired, and the next turn has to rebuild context you already had before the task started. Anything running with "/loop" or "/schedule" set to intervals over five minutes falls into the same trap.
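To illustrate how gaps between turns translate into busts, here is a small simulation. It assumes that every turn, whether a cache hit or a rebuild, restarts the TTL clock, so only the gap since the previous turn matters. The function name and the session timeline are hypothetical.

```python
from typing import Sequence


def count_busts(turn_times: Sequence[float], ttl_seconds: float) -> int:
    """Count turns that arrive after the cache has expired.

    Assumption: each turn refreshes the cache, so a bust happens only when
    the gap since the previous turn is longer than the TTL.
    """
    busts = 0
    for previous, current in zip(turn_times, turn_times[1:]):
        if current - previous > ttl_seconds:
            busts += 1
    return busts


# Hypothetical session, in seconds since the first turn. It includes a
# 7-minute background task, a 15-minute break, and a 64-minute break.
turns = [0, 60, 120, 540, 600, 1500, 1560, 5400]

print("busts with a 5-minute TTL:", count_busts(turns, 300))   # 3
print("busts with a 1-hour TTL:  ", count_busts(turns, 3600))  # 1
```

In this made-up timeline, the seven-minute background task and the fifteen-minute break only bust the cache under the five-minute TTL; the hour-long cache survives both.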

You can check your own instance

See when the change happened for you

The easiest check is to dig into the JSONL logs yourself. Pull up any recent session file under "~/.claude/projects/", grep for "cache_creation", and look at whether "ephemeral_5m_input_tokens" or "ephemeral_1h_input_tokens" is the one with a value. If 5m is populated, you're using the shorter cache. The grep command you can use is this:

grep -r "cache_creation" ~/.claude/projects/

Several people in the community have also written short scripts that process the JSONLs and produce daily breakdowns, and you can use one of them inside the "/get-token-insights" skill in the claude-memory plugin.
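If you'd rather roll your own, a daily breakdown can be sketched in a short Python script. This is not one of the community scripts mentioned above. It assumes each JSONL record carries a top-level ISO 8601 "timestamp" string (records without one are grouped under "unknown") and it searches for "cache_creation" at any depth rather than assuming a fixed record layout. All function names are hypothetical.

```python
import json
from collections import defaultdict
from pathlib import Path


def iter_cache_creation(node):
    """Yield every dict stored under a "cache_creation" key, at any depth."""
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "cache_creation" and isinstance(value, dict):
                yield value
            else:
                yield from iter_cache_creation(value)
    elif isinstance(node, list):
        for item in node:
            yield from iter_cache_creation(item)


def daily_breakdown(lines):
    """Sum 5m and 1h cache-write tokens per date (YYYY-MM-DD)."""
    totals = defaultdict(lambda: {"5m": 0, "1h": 0})
    for line in lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip partial or corrupt lines
        if not isinstance(record, dict):
            continue
        # Assumption: top-level ISO 8601 timestamp, e.g. "2026-04-02T06:23:00Z".
        day = str(record.get("timestamp", ""))[:10] or "unknown"
        for cc in iter_cache_creation(record):
            totals[day]["5m"] += cc.get("ephemeral_5m_input_tokens", 0) or 0
            totals[day]["1h"] += cc.get("ephemeral_1h_input_tokens", 0) or 0
    return dict(totals)


def read_session_lines(root: Path):
    """Stream every line from every .jsonl file under root."""
    for path in sorted(root.rglob("*.jsonl")):
        with path.open(encoding="utf-8", errors="replace") as handle:
            yield from handle


if __name__ == "__main__":
    logs = Path.home() / ".claude" / "projects"
    if logs.is_dir():
        for day, tiers in sorted(daily_breakdown(read_session_lines(logs)).items()):
            print(day, tiers)
```

A day where the "1h" total drops to zero and the "5m" total takes over marks the date the change reached your account.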

There's also a subtler piece of evidence baked into Claude Code itself. The ScheduleWakeup tool that Claude Code uses internally for timed pauses carries its own documentation, and that documentation explicitly states "The Anthropic prompt cache has a 5-minute TTL. Sleeping past 300 seconds means the next wake-up reads your full conversation context uncached -- slower and more expensive." That's Anthropic's own tool description treating five minutes as the baseline assumption. Nobody asked you to opt in when the change was made, and the five-minute window is just the new default, quietly assumed.

To be clear, I'm not accusing Anthropic of doing this maliciously. There are probably real reasons for the change that have to do with the shape of Claude Code's aggregate traffic, and Jarred's comment about one-shot requests is plausible if you treat the entire user base as an average. But the people paying $200 a month to run agentic workflows are not one-shot users, and they're the ones hitting limits in the last couple of weeks that they never would have hit in March. A default change that affects billing behavior this significantly deserves a changelog at a minimum, and probably a settings toggle for the users it hits hardest.

It shouldn't take reverse engineering the client to figure out why things suddenly feel different, and the fact that community members, not Anthropic, did the work to find that out and communicate it to end users says a lot.