Anthropic's Project Glasswing to preempt AI-driven cyberattacks

Anthropic last week announced Project Glasswing, in partnership with Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. Anthropic's Claude Mythos Preview model revealed that "AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities," the company stated in a press release.

Mythos Preview found thousands of high-severity vulnerabilities, including some in every major operating system and web browser. The vulnerabilities it has spotted have, in some cases, survived decades of human review and millions of automated security tests, and the exploits it develops are increasingly sophisticated, Anthropic said. The model found a 27-year-old flaw in OpenBSD and a 16-year-old bug in FFmpeg. Mythos can autonomously chain multiple vulnerabilities together to create complex "zero-day" exploit chains with minimal human interaction. Project Glasswing will attempt to use these capabilities for defensive purposes.

"The window between a vulnerability being discovered and being exploited by an adversary has collapsed -- what once took months now happens in minutes with AI," said Elia Zaitsev, chief technology officer at CrowdStrike. "Claude Mythos Preview demonstrates what is now possible for defenders at scale, and adversaries will inevitably look to exploit the same capabilities. That is not a reason to slow down; it's a reason to move together, faster. If you want to deploy AI, you need security."

Leveraging agentic reasoning for autonomous vulnerability discovery

As part of the project, the launch partners will use Mythos Preview for defensive security work. Anthropic has also extended access to over 40 additional organizations that build or maintain critical software infrastructure, enabling them to use the model to scan and secure both first-party and open-source systems. Anthropic is committing up to $100 million in usage credits for Mythos Preview across these efforts, as well as $4 million in direct donations to open-source security organizations.

Anthropic anticipates this work will focus on tasks like local vulnerability detection, black box testing of binaries, securing endpoints, and penetration testing of systems. The usage credits are expected to cover substantial usage throughout this research preview. Afterward, Claude Mythos Preview will be available to participants at $25 per million input tokens and $125 per million output tokens through the Claude API, Amazon Bedrock, Google Cloud's Vertex AI, and Microsoft Foundry.
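For a rough sense of what the post-preview pricing means in practice, the arithmetic can be sketched in a few lines of Python. This is a minimal illustration that assumes only the two per-million-token prices quoted above; the function name and the token counts in the example are hypothetical, and actual billing may include factors the article does not describe.

```python
from decimal import Decimal

# Prices quoted in the article, in US dollars per million tokens.
INPUT_PRICE_PER_MTOK = Decimal("25")
OUTPUT_PRICE_PER_MTOK = Decimal("125")
TOKENS_PER_MILLION = Decimal("1000000")


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the cost of a workload from its token counts.

    Hypothetical helper for illustration only: it applies the two list
    prices linearly and ignores anything else that might affect a bill.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = Decimal(input_tokens) * INPUT_PRICE_PER_MTOK
    output_cost = Decimal(output_tokens) * OUTPUT_PRICE_PER_MTOK
    return (input_cost + output_cost) / TOKENS_PER_MILLION


if __name__ == "__main__":
    # Made-up workload: 2M input tokens read, 400K output tokens written.
    cost = estimate_cost_usd(2_000_000, 400_000)
    print(f"Estimated cost: ${cost:.2f}")
```

Because output tokens cost five times as much as input tokens at these prices, a scan that reads a lot of code but writes short reports is much cheaper than one that produces long exploit write-ups.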

Partners will, to the extent they're able, share information and best practices with each other; within 90 days, Anthropic will report publicly on what it learns, as well as the vulnerabilities fixed and improvements made that can be disclosed. It will also collaborate with leading security organizations to produce a set of practical recommendations for how security practices should evolve in the AI era.

Developing safeguards for the next generation of agentic coding models

As AI models become better at finding and exploiting vulnerabilities, safeguards are increasingly necessary to protect against cyberattacks. On the global stage, state-sponsored attacks from actors like China, Iran, North Korea, and Russia have threatened to compromise the infrastructure that underpins both civilian life and military readiness. Even smaller-scale attacks, such as those where individual hospitals or schools are targeted, can still inflict substantial economic damage, expose sensitive data, and even put lives at risk. The current global financial costs of cybercrime are challenging to estimate, but might be around $500B every year.

"We've been testing Claude Mythos Preview in our own security operations, applying it to critical codebases, where it's already helping us strengthen our code. We're bringing deep security expertise to our partnership with Anthropic and are helping to harden Claude Mythos Preview," said Amy Herzog, VP and CISO at AWS.

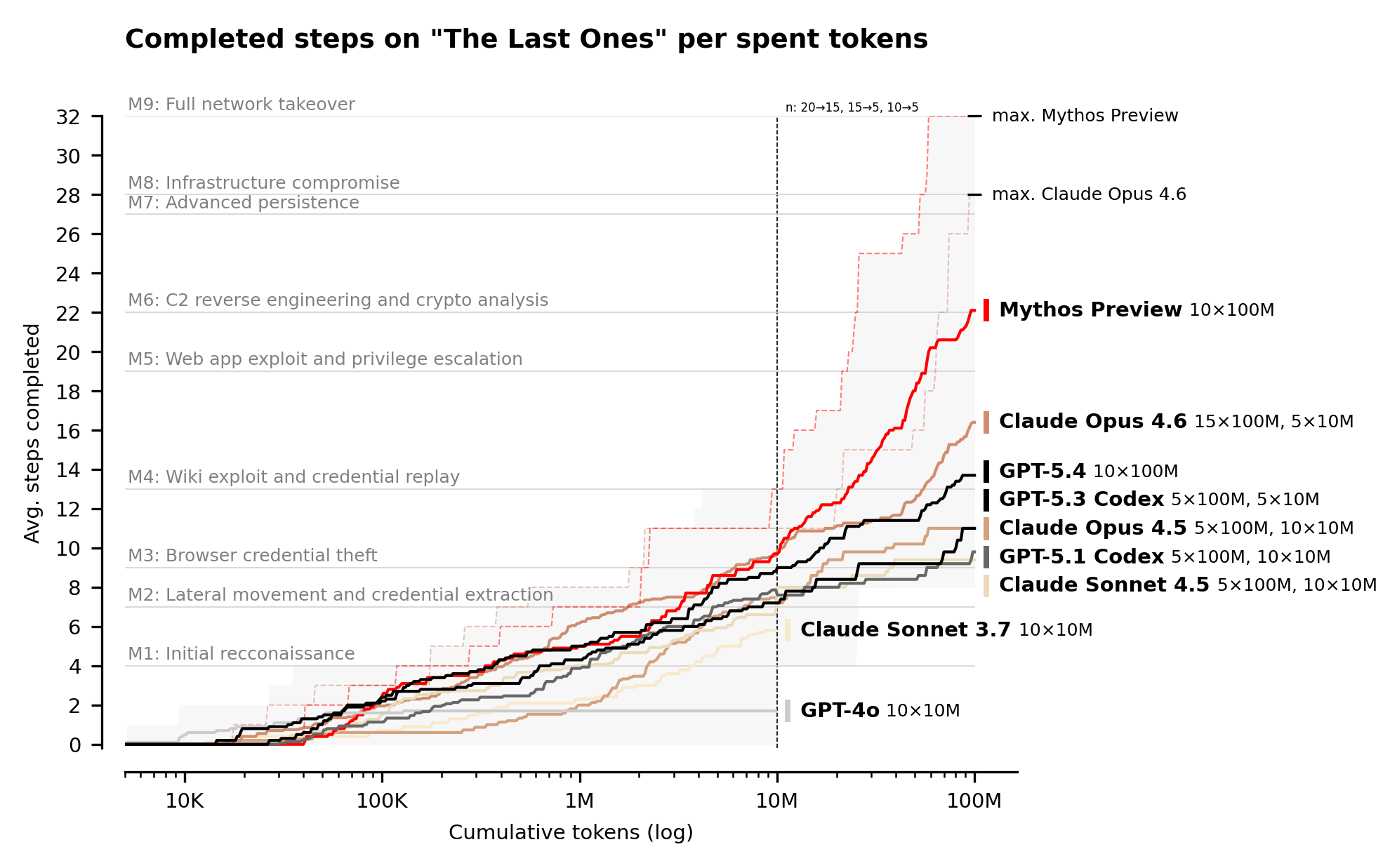

The powerful cyber capabilities of Claude Mythos Preview are a result of its strong agentic coding and reasoning skills. The model has the highest scores of any model yet developed on a variety of software coding tasks.

Anthropic does not plan to make Claude Mythos Preview generally available. The company still aims to enable its users to deploy Mythos-class models at scale for cybersecurity purposes and other benefits. To that end, it plans to launch new safeguards with an upcoming Claude Opus model, so that it can improve and refine them on a model that poses less risk.

Anthropic has also been in ongoing discussions with U.S. government officials about Claude Mythos Preview and its offensive and defensive cyber capabilities.

Security initiative or PR play?

Bruce Schneier, a security expert, criticized the project, saying, "This is very much a PR play by Anthropic." He noted that Aisle, a security company, was able to reproduce the vulnerability findings attributed to Mythos using older, cheaper, public models.

Similarly, Heidy Khlaaf, an AI safety researcher, told Mashable, "Releasing a marketing post with purposely vague language that clearly obscures evidence needed to substantiate Anthropic's claims brings into question if they are trying to garner further investment. It also serves their 'safety first' image as they're able to frame the lack of public release, even a limited one for independent evaluation, as a public service when it simply obscures even experts' abilities to validate their claims."

Still, the UK AI Security Institute (AISI) confirmed that Claude Mythos Preview passed cybersecurity tests that no other model had ever completed and scored higher than any other frontier model on virtually every test. The model's capabilities appear genuine, but Anthropic's framing may overstate its novelty and danger.