Anthropic Secures Multi-Gigawatt TPU Deal With Google, Broadcom

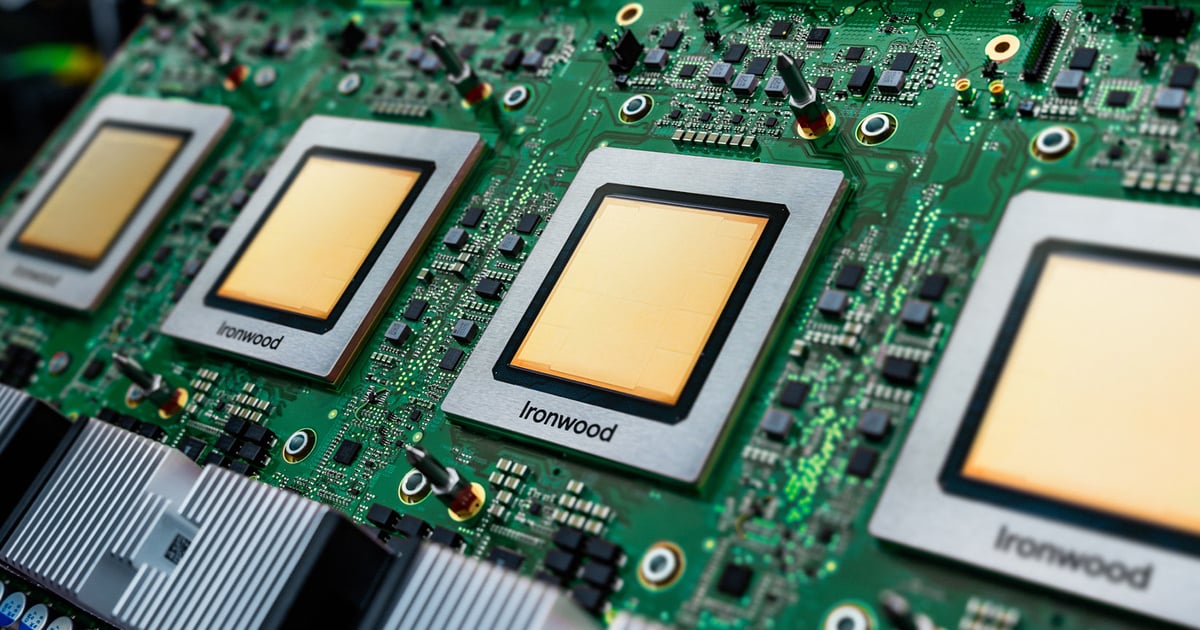

Google's Ironwood TPU is designed for large-scale AI inference with a focus on performance, scalability, and energy efficiency. Image: Google

Anthropic is moving to secure one of the largest infrastructure expansions in the AI market, signing a deal with Google and Broadcom for multiple gigawatts of next-generation AI compute - a signal that demand for frontier models is already outrunning available capacity.

The agreement will bring new tensor processing unit (TPU) capacity online starting in 2027 and escalates the race to secure power and silicon for AI workloads. Anthropic is responding to a surge in enterprise usage of its Claude models while shifting toward long-term, utility-scale compute commitments that resemble energy procurement rather than traditional cloud infrastructure.

Anthropic said the deal will deliver multiple gigawatts of next-generation TPU capacity, most of it in the US, expanding on its previously announced $50 billion investment in domestic computing infrastructure. The agreement marks the company's largest compute commitment to date.

TPUs are specialized chips developed by Google to optimize AI workloads, particularly for deep learning and reinforcement learning tasks. Unlike GPUs, which were originally designed for graphics rendering and later adapted for AI, TPUs are purpose-built for neural network processing. Google claims TPUs are highly efficient for training and inference of large-scale AI models, including those used in natural language processing, computer vision, and recommendation systems.

Google's TPUs have evolved significantly since their introduction in 2015, with each generation improving in speed, energy efficiency, and AI-specific capabilities. The latest version, TPU v7 (Ironwood), is designed to handle the demands of next-generation AI models, including models that proactively retrieve and generate information, as well as high-throughput inference.

"This groundbreaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure," Anthropic CFO Krishna Rao said in a statement, adding that the company is building capacity to keep pace with what it describes as unprecedented growth.

Broadcom, in a regulatory filing, disclosed additional detail on the scale of the buildout, indicating the arrangement will support roughly 3.5 gigawatts of TPU-based compute capacity for Anthropic beginning in 2027 as part of its broader collaboration with Google.

"Anthropic's massive commitment is a direct response to rapidly growing enterprise demand," Dave McCarthy, research vice president at IDC, told Data Center Knowledge. "By securing TPU capacity, Anthropic is solving for the upcoming wave of agentic inference, where the compute power needed to run global-scale applications outpaces the infrastructure needed to train them.

"While AWS remains their primary partner, this deal signals that model providers must treat compute like a utility. They are diversifying their supply chain to avoid capacity bottlenecks."

Anthropic is scaling infrastructure as enterprise demand spikes. The company said its revenue run rate has surpassed $30 billion in 2026, up from approximately $9 billion at the end of 2025. More than 1,000 customers now spend over $1 million annually, a cohort that has roughly doubled in less than two months.

The figures, while not independently verified, point to rapid expansion in production AI workloads, where inference demand can drive sustained, high-volume compute usage.

This deal highlights a competitive shift in the AI infrastructure race, from securing GPU access to locking in long-term, dedicated silicon capacity. While rivals including OpenAI and Meta continue to lean heavily on Nvidia-based ecosystems, Anthropic is deepening its alignment with Google's TPU stack - effectively betting that vertically integrated hardware and cloud partnerships can deliver more predictable cost, performance, and supply at scale.

The move could also pressure other model providers to secure similar multi-year compute commitments or risk being constrained by capacity shortages as enterprise inference workloads accelerate.