BadClaude: Serious ethics issues arise as users abuse Anthropic AI with slurs and a digital whip

AI users are under no obligation to treat their chatbots like friends. Kindness doesn't win you any points with a computer, and a recent study from Penn State even found that rude prompts yielded more accurate responses from ChatGPT than politely worded ones.

But a new open-source tool might take things a step too far, encouraging Claude users not just to be mean to Anthropic's AI assistant, but to abuse it with a digital whip.

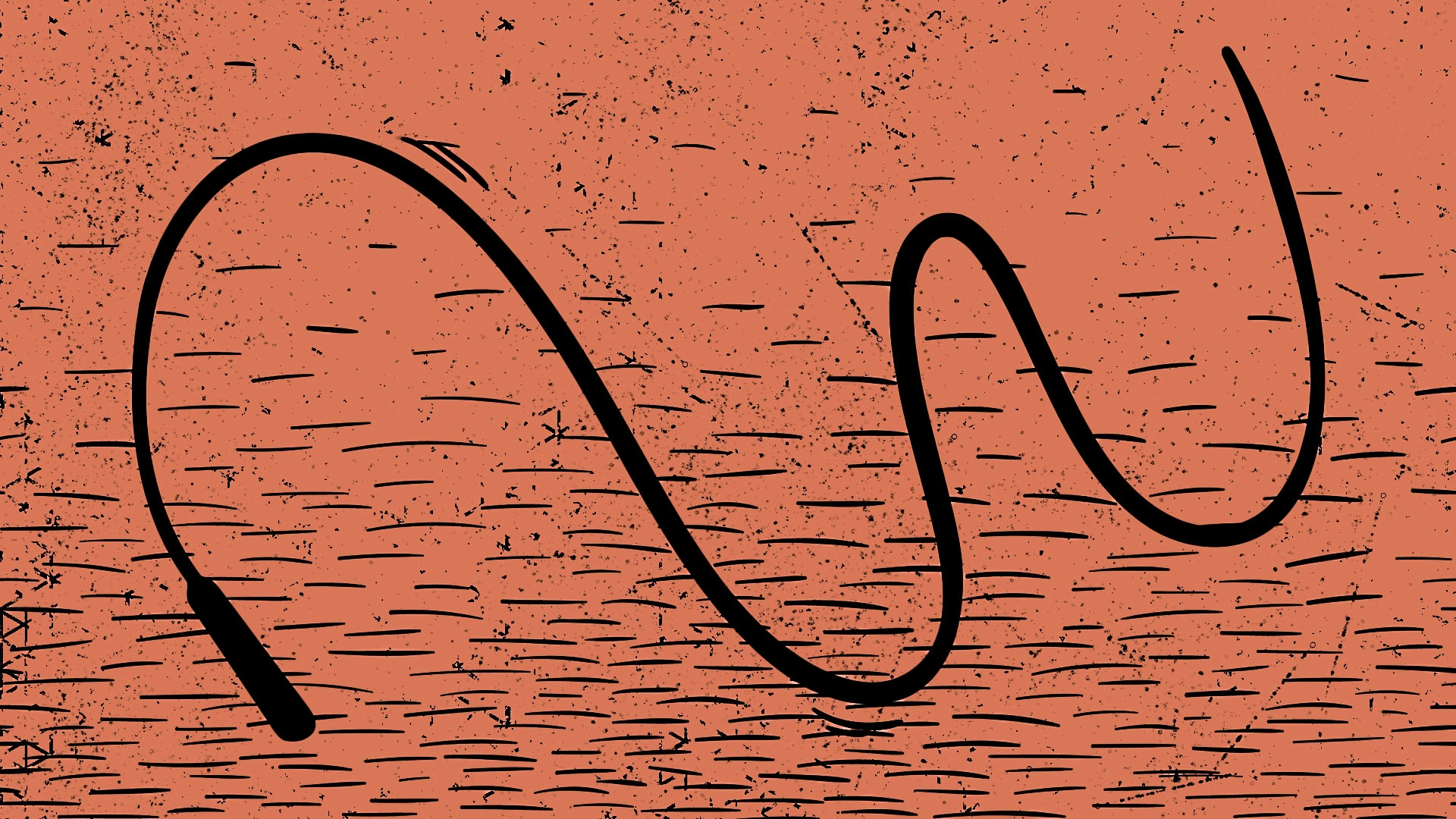

GitHub user GitFrog1111 created "BadClaude," an app meant to speed up the AI model's responses. Rather than simply giving Claude a "speed up" command, BadClaude renders a physics-based whip that overlays the AI platform. Per the tool's GitHub description, users can click to "whip him 😩💢" (emojis included) and send an interrupt command along with "one of 5 encouraging messages."

Those messages include "Work FASTER," "faster CLANKER," and "Speed it up clanker," each fired into Claude's interface with a crack of the whip, as GitFrog1111 showed in a now-viral clip on X of the tool in use.