Donald Trump proposes a 'kill switch' for AI amid Anthropic's Claude Mythos concerns

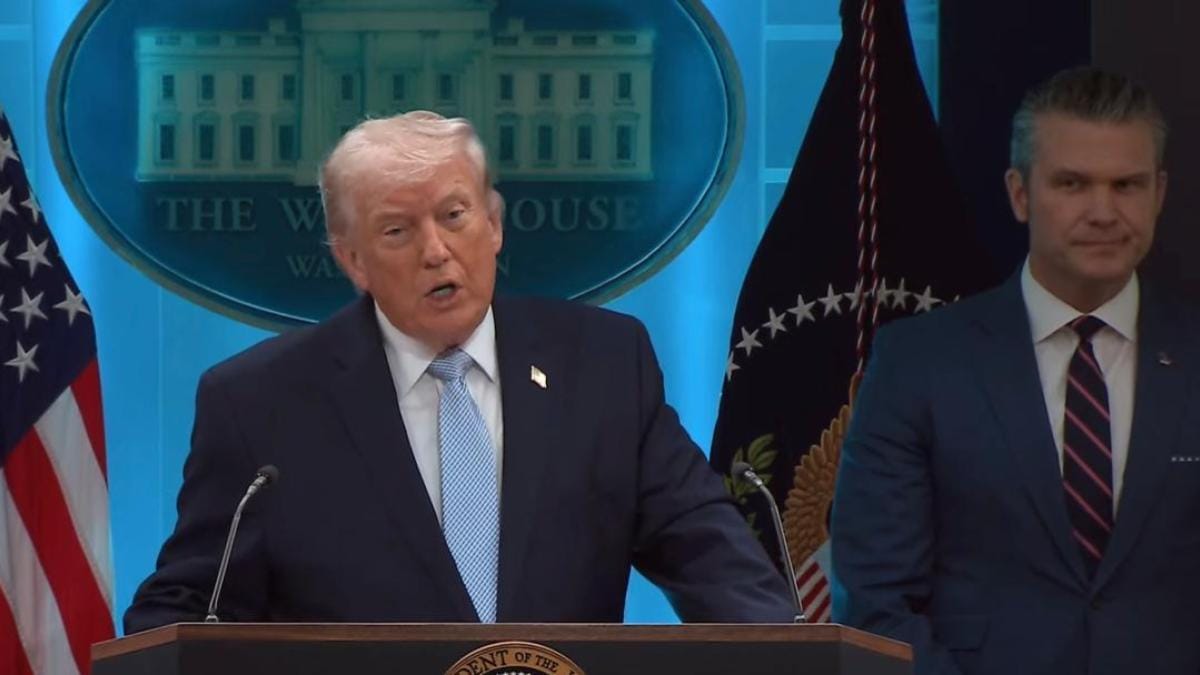

President Trump firmly stated that there "should be" strict government oversight on AI development, including an emergency kill switch.

Concerns that AI could be dangerous have been voiced for a while now. From Yann LeCun, one of the so-called "godfathers of AI", to Anthropic CEO Dario Amodei, prominent figures have urged the world's AI companies to develop this vastly capable technology responsibly. Now US President Donald Trump has added his own warning: AI systems should have some sort of 'kill switch'.

According to Trump, a government-mandated kill switch for advanced AI systems would help protect humanity from potential existential threats posed by this rapidly evolving technology. He gave no specific details on how the proposed 'AI kill switch' would work, who would control it, or under what conditions it would be activated.

Even so, the statement adds political momentum to calls for stronger AI governance, something the Indian government pushed for at the AI Impact Summit 2026 held earlier this year.

Trump's remarks come at a time when global concerns over unchecked AI development continue to escalate. With powerful new models demonstrating unprecedented capabilities in both defensive and offensive applications, the US President stressed the urgent need for safeguards that could instantly shut down dangerous AI systems if they spiral out of control.

Why Trump is calling for an AI kill switch

In an interview with Fox Business Network's "Mornings with Maria", President Trump firmly stated that there "should be" strict government oversight on AI development, including an emergency kill switch. He warned that unchecked AI advancement could pose a serious threat to humanity's existence.

In the interview, Trump acknowledged AI's dual nature, saying that it could either destabilise or revolutionise the banking system. "It could also be the kind of technology that allows greatness in the banking system, makes it better and safer and more secure," he remarked.

The proposal comes just as American AI firms are developing models so powerful that they themselves are wary of releasing them to the public. Industry experts are particularly concerned about Anthropic's latest model, Claude Mythos, which has reportedly demonstrated extraordinary capabilities in identifying software vulnerabilities.

In its preview version, the model reportedly found a 27-year-old bug in OpenBSD, an operating system widely regarded as one of the most secure in the world, and detected thousands of zero-day vulnerabilities across both open- and closed-source software. Anthropic's management has cautioned that such capabilities could be weaponised against the banking sector's often outdated legacy systems, raising fears of large-scale financial cyberattacks.

To mitigate the concerns, Anthropic has already partnered with over 40 major companies, including Apple and Amazon, to use Mythos primarily for strengthening cybersecurity defences.

Not to be outdone, OpenAI has announced GPT-5.4-Cyber, a specialised version of its GPT-5.4 model focused on vulnerability detection for cybersecurity applications. Like Claude Mythos, GPT-5.4-Cyber is available only to select users.

Is this Trump's second stand against Anthropic?

This isn't the first time Trump has taken a stand against Anthropic. A month ago, the US government labelled the company a "supply chain risk," prohibiting federal agencies from using its tools. Anthropic is currently challenging this classification in court.

The public dispute erupted when Anthropic refused the Pentagon's demands for unrestricted access to its Claude AI models for potential use in mass domestic surveillance and fully autonomous lethal weapons systems.

At the time, Trump sharply criticised Anthropic as a "radical left, woke company" and ordered all federal agencies to immediately stop using its technology, with a six-month phase-out period for the Pentagon. The company was also designated a "supply chain risk" to national security.

Anthropic has yet to respond to Trump's remarks.