OpenAI, Anthropic, Google Form United Front to Block Chinese 'AI Free-Riding' - Techstrong.ai

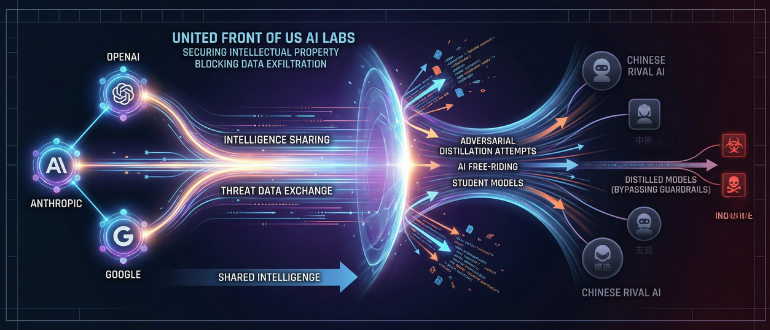

In a rare alliance to protect their intellectual property, rivals OpenAI, Anthropic, and Google have begun sharing sensitive intelligence to stop Chinese competitors from distilling their artificial intelligence (AI) models.

The collaboration, facilitated through the Frontier Model Forum (an industry nonprofit founded in 2023), marks a significant escalation in the global AI arms race. According to a Bloomberg report citing people familiar with the matter, the firms are swapping data to detect "adversarial distillation," a process in which users harvest outputs from top-tier U.S. models and use them to train cheaper imitation versions.

The stakes are both economic and existential. U.S. officials estimate that unauthorized distillation costs Silicon Valley labs billions of dollars in annual profit. By using a "teacher" model from the U.S. to train a "student" model in China, developers can replicate high-level reasoning capabilities at a fraction of the original research and development cost.

The urgency behind the partnership intensified after the January 2025 release of DeepSeek-R1. The Chinese startup's model shocked the industry by rivaling the performance of leading U.S. systems while being released as "open-weight" and costing significantly less to operate.

OpenAI recently escalated the rhetoric in a memo to Congress, explicitly accusing DeepSeek of trying to "free-ride on the capabilities developed by OpenAI and other U.S. frontier labs." DeepSeek has maintained that its innovations are original, but Microsoft and OpenAI launched internal investigations into whether the Chinese firm exfiltrated massive datasets to build its reasoning engine.

Beyond the loss of market share, U.S. labs warn of a "guardrail gap." Proprietary U.S. models like GPT-4 or Claude are built with strict safety limits to prevent help with creating biological weapons or carrying out cyberattacks, but distilled models often lack these safeguards.

"The threat extends beyond any single company," Anthropic said recently, after identifying Chinese labs DeepSeek, Moonshot, and MiniMax as illicitly extracting model capabilities. Without oversight, these distilled models could give bad actors high-level technical assistance for malicious activities.

The industry's move mirrors the cybersecurity practice of firms trading data on hacking threats to bolster collective defenses. It also aligns with the Trump administration's AI Action Plan, which calls for an information-sharing center to curb foreign exploitation of U.S. technology.

However, the collaboration remains in its early stages. Insiders suggest that while the companies are eager to protect hundreds of billions of dollars in infrastructure investments, they are navigating a legal minefield. Many are seeking clearer guidance from the Department of Justice to ensure that sharing information about Chinese competitors does not run afoul of U.S. antitrust laws.

As Chinese open-weight models continue to proliferate, Silicon Valley is betting that its proprietary moats can be maintained only if the industry acts as a single entity to slam the door on data exfiltration.