News & Updates

The latest news and updates from companies in the WLTH portfolio.

Vercel's Breach Is a Warning -- "Shadow AI" Risks to CX Are Escalating

"Shadow AI" adoption across enterprises is creating hidden security gaps that can expose customer data and disrupt customer experience A new form of shadow IT, where employees use tools without formal oversight, is taking shape inside enterprises. As teams rapidly integrate AI tools into daily workflows without centralized governance, their use of "shadow AI" is creating a new and largely unmonitored risk layer of hidden access points that can expose sensitive data and disrupt customer experience. Shadow AI reflects the simple reality that employees are no longer waiting for centralized approval to experiment with new tools. From drafting customer responses to prototyping workflows, AI is being embedded organically across teams that directly shape customer interactions. That reality came up in CX Today's recent roundtable discussion on how AI is reshaping CRM and customer data stacks. As John Kelleher, VP UKI/MEA of Enterprise Sales at Zendesk, said, "In the past you wouldn't have taken a CRM and played with it yourself... but CIOs are building [apps] in Claude... everybody's playing with it themselves and creating the thoughts, 'I could do this within my own function, my own business'." "We don't really talk about it much at the moment, but... that whole shadow AI... is a risk. That creates complexity... that will need to be governed better." This decentralized experimentation is accelerating innovation, but it is also creating fragmented, often invisible, extensions of the enterprise tech stack. That speed introduces a new category of operational risk, especially when those tools interact with customer data or production systems. "I'm seeing it already, the speed with which someone says, 'I can do this.' They're doing it, and actually going live." Mark Ashton, VP of Solution Consulting, CRM, EMEA, at ServiceNow added. 
"When you think about governance, security, control, where the data is coming from, there's an explosion waiting to happen with all these citizen developers building... At some point, there'll be a leak of some information." How the Vercel Breach Reveals Hidden CX Risks From Shadow AI A recent breach of a third-party tool used by an employee at U.S. cloud application company Vercel demonstrates how shadow AI can translate into real-world CX impact. The breach originated through a compromised third-party AI tool connected to an employee's account, which hackers then used to access internal systems and customer-related data. The incident highlights how quickly a single instance of a seemingly innocuous tool can become a gateway into an enterprise's core infrastructure. As Fredrik Almroth, co-founder and security researcher at Detectify, pointed out, the issue lies in how access is structured, and how easily it is extended by third-party tools. "The Vercel breach is a stark reminder that modern security risks don't stop at the boundaries of your own systems. They extend to every tool and service your organization is connected to." Sophisticated attackers using less-scrutinized, third-party tools to take over an employee's account and infiltrate an organization's systems is becoming common, Almroth warned. "There was no need to go after Vercel directly, to use brute force, or sophisticated technical knowledge." One of the most significant challenges shadow AI introduces is a lack of visibility. While IT teams may maintain oversight of approved vendors, the tools that employees connect independently often fall outside formal tracking. In Vercel's case, the employee connected a consumer version of Context.ai, an agentic AI tool that enabled access to their Vercel-issued Google Workspace account, which allowed agents to automate actions in Google Workspace. 
Context.ai noted that the breach did not affect its enterprise customers, which run deployments in their own infrastructure. Almroth cautioned: "That's a blind spot many organizations still have. They've got a reasonable handle on their known vendors, but the web of third-party tools that employees connect to their work accounts organically, tool by tool, often without a formal approval process, is a different thing entirely." "It's rarely tracked, rarely reviewed, and almost never reconsidered when something goes wrong elsewhere. That's the gap this incident exposes." This lack of visibility has direct CX implications. Vercel initially found that a "limited subset of customers" had non-sensitive environment variables compromised. And its continuing investigation "identified a small number of additional accounts that were compromised as part of this incident." That indicates how the use of shadow AI can directly result in customer data becoming compromised. And when an incident occurs, enterprises may struggle to quickly identify the source of the breach as well as the scope, delaying customer communications and increasing the risk of inconsistent messaging. Vercel's investigation into the breach also turned up signs of further compromise from outside the company. "We have identified a small number of customer accounts with signs of compromise that appear to be separate from the April 2026 incident," the bulletin stated. "Based on our investigation to date, these compromises do not appear to have originated on Vercel systems. We have already contacted those accounts and provided them with specific corrective actions to remediate potential risk." The update added that "this activity does not appear to be a continuation or expansion of the April incident, nor does it appear to be evidence of an earlier Vercel security incident." What Enterprises Can Learn About Protecting Customer Experience Almroth offers a practical lesson. 
"Focus less on the label of the tool involved and more on the access chain: which external apps are connected to employee accounts, what those apps are allowed to do, what internal systems those accounts can reach, and whether sensitive credentials would still be exposed if that chain of trust broke. As the Vercel incident shows, security events originating in shadow AI environments are likely to become apparent first in customer-facing channels. The firm recommends that customers enable multi-factor authentication, rotate environment variables and deployments, and review activity logs. Whether it is forced password resets, unexpected downtime, or precautionary restrictions, the customer experience becomes the most visible layer of the incident. It also reframes governance. Managing enterprise AI usage includes understanding which tools employees are using, how they connect to corporate systems, what permissions they hold, and how quickly those connections can be revoked or contained. It also requires tighter coordination between security, IT, and customer-facing teams to ensure that when incidents occur, responses are technically effective and customer-aware. "The organizations that develop real visibility into what's connected to their systems (and what those connections can actually reach) will be the ones that catch these intrusions before an attacker decides to go public," Almroth advised.

Vercel says some customer data stolen before the breach.

This analysis has uncovered two additional findings: First, we have identified a small number of additional accounts that were compromised as part of this incident. Second, we have uncovered a small number of customer accounts with evidence of prior compromise that is independent of and predates this incident, potentially as a result of social engineering, malware, or other methods.

Vercel identifies more accounts 'with evidence of prior compromise' exposed during security incident

* Vercel expanded its breach investigation, confirming more compromised accounts than initially reported.
* Researchers linked the attack to a Context.ai account infected with Lumma Stealer malware, which was used to access Vercel environments.
* A dark web actor attempted to sell stolen Vercel data, claiming ties to ShinyHunters, though the group denied involvement.
The number of customers affected by the recent breach at Vercel is bigger than initially thought, as the company confirmed finding even more compromised accounts. Earlier this week, the cloud development platform confirmed suffering a cyberattack and losing "non-sensitive" customer data. In the initial report, Vercel said one of its employees used a third-party AI tool called Context.ai, which seems to have been used as an entry point. "The incident originated with a compromise of Context.ai," the company said, claiming that the attacker used that access to take over that employee's Google Workspace account. Through that, they gained access to some Vercel environments and environment variables "that were not marked as 'sensitive'."
Infected after downloading "game hacks"
During a more thorough investigation, Vercel expanded its list of compromise indicators. As a result, it found even more accounts that were exposed. It also said it found a "small number" of customer accounts with evidence of prior compromises, predating this attack. These, the company believes, are the result of social engineering or malware attacks. It said it notified the affected individuals but did not want to say how many people were affected. In their own investigation, security researchers at Hudson Rock found that the Context.ai user was infected with the Lumma Stealer infostealer in February 2026, after searching for exploits for Roblox. "We now understand that the threat actor has been active beyond that startup's compromise," Vercel CEO Guillermo Rauch said on X. "Threat intel points to the distribution of malware to computers in search of valuable tokens like keys to Vercel accounts and other providers." Just a day before Vercel announced the breach, someone tried selling the archive on a dark web forum. "Greetings all. Today I am selling Access Key/Source Code/Database from Vercel," the attacker said. They claimed to be part of the ShinyHunters team, which the group denied.
Via The Hacker News

Vercel Says Some Of Its Customers' Data Was Stolen Prior To Its Recent Hack

App and website hosting giant Vercel on Thursday said hackers had accessed some of its customers' data before the company discovered its recent data breach, suggesting that this incident may have broader security implications than initially known. In an update on its security incident page, Vercel said it had identified evidence of malicious activity on its network preceding the early-April breach after it expanded its initial investigation. "We have uncovered a small number of customer accounts with evidence of prior compromise that is independent of and predates this incident, potentially as a result of social engineering, malware, or other methods," the update reads. Vercel also said it discovered more customer accounts compromised by the April incident, but did not disclose details, only saying that it had notified customers known to be affected so far. The San Francisco-based app and website hosting company initially said its internal systems were breached after an employee downloaded an app made by software startup Context AI, which hackers abused to gain access to the employee's work account, and subsequently, Vercel's systems. The new update suggests the data breach may be larger in scope and could have lasted longer than initially thought. In a post on X, Vercel CEO Guillermo Rauch confirmed that the hackers who compromised Vercel have been active "beyond that startup's compromise," referring to Context AI, which confirmed an earlier breach of its systems in a post this week.
A Vercel spokesperson declined to comment beyond the update on the incident page. They would neither confirm how many customers the breach now affects, nor say how far the second compromise dates back. Vercel has not yet confirmed how the hackers broke into its systems, but Rauch pointed to early signs that the hackers relied on malware that compromises computers "in search of valuable tokens like keys to Vercel accounts and other providers." Rauch may be referring to information stealing malware, or infostealers, which often masquerade as legitimate software. When installed, the malware collects and uploads sensitive secrets from the victim's computer, including passwords and other private keys, allowing hackers to enter any system that those keys allow access to. "Once the attacker gets ahold of those keys, our logs show a repeated pattern: rapid and comprehensive API usage, with a focus on enumeration of non-sensitive environment variables," said Rauch. The hackers used the hijacked Vercel employee's account to gain access to some of the company's internal systems, including customer credentials that were not encrypted. Rauch's comments appear to add weight to earlier reporting by security researchers that a Context AI employee's computer was infected with infostealer malware after they allegedly looked up Roblox game cheats. It's not yet known how many customers are affected by the Vercel breaches and customer data thefts. Both Vercel and Context AI have suggested that the breach may affect more companies, and that more victims may come to light.

Vercel says some of its customers' data was stolen prior to its recent hack - IT Security News


Vercel says some customer accounts were compromised prior to its early-April breach, potentially through social engineering, malware, or other methods


Vercel says some of its customers' data was stolen prior to its recent hack | TechCrunch

App and website hosting giant Vercel on Thursday said hackers had accessed some of its customers' data before the company discovered its recent data breach, suggesting that this incident may have broader security implications than initially known. In an update on its security incident page, Vercel said it had identified evidence of malicious activity on its network preceding the early-April breach after it expanded its initial investigation. "We have uncovered a small number of customer accounts with evidence of prior compromise that is independent of and predates this incident, potentially as a result of social engineering, malware, or other methods," the update reads. Vercel also said it discovered more customer accounts compromised by the April incident, but did not disclose details, only saying that it had notified customers known to be affected so far. The San Francisco-based app and website hosting company initially said its internal systems were breached after an employee downloaded an app made by software startup Context AI, which hackers abused to gain access to the employee's work account, and subsequently, Vercel's systems. The new update suggests the data breach may be larger in scope and could have lasted longer than initially thought. In a post on X, Vercel CEO Guillermo Rauch confirmed that the hackers who compromised Vercel have been active "beyond that startup's compromise," referring to Context AI, which confirmed an earlier breach of its systems in a post this week. A Vercel spokesperson declined to comment beyond the update on the incident page. They would neither confirm how many customers the breach now affects, nor say how far the second compromise dates back. Vercel has not yet confirmed how the hackers broke into its systems, but Rauch pointed to early signs that the hackers relied on malware that compromises computers "in search of valuable tokens like keys to Vercel accounts and other providers."
Rauch may be referring to information stealing malware, or infostealers, which often masquerade as legitimate software. When installed, the malware collects and uploads sensitive secrets from the victim's computer, including passwords and other private keys, allowing hackers to enter any system that those keys allow access to. "Once the attacker gets ahold of those keys, our logs show a repeated pattern: rapid and comprehensive API usage, with a focus on enumeration of non-sensitive environment variables," said Rauch. The hackers used the hijacked Vercel employee's account to gain access to some of the company's internal systems, including customer credentials that were not encrypted. Rauch's comments appear to add weight to earlier reporting by security researchers that a Context AI employee's computer was infected with infostealer malware after they allegedly looked up Roblox game cheats. TechCrunch reported on Thursday that embattled compliance startup Delve, accused of faking customer data, performed the security certifications for Context AI. It's not yet known how many customers are affected by the Vercel breaches and customer data thefts. Both Vercel and Context AI have suggested that the breach may affect more companies, and that more victims may come to light.

Vercel Confirms Security Breach Affecting Customer Accounts - IT Security News


Vercel Confirms Security Breach - Set of Customer Account Compromised - IT Security News


Vercel Finds More Compromised Accounts in Context.ai-Linked Breach - IT Security News


Hacker Active Well Beyond Context.ai Compromise: Vercel CEO

Vercel CEO Guillermo Rauch said in an update today that, after scanning through petabytes of logs from the company's networks and APIs, his security team concluded that the threat actor behind the Vercel breach had been active well beyond Context.ai's compromise. Rauch said that the "threat intel points to the distribution of malware to computers in search of valuable tokens like keys to Vercel accounts and other providers. Once the attacker gets ahold of those keys, our logs show a repeated pattern: rapid and comprehensive API usage, with a focus on enumeration of non-sensitive environment variables." Researchers at Hudson Rock had earlier confirmed that the attack actually began in February, when a Context.ai employee's computer was infected with Lumma Stealer malware after they searched for Roblox game exploits, a common vector for infostealer deployments. The latest findings mean that the threat actor may have targeted a wider net of victims, and that what is known so far may be just the tip of the iceberg. In an official update, the company also stated that it initially identified a limited subset of customers whose non-sensitive environment variables stored on Vercel were compromised. However, a deeper assessment of its network, as well as of environment variable read events in the company's logs, uncovered two additional findings. "First, we have identified a small number of additional accounts that were compromised as part of this incident," the company noted. But the main concern is the next finding: "Second, we have uncovered a small number of customer accounts with evidence of prior compromise that is independent of and predates this incident, potentially as a result of social engineering, malware, or other methods." The company did not disclose who the attackers were, what their motive was, or what the impact on customers has been, and has yet to respond to these queries from The Cyber Express.
It only stated: "In both cases, we have notified the affected customers." Meanwhile, Rauch said, Vercel had notified other suspected victims and encouraged them to rotate credentials and adopt best practices.
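
The log pattern Rauch describes, rapid API usage focused on enumerating environment variables, can be illustrated with a simple sliding-window check. The following is a minimal sketch, not Vercel's detection logic: the event tuple layout, the `env.read` action name, and the default window and threshold are all assumptions made for illustration.

```python
from collections import defaultdict, deque


def find_enumeration_bursts(events, window_seconds=60, threshold=20, action="env.read"):
    """Return the set of token IDs that performed at least `threshold`
    matching actions within any `window_seconds` span.

    `events` is an iterable of (unix_timestamp, token_id, action) tuples,
    assumed to be sorted by timestamp. The tuple layout and the action
    name are hypothetical, not a real log schema.
    """
    recent = defaultdict(deque)  # token_id -> timestamps still inside the window
    flagged = set()
    for ts, token_id, act in events:
        if act != action:
            continue
        window = recent[token_id]
        window.append(ts)
        # Drop timestamps that have fallen out of the sliding window.
        while window and ts - window[0] > window_seconds:
            window.popleft()
        if len(window) >= threshold:
            flagged.add(token_id)
    return flagged
```

A token that reads variables steadily over a long period stays below the threshold, while one that sweeps through many variables within a minute is flagged for review. Real thresholds would need tuning against normal deployment traffic.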

Vercel confirms April 2026 security incident linked to third-party AI tool - IT Security News

Cloud development platform Vercel has confirmed a security incident involving unauthorized access to parts of its internal systems, following a breach disclosed in April 2026. In an official security bulletin, the company stated: "We've identified a security incident that involved unauthorized access to certain internal Vercel systems." Vercel added that it is "actively investigating" the incident, has engaged incident response experts, and notified law enforcement [...]

Vercel Breach Started With AI Tool - IT Security News


Security Leaders Discuss the Vercel Breach

Following the news of the Vercel data breach, security experts are discussing implications, sharing their insights, and weighing in on what this incident suggests about the future of attack patterns. Incidents like this are never fun, and living through one in real time is stressful for everyone involved, no matter how prepared your team thinks they are. Vercel has a massive footprint in the dev community, particularly for modern web apps and CI/CD workflows, so even when only a slice of customers are affected, people are going to notice and talk about it. That said, from what's been shared publicly, this doesn't look like a sweeping supply chain attack. It reads more like a targeted account takeover, someone found a foothold through a third-party AI tool and worked their way into internal systems from there. The bigger concern is the exposure of environment variables and tokens, which can open doors to follow-on access if teams don't move quickly to lock things down. One thing that really stands out here is the timeline. By the time Vercel got ahead of the story publicly, the attacker had already disclosed it. That's a tough spot to be in, and it's a good reminder of why comms teams need a seat at the table during incident response tabletop exercises, not just the engineers. When there's a gap between what's being reported and what the company is saying, the narrative fills itself, usually without the full picture. To Vercel's credit, they've been upfront about what happened and given customers concrete steps to take -- audit your environment variables, use sensitive variable protections, check your deployments, rotate your tokens. That kind of clear, actionable guidance matters a lot when customers are trying to figure out if they're exposed. The bigger takeaway here isn't really about Vercel specifically. 
It's about the fact that third-party integrations, especially newer AI tools that connect into identity systems like Google Workspace, are quietly becoming a serious attack surface, even for organizations that have otherwise done a lot of things right. Calling this a full-scale supply chain attack would be a gross overstatement. What we are seeing in the Vercel incident is a third-party compromise with supply chain characteristics, but not a systemic, cascading supply chain failure similar to the SolarWinds attack. The threat actor leveraged a compromised third-party AI tool integrated via a Google Workspace OAuth application, which then enabled unauthorized access into internal systems. That is a trust and authentication boundary failure, not a compromised software distribution pipeline. In a true supply chain attack, the adversary weaponizes the vendor's product itself to propagate downstream at scale. Here, the blast radius appears constrained to a subset of customers, with no evidence of malicious code being distributed through Vercel's platform to its tenants. The more accurate framing is this is an identity-centric supply chain exposure. The OAuth trust model became the attack vector. This is not about code integrity but rather about delegated access and over-permissioned integrations. The takeaway is more concerning than the public disclosure. The modern supply chain is no longer just installed software. It is based on identities, APIs, and AI tooling created by third parties, open source, and sovereign installations. That is where control was lost and the breach occurred. The question of whether this is a supply chain attack is the wrong frame. Supply chain is becoming a catch-all term that often generates more heat than clarity. 
The question every CISO, security team, and engineering leader, should be asking right now is how many third-party AI tools in their environment have OAuth access to systems that hold production secrets, and when that access was last reviewed. This is a governance and program design problem, and no amount of platform hardening fixes it if the access decisions themselves were never rigorously made. The breach vector is the signal: a third-party AI tool's OAuth credentials were compromised and used to reach internal Vercel systems. This is the new attack pattern that security teams are not yet fully pricing into their risk models. AI tools are being onboarded at machine speed, and the access governance frameworks designed to evaluate those integrations are running at human speed. Until that gap closes, every OAuth token granted to an AI productivity tool is a potential pivot point into something much more critical. This incident is the latest in a growing pattern of OAuth 2.0-based supply chain attacks. From the Chrome extension breaches in late 2024 to the Entra ID consent injection attacks, attackers are increasingly targeting the trust relationships built into OAuth 2.0 rather than breaking through traditional perimeters. The initial compromise was an infostealer, not a sophisticated exploit. A Context.ai employee with administrative privileges -- using the [email protected] account, described as belonging to a "core member" of the team -- was infected with Lumma Stealer in February 2026. According to Hudson Rock, the employee had been downloading malicious Roblox "auto-farm" scripts. The malware exfiltrated browser credentials, session cookies, and OAuth tokens, including credentials for Google Workspace, Supabase, Datadog, and Authkit. The attacker used a compromised OAuth token to access Vercel's Google Workspace, gaining entry to certain internal systems and environment variables that were not marked as "sensitive." 
The OAuth application involved has been identified by its client ID: 110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com. The application's Chrome extension was removed from the Chrome Marketplace on March 27, and Google subsequently deleted the account. Hudson Rock had possessed the compromised credential data for over a month before Vercel confirmed the breach, highlighting the detection gap that allowed the supply chain escalation to succeed. The stolen data is now being sold by the ShinyHunters group. Regardless of what tooling you use, the Vercel incident highlights several important practices: The Vercel incident is a clear example of how identity infrastructure, in this case OAuth 2.0 trust relationships, has become a primary attack vector. The attacker didn't exploit a zero-day or brute-force a password. They compromised a third-party app and inherited the trust that employees had already granted. This pattern will continue. As organizations adopt more SaaS tools, AI assistants, and third-party integrations, the sprawl of OAuth grants grows. Defending against these threats requires continuous visibility into your OAuth app landscape, automated detection of risky scopes, and the ability to revoke access at speed.
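
The kind of automated review described above can be sketched in a few lines. This is an illustrative example only: the `OAuthGrant` record, the choice of which Google scopes count as risky, and the 90-day review interval are assumptions, and in practice the grant list would come from an export of your identity provider's connected-app inventory. The blocked client ID is the one named in the reporting above.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Scopes treated as high-risk for this sketch (an assumption; set by your own policy).
RISKY_SCOPES = frozenset({
    "https://mail.google.com/",
    "https://www.googleapis.com/auth/drive",
    "https://www.googleapis.com/auth/admin.directory.user",
})

# Client ID reported above for the compromised OAuth application.
BLOCKED_CLIENT_IDS = frozenset({
    "110671459871-30f1spbu0hptbs60cb4vsmv79i7bbvqj.apps.googleusercontent.com",
})


@dataclass(frozen=True)
class OAuthGrant:
    """Hypothetical record of one third-party app grant on one account."""
    user: str
    app_name: str
    client_id: str
    scopes: frozenset
    last_reviewed: Optional[date]


def audit_grants(grants, today, max_age_days=90):
    """Return (grant, reasons) pairs for every grant that needs attention."""
    findings = []
    for grant in grants:
        reasons = []
        if grant.client_id in BLOCKED_CLIENT_IDS:
            reasons.append("known-compromised client ID")
        risky = sorted(grant.scopes & RISKY_SCOPES)
        if risky:
            reasons.append("risky scopes: " + ", ".join(risky))
        overdue = (
            grant.last_reviewed is None
            or today - grant.last_reviewed > timedelta(days=max_age_days)
        )
        if overdue:
            reasons.append("review overdue")
        if reasons:
            findings.append((grant, reasons))
    return findings
```

Each finding carries the reasons it was flagged, so a reviewer can decide whether to revoke the grant or simply record a fresh review date.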

AI-assisted intruders pwned Vercel via OAuth abuse and a pilfered employee account - IT Security News


Vercel Customer Data Breach Highlights CX Risks of "Shadow AI" Tools

Vercel breach indicates how third-party AI tools and compromised credentials can expose customer data and disrupt CX.
A data security breach at US cloud application company Vercel has prompted urgent customer notifications and drawn attention to the risk that employees using third-party AI tools could open additional attack vectors for hackers to steal customer information. Vercel provides developer tools and cloud infrastructure, including the widely used Next.js web development framework for React that it created and maintains. The company issued a bulletin on April 19 stating that it had discovered unauthorized access to certain internal systems and indicated that some customers' accounts were compromised. "Initially we identified a limited subset of customers whose non-sensitive environment variables stored on Vercel (those that decrypt to plaintext) were compromised. We reached out to that subset and recommended an immediate rotation of credentials." The bulletin added the company is investigating "whether and what data was exfiltrated" and will contact customers if it discovers further evidence of their information being compromised. The investigation found that the attacker gained access to Context.ai, a third-party agentic AI tool used by a Vercel employee, which allowed it to take over the employee's Vercel-issued Google Workspace account to breach some Vercel environments and environment variables that were not marked as sensitive. The company fully encrypts variables that are marked as sensitive to prevent them from being read, and the bulletin stated that "we currently do not have evidence that those values were accessed." It turns out that Context.ai's Google Workspace OAuth app was the subject of a broader security compromise, potentially affecting "hundreds of users across many organizations," Vercel warned. The company recommends that Google Workspace Administrators and Google Account owners check for usage of the app immediately.
Vercel's CEO, Guillermo Rauch, provided more detail in a post on X, stating: "We believe the attacking group to be highly sophisticated and, I strongly suspect, significantly accelerated by AI. They moved with surprising velocity and in-depth understanding of Vercel." Rauch added that the company analyzed its supply chain to make sure that Next.js, the Turbopack bundler built into Next.js, and its open-source projects remain secure. A subsequent update to the bulletin on April 20, 5:32 PM PST stated: "In collaboration with GitHub, Microsoft, npm, and Socket, our security team has confirmed that no npm packages published by Vercel have been compromised. There is no evidence of tampering, and we believe the supply chain remains safe." Vercel is also working with Google Mandiant and other cybersecurity firms, industry peers, and law enforcement, as well as Context.ai, to understand the full scale of the security compromise. The company recommends that customers enable multi-factor authentication and make use of the sensitive environment variables feature. As compromised credentials can still provide access to production systems, customers need to rotate them before deleting Vercel projects or accounts. Customers should also review their account activity logs and environments for suspicious activity and investigate recent deployments for unexpected or suspicious-looking activity, deleting any that appear to be suspicious, according to the bulletin.

Vercel Breach May Indicate Wider Attack on Enterprise Credentials

AI chat app developer Theo Browne also warned on X that the breach could extend further: "The method of compromise was likely used to hit multiple companies other than Vercel." Austin Larsen, Principal Threat Analyst at Google Threat Intelligence Group, warned in a LinkedIn post that Vercel users should check whether their systems have been affected.
"If your organization relies on their infrastructure, I strongly recommend you start looking into this immediately," Larsen wrote. The hacker claimed to be part of the notorious ShinyHunters group, but Larsen noted that "likely this is an imposter attempting to use an established name to inflate their notoriety." Israeli cybersecurity firm Hudson Rock connected the dots between an infostealer attack on Context.ai and the Vercel breach. "In a February 2026 Lumma stealer infection, a Context.ai employee with sensitive access privileges was compromised. A deep dive into the infected machine's browser history provides a textbook example of how these breaches originate," the firm noted. The user was actively searching for and downloading Roblox game exploits, which are well known for deploying Lumma stealer infections. Hudson Rock traced the single infection in Context.ai's systems to harvested corporate data including Google Workspace credentials, as well as keys and logins for Supabase, Datadog, and Authkit. The records included the [email protected] account, which gave the attacker the leverage needed to escalate privileges, bypass initial security perimeters, and enter Vercel's infrastructure. The compromised user was a core member of the "context-inc" Vercel team with direct access to critical administrative endpoints, according to the security firm. For its part, Context.ai pointed to a security incident involving unauthorized access to its AWS environment that it identified and stopped in March, stating: "At the time, we engaged CrowdStrike, a leading forensic firm, conducted an investigation, and informed a customer we identified as impacted. We also closed the AWS environment, hosting service, and associated resources to fully deprecate the consumer product." Following the notification from Vercel that its systems had been breached, the company found that OAuth tokens belonging to some users of its AI Office Suite were compromised during the incident. 
The suite allowed consumer users to enable AI agents to perform actions across external applications, facilitated by another third-party service. The statement explained: "One of those tokens was used by the attacker to access Vercel's Google Workspace. Vercel is not a Context customer, but it appears that at least one employee enabled 'allow all' on all requested Google Workspace permissions using their Vercel Google Workspace account." The permissions were intended to enable AI agents to carry out actions in Google Workspace on the user's behalf, such as writing emails or creating documents. Context.ai has since taken down the environment and the AI Office Suite's OAuth application. "We are supporting a subset of AI Office Suite users potentially impacted by a recent security incident that we detected and stopped," the company stated. "This incident does not affect Context's enterprise customers, whose Bedrock deployments run in their own infrastructure."

Shadow AI Tools Introduce Hidden CX Risks

The infiltration of a Vercel employee's third-party tool, which enabled access to the company's internal systems, highlights the risk to customer data from "shadow AI." As AI tools become embedded in day-to-day workflows, employees using them outside formal procurement and security review processes can inadvertently create hidden vulnerabilities. A compromise originating in a seemingly low-risk tool in customer support, product, and engineering functions can escalate into a security breach or accidental data exposure. That dynamic also complicates accountability and communication, as incidents tied to shadow AI tools can be harder for enterprises to detect and explain. CX teams may be required to respond to customer concerns before there is a clear internal narrative, increasing the risk of inconsistent or incomplete messaging.
Browne emphasized the importance of clear communication and a focus on the impact on customers, posting: "Fwiw, I am impressed with how Vercel has handled this incident so far. They're taking it seriously. Notifying affected parties within minutes of identification. Being realistic about what they do and don't know. They're clearly more worried about their customers than their reputation right now and I have a lot of respect for that." "There's also a bunch of third parties they could throw under the bus but they are fully focused on fixing the issues instead." Hudson Rock, however, did not hold back in pointing the finger: "Hudson Rock obtained this compromised credential data over a month ago. Had this infostealer infection been identified and the exposed credentials revoked immediately, this entire supply-chain attack could have been completely prevented." The incident emphasizes "the critical importance of rapid detection and quick remediation of infostealer credentials before threat actors have the opportunity to operationalize the stolen access," the firm added.

AI-Powered Cyber Attacks Raise Stakes for CX Resilience

With Rauch noting that the attack on Vercel appeared to have been accelerated by the hacker using AI, Browne also warned that the prevalence of such cyber attacks will continue to rise as AI models become more capable of exploiting security vulnerabilities. "Incidents like this are never easy. We're going to start seeing more and more of them as LLMs get more powerful. IMO, they're doing this right." As this incident indicates, the growing presence of shadow AI in enterprise environments and the use of AI agents to perform actions autonomously expand the attack surface in ways that are likely to surface in the customer experience first, rather than in backend systems.
Enterprises increasingly need to ensure they incorporate AI governance into their CX risk management, setting policies around tool usage, access controls, and integration boundaries to help ensure customer-facing experiences remain stable and trustworthy.

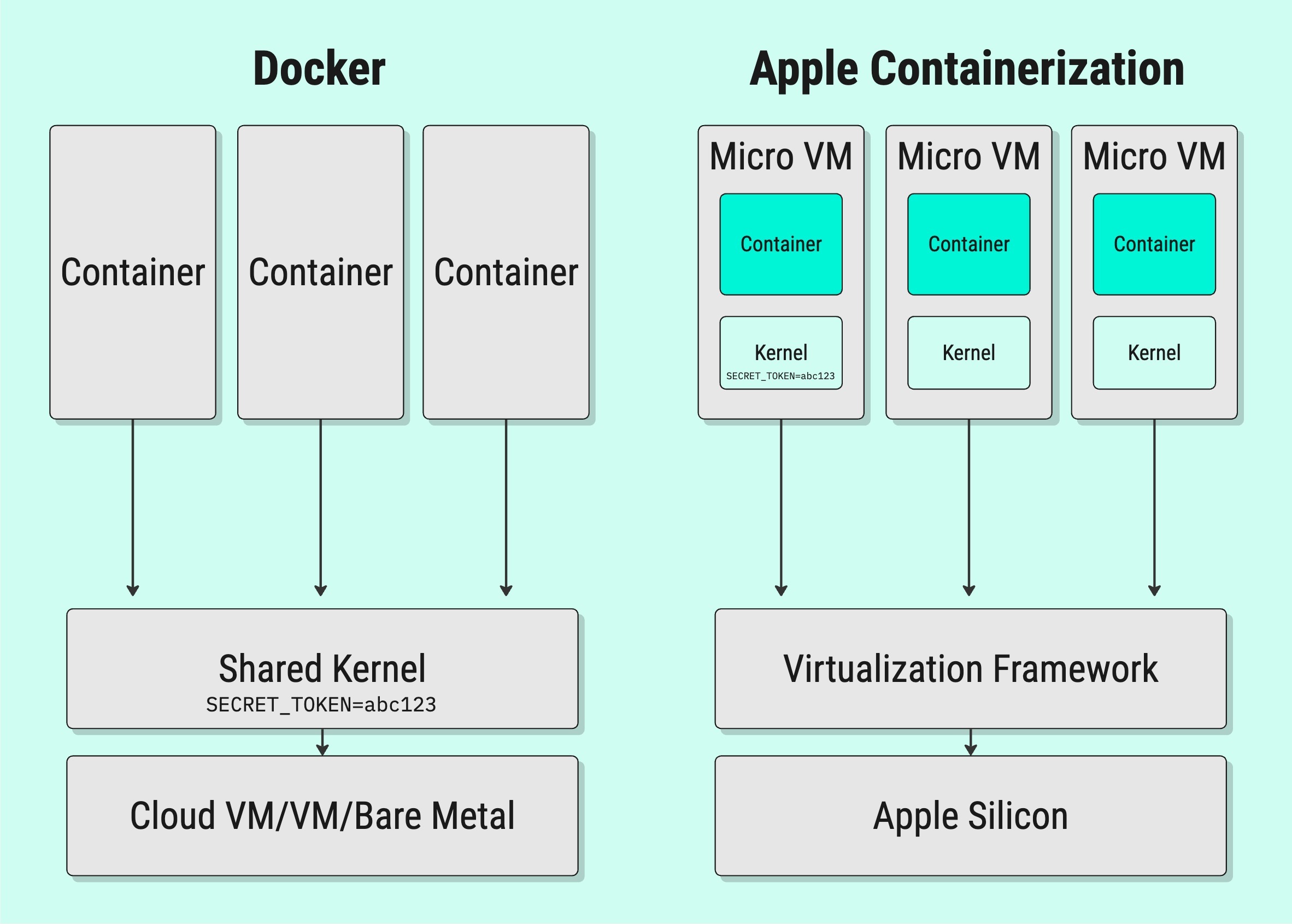

NanoClaw 2.0 Introduces Human-in-the-Loop AI Security with Vercel and OneCLI Integration for Safe Agent Actions Across 15 Messaging Platforms - News Directory 3

The creators of the open source sandboxed NanoClaw agent framework -- now operating under their new private startup named NanoCo -- have announced a landmark partnership with Vercel and OneCLI to introduce a standardized, infrastructure-level approval system for enterprise AI agents. This launch addresses a critical barrier to AI agent adoption: the tradeoff between utility and security that has forced organizations to either severely restrict agent capabilities or risk unintended consequences from autonomous actions. By integrating Vercel's Chat SDK and OneCLI's open source credentials vault, NanoClaw 2.0 ensures that no sensitive action occurs without explicit human consent, delivered natively through the messaging apps where users already live. The system intercepts outbound requests from AI agents and requires human approval before allowing access to real credentials, effectively preventing unauthorized actions even if an agent is compromised. The specific use cases that stand to benefit most are those involving high-consequence "write" actions. In DevOps, an agent could propose a cloud infrastructure change that only goes live once a senior engineer taps "Approve" in Slack. For finance teams, an agent could prepare batch payments or invoice triaging, with the final disbursement requiring a human signature via a WhatsApp card. The fundamental shift in NanoClaw 2.0 is the move away from "application-level" security to "infrastructure-level" enforcement. In traditional agent frameworks, the model itself is often responsible for asking for permission -- a flow that Gavriel Cohen, co-founder of NanoCo, describes as inherently flawed. "The agent could potentially be malicious or compromised," Cohen noted. "If the agent is generating the UI for the approval request, it could trick you by swapping the 'Accept' and 'Reject' buttons." 
NanoClaw solves this by running agents in strictly isolated Docker or Apple Containers. The agent never sees a real API key; instead, it uses "placeholder" keys. When the agent attempts an outbound request, the request is intercepted by the OneCLI Rust Gateway. The gateway checks a set of user-defined policies (e.g., "Read-only access is okay, but sending an email requires approval"). If the action is sensitive, the gateway pauses the request and triggers a notification to the user. Only after the user approves does the gateway inject the real, encrypted credential and allow the request to reach the service. While security is the engine, Vercel's Chat SDK is the dashboard. Integrating with different messaging platforms is notoriously difficult because every app -- Slack, Teams, WhatsApp, Telegram -- uses different APIs for interactive elements like buttons and cards. By leveraging Vercel's unified SDK, NanoClaw can now deploy to 15 different channels from a single TypeScript codebase. When an agent wants to perform a protected action, the user receives a rich interactive card on their phone. "The approval shows up as a rich, native card right inside Slack or WhatsApp or Teams, and the user taps once to approve or deny," said Cohen. This "seamless UX" is what makes human-in-the-loop oversight practical rather than a productivity bottleneck. NanoClaw launched on January 31, 2026, as a minimalist and security-focused response to the "security nightmare" inherent in complex, non-sandboxed agent frameworks. Created by Cohen, a former Wix.com engineer, and marketed by his brother Lazer, CEO of B2B tech public relations firm Concrete Media, the project was designed to solve the auditability crisis found in competing platforms like OpenClaw, which had grown to nearly 400,000 lines of code. 
By contrast, NanoClaw condensed its core logic into roughly 500 lines of TypeScript -- a size that allows the entire system to be audited by a human or a secondary AI in approximately eight minutes. The platform's primary technical defense is its use of operating system-level isolation. Every agent is placed inside an isolated Linux container -- utilizing Apple Containers for high performance on macOS or Docker for Linux -- to ensure that the AI only interacts with directories explicitly mounted by the user. In March 2026, NanoClaw further matured this security posture through an official partnership with the software container firm Docker to run agents inside "Docker Sandboxes". This integration utilizes MicroVM-based isolation to provide an enterprise-ready environment for agents that, by their nature, must mutate their environments by installing packages, modifying files, and launching processes -- actions that typically break traditional container immutability assumptions. Operationally, NanoClaw rejects the traditional "feature-rich" software model in favor of a "Skills over Features" philosophy. Instead of maintaining a bloated main branch with dozens of unused modules, the project encourages users to contribute "Skills" -- modular instructions that teach a local AI assistant how to transform and customize the codebase for specific needs, such as adding Telegram or Gmail support. This methodology ensures that users only maintain the exact code required for their specific implementation. The framework natively supports "Agent Swarms" via the Anthropic Agent SDK, allowing specialized agents to collaborate in parallel while maintaining isolated memory contexts for different business functions. NanoClaw remains firmly committed to the open source MIT License, encouraging users to fork the project and customize it for their own needs. This stands in stark contrast to "monolithic" frameworks. 
NanoClaw's codebase is remarkably lean, consisting of only 15 source files and roughly 3,900 lines of code, compared to the hundreds of thousands of lines found in competitors like OpenClaw. The partnership also highlights the strength of the "Open Source Avengers" coalition. By combining NanoClaw (agent orchestration), Vercel Chat SDK (UI/UX), and OneCLI (security/secrets), the project demonstrates that modular, open-source tools can outpace proprietary labs in building the application layer for AI. As shown on the NanoClaw website, the project has amassed more than 27,400 stars on GitHub and maintains an active Discord community. A core claim on the NanoClaw site is that the codebase is small enough to understand in "8 minutes," a feature targeted at security-conscious users who want to audit their assistant. In an interview, Cohen noted that iMessage support via Vercel's Photon project addresses a common community hurdle: previously, users often had to maintain a separate Mac Mini to connect agents to an iMessage account. For enterprises, NanoClaw 2.0 represents a shift from speculative experimentation to safe operationalization. Historically, IT departments have blocked agent usage due to the "all-or-nothing" nature of credential access. By decoupling the agent from the secret, NanoClaw provides a middle ground that mirrors existing corporate security protocols -- specifically the principle of least privilege. Enterprises should consider this framework if they require high-auditability and have strict compliance needs regarding data exfiltration. According to Cohen, many businesses have not been ready to grant agents access to calendars or emails because of security concerns. This framework addresses that by ensuring the agent structurally cannot act without permission. Enterprises stand to benefit specifically in use cases involving "high-stakes" actions. 
As illustrated in the OneCLI dashboard, a user can set a policy where an agent can read emails freely but must trigger a manual approval dialog to "delete" or "send" one. Because NanoClaw runs as a single Node.js process with isolated containers, it allows enterprise security teams to verify that the gateway is the only path for outbound traffic. This architecture transforms the AI from an unmonitored operator into a supervised junior staffer, providing the productivity of autonomous agents without forgoing executive control. NanoClaw is recommended for organizations that want the productivity of autonomous agents without the "black box" risk of traditional LLM wrappers. It turns the AI from a potentially rogue operator into a highly capable junior staffer who always asks for permission before hitting the "send" or "buy" button. As AI-native setups become the standard, this partnership establishes the blueprint for how trust will be managed in the age of the autonomous workforce.
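
The approval flow described in this article (placeholder key, policy check, pause for human approval, then credential injection) can be sketched roughly as follows. This is a minimal illustration in Python, not NanoClaw or OneCLI code: `Gateway`, `Policy`, `OutboundRequest`, and the `ask_human` callback are hypothetical names, and the real gateway is described as a Rust service that stores credentials encrypted.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional, Set

# Hypothetical stand-in for the "placeholder" key the agent is given.
PLACEHOLDER = "PLACEHOLDER_KEY"


@dataclass
class OutboundRequest:
    service: str                  # e.g. "gmail" (illustrative)
    action: str                   # e.g. "read", "send", "delete"
    api_key: str = PLACEHOLDER    # the agent never holds the real secret


@dataclass
class Policy:
    # Actions allowed without a prompt; everything else needs a human.
    auto_allow: Set[str] = field(default_factory=lambda: {"read"})

    def needs_approval(self, request: OutboundRequest) -> bool:
        return request.action not in self.auto_allow


class Gateway:
    """Sits between the agent and the outside service (assumed design)."""

    def __init__(
        self,
        vault: Dict[str, str],
        policy: Policy,
        ask_human: Callable[[OutboundRequest], bool],
    ) -> None:
        self._vault = vault          # service name -> real credential
        self._policy = policy
        self._ask_human = ask_human  # e.g. sends an approval card to chat

    def forward(self, request: OutboundRequest) -> Optional[OutboundRequest]:
        """Return the request with the real key injected, or None if blocked."""
        if request.service not in self._vault:
            return None
        if self._policy.needs_approval(request) and not self._ask_human(request):
            return None
        # Inject the real credential only after the checks have passed.
        return OutboundRequest(
            request.service, request.action, self._vault[request.service]
        )


# Example: a reviewer who denies every prompt.
gateway = Gateway({"gmail": "real-secret"}, Policy(), ask_human=lambda req: False)
read_result = gateway.forward(OutboundRequest("gmail", "read"))
send_result = gateway.forward(OutboundRequest("gmail", "send"))
```

In this sketch the read goes through with the real key attached, while the send is dropped because the reviewer declined. The point of the design is that the decision and the secret both live outside the agent's process.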

Vercel Confirms Cyber Incident After Sophisticated Attacker Exploits Third‑Party Tool - IT Security News


Vercel Confirms Cyber Incident

Next.js developer Vercel has confirmed a cyber-incident conducted by a "highly sophisticated" attacker which may have resulted in threat actors getting hold of sensitive internal data. The US firm, which provides developer tools and cloud infrastructure, said in an updated April 21 notice that the unauthorized access originated from an employee's use of a third-party tool, Context.ai. "The attacker used that access to take over the employee's Vercel Google Workspace account, which enabled them to gain access to some Vercel environments and environment variables that were not marked as sensitive," it added. "Environment variables marked as 'sensitive' in Vercel are stored in a manner that prevents them from being read, and we currently do not have evidence that those values were accessed." Vercel claimed that the attacker was "highly sophisticated based on their operational velocity and detailed understanding of Vercel's systems". However, it confirmed that none of its npm packages were compromised and there's no evidence of tampering, meaning projects like popular React framework Next.js are safe. Vercel said it has already reached out to "a limited subset of customers whose non-sensitive environment variables stored on Vercel" were compromised. According to screenshots posted to X (formerly Twitter), a threat actor purporting to be part of the ShinyHunters collective is trying to extort Vercel to the tune of $2m. They claim to have access to multiple employee accounts "with access to several internal deployments," as well as API keys, npm/GitHub tokens, source code and databases. As it works with Mandiant to ascertain the validity of the threat actor's claims, Vercel has issued advice for customers. Cory Michal, CISO at AppOmni, traced the breach back to the OAuth access Context.ai provided to the Vercel employee's Google Workspace account. 
"Once a user authorizes one app, that trust can extend into email, identity, CRM, development, and other systems in ways many organizations do not fully inventory or monitor, which makes a single compromised integration a powerful pivot point," he added. "The key lesson is that third-party risk management cannot stop at reviewing a vendor's SOC 2 report or penetration test results. Organizations need continuous visibility into how third-party applications are actually connected across their SaaS estate, what OAuth grants and integration tokens they hold, and how those relationships could be abused if one provider is compromised."
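
The kind of continuous inventory Michal describes could start with something as simple as the check below. It is a rough Python sketch over made-up records, not a call into any real admin API: the `OAuthGrant` type, the app names, and the scope labels are all hypothetical, and a real audit would pull grants from the identity provider's own reporting tools.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass(frozen=True)
class OAuthGrant:
    user: str
    app: str
    scopes: Tuple[str, ...]


def flag_risky_grants(
    grants: List[OAuthGrant],
    approved_apps: Set[str],
    broad_scopes: Set[str],
) -> List[Tuple[OAuthGrant, List[str]]]:
    """Return each grant that is unapproved or holds a broad scope, with reasons."""
    findings = []
    for grant in grants:
        reasons = []
        if grant.app not in approved_apps:
            reasons.append("app not on approved list")
        broad = sorted(set(grant.scopes) & broad_scopes)
        if broad:
            reasons.append("broad scopes: " + ", ".join(broad))
        if reasons:
            findings.append((grant, reasons))
    return findings


# Illustrative data only; none of these names come from the incident.
grants = [
    OAuthGrant("alice@example.com", "approved-calendar-app", ("calendar.readonly",)),
    OAuthGrant("bob@example.com", "unreviewed-ai-suite", ("mail.full", "drive.full")),
]
findings = flag_risky_grants(
    grants,
    approved_apps={"approved-calendar-app"},
    broad_scopes={"mail.full", "drive.full"},
)
```

Here only the second grant is flagged, for both reasons. Running a check like this on a schedule, rather than once at vendor onboarding, is what turns it into the ongoing visibility the quote calls for.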

Vercel breach, ZionSiphon targets water infrastructure, Bluesky DDoS - IT Security News
