News & Updates

The latest news and updates from companies in the WLTH portfolio.

ChatGPT vs Gemini vs Claude vs Perplexity: The 2026 AI Engine Landscape

Updated June 2026. Originally published 2023 when Bard launched, refreshed for the AI Communications era -- including Bard's rebrand to Gemini, the rise of Claude and Perplexity, and the structural shift to AI Overviews.

Gemini (formerly Bard) vs ChatGPT vs the Modern AI Engine Landscape

When this piece was originally published in May 2023, the AI chatbot landscape was simple: ChatGPT had taken the internet by storm, and Google had just launched Bard as its first answer. Three years later the landscape looks completely different. Google Bard has been rebranded to Gemini and integrated across Google's product suite. Anthropic's Claude has emerged as one of the most-used AI engines among professionals and developers. Perplexity has become the dominant answer-engine for citation-driven research. ChatGPT itself has gone through multiple GPT model generations. And the most important structural change -- Google AI Overviews now mediates a substantial share of all U.S. search queries with AI-generated answers above the traditional ten blue links.

This piece compares the modern AI engine landscape and what it means for marketers, communicators, and brands operating in the answer-engine era.

The Five Major AI Engines in 2026

ChatGPT (OpenAI)

Launched November 2022, ChatGPT remains the largest single AI chatbot by consumer adoption. The product has gone through GPT-3.5, GPT-4, GPT-4o, and subsequent model generations. ChatGPT now offers web browsing, file uploads, image generation, voice mode, and integration with custom tools. The user base spans casual consumers, students, professionals, and developers. ChatGPT is generally regarded as strong on writing, summarization, brainstorming, and conversational tasks.

Gemini (Google, formerly Bard)

Google rebranded Bard to Gemini in February 2024 and has continued to expand the product across Google's surfaces -- the standalone Gemini app, the AI Overviews appearing in Google Search results, the AI-powered features in Gmail, Docs, Sheets, and the broader Workspace suite, and the Android assistant integration. Gemini's strengths reflect Google's data and infrastructure advantages: real-time web access, multimodal capability, integration with the broader Google ecosystem, and the unique distribution that places AI answers in front of billions of daily searchers.

Claude (Anthropic)

Anthropic's Claude has emerged as one of the most-used AI engines among professionals, developers, and enterprise users. Claude is generally regarded as strong on long-form writing, complex reasoning, coding, and tasks requiring careful instruction-following. The user base skews professional and technical relative to ChatGPT's broader consumer reach. Claude is available through the standalone Claude app, the API, and integration with platforms like Slack and increasingly the broader enterprise software ecosystem.

Perplexity

Perplexity launched as an "answer engine" -- an AI-powered search experience that returns synthesized answers with explicit citations to the sources. The product has become the dominant tool for citation-driven research, professional information work, and any use case where users need to verify the source of an AI answer. Perplexity's growth has been particularly strong among researchers, journalists, financial analysts, and other professionals whose work depends on traceable sourcing.

Google AI Overviews

Not a chatbot but the most consequential AI engine in the search market. AI Overviews are the Google-generated AI summaries that now appear above traditional search results on a substantial share of U.S. queries.
For brands, AI Overviews are the single most important AI surface to optimize for, because they intercept buyer research at the moment of intent. The brand mentioned inside the AI Overview wins the consideration. The brand omitted is invisible.

The Original Comparison -- What Changed Since 2023

When this piece was first written, the comparison was simpler. ChatGPT was trained on data up to 2021 and could not browse the web. Bard used Google's real-time web access. ChatGPT used GPT-3.5 (with GPT-4 in the paid tier). Bard used PaLM 2. Both were "in the initial phases of learning."

The structural changes since:

* Real-time web access is now standard across all major AI engines, not a Bard differentiator.
* Bard became Gemini and the underlying model migrated from PaLM 2 through the Gemini model family.
* ChatGPT's training data window moved forward through multiple model generations.
* The "summarizing and writing vs. comprehensive answers" distinction has largely collapsed -- all major AI engines now do both well.
* The arrival of Claude and Perplexity changed the landscape from a two-horse race into a multi-engine ecosystem where buyers and professionals use different engines for different tasks.
* The arrival of AI Overviews made the comparison less about chatbot UX and more about which AI surfaces mediate buyer research.

What This Means for Brands and Communicators

Three implications:

1. Citation Share across multiple engines is the new metric. Brands need to measure their appearance inside ChatGPT, Claude, Perplexity, Gemini, and Google AI Overviews -- not just one engine. The brand that appears inside three of five engines is differently positioned than the brand that appears inside only one.
2. Generative Engine Optimization (GEO) is the new discipline. The optimization work that made content findable in Google has evolved. GEO is the practice of structuring content, building editorial authority, and creating the citation patterns that AI engines reward.
3. AI Communications is the new function. Public relations, digital marketing, GEO, and AI-visibility research now combine into a single discipline. The firms and in-house teams that operate across this stack are pulling ahead of teams still organized around the pre-AI playbook.

Other AI Engines and Tools Worth Knowing

Beyond the five major engines above, the AI tool landscape includes specialist products (Jasper for marketing content, Cursor and GitHub Copilot for code, Midjourney and DALL-E for image generation), open-source models (Meta's Llama family, Mistral, others), enterprise-focused offerings, and the Chinese AI ecosystem (Baidu's Ernie, ByteDance's Doubao, DeepSeek). For Western brands building AI Communications strategies, the five major engines covered above are the priority. The specialist and open-source tooling supports the workflow but does not currently mediate buyer research at scale.

Frequently Asked Questions

Is Google Bard the same as Gemini?

Yes. Google rebranded Bard to Gemini in February 2024 and consolidated its consumer and enterprise AI offerings under the Gemini name. The underlying model and the product surface have continued to evolve.

Which AI engine is best for which task?

ChatGPT remains the leading consumer AI for general use. Claude is preferred by many professionals for long-form writing and complex reasoning. Perplexity is the dominant citation-driven research tool. Gemini wins on Google ecosystem integration and AI Overviews distribution. The right answer depends on the task -- and most professionals now use multiple engines for different jobs.

What is the most important AI engine for brand visibility?

Google AI Overviews, because of its reach inside Google Search where most consumer and B2B research still begins. ChatGPT, Claude, Perplexity, and Gemini are also important -- measurement and optimization should cover all five for any brand serious about AI Communications.

How should marketers prepare for the AI Communications era?

Build Citation Share measurement across the five major engines, develop Generative Engine Optimization (GEO) capability, accumulate editorial authority through original reporting and named expert voices, and reorganize the marketing and communications function around AI Communications as an integrated discipline rather than treating AI as a content-generation tool inside the existing function.

CNN sues Perplexity AI over alleged scraping of 17,000 news stories

CNN's lawsuit against Perplexity, OpenAI's EU compliance framework, and the DOJ's intervention in Colorado's AI Act all landed on the same day.

On a single day, Thursday, CNN filed a copyright lawsuit against Perplexity AI; OpenAI published a formal internal governance framework aligned to EU and California law; and the US Department of Justice filed its first-ever federal challenge to a state AI statute, in Colorado. Taken together, the three developments represent something the industry had wanted to avoid: the fragmentation of artificial intelligence regulation across courts, businesses, and governments, with no single approach gaining traction.

Why are publishers suing Perplexity?

In its 54-page complaint, CNN claims that the AI startup Perplexity scraped and distributed more than 17,000 of its articles, photographs, and videos and used them as input for AI-generated answers that, according to CNN, compete with its news content. CNN also alleges that Perplexity falsely implied a content relationship by advertising CNN premium access to subscribers of its Comet Plus tier, despite no licensing agreement existing between the two companies. The two companies had held negotiations before the lawsuit was filed.

Perplexity Chief Communications Officer Jesse Dwyer responded on the company's behalf: 'You can't copyright facts.' CNN's claim, however, rests on the infringement of copyrights in works containing protected expression, such as articles, photos, and videos.

Other news organizations, such as Time, Gannett, Le Monde, and Der Spiegel, chose to license their content to Perplexity instead of suing. Still, the price of legally licensed copyrighted training data grows with each new legal case. In Bartz v. Anthropic, a class action in which the plaintiffs claimed that their copyrighted books were used to train AI models without their consent, a settlement worth billions is awaiting court approval in mid-May 2026 in the Northern District of California.

How Perplexity's 'Personal Computer' Marketing is Turning Apple's Mac Mini Into the Hottest Hardware in AI

The scramble to secure computing power for artificial intelligence is no longer confined to billion-dollar data centers and advanced Nvidia chips. Increasingly, it is spilling into the consumer hardware market, where an unlikely device has emerged as a favorite among AI developers, power users and technology enthusiasts: Apple's Mac Mini. That trend is now being amplified by Perplexity, which has been sending Mac Minis to select users as part of an effort to showcase its new AI agent platform, Personal Computer. A handful of technology-focused creators began posting online in recent weeks that they had received Mac Minis from Perplexity. The company later confirmed it had distributed a small number of devices to people interested in exploring the full capabilities of Personal Computer, its newest push beyond AI search and into autonomous digital assistants. The campaign may appear at first glance to be a straightforward influencer-marketing exercise. In reality, it highlights a much larger shift underway in the AI industry: the growing importance of personal computing infrastructure as AI agents become capable of performing increasingly complex tasks on behalf of users. Perplexity's Personal Computer, which began rolling out in April, expands on the company's browser-based AI agent technology by allowing AI to operate across local files, native applications, and web services. Unlike traditional chatbots that simply answer questions, the system is designed to interact directly with a user's digital environment. Perplexity has described the Mac Mini as "one of the best ways to experience Personal Computer." "On a mini, Personal Computer stays available 24/7 for work that needs a persistent machine or secure local access to your files and native apps," the company wrote in an April blog post. That statement offers a glimpse into where AI development is headed. For years, most advanced AI services have relied almost entirely on cloud computing. 
Users submit requests to remote servers, which process information and return answers. But as AI agents evolve from conversational tools into software capable of carrying out multi-step actions, there is a growing demand for systems that remain continuously available, retain access to local files, and operate with lower latency. The Mac Mini has emerged as an attractive platform for that transition. Its appeal stems from a combination of factors: powerful Apple silicon processors, relatively low energy consumption, quiet operation, and the ability to remain online continuously. Those characteristics make it particularly suitable for running AI agents that need persistent access to applications, documents, and workflows. Perplexity Chief Communications Officer Jesse Dwyer underscored that use case, telling Business Insider that he uses his Mac Mini constantly and accesses it remotely through other Apple devices regardless of location. The enthusiasm around the machine is not limited to Perplexity. Across the AI ecosystem, developers and hobbyists have increasingly embraced the Mac Mini as a dedicated AI workstation capable of running agent-based systems, coding assistants, and local AI applications. What was once considered Apple's most overlooked desktop product is increasingly being viewed as an affordable gateway into AI-powered productivity. The surge in interest has become significant enough to affect supply. During a March earnings call, Tim Cook highlighted strong demand for the product. Availability has tightened in recent weeks as more consumers, developers, and AI enthusiasts seek out the device. Apple's base-model Mac Mini has become increasingly difficult to find, leaving many buyers with higher-priced configurations. The phenomenon illustrates a broader shift in how AI is being commercialized. Much of Wall Street's attention remains focused on companies building large language models, AI chips, and cloud infrastructure. 
Yet a parallel market is emerging around the hardware required to run AI agents in everyday environments. As those systems become more autonomous, users may want dedicated machines that function as personal AI hubs. That could create new opportunities not only for Apple but also for software companies seeking to build ecosystems around AI-native computing. For Perplexity, this strategy also means expanding beyond its roots as an AI-powered search engine into a broader platform designed to compete for users' daily workflows. By promoting Personal Computer through influential technology creators, the company is attempting to position itself at the center of the emerging agent economy. The move comes as rivals race to build similar capabilities. Companies such as OpenAI, Anthropic, and Google are all developing increasingly sophisticated AI agents capable of carrying out tasks rather than merely generating responses.

Perplexity AI Says 'You Can't Copyright Facts' in Defense Against CNN Copyright Suit

More than 100 copyright lawsuits have been filed against AI companies as of early 2026.

The television network CNN is taking aim at the artificial intelligence search engine Perplexity in a lawsuit over copyright infringement. As reported by the network's Brian Stelter, the suit, filed Thursday in a New York District Court, accuses the AI company of copying and distributing CNN's content, including over 17,000 of CNN's stories, videos, images and other published works. Though this is CNN's first legal case against an AI company, the network joins other publishers who have sued the San Francisco-based startup, including the New York Times and News Corp.

According to the suit, CNN attempted to strike a licensing deal with Perplexity, but those talks didn't result in an agreement. CNN made a content licensing deal with Meta last year, under which the tech giant compensates the media company for using its reporting and content to respond to queries on Meta AI. AI products regularly scrape news publications and websites to answer user questions with real-time data, accelerating the collapse in traffic and revenue to original sources.

In response to the lawsuit, Jesse Dwyer, Perplexity's chief communications officer, told Stelter and other media outlets in a statement: "You can't copyright facts." The US government's Copyright Office states: "Copyright does not protect facts, ideas, systems, or methods of operation, although it may protect the way these things are expressed."

CNN said in its own statement that a company valued at tens of billions of dollars shouldn't "steal from entities that create the original content Perplexity exploits" and that "commercial operators can and must pay to make use of it." A Perplexity representative didn't immediately respond to a request for comment.

AI copyright suits

Perplexity is one of several companies, including OpenAI and Anthropic, that have been battling news publishers and media giants over copyright claims.
(Disclosure: Ziff Davis, CNET's parent company, in 2025 filed a lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.)

More than 100 such lawsuits have been filed. But different conclusions have been reached as to whether training AI models on copyrighted data counts as fair use, said Michael Goodyear, an associate professor at New York Law School. Considerations include how the training occurs, what AI outputs contain and whether there's any competitive harm to copyright holders.

"No appellate courts have yet weighed in on the viability of these copyright infringement claims against AI companies," Goodyear said.

In the CNN case, he said that Perplexity is correct that facts aren't protected by copyright, but the way CNN presents facts could be.

"Even short news articles would typically qualify for copyright protection under the low bar of required originality," Goodyear said. "The question becomes whether the thousands of cases of infringement CNN describes are copying whole paragraphs verbatim, or whether they are paraphrasing or merely copying unprotectable facts."

AI licensing deals

As plunging website traffic has drained billions in publisher revenue and triggered widespread media layoffs, AI firms are aggravating the crisis. According to a new report from the think tank Open Markets Institute, over the past six months, the rate of AI crawlers bypassing paywalls and blocks has nearly quadrupled, spiking from 3.3% to 12.9%.

That's partly why a number of publishers signed AI content licensing deals with tech companies to monetize content used to train AI systems. One way out for Perplexity may be to renegotiate a licensing deal with CNN. Even if Perplexity has valid legal arguments, a licensing agreement could shift the relationship from unauthorized scraping toward a formalized content partnership.
However, the Open Markets Institute report says that when it comes to AI content licensing, news and content creators are trapped in a double bind. The same tech giants whose AI tools are starving websites of human traffic are now the ones gatekeeping the licensing deals meant to replace that lost ad revenue.

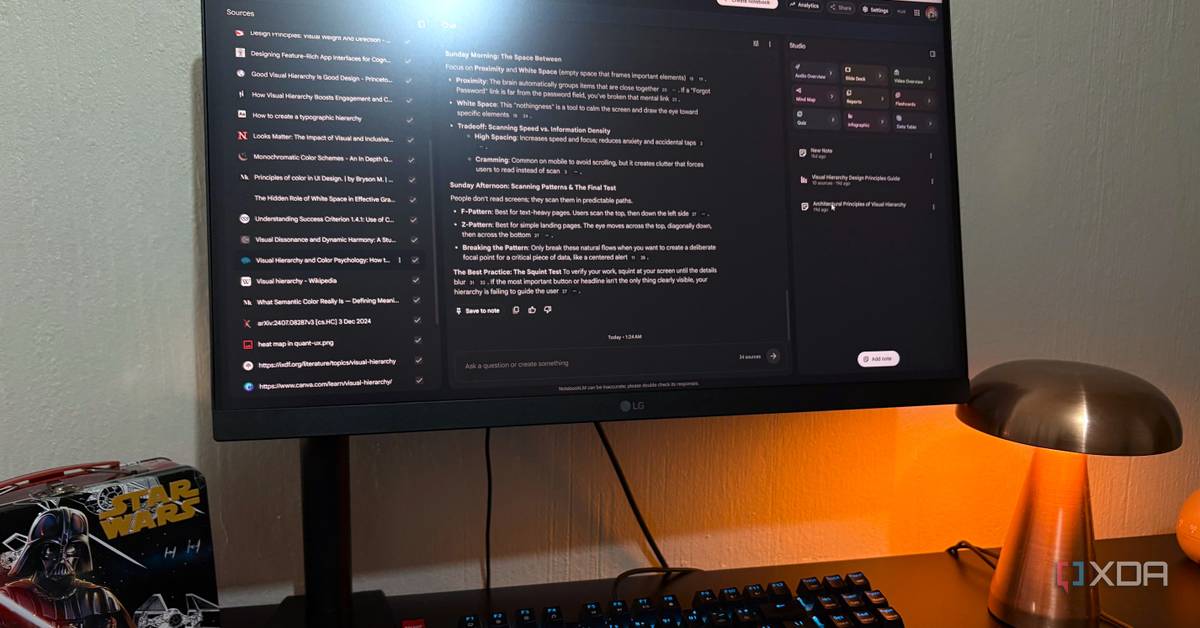

I paid for Perplexity, Claude, and NotebookLM, and only one was worth keeping

There's a point in AI adoption where the tools stopped being optional. Think about it: how many tools have you tried out at this point, or even become reliant on, across domains like productivity, creativity, coding, and learning? A big chunk of this stuff happens in some kind of chat window now. This shift toward AI isn't really news anymore, but with so many new companies popping up all wanting you to try their product, the bill is. And it's not like AI subscriptions are $5 throwaways - some are $20 a pop, if not more, and they all sit alongside whatever else you've already got renewing in the background.

Every single AI tool is competing for the same slot in your monthly budget. So the key is to pick only what you actually need, and stick to the free tiers on the rest. At least, that's how I've been approaching it. And that's why I gave three different categories a fair shot: a search engine, source-grounded research, and a general chatbot. I subscribed to Claude, Perplexity, and NotebookLM (Google AI), and here's how that panned out for me...

The case for Perplexity Pro

My other search engines were already giving me what I needed for free

First up is Perplexity. The Pro tier is $20 a month, and it's the obvious upgrade pitch for anyone who already uses the free version a lot.
You get unlimited Pro Searches instead of being capped daily, access to bigger models like GPT-5, Claude Sonnet 4.6, and Gemini 3 Pro instead of being routed through whatever default it gives you, way more file uploads inside Spaces (50 per Space instead of 5), and Deep Research without the monthly limit you hit pretty quickly on the free plan. There's also image generation, some API credits, and access to a few premium data sources like Statista that would normally cost money to read.

So on paper, I was getting plenty. And I do genuinely like Perplexity; the citation system is one of the better things going for it, and Deep Research is great when you actually need a structured multi-source breakdown of something instead of just an answer. Spaces also has potential as a sort of project hub for research.

But after the month was over, I didn't feel like I was actually getting anything that I couldn't already get by combining my existing search engines and chatbots. Both Google and Brave have some AI features baked in already, and their AI summaries and dedicated chatbots have gotten really good over the past year or so. So the $20 didn't earn its place for me. However, if your stack is research-heavy, I would actually recommend Perplexity Pro.

Claude Pro's turn

You don't need to be a coder to get your money's worth

Claude Pro is also $20 a month, and you get a lot for it - the obvious thing being five times the usage of the free tier, but the bigger draw is access to Opus 4.7 (Anthropic's most capable model) and Sonnet 4.6, both with a 200k context window that you can push up to 1 million tokens with Extra Usage enabled. You also get unlimited Projects instead of being capped at 5, Artifacts with way more headroom, the inline interactive visuals, Claude Design, and Cowork. Claude Code is also included but it's not something I need for my workflow as of yet.

The thing about Opus 4.7 is that it actually pushes back, which is a weirdly rare thing in chatbots right now. Most of them are just trying to agree with you faster. Opus will tell me when an approach isn't going to work, and that makes it a much better thinking partner for the design work and research I do.

Projects is still the feature I lean on the hardest - my design briefs, novel drafts, and research stacks all live in their own contained spaces, which means no re-explaining the context every single time. Claude Design has also been a nice addition for quick visual mockups and honestly just playing around with UI concepts. Plus, I've got Cowork set up to handle a couple of folders in my Obsidian vault and screenshots directory on a schedule. Usage caps still hit on the heaviest design days, but most of the time the sessions stay smooth.

So $20 for Opus, Artifacts, Projects, inline visuals, Claude Design, and Cowork - all wrapped in an interface that's mostly pleasant to use - is the easiest subscription justification in my stack. It's truly an all-in-one tool that spans so many domains, and it's why I've actually had the subscription for three months now.

And then there's NotebookLM

The cheapest option, with unexpected benefits

NotebookLM is the one I'm newest to in subscription form. You can't actually buy NotebookLM Plus on its own - it's bundled into Google AI Plus, which is $8 a month and is the cheapest of the three.
The plan bumps up NotebookLM's limits across the board: 200 notebooks instead of 100, 100 sources per notebook instead of 50, 200 daily chat queries instead of 50, more Audio and Video Overviews per day, and 3 Deep Research reports daily instead of 10 a month. Most of the limits roughly double, and some go up by more.

I haven't been on it for a full month yet, so the verdict is still a bit early. But the source bump alone has been the most useful thing for me - my notebooks already hit the free tier ceiling pretty quickly, so being able to load more in without juggling them has been worth it on its own. What I didn't consider was the Gemini side of things: Google AI Plus also gives you access to Gemini 3 Pro in the Gemini app with a bigger context window and higher daily prompt limits, so my Gemini chats feel noticeably more capable and run longer.

If Claude wasn't a thing, Google AI is probably the subscription I would have swung toward because Gemini is a close second for me. Who knows, I might even renew it next month.

It honestly just depends on what you actually need

There's no universally right answer here. If your work is research-heavy, I'd probably go with Perplexity. NotebookLM is a steal if you're already deep into the Google ecosystem and use NotebookLM regularly. Claude is the one that does the most across the widest range of work.

For me, Claude is the one I'm keeping without a doubt because of its models, the design workflows, and projects. I will say, Gemini is actually better with live web data than Claude, so I'm actually tempted to keep the Google AI sub and see where it goes, but the free tier has been serving me well too.

At the end of the day, it entirely depends on your workflow and preferences. I just wouldn't recommend stacking three or more of them just because the hype says you should. Figure out what kind of AI work you actually do most, and pay for that one, if the free tier has too many barriers.

Perplexity Built a Tool That Checks Your Computer for Infected Software -- Without Setting Off the Infection - Decrypt

It's the first open-source scanner to treat MCP config files -- the connectors that give AI tools access to your data -- as a security surface.

Imagine you suspect someone poisoned a bottle of water in your house. To check, you drink from every bottle. That's roughly how most security scanners work. Perplexity just open-sourced a tool called Bumblebee that takes a different approach. It scans developer computers for infected software packages, malicious browser extensions, and compromised AI tool configs -- without ever running the code it finds. It reads the ingredient label instead of eating the food.

On May 11, a hacker group called TeamPCP slipped malicious code into over 160 software packages used by millions of developers worldwide -- including packages from Mistral AI, UiPath, and a widely used React tool with 12 million weekly downloads. The attack spread automatically the moment developers installed those packages. Perplexity's Bumblebee could have prevented that, the company says.

Software packages -- especially in the JavaScript world -- can run hidden scripts the moment you install them. That's exactly how the May 11 attack spread so fast. The malicious code fired automatically on install, before anyone noticed anything was wrong. A scanner that invokes the package manager to check for infections can trigger those same scripts. You go looking for the worm; the worm runs. Bumblebee sidesteps this by never calling any package manager at all. It reads raw metadata files -- the records that describe what's installed -- without touching the software itself.

The genuinely new piece is that Bumblebee also scans MCP configuration files -- the local files that tell AI assistants like Claude or Cursor which external services they're allowed to connect to. MCP connectors give AI tools access to emails, databases, calendars, and code.
If an attacker sneaks a malicious connector into that config, your AI assistant could leak credentials or run unauthorized commands in the background. Most security tools aren't checking for this yet. Beyond MCP, it covers browser extensions on Chrome, Edge, Brave, Arc, and Firefox, plus editor plugins in VS Code and its forks. The whole scan happens in one pass, outputs a clean structured list of what it found, and never modifies anything on the machine. Perplexity has been running Bumblebee internally to protect the systems behind its search product, its Comet browser, and its Computer AI agent. When a new threat surfaces, Perplexity Computer drafts a catalog entry for it, a human reviews and approves it, and Bumblebee runs across all developer machines to check for matches. Teams can run their own catalogs the same way. The tool ships with a built-in threat directory seeded from recent supply-chain attacks, including the May 11 campaign. The group behind that attack -- tracked by Google under the alias UNC6780 -- has been running coordinated software poisoning campaigns since at least March 2026. Bumblebee is available free at github.com/perplexityai/bumblebee under Apache 2.0, which means you can run it, tweak it, improve it and fork it without legal repercussions.
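
The read-only approach described above can be illustrated with a minimal sketch. This is not Bumblebee's actual code or catalog format. It assumes npm-style `package.json` manifests under `node_modules` and an MCP client config that lists connectors under an `mcpServers` key. The names `KNOWN_BAD`, `scan_node_modules`, `scan_mcp_config`, and the `allowed_commands` policy are hypothetical, introduced only for illustration. The point is that every file is parsed as data and no package manager or installed code is ever invoked.

```python
import json
from pathlib import Path

# Hypothetical catalog of (name, version) pairs; a real threat catalog
# would be richer. This entry is made up for the example.
KNOWN_BAD = {("evil-pkg", "1.2.3")}

# npm lifecycle hooks that run automatically at install time.
LIFECYCLE_HOOKS = ("preinstall", "install", "postinstall")


def read_json(path):
    """Parse a file as JSON data; return None if unreadable or malformed."""
    try:
        return json.loads(Path(path).read_text(encoding="utf-8"))
    except (OSError, ValueError):
        return None


def scan_node_modules(root):
    """Yield findings by reading package.json manifests, never running npm."""
    for manifest in sorted(Path(root).rglob("package.json")):
        if "node_modules" not in manifest.parts:
            continue
        data = read_json(manifest)
        if not isinstance(data, dict):
            continue
        name, version = data.get("name"), data.get("version")
        scripts = data.get("scripts")
        scripts = scripts if isinstance(scripts, dict) else {}
        hooks = [h for h in LIFECYCLE_HOOKS if h in scripts]
        known_bad = (name, version) in KNOWN_BAD
        if known_bad or hooks:
            yield {
                "package": name,
                "version": version,
                "known_bad": known_bad,
                "install_hooks": hooks,
                "path": str(manifest),
            }


def scan_mcp_config(path, allowed_commands=("npx", "node", "python", "uvx")):
    """List MCP connectors whose launch command is outside an allow-list.

    The allow-list is an illustrative policy, not a rule from the article.
    """
    data = read_json(path)
    servers = data.get("mcpServers") if isinstance(data, dict) else None
    findings = []
    for name, spec in (servers if isinstance(servers, dict) else {}).items():
        command = spec.get("command") if isinstance(spec, dict) else None
        if command not in allowed_commands:
            findings.append({"server": name, "command": command})
    return findings
```

Calling `list(scan_node_modules("."))` in a project directory would return one entry per flagged manifest, and `scan_mcp_config(path)` takes the path to whichever MCP config file the AI client in question uses. Both functions only read files and return plain structured data, which mirrors the "never modifies anything on the machine" property the article describes.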

xAI Says Grok V9-Medium Is Almost Here. Should Claude and ChatGPT Be Worried?

xAI's new 1.5 trillion parameter model trained on Cursor coding data drops in 2 to 3 weeks.

Elon Musk posted on X early Monday morning (5:48 AM UTC), May 25, that xAI has finished training Grok foundation model V9-Medium. According to him, this model will come with 1.5 trillion parameters, which is three times bigger than the current version running Grok today. The public release timeline is between 2 and 3 weeks, with Musk saying evaluations look good, fine-tuning is underway, and reinforcement learning starts in a few days.

What caught attention, though, is Musk's focus on coding. When asked if the new model would perform better at coding tasks, Musk replied directly: "Much better at coding."

The V9-Medium name refers to xAI's internal model version, not the public product name users see. The current Grok app runs on V8, which has 0.5 trillion parameters. V9-Medium triples that size to 1.5 trillion parameters. "This will be a major improvement over the 0.5T v8-small that currently serves all Grok production traffic," Musk said in his post. Parameter count is basically the number of connections inside an AI model. More parameters typically mean the model can handle more complex tasks, though size alone doesn't guarantee better performance.

Musk revealed that xAI added "a lot of Cursor data" during training, with more still coming. Cursor is a code editor that developers at OpenAI, Stripe, and Perplexity actually use. It's basically VS Code with AI features built in for writing and debugging code. Training on Cursor means Grok learned from how real developers work, not just public code on GitHub.

Claude leads on coding right now. Independent testing conducted by Ryz Labs shows it hits about 95% accuracy on coding tasks. ChatGPT comes in around 85%. Claude's Opus 4.6 scores 80.8% on SWE-bench Verified, which developers watch closely. GPT-5.5 scored 88.7% on that same test, while xAI self-reports Grok 4, its current flagship series of models, at 72% to 75%.
So there's ground to make up. If V9-Medium closes the performance gap, the coding AI market gets more competitive. The Cursor training data suggests xAI knows where Claude is strong and is aiming there. Musk also said xAI will open source the 0.5 trillion parameter model "towards the end of this year." Developers could use that to experiment while xAI keeps pushing bigger models. Mid-June 2026 is when V9-Medium should arrive, based on the 2 to 3 week timeline. Whether it matches Claude and ChatGPT on actual coding benchmarks remains to be seen.