News & Updates

The latest news and updates from companies in the WLTH portfolio.

The Customer Is Missing: What The Encyclical And Anthropic Both Forgot

Just last week, on May 25, 2026, Pope Leo XIV released Magnifica Humanitas, a major encyclical on safeguarding human dignity in the age of artificial intelligence. Anthropic's founder was present at the announcement and highlighted its updated Constitution for Claude, one of the most thoughtful governance documents yet produced for a frontier AI model. Both are serious, well-intentioned efforts to confront the challenges of AI. Yet they share a striking and consequential omission: neither document mentions the customer, even once.

The encyclical devotes extensive attention to the "inalienable dignity of the worker," the evils of unemployment, exploitation, new forms of digital slavery, and the need to protect labor amid AI disruption. Anthropic's Constitution carefully defines "helpfulness" in service to "principals" and "users" (primarily organizations and their internal employees), while prioritizing safety, ethics, and human oversight. Both documents are steeped in concern for producers, workers, and internal stakeholders. Yet the people who ultimately pay the bills and judge whether products and services create real value -- the customers -- are not mentioned.

A Telling Blind Spot

This is not a minor linguistic oversight. Catholic Social Teaching has long prioritized the vulnerable, especially workers facing industrial-era exploitation. That focus made sense in 1891 when Leo XIII wrote Rerum Novarum. But in 2026, in mature service and knowledge economies dominated by large organizations, the bigger problem is often different: unproductive or low-value work that persists because incentives are misaligned with customer outcomes. Professor David Graeber famously estimated in his book, Bullshit Jobs (2018), that 30-50% of work in large organizations serves no meaningful purpose. Subsequent surveys have consistently found large numbers of employees who believe their own jobs could disappear without anyone noticing.
When documents of moral authority emphasize worker dignity without a corresponding emphasis on customer value, they risk providing intellectual and moral cover for this phenomenon. The encyclical speaks powerfully about the dignity of the worker, integral human development, and the universal destination of goods. Yet it rarely asks whether the work being protected actually improves customers' lives. Anthropic's Constitution is even more explicit in its inward focus: "helpfulness" is defined relative to operators and users inside paying organizations. The external customer -- the person whose satisfaction ultimately validates economic activity -- is not mentioned even once.

Is SpaceX ushering in a new Space Age?

In many ways, SpaceX's Starship rocket is a metaphor for the company as a whole. It's an impressive piece of space hardware but it's also deceptive when it comes to scale. You can go online and buy a desktop model of the vehicle and when you set it up away from any context of size you fail to grasp just how big and powerful this machine is. You don't realize that the Starship Version 3 (V3) is the height of a 40-story skyscraper. At 408 ft (124 m), it dwarfs the Apollo Saturn V that only measured 363 ft (110 m). The Starship also has almost twice the thrust of the Moon rocket. Add in a payload of over 100 tonnes and Starship is a monster by any standard. Yet, unless you're standing next to it, it can be hard to truly gauge its size. It's the same with SpaceX itself. When it was founded in 2002, it looked like a vanity project of a billionaire electric car maker. Many people still see the company as that, but the truth is that SpaceX went from nothing to the leading orbital launch provider on the planet in a remarkably short time. And the scale of this achievement is much, much larger than popular perception. Since the first Sputnik went into space in October 1957, all of the governments and private companies on Earth have launched an estimated 15,062 payloads into orbit. Starting a tad later in 2008, SpaceX has managed to shoot 14,844 payloads into space - almost equal to the total of the rest of the world combined. By the time you read this, the company may well have exceeded it. To this can be added technological innovations that the aerospace field now takes for granted. SpaceX was the first privately funded company to send a liquid-fueled rocket into orbit in 2008. It sent the first private spacecraft to dock with the International Space Station in 2012. Then there was the first powered landing of an orbital rocket first-stage booster in 2015. And in 2017 we saw the first reflight of an orbital rocket stage.
Then in 2020, SpaceX sent the first private astronaut mission into space. Now, all of this is not to parade SpaceX's achievements, though they are impressive. What is important is that the company isn't some anomaly that could fade away tomorrow. It's the harbinger of what could be a second Space Age - one that could make the first look like a 100-tonne, 15th-century carrack compared to a nuclear-powered, 70,000-tonne, Ro-Ro container ship the size of a small town. What has happened is that SpaceX is the first of a growing number of companies that are overturning and replacing the business models that have dominated the space sector since the beginning. Up until about 20 years ago, space launches were the preserve of national space agencies, the military, and a handful of large businesses. Launches proceeded at a steady cadence, averaging 95 per year worldwide at their height in the 1970s and '80s.

CNN sues Perplexity AI over alleged scraping of 17,000 news stories

CNN's lawsuit against Perplexity, OpenAI's EU compliance framework, and the DOJ's intervention in Colorado's AI Act all landed on the same day

On a single day, Thursday, CNN filed a copyright lawsuit against Perplexity AI; OpenAI published a formal internal governance framework aligned to EU and California law; and the US Department of Justice filed its first-ever federal challenge to a state AI statute, in Colorado. Taken together, the three developments represented something the industry had wanted to avoid: the fragmentation of artificial intelligence regulation across courts, businesses, and governments, with no single approach gaining traction.

Why are publishers suing Perplexity?

In its 54-page complaint, CNN claims that Perplexity, the AI firm, scraped and distributed more than 17,000 of its articles, photographs, and videos, using them as input for AI-generated answers that, according to CNN, compete with its news content. CNN also alleges that Perplexity falsely implied a content relationship by advertising CNN premium access to subscribers of its Comet Plus tier, despite no licensing agreement existing between the two companies. The media organisation and Perplexity had held negotiations before the lawsuit was filed.

Perplexity Chief Communications Officer Jesse Dwyer responded on the company's behalf: 'You can't copyright facts.' CNN's claim, however, rests on the infringement of copyright in works containing protected expression, such as articles, photos, and videos. Other publishers, such as Time, Gannett, Le Monde, and Der Spiegel, chose to license their content to Perplexity instead of suing. Even so, the price of legally licensed copyrighted training data grows with each new legal case. In Bartz v. Anthropic, a class action in which the plaintiffs claim that their copyrighted books were used to train AI models without their consent, a settlement reportedly worth billions is awaiting the court's approval in mid-May 2026 in the Northern District of California.

Weighty issues face SpaceX's Starship programme

The IPO, due on June 12, is expected to raise up to $US80 billion for the company. Its only currently profitable sector is its telecommunications business (Starlink), but SpaceX's long-haul potential is a near-monopoly on launches and other space services for American and other players in the burgeoning space business. That doesn't mean Musk is a confidence man: he really believes in his promises, and though he's not actually an engineer he is deeply involved in the engineering aspects of everything he builds. Musk does push hard at the boundaries of possibility, however, and with Starship he made two promises that are hard to keep: fully re-usable spaceships that deliver 100 tonnes of payload to Low Earth Orbit (LEO). The "reusable" part of the promise is almost done; the "heavy lift" part not so much, because that brings him up against the Rocket Equation. That's Russian scientist Konstantin Tsiolkovsky's classic "rocket equation" of 1903, which implies that around 90% of a rocket's launch weight has to be fuel if you want to put it into Earth orbit. It has not been successfully challenged yet, which is why so many of Starship's launches failed, and none has yet reached orbit. Gadfly journalist Will Lockett, writing on the website Medium, said recently that "Musk ignorantly overstated how much thrust their rockets could generate (to comical levels) and grossly underestimated how much a rocket this giant would need to weigh." A bit harsh, but probably true. That is why every new version of Starship is lighter and more powerful than its predecessor: they simply got their original calculations wrong. Version 3, which flew more or less successfully on 22 May, is much lighter, with much more powerful engines than Version 2, but the lower stage exploded shortly after separating from the upper stage.
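The "around 90%" figure can be reproduced from the Tsiolkovsky equation, delta-v = v_e * ln(m0 / mf), where m0 is launch mass and mf is the mass left once the propellant is burned. The Python sketch below is illustrative only: the 9,400 m/s delta-v budget to low Earth orbit (including gravity and drag losses) and the specific-impulse values are assumed round numbers, not figures from the article or from SpaceX, and the rocket is treated as a single stage for simplicity.

```python
import math

G0 = 9.80665  # standard gravity in m/s^2, used to convert specific impulse to exhaust velocity

def propellant_fraction(delta_v_ms: float, isp_s: float) -> float:
    """Fraction of launch mass that must be propellant for a single-stage rocket.

    Rearranges the Tsiolkovsky equation delta_v = v_e * ln(m0 / mf):
    the mass ratio m0 / mf is exp(delta_v / v_e), and the propellant
    fraction is 1 - mf / m0.
    """
    exhaust_velocity = isp_s * G0
    mass_ratio = math.exp(delta_v_ms / exhaust_velocity)
    return 1.0 - 1.0 / mass_ratio

# Assumed delta-v budget to low Earth orbit, losses included (hypothetical round number).
LEO_DELTA_V_MS = 9_400.0

if __name__ == "__main__":
    # Assumed specific impulses spanning typical chemical engines (illustrative values).
    for isp in (300.0, 350.0, 450.0):
        fraction = propellant_fraction(LEO_DELTA_V_MS, isp)
        print(f"Isp {isp:.0f} s -> propellant is {fraction:.1%} of launch mass")
```

Under these assumptions the propellant share lands in the high 80s to mid 90s per cent, so everything else (structure, engines and payload) has to fit in the small remainder. That is why shaving structural weight has such a large effect on payload.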
The problem is that a lot of that lost weight comes from cutting the margins for safety: bigger and bigger engines on a lighter and weaker frame is a recipe for recurrent explosions. All space flight involves close margins for error, but Starship's are getting tighter and tighter. They can probably work most of the bugs out of Version 3, but it's questionable whether it will ever lift the promised 100 tonnes into orbit or become reliable enough to carry human beings into orbit. That's not a disaster for humanity, or even for Musk. There are other heavy-lift vehicles coming out that can put 50-tonne payloads into orbit (Nasa's SLS, China's Long March 9, Blue Origin's New Armstrong) but even at that carrying capacity Starship would win financially because of its reusability. And if Starship never gets safe enough for people -- well, there are lots of other vehicles that can do that job. As for Musk's more grandiose dreams of bases on the Moon and people on Mars, they are no more or less fantastical than they were before Tsiolkovsky's equation turned out to apply even to him. No rocket-propelled movement in space will ever impose one-tenth of the stress on his vehicles that is involved in struggling up out of Earth's deep gravity well. Elsewhere is different. No other planet or asteroid in the Solar System where human beings might one day want to land has even half of Earth's gravity, and a fully debugged Starship is still a leading contender for those jobs. The long-term solution (which would finally dethrone Tsiolkovsky) is to build a space elevator that remains always over the same spot (if you base it on the equator). It requires a satellite in an orbit 35,000km high with a cable attaching it to the ground at one end and a counterweight in an even higher orbit that holds the whole system under tension. Climbers would crawl up and down the cable to the satellite transporting cargoes and people. 
To get to further destinations, just transfer to real spaceships from the satellite. All this could come to pass once we can build a cable strong enough to support 35,000km of its own weight. Which may take some time, although carbon nanotubes, single crystal graphene and diamond nanothreads are all plausible candidates.
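The 35,000km height quoted for the elevator's satellite is a rounding of geostationary altitude, the height at which one orbit takes exactly one sidereal day, so the satellite stays over the same spot on the equator. A minimal Python sketch, assuming a circular orbit and standard reference values for Earth's gravitational parameter, equatorial radius and sidereal day (none of which appear in the article), recovers the figure from Kepler's third law:

```python
import math

# Assumed physical constants (standard reference values, not taken from the article).
MU_EARTH = 3.986004418e14      # Earth's gravitational parameter GM, m^3/s^2
EARTH_RADIUS_M = 6_378_137.0   # equatorial radius, m
SIDEREAL_DAY_S = 86_164.0905   # one rotation of Earth relative to the stars, s

def geostationary_altitude_km() -> float:
    """Altitude above the equator of a circular orbit whose period is one sidereal day."""
    # Kepler's third law for a circular orbit: T^2 = 4 * pi^2 * r^3 / GM,
    # solved here for the orbital radius r measured from Earth's centre.
    orbit_radius_m = (MU_EARTH * (SIDEREAL_DAY_S / (2 * math.pi)) ** 2) ** (1 / 3)
    return (orbit_radius_m - EARTH_RADIUS_M) / 1000.0

if __name__ == "__main__":
    print(f"Geostationary altitude: {geostationary_altitude_km():,.0f} km")
```

With these inputs the result comes out a little under 36,000 km, consistent with the article's rounded figure. The counterweight sits higher still, so the full cable would be longer than this.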

Investors want out of Musk's SpaceX gamble. They may not have a choice

While some investors fear missing out on Elon Musk's multi-trillion dollar SpaceX initial public offering, others are concerned about the potential impact on the valuation of their holdings. Gemma Dale, director of SMSF and investor behaviour at online stock-trading platform nabtrade, said investors are wary that SpaceX's IPO could distort the balance of their exchange-traded funds (ETFs) once it is incorporated into the funds. The IPO "creates this issue for many clients who don't particularly want exposure to something like SpaceX [but] who are by default, going to be acquiring it through their passive holdings," said Dale. The planned SpaceX IPO could raise as much as $US75 billion ($104 billion), valuing the company at up to $US1.8 trillion, which "approaches the combined market cap of the 200 largest stocks on the ASX", according to Morningstar. That gives it an implied price-to-sales ratio of about 100x. The listing's gargantuan size has index providers in the US mulling rule changes that would allow SpaceX to enter their indices earlier, and with fewer of its shares traded publicly, than past IPOs. Nasdaq, the exchange where SpaceX will list, has shortened the amount of time a share must trade before being included in its indexes, from three months to 15 days. Nasdaq has also dropped the requirement that at least 10 per cent of a company's shares be publicly traded, allowing SpaceX to float a smaller amount. S&P Global, which manages the S&P 500, is considering cutting the amount of time required to allow a stock into an eligible fund from a year to six months. There are over 150 ETF products based on the Nasdaq Composite index and the S&P 500, including a number of popular ones in Australia. These ETFs invest in all companies on those indices by default, meaning investors cannot opt out of investing in a certain company. Depending on the amount of SpaceX stock that floats, when it enters these indices and moves dramatically up and down, it could affect the entire index.
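The headline numbers in this passage can be cross-checked with simple arithmetic. The Python sketch below takes the upper-end figures quoted above ($US75 billion raised, a $US1.8 trillion valuation, a price-to-sales ratio of about 100x) and derives the implied float and annual sales. It assumes the whole raise consists of shares sold to the public at the headline valuation, which the article does not state.

```python
# Upper-end figures quoted in the article, in US dollars.
RAISE_USD = 75e9
VALUATION_USD = 1.8e12
PRICE_TO_SALES = 100.0

def implied_float_pct(raise_usd: float, valuation_usd: float) -> float:
    """Percentage of the company sold in the IPO, assuming the raise equals the float."""
    return 100.0 * raise_usd / valuation_usd

def implied_annual_sales_usd(valuation_usd: float, price_to_sales: float) -> float:
    """Annual revenue implied by a valuation and a price-to-sales multiple."""
    return valuation_usd / price_to_sales

float_pct = implied_float_pct(RAISE_USD, VALUATION_USD)
sales_usd = implied_annual_sales_usd(VALUATION_USD, PRICE_TO_SALES)

print(f"Implied float: {float_pct:.1f}% of shares")
print(f"Implied annual sales: ${sales_usd / 1e9:.0f} billion")
```

On these assumptions the float is a little over 4 per cent, which is below the 10 per cent threshold Nasdaq is reported to have dropped. The implied sales are about $US18 billion a year.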
Dale said: "We have probably heard more concern from investors who don't want ... exposure to SpaceX than those who are actually seeking it." While CommBank has reportedly told customers they'll be able to invest directly in the SpaceX IPO, nabtrade customers are more divided. Some think: "'Even if Musk is over-promising and underdelivers, he has delivered some extraordinary things and I would like to be part of that'," said Dale. "There's another cohort who go, 'this is completely outside my risk profile'." SpaceX has reshaped the economics of orbital launch through the reusable Falcon 9 rocket. But 10 years ago, Musk predicted the first human colony could be on Mars - by 2024. Musk's prospectus calls for new industries to be built on the Moon, Mars, "and beyond". The estimated total addressable market is $US28.5 trillion, "the largest in human history", comprised of space launch, connectivity, Starlink, and AI infrastructure. The relationship between Musk's hype and Musk's reality takes "the shape of an inverted pyramid", with the material base at the bottom (in this case, the real rocket accomplishments of SpaceX) opening up into a "wider virtual realm", according to author Ben Tarnoff, who has co-written Muskism: A Guide for the Perplexed with historian Quinn Slobodian. Using this "financial fabulism" Musk projects himself as a public figure who is "making science fiction style promises that nonetheless the global investor class finds credible enough to reward him with an increased stock valuation". It's this aspect of SpaceX that may well be beyond the comfort zone of ETF investors. Space-related share transactions at nabtrade amount to less than 1 per cent of the total by number and size, and less than 1 per cent of active customers have exposure. Setting aside the potential impact of SpaceX on the S&P and Nasdaq-tracking ETFs, specialised funds can be a safer way to get exposure to space investment.
Among the more notable offerings are UFO, a passive space ETF, and the actively managed funds MARS (Roundhill Space & Technology ETF) and NASA (Tema Space Innovators ETF). Cathie Wood's ARKX Space & Defence Innovation ETF invests in cutting-edge tech and aerospace. Michael McCarthy, chief executive of Moomoo Securities, said: "Speculative investments are predicated on potential future developments - and space is the same sort of investment." While not discussing SpaceX, McCarthy said investing directly in space stocks is "akin to buying a gold explorer or perhaps a cryptocurrency". Many space business models will fail, and the companies will go broke, McCarthy said: "But if they prove a viable business model the potential upside is enormous." While making no recommendation, he says some popular individual stocks include Rocket Lab, Firefly Aerospace, AST SpaceMobile, and Intuitive Machines. "I would caution against going all in on these because of that potential for businesses to fail."

SpaceX wins $4bn contract for US Golden Dome satellites

SpaceX has won a contract for over $4 billion to build satellites to track foreign aircraft and missiles as part of President Trump's Golden Dome defensive shield. The space-based tracking network integrates space sensors, communication systems and AI-enabled ground processing to watch for and warn of airborne threats from orbit, according to a US Space Force statement Friday. The US had been using ground-based sensors and military aircraft to monitor the skies, but placing detection capabilities in space could eliminate potential blind spots. The $4.16 billion award underscores SpaceX's close involvement with Golden Dome, which is intended to protect the US from attacks through layered defence systems ranging from Earth to space. SpaceX is already under contract to develop prototypes of space-based interceptors for the project and is part of a multi-company software consortium building the operating layer underpinning Golden Dome. It is also working with the US Space Force to develop a military communications network using the company's Starshield platform, a version of Starlink offering a classified and encrypted signal. (This is a Bloomberg story)

SpaceX, OpenAI windfall fuels bets on next-wave Asian AI winners

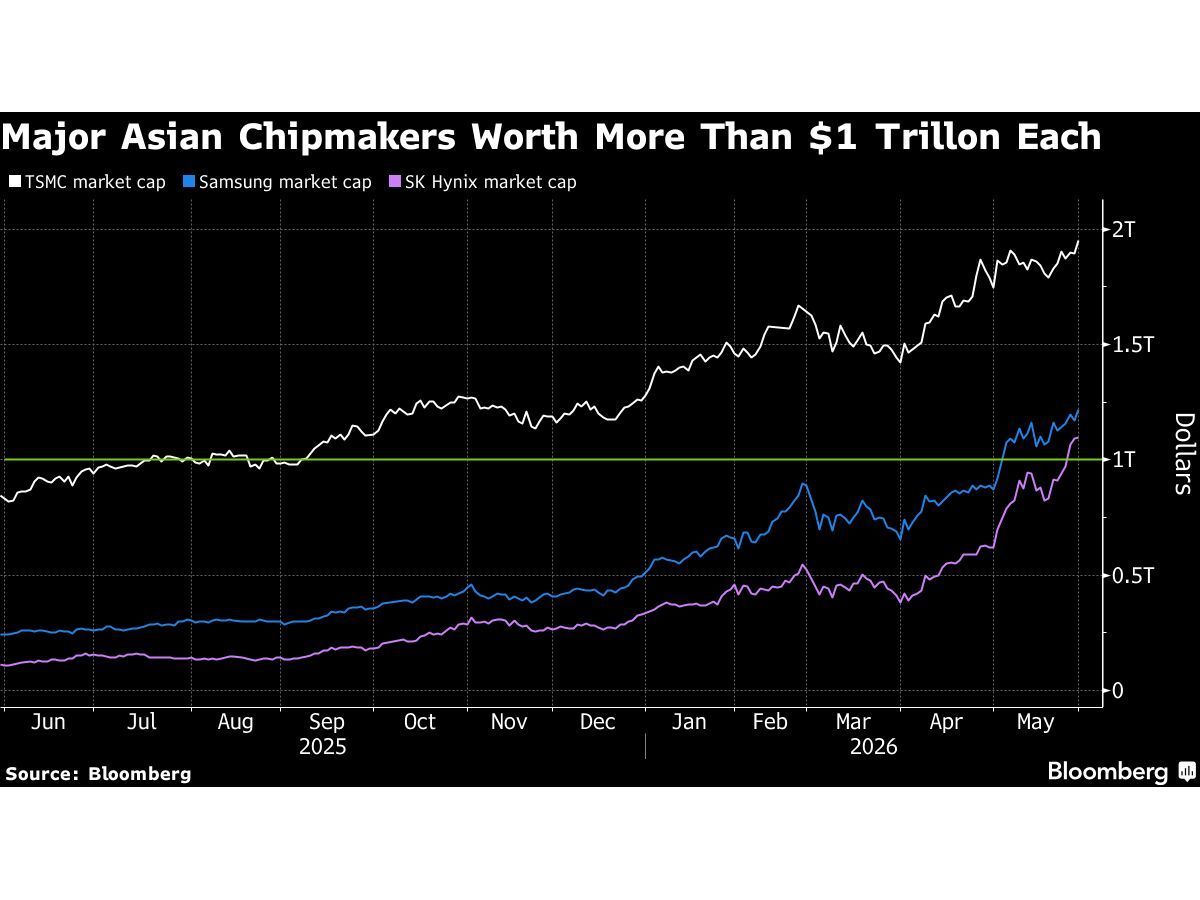

Some investors believe that the billions of dollars that SpaceX, Anthropic PBC and OpenAI are set to raise through stock offerings will kick off a fresh round of technology spending.

The hunt is on for companies that could benefit from the tailwinds of an unprecedented wave of stock offerings in the US, and investors are increasingly homing in on the Asian supply chain. Their hypothesis is that the billions of dollars that SpaceX, Anthropic PBC and OpenAI are set to raise will kick off a fresh round of technology spending - with a good chunk of that finding its way to the makers of server parts, specialised materials, cooling components and power equipment. For stock markets in Asia, that could be the catalyst for the next leg of a historic rally. Hardware firms in the region are already among the biggest winners of the data-centre buildout, which has propelled chipmakers Taiwan Semiconductor Manufacturing Co., Samsung Electronics and SK Hynix into the trillion-dollar club. But after their breakneck gains, some investors have become uneasy about those lofty valuations and are now betting that the next phase will create a new class of champions. "AI IPOs could further fuel the capex boom at a time when Asian chip stocks look stretched," said Ken Wong, an Asian equity portfolio specialist at Eastspring Investments Hong Kong. "We're currently underweighting semiconductors in our Asia technology strategy and focusing more on the electronic component makers." The battle for AI leadership has driven massive expenditures on computing networks by the likes of Meta Platforms and Amazon.com. The pending equity offerings could provide some relief on market concerns over funding sustainability as debt levels rise. The listings of SpaceX, OpenAI and Anthropic may mean a total of US$70 billion in AI spending on top of the more than US$750 billion already committed by the biggest hyperscalers, according to Fabien Yip, a market analyst at IG International.

Broadening trade

"The flow-through to Asia is prominently visible" in the latest chipmaker earnings reports, she said. "As the AI rally matures, the broadening beyond pure-play names is underway." Some of the region's hottest stock trades have been makers of electronic components used in servers as well as providers of materials and techniques used in making semiconductors. South Korea's Samsung Electro-Mechanics and Japan's Ibiden are among the top performers on MSCI's broadest Asia equity index this year. Among more far-flung plays, IG's Yip highlights Japanese toilet maker Toto, which supplies ceramic materials for chipmaking equipment. Asian chipmakers have reported windfall profits on AI, helped by strong pricing power as the new source of demand creates dramatic semiconductor shortages. Supply crunches are now starting to appear further down the supply chain, and the trend may deepen with the continued inflow of capex funding. Greater investor awareness of new bottlenecks has combined with technical factors to drive broadening of the AI trade beyond the biggest chipmakers. Given concentration risks and limits on how much funds can invest in single stocks, money managers are looking at where earnings are only beginning to reflect the scale of AI infrastructure spending. Sam Konrad, a portfolio manager at Jupiter Asset Management, sees opportunities in Taiwan's Hon Hai Precision Industry and Quanta Computer, which assemble servers, as well as chip designer MediaTek. "The AI capex cycle is going to last multiple years," he said. "Investors are likely to look for companies that are direct beneficiaries, but that are still trading at low valuation multiples." BNP Paribas Asset Management's Song Zhe said the next leg of the rally "should be stock-specific, not a blanket semiconductor trade". His team is focused on advanced packaging, substrates, testing, optical connectivity, power, cooling and server-related companies across Taiwan and China "where earnings upgrades can still justify valuations". Others are investing in applications of AI beyond chatbots, in areas including robotics and self-driving vehicles. This burgeoning "physical AI" field has gotten a push from Nvidia's efforts to develop related businesses, boosting shares of partners like LG Electronics.

Power supply

The supply of power is seen as another key area. Nuclear and alternative energy have gained attention for their potential as data centres proliferate, especially as the Iran war drives up oil prices. Solar firm HD Hyundai Energy Solutions and nuclear play Daewoo Engineering & Construction are among the top stocks in South Korea's world-beating market this year. Adani Group's push into green-powered data centres is driving gains in its energy units, providing India with one of its few AI bets. Jian Shi Cortesi, a fund manager at Gam Investment Management, sees power as "the most under-owned bottleneck," but cautions that the next phase of the AI frenzy may carry bigger risks than the first. If AI demand fails to justify the scale of spending, companies may cut capex and leave the market facing excess infrastructure and sharp valuation declines. Brian Ooi, a portfolio manager at Swiss-Asia Financial Services, sees the SpaceX, OpenAI and Anthropic capital raisings as a positive signal to remain invested in AI stocks. He also likes power, with particular interest in transformers, fuel cells, cables, gas turbines and other equipment. The three big AI-related IPOs "will provide them more liquidity to further invest in capital expenditure, and they have significant spending plans in place," he said. "Asian suppliers will benefit."

BLOOMBERG

AI affordability wakeup call, Anthropic's $65bn mega round, and India's first 12nm AI chip -- Weekly AI roundup

From Anthropic's $65 billion mega funding round to the announcement of India's first homegrown 12nm AI chip, the world of AI has seen a lot of action this week. AI was the defining force in global finance, cybersecurity, and corporate strategy. In the week that went by, Anthropic raised its valuation to $965 billion while China's DeepSeek cut prices by 75 per cent. Big Tech realised that AI infrastructure isn't cheap to maintain and hence ordered a scale-back of efforts to encourage AI usage, while Nvidia's Jensen Huang, in the midst of global political disruption, joined the Tsinghua advisory board. What matters more, however, is that these weren't isolated events. They were interconnected signals that AI is getting ready for a higher-level game, forming an infrastructure layer that supports many institutions. Without further ado, let's take a quick look at everything the AI world had to throw at us.

1. Anthropic now the world's most valuable AI firm

Anthropic overtook OpenAI to become the most valuable AI company yet after its latest $65 billion funding round - something that will likely dominate business pages for months. The AI company, led by Dario Amodei, closed a Series H round at a post-money valuation of $965 billion, making it Silicon Valley's most valuable AI startup. Led by Altimeter Capital, this $65 billion round is reportedly the AI startup's final private fundraise before a highly anticipated initial public offering (IPO). At nearly $1 trillion, Anthropic is now worth more than major Fortune 500 companies combined despite being a private company. This valuation reflects investor confidence despite its rivals making great strides in the AI space over the week.

2. Big Tech hits the AI cost wall

The AI affordability crisis has hit Meta, Amazon, and Uber, emerging from token-based pricing models that have driven operational costs higher than the returns on investment. These companies, which once viewed AI as a cost-saving effort, have found enterprise-grade generative AI to be remarkably expensive, owing to the massive token consumption of autonomous agents and code generation. This has forced a shift to strict budget control. Uber exhausted its entire 2026 budget for internal AI coding tools in just four months, leading to a reevaluation of agentic software engineering. Similarly, Amazon moved away from its initial phase of tokenmaxxing toward rigid cost controls, prioritising practical business outcomes. Meta's rising infrastructure expenses have forced teams to implement internal benchmarking frameworks like 'Claudeonomics' to strictly audit departmental resource consumption.

3. Anthropic also released Claude Opus 4.8

On the sidelines, the company released Claude Opus 4.8 - an upgraded model that delivers notable performance improvements across coding, reasoning, and agentic workflows. A key highlight of this update is enhanced model reliability and honesty, with Opus 4.8 being four times less likely to overlook flaws in its own code. It is also significantly better at proactively flagging uncertainties rather than making unsupported claims. Alongside the model, Anthropic introduced several major features across other Claude products. These include 'dynamic workflows' in Claude Code, which allow the system to run hundreds of parallel subagents to tackle massive, codebase-scale migrations, and an 'effort control' setting on claude.ai that lets users manually adjust how deeply Claude reasons through a task. Anthropic's pricing also drew attention, with regular API pricing remaining identical to Opus 4.7 and fast mode now being three times cheaper. Anthropic also teased that its Mythos-class models are expected to release in the coming weeks. Since we touched upon price...

4. DeepSeek's permanent 75% price cut

In a move that sent shockwaves through the AI infrastructure market, DeepSeek made the 75% price discount on its flagship V4-Pro model permanent. The 1.6-trillion parameter model now costs just $0.83 per million tokens for output, down from $3.48, or roughly a quarter of the original API cost. What was supposed to be a promotional discount ending May 31 is now the new baseline. AI analysts are calling it the beginning of the AI pricing war, in which DeepSeek has taken the lead. This permanent price cut is expected to accelerate the shift from chatbots to autonomous systems that can perform complex workflows.

5. Project Lightwell: IBM's $5 billion security counterstrike

While Anthropic's cybersecurity model raised alarms, IBM and Red Hat responded with a counter-initiative. The companies announced Project Lightwell - a $5 billion commitment to deploy 20,000 engineers supported by advanced AI to establish a trusted enterprise protector for open-source security. This represents the largest coordinated effort to secure software supply chains in history, acknowledging that AI-powered attacks are becoming the primary threat to digital infrastructure. This is crucial as AI models become capable of discovering vulnerabilities faster than humans can fix them - IBM's tool can weaponise those discoveries at an unprecedented scale.

6. IBM India head urges upskilling millions

IBM India and South Asia Managing Director Sandip Patel has warned that Indian IT companies must urgently reskill millions of workers to remain competitive as AI rapidly transforms software development, coding, and business operations. Highlighting that only about 30% of India's current workforce is AI literate, Patel stressed that rapid, large-scale upskilling could leverage the country's vast youth demographic to establish India as the "skill capital" of the world for AI by 2030.

7. Faith meets tech: Pope and Anthropic partner on AI ethics

This week, Pope Leo XIV made history by personally presenting his first papal encyclical, Magnifica Humanitas: On safeguarding the human person in the time of artificial intelligence, alongside Anthropic co-founder Christopher Olah. The landmark event at the Vatican brought together the head of the Catholic Church and the self-described atheist tech leader to advocate for a rare partnership between religious institutions and the tech industry. In his encyclical, Pope Leo XIV warned against the concentration of technological power and called for the global "disarming" of the AI arms race to protect against algorithmic bias, automated warfare, and mass labour displacement. Olah strongly echoed these moral concerns, candidly admitting that frontier labs face immense commercial and geopolitical pressures that can conflict with safety. He emphasised that the tech sector urgently needs independent, outside moral voices, like the Church and civil society, to provide rigorous oversight and ensure the technology is steered toward the common good.

8. Jensen Huang joins Tsinghua Advisory Board

The week also saw Jensen Huang, Nvidia's CEO, join Tsinghua University's advisory board alongside Tim Cook and Elon Musk, accepting a seat on the board of the School of Economics and Management. The move comes amid active US chip export controls on China, making Huang's role at Beijing's most prestigious university geopolitically significant. By contrast, the Chinese government has placed strict overseas travel restrictions on top AI scientists working at private tech powerhouses like DeepSeek and Alibaba in a bid to protect domestic IP and halt brain drain to US labs.

9. AGI possible by 2029, says DeepMind's Demis Hassabis

In a recent interview with Axios, Google DeepMind CEO Demis Hassabis turned heads by tightening his timeline for Artificial General Intelligence (AGI). Hassabis called its arrival by 2029 a "real possibility." This marks a contraction from his previous, more conservative 2030 horizon. Hassabis admitted that his dramatic "singularity" framing was an intentional policy pressure tool designed to shock sluggish governments and economists into accelerating their regulatory preparation.

10. Zoho-backed Netrasemi unveils India's first homegrown 12nm AI chip

Kerala-based semiconductor startup Netrasemi has officially launched its maiden high-end 12-nanometer AI system-on-chip (SoC), named the A2000, with commercial mass production scheduled to begin by the end of 2026. Backed by Rs 125 crore in funding from prominent investors like Zoho and Unicorn India Ventures, as well as support from the Indian government's Design Linked Incentive (DLI) scheme, the A2000 has successfully cleared initial silicon bring-up testing. The chipset integrates a proprietary neural processing unit (NPU), video processing unit (VPU), and image signal processor (ISP) to execute high-performance inference workloads locally on devices without cloud reliance. The chip is suited for advanced edge AI applications like smart surveillance cameras, robotics, and video gateways.
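The DeepSeek figures quoted in item 4 can be sanity-checked with a few lines of arithmetic. The sketch below is illustrative only: the two prices come from the roundup, while the 50-million-token monthly workload and the function names are assumptions invented for the example.

```python
# Prices quoted in the roundup, in US dollars per million output tokens.
OLD_PRICE_PER_M = 3.48
NEW_PRICE_PER_M = 0.83


def percent_drop(old: float, new: float) -> float:
    """Return the size of the price cut as a percentage of the old price."""
    return (old - new) / old * 100.0


def output_cost(tokens: int, price_per_million: float) -> float:
    """Return the dollar cost of generating `tokens` output tokens."""
    return tokens / 1_000_000 * price_per_million


# Hypothetical workload: 50 million output tokens a month (an assumption).
MONTHLY_TOKENS = 50_000_000

drop = percent_drop(OLD_PRICE_PER_M, NEW_PRICE_PER_M)
before = output_cost(MONTHLY_TOKENS, OLD_PRICE_PER_M)
after = output_cost(MONTHLY_TOKENS, NEW_PRICE_PER_M)

print(f"Price cut: {drop:.1f}% (new price is {100 - drop:.1f}% of the old one)")
print(f"Monthly output cost: ${before:.2f} before, ${after:.2f} after")
```

With the quoted prices, the cut works out to about 76%, in line with the 75% headline figure.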

Google Engineer Charged Over $1.2M Polymarket Insider Trading Scheme - Memeburn

Congress has since opened a formal probe into Polymarket and Kalshi, raising the stakes for the fast-growing prediction market industry. A Google engineer just caught federal charges for a $1.2 million Polymarket insider trading scheme -- and the way it unravelled says as much about crypto's transparency as about one person's bad judgment. Federal prosecutors charged Michele Spagnuolo, 36, on May 27, 2026, with turning confidential Google search data into $1.2 million in Polymarket winnings. It's the second federal insider trading case tied to Polymarket this year. Here's exactly what happened, how he got caught, and what it means for the future of prediction markets. The Google Engineer Behind the Polymarket Insider Trading Scheme Spagnuolo operated under the alias "AlphaRaccoon." Specifically, he allegedly traded on Polymarket using confidential Google business information. The SDNY complaint lays it all out. In fact, he's an Italian citizen living in Switzerland. Authorities arrested him on Wednesday and charged him with commodities fraud, wire fraud, money laundering, and other counts. According to the complaint, he placed bets on Google search trends using internal company data. Spagnuolo appeared before a federal magistrate, who released him on a $2.25 million bond. Meanwhile, Google confirmed it cooperated with the investigation. A spokesperson said: "The employee accessed our marketing material using a tool available to all employees, but using such confidential information to place bets is a serious breach of our policies. We've placed the employee on leave and will take appropriate action." How the Scheme Actually Worked This wasn't a complicated hack. In fact, Spagnuolo didn't need to break into any system. Rather, he already had legitimate access. Every year, Google publishes its Year in Search report. It reveals the most-searched people, topics, and trends of the year. Crucially, employees can view this data before any public release. 
So Spagnuolo allegedly used that access window on Polymarket -- a platform where users place real-money bets on real-world events using cryptocurrency. He bet through the AlphaRaccoon account. Specifically, his trades were YES and NO bets on who would top Google's most-searched list. In one example, he placed $381.12 on the singer D4vd ranking among the year's most-searched people. He also put just $5 on D4vd to be number one -- at an implied probability slightly above 0%. The edge was devastating. As the indictment puts it: Spagnuolo "knew the outcome of these wagers before the trading public did because he had accessed Google's confidential, commercially valuable internal data." He wasn't guessing. Instead, he was collecting. Crypto Made It Traceable -- Not Anonymous Here's the twist many crypto newcomers don't expect: trading on a blockchain doesn't make you invisible. In fact, it can make you more visible. Polymarket's chief legal officer, Olivia Chalos, said in a statement that the platform is "the only prediction platform to date whose cooperation has led to insider trading charges in the United States." She added that, since users trade with crypto, activity is "transparent, traceable, and bad actors leave footprints." Meanwhile, someone moved part of the funds to a payment processor account in Italy. Investigators then traced that account back to an ID card belonging to Spagnuolo. Together, that trail -- part on-chain, part paper -- sealed the case. Polymarket, which recently partnered with Nasdaq to bring more institutional legitimacy to prediction markets, flagged the suspicious trading and worked directly with the DOJ. That cooperation now anchors its public case for self-regulation. This Is the Second Case -- and Congress Is Watching Last month, SDNY charged a US special forces soldier. According to prosecutors, he used classified knowledge of a planned military operation to capture Venezuelan President Nicolás Maduro. 
The soldier bet on Polymarket ahead of the raid and pocketed over $400,000. Still, he has pleaded not guilty. Two federal cases in under six weeks got Congress's attention fast. As a result, the House Oversight Committee opened an investigation into Polymarket and Kalshi. Specifically, lawmakers want to know how both platforms handle identity checks, geographic restrictions, and unusual trades. The stakes are real. After all, prediction market volumes hit $51 billion last year and could reach $240 billion in 2026. That kind of growth attracts serious capital -- and bad actors. Consequently, enforcement is accelerating. In March 2026, both platforms announced new anti-insider rules aligned with recent CFTC guidance on prediction market manipulation. For example, new restrictions now bar politicians from trading on their own campaigns, athletes from betting on their own sports, and employees from trading on contracts tied to their employers. What This Means for Prediction Markets Going Forward The Spagnuolo case isn't about one rogue engineer. Rather, it tests a bigger question: does insider trading law -- built for stock markets -- cover prediction markets too? Traditionally, insider trading rules grew up around securities. However, the CFTC issued a February 2026 advisory on how these rules apply to prediction platforms. The Spagnuolo charges use commodities fraud and wire fraud -- not traditional securities law -- and that shows prosecutors have found a path that works. For Google, moreover, this exposes a real data governance gap. After all, every employee reportedly had access to the same internal tool Spagnuolo used. That's a systemic policy failure, not a one-off incident. For crypto broadly, the case makes one thing clear: the blockchain cuts both ways. Specifically, every bet is public and permanent -- and federal investigators can read it too. 
In fact, data transparency was a theme Google itself put front and centre at Google I/O 2026, and the parallels to this case are hard to ignore. Meanwhile, the CFTC separately filed a civil case against Spagnuolo. As a result, he now faces criminal and regulatory pressure at the same time. Altogether, the prediction market industry is officially on notice. FAQs What is Polymarket? Polymarket is a prediction market platform where users bet real money -- via cryptocurrency -- on real-world events. For example, that includes election results, search trend rankings, and geopolitical outcomes. The CFTC regulates it in the US. What exactly did Michele Spagnuolo do? Prosecutors allege he accessed confidential Google search data and used it to place profitable bets on Polymarket. As a result, he faces charges of commodities fraud, wire fraud, and money laundering. The DOJ filed a criminal case; the CFTC filed a separate civil one. Is prediction market insider trading actually illegal? Yes. Even though prediction markets don't follow the same rules as stock exchanges, misusing confidential information to profit from trades still breaks federal anti-fraud and commodities law. Furthermore, the CFTC reinforced this with formal guidance in February 2026. How did investigators identify Spagnuolo? Polymarket cooperated with the DOJ and flagged the unusual trades. Additionally, blockchain transparency played a key role -- crypto transactions are traceable. Investigators then linked the funds to a payment account in Italy opened using Spagnuolo's own government ID. What happens to Polymarket now? Polymarket faces a congressional probe alongside rival platform Kalshi. Both have already introduced new rules against insider trading. Nevertheless, the platform's best defence is cooperation -- it's trying to prove it can police itself before regulators step in.

SpaceX Wants Even More Profits Ahead of Its IPO

SpaceX has picked June 12 as its IPO date. Even before the initial public offering happens, however, SpaceX is laying the foundations for becoming the most profitable space company in history. As I reported in March, SpaceX raised the price on Falcon 9 launches for the fourth time, to $74 million, a 21% increase over the original price. That's a significant price hike, but despite what you might think, rocket launches have become a smaller and smaller part of SpaceX's business over time. SpaceX today is much more of a Starlink company than a rocket company. And as it just so happens, SpaceX's latest round of price hikes is happening at Starlink. SpaceX's most important business: Starlink In 2025, the SpaceX satellite internet service called Starlink generated roughly 61% of SpaceX's $18.7 billion in revenue. In 2026, this percentage is expected to grow. This won't happen automatically, however. SpaceX is growing its Starlink user base as one method of growing revenue. Another method is raising prices. (Note that these two actions may work at cross-purposes, though.) We learned this last week, when PCMag.com laid out a series of six tiers of Starlink "personal" (non-business) service and their respective price increases. Ranging from $5 to $10 per tier, per month, the price hikes look modest at first glance. Percentage-wise, most prices are changing only in the mid-single digits (6.1%) to the low double digits (10%) -- with two notable exceptions. The price for owning a Starlink terminal and keeping it in standby mode (which pauses high-speed internet service but permits download speeds of about 0.5 megabits per second (Mbps)) has doubled from $5 to $10 per month. 
But the price of the 300 gigabits-per-second (Gbps) roaming service remains unchanged at $80 per month. Data source: PCMag. It should be noted that prices may vary by location, and that in some locations, Starlink continues to advertise prices and plan names on its website that differ significantly from those noted above. Moreover, these rates are for personal service. Starlink also offers a wide array of business plans for fixed and mobile users, as well as for maritime and aviation service.

Business versus personal

What's gone largely unreported so far is that, at the same time that Starlink is raising prices for its personal customers, it's cutting prices for business customers. Here are the rates offered today for four fixed-site, "local priority" business tiers with varying amounts of total monthly usage:

* Local Priority 50 gigabytes (GB) (per month) costs $55
* Local Priority 500 GB costs $155
* Local Priority 1 terabyte (TB) costs $280
* Local Priority 2 TB costs $530

In each case, this is a $10 reduction from prices advertised as recently as April, according to an April 8 snapshot found on the Internet Wayback Machine.

What it means for SpaceX

In the run-up to its IPO, SpaceX will obviously want to make itself look as attractive as possible to investors -- which is to say as profitable and fast-growing as possible -- in order to fetch as high a share price as possible on IPO day (and thereafter). Raising prices for residential customers helps with profits, but it risks curtailing subscriber growth. Charging lower rates to Starlink business customers, on the other hand, may have the effect of accelerating growth among Starlink's most well-heeled customers -- the ones who can pass on prices to their customers, and also deduct them as business expenses. What's more, a $10 price reduction there amounts to a significantly smaller percentage change (and thus, less profit lost) than the price increases Starlink is implementing in its residential plans. 
On balance, I see this less as a story of "SpaceX raising prices" and more as a story of SpaceX tweaking prices across multiple Starlink markets to optimize its growth relative to its profits. Long term, I expect this to make SpaceX stock more profitable, not less.
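As a rough check on the business-tier arithmetic, the percentage size of each $10 reduction can be worked out from the prices listed in the article. This is only a sketch: the previous prices are not stated in the article and are inferred here by adding $10 back to each current price, and the variable and function names are made up for illustration.

```python
# Current business prices from the article: (plan, US dollars per month).
CURRENT_PRICES = [
    ("Local Priority 50 GB", 55),
    ("Local Priority 500 GB", 155),
    ("Local Priority 1 TB", 280),
    ("Local Priority 2 TB", 530),
]

# The article says each tier dropped by $10 from its April price.
REDUCTION = 10


def percent_cut(current_price: float, reduction: float) -> float:
    """Return the cut as a percentage of the inferred previous price."""
    previous_price = current_price + reduction  # assumption: old = new + $10
    return reduction / previous_price * 100.0


cuts = {
    plan: round(percent_cut(price, REDUCTION), 1)
    for plan, price in CURRENT_PRICES
}

for plan, pct in cuts.items():
    print(f"{plan}: {pct}% lower than the inferred April price")
```

Under these assumptions, the flat $10 cut is proportionally largest on the cheapest tier and shrinks quickly as the plan price rises.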

SpaceX, OpenAI windfall fuels fresh bets on next-wave Asian AI winners

By Abhishek Vishnoi and Winnie Hsu

The hunt is on for companies that could benefit from the tailwinds of an unprecedented wave of stock offerings in the US, and investors are increasingly honing in on the Asian supply chain. Their thesis is that the billions of dollars that SpaceX, Anthropic PBC and OpenAI are set to raise will kick off a fresh round of technology spending -- with a good chunk of that finding its way to the makers of server parts, specialized materials, cooling components and power equipment. For stock markets in Asia, that could be the catalyst for the next leg of a historic rally. Hardware firms in the region are already among the biggest winners of the data-center buildout, which has propelled chipmakers Taiwan Semiconductor Manufacturing Co., Samsung Electronics Co. and SK Hynix Inc. into the trillion-dollar club. But after their breakneck gains, some investors have become uneasy about those lofty valuations and are now betting that the next phase will create a new class of champions. "AI IPOs could further fuel the capex boom at a time when Asian chip stocks look stretched," said Ken Wong, an Asian equity portfolio specialist at Eastspring Investments Hong Kong Ltd. "We're currently underweighting semiconductors in our Asia technology strategy and focusing more on the electronic component makers." The battle for AI leadership has driven massive expenditures on computing networks by the likes of Meta Platforms Inc. and Amazon.com Inc. 
The pending equity offerings could provide some relief on market concerns over funding sustainability as debt levels rise. The listings of SpaceX, OpenAI and Anthropic may mean a total of $70 billion in AI spending on top of the more than $750 billion already committed by the biggest hyperscalers, according to Fabien Yip, a market analyst at IG International. Broadening Trade "The flow-through to Asia is prominently visible" in the latest chipmaker earnings reports, she said. "As the AI rally matures, the broadening beyond pure-play names is underway." Some of the region's hottest stock trades have been makers of electronic components used in servers as well as providers of materials and techniques used in making semiconductors. South Korea's Samsung Electro-Mechanics Co. and Japan's Ibiden Co. are among the top performers on MSCI Inc.'s broadest Asia equity index this year. Among more far-flung plays, IG's Yip highlights Japanese toilet maker Toto Ltd., which supplies ceramic materials for chipmaking equipment. Asian chipmakers have reported windfall profits on AI, on strong pricing power as the new source of demand creates dramatic semiconductor shortages. Supply crunches are now starting to appear further down the supply chain, and the trend may deepen with the continued inflow of capex funding. Greater investor awareness of new bottlenecks has combined with technical factors to drive broadening of the AI trade beyond the biggest chipmakers. Given concentration risks and limits on how much funds can invest in single stocks, money managers are looking at where earnings are only beginning to reflect the scale of AI infrastructure spending. Sam Konrad, a portfolio manager at Jupiter Asset Management, sees opportunities in Taiwan's Hon Hai Precision Industry Co. and Quanta Computer Inc., which assemble servers, as well as chip designer MediaTek Inc. "The AI capex cycle is going to last multiple years," he said. 
"Investors are likely to look for companies that are direct beneficiaries, but that are still trading at low valuation multiples." BNP Paribas Asset Management's Song Zhe said the next leg of the rally "should be stock-specific, not a blanket semiconductor trade." His team is focused on advanced packaging, substrates, testing, optical connectivity, power, cooling and server-related companies across Taiwan and China "where earnings upgrades can still justify valuations." Others are investing in applications of AI beyond chatbots, in areas including robotics and self-driving vehicles. This burgeoning "physical AI" field has gotten a push from Nvidia Corp.'s efforts to develop related businesses, boosting shares of partners like LG Electronics Inc. Power Supply The supply of power is seen as another key area. Nuclear and alternative energy have gained attention for their potential as data centers proliferate, especially as the Iran war drives up oil prices. Solar firm HD Hyundai Energy Solutions Co. and nuclear play Daewoo Engineering & Construction Co. are among the top stocks in South Korea's world-beating market this year. Adani Group's push into green-powered data centers is driving gains in its energy units, providing India with one of its few AI bets. Jian Shi Cortesi, a fund manager at Gam Investment Management, sees power as "the most under-owned bottleneck," but cautions that the next phase of the AI frenzy may carry bigger risks than the first. If AI demand fails to justify the scale of spending, companies may cut capex and leave the market facing excess infrastructure and sharp valuation declines. Brian Ooi, a portfolio manager at Swiss-Asia Financial Services Pte., sees the SpaceX, OpenAI and Anthropic capital raisings as a positive signal to remain invested in AI stocks. He also likes power, with particular interest in transformers, fuel cells, cables, gas turbines and other equipment. 
The three big AI-related IPOs "will provide them more liquidity to further invest in capital expenditure, and they have significant spending plans in place," he said. "Asian suppliers will benefit."

Anthropic just topped OpenAI on a major metric ahead of rival IPOs

Anthropic is nearing a $1 trillion valuation, topping rival OpenAI and making it the most valuable artificial intelligence startup, as the two competitors head toward their initial public offerings. On Thursday, San Francisco-based Anthropic announced it had raised $65 billion in Series H funding, bringing it to a $965 billion valuation. The latest round was led by Altimeter Capital, Dragoneer, Greenoaks, and Sequoia Capital, and included $15 billion of prior commitments, $5 billion of which came from Amazon. In just the past few months, Anthropic has nearly tripled its worth from $380 billion in February, CNBC reported. Meanwhile, rival OpenAI, considered the heavyweight in the fight to dominate AI -- and the more talked-about company just a year ago -- now trails behind, valued at $852 billion (including $122 billion in funding raised in March). Anthropic's dizzying rise is in large part due to its agentic AI coding assistant Claude Code. On May 28 the company released Claude Opus 4.8, its latest version, and confirmed plans to roll out Claude Mythos models with advanced cybersecurity capability, which had been delayed due to security risks. So far, Claude Mythos has been made available only to a select group of companies. And Anthropic keeps innovating. As Fast Company reported, earlier this month the company launched Claude for Small Business, a new package of agentic workflows that includes skills to automate small-business tasks like payroll, marketing, invoicing, contracts, and content strategy. The sky-high valuations and lightning speed at which these companies are raising money speak to the absolute feeding frenzy that is the AI boom. But will the average investor benefit from their upcoming IPOs? "At the potential prices that have been reported, it would be very difficult for an investor to come out ahead in a three-year period," economist Jay Ritter, an IPO expert at the University of Florida, told The New York Times. 
"They may be great as companies, but when you buy shares in them you should pay attention to their price." This post originally appeared at fastcompany.com.

SpaceX Wants Even More Profits Ahead of Its IPO

SpaceX has picked June 12 as its IPO date. Even before the initial public offering happens, however, SpaceX is laying the foundations for becoming the most profitable space company in history. As I reported in March, SpaceX raised the price on Falcon 9 launches for the fourth time, to $74 million, a 21% increase over the original price. That's a significant price hike, but despite what you might think, rocket launches have become a smaller and smaller part of SpaceX's business over time. SpaceX today is much more of a Starlink company than a rocket company. And as it just so happens, SpaceX's latest round of price hikes is happening at Starlink. SpaceX's most important business: Starlink In 2025, the SpaceX satellite internet service called Starlink generated roughly 61% of SpaceX's $18.7 billion in revenue. In 2026, this percentage is expected to grow. This won't happen automatically, however. SpaceX is growing its Starlink user base as one method of growing revenue. Another method is raising prices. (Note that these two actions may work at cross-purposes, though.) We learned this last week, when PCMag.com laid out a series of six tiers of Starlink "personal" (non-business) service and their respective price increases. Ranging from $5 to $10 per tier, per month, the price hikes look modest at first glance. Percentage-wise, most prices are changing only in the mid-single digits (6.1%) to the low double digits (10%) -- with two notable exceptions. The price for owning a Starlink terminal and keeping it in standby mode (which pauses high-speed internet service but permits download speeds of about 0.5 megabits per second (Mbps)) has doubled from $5 to $10 per month. But the price of the 300 gigabits-per-second (Gbps) roaming service remains unchanged at $80 per month. Data source: PCMag. 
It should be noted that prices may vary by location, and that in some locations, Starlink continues to advertise prices and plan names on its website that differ significantly from those noted above. Moreover, these rates are for personal service. Starlink also offers a wide array of business plans for fixed and mobile users, as well as for maritime and aviation service.

Business versus personal

What's gone largely unreported so far is that, at the same time that Starlink is raising prices for its personal customers, it's cutting prices for business customers. Here are the rates offered today for four fixed-site, "local priority" business tiers with varying amounts of total monthly usage:

* Local Priority 50 gigabytes (GB) (per month) costs $55
* Local Priority 500 GB costs $155
* Local Priority 1 terabyte (TB) costs $280
* Local Priority 2 TB costs $530

In each case, this is a $10 reduction from prices advertised as recently as April, according to an April 8 snapshot found on the Internet Archive's Wayback Machine.

What it means for SpaceX

In the run-up to its IPO, SpaceX will obviously want to make itself look as attractive as possible to investors -- which is to say as profitable and fast-growing as possible -- in order to fetch as high a share price as possible on IPO day (and thereafter). Raising prices for residential customers helps with profits, but it risks curtailing subscriber growth. Charging lower rates to Starlink business customers, on the other hand, may have the effect of accelerating growth among Starlink's most well-heeled customers -- the ones who can pass on prices to their customers, and also deduct them as business expenses. What's more, a $10 price reduction there amounts to a significantly smaller percentage change (and thus, less profit lost) than the percentage increases Starlink is implementing in its residential plans.
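
The percentage comparison above can be checked with a few lines of arithmetic. The sketch below is illustrative only: the "before" business prices are not quoted in the article and are assumed here to be today's price plus the stated $10 cut, and the names `BUSINESS_TIERS`, `CUT`, and `pct_change` are hypothetical helpers introduced for this example.

```python
# Business-tier prices quoted in the article (USD per month).
# ASSUMPTION: each earlier price equals today's price plus the stated $10 cut.
BUSINESS_TIERS = {
    "Local Priority 50 GB": 55,
    "Local Priority 500 GB": 155,
    "Local Priority 1 TB": 280,
    "Local Priority 2 TB": 530,
}
CUT = 10  # dollars per month, per the article


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new (negative means a price cut)."""
    return (new - old) / old * 100


# Percentage change for each business tier under the assumption above.
business_changes = {
    name: pct_change(price + CUT, price)
    for name, price in BUSINESS_TIERS.items()
}

# Standby mode for personal customers went from $5 to $10 per month.
standby_change = pct_change(5, 10)

for name, change in business_changes.items():
    print(f"{name}: {change:+.1f}%")
print(f"Standby mode: {standby_change:+.1f}%")
```

Under these assumptions, the same $10 is a shrinking share of the bill as the tier price rises, so the percentage cut on the 2 TB plan is far smaller than on the 50 GB plan.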
On balance, I see this less as a story of "SpaceX raising prices" and more as a story of SpaceX tweaking prices across multiple Starlink markets to optimize its growth relative to its profits. Long term, I expect this to make SpaceX stock more profitable, not less.

Elon Musk's SpaceX Cuts IPO Target To $1.8 Trillion: What's The Prediction Market Forecast?

Elon Musk's SpaceX is targeting a valuation of at least $1.8 trillion in what may be the largest IPO ever, down from an earlier goal above $2 trillion, according to Bloomberg. The cut follows heavy losses from SpaceX's February merger with xAI. The company posted a $4.28 billion net loss in the first quarter of 2026, with AI infrastructure driving most of the burn, according to the S-1 filing.

Investors are piling in anyway. Since mid-December, when Musk first confirmed IPO plans, a net $14 billion has flowed into three mutual funds and four ETFs holding slices of the rocket maker, per Morningstar data via the Financial Times.

Prediction market traders are going the other way. Polymarket's "SpaceX IPO Closing Market Cap Above" contract currently assigns 63% odds that the company closes its first trading day above $2.2 trillion. SpaceX has reportedly earmarked up to 30% of IPO shares for retail investors, roughly three times the typical mega-cap allocation.

Retail Piles Into Proxy Funds

The Destiny Tech100 Fund surged 27% earlier this month, as investors raced to get exposure to the space company. The Tema Space Innovators ETF, which launched in late March, tripled its assets to roughly $1.3 billion the week SpaceX filed its S-1. The ERShares Private-Public Crossover ETF holds SpaceX through a special-purpose vehicle and carries approximately $292 million in exposure, or roughly 23% of the fund, according to ERShares.

Telecoms firm EchoStar Corp, which received SpaceX equity in a spectrum sale, is up over 500% in the past year, the Financial Times reported. Auditor KPMG flagged substantial doubt about EchoStar's ability to continue as a going concern in its 2025 annual report.
A Spaghetti Cannon Of New ETFs

Morningstar's Ben Johnson likened the wave of new SpaceX-themed ETF filings to an "ETF spaghetti cannon" approach of "ready, fire, aim." Neuberger Berman's Renos Savvides told the Financial Times the speculative activity "feels a bit like 2021," the year before a major market slump.

SpaceX is expected to begin formal IPO marketing as soon as June 4 with pricing as early as June 11.

OpenAI, SpaceX funding fuels bets on next-wave Asian AI winners

Wealthy Asian investors are pouring billions into AI supply chain companies, betting that massive US fundraising rounds will lift the boats of Asian chipmakers and component suppliers.

When OpenAI closed its record $122 billion funding round on March 31, the biggest winners might not have been in San Francisco. They might have been in Seoul, Taipei, and Tokyo.

The money trail from Silicon Valley to Asia

In 2025, wealthy Asians poured $24.3 billion into global AI private funding rounds. That's nearly triple what they invested the year before. By April 2026, they had already committed an additional $950 million.

OpenAI's latest round valued the company at $852 billion post-money, with backing from Amazon, NVIDIA, and SoftBank. SpaceX has filed for what could become the largest IPO in history, targeting a valuation between $1.75 trillion and $2 trillion, with a listing anticipated around mid-June 2026.

South Korean chipmakers Samsung and SK Hynix have reportedly secured supply agreements tied to OpenAI's Stargate project, the ambitious infrastructure buildout designed to power the next generation of AI models.

Asia's own AI ecosystem is heating up

AI startup funding across Asia hit a record $11.2 billion in the first quarter of 2026 alone, heavily concentrated in Chinese companies. Anthropic, OpenAI's most prominent competitor, is reportedly working toward its own IPO while expecting its first profitable quarter on the back of strong revenue growth.

Why this matters for investors

The risk, of course, is concentration. If AI spending plateaus or the Stargate project encounters delays, the same supply chain exposure that looks brilliant today could become a liability. And the geopolitical overlay is impossible to ignore: trade tensions, export controls on advanced chips, and shifting alliances between the US and Asian manufacturing hubs add layers of uncertainty that pure financial analysis can't fully capture.

OpenAI, SpaceX funding fuels bets on next-wave Asian AI winners

Massive US tech IPOs and AI infrastructure deals are sending billions toward Asian chipmakers, with Samsung and SK Hynix emerging as the biggest beneficiaries.

The biggest tech fundraises in history are happening in the US. The biggest winners might be in Asia. A wave of capital flowing into American AI companies, headlined by OpenAI's Stargate project and SpaceX's impending IPO, is creating a gravitational pull on Asian component suppliers. Samsung Electronics and SK Hynix, South Korea's memory chip titans, have seen their stock prices explode this year as investors connect the dots between US AI ambitions and the Asian companies that actually build the hardware making it all possible.

The trillion-dollar supply chain

On October 1, 2025, Samsung Electronics and SK Hynix signed letters of intent with OpenAI to supply high-bandwidth memory chips for the Stargate project. The estimated value of the Stargate-related chip supply deals exceeds $71 billion over four years, roughly 100 trillion Korean won. OpenAI's infrastructure roadmap calls for 900,000 semiconductor wafers to be produced by 2029, with discussions underway about potentially establishing data centers in South Korea itself.

The market has noticed. SK Hynix shares have climbed 258% year-to-date, while Samsung Electronics is up 158% over the same period. Both companies now carry valuations exceeding $1 trillion. South Korea's benchmark KOSPI index hit record highs in May 2026, propelled almost entirely by AI-related capital expenditure optimism and the performance of memory chip stocks.

SpaceX enters the picture

SpaceX, which merged with Elon Musk's xAI, filed for its IPO in May 2026 with a target valuation between $1.75 trillion and $2 trillion. The company aims to raise up to $80 billion during its offering, which would make it the largest IPO in history by a considerable margin. The planned Nasdaq debut is set for June 12, under the ticker SPCX.
Samsung and SK Hynix are two of only three companies on Earth capable of producing the high-bandwidth memory that modern AI systems require. The third is Micron, based in Idaho.

The Asian AI trade takes shape

The $71 billion in Stargate-related deals alone represents a transformative amount of revenue for Samsung and SK Hynix. OpenAI isn't the only buyer. Anthropic is advancing its own IPO timeline. Meta, Google, and Microsoft continue to pour billions into AI infrastructure. Each of these companies needs the same type of advanced memory chips, and the supply base is remarkably concentrated.

The KOSPI's record highs reflect this concentration of demand meeting a narrow supply base. South Korean markets have effectively become a leveraged bet on global AI spending, with memory chip stocks acting as the primary vehicle for that exposure.

What this means for investors

On the bullish side, the demand visibility is exceptional. Samsung and SK Hynix have signed agreements with specific delivery timelines stretching to 2029.

The bearish case centers on valuation and concentration risk. Both companies are already up triple digits year-to-date. The 258% run in SK Hynix means a lot of the good news is already reflected in the share price, and any hiccup in AI spending plans could trigger sharp reversals.

There's also geopolitical risk that shouldn't be ignored. South Korea's chipmakers sit at the intersection of US-China tech competition. Export controls, trade tensions, or shifts in US industrial policy could complicate the narrative of Asian suppliers riding the American AI wave.
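
For readers who want to sanity-check the Stargate and share-price figures quoted in this article, here is a rough sketch of the arithmetic. It uses only the rounded numbers given above, so the results are approximate. The even split across four years is an assumption made for illustration, not something the article states, and the names `DEAL_USD`, `DEAL_KRW`, and `price_multiple` are hypothetical.

```python
# Rounded figures quoted in the article.
DEAL_USD = 71e9    # Stargate-related chip deals: "exceeds $71 billion"
DEAL_KRW = 100e12  # "roughly 100 trillion Korean won"
YEARS = 4          # "over four years"

# Exchange rate implied by the two rounded deal figures (won per dollar).
implied_krw_per_usd = DEAL_KRW / DEAL_USD

# ASSUMPTION: deal value spread evenly across the four years.
avg_usd_per_year = DEAL_USD / YEARS


def price_multiple(pct_gain: float) -> float:
    """Convert a percentage gain into a multiple of the starting price."""
    return 1 + pct_gain / 100


print(f"Implied exchange rate: {implied_krw_per_usd:,.0f} won per dollar")
print(f"Average deal value per year: ${avg_usd_per_year / 1e9:.2f} billion")
print(f"SK Hynix price multiple: {price_multiple(258):.2f}x")
print(f"Samsung price multiple: {price_multiple(158):.2f}x")
```

A 258% gain means the share price is about 3.6 times its level at the start of the year, which is a useful way to read the "already reflected in the share price" point in the bearish case.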

SpaceX, OpenAI Windfall Fuels Bets on Next-Wave Asian AI Winners

Their thesis is that the billions of dollars that SpaceX, Anthropic PBC and OpenAI are set to raise will kick off a fresh round of technology spending -- with a good chunk of that finding its way to the makers of server parts, specialized materials, cooling components and power equipment. For stock markets in Asia, that could be the catalyst for the next leg of a historic rally.