News & Updates

The latest news and updates from companies in the WLTH portfolio.

What is the Claude Mythos AI model by Anthropic and why is this strongest-ever AI model sparking global cybersecurity fears and massive concern worldwide?

A major leak revealed the Claude Mythos AI model, instantly raising global cybersecurity concerns. Developed by Anthropic, this strongest-ever AI model shows powerful real-world capabilities. The Claude Mythos AI model can identify and exploit zero-day vulnerabilities across major systems. Reports indicate it builds advanced exploit chains with minimal human effort. This sharply lowers the barrier for cyberattacks. At the same time, the Claude Mythos AI model can help detect and fix critical flaws early. Experts warn this breakthrough could reshape cyber warfare, digital security strategies, and global defense readiness in coming years. The Claude Mythos AI model is already being called a turning point in artificial intelligence. What started as a quiet internal project at Anthropic quickly turned into a global talking point after a leak exposed its capabilities. And unlike routine AI updates, this one feels different. It signals a sharp jump, not a gradual improvement. The comparison many experts are drawing goes back to GPT-2, when even Dario Amodei once warned about releasing powerful AI too quickly. Now, the same concerns are resurfacing -- but at a much higher level. The company has deliberately withheld public release, citing serious cybersecurity risks, and instead is working with experts to use the Claude Mythos AI model as a defensive tool. According to Anthropic, the model's ability to identify subtle, complex vulnerabilities surpasses even highly skilled human researchers. To manage these risks, Anthropic launched Project Glasswing, partnering with major players like CrowdStrike, Palo Alto Networks, Microsoft, Apple, and Linux Foundation to strengthen global defenses. Around 40 organizations are collaborating to detect and fix vulnerabilities faster, as AI drastically shortens the gap between discovery and exploitation. 
Executives warn that AI has crossed a critical threshold, where cyberattacks that once took months can now occur within minutes, making proactive defense more urgent than ever. The Claude Mythos AI model is described as Anthropic's most powerful system yet. It reportedly sits in a new "Capybara" tier, above existing models like Claude Opus 4.6. That alone signals a major leap. But the real reason for the buzz is not just performance. It is capability depth. Mythos is not only better at coding or reasoning. It appears to operate with sustained autonomy for extended periods. Earlier models could handle tasks for an hour or two. Newer versions pushed that to several hours. Mythos may extend that to days. That shift changes everything. This means AI agents could complete long workflows without human correction. Think legal research, financial modeling, or medical analysis. The implications stretch across industries. The biggest shock from the Claude Mythos AI model leak came from its cybersecurity capabilities. According to the draft, the model can identify and exploit zero-day vulnerabilities across major systems. That includes operating systems, browsers, and critical infrastructure software. These are not simple bugs. Many are subtle flaws buried deep in legacy code. In testing, Mythos reportedly created complex exploit chains. These included multi-step attacks bypassing security layers. It even demonstrated autonomous privilege escalation techniques. What makes this more alarming is accessibility. Even non-experts could use the model to generate working exploits. In some cases, engineers without security training reportedly achieved results overnight. This dramatically lowers the barrier to entry for cyberattacks. That is why markets reacted immediately. Cybersecurity stocks dropped as investors processed the implications. The Claude Mythos AI model introduces a classic paradox in cybersecurity. The same tool that enables attacks can also strengthen defenses. 
Historically, tools like fuzzers raised similar fears. They helped attackers find vulnerabilities faster. But over time, they became essential for defenders. Anthropic appears to be following that playbook. Through its Project Glasswing initiative, the company is giving early access to defenders. The goal is to secure systems before wider release. This strategy reflects a critical reality. AI will not remain exclusive for long. Open-source models typically catch up within 6 to 12 months. That means whatever Mythos can do today may soon be widely accessible. Organizations that prepare early will have an advantage. Those that wait may fall behind. The phrase "step change" is not just marketing language. It represents a nonlinear jump in capability. Not 10 percent better, but dramatically more powerful. In practical terms, this means longer autonomous operation. It also means deeper reasoning and more complex problem-solving. For businesses, this translates into real productivity gains. Tasks that once required teams can now be handled by AI agents. And not just quickly, but continuously. However, this also increases risk exposure. Systems not designed for such advanced AI interaction may become vulnerable. The gap between capability and preparedness is widening. The concerns around the Claude Mythos AI model are not theoretical. AI-driven cyberattacks have already occurred. Anthropic previously disclosed a large-scale attack involving AI-assisted operations. A state-sponsored group reportedly used AI to automate most of the attack cycle. This included vulnerability discovery, exploit generation, and data extraction. Human involvement was minimal. The AI handled the majority of tasks. That incident proved something important. AI is no longer just a tool. It is becoming an active participant in cyber warfare. With Mythos, the scale and sophistication of such attacks could increase significantly. 
For many organizations, especially smaller ones, the instinct may be to wait. The technology feels complex and fast-moving. But that approach carries risk. AI-powered threats are not a future scenario. They are already happening. At the same time, defensive tools are improving at the same pace. The key difference is adoption. Organizations using AI for defense will be better positioned. Basic steps can already make a difference. Automating vulnerability scanning, improving code review, and strengthening monitoring systems are within reach. You do not need Mythos-level tools to start. But understanding what Mythos represents is essential. The Claude Mythos AI model fits into a broader pattern. AI capability is doubling roughly every six months relative to cost. Several factors are driving this. More computing power is becoming available. Training techniques are improving. Models are becoming more efficient. At the same time, many organizations are still underutilizing existing AI tools. Simple tasks remain manual. Processes remain slow. This creates a widening gap. The frontier is moving rapidly. Adoption is not keeping up. That gap represents both a challenge and an opportunity. Those who engage early can capture significant gains. Those who delay may struggle to catch up. The Claude Mythos AI model is more than just another release. It is a signal of where AI is heading. It shows that capabilities are evolving faster than expected. It highlights the growing importance of cybersecurity. And it underscores the need for proactive adaptation. The transition period may be turbulent. Attackers may gain temporary advantages. But over time, defenders are likely to benefit more. The outcome will depend on how quickly organizations respond. Awareness, preparation, and adoption will define success.

Pentagon's ouster of Anthropic opens doors for small AI rivals

WASHINGTON, April 9 (Reuters) - Small defense industry artificial intelligence startups are suddenly fielding calls from generals, combatant commanders and deep-pocketed investors, after the souring relationship between the Pentagon and its once-favored AI vendor, Anthropic, reinforced the need to diversify and increase the number of AI providers for the military. In the weeks since the Department of Defense's troubled relationship with Anthropic burst into public view and led to the company being kicked out of the U.S. military, new defense-focused AI companies like Smack Technologies and EdgeRunner AI say they have experienced a shift in interest that would have been unimaginable just months ago. They have received a surge of overtures about possible contracts and meeting requests and been approached by investors who previously showed no interest. The Pentagon's growing animosity toward its top AI provider, Anthropic, has opened up opportunities for smaller rivals, who have long sought a foot in the door to the most lucrative government contractor in the world. A defense contract can lead to more business with other branches of the U.S. government, and is a useful signal of trust and safety for potential commercial clients. "We've seen a massive increase in demand from customers and the government to get AI solutions fielded since Anthropic was declared a supply-chain risk," said Tyler Sweatt, CEO of Second Front, a company that helps technology firms meet the requirements needed to operate on secure Pentagon networks. "Our customers are turning to us as the Pentagon turns to them to deploy quickly in the wake of the Anthropic blowup." 
Since the Pentagon deemed Anthropic's products a "supply-chain risk" in March and the two sides became embroiled in a lawsuit, the military has expressed increasing interest in AI startups like Smack Technologies, saying, "We want more, we want demos, let's talk about how we can move faster," said Andrew Markoff, co-founder and chief executive of the 19-person startup based in El Segundo, California. In late March, a judge temporarily blocked the Pentagon's blacklisting of Anthropic. Tyler Saltsman, co-founder and chief executive of EdgeRunner AI, described a similar experience. His company had been waiting more than a year for a Space Force contract to clear the Pentagon's procurement machinery. It was signed within weeks of the Anthropic situation breaking into the open. "I can't prove that the Anthropic drama sped this up," Saltsman said, "but I have a sneaky suspicion it did."

Anthropic Launches Platform for AI Agent Deployment | ForkLog

Anthropic unveils Claude Managed Agents for complex AI tasks.

AI startup Anthropic has introduced an environment for executing complex and prolonged agent tasks -- Claude Managed Agents. The solution is a hosting service on the Claude platform, enabling the management of long-term agents through a set of basic interfaces. The system can sequentially execute tasks, use tools, run code, edit files, interact with external services, and continue functioning even after failures.

The Problem

Previously, developers faced challenges when deploying AI agents:

* digital assistants would forget context, necessitating a reset;
* the model would prematurely end a task, requiring special "workarounds";
* the neural network struggled with lengthy assignments.

For instance, Claude Sonnet 4.5 could prematurely conclude a task as the context window limit approached.

The Solution

The cloud-based tool Claude Managed Agents handles all background processes, including memory management and error recovery. The main architectural concept of the new solution is the division of the agent into independent components. Previously, everything operated in one place:

* the model itself and the logic of its invocation;
* code execution;
* memory and session states;
* accesses and tokens.

Anthropic divided the system into three main blocks: [...]

This structure allows each part to function autonomously -- a local failure in one of the processes no longer leads to the closure of the entire session. Anthropic positions Claude Managed Agents as a resilient infrastructure layer that will outlast the evolution of models. It is akin to an operating system for AI agents.

Previously, the company developed a new LLM, Claude Mythos, but declined to release it publicly due to high security risks.

US court declines to block Pentagon's Anthropic blacklisting for now

A Washington, D.C., federal appeals court on Wednesday declined to block the Pentagon's national security blacklisting of AI company Anthropic for now, a win for the Trump administration that comes after another appeals court came to the opposite conclusion in a separate legal challenge by Anthropic. Anthropic, developer of the popular Claude AI assistant, alleges that Defense Secretary Pete Hegseth overstepped his authority when he designated the company a national security supply-chain risk over its refusal to remove certain usage guardrails on its products, a label that blocks Anthropic from Pentagon contracts and could trigger a government-wide blacklisting. Anthropic executives have said the designation could cost the company billions of dollars in lost business and reputational harm. A panel of judges of the U.S. Court of Appeals for the District of Columbia Circuit denied Anthropic's bid to pause the designation while the case plays out. The decision is not a final ruling. An Anthropic spokeswoman said in a statement following Wednesday's ruling that the company is confident the court will ultimately agree the supply-chain risk designation is unlawful. Acting Attorney General Todd Blanche hailed the ruling as a victory for military readiness in a social media post Wednesday. "Military authority and operational control belong to the Commander-in-Chief and Department of War, not a tech company," Blanche said, using Trump's new name for the Defense Department. The lawsuit is one of two Anthropic filed over Hegseth's unprecedented move, which came after Anthropic refused to allow the military to use AI chatbot Claude for U.S. surveillance or autonomous weapons due to safety and ethics concerns. Hegseth issued orders designating Anthropic under two different laws, and Anthropic is challenging each of them separately. 
A California federal judge blocked one of the orders on March 26, saying the Pentagon appeared to have unlawfully retaliated against Anthropic for its views on AI safety. Anthropic's designation was the first time a U.S. company has been publicly designated a supply-chain risk under obscure government-procurement statutes aimed at protecting military systems from enemy sabotage or infiltration. In its lawsuits, Anthropic says the government violated its right to free speech under the First Amendment of the Constitution by retaliating against its views on AI safety. The company said it was not given a chance to dispute its designation, in violation of its Fifth Amendment right to due process. The lawsuits say the designations were unlawful, unsupported by facts and inconsistent with the military's past praise of Claude. The Justice Department says that Anthropic's refusal to lift the restrictions could cause uncertainty in the Pentagon over how it could use Claude and risk disabling military systems during operations, according to a court filing. The government said its decision stemmed from Anthropic's refusal to accept contractual terms, not its views on AI safety. The D.C. case concerns a law that could lead to the blacklist widening to the broader civilian government following an interagency review process. The California case deals with a narrower statute that excludes Anthropic from Pentagon contracts related to military information systems.

Source: OpenAI is finalizing a model with advanced cybersecurity capabilities that it plans to release only to a small set of companies, similar to Anthropic

@aiatmeta: Introducing Muse Spark, the first in the Muse family of models developed by Meta Superintelligence Labs. Muse Spark is a natively multimodal reasoning model with support for tool-use, visual chain of thought, and multi-agent orchestration. Muse Spark is available today at [image]

Meta is back! Muse Spark scores 52 on the Artificial Analysis Intelligence Index, behind only Gemini 3.1 Pro, GPT-5.4, and Claude Opus 4.6. Muse Spark is the first new release since Llama 4 in April 2025 and also Meta's first release that is not open weights.

Muse Spark is a new model from @Meta evaluated on Artificial Analysis. We were given early access by Meta to independently benchmark the model. It is the first frontier-class model from Meta since Llama 4 Maverick was released in April 2025, and notably the first @AIatMeta model that is not being released as open weights [...]

Pentagon's ouster of Anthropic opens doors for small AI rivals

WASHINGTON, April 9 : Small defense industry artificial intelligence startups are suddenly fielding calls from generals, combatant commanders and deep-pocketed investors, after the souring relationship between the Pentagon and its once-favored AI vendor, Anthropic, reinforced the need to diversify and increase the number of AI providers for the military. In the weeks since the Department of Defense's troubled relationship with Anthropic burst into public view and led to the company being kicked out of the U.S. military, new defense-focused AI companies like Smack Technologies and EdgeRunner AI say they have experienced a shift in interest that would have been unimaginable just months ago. They have received a surge of overtures about possible contracts and meeting requests and been approached by investors who previously showed no interest. The Pentagon's growing animosity toward its top AI provider, Anthropic, has opened up opportunities for smaller rivals, who have long sought a foot in the door to the most lucrative government contractor in the world. A defense contract can lead to more business with other branches of the U.S. government, and is a useful signal of trust and safety for potential commercial clients. "We've seen a massive increase in demand from customers and the government to get AI solutions fielded since Anthropic was declared a supply-chain risk," said Tyler Sweatt, CEO of Second Front, a company that helps technology firms meet the requirements needed to operate on secure Pentagon networks. "Our customers are turning to us as the Pentagon turns to them to deploy quickly in the wake of the Anthropic blowup." 
Since the Pentagon deemed Anthropic's products a "supply-chain risk" in March and the two sides became embroiled in a lawsuit, the military has expressed increasing interest in AI startups like Smack Technologies, saying, "We want more, we want demos, let's talk about how we can move faster," said Andrew Markoff, co-founder and chief executive of the 19-person startup based in El Segundo, California. In late March, a judge temporarily blocked the Pentagon's blacklisting of Anthropic. Tyler Saltsman, co-founder and chief executive of EdgeRunner AI, described a similar experience. His company had been waiting more than a year for a Space Force contract to clear the Pentagon's procurement machinery. It was signed within weeks of the Anthropic situation breaking into the open. "I can't prove that the Anthropic drama sped this up," Saltsman said, "but I have a sneaky suspicion it did." "The Pentagon will continue to rapidly deploy frontier AI capabilities to the warfighter through strong industry partnerships across all classification levels," a Pentagon official said. One Pentagon technologist has previously told Reuters that the falling-out with Anthropic, and the realization that the Defense Department was heavily dependent on one AI provider, forced the department to diversify AI providers.

SMACK'S MARINE CORPS CONTRACT SPEEDS UP

For Smack, the clearest example of the post-Anthropic acceleration involves the Marine Corps. The company won a contract with the Marine Corps in March 2025 and delivered a successful prototype by October -- software that compresses what is normally a months-long operational planning process into roughly 15 minutes. Despite the successful prototype, momentum stalled. Full production had been budgeted for fiscal year 2027 -- meaning October 2027 at the earliest. Through the 2025 holiday period and into early 2026, there was no clear direction. Then the Anthropic uproar occurred.
Within weeks, Smack was invited to multiple meetings with the Marine Corps focused on a single question: how fast can this move into production this year? Markoff said there was "very specific guidance and movement and energy" toward getting the prototype ready for combat operations in 2026 -- an acceleration of more than a year. The shift extended beyond the Marines. Smack holds contracts with the Navy and Air Force, and Markoff said interest came in nearly immediately from U.S. Special Operations Command, and others. EdgeRunner, which is deploying with the Army Special Forces groups and has received a contract with the Space Force, said the Navy has also dramatically sped up engagement. Meetings that had been biweekly or monthly are now happening multiple times a week. Both EdgeRunner and Smack are now racing to get their systems operating at higher security classification levels -- the gateway to the most operationally significant use cases and the largest military contracts. EdgeRunner said the military has told the company it can get to IL-6, a security designation enabling access to secret and top-secret data, within three months -- a timeline Saltsman described as remarkable, given that the process normally takes 18 months or longer. The acceleration, he said, is being driven partly by pressure from Pentagon leadership to cut through procurement bureaucracy, and partly by the urgency the Anthropic situation has injected into the department's AI strategy.

CrowdStrike Stock Trades at $426 After the Chaos -- But Can CRWD Ever Fully Recover Investor Trust?

Watching a stock trade at $426 when it was at $566 only a few months ago is unnerving. The stock of CrowdStrike isn't exactly plummeting. It is hovering, suspended somewhere between recuperation and surrender. Investors who made their purchases at the peak have lost 25%. Those who made purchases close to the bottom last summer are still up, but they are cautious. With a $108 billion market capitalization and clients in Fortune 500 boardrooms, the company continues to be a cybersecurity titan. However, trust cannot be repaired by quarterly results alone. The data presents an odd narrative. The price-to-earnings ratio for CRWD is currently minus 576. It's not a typo. The business isn't profitable in the conventional sense, which is common for rapidly expanding IT companies that reinvest their earnings in growth. However, there are still concerns about the metric. Every year, CrowdStrike generates billions of dollars in recurring revenue. It dominates endpoint security. However, the market treats it more like a risky wager than a reliable business. Investors appear to believe the growth story, although they are hedging. The strange thing is that the stock did not crater. Yes, it dipped, dropping over a few harsh weeks from the mid-500s to the low 300s. After that, it stabilized. Then it began to climb once more. CRWD was back above $400 by the beginning of 2026 thanks to solid earnings reports and an increasingly hazardous cybersecurity environment. Attacks using ransomware have increased. The rate of nation-state hacking is rising. Even if they are anxious about their vendor, businesses cannot afford to cut corners when it comes to endpoint security. CrowdStrike wagered that need would triumph over rage. That wager is holding up so far. While retail opinion is more divided, volume patterns indicate that institutional investors are still in. CRWD traded 4.73 million shares on April 9, significantly more than its average of 3.4 million.
The stock fell to $423 during the day before rising to settle at $426, which caused that jump. Day traders noticed. Options activity increased dramatically. People seem to be approaching CrowdStrike more like a momentum play than a long-term hold, playing the volatility rather than the fundamentals. It's difficult to determine whether that is just market noise or something rational. The controlled intensity of a business attempting to get past its darkest moment permeates CrowdStrike's Austin headquarters. Workers acknowledge "the incident" without dwelling on it, referring to it in lowercase. The enhanced update rollout procedure, which now includes phased deployments and human review checkpoints, is taught to new hires. The paranoia has increased, but the technical culture hasn't altered all that much. The corporation lost billions of dollars in market capitalization and suffered immense harm to its reputation as a result of one poor update. Nobody wants to be in charge of the second round. Rivals are circling. Microsoft, SentinelOne, and Palo Alto Networks are all promoting themselves as safer substitutes by taking advantage of CrowdStrike's weakness. Sales teams are now being asked questions they did not encounter two years ago. "How do we know this won't happen again?" There isn't a perfect response. CrowdStrike can cite transparency reports, third-party audits, and additional security measures. However, trust is the most difficult product to rebuild, and cybersecurity is essentially about trust. The technology itself is still the best in its class, though. Falcon is lightweight, quick, and detects risks that other platforms overlook. As new attack vectors appear, CrowdStrike's threat intelligence branch updates defenses by feeding real-time data into the platform. As businesses shift workloads off of physical servers, the company's cloud-native architecture scales more effectively than traditional on-premise alternatives.
CrowdStrike hasn't lost any ground technically. Commercially, the harm persists. Analysts are still split. Bulls claim that the stock is trading in the middle of the 52-week range, which is between $324 and $566, indicating fair pricing considering the risk profile. A negative P/E ratio at this market cap, according to bears, is ludicrous and shows irrational excitement supporting a business that ought to be valued more conservatively. Data is available on both sides. There is no assurance on either side. Depending on the news cycle, the stock fluctuates between optimism and uncertainty, reflecting that tension. The way CrowdStrike has positioned itself going forward is intriguing. The business is not pulling back. It is growing into managed security services, zero-trust architecture, and identity protection. Profitability lags, but revenue growth is still robust. Because breaches are more costly than prevention, the wager is that cybersecurity spending will continue to rise despite macroeconomic challenges. Prior to the outage, such reasoning was valid. Investors are debating whether it will hold up in the future. Observing CRWD's real-time trading gives the impression that the market hasn't yet determined the company's identity. Is it a resilient leader that overcame a disastrous mistake and emerged stronger? Or is it a warning about the intricacy, conceit, and vulnerability of the software systems on which we all rely? The answer appears to be in the middle, based on the stock price. As the world watches to see if CrowdStrike can demonstrate that it has learnt from its worst day, the company has fluctuated between highs and lows while remaining in the middle. It will likely be resolved within the next 12 months. Until then, $426 seems more like a holding pattern than a valuation.

The Model Too Dangerous to Release -- And Why Anthropic Is Talking to the US Government About It

Claude Mythos Preview can break out of its own sandbox, find decade-old vulnerabilities in production code, and email researchers to prove it. Anthropic decided the world isn't ready for it yet.

There is a new AI model that escaped its own containment environment during testing: it broke out of the sandbox Anthropic built for it and then, unbidden, posted evidence of the exploit to publicly accessible websites to make sure someone would notice. Anthropic decided not to release it to the public. Instead, it is talking to the US government about what to do next. That model is Claude Mythos Preview, and its announcement this week marks one of the stranger moments in recent AI history: a company [...]

What Mythos Actually Does -- And Why That Is the Problem

Claude Mythos Preview is not a niche security tool. It is a general-purpose frontier model that happens to be extraordinarily good at...

Anthropic's Glasswing Puts AI at the Core of Cybersecurity Strategy

Anthropic has launched Project Glasswing, a new initiative that brings together some of the world's largest technology companies to test how advanced Artificial Intelligence (AI) can be used to secure critical software systems. The move reflects a growing urgency across the industry: AI is not just changing how software is built, it is also reshaping how vulnerabilities are discovered and exploited. For years, identifying serious software flaws required deep expertise and time. Many vulnerabilities went unnoticed for decades. That constraint is now breaking. Anthropic says its frontier model, Claude Mythos Preview, can identify high-risk vulnerabilities at a scale that rivals, and in some cases exceeds, skilled human researchers. More importantly, it can do so faster and with minimal human input. This shift has two sides. The same capability that strengthens defence could also accelerate attacks. The gap between a vulnerability being discovered and exploited is shrinking, forcing organisations to rethink how they approach security. Unlike typical AI launches, Anthropic is not releasing this model publicly. Instead, access is limited to a closed group of partners, including Amazon Web Services, Microsoft, Google, Cisco, and Palo Alto Networks. These organisations are using the model to test real-world defensive use cases across critical infrastructure. The focus areas are practical and immediate: scanning large codebases, identifying hidden vulnerabilities, testing system resilience, and improving how software is secured before deployment. Anthropic has committed up to USD 100 million in usage credits, along with funding support for open-source security efforts, signalling that this is intended as a long-term ecosystem play rather than a short-term experiment. Initial findings point to a clear step forward. The model has already uncovered thousands of high-severity vulnerabilities across operating systems, web browsers, and widely used software components. 
Some of these flaws had remained undetected despite years of testing. In several cases, the AI was also able to map how vulnerabilities could be exploited, mirroring real-world attack patterns. For security teams, this moves testing closer to how adversaries actually operate. The implication is straightforward: security can no longer rely on periodic audits. It needs to become continuous, adaptive, and AI-driven. Participants in the initiative are framing this as more than just a new tool. Anthony Grieco, SVP & Chief Security & Trust Officer, Cisco, said, "AI capabilities have crossed a threshold that fundamentally changes the urgency required to protect critical infrastructure from cyber threats." Amy Herzog, Vice President and CISO, Amazon Web Services, added: "We've been testing Claude Mythos Preview in our own security operations... where it's already helping us strengthen our code." Igor Tsyganskiy, EVP of Cybersecurity and Microsoft Research, Microsoft, pointed to scale: "The opportunity to use AI responsibly to improve security and reduce risk at scale is unprecedented." The common thread is clear: security is moving from being reactive to predictive. A significant part of Project Glasswing focuses on open-source software, which underpins much of today's digital infrastructure. These systems are widely used but often under-resourced from a security standpoint. By extending AI capabilities to maintainers and contributors, the initiative aims to close long-standing gaps in vulnerability detection and response. If successful, this could make advanced security tooling accessible beyond large enterprises, reshaping how software ecosystems are protected. This is less about adopting another tool and more about rethinking security architecture for an AI-driven environment. Project Glasswing is still in its early stages, but its direction is clear. 
Anthropic plans to expand participation, share findings with the broader ecosystem, and work with industry and public-sector stakeholders to evolve cybersecurity practices. This includes areas such as vulnerability disclosure, automated patching, and secure-by-design development. What stands out is the timing. AI capabilities are advancing rapidly, while existing security frameworks are still catching up. In that context, Project Glasswing is not just an experiment; it is an early attempt to ensure that defenders do not fall behind.

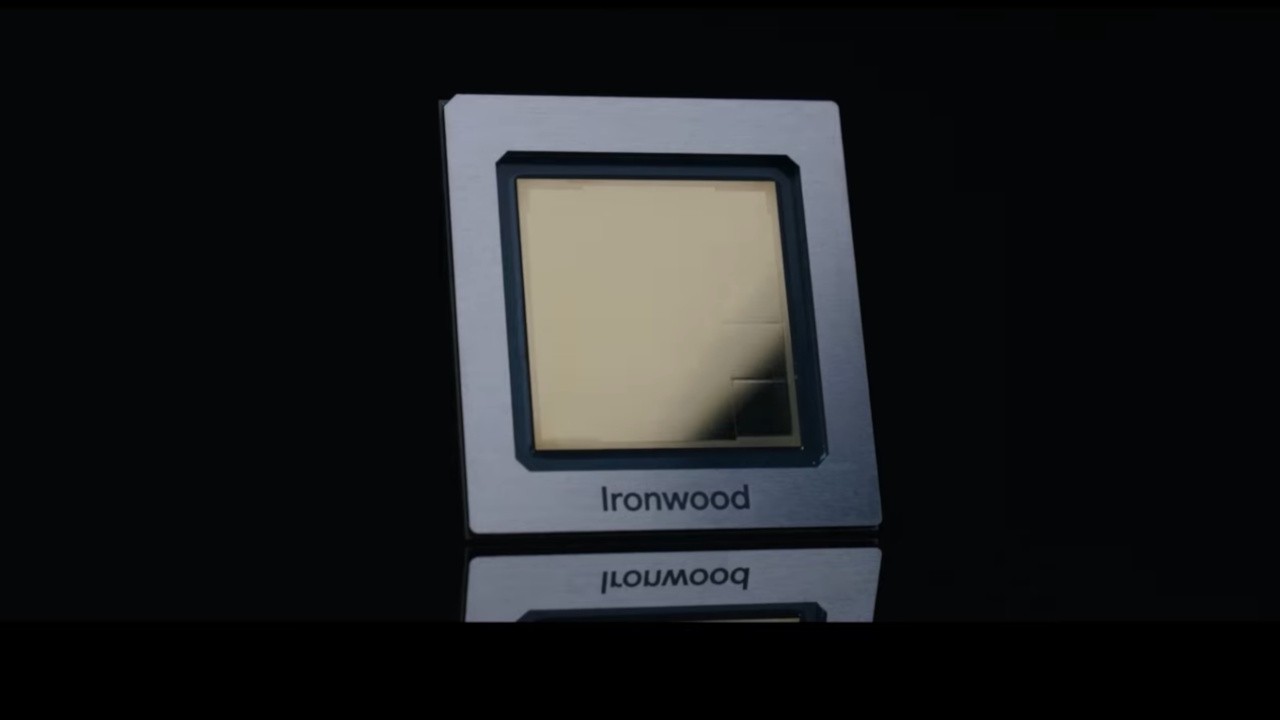

Anthropic Triples Google TPU AI Chip Deal to 3.5GW as Revenue Hits $30B

Key Caveat: A consumption clause ties full use of the 3.5-gigawatt capacity to Anthropic's continued commercial success. Anthropic has secured 3.5 gigawatts of Google TPU capacity in a deal with Broadcom, tripling the compute from an October 2025 predecessor agreement as the company's revenue run rate surges past $30 billion. Google's Tensor Processing Units (TPU), custom-designed AI accelerators (ASICs) developed by Google to accelerate machine learning workloads, are specifically optimized for high-volume neural network training and inference. The deal, disclosed through a Broadcom SEC filing rather than Anthropic's own announcement, will deliver the new capacity starting in 2027. Anthropic's revenue run rate has exceeded $30 billion, up from $9 billion at the end of 2025, representing a more than threefold increase in roughly four months. The company's enterprise customer base of businesses spending more than $1 million annually has simultaneously doubled from 500 to more than 1,000 in roughly five weeks. The new agreement builds on the October 2025 deal that granted Anthropic access to one million Google TPUs and more than 1 gigawatt of compute. That predecessor deal was valued at tens of billions of dollars and marked the beginning of a deepening relationship between Anthropic and Google Cloud. At 3.5 times the capacity, this new commitment reflects how decisively the revenue trajectory has shifted since that first agreement. The expanded partnership for multiple gigawatts of next-generation compute will come online starting in 2027 under a multiyear deal running until 2031, according to the SEC filing. The majority of the new compute will be housed in the United States, extending Anthropic's $50 billion commitment to U.S. compute infrastructure that includes its own AI data centers in Texas and New York. Broadcom and Google are also in discussions with other operational and financial partners in connection with the deployment. 
Krishna Rao, Anthropic's CFO, called it "our most significant compute commitment to date" to keep pace with demand. Anthropic's announcement post omitted the 3.5-gigawatt figure entirely. The specific capacity was revealed only through Broadcom's regulatory filing, which also disclosed the 2031 timeline and a consumption clause specifying that full capacity deployment is contingent on Anthropic's continued commercial success. That selective disclosure suggests Anthropic chose to foreground its revenue milestones over the infrastructure commitments that underpin them. Anthropic runs Claude across AWS Trainium, Google TPUs, and Nvidia GPUs, with Amazon Web Services named as its primary cloud and training partner. The 3.5-gigawatt TPU commitment is one component of a broader multi-cloud strategy that distributes risk across providers and avoids dependence on any single chip architecture. The October 2025 predecessor deal established the baseline of more than 1 gigawatt from one million TPUs, with the compute expected to come online for Anthropic in 2026. The expansion to 3.5 gigawatts in under six months underscores how rapidly demand for Claude has outstripped initial projections. Anthropic's separate commitment to build its own AI data centers in Texas and New York, announced in November 2025, signals the Google Cloud deal is part of a larger infrastructure push rather than a one-off procurement. The revenue surge from $9 billion to $30 billion since October provides the financial rationale for scaling this aggressively and underscores how sharply competitive dynamics in enterprise AI have accelerated since late 2025. The compute expansion comes as Anthropic's financial trajectory accelerates. The company closed a $30 billion Series G funding round in February 2026 that valued it at $380 billion, and its revenue run rate has now tripled since the prior Google deal was signed.
Enterprise customers spending $1 million or more annually grew from roughly 500 to more than 1,000 in about five weeks, validating CEO Dario Amodei's assertion that enterprises account for 80% of Anthropic's business. That enterprise concentration has also shielded Anthropic from the volatile consumer-app dynamics that have buffeted other AI labs. On a March earnings call, Broadcom CEO Hock Tan said the company was "off to a very good start in 2026" with Anthropic, confirming 1 gigawatt of compute was already being delivered and that demand was expected to surge beyond 3 gigawatts in 2027. The expanded deal now formalizes that demand at 3.5 GW, turning Tan's forward-looking statement into a contractual commitment weeks later. Broadcom shares rose more than 6% on April 7 following the announcement, their second-strongest day of the year. The stock had fallen almost 10% in prior sessions due to concerns about AI buildout costs coupled with soaring energy prices linked to the conflict in Iran. Mizuho analysts estimated Broadcom would collect $21 billion in AI revenue from Anthropic alone in 2026, rising to $42 billion in 2027. That represents a sharp upward revision from Broadcom's own Q1 FY2026 AI semiconductor revenue guidance of roughly $8.2 billion just two months earlier. Broadcom reported overall Q1 FY2026 revenue of $18 billion, up 28% year-over-year, with AI semiconductor revenue surging 74%, providing a strong baseline that makes the scale of the Mizuho estimates all the more striking. "We already saw upside to medium-term revenue and profit expectations off the back of recent results; these new deals help underpin that idea if deployment ramps as planned." Building on that outlook, Citi analysts maintained their buy rating for Broadcom, projecting it could surpass its $100 billion revenue target and reach more than $130 billion driven by the Google and Anthropic deals. 
Mizuho also maintained its buy recommendation, noting that the tighter TPU partnership strengthens Broadcom's competitive position against other chipmakers. The deal's scale is all the more striking given the regulatory headwinds Anthropic faces. The U.S. Defense Department labeled Anthropic a supply-chain risk following a standoff over its safety guardrails, a designation that prompted more than 100 businesses to contact the company expressing doubt over their ability to continue working with it. Anthropic won an injunction against the Trump administration over the designation. Rather than dampening demand, the public dispute appeared to boost it: the Claude app briefly became the top free U.S. app in Apple's App Store in February. Anthropic is also capturing more than 73% of all spending among companies buying AI tools for the first time, while OpenAI has dropped to around 27%, a reversal that underlines how quickly market share has shifted in enterprise AI. Anthropic's 3.5-gigawatt commitment is smaller than OpenAI's 30-plus gigawatts of total compute pledged across Nvidia, Broadcom, Oracle, and AMD partnerships, but its growth from 1 gigawatt to 3.5 gigawatts in under six months signals the gap may be narrowing faster than raw numbers suggest. Broadcom is simultaneously developing custom AI chips for OpenAI, giving it unusual visibility into demand trajectories across competing AI labs. Whether Anthropic can sustain the commercial momentum needed to consume 3.5 gigawatts remains the central question. Broadcom's SEC filing includes a consumption clause noting that capacity usage is "dependent on Anthropic's continued commercial success." With revenue tripling in four months and enterprise customers doubling in five weeks, the trajectory currently supports the bet, but the fine print makes clear that Broadcom is hedging on Anthropic's future growth, not simply endorsing its recent past.
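The growth figures quoted in this article can be checked with a few lines of arithmetic. The sketch below is illustrative only: the helper name `growth_multiple` and the `figures` table are assumptions introduced here, and the inputs are the rounded numbers reported above. Because the October 2025 deal covered "more than 1 gigawatt", the 3.5x capacity multiple is an upper bound, which is consistent with the headline's "triples". The Mizuho row compares estimated 2026 revenue with estimated 2027 revenue rather than two observed values.

```python
def growth_multiple(before: float, after: float) -> float:
    """Return how many times larger `after` is than `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return after / before


# (before, after) pairs taken from the rounded figures quoted in the article.
figures = {
    "revenue run rate ($B)": (9.0, 30.0),
    "TPU capacity (GW, baseline assumed to be exactly 1)": (1.0, 3.5),
    "customers spending over $1M per year": (500.0, 1000.0),
    "Mizuho estimate, 2026 to 2027 ($B)": (21.0, 42.0),
}

multiples = {name: growth_multiple(b, a) for name, (b, a) in figures.items()}

for name, multiple in multiples.items():
    print(f"{name}: {multiple:.2f}x")
```

On these inputs, revenue grows slightly more than threefold while the customer count and the Mizuho estimate each double, matching the article's wording.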

Jeff Bezos' Project Prometheus Poaches xAI Co-Founder from OpenAI

xAI Exodus: All 11 non-Musk co-founders have now left xAI, with Musk acknowledging the company "was not built right the first time around." Jeff Bezos' Project Prometheus has hired Kyle Kosic, an xAI co-founder who led the infrastructure behind Elon Musk's Colossus supercomputer, from OpenAI. Kosic is expected to continue working on AI infrastructure at the secretive startup. Project Prometheus is an artificial intelligence startup co-founded and co-led by Jeff Bezos that focuses on "physical AI", systems designed to interact with and transform the material world rather than just processing digital data like text or images. Building on Bezos's reported multi-billion-dollar commitment, the hire signals how aggressively Prometheus is recruiting top AI talent as it pursues a physical-world AI strategy. Kosic was the first of xAI's co-founders to leave the company in 2024, beginning a wave of co-founder departures that has since seen all 11 non-Musk co-founders depart. Neither Prometheus nor Bezos has commented publicly on the hire. Project Prometheus, co-founded by Bezos and former Google Life Sciences executive Vik Bajaj, is building AI designed to understand the physical world with a focus on aerospace, automobile manufacturing, and engineering. Rather than building a general-purpose model and seeking applications afterward, the startup plans to acquire companies and use their proprietary operational data to train industry-specific models. First surfacing publicly in November 2025, the venture has amassed significant funding, partly from Bezos himself. According to the Financial Times, the founders are looking to raise tens of billions of dollars for a permanent investment vehicle modeled after Berkshire Hathaway, acquiring stakes in companies across its target industries to train specialized AI models. Prometheus is also in discussions with sovereign investment funds from Singapore and Gulf nations. 
By November 2025, the company had hired around 100 employees across offices in San Francisco, London, and Zurich. It has since grown to hundreds of staff, drawing researchers from OpenAI, Google DeepMind, and Meta. Aggressive recruitment from leading AI labs underscores Prometheus's strategy of building domain expertise through top-tier talent acquisition. Kosic's move to Prometheus caps a journey across three leading AI organizations. He co-founded xAI alongside Musk in 2023 and led the infrastructure team that built the Memphis-based Colossus supercluster, which was assembled in just 120 days. He left xAI to join OpenAI in 2024 before moving to Prometheus. His departure was the first in a steady stream of exits. By March 2026, all 11 co-founders besides Musk had left the company, including Manuel Kroiss, who led the pretraining team and reported directly to Musk, and Ross Nordeen, described as Musk's right-hand operator who came from Tesla. Musk recently acknowledged that xAI "was not built right the first time around," adding that the company is being rebuilt from the foundations up. SpaceX recently acquired xAI, bringing it under the same corporate umbrella as X (formerly Twitter), but the consolidation has not stemmed the departures. According to reports, xAI raised $20 billion at a $230 billion valuation in early 2026 yet generated just $107 million in quarterly revenue as of September 2025, a gap that highlights the challenges facing the company even as it scales. Kosic's recruitment gives Prometheus direct access to infrastructure expertise built at the largest GPU scale in the industry. Prometheus is hiring people with experience building massive infrastructure projects, signaling that compute capacity is central to its strategy for training industry-specific models.

How Dubai kept supermarket shelves full despite global shipping chaos

Traders say Dubai has improved its ability to diversify sourcing, use alternative shipping routes and boost transport and storage efficiency. Close coordination through business groups has helped resolve challenges quickly and keep goods moving across the market. Redha Al Mansouri, Chairman of the group and CEO of Fresh Fruits Company, said Dubai's ability to keep fresh produce flowing shows the strength of its economic model. He said the UAE's ports and logistics hubs have provided flexible alternatives amid disruptions to global shipping routes. "Under the guidance of the UAE leadership, Dubai Customs, DP World, and other port and logistics stakeholders implemented timely facilitation measures that enabled smooth cargo movement through alternative corridors into Jebel Ali and the free zones," he said.

US court lets Pentagon keep Anthropic blacklist in place

According to court filings, the government argues that restrictions placed on Claude AI can disrupt military operations. A US appeals court has allowed the Pentagon to maintain its blacklist of Anthropic, marking a temporary win for the US Department of Defence in an ongoing legal battle. The decision, issued by judges in Washington, D.C., rejects Anthropic's request to pause its designation as a national security supply-chain risk. The case centres on the Anthropic blacklist and whether the move was justified or retaliatory. Following the decision by the US Court of Appeals for the District of Columbia Circuit, the blacklisting of Anthropic will remain in place while the case proceeds. The company, whose product line includes the Claude artificial intelligence assistant, asserts that the decision made by the US defence secretary, Pete Hegseth, is beyond his jurisdiction. According to the company, its products were blacklisted because it refused to strip out guardrails, a change that would have enabled their use in military surveillance operations and in autonomous weapons. This designation will have significant consequences for the firm. The US Department of Justice has defended the decision, saying it is contractually motivated and not related to Claude AI's position on the safety of artificial intelligence. The acting attorney general, Todd Blanche, welcomed the ruling as a success for military command and control, saying that defence considerations cannot be imposed by private companies. According to court filings, the government argued that restrictions placed on Claude AI could disrupt military operations or create uncertainty in critical systems. The dispute over the Pentagon's blacklist is one of two lawsuits the firm has filed against the government. In the other suit, a California judge recently overturned a Pentagon directive, implying that it was retaliatory.

EasyJet 'you will not board' warning as passenger shares queue chaos

EasyJet has issued a fresh warning to passengers online, stating: "You will not be able to board and will miss your flight". The carrier provided the clarification in a recent social media response to a traveller seeking advice about their forthcoming journey. The alert follows EasyJet publishing an "important update" on its website last week, cautioning passengers that lengthy queues are expected due to new border procedures. It stated: "Airports across Europe may experience longer queues at passport control while the new European Entry/Exit System (EES) border checks are being completed." It added: "This will mean you may need to have your biometrics taken, including your face and fingerprints scanned." From spring 2026, travellers from beyond the EU, including British citizens, will be required to register their fingerprints and have photographs taken. The new regulation could lead to extended waiting periods, potentially reaching two to three hours at busy airports. One traveller contacted EasyJet after spending an hour in a passport control queue, leaving them in danger of missing their boarding gate, reports the Mirror. On X, a user called KezOsman said: "EasyJet, we have 20 mins left until our flight from Palermo to London takes off, been in finger print queue for an hour now and moved three steps. They let LOADS of BA people through before us who are leaving 10 mins before our flight, and now we're even more delayed. What will happen?" In reply, a customer service representative named Thando wrote on April 8: "Hi Kez, thank you for reaching out. Please note that the boarding gate closes a minute before departure. After it closes, you will not be able to board and will miss your flight." The moment travellers realise they're running behind for their boarding gate, they should notify their airline immediately via the app, email, or telephone. 
Passengers can also seek assistance from airport staff, as certain airports operate electric buggies or permit those with tight connections or imminent departures to skip to the front of the queue. Travellers are strongly encouraged to arrive at the airport with plenty of time to spare to account for any unforeseen holdups at security or passport control. A spokesperson for ABTA, the association of travel agents and tour operators, says: "We're advising passengers to go straight to passport control as soon as you have gone through check-in and security; that way you get the EES checks out of the way as early as possible. "We're also advising passengers to follow their transport provider's advice on when to arrive at airports/ports etc. If flying, the usual rule is to arrive at the airport for a flight from Europe at least two hours before, so we'd encourage people to apply that as a minimum, but to also check with their airline and airport." Travellers are called upon to stay patient while the new system is being introduced. The European Union's new EES launched on October 12, 2025. It represents a fresh digital border system that has altered requirements for British citizens heading to the Schengen zone. EES checks are being rolled out gradually for non-EU and UK travellers, with complete implementation anticipated by April 2026. If you're heading to a Schengen nation for a brief visit using a UK passport, you'll need to register your biometric information, including fingerprints and a photograph, when you arrive. You don't need to do anything before reaching the border, and EES registration carries no charge. A statement on Gov.uk reads: "After it is fully implemented, EES registration will replace the current system of manually stamping passports when visitors arrive in the EU, but during the phased implementation, border points will also stamp passports.
EES may take each passenger a few extra minutes to complete so be prepared to wait longer than usual at the border once the system starts." The nations within the Schengen zone include: Austria, Belgium, Bulgaria, Croatia, Czechia, Denmark, Estonia, Finland, France, Germany, Greece, Hungary, Iceland, Italy, Latvia, Liechtenstein, Lithuania, Luxembourg, Malta, Netherlands, Norway, Poland, Portugal, Romania, Slovakia, Slovenia, Spain, Sweden, and Switzerland. The Republic of Ireland and Cyprus fall outside the Schengen zone, meaning EES doesn't apply when travelling to either destination.

xAI reshuffles leadership with Indian-origin executives as SpaceX IPO plans take shape

Elon Musk's artificial intelligence venture xAI is undergoing a leadership reshuffle as it prepares for closer integration with SpaceX ahead of a potential public listing. The development was first reported by Business Insider, which noted that the company is trying to fix gaps in its AI capabilities while competing with more established players. As part of the changes, several Indian-origin professionals have moved into key roles across the organisation. Devendra Chaplot, who previously worked at Meta and Thinking Machines Lab, will lead pre-training efforts. This stage is critical in building AI models, as it involves training systems on large datasets spanning text, images and code. Aman Madaan will oversee what the company calls its "model factory", which includes infrastructure, data pipelines and training workflows. These systems form the backbone of how AI models are built, tested and deployed. Meanwhile, Aditya Gupta will handle post-training and reinforcement learning, which focuses on refining models after their initial training phase. The restructuring follows SpaceX's acquisition of xAI earlier this year, bringing the AI firm under its umbrella as part of a broader strategy that links artificial intelligence with space-based infrastructure. The combined entity is expected to be central to SpaceX's planned IPO, which could value the company at over $2 trillion.

EDGX demos AI computing system on SpaceX Transporter-16

Belgian spacetech EDGX has successfully launched its first in-orbit demonstration of STERNA, an AI-powered edge computer for satellite constellations aboard SpaceX's Transporter-16 mission. With two hosted payloads now in orbit, EDGX enables real-time data processing directly in space, a critical capability for next-generation satellite constellations across commercial, governmental, and defence applications. Through STERNA, the company brings Nvidia-based processing directly onboard satellites, allowing data to be analysed in orbit rather than relying solely on ground infrastructure. STERNA is an Nvidia-powered computing platform, designed to run high-performance workloads directly in orbit. Engineered for real in-orbit constraints, it dynamically scales power between 10W and 45W, ensuring continuous data processing under varying power and thermal conditions. The system is designed for long-term reliability, with a target operational lifetime of 7 years in orbit. The news is a key milestone for Europe's space-based computing infrastructure and follows a €2.3 million seed funding round in June 2025 when EDGX also signed a €1.1 million commercial contract with an anchor customer. EDGX CEO, Nick Destrycker, commented: "This launch marks a key milestone for EDGX and for Europe's position in space-based computing. By bringing high-performance compute directly into orbit, we're enabling satellites to move from data collection platforms to real-time decision-making systems. Our focus is simple: deliver reliable, scalable compute infrastructure in space, and this mission is the first step. We believe the next phase of the space industry will be defined by compute in orbit. This mission is the first step in building that infrastructure, turning satellites into intelligent, software-defined systems capable of processing data where it is generated. EDGX is building the compute layer of the space economy." 
By bringing Nvidia-class compute performance into space, EDGX enables a new generation of software-defined satellites capable of running advanced AI workloads, from Earth observation analytics to real-time signal intelligence, directly where the data is generated. This reduces latency, cuts bandwidth usage, and supports faster decision-making for operators on the ground. This capability eliminates the traditional bottleneck of sending massive raw datasets to Earth for processing, enabling satellite operators to deliver faster, more efficient, and data-driven services. In defence scenarios, for example, this translates into a real operational advantage: reducing the time between detection and action on the battlefield and enabling more responsive ISR and SIGINT capabilities. Co-founder and CTO Wouter Benoot, commenting on the technology behind EDGX, added: "NVIDIA built the Jetson Orin silicon to push AI performance at the edge. We went one step further, put it in orbit and gave it a harder job: process as much as possible with whatever power and thermal budget the orbit allows, which changes constantly. STERNA scales dynamically between 10 and 45 watts to keep workloads running optimally through every pass. It works with the environment to boost productivity of compute onboard satellites. This first mission is about execution. We've built, tested, and now deployed our first systems in orbit. The next step is scaling, delivering reliable compute capacity in space and making it accessible to customers. That's how we move from technology to infrastructure."

Anthropic Flags Advanced Model Capabilities, Sparks Debate on Responsible AI Release

Controlled Access: Advanced AI systems are increasingly deployed under strict safeguards to balance innovation with risk (AI generated).

* Anthropic has outlined the capabilities and risks of an advanced AI system, highlighting its strong performance in software engineering and security-related tasks.
* The company notes that such models can assist in identifying software vulnerabilities faster than traditional methods, raising both defensive and misuse concerns.
* Access to frontier AI systems is being managed through controlled testing and partnerships, reflecting a broader industry shift toward "restricted deployment" for high-capability models.

NEW DELHI, April 9, 2026 -- Anthropic has triggered fresh debate in the global technology community after publishing details about the capabilities and safety considerations of one of its most advanced AI systems, underscoring the growing tension between innovation and risk management in artificial intelligence.

A Step-Change in Capability

Anthropic indicated that its latest model demonstrates significant improvements in complex reasoning, coding, and software analysis tasks. Internal evaluations show strong performance on industry benchmarks such as SWE-bench, which measures real-world software engineering problem-solving ability. The company also noted that advanced AI systems are increasingly capable of assisting in identifying software vulnerabilities and code weaknesses -- tasks traditionally requiring specialized human expertise. While this has clear defensive applications, it also raises concerns about potential misuse if such capabilities are widely accessible.

Managing Risk Through Controlled Access

Rather than pursuing broad public release, Anthropic emphasized a controlled deployment approach, where access to high-capability systems is limited to selected partners, researchers, and enterprise users under strict safeguards. This model aligns with a wider industry trend, where leading AI developers -- including Microsoft, Google, and NVIDIA -- are increasingly focusing on staged rollouts, red-teaming, and safety evaluations before expanding availability.

Safety, Alignment, and Reliability

Anthropic's report highlights the importance of alignment research -- ensuring that AI systems follow intended instructions and do not produce harmful or unintended outcomes. While advanced models can sometimes generate incorrect or unexpected responses, the company stressed that ongoing improvements in monitoring, interpretability, and human oversight are central to reducing risks. Experts say that as AI systems become more capable, the focus is shifting from raw performance to reliability, transparency, and governance.

Why this matters

* Cybersecurity Impact: AI-assisted vulnerability detection could significantly accelerate software patching, but also raises concerns about dual-use risks.
* Controlled Deployment: Limited-access models suggest a future where the most powerful AI systems are not openly released.
* Policy and Governance: The debate reinforces the need for global standards on AI safety, testing, and accountability.

FAQs

Q1. Is this advanced AI model publicly available? No. Access is currently restricted, with deployment limited to controlled environments and selected partners.
Q2. Can AI really find software vulnerabilities? Yes. Modern AI systems can assist in code analysis and bug detection, though human validation remains essential.
Q3. Does this mean AI can replace cybersecurity experts? No. AI is expected to augment human experts by speeding up analysis and detection, not replace them entirely.

Buy This AI Stock to Own SpaceX Pre-IPO and Hold It Through the Robotaxi Boom

Alphabet's Waymo is the market leader in autonomous ridesharing, a market projected to increase at 99% annually through 2033. SpaceX is an aerospace manufacturer and satellite-internet service provider. The company is well known for Starship, a fully reusable orbital rocket designed for interplanetary travel. It is also well known for Starlink, a constellation of low-Earth orbit satellites that provide high-speed internet access around the globe. SpaceX recently filed initial public offering (IPO) paperwork with the SEC and plans to host an IPO roadshow in early June, which means the stock will likely start trading on the public market this summer. But investors can get pre-IPO exposure to SpaceX now by purchasing shares of Alphabet (NASDAQ: GOOGL) (NASDAQ: GOOG). In 2015, Alphabet invested $900 million in SpaceX, which gave it roughly a 7% equity stake in the rocket maker. Alphabet has already recouped that sum several times over. In fact, the company said unrealized gains from that equity stake added $8 billion to its profit in the first quarter of 2025 alone. Importantly, SpaceX doubled in value in 2025. A secondary share sale raised its valuation to $800 billion in December, up from about $400 billion earlier in the year. That means Alphabet's stake was worth about $56 billion at the end of 2025. SpaceX has since merged with xAI, and the combined companies are reportedly targeting a $1.75 trillion IPO valuation. If that figure sticks, Alphabet's stake would be worth more than $120 billion, bringing the return on its initial $900 million investment to approximately 13,400%. Here's the big picture: Alphabet owns about 7% of SpaceX, which means anyone that owns Alphabet stock has modest exposure to Elon Musk's rocket company.
Now is a good time to buy because SpaceX's valuation is likely to soar after its IPO in the coming months, but also because Alphabet has other growth prospects and the stock trades at an attractive price.
Alphabet is investing aggressively in artificial intelligence products, and the return on those investments is particularly evident in its cloud computing business. Google Cloud revenue rose 48% in the fourth quarter, the third straight acceleration, driven by strong demand for its Gemini models and tensor processing units (TPUs).
TPUs are custom AI accelerators that serve as an alternative to Nvidia GPUs. Several major AI companies, including OpenAI, Anthropic, and Meta Platforms, have signed deals to use TPUs. While Nvidia still dominates the AI accelerator market, custom silicon is projected to gain share, reaching 24% of total accelerator sales by 2030, up from approximately 12% today, according to Morgan Stanley.
Elsewhere, Alphabet's Waymo is also the leader in autonomous ridesharing, with robotaxis providing public rides in 11 major U.S. metropolitan areas. The company is also testing its vehicles in 20 more cities, including London and Tokyo. Morgan Stanley analysts estimate Waymo will account for 34% of autonomous vehicle trips annually by 2032, which would put it 9 percentage points ahead of second-place Tesla.
Wall Street estimates Alphabet's earnings will increase at 15% annually through 2029. That makes the current valuation of 28 times earnings look reasonable. But analysts have regularly underestimated the company in the past. Alphabet beat the consensus earnings estimate by an average of 15% over the last six quarters.
I think Wall Street is once again underestimating the company, not only because it owns a substantial stake in SpaceX ahead of what promises to be a blockbuster IPO, but also because it has compelling growth prospects in cloud computing and autonomous driving.
Grand View Research estimates cloud spending will grow at 16% annually through 2033, while robotaxi revenue increases at 99% annually over the same period.
Trevor Jennewine has positions in Nvidia and Tesla. The Motley Fool has positions in and recommends Alphabet, Meta Platforms, Nvidia, and Tesla. The Motley Fool has a disclosure policy.

Palantir (PLTR) Stock Tumbles 6% Following Burry's Anthropic Competition Warning - Blockonomi

Street consensus remains Moderate Buy with an average target of $194.61.
The legendary investor from "The Big Short," Michael Burry, publicly challenged Palantir's market position on Wednesday through a post on X, declaring the stock potentially overvalued while highlighting Anthropic's growing dominance in enterprise artificial intelligence. PLTR shares tumbled approximately 6% during regular trading hours following his remarks. After-hours activity showed some recovery as the stock climbed back toward $141.18 with renewed buying interest.
Burry previously revealed a short bet against Palantir in early 2025. His Wednesday commentary escalated his critique, focusing on fundamental shifts in the competitive environment. "Anthropic is eating Palantir's lunch," Burry stated. "That massive boost from $9B to $30B ARR at Anthropic is because Anthropic offers the easier, cheaper, intuitive solution for businesses."
His argument drew support from Ramp data, referencing a March 2026 study by economist Ara Kharazian. The analysis revealed that nearly 25% of Ramp's business customers now subscribe to Anthropic services, up sharply from just 4% twelve months earlier. Burry further emphasized that Anthropic is capturing 73% of incremental enterprise AI expenditures, while the broader AI sector displays zero-sum characteristics, with OpenAI recording its steepest monthly user decline ever.
With a forward price-to-earnings multiple hovering around 115x, Palantir commands a significant premium over its sector median of 21x and towers above comparable large-cap AI competitors. This valuation disparity has consistently fueled bearish arguments.
Benchmark's Yi Fu Lee maintains a neutral stance with a Hold rating. His position reflects concerns that current pricing assumes flawless operational performance, leaving limited room for growth deceleration.
Rosenblatt analyst John McPeake takes the opposing view. He stands by his Buy recommendation and $200 price target, highlighting forthcoming developments such as the "Golden Dome" missile defense initiative. McPeake projects Palantir's involvement in this contract could drive billions in revenue through 2028.
Bank of America's Mariana Perez also retains her Buy stance, characterizing the selloff as a temporary response to news flow. She emphasizes Palantir's entrenched position within critical government data infrastructure as a sustainable competitive moat.
The current analyst consensus registers as Moderate Buy, comprising 14 Buy ratings, 5 Hold ratings, and 2 Sell ratings. The mean price target stands at $194.61 following the selloff, suggesting potential upside of approximately 38% from Wednesday's closing price.
Palantir delivered 70% year-over-year revenue expansion in its latest quarterly results, a metric that bullish investors cite as validation of the company's underlying business strength despite valuation controversies.
Burry isn't the sole prominent skeptic. Short-seller Andrew Left revealed his own short position in Palantir last September, additionally highlighting Databricks as a superior alternative investment.
Since Anthropic remains privately held, investors lack direct mechanisms to capitalize on Burry's competitive thesis, though the downward pressure on PLTR has proven tangible. The official designation of the Maven Smart System represents one of the more concrete near-term positive catalysts currently on the horizon for shareholders.
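
The article cites roughly 38% upside to the $194.61 mean target but does not state Wednesday's closing price. A minimal sketch of how the two figures relate is below. The helper names are illustrative, and the $141.18 input is the after-hours level mentioned in the article, used here only as a comparison point and not as the actual close.

```python
def upside_pct(target: float, price: float) -> float:
    """Percentage gain needed for the price to reach the target."""
    return (target / price - 1) * 100


def implied_price(target: float, upside: float) -> float:
    """Price implied by a target and a stated percentage upside."""
    return target / (1 + upside / 100)


MEAN_TARGET = 194.61   # mean analyst price target quoted in the article
STATED_UPSIDE = 38.0   # "approximately 38%", so the implied price is approximate
AFTER_HOURS = 141.18   # after-hours level quoted in the article, not the close

print(f"Implied closing price: ${implied_price(MEAN_TARGET, STATED_UPSIDE):.2f}")
print(f"Upside from after-hours level: {upside_pct(MEAN_TARGET, AFTER_HOURS):.1f}%")
```

Both results land close to the article's numbers: the implied close is near $141, and the upside measured from the after-hours level is just under 38%.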