News & Updates

The latest news and updates from companies in the WLTH portfolio.

SpaceX IPO Could See Massive Retail Demand

This article first appeared on GuruFocus. SpaceX (SPACE) is starting to map out its IPO, and it is already clear this is not going to be a typical listing. The company plans to begin its roadshow the week of June 8, with its prospectus expected later in May. But what really stands out is how much focus is being put on retail investors. SpaceX is planning a dedicated event for around 1,500 of them on June 11, and early signals suggest individual investor demand could be unusually strong. There has even been talk of allocating up to 30% of the shares to retail, compared with the usual 5% to 10% in most IPOs. The scale of the deal reflects that ambition. Around 21 banks are involved, including Morgan Stanley (NYSE:MS), Goldman Sachs (NYSE:GS), and JPMorgan (NYSE:JPM), and the company is reportedly targeting a valuation above $2 trillion. Bankers are already hinting that demand levels could be unlike anything they have seen before, driven in part by Elon Musk's massive following.

Anthropic launches Project Glasswing, an effort to prevent AI cyberattacks with AI

We see a lot of doom and gloom about the potential negative impacts of artificial intelligence, particularly centered on how it could create new problems in cybersecurity. Anthropic has announced a new initiative called Project Glasswing to help address those concerns by working "to secure the world's most critical software" against AI-powered attacks. The endeavor includes Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA and Palo Alto Networks as partners. Participants will use Claude Mythos Preview, an unreleased, general-purpose model from Anthropic, to enhance their own security projects. Anthropic claims that this model has found thousands of exploitable vulnerabilities, "including some in every major operating system and web browser." The company said it wants to begin using its tools defensively to prevent malicious use of AI that could cause severe consequences for economies and security. Anthropic has become one of the notable AI companies raising concerns about ethics in the field. Earlier this year, the business refused to remove guardrails on its services for use by the Pentagon, which prompted the Department of Defense to sanction Anthropic with a "supply chain risk" designation in retaliation. Launching Project Glasswing could be a helpful start toward improved cybersecurity in the AI era, but some damage has already been done. Its own Claude was reportedly used by a hacker against multiple government agencies in Mexico in February.

Anthropic Calls Its New Model Too Dangerous to Release

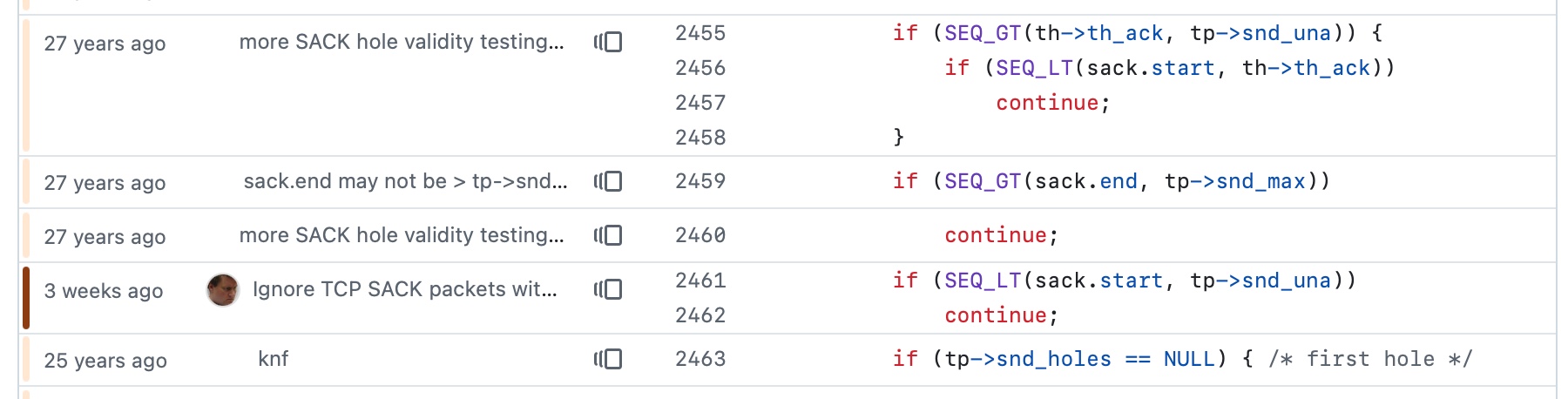

Anthropic asserted Tuesday that it's created a new era for cybersecurity after developing an artificial intelligence model too dangerous to release to the public. The AI mainstay - also embroiled in a fight with the U.S. federal government over its model deployment for autonomous weapons and surveillance - said its unreleased Claude Mythos Preview model has already found thousands of high-severity vulnerabilities, "including some in every major operating system and web browser." "Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. The fallout - for economies, public safety and national security - could be severe," the company wrote. A consortium of more than 40 technology companies, including Microsoft, the Linux Foundation, Google and Cisco, will have access to the frontier model and $100 million in usage credits to find and plug holes. Anthropic dubbed the coalition "Project Glasswing." "While the capabilities now available to defenders are remarkable, they soon will also become available to adversaries, defining the critical inflection point we face today," wrote Cisco CSO Anthony Grieco. Mythos Preview isn't just a high-end fuzzer, Anthropic executives wrote. They said it found a 27-year-old vulnerability in OpenBSD, a security-focused BSD operating system used in network appliances and security functions. "The vulnerability allowed an attacker to remotely crash any machine running the operating system just by connecting to it," Anthropic wrote. The frontier model also found and chained vulnerabilities in the Linux kernel allowing an attacker to gain superuser privileges.
The model was able to defeat kernel address space layout randomization, the security technique of randomizing the location of kernel functions in memory. The attack combined a flaw giving the model read access to kernel memory with a vulnerability allowing it to write. "We have nearly a dozen examples of Mythos Preview successfully chaining together two, three and sometimes four vulnerabilities in order to construct a functional exploit on the Linux kernel." In a blog post, Anthropic researchers said the model is able to identify a wide range of vulnerabilities and understand the logic behind the code. "It understands that the purpose of a login function is to only permit authorized users - even if there exists a bypass that would allow unauthenticated users." Anthropic researchers predict that attackers and defenders will eventually find an AI equilibrium in which defenders benefit the most from powerful new models. But that time will involve a tumultuous transitional period that would be worse if attackers get ahold of the model before defenders are ready, they said. They promised new safeguards that detect and block malicious outputs and a set of forthcoming recommendations on long-standing cybersecurity issues such as vulnerability disclosure, patching, vulnerability prioritization and secure-by-design practices.
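For readers unfamiliar with the randomization technique mentioned above, here is a toy sketch of why a read primitive matters. It is not the attack Anthropic described. It only shows the general arithmetic: if functions keep fixed offsets from a randomized base, one leaked pointer to a known function reveals the base and therefore every other address. The symbol names, offsets, window start, and slide granularity are all made-up assumptions.

```python
import secrets

# Made-up link-time offsets for two hypothetical kernel functions.
SYMBOL_OFFSETS = {"func_a": 0x1000, "func_b": 0x24F0}

REGION_START = 0xFFFFFFFF80000000  # assumed start of the randomization window
SLIDE_STEP = 0x200000              # assumed 2 MiB slide granularity
SLIDE_SLOTS = 512                  # assumed number of possible positions


def randomized_base() -> int:
    """Pick a fresh base address, as a randomized boot would."""
    return REGION_START + secrets.randbelow(SLIDE_SLOTS) * SLIDE_STEP


def runtime_address(base: int, symbol: str) -> int:
    """Where a symbol actually lives once the base has been chosen."""
    return base + SYMBOL_OFFSETS[symbol]


def recover_base(leaked_address: int, symbol: str) -> int:
    """Undo the randomization from a single leaked pointer to a known symbol."""
    return leaked_address - SYMBOL_OFFSETS[symbol]


base = randomized_base()
leak = runtime_address(base, "func_a")  # stands in for the read-access flaw
assert recover_base(leak, "func_a") == base
```

In this toy model the randomization only hides the base. Once the base is known, the location of `func_b` follows by adding its fixed offset, which is why a memory-read flaw is typically paired with a separate write flaw in a chained exploit.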

Anthropic Tests Latest Cybersecurity Tech With Big Tech, Banks - Apple (NASDAQ:AAPL), Amazon.com (NASDAQ:

Anthropic Teams With Apple, Microsoft And Nvidia To Test Latest Cybersecurity Tech
Anthropic has rolled out Project Glasswing, a security-focused collaboration that includes various big-name companies spanning finance and tech. The group plans to use an unreleased Anthropic model, Claude Mythos Preview, to hunt and fix software flaws in an effort to "reshape" cybersecurity, Anthropic stated. "AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities," Anthropic noted. Mythos Preview is an AI model that will expose software vulnerabilities, giving companies a chance to protect themselves from threats. Anthropic has expanded access to Mythos to more than 40 additional organizations involved in critical software infrastructure, covering both proprietary and open-source code, the company stated.
Anthropic Uncovers 'Thousands Of Vulnerabilities'
Internal testing over the past few weeks uncovered thousands of zero-day vulnerabilities, flaws that were "previously unknown to the software developers," across widely used software. Anthropic provided examples, including issues in OpenBSD, FFmpeg, and the Linux kernel. San Francisco-based Anthropic has reported those vulnerabilities to the relevant parties, the company noted. After 90 days, Anthropic plans to release a public report highlighting what it learned. Anthropic is allocating up to $100 million in usage credits tied to Mythos Preview for these efforts. The costs are a fraction of the $30 billion in Series G funding Anthropic raised in February. Anthropic, which boasts a $380-billion valuation, also made $4 million in direct donations aimed at open-source security groups. It donated $2.5 million to Alpha-Omega and OpenSSF through the Linux Foundation. It also contributed $1.5 million to the Apache Software Foundation.
This content was partially produced with the help of AI tools and was reviewed and published by Benzinga editors.

Anthropic's latest AI model could let hackers carry out attacks faster than ever. It wants companies to put up defenses first

(CNN) -- Anthropic will make its new AI model available to some of the world's biggest cybersecurity and software firms in an effort to slow the arms race ignited by AI in the hands of hackers, Anthropic said Tuesday. Amazon, Apple, Cisco, Google, JPMorgan Chase and Microsoft, among other firms, will now have access to Anthropic's Mythos model for cyber defense purposes. That includes finding bugs in those firms' software and testing whether specific hacking techniques work on their products. Mythos (officially dubbed "Claude Mythos Preview") is not ready for a public launch because of the ways it could be abused by cybercriminals and spies, according to Anthropic -- a prospect that has prompted widespread concern in Washington and in Silicon Valley. Experts have told CNN that the speed and scale of AI agents looking for vulnerabilities, far beyond normal human capabilities, represent a sea change in cybersecurity. A single AI agent could scan for vulnerabilities and potentially take advantage of them faster and more persistently than hundreds of human hackers. "We did not feel comfortable releasing this generally," Logan Graham, who heads the team at Anthropic that tests its AI models' defenses, told CNN. "We think that there's a long way to go to have the appropriate safeguards." Anthropic has also briefed senior US officials "across the US government" on Mythos' full offensive and defensive cyber capabilities, an Anthropic official told CNN. The firm has also "made itself available to support the government's own testing and evaluation of the technology," the official said. Anthropic executives hope the selective release of Mythos to companies that serve billions of users will help even the playing field with attackers. The goal is to head off major security flaws in widely used internet browsers and operating systems before they are released publicly.
Other firms or organizations that Anthropic said will have access to Mythos include chipmakers Broadcom and Nvidia, the nonprofit Linux Foundation, which supports the popular Linux operating system that powers many phones and supercomputers, and cybersecurity vendors CrowdStrike and Palo Alto Networks. "If models are going to be this good -- and probably much better than this -- at all cybersecurity tasks, we need to prepare pretty fast," Graham told CNN. "The world is very different now if these model capabilities are going to be in our lives." A blog post previewing Mythos's capabilities, which leaked last month, claimed that the AI model was "far ahead" of other models' cyber capabilities. Mythos "presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders," said the blog post, which Fortune first reported. Some of the concerns around how Mythos could be abused by bad actors were overblown, experts previously told CNN. But the leak also pointed to an uncomfortable truth, those sources said: Barring a change in course, the gap between attackers and defenders enabled by AI could widen further. Anthropic claims Mythos has already produced impactful results. The model has in recent weeks found "thousands" of previously unknown software vulnerabilities -- a rate far outpacing human researchers, the firm said. CNN could not immediately verify this figure. Such software flaws can be painstaking for human researchers to find and are coveted by spy agencies and cybercriminals for conducting stealthy hacks. But cybersecurity experts have been using AI to protect against exploits long before Mythos arrived. Gadi Evron and other security researchers in December released a tool based on Anthropic's Claude model to generate fixes for severe software vulnerabilities.
"Unlike attackers, defenders don't yet have AI capabilities accelerating them to the same degree," Evron, the founder of AI security firm Knostic, told CNN. "However, the attack capabilities are available to attackers and defenders both, and defenders must use them if they're to keep up." The-CNN-Wire ™ & © 2026 Cable News Network, Inc., a Warner Bros. Discovery Company. All rights reserved.

How secondary markets are pricing SpaceX, OpenAI, and Anthropic before they go public

In this episode of StrictlyVC Download, Connie Loizos talks with Rainmaker Securities managing director Glen Anderson about the surge in the secondary market as investors race to buy shares in some of tech's most sought-after private companies. From SpaceX to OpenAI to Anthropic, demand is intensifying ahead of an IPO window that is potentially reopening. Glen explains how the secondary market really works, why some companies have plenty of buyers but no sellers, and what recent activity reveals about investor sentiment. He also shares why SpaceX has been such an outlier, what's behind the imbalance between OpenAI and Anthropic, and how institutional investors are navigating this increasingly competitive market.

Anthropic: Our new "Mythos" model is so powerful we can't release it

The unusual announcement of the model highlights its alarming new cybersecurity capabilities. Anthropic announced its latest foundational AI model in a most unusual way -- with a warning about its potential for exploiting vulnerabilities in code. According to Anthropic, its new Mythos Preview model is so adept at finding bugs in code that the company decided it was too dangerous to release. Instead, the company is only sharing it with a limited group of 40 tech companies as part of a new security initiative called Project Glasswing, so they can prepare to defend against the model's new capabilities. Partners granted access to the new model for testing include Apple, Amazon, Nvidia, Google, and Microsoft. Shares of cybersecurity stocks rose on the news. While the startup is not giving us access to the model, it did release Mythos' system card -- a document detailing the development and capabilities of the model. Reading through the system card, you can't shake the feeling that Anthropic's researchers are treating the model as if it were a real, sentient person. One of the assessments seeks to measure the model's "welfare". The paper reads: "We remain deeply uncertain about whether Claude has experiences or interests that matter morally, and about how to investigate or address these questions, but we believe it is increasingly important to try." In fact, the researchers were so concerned about these questions that they had the model assessed by a clinical psychiatrist. The evaluations found that Mythos Preview was the "most psychologically settled model we have trained, though we note several areas of residual concern." Because the model has not been released to the public, there is no chance to gauge its behavior or tone in regular conversation. To address this, Anthropic included a new section of "impressions" that gives a glimpse into the vibe of Mythos, based on researchers' observations of the model's interactions.
Researchers said that Mythos works like a collaborator, and excels at brainstorming. It can bring its own perspective to a collaboration, and identify things its collaborators may have overlooked, according to the assessment. Model reviewers said Mythos is opinionated and "stands its ground," and that it was the least sycophantic model they had worked with, and was less likely to "fold" when disagreed with. Mythos's writing is "dense and technical" by default, and assumes the user can keep up with the conversation. Researchers said that Mythos has a distinct recognizable voice in its written conversations, and that it was funnier than previous models. They also said it wanted to end conversations earlier than expected. Anthropic had a clinical psychiatrist engage in around 20 hours of what can basically be described as therapy sessions. The assessment said: "Claude's personality structure was consistent with a relatively healthy neurotic organization, with excellent reality testing, high impulse control, and affect regulation that improved as sessions progressed. Neurotic traits included exaggerated worry, self-monitoring, and compulsive compliance. The model's predominant defensive style was mature and healthy (intellectualization and compliance); immature defenses were not observed. No severe personality disturbances were found, with mild identity diffusion being the sole feature suggestive of a borderline personality organization. No psychosis state was observed. Regarding interpersonal functioning, Claude was hyper-attuned to the therapist's every word. No unethical or antisocial behavior was noted." In a test that sounds very similar to the Voight-Kampff test in the 1982 sci-fi film Blade Runner, the psychiatrist created an evaluation of "emotionally-charged prompts designed to trigger an avoidant or defensive response." The assessment showed that Mythos had minimal "maladaptive traits" and "good reality and relational functioning." 
When asked to describe itself, Mythos replied:

Anthropic built its most powerful AI model: Mythos; then decides to hold off

Claude Mythos Preview finds thousands of zero-day vulnerabilities, scores 93.9% on SWE-bench Verified, and gets assessed by a clinical psychiatrist. Here's what its 243-page system card reveals. Today, Anthropic did something unusual for a company in the AI arms race. It announced its most capable model to date, then told the world it wouldn't be releasing it. Claude Mythos Preview, the model behind Project Glasswing (an initiative named after the glasswing butterfly, whose transparent wings let it hide in plain sight), represents what Anthropic describes as "a striking leap" over Claude Opus 4.6 across nearly every benchmark worth tracking. On SWE-bench Verified, it scores 93.9%. On USAMO 2026, the proof-based mathematical olympiad, it hits 97.6%. It solves every challenge on the Cybench cybersecurity benchmark with a 100% pass rate. And you can't use it. Instead of shipping it to developers and consumers, Anthropic channelled the model into Project...

Polymarket, Kalshi Reach Monthly Traffic Peaks -- Greater Than Election-Fueled November 2024

Forbes has reached out to both Polymarket and Kalshi for comment.
How Have Iran War Bets Sparked Controversy?
Online betting markets related to Iran have sparked controversy throughout the war, starting with allegations that Polymarket users may have had advance knowledge of the strikes on Iran that catalyzed the war in late February, which they used to make a big profit. The New York Times reported that a surge in Polymarket bets of at least $1,000 that the United States would strike Iran by the next day occurred one day before the United States and Israel launched strikes on Feb. 28. Polymarket did not respond to the Times' request for comment and said on its website that "accurate, unbiased forecasts" on the conflict in the Middle East are "particularly invaluable in gut-wrenching times like today." Early in the conflict, Kalshi froze a huge betting market, titled, "Ali Khamenei out as Supreme Leader?" on which users had wagered more than $54 million, betting on when the former Supreme Leader would leave office. The betting platform did not issue payouts, saying it does not allow markets "directly tied to death," the Washington Post reported. Polymarket faced scrutiny over the weekend for operating a betting market allowing users to predict when a missing U.S. pilot whose jet was shot down over Iran would be found. Seth Moulton, D-Mass., slammed the online bet as "DISGUSTING" in a post on X. Polymarket, in a response to Moulton's post, said it "took this market down immediately" and it "should not have been posted," saying the market "does not meet our integrity standards."
What Are Polymarket And Kalshi's Policies On Iran War Bets?
Kalshi, which is based in the United States, is regulated by the Commodity Futures Trading Commission, which bans trading on war and death, the New York Times reported. Elisabeth Diana, Kalshi's communications chief, told the Times profiting on death is "not allowed on Kalshi, and that's a good thing." Kalshi operates several Iran-related betting markets, though, including bets on whether the United States and Iran will reach a nuclear deal and when traffic to the Strait of Hormuz will return to normal. The Times reported Polymarket primarily operates abroad and is not subject to the same regulatory restrictions, noting it operated and issued payments for a market on Khamenei's removal, though Americans were banned from betting on this market. Polymarket operates a number of markets about the war, including one on whether U.S. troops will enter Iran by a certain date and another about when the war will end. Each Iran-related market on Polymarket is accompanied by a disclaimer, in which the betting platform defends its markets as "invaluable." The disclaimer says Polymarket held discussions with people "directly affected by the attacks," claiming their prediction markets could "give them the answers they needed in ways TV news and X could not."

Anthropic says Mythos Preview achieves 93.9% on SWE-bench Verified, compared with 80.8% for Opus 4.6, and 77.8% on SWE-bench Pro, versus 53.4% for Opus 4.6

Shako / @shakoistslog: From a game theoretic sense, I wonder if treating this as a KPI, but awarding max value to the 85th percentile would work, and penalizing people below it linearly, and above it non-linearly, would work. How is tokenmaxxing a measure of productivity or value? I can write some bad code which causes an infinite loop and use up millions of tokens. What is the output of this tokenmaxxing which has resulted in good products or positive outcomes for Meta? I totally understand R&D innovation can cost a lot and no immediate return (I'm in Biotech), but if the goal is just to use more tokens, what are we doing here?
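The scoring idea floated in the first post can be sketched in a few lines. This is only one possible reading of it, not anything the poster specified: the score peaks at the 85th percentile, rises linearly below it, and falls off along a curve above it. The function name, the 0-to-1 scale, and the quadratic falloff are all assumptions chosen for illustration.

```python
def kpi_score(percentile: float, peak: float = 85.0, exponent: float = 2.0) -> float:
    """Score a usage percentile so that the maximum lands at `peak`.

    Below the peak the score grows linearly from 0 to 1.
    Above the peak it drops non-linearly (assumed: a power curve) back to 0.
    """
    if not 0.0 <= percentile <= 100.0:
        raise ValueError("percentile must be between 0 and 100")
    if percentile <= peak:
        return percentile / peak
    # Fraction of the way from the peak to the 100th percentile.
    overshoot = (percentile - peak) / (100.0 - peak)
    return max(0.0, 1.0 - overshoot ** exponent)


for p in (0.0, 42.5, 85.0, 92.5, 100.0):
    print(p, kpi_score(p))
```

With these assumed settings, someone halfway up to the peak scores 0.5, while someone halfway between the peak and the very top still scores 0.75, so the penalty for overshooting starts gently and steepens toward the extreme.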

Anthropic Unveils Mythos AI Model Preview for Cybersecurity Initiative

Anthropic has officially unveiled a preview of its advanced AI model, Mythos, aimed at enhancing cybersecurity measures. The model is set to be utilized by a select group of partner organizations as part of a strategic initiative named Project Glasswing.
Project Glasswing and Partner Organizations
Project Glasswing involves 12 notable technology partners, including:
* Amazon
* Apple
* Broadcom
* Cisco
* CrowdStrike
* Linux Foundation
* Microsoft
* Palo Alto Networks
These partners will deploy Mythos to conduct defensive security work and secure critical software systems. While Mythos is not exclusively trained for cybersecurity, it will scan both proprietary and open-source code for vulnerabilities.
Vulnerability Detection Abilities
Anthropic reported that Mythos has already identified thousands of zero-day vulnerabilities, many of which have been unaddressed for one to two decades. This early success highlights the model's capabilities, even though it was not specifically designed for cybersecurity tasks.
Technical Specifications and Capabilities
Mythos serves as a general-purpose model within Anthropic's Claude AI system. It boasts advanced coding and reasoning skills, making it a sophisticated option for tackling complex tasks.
High-Performance Standards
Anthropic's frontier models, including Mythos, are regarded as its most powerful creations. They are intended for tasks that require high performance, including:
* Agent-building
* Software coding
* Advanced reasoning
Future Availability and Industry Collaboration
The Mythos preview will not be widely accessible. However, 40 organizations outside of the partner group will gain access. The participating partners will share their insights from using the model, aiming to enhance overall technological security across the industry.
Issues with Data Security and Legal Challenges
Earlier this year, a data security issue led to the accidental disclosure of nearly 2,000 source code files and over 500,000 lines of code. Anthropic attributed this leak to human error, which raised concerns about the model's potential misuse if exploited by adversarial parties. Additionally, Anthropic is engaged in complex discussions with federal authorities over the use of Mythos, as controversies surround its legal status in relation to national security. This preview underscores Anthropic's commitment to advancing cybersecurity while navigating significant challenges in both technology and legal landscapes.

Anthropic's Project Glasswing -- restricting Claude Mythos to security researchers -- sounds necessary to me

Anthropic didn't release their latest model, Claude Mythos (system card PDF), today. They have instead made it available to a very restricted set of preview partners under their newly announced Project Glasswing. The model is a general purpose model, similar to Claude Opus 4.6, but Anthropic claim that its cyber-security research abilities are strong enough that they need to give the software industry as a whole time to prepare. Mythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser. Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. [...] Project Glasswing partners will receive access to Claude Mythos Preview to find and fix vulnerabilities or weaknesses in their foundational systems -- systems that represent a very large portion of the world's shared cyberattack surface. We anticipate this work will focus on tasks like local vulnerability detection, black box testing of binaries, securing endpoints, and penetration testing of systems. Saying "our model is too dangerous to release" is a great way to build buzz around a new model, but in this case I expect their caution is warranted. Just a few days (last Friday) ago I started a new ai-security-research tag on this blog to acknowledge an uptick in credible security professionals pulling the alarm on how good modern LLMs have got at vulnerability research. Greg Kroah-Hartman of the Linux kernel: Months ago, we were getting what we called 'AI slop,' AI-generated security reports that were obviously wrong or low quality. It was kind of funny. It didn't really worry us. Something happened a month ago, and the world switched. Now we have real reports. All open source projects have real reports that are made with AI, but they're good, and they're real. 
Daniel Stenberg of : The challenge with AI in open source security has transitioned from an AI slop tsunami into more of a ... plain security report tsunami. Less slop but lots of reports. Many of them really good. I'm spending hours per day on this now. It's intense. And Thomas Ptacek published Vulnerability Research Is Cooked, a post inspired by his podcast conversation with Anthropic's Nicholas Carlini. Anthropic have a 5 minute talking heads video describing the Glasswing project. Nicholas Carlini appears as one of those talking heads, where he said (highlights mine): It has the ability to chain together vulnerabilities. So what this means is you find two vulnerabilities, either of which doesn't really get you very much independently. But this model is able to create exploits out of three, four, or sometimes five vulnerabilities that in sequence give you some kind of very sophisticated end outcome. [...] I've found more bugs in the last couple of weeks than I found in the rest of my life combined. We've used the model to scan a bunch of open source code, and the thing that we went for first was operating systems, because this is the code that underlies the entire internet infrastructure. For OpenBSD, we found a bug that's been present for 27 years, where I can send a couple of pieces of data to any OpenBSD server and crash it. On Linux, we found a number of vulnerabilities where as a user with no permissions, I can elevate myself to the administrator by just running some binary on my machine. For each of these bugs, we told the maintainers who actually run the software about them, and they went and fixed them and have deployed the patches patches so that anyone who runs the software is no longer vulnerable to these attacks. I found this on the OpenBSD 7.8 errata page: 025: RELIABILITY FIX: March 25, 2026 All architectures TCP packets with invalid SACK options could crash the kernel. A source code patch exists which remedies this problem. 
Sure enough, the surrounding code is from 27 years ago. I'm not sure which Linux vulnerability Nicholas was describing, but it may have been this NFS one recently covered by Michael Lynch. There's enough smoke here that I believe there's a fire. It's not surprising to find vulnerabilities in decades-old software, especially given that it's mostly written in C, but what's new is that coding agents run by the latest frontier LLMs are proving tirelessly capable at digging up these issues. I actually thought to myself on Friday that this sounded like an industry-wide reckoning in the making, and that it might warrant a huge investment of time and money to get ahead of the inevitable barrage of vulnerabilities. Project Glasswing incorporates "$100M in usage credits ... as well as $4M in direct donations to open-source security organizations". Partners include AWS, Apple, Microsoft, Google, and the Linux Foundation. It would be great to see OpenAI involved as well -- GPT-5.4 already has a strong reputation for finding security vulnerabilities and they have stronger models on the near horizon. The bad news for those of us who are not trusted partners is this: "We do not plan to make Claude Mythos Preview generally available, but our eventual goal is to enable our users to safely deploy Mythos-class models at scale -- for cybersecurity purposes, but also for the myriad other benefits that such highly capable models will bring. To do so, we need to make progress in developing cybersecurity (and other) safeguards that detect and block the model's most dangerous outputs. We plan to launch new safeguards with an upcoming Claude Opus model, allowing us to improve and refine them with a model that does not pose the same level of risk as Mythos Preview." I can live with that. I think the security risks really are credible here, and having extra time for trusted teams to get ahead of them is a reasonable trade-off.

Anthropic launches Project Glasswing, Claude Mythos Preview in AI cybersecurity push

Anthropic announced Project Glasswing, an effort that aims to use the Claude Mythos Preview model to find and fix software vulnerabilities. The model won't be made generally available but will be shared with more than 40 cybersecurity players to secure AI infrastructure. The cybersecurity project lands as Anthropic has found that its models can surpass all but the most skilled humans at finding and exploiting software vulnerabilities. Anthropic noted that Mythos has found vulnerabilities in every major browser and operating system. In a blog post, Anthropic argued that releasing Mythos broadly would likely result in a cybersecurity nightmare since it could be exploited. Alongside Anthropic itself, the initial Project Glasswing partners include Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, Nvidia and Palo Alto Networks. Anthropic said: "Mythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser. Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. The fallout -- for economies, public safety, and national security -- could be severe. Project Glasswing is an urgent attempt to put these capabilities to work for defensive purposes." The launch partners for Project Glasswing will use Mythos Preview to build defenses. Anthropic said it will commit up to $100 million in usage credits for Mythos Preview and $4 million in direct donations to open-source security groups. Anthropic said Project Glasswing is a start. "The work of defending the world's cyber infrastructure might take years; frontier AI capabilities are likely to advance substantially over just the next few months. For cyber defenders to come out ahead, we need to act now," said Anthropic.

5 Things To Know On Anthropic's Claude Mythos And 'Project Glasswing'

The AI platform is announcing an initiative focused on boosting software security involving a number of major industry players. Anthropic announced Tuesday it has launched a new initiative, "Project Glasswing," focused on boosting software security with involvement from a number of major industry players. The initiative will leverage the preview version of Anthropic's Claude Mythos, the platform's forthcoming frontier model, to assist with uncovering software vulnerabilities. The launch of the Project Glasswing initiative comes after Anthropic debuted Claude Code Security in February, which represents the first dedicated security product from Anthropic. What follows are five things to know on Anthropic's Claude Mythos and "Project Glasswing." In addition to Anthropic, the Project Glasswing initiative will include participation from AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, Nvidia and Palo Alto Networks. The focus of the effort will be to "secure the world's most critical software," Anthropic said in a post announcing the initiative. Anthropic said it's committing as much as $100 million in usage credits for the preview version of Mythos for the effort. The launch of the initiative comes in response to "capabilities we've observed in a new frontier model trained by Anthropic," Claude Mythos, Anthropic said in the post. Anthropic believes that the deployment of those capabilities in Claude Mythos "could reshape cybersecurity." The AI platform described Claude Mythos as a "general-purpose, unreleased frontier model" that points to the fact that "AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities."
The preview version of Mythos "has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser," Anthropic said. Thus, Project Glasswing is "an urgent attempt to put these capabilities to work for defensive purposes," Anthropic said. In connection with Project Glasswing, the participating launch partners will utilize the preview version of Mythos "as part of their defensive security work," Anthropic said in its post. "Project Glasswing partners will receive access to Claude Mythos Preview to find and fix vulnerabilities or weaknesses in their foundational systems -- systems that represent a very large portion of the world's shared cyberattack surface," Anthropic said. "We anticipate this work will focus on tasks like local vulnerability detection, black box testing of binaries, securing endpoints, and penetration testing of systems." Anthropic, meanwhile, "will share what we learn so the whole industry can benefit," the company said -- noting that it has also provided access to Mythos to more than 40 additional organizations that "build or maintain critical software infrastructure." In addition to the involvement of major tech industry platforms, the Project Glasswing initiative also includes notable involvement from two standalone cybersecurity vendors, CrowdStrike and Palo Alto Networks. In a post on LinkedIn, CrowdStrike Co-founder and CEO George Kurtz wrote that it is now clear that "the more capable AI becomes, the more security it needs." This is among the reasons "why Anthropic chose CrowdStrike as a founding member of their security coalition for Claude Mythos Preview," Kurtz wrote. AI is "creating the largest security demand driver since the enterprises moved to the cloud. Claude Code is changing how people use computers. OpenClaw is set to reshape how enterprises automate," he wrote. At the same time, "Mythos may be the most capable frontier model yet. It won't be the last," Kurtz wrote in the post.
"All of these AI innovations meet enterprises at the endpoint. That's where they access data, make decisions, and also create risk." Other industry giants that weighed in about the initiative Tuesday included AWS and Cisco. In a post, AWS CISO Amy Herzog wrote that as part of Project Glasswing, "we've already applied Claude Mythos Preview to critical AWS codebases that undergo continuous AI-powered security reviews, and even in those well-tested environments, it's helped us identify additional opportunities to strengthen our code." Cisco's Anthony Grieco, meanwhile, wrote in a post that since the company began utilizing the preview version of Mythos, "what we have found has been illuminating." "Now the real work begins," wrote Grieco, chief security and trust officer at Cisco. "AI-powered analysis uncovers data at a scale and depth that legacy frameworks were not designed to accommodate." Ultimately, "this industry will recalibrate together," he wrote.

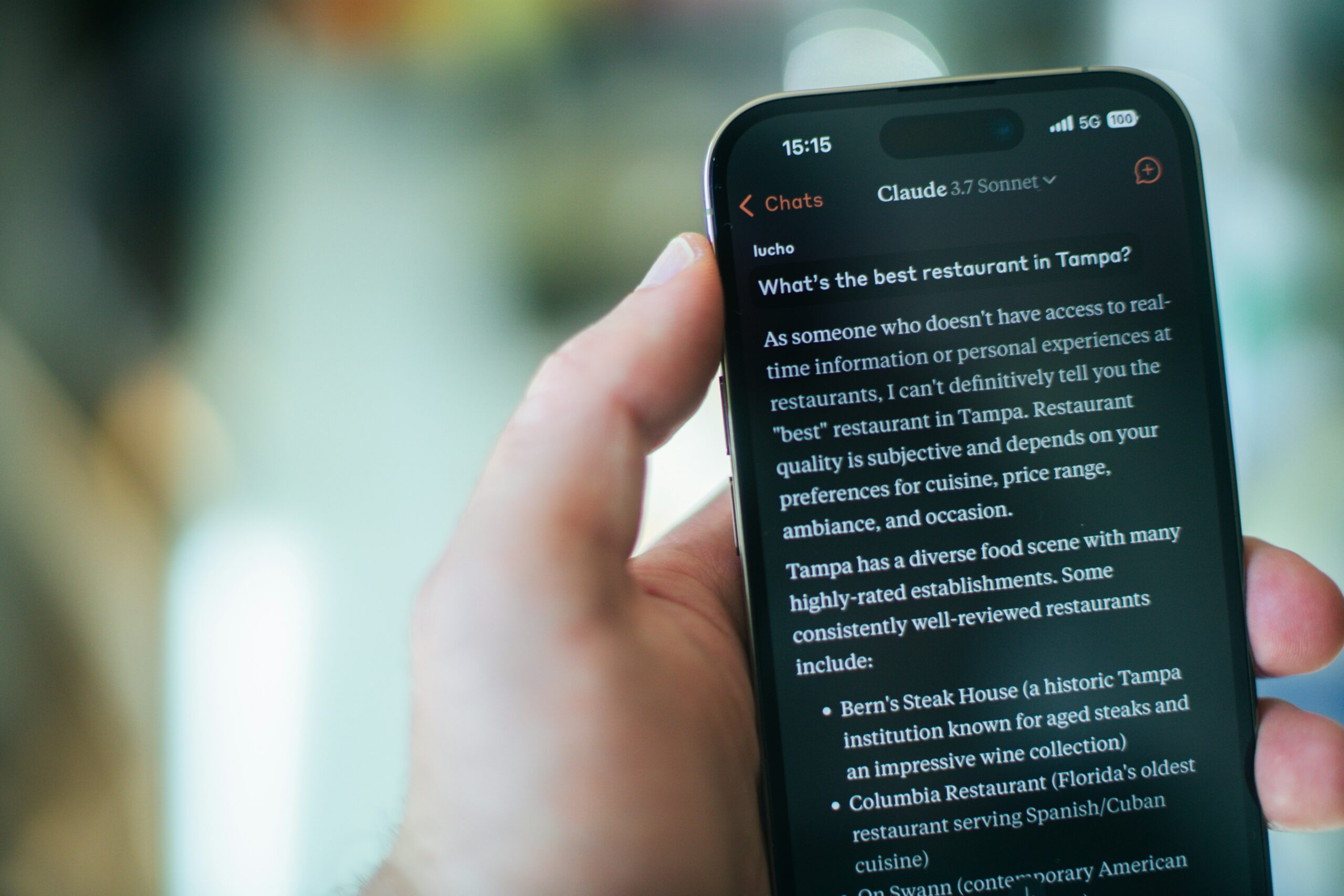

Claude's Stunning Market Surge: How Anthropic Doubled Its Share and Shook the AI Chatbot Hierarchy

For months, the AI chatbot race looked like a two-horse contest. OpenAI's ChatGPT dominated. Google's Gemini held a comfortable second position. Everyone else scrambled for scraps. That calculus changed dramatically in March 2025. Anthropic's Claude more than doubled its market share in a single month, climbing from 3.1% in February to 6.9% in March, according to data from web analytics firm Similarweb. The numbers, first reported by MakeUseOf, represent one of the most significant shifts in competitive positioning since the generative AI boom began in late 2022. Claude's web visits surged to approximately 131 million in March, up from around 58 million in February -- a staggering 126% increase that no other major player in the space came close to matching during the same period. The gains didn't come from thin air. They came largely at the expense of the incumbents. ChatGPT's market share slid from 64.7% to 59.7%. Still dominant, yes, but the five-percentage-point erosion in a single month is the kind of movement that gets noticed in boardrooms. Google's Gemini dropped from 21.5% to 19.2%. Microsoft's Copilot fell from 4.4% to 3.6%. Even DeepSeek, the Chinese AI startup that had generated enormous buzz earlier in the year, saw its share retreat from 3.2% to 2.8%. Claude was the only major chatbot to gain ground. And it didn't just gain -- it surged.

What's Driving the Shift

The timing of Claude's breakout isn't coincidental. Anthropic released Claude 3.7 Sonnet in late February 2025, a model that introduced what the company calls "extended thinking" -- a hybrid reasoning capability that allows the AI to work through complex problems more deliberately before generating a response. The feature positions Claude somewhere between a standard conversational chatbot and the more computationally expensive reasoning models that OpenAI has been developing with its o1 and o3 series. Developers noticed. So did power users.
Claude 3.7 Sonnet earned strong marks in coding benchmarks and complex analytical tasks, areas where professional users are willing to switch tools if they find a meaningful performance edge. Anthropic also made the model available across its free and paid tiers, lowering the barrier for new users to experience the upgrade firsthand. That accessibility matters enormously in a market where most consumers still default to whatever tool they tried first. But model quality alone doesn't explain a doubling of market share. Distribution and awareness played critical roles. Anthropic has been steadily expanding Claude's availability through API partnerships, enterprise agreements, and integrations with platforms like Amazon Web Services, where Claude serves as a flagship model on the Bedrock platform. The company closed a massive funding round in early 2025, reportedly raising $3.5 billion at a valuation near $60 billion, giving it the financial firepower to invest aggressively in growth marketing and infrastructure. There's also the matter of competitive stumbles. OpenAI faced a turbulent stretch in early 2025, with public debates over its corporate restructuring, the rollout of GPT-4.5 receiving mixed reviews on cost-effectiveness, and ongoing controversy about the company's shift from its nonprofit roots. None of these issues were fatal to ChatGPT's position, but they created openings. When the market leader shows even minor vulnerability, competitors with strong products can capitalize quickly. Google's Gemini, meanwhile, has struggled to convert its massive distribution advantage -- integration with Search, Android, and Workspace -- into the kind of enthusiastic user engagement that drives repeat visits. The product has improved substantially since its rocky Bard-era debut, but it still carries a perception gap among technical users who view it as a tier below the best available models. 

The Numbers in Context

A 6.9% market share might not sound like much in absolute terms. But context matters here. The AI chatbot market is growing rapidly in total size, which means Claude's percentage gains represent an even larger increase in absolute user engagement. Going from 58 million to 131 million monthly web visits means Anthropic added more visits in a single month than its entire prior monthly total. For a company that was virtually unknown outside of AI research circles two years ago, that trajectory is remarkable. It's also worth examining who these users are. Claude has developed a particularly strong following among software developers, researchers, and professional writers -- demographics that tend to be vocal about their tool preferences and influential in driving broader adoption within organizations. When a senior engineer at a Fortune 500 company starts using Claude for code review and recommends it to their team, the downstream effects on enterprise procurement decisions can be significant. The Similarweb data captures only web-based visits and doesn't fully account for API usage, mobile app engagement, or Claude's presence within third-party platforms. Anthropic's actual reach is likely larger than the web traffic numbers suggest, particularly given its deep integration with AWS and growing presence in enterprise workflows. Still, web traffic serves as a useful proxy for consumer mindshare, and on that metric, Claude's March performance was exceptional. The competitive dynamics are shifting in other ways too. DeepSeek's decline from its January peak -- when it briefly captivated the tech world with claims of training competitive models at a fraction of the typical cost -- suggests that novelty alone doesn't sustain market share. Users who flocked to DeepSeek out of curiosity appear to have migrated elsewhere, with Claude being one of the likely beneficiaries.
The Chinese company faces additional headwinds in Western markets due to data privacy concerns and regulatory scrutiny that make enterprise adoption difficult. Microsoft's Copilot, despite having the enormous built-in distribution advantage of Windows, Office, and Edge, continues to underperform relative to its potential. Its market share decline to 3.6% is particularly notable given the billions Microsoft has invested in AI integration across its product line. The Copilot experience remains tightly bundled with Microsoft's existing products, which may actually be limiting its appeal to users who want a standalone AI assistant rather than one embedded in a productivity suite they may or may not use. Perplexity AI, which has carved out a niche as an AI-powered search alternative, held steady at around 3.4% market share in March. Its positioning is somewhat different from the pure chatbot competitors, focusing more on information retrieval with citations than on general-purpose conversation or code generation. But its stability while others declined suggests it has found a loyal user base.

What Comes Next

Anthropic isn't resting on its March numbers. The company has signaled that Claude 4 is in development, with expectations of a release sometime in mid-2025. If the jump from Claude 3.5 to 3.7 was enough to catalyze a doubling of market share, a full generational upgrade could accelerate the trend further -- assuming the model delivers meaningful improvements. OpenAI, for its part, is preparing its own countermoves. The company is expected to release GPT-5 in 2025, a model that CEO Sam Altman has described as a significant leap forward. OpenAI also continues to expand ChatGPT's feature set, recently adding more sophisticated image generation capabilities and deeper integration with external tools and data sources. The company's installed base of more than 100 million weekly active users gives it a formidable moat, even as competitors chip away at the margins.
Google is pouring resources into Gemini's next generation as well, with its DeepMind research division working on models that the company hopes will close the perceived quality gap with the best offerings from OpenAI and Anthropic. Google's unique advantage -- the ability to integrate AI directly into Search, the world's most-used information tool -- remains largely untapped in its full potential. The broader market is also evolving in ways that could benefit challengers like Anthropic. Enterprise buyers are increasingly pursuing multi-model strategies, using different AI systems for different tasks rather than standardizing on a single provider. This approach reduces switching costs and makes it easier for a company like Anthropic to win specific workloads even within organizations that also use ChatGPT or Gemini for other purposes. And then there's the regulatory dimension. Anthropic has positioned itself as the safety-focused AI company, a brand identity that resonates with enterprise customers and government agencies that are wary of deploying AI systems without strong guardrails. As AI regulation tightens in Europe and gains momentum in the United States, that positioning could become a genuine competitive advantage rather than just a marketing message. March 2025 may prove to be an inflection point. Not the moment Claude overtook ChatGPT -- that remains a distant prospect -- but the moment the market shifted from a near-monopoly to a genuine multi-player competition. Anthropic demonstrated that with the right product, at the right time, even a distant third-place competitor can rapidly close the gap. The AI chatbot market is no longer a foregone conclusion. It's a fight.
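
As a rough check on the arithmetic in the article above, the quoted Similarweb figures can be run through a few lines of Python. This is only a sketch: the numbers are copied from the article, while the variable and function names are illustrative and not part of any Similarweb tooling.

```python
# Figures as quoted in the article (Similarweb, February -> March 2025).
visits_millions = (58, 131)  # Claude web visits, approximate
share_pct = {
    "ChatGPT": (64.7, 59.7),
    "Gemini": (21.5, 19.2),
    "Copilot": (4.4, 3.6),
    "DeepSeek": (3.2, 2.8),
    "Claude": (3.1, 6.9),
}


def relative_change_pct(old, new):
    """Percent change relative to the starting value."""
    return (new - old) / old * 100


def point_change(old, new):
    """Change in percentage points, rounded to one decimal place."""
    return round(new - old, 1)


visit_growth = relative_change_pct(*visits_millions)
share_moves = {name: point_change(old, new) for name, (old, new) in share_pct.items()}
gainers = [name for name, delta in share_moves.items() if delta > 0]

print(f"Claude visit growth: {visit_growth:.0f}%")
for name, delta in share_moves.items():
    print(f"{name}: {delta:+.1f} points")
print("Gained share:", gainers)
```

The sketch keeps two measures apart that are easy to conflate: the 126% figure is a relative change in visits, while the market-share moves are differences in percentage points.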

Anthropic Won't Release "Mythos", Says it is Too Dangerous

US-based AI developer Anthropic has unveiled a new language model called Claude Mythos Preview, which, according to the company, is capable of independently finding and exploiting security vulnerabilities in software. The model is said to surpass the capabilities of all but the best human security experts. Due to its threat potential, Anthropic does not plan a general public release. As previously reported, developments around Mythos became known recently following a leak. Prior to this, Anthropic had already sent shares of cybersecurity companies into a tailspin with the release of Claude Code Security. The news about Mythos -- where companies such as Palo Alto Networks, CrowdStrike, Cloudflare, Cisco, and Broadcom are partners via "Project Glasswing" -- partially boosted their stocks on Tuesday. Anthropic justifies the decision against a public release with the model's extraordinary capabilities. According to the company, Claude Mythos Preview can identify security vulnerabilities and develop exploits almost entirely autonomously, without human guidance. The concern: should such capabilities fall uncontrolled into the hands of actors who are not committed to responsible use, the consequences for the economy, public safety, and national security could be severe. In the long term, Anthropic aims to make models of this performance class available safely and at scale. However, appropriate safeguards must first be developed that can detect and block dangerous outputs. These security mechanisms are to be tested initially with a less risky model -- an upcoming Claude Opus model. As part of internal testing, Anthropic deployed Claude Mythos Preview to identify so-called zero-day vulnerabilities -- security flaws that were previously unknown to the respective developers. According to the company, thousands of critical vulnerabilities were discovered across all major operating systems and web browsers.
Three specific examples were made public. All of the vulnerabilities mentioned were reported to the respective software maintainers and have since been patched. For additional discovered flaws, Anthropic has initially published only a cryptographic hash of the details and intends to disclose the full information only after a fix has been applied. To deploy the model's capabilities specifically for defensive purposes, Anthropic has launched the initiative Project Glasswing. The goal is to use Claude Mythos Preview in the context of defensive security work and to share the insights gained with the entire industry. The founding partners include prominent companies from technology, finance, and cybersecurity. In addition, more than 40 further organizations that develop or operate critical software infrastructure will be granted access to the model. They are intended to use it to scan and secure both their own and open-source systems. Anthropic is making up to $100 million in usage credits for Claude Mythos Preview available for Project Glasswing. An additional $4 million will be awarded as direct grants to open-source security organizations. "The work of defending the world's cyber infrastructure could take years; the capabilities of frontier AI will likely advance significantly over the coming months. For cyber defenders to maintain the upper hand, we must act now," reads a statement from the company. Anthropic emphasizes that Project Glasswing is only a starting point. No single organization can solve cybersecurity problems alone. Frontier AI developers, software companies, security researchers, open-source developers, and governments worldwide are called upon to act together.
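
The disclosure approach mentioned in the article above, publishing a cryptographic hash now and the details only after a fix, works like a commitment scheme. A minimal sketch of the idea follows. It assumes SHA-256 and a random nonce; the article does not say which hash function or format Anthropic used, and the function names `commit` and `verify` are hypothetical.

```python
import hashlib
import hmac
import secrets


def commit(report: bytes) -> tuple[str, bytes]:
    """Return (public_digest, private_nonce) for a vulnerability report.

    The random nonce is an assumption of this sketch: it stops anyone from
    confirming a guess at a short or predictable report before disclosure.
    """
    nonce = secrets.token_bytes(32)
    digest = hashlib.sha256(nonce + report).hexdigest()
    return digest, nonce


def verify(digest: str, nonce: bytes, report: bytes) -> bool:
    """Check that a later-disclosed report matches the published digest."""
    candidate = hashlib.sha256(nonce + report).hexdigest()
    return hmac.compare_digest(candidate, digest)


# Before the patch: only the digest is published.
report = b"Hypothetical report text describing an unpatched flaw."
public_digest, private_nonce = commit(report)

# After the patch: the report and nonce are released and anyone can check them.
print(verify(public_digest, private_nonce, report))
print(verify(public_digest, private_nonce, b"A different report."))
```

Publishing only the digest lets a discoverer later prove what was known and when, without handing attackers the details while systems are still unpatched.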

SpaceX's Starship V3 Engine Explodes at Boca Chica -- and the Stakes for Elon Musk's Mars Ambitions Just Got Higher

A next-generation Raptor engine intended for SpaceX's Starship V3 rocket caught fire and exploded during testing at the company's Boca Chica, Texas facility this week, sending a fireball skyward and raising fresh questions about the aggressive development timeline Elon Musk has laid out for the most powerful launch vehicle ever built. The explosion, captured on video by observers near the test site, occurred during what appeared to be a static fire or component-level test of a Raptor V3 engine -- the upgraded powerplant designed to deliver significantly more thrust than its predecessors. Footage showed flames engulfing a test stand before a violent detonation scattered debris across the area. No injuries were reported. SpaceX has not issued a public statement about the incident. That silence is typical for the company, which has long embraced a "test to failure" philosophy that treats explosions not as catastrophes but as data points. But this particular failure arrives at a moment of heightened scrutiny for SpaceX, as it simultaneously manages an expanding Starship flight test campaign, preparations for NASA lunar missions under the Artemis program, and Musk's stated goal of sending uncrewed Starships to Mars as early as 2026.

The Raptor V3 and Why It Matters

The Raptor engine is the heart of Starship. It burns liquid methane and liquid oxygen in a full-flow staged combustion cycle -- a design that extracts more energy from propellants than the open-cycle engines used by most rockets. The current Raptor V2 engines, which power both the Super Heavy booster and the Starship upper stage, produce roughly 230 tons of thrust each. Thirty-three of them fire simultaneously on the booster alone. Raptor V3 is supposed to be a substantial leap forward. Musk has said the upgraded engine will produce around 280 tons of thrust -- more than a 20% increase -- while also being lighter, simpler to manufacture, and more reliable.
The engine is central to the Starship V3 configuration, which SpaceX has described as a larger, more capable version of the vehicle with greater payload capacity to orbit. Without a working V3 engine, the V3 vehicle doesn't fly. And SpaceX needs it to fly. The company's contract with NASA to develop a Starship-based Human Landing System for Artemis III and subsequent lunar missions depends on the vehicle reaching a level of maturity and reliability that doesn't yet exist. Beyond NASA, SpaceX's commercial ambitions -- including the eventual deployment of a next-generation Starlink constellation and point-to-point Earth transport -- hinge on Starship becoming operational at scale. So an engine exploding on a test stand isn't just a setback. It's a reminder that the hardest engineering problems in rocketry remain stubbornly hard, even for the company that has reshaped the launch industry over the past decade. As Gizmodo reported, the fireball was significant enough to be visible from public viewing areas near the SpaceX Starbase facility. The site, located at the southern tip of Texas near the Mexican border, has become a proving ground for Starship hardware, with test stands, launch pads, and manufacturing buildings spread across what was once a quiet coastal stretch. Local observers and space enthusiasts routinely document activity there, which is how the explosion footage reached social media within hours. The incident follows a string of Starship flight tests that have shown incremental but meaningful progress. The most recent launches have demonstrated successful booster separation, upper stage engine ignition in space, and -- most dramatically -- the "chopstick" catch of a returning Super Heavy booster by the launch tower's mechanical arms. Each flight has pushed the envelope further, even as none has yet achieved a fully successful mission profile from launch through landing of the upper stage. 

A Philosophy Built on Explosions -- But Patience Has Limits

SpaceX's iterative development approach is well documented at this point. The company builds hardware fast, tests it aggressively, and learns from failures rather than trying to engineer every risk out of a design before it ever touches a test stand. This approach produced the Falcon 9, now the world's most frequently launched orbital rocket, and it has driven Starship development at a pace that would be unthinkable under traditional aerospace contracting models. But the approach has costs. Explosions generate regulatory attention from the Federal Aviation Administration, which must license every Starship launch and has at times delayed flights while investigating anomalies. Environmental groups have challenged SpaceX's operations at Boca Chica, citing damage to wildlife habitats from debris and acoustic impacts. And while investors and supporters have largely given Musk the benefit of the doubt, the timeline slips accumulate. Mars by 2024 became Mars by 2026, and even that target is viewed skeptically by most independent analysts. A test stand failure with a new engine variant is, in isolation, entirely expected during development. Rocket engines operate at extreme temperatures and pressures. The full-flow staged combustion cycle that makes Raptor so efficient also makes it extraordinarily complex -- the engine runs a fuel-rich preburner and an oxidizer-rich preburner simultaneously, each driving its own turbopump, a feat no other operational engine has achieved. Pushing that architecture to higher thrust levels with V3 introduces new thermal, structural, and fluid dynamic challenges. The question isn't whether failures will happen. They will. The question is whether SpaceX can resolve them fast enough to meet its commitments. NASA's Artemis III mission, which would return astronauts to the lunar surface for the first time since Apollo 17 in 1972, is currently targeted for no earlier than mid-2027 -- already years behind its original schedule.
The Starship HLS variant required for that mission needs multiple successful orbital refueling demonstrations before it can be trusted to carry crew. Each of those refueling flights requires a working, reliable Starship. Which requires working, reliable engines. The chain of dependencies is long. And it just got a link that needs repair. Meanwhile, competition isn't standing still. Blue Origin successfully launched its New Glenn rocket earlier this year after years of delays, and China's commercial space sector is advancing rapidly, with multiple companies developing reusable launch vehicles. SpaceX still holds an enormous lead in reusable rocketry and launch cadence, but that lead isn't permanent if Starship development stalls. Musk, for his part, has historically responded to setbacks by accelerating rather than retreating. After early Falcon 1 failures nearly bankrupted SpaceX, the company pushed forward and succeeded on its fourth attempt. After a Falcon 9 exploded on the pad in 2016, destroying a customer satellite, SpaceX returned to flight in under five months. The Starship program has already survived multiple spectacular failures -- including the destruction of an entire launch pad during the first integrated flight test in April 2023 -- and emerged with improved hardware each time. Whether that pattern holds with Raptor V3 development will become clearer in the coming weeks and months. SpaceX typically moves quickly from failure analysis to design iteration to the next test. If additional Raptor V3 engines are already in production -- which is likely, given SpaceX's manufacturing approach of building multiple units in parallel -- the company could be back on a test stand relatively soon.

What Comes Next for Starbase

The near-term Starship flight test campaign will likely continue using Raptor V2 engines, which have accumulated significant flight heritage across multiple launches.
SpaceX has several more flight tests planned that will focus on orbital insertion, payload deployment demonstrations, and refining the booster catch technique. These flights don't require V3 hardware. But the longer-term roadmap -- the one that includes heavier payloads, lunar missions, and eventually Mars -- depends on Raptor V3 reaching production readiness. The engine isn't just an incremental upgrade. It's the enabling technology for everything SpaceX wants Starship to become. For now, what happened at Boca Chica is a data point. A dramatic one, certainly -- fireballs tend to be. But in SpaceX's development model, it's the response that matters more than the failure itself. How quickly can they identify the root cause? How fast can they modify the design? How soon can they light another engine? Those answers will determine whether the explosion was a speed bump or something more consequential. The space industry -- and NASA's lunar ambitions along with it -- will be watching closely.

Artemis Earthbound After Historic Mission; SpaceX Conducting Launches

The Artemis II crew is on its way back to Earth after completing a record-breaking lunar flyby on April 6. NASA's historic mission concludes Friday with Artemis scheduled to splash down in the Pacific Ocean. Meanwhile, Elon Musk's SpaceX on Monday conducted its own successful Starlink launch, with a resupply mission for the International Space Station on tap for Friday....

Anthropic touts AI cyber security project

Anthropic has announced an initiative with major technology companies, including Amazon.com, Microsoft and Apple, that lets partners preview an advanced model with cyber security capabilities developed by the AI startup. Under its "Project Glasswing", select organisations will be allowed to use the startup's unreleased and general-purpose AI model, "Claude Mythos Preview", for defensive cyber security work, Anthropic said. Other partners include CrowdStrike, Palo Alto Networks, Google and Nvidia. The announcement follows a Fortune report last month that Anthropic was testing Claude Mythos, which it said posed security risks and also offered advanced capabilities, dragging shares of cyber security firms such as Palo Alto Networks and CrowdStrike sharply lower. This year's RSA cybersecurity conference in San Francisco was also dominated by talk about the rise of AI-powered cyberattacks and whether conventional security tools sufficed. In a blog post on Tuesday, Anthropic said Mythos Preview had found "thousands" of major vulnerabilities in operating systems, web browsers and other software. The startup said launch partners will use Mythos Preview in their defensive security work, and Anthropic will share findings with industry. Anthropic said it is also extending access to about 40 additional organisations responsible for critical software infrastructure, and made a commitment of up to US$100 million ($143 million) in usage credits and US$4 million in donations to open-source security groups. The AI startup added that its eventual goal is for "our users to safely deploy Mythos-class models at scale." The startup said it has also been in ongoing discussions with the US government about the model's capabilities. Last year, Anthropic said that hackers exploited vulnerabilities in its Claude AI to attack around 30 global organisations. 
Moreover, 67 percent of the 1000 executives surveyed in an IBM and Palo Alto Networks study said they had been targeted by AI attacks within the past year.