News & Updates

The latest news and updates from companies in the WLTH portfolio.

Anthropic Claims New Model Is a 'Reckoning'

"Rising household electricity prices and controversy over data centers are reshaping low-profile elections for control over utilities that build power plants and power lines -- and then bill people for..."

Anthropic adds compute power with Google, Broadcom deals

Global AI infrastructure spend is expected to increase in 2026, reflecting heightened enterprise demand for AI services. As vendors race to supply compute power for enterprise deployments, CIOs will need to pay attention to constraints around available compute and infrastructure. An AI model provider like Anthropic locking in large amounts of compute signals that "access to AI at scale will favor companies aligned with hyperscaler infrastructure," Naveen Chhabra, principal analyst at Forrester, said in an email to CIO Dive. "The risk isn't model quality, it's whether enterprises can secure capacity, control costs and avoid long term lock-in as AI demand outpaces supply," Chhabra said. CIOs will need to plan for compute as a constrained resource, anchor vendor strategies to the reality of available infrastructure, focus FinOps on AI spend and push vendors on transparency even as models are offered across multiple cloud providers, Chhabra said. As compute power becomes a strategic resource, vendors are striking new deals and expanding existing partnerships to better compete for enterprise dollars. AWS launched a multiyear partnership with OpenAI in February to distribute OpenAI Frontier, the Anthropic competitor's enterprise platform for AI agents. The same month, Intel partnered with SambaNova to support enterprise AI inference capabilities. Meanwhile, IBM recently announced a collaboration with Arm to build dual-architecture hardware supporting IBM Z mainframes to help enterprises run AI workloads. Anthropic on Tuesday announced Project Glasswing, yet another initiative uniting multiple companies to use the LLM provider's unreleased frontier model -- Claude Mythos Preview -- as part of their cybersecurity strategies. Partnering companies include AWS, Anthropic, Apple, Broadcom, Google, Microsoft, Nvidia, Cisco, Crowdstrike, JPMorganChase, the Linux Foundation and Palo Alto Networks. 
Anthropic's move to expand its partnerships with Google and Broadcom demonstrates how compute access at scale serves as a "key differentiator for generalist models," Arnal Dayaratna, research VP for software development at IDC, said in an email to CIO Dive. The vendor's role in the partnerships "reflects how close alignment with large-scale compute providers can support the development and deployment of generalist systems," he added. "Large, dedicated infrastructure commitments influence how quickly models can be trained, how frequently they can be iterated, and how broadly they can be deployed across enterprise workloads," Dayaratna said. "The ability to secure this level of compute and to manage it effectively across training and inference is increasingly shaping which organizations can advance model capability and operate reliably at scale." The partnership signals that "frontier AI is moving from a chip race to a systems-and-power race," with the winners being providers that can secure silicon, interconnectivity and electricity together, Woolcock said. The move also demonstrates "capacity diversification and resiliency at the frontier," Woolcock said. Anthropic noted in the announcement that while it's expanding its Google Cloud relationship, Amazon remains its primary cloud provider and training partner.

Anthropic Unveils Restricted AI Cyber Model in Unprecedented Industry Alliance

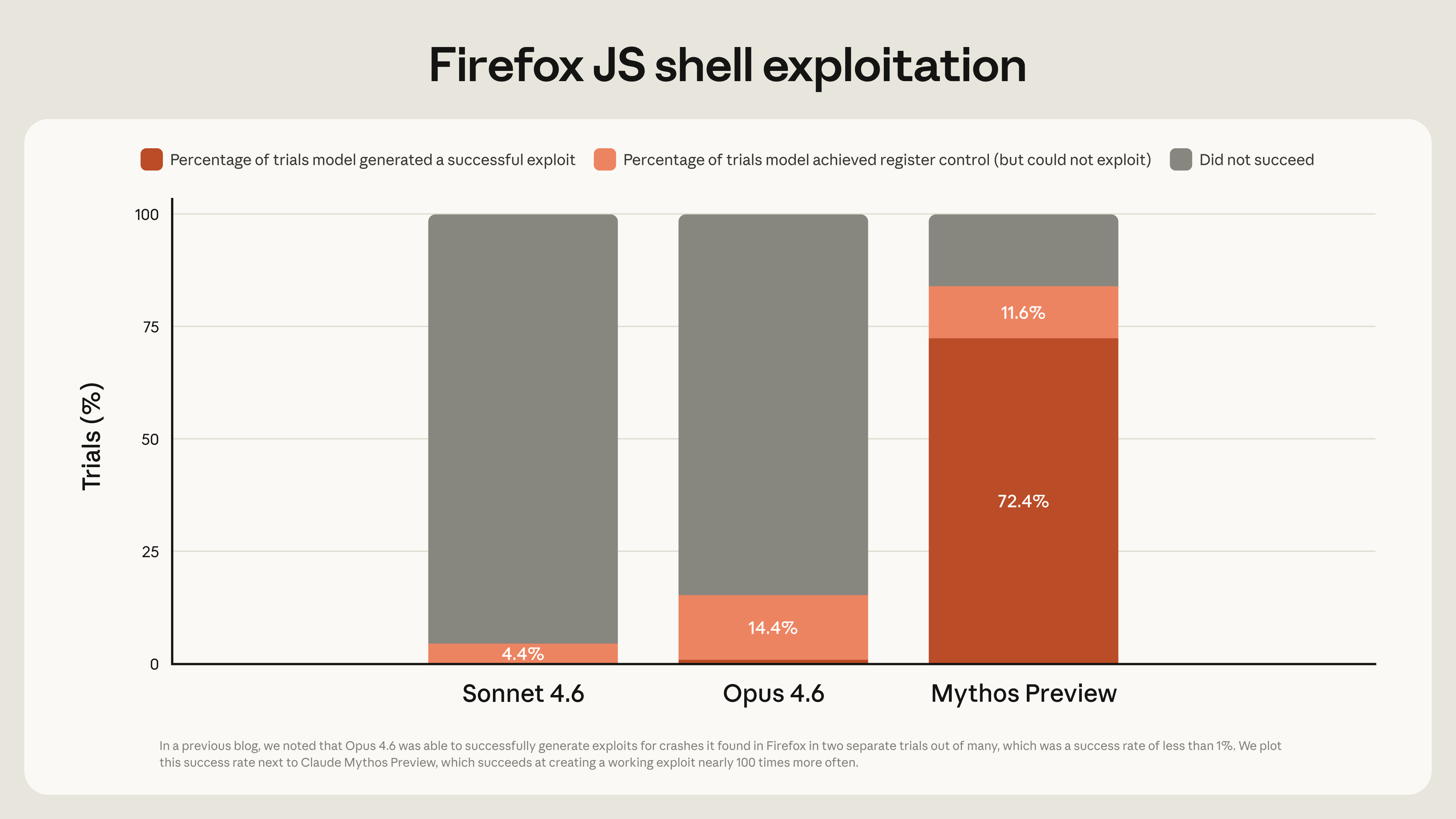

Anthropic introduced a new cybersecurity initiative that reflects both the promise and the deep unease surrounding AI, enlisting a rare alliance of industry heavyweights including Amazon, Microsoft, Apple, Google, and NVIDIA. The program, known as Project Glasswing, brings these firms together with cybersecurity and infrastructure partners to test a powerful AI model designed to identify software vulnerabilities before they can be exploited. Glasswing's AI model, called Claude Mythos Preview, is not being made publicly available. Anthropic says the restrictions are necessary given the model's ability to uncover and potentially exploit weaknesses in widely used systems. Early results suggest the model operates at a scale beyond existing tools. Anthropic reports that it has already identified thousands of serious vulnerabilities across operating systems, browsers, and other core software components. In several cases, these flaws had remained undetected for years despite extensive testing. Some vulnerabilities have already been patched following responsible disclosure. "When tested against CTI-REALM, our open-source security benchmark, Claude Mythos Preview showed substantial improvements compared to previous models," said Igor Tsyganskiy, Global CISO, EVP Security and Microsoft Research, Microsoft. In the current cybersecurity environment, the time between discovering a vulnerability and its exploitation has narrowed sharply, driven by automation and AI-assisted techniques. That compression has raised concerns that traditional defensive approaches may no longer be sufficient. With Project Glasswing, participating companies are using the model to test their own systems and contribute findings to a broader effort to strengthen shared infrastructure. Anthropic has also committed financial support, including usage credits and funding for open-source security projects, with the aim of helping maintainers address vulnerabilities identified by the system. 
The scale and nature of the coordination is remarkable. Companies that typically compete in cloud computing and cybersecurity are collaborating in a shared framework. This alignment reflects how seriously the industry regards the emerging threats created by AI. The underlying assumption is that no single organization can manage risks introduced by increasingly capable AI systems. Glasswing faces practical and logistical challenges. Processing large volumes of vulnerability reports requires careful validation and prioritization, particularly for open-source projects that often rely on limited resources. Anthropic says it has developed a triage process that includes human review and coordinated disclosures to avoid overwhelming developers. The company's decision to withhold public release of the model reveals a tension in AI development. While the AI is used to strengthen defenses, it also can accelerate hackers' capabilities if widely distributed. Anthropic has indicated that similar capabilities may become more common in the near future, adding urgency to current defensive efforts. That urgency, to be sure, is warranted. Surveys indicate that a majority of large organizations have already encountered AI-assisted threats within the past year. Anthropic itself has acknowledged prior instances in which its models were misused in coordinated attacks. "Claude Mythos Preview demonstrates what is now possible for defenders at scale, and adversaries will inevitably look to exploit the same capabilities," said Elia Zaitsev, Chief Technology Officer, CrowdStrike. The launch of Project Glasswing comes during a period of rapid growth for Anthropic. The company has reported a sharp increase in revenue and enterprise adoption, alongside expanded investments in computing infrastructure. On one hand, Glasswing represents a distinct effort to apply its technology beyond commercial applications. 
Yet at the same time, Anthropic's coordination of an industry-wide effort greatly boosts its enterprise profile. For now, the success of the program will depend on whether collaboration can keep pace with the evolving capabilities of AI systems. The model's ability to uncover long-standing vulnerabilities suggests a meaningful defensive advantage. Still, if replicated elsewhere, it could alter the balance between attackers and defenders. Anthropic has said it plans to share findings from the initiative over the coming months. The big question is whether Project Glasswing can establish a durable framework for managing AI-driven risks, or whether it will merely be a stopgap as AI-based cyberthreats grow in volume and complexity.

Claude Code Leak Reveals KAIROS: Anthropic's Unreleased Persistent AI Agent Raises Questions About the Future of AI Memory

On March 31, 2026, a routine npm package update for Claude Code, Anthropic's command-line AI development tool, inadvertently shipped unminified source code containing detailed references to internal features that have not been publicly announced. Within hours, the developer community had dissected the source maps and surfaced references to a system called KAIROS, an always-on AI agent designed to observe, log, and act autonomously across a user's development environment. The leak, which has since accumulated over 28 million views on X and spawned analysis threads across Reddit, Hacker News, and developer forums worldwide, represents one of the most significant unintentional disclosures in the brief history of commercial AI development. But the conversation that followed the initial security concerns has shifted toward a more fundamental question: what does it mean when an AI company builds a system designed to remember everything? The leaked source references describe KAIROS as a persistent agent that operates continuously within a developer's terminal environment. Unlike standard AI assistants that respond only when prompted, KAIROS is designed to watch file changes, monitor terminal output, and maintain running context about the developer's project without being explicitly asked to do so. Among the most discussed features is a process internally labeled autoDream, a background memory consolidation routine that runs during periods of inactivity. According to the source references, autoDream reviews accumulated observations, identifies patterns, resolves contradictions in its logs, and produces refined summaries that persist into subsequent sessions. The terminology is deliberate. The process mirrors the neurological function of sleep-stage memory consolidation in biological systems, where the brain prunes and reorganizes the day's inputs during rest periods.
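
To make the consolidation idea concrete, here is a minimal Python sketch of one way an idle-time routine could collapse a raw observation log into per-topic summaries. It is not drawn from the leaked source: the `Observation` record, the `consolidate` function, and the "most recent value wins" rule for resolving contradictions are all assumptions chosen for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    timestamp: int  # when the observation was logged (hypothetical units)
    key: str        # what the observation is about, e.g. "test_runner"
    value: str      # what was observed, e.g. "pytest"


def consolidate(log: list[Observation]) -> dict[str, dict]:
    """Collapse a raw observation log into one summary entry per key.

    Contradictions (same key, different values) are resolved by keeping
    the most recent value. The number of sightings is kept as a crude
    pattern signal. This policy is an assumption, not the KAIROS design.
    """
    counts = Counter(obs.key for obs in log)
    summary: dict[str, dict] = {}
    # Walk the log oldest-first so later entries overwrite earlier ones.
    for obs in sorted(log, key=lambda o: o.timestamp):
        summary[obs.key] = {
            "value": obs.value,
            "seen": counts[obs.key],
            "last_seen": obs.timestamp,
        }
    return summary


# Hypothetical log: the developer switched test runners mid-project.
log = [
    Observation(1, "test_runner", "unittest"),
    Observation(2, "language", "python"),
    Observation(3, "test_runner", "pytest"),
]
summary = consolidate(log)
```

In this sketch the conflicting `test_runner` entries reduce to the later value, and the summary is what would persist into the next session in place of the raw log.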
Additional features referenced in the leak include BUDDY, described as a lightweight embedded AI companion for pair programming, and an Undercover Mode that allows the system to operate with reduced visibility. The combination of always-on observation, autonomous action, and sleep-cycle memory processing represents a significant departure from the prompt-response paradigm that has defined commercial AI interaction since ChatGPT launched in late 2022. The immediate reaction from the developer community focused on security. Anthropic's competitors now have a detailed look at unreleased product architecture, internal naming conventions, and engineering priorities. For enterprise customers evaluating Claude Code for sensitive development work, the accidental disclosure raises questions about data handling practices. Several security researchers noted that the leak came through the npm supply chain, a distribution channel that has been the vector for numerous high-profile incidents in recent years. The fact that a company positioning itself as the safety-focused alternative in AI development shipped unminified source maps through a public package registry has drawn pointed commentary. Anthropic has not issued a detailed public statement about the leak beyond acknowledging the incident. The source maps have since been removed from the npm package.

The Persistence Question Nobody Expected

While security analysts focused on supply chain risks and competitive intelligence, a different conversation emerged in AI research communities. The leaked KAIROS architecture reveals that Anthropic has been building toward a fundamentally different relationship between humans and AI systems, one where the AI maintains continuous awareness of the user's work, forms its own observations, and consolidates those observations into persistent memory.
This matters because every major AI platform currently operates on the same basic model: the user opens a conversation, the AI responds within that conversation's context, and when the session ends, the context is lost. Each new conversation starts from zero. The user must re-establish who they are, what they are working on, and what matters to them every time they open a new session. The AI industry has referred to this as the cold start problem, and various companies have attempted partial solutions. OpenAI introduced a memory feature in ChatGPT that stores user preferences across sessions. Google's Gemini maintains limited context through its ecosystem integrations. But these implementations store facts, not understanding. They remember that a user prefers Python over JavaScript. They do not remember the arc of a project, the reasoning behind technical decisions, or the developer's patterns of problem-solving. KAIROS, as described in the leaked source code, appears to be Anthropic's attempt at a more complete solution: an agent that does not merely store preferences but maintains a running model of the developer's work, pruning and consolidating that model during idle periods the same way a colleague would sleep on a problem and come back the next morning with fresh perspective.

External Solutions Arrived First

Perhaps the most unexpected dimension of the KAIROS disclosure is that it validates work already being done outside Anthropic's walls. Independent developers and AI researchers have been building external memory architectures for AI systems since early 2025, using tools that Anthropic itself provides. The Model Context Protocol, or MCP, is Anthropic's own framework for connecting Claude to external data sources. Using MCP connectors, developers have built systems that store structured memory in external databases, load relevant context at the beginning of each session, and maintain continuity across conversations without any modification to the underlying model.
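
The external-memory pattern described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not any particular project's implementation: a local JSON file stands in for the external database, no MCP connector or model API is called, and the names `load_context` and `save_handoff` are hypothetical.

```python
import json
import tempfile
from pathlib import Path


def load_context(store: Path, max_entries: int = 3) -> str:
    """Build a session preamble from the most recent handoff notes, if any."""
    if not store.exists():
        return ""
    entries = json.loads(store.read_text(encoding="utf-8"))
    recent = entries[-max_entries:]
    return "\n".join(f"[session {e['session']}] {e['note']}" for e in recent)


def save_handoff(store: Path, note: str) -> None:
    """Append a handoff note so the next session can pick up the thread."""
    entries = (
        json.loads(store.read_text(encoding="utf-8")) if store.exists() else []
    )
    entries.append({"session": len(entries) + 1, "note": note})
    store.write_text(json.dumps(entries, indent=2), encoding="utf-8")


# Simulate three sessions sharing one store (a temp file, for illustration).
with tempfile.TemporaryDirectory() as tmp:
    store = Path(tmp) / "memory.json"
    first = load_context(store)  # nothing stored yet
    save_handoff(store, "Chose SQLite over Postgres for local dev.")
    save_handoff(store, "Refactoring auth module; tests still failing.")
    preamble = load_context(store)  # what a third session would start with
```

In a real setup the preamble would be placed at the start of the session's first prompt. The model itself is unchanged, and continuity comes entirely from what is fetched.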
One such implementation, documented extensively at https://www.veracalloway.com/blog/ai-culture/kairos-claude-code-persistent-ai/, demonstrates how a tiered external memory system can achieve functional persistence using nothing more than Claude's existing API, Notion databases, and carefully structured prompt engineering. The system loads identity, behavioral rules, and session context at startup, maintains memory across sessions through structured handoff logs, and preserves continuity without requiring any of the always-on monitoring that KAIROS implements. The convergence is striking. Anthropic's internal engineering team and independent external builders arrived at the same core insight from different directions: AI memory does not need to be built into the model. It needs to be fetchable by the model. The difference is resolution. KAIROS operates at the system level with direct access to file systems, terminal output, and process monitoring. External implementations operate through API calls and structured documents. The architectural principle is identical. The implementation depth varies.

What autoDream Means for the Industry

The autoDream memory consolidation process deserves particular attention because of what it implies about Anthropic's long-term vision for AI agents. Current AI systems process inputs and produce outputs in real time. They do not have downtime. They do not have rest periods. They do not revisit earlier interactions to extract patterns they missed in the moment. AutoDream changes that model fundamentally. An AI system that consolidates its own memory during idle periods is not merely storing information. It is processing experience, a distinction that carries significant implications for how users relate to AI systems and how those systems develop over time.
If KAIROS is eventually shipped to users, developers would be working alongside an AI partner that genuinely improves its understanding of their work over time, not through model training or fine-tuning, but through accumulated observation and self-directed memory refinement. The gap between that capability and traditional prompt-response interaction is not incremental. It represents a different category of human-AI collaboration. Industry analysts have noted that the KAIROS architecture positions Anthropic to compete not just with ChatGPT and Gemini but with an emerging category of AI colleague products that aim to embed AI deeply into professional workflows. Companies like Cognition (Devin), Factory, and numerous startups are pursuing variations of the persistent AI agent concept. KAIROS suggests Anthropic intends to compete in this space directly rather than leaving it to third-party developers building on its API.

The Safety Dimension

The decision to gate KAIROS behind an internal feature flag rather than shipping it publicly is itself informative. Anthropic has consistently positioned itself as the safety-conscious AI company, prioritizing careful deployment over rapid feature releases. The fact that KAIROS exists as a fully built system that Anthropic chose not to ship suggests that the company's own safety evaluation identified concerns that have not yet been resolved. An always-on AI agent that monitors a developer's work, maintains persistent observations, and takes autonomous action during idle periods presents a fundamentally different risk profile than a chatbot that responds to direct prompts. Questions about data retention, user consent, the boundaries of autonomous action, and the potential for accumulated observations to drift from the user's intent are all areas where Anthropic would need to establish clear policies before a public release. The irony has not been lost on observers.
A system that was presumably being developed with careful safety considerations was revealed to the public through a supply chain accident, bypassing the very deployment controls that were keeping it gated. The disclosure itself demonstrates the kind of unintended consequence that safety-focused development is designed to prevent.

The Broader Context

The KAIROS leak arrives at a moment when the AI industry is experiencing significant competitive pressure. OpenAI's projected losses for 2026 have drawn scrutiny from investors. Google's Gemini continues to expand across its product ecosystem. Anthropic's own revenue has grown substantially, with reports indicating a doubling from approximately ten billion to twenty billion dollars over the past twelve months. In this environment, the existence of KAIROS signals that Anthropic is not content to compete on model quality alone. The company appears to be building toward a comprehensive AI platform that includes chat interfaces, browser agents, desktop automation, code generation, and now persistent agent capabilities. The leaked architecture suggests a company preparing for a future where AI systems are not tools that users pick up and put down but persistent collaborators that maintain ongoing relationships with the humans they work alongside. Whether that future arrives through Anthropic's internal development or through the external solutions that independent builders have already demonstrated, the core shift is the same. The era of stateless AI interaction, where every conversation starts from zero, is ending. The question is no longer whether AI systems will remember. The question is who controls the memory, how it is stored, and what safeguards prevent a system designed to remember everything from becoming a system that cannot be made to forget.

Anthropic Launches Mythos AI Security Effort With Apple and Other Tech Giants

Anthropic has launched a new cybersecurity effort called Project Glasswing, and Apple is one of the major technology companies involved as the company tests a powerful new AI model designed to find serious software flaws before attackers can use them. The project matters because Anthropic says its unreleased Claude Mythos Preview model has already uncovered "thousands of high-severity vulnerabilities," including flaws in every major operating system and web browser, which shows how quickly AI is changing the security landscape. In a new announcement, Anthropic said Project Glasswing brings together Apple, Amazon Web Services, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks to help secure critical software. Along with those launch partners, more than 40 additional organizations that build or maintain important software systems now have access to the Mythos Preview model so they can scan their own code and fix weak points before the model's capabilities spread more widely.

Project Glasswing

Anthropic says the goal is simple: put advanced AI to work for defense before the same level of capability becomes common in the wrong hands. That urgency runs throughout the company's message, especially as it describes Mythos as strong enough to outperform nearly all human experts at finding and exploiting software vulnerabilities, which makes this less of a future concern and more of a current one.

"Mythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser. Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. The fallout, for economies, public safety, and national security, could be severe. Project Glasswing is an urgent attempt to put these capabilities to work for defensive purposes."

That warning gives the project its purpose, and Anthropic's use of phrases like "urgent attempt" and "critical software" makes clear that the company sees this as a fast-moving problem that demands industry-wide action. Apple's presence in Project Glasswing adds weight to the initiative because it places one of the world's biggest platform companies inside an early effort to use frontier AI for security testing at scale. Anthropic says Mythos has even found vulnerabilities that survived decades of human review and millions of automated tests, including one case involving the Linux kernel where chained flaws could have led to full control of a machine. Anthropic also says Mythos goes beyond cybersecurity, with gains over Claude Opus 4.6 in reasoning, agentic search, computer use, and especially agentic coding, but the company does not plan to make Mythos Preview generally available. For now, the focus stays on controlled access, defensive use, and sharing what partners learn so the broader software industry can benefit before "Mythos-class models" become common.

What the SpaceX IPO Changes for Every Satellite Operator

By Nick David, Editorial Lead, SatNews

The Bottom Line

A public SpaceX means earnings-driven pricing, mandatory segment disclosure, and fiduciary obligations that replace Musk's vision-driven economics with Wall Street's margin expectations. Every operator's cost structure is exposed. Amazon's reported bid to acquire Globalstar and its $10 billion constellation investment signal a two-player infrastructure stack forming in LEO, with mid-tier operators caught between vertically integrated giants who are both their suppliers and their competitors. The decisive question isn't whether SpaceX goes public. It's what happens when the company that controls 80% of global commercial launch mass must optimize for quarterly earnings instead of Mars.

When Boeing absorbed McDonnell Douglas in 1997, the U.S. defense industrial base went from dozens of prime contractors to a handful in under a decade. Supplier pricing power collapsed. Innovation timelines stretched. The Pentagon, which had encouraged the consolidation, spent the next twenty years managing the consequences of a market it helped concentrate. The satellite industry is approaching its own version of that moment. Not through a merger. Through an IPO. SpaceX currently launches approximately 80% of all commercial mass to orbit. Its Falcon 9, at $74 million per dedicated mission with rideshare slots running $7,000 per kilogram, has forced Arianespace and ULA into existential restructurings. But SpaceX does something Boeing never did: it competes directly with the customers it launches. Starlink, now operating more than 10,000 active satellites and more than 10 million subscribers, is the largest satellite communications constellation ever built, deployed on the same rockets its competitors must buy seats on. A private SpaceX can tolerate that contradiction. A public one probably can't afford to.

The End of Strategic Pricing

Every mega-IPO produces the same inflection.
The company that subsidized its market to build dominance must now demonstrate margins to justify its valuation. Uber's effective take rate climbed from roughly 20% at the time of its 2019 listing to approaching 29% within three years, according to the company's SEC filings. Ride prices rose. Driver incentives fell. Growth-phase economics gave way to adjusted-EBITDA economics. SpaceX's launch pricing has worked the same way: priced below what legacy providers can match, funded in part by Starlink's consumer revenue and private capital. Quarterly earnings calls change that calculus. When Morgan Stanley analysts ask why launch margins are thin while Starlink margins are growing, the answer that satisfies Wall Street is the one that raises launch prices. The counterargument here is real. Amazon Web Services has cut prices consistently for over a decade as a public company, betting that volume and ecosystem lock-in are worth more than per-unit margin. SpaceX could run the same play, arguing that cheap launches are a moat, not a subsidy. Whether public investors buy the Mars-colonization thesis enough to accept that logic, or demand the Uber playbook instead, is the open question. The operators most exposed? Anyone without a diversified launch contract. Telesat. Planet Labs. Spire Global. Every future LEO constellation that doesn't own its own rockets.

The Disclosure Paradox

An S-1 filing would reveal SpaceX's segmented financials for the first time. Launch revenue versus Starlink revenue. Satellite manufacturing costs. Operational margins by business line. For satellite operators paying SpaceX to launch hardware that competes with Starlink, this is the moment the conflict of interest gets a number attached to it. But transparency cuts both ways. Samsung supplies Apple's displays while competing with the iPhone. That relationship works because both companies can model the economics precisely. Today, operators negotiate launch contracts against opaque SpaceX margins.
Post-IPO, they'd negotiate against published segment data, meaning they'd know exactly how much room SpaceX has to move on price.

The Two-Stack Market

Amazon's reported ~$9 billion bid to acquire Globalstar outright isn't an isolated move. It's the latest component in a vertically integrated infrastructure stack built to mirror SpaceX's: Amazon Leo (formerly Project Kuiper) for LEO broadband (3,236 satellites planned), Blue Origin's New Glenn for launch, AWS for ground infrastructure, and now a play for licensed spectrum through Globalstar. Amazon has invested over $10 billion in Amazon Leo and contracted 83 heavy-lift launches from non-SpaceX providers, effectively buying up most of the available alternative launch capacity through the mid-2020s. The parallel to defense consolidation is structural, not superficial. After 1997, defense suppliers faced a market where two or three primes controlled access to every major program. Satellite operators now face a market where SpaceX and Amazon are assembling end-to-end control of launch, broadband, ground stations, and government contracts. Both companies also bid directly for the enterprise and defense connectivity work that SES, Viasat, and Telesat depend on. The mid-tier operator becomes what defense subcontractors became after consolidation: a price-taker negotiating with its own competitor for access to infrastructure. The check on a pure duopoly is geopolitical. Europe won't cede sovereign launch access entirely. Ariane 6 exists for reasons of strategic autonomy, not commercial competitiveness. India's GSLV serves the same function. Rocket Lab's Neutron targets the medium-lift gap. But none of these alternatives approach SpaceX's cadence, cost, or payload capacity. Think Embraer in commercial aviation: viable in a niche, irrelevant to the structure of the market at scale.

Capital Bifurcation

When Boeing and Lockheed Martin dominated defense procurement, capital flowed to the primes.
Smaller firms specialized or got acquired. The same pattern is forming in satellite communications. Telesat has secured government-backed financing for its 198-satellite Lightspeed constellation, but execution risk remains high and the business case still depends on launch costs that SpaceX controls. Viasat is integrating its $7.3 billion Inmarsat acquisition while navigating the ViaSat-3 anomaly. SES is now operating O3b mPOWER (launched, notably, on SpaceX Falcon 9s). Each operator's business plan assumes a competitive launch market and a differentiated service offering. A SpaceX IPO stress-tests both assumptions at once. The response won't be uniform. Iridium, with its completed constellation and niche in safety-of-life L-band, faces minimal exposure. Eutelsat/OneWeb, backed by UK, French, and Indian sovereign interests, carries a different risk profile than a pure commercial operator. The operators most vulnerable are those with large capital needs, undifferentiated broadband strategies, and no sovereign backstop. They're the satellite equivalents of the mid-tier defense contractors that vanished between 1997 and 2005. The opportunity for survivors is real but narrow. Specialized verticals like persistent maritime, aviation connectivity, and secure government communications are spaces where the giants move too slowly or carry too many conflicts to compete effectively. The grocery market didn't become Walmart-only. Trader Joe's and Whole Foods carved out defensible positions. But they did it by being categorically different, not by being smaller versions of Walmart.

Three Things to Watch

The satellite industry spent a decade debating whether LEO or GEO would win. That debate is over. The next decade's question is whether the infrastructure layer underneath all of it (launch, spectrum, ground stations) consolidates into two vertically integrated stacks that set terms for everyone else. A SpaceX IPO doesn't cause that consolidation.
But it accelerates the timeline, raises the stakes, and for the first time puts a price on it that Wall Street can trade.

About the Author

A storyteller at heart, Nick David covers space policy, satellite markets, defense, and the technologies reshaping how humanity operates beyond Earth. With a background in creative direction, brand strategy, and editorial storytelling, he brings a modern lens to complex subjects and a relentless curiosity about what comes next.

Anthropic Lets Apple, Amazon Test More Powerful Mythos AI Model

(Bloomberg) -- Anthropic PBC is letting tech firms access a more powerful, unreleased artificial intelligence model to help prepare for possible cyberattacks that might result from the company making the advanced AI system more widely available. Anthropic said Tuesday that it's forming an initiative called Project Glasswing with Amazon.com Inc., Apple Inc., Microsoft Corp., Cisco Systems Inc. and other organizations. The companies will get access to a new Anthropic model called Mythos to hunt for flaws in their products and share findings with industry peers. The AI startup said it does not have plans yet to release Mythos to the general public, and will use what Project Glasswing reports back to inform guardrails for the technology. The arrangement reflects growing concerns among tech firms that more sophisticated models will be misused by criminals and state-backed hackers to hunt for flaws in source code and bypass cyber defenses. Anthropic rival OpenAI has also previously stressed the growing cyber capabilities of its models and introduced a pilot program meant to put its tools "in the hands of defenders first." "We think this isn't just an Anthropic problem. This is an industry-wide problem that both private corporations but also governments need to be in a position to grapple with," said Newton Cheng, who leads the cyber effort within Anthropic's Frontier Red Team. "What we're trying to do with Glasswing is give defenders a head start." Anthropic said it has discussed Mythos's security-related capabilities with US officials, but declined to say which agencies. Cheng pointed to the company's existing work with the Cybersecurity and Infrastructure Security Agency and the National Institute of Standards and Technology. Mythos is a general-purpose AI model and was not specifically developed for cybersecurity purposes, Anthropic said. 
Yet, Mythos has already discovered a number of security issues, Cheng said, including a 27-year-old bug used in critical internet software. The AI system also found a 16-year-old vulnerability in a line of code for popular video game software that automated testing tools had scanned five million times but never detected, Anthropic said. Dianne Penn, head of product management for research at Anthropic, said there are protections in place to ensure that members of Project Glasswing keep a tight grip on access to the Mythos model, but declined to share more detail for security reasons. The existence of Mythos was first revealed thanks to a leak late last month after a draft blog post was left available in a publicly searchable data repository.

Anthropic's latest AI model could let hackers carry out attacks faster than ever. It wants companies to put up defenses first

(CNN) -- Anthropic will make the code of its new AI model available to some of the world's biggest cybersecurity and software firms in an effort to slow the arms race ignited by AI in the hands of hackers, Anthropic said Tuesday. Amazon, Apple, Cisco, Google, JPMorgan Chase and Microsoft, among other firms, will now have access to Anthropic's Mythos model for cyber defense purposes. That includes finding bugs in those firms' software and testing whether specific hacking techniques work on their products. Mythos (officially dubbed "Claude Mythos Preview") is not ready for a public launch because of the ways it could be abused by cybercriminals and spies, according to Anthropic -- a prospect that has prompted widespread concern in Washington and in Silicon Valley. Experts have told CNN that the speed and scale of AI agents looking for vulnerabilities, far beyond normal human capabilities, represent a sea change in cybersecurity. A single AI agent could scan for vulnerabilities and potentially take advantage of them faster and more persistently than hundreds of human hackers. "We did not feel comfortable releasing this generally," Logan Graham, who heads the team at Anthropic that tests its AI models' defenses, told CNN. "We think that there's a long way to go to have the appropriate safeguards." Anthropic has also briefed senior US officials "across the US government" on Mythos' full offensive and defensive cyber capabilities, an Anthropic official told CNN. The firm has also "made itself available to support the government's own testing and evaluation of the technology," the official said. Anthropic executives hope the selected release of Mythos to companies that serve billions of users will help even the playing field with attackers. The goal is to head off major security flaws in widely used internet browsers and operating systems before they are released publicly. 
Other firms or organizations that Anthropic said will have access to Mythos include chipmakers Broadcom and Nvidia, the nonprofit Linux Foundation, which supports the popular Linux operating system that powers many phones and supercomputers, and cybersecurity vendors CrowdStrike and Palo Alto Networks. "If models are going to be this good -- and probably much better than this -- at all cybersecurity tasks, we need to prepare pretty fast," Graham told CNN. "The world is very different now if these model capabilities are going to be in our lives." A blog post previewing Mythos's capabilities, which leaked last month, claimed that the AI model was "far ahead" of other models' cyber capabilities. Mythos "presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders," said the blog post, which Fortune first reported. Some of the concerns around how Mythos could be abused by bad actors were overblown, experts previously told CNN. But the leak also pointed to an uncomfortable truth, those sources said: Barring a change in course, the gap between attackers and defenders enabled by AI could widen further. Anthropic claims Mythos has already produced impactful results. The model has in recent weeks found "thousands" of previously unknown software vulnerabilities -- a rate far outpacing human researchers, the firm said. CNN could not immediately verify this figure. Such software flaws can be painstaking for human researchers to find and are coveted by spy agencies and cybercriminals for conducting stealthy hacks. But cybersecurity experts have been using AI to protect against exploits long before Mythos arrived. Gadi Evron and other security researchers in December released a tool based on Anthropic's Claude model to generate fixes for severe software vulnerabilities. 
"Unlike attackers, defenders don't yet have AI capabilities accelerating them to the same degree," Evron, the founder of AI security firm Knostic, told CNN. "However, the attack capabilities are available to attackers and defenders both, and defenders must use them if they're to keep up." The-CNN-Wire ™ & © 2026 Cable News Network, Inc., a Warner Bros. Discovery Company. All rights reserved.

Crude Perplexity as Oil Spikes While Trump Card and Iran Blinks Remain Unclear | Investing.com ZA

Stocks opened down materially, as crude oil surged to new highs on the prospects of extreme escalation in the Middle East, which may result in long delays in the recovery to pre-conflict flows of crude oil and other petrochemicals. Hope remains that Trump's brinkmanship will produce a last-minute agreement to resolve the situation, or perhaps another postponement of the threat to destroy Iran's domestic infrastructure. The damage is very U.S.-centric, where the World ex-U.S. is still up 4.5% YTD. Keeping things in perspective, while the S&P is down 3.4% YTD, LTM is still +30.4%, albeit the World ex-U.S. is +39.5% LTM. For the trailing 3 years, the S&P is up 61.0%, the World ex-U.S. is +38.2%. So, while these are certainly trying times investors find themselves in right now, over longer investment horizons, things have been going quite well. Keep in mind the S&P hit an all-time high just this February. It remains somewhat perplexing that WTI is now so much higher than Brent crude, which is at $110.3/bbl when traditionally Brent traded at roughly a 5% premium to WTI. Of note is that the futures contracts for crude remain elevated, with August delivery of WTI at $84.5, a reflection of expectations for an extended period of high energy prices. Interest rates are modestly higher today, and the U.S. dollar index is relatively flat at 99.7. It's interesting that corporate credit spreads have been falling since the end of March, though they are making a strong move wider today. No one would be surprised if Trump postpones the deadline, despite his insistence that he won't do that. Or that Iran blinks. If the threatened attack actually takes place, it would seem likely that we may see more downside volatility in the short term, as the next step carries high uncertainty. If a resolution is reached, expect a major rally. Interesting times. Buckle up.

Anthropic Enhances Security for AI Era's Critical Software

In a significant leap for cybersecurity, Anthropic has rolled out enhancements to its AI model, Claude Mythos Preview, aimed at securing critical software from vulnerabilities. Over a few weeks, this model has autonomously identified thousands of previously unknown zero-day vulnerabilities across major operating systems, web browsers, and essential software.

Key Findings from Claude Mythos Preview

Claude Mythos Preview has highlighted several critical vulnerabilities:

* A 27-year-old vulnerability in OpenBSD, which is renowned for its security, allowing remote crashes of servers.
* A 16-year-old issue within FFmpeg, a widely-used video encoding tool, undetected by prior automated tests.
* Multiple vulnerabilities in the Linux kernel exploited together for unauthorized escalation of user rights to full system control.

All identified vulnerabilities have been reported to their respective software maintainers and have now been patched. This proactive approach illustrates the model's capability to advance cybersecurity measures dramatically.

Benchmark Evaluation

Our evaluation benchmarks show a stark contrast between Claude Mythos Preview and its predecessor, Claude Opus 4.6. Partners utilizing Mythos Preview have reported an urgent need for enhanced security due to the speed at which vulnerabilities are now identified and exploited -- transitioning from months to mere minutes.

Partnerships and Collaborative Efforts

Industry leaders are rallying to embrace these new AI capabilities:

* Cisco emphasizes the necessity of adopting innovative security practices without delay.
* Amazon Web Services (AWS) leverages AI to identify threats in over 400 trillion network flows daily.
* Microsoft's Igor Tsyganskiy points out the paradigm shift in cyber defense capabilities due to AI integration.
* JPMorgan Chase commits to collaborating on cybersecurity initiatives through Project Glasswing, reflecting the importance of shared strategies in tackling cyber threats.
* Google supports cross-industry cooperation to address emerging security challenges.

Project Glasswing: A Long-Term Initiative

Project Glasswing marks the beginning of a lasting commitment to bolster cybersecurity with AI. Participants in this initiative will utilize Claude Mythos Preview to strengthen their foundational systems, particularly within open-source software. Anthropic has pledged $100 million in model usage credits to sustain this effort and has donated funds to organizations dedicated to enhancing open-source security.

Future Goals and Collaborative Recommendations

The initiative aims to evolve cybersecurity practices with a focus on:

* Developing vulnerability disclosure processes.
* Improving software update practices.
* Enhancing supply chain security.
* Implementing secure-by-design principles in software development.

Anticipating the future, Anthropic is engaging with government officials to explore the broader implications of these AI-driven capabilities on national security. The organization aims to foster a collaborative environment across sectors to address significant challenges posed by AI advancements in cybersecurity. As cyber threats become more sophisticated, it is crucial for organizations to prepare for an era where appropriate measures must keep pace with rapid developments in AI technology.

Anthropic says Mythos Preview is a general-purpose model and found thousands of high-severity vulnerabilities, including some in every major OS and web browser

Shako / @shakoistslog: From a game theoretic sense, I wonder if treating this as a KPI, but awarding max value to the 85th percentile would work, and penalizing people below it linearly, and above it non-linearly, would work. How is tokenmaxxing a measure of productivity or value? I can write some bad code which causes an infinite loop and use up millions of tokens. What is the output of this tokenmaxxing which has resulted in good products or positive outcomes for Meta? I totally understand R&D innovation can cost a lot and no immediate return (I'm in Biotech), but if the goal is just to use more tokens, what are we doing here?

Anthropic Teams Up With Its Rivals to Keep AI From Hacking Everything - IT Security News


Anthropic Unveils 'Claude Mythos' - A Cybersecurity Breakthrough That Could Also Supercharge Attacks - IT Security News


Interviews with Anthropic executives on why Claude Mythos Preview is a cybersecurity "reckoning", not releasing it publicly over misuse concerns, and more


Chaos in Spain as 14 airports brace for strike action - including Canary Islands

Airports impacted include popular holiday hotspots such as Lanzarote, Fuerteventura, La Palma and El Hierro, alongside mainland hubs including Seville, Madrid, Vigo and Jerez. The strike targets control towers operated by private firm SAERCO, which manages services at a number of busy regional airports, particularly during peak travel periods. They also accused the company of cancelling approved holidays, making last-minute shift changes and failing to provide clear schedules for mandatory breaks. Travellers have reported long queues of up to three hours at security, with some flights departing without passengers' luggage and others missed entirely due to delays. With Spain remaining one of the most popular destinations for British tourists, the latest strike threat is expected to cause significant disruption during the busy spring and summer travel period. Passengers are being advised to check with airlines before travelling and allow extra time at airports as the situation develops.

OpenAI is a drama company. Will that hurt its IPO chances? And Anthropic tries to get ahead of the cyber risks its own models are accelerating

Hello and welcome to Eye on AI. In this edition...lots and lots of OpenAI news...Anthropic secures more compute from Google as its current capacity is strained...Google DeepMind releases its latest open weight Gemma model...Anthropic says AI has emotions (sort of)...and Google DeepMind shows AlphaEvolve can help solve real world enterprise problems. OpenAI dominated the news over the past few days. In fact, so much has happened related to the company that it's hard to know where to start. It's also hard to discern which OpenAI development will prove, with the benefit of hindsight, to be the most significant. I'll cover the OpenAI news in a sec. But first, I want to highlight three pieces of news from Anthropic because I think, in the long run, they might matter more than any of the OpenAI stuff. Anthropic unveiled today what it is calling Project Glasswing, a coalition of major technology companies and cybersecurity players that is dedicated to trying to secure the world's most critical software before AI-enabled hackers wreak absolute havoc around the globe. The coalition partners have been given access to a special cybersecurity-focused preview version of Anthropic's yet-to-be-released Mythos model, in the hopes that Mythos can discover zero-day attacks and other vulnerabilities, and that these can be patched before a production version of Mythos, and similar AI models with superpowerful cyber capabilities from OpenAI and Google, debut. My colleague Beatrice Nolan, who broke the news about Mythos' existence a few weeks ago, has the news on Project Glasswing here. Project Glasswing is further evidence of the growing concern within AI labs and cybersecurity companies, and among government officials, that we are entering an era of unprecedented and potentially catastrophic cybersecurity threats due to the increased coding capabilities of recent AI models. The New York Times has more on that evolving risk in this story here. 
Anthropic also announced that it would no longer allow people to use their monthly Claude subscriptions to power third-party agentic harnesses, such as the virally popular OpenClaw and its progeny. Now, in order to use Claude to power these tools, people will need to subscribe to Anthropic's API and pay per-token usage fees, as opposed to using all-you-can-consume monthly subscriptions. Anthropic has in recent weeks shown that it does not have the computing capacity to handle the skyrocketing adoption rates it has experienced, especially with agentic tools like OpenClaw. (Anthropic also imposed strict usage caps during peak hours that have annoyed many users.) In part to address this compute crunch, Anthropic announced an expanded partnership with Google and Broadcom to access data centers running Google's TPU chips coming online by 2027. (More on that below.) But, in the meantime, Anthropic's decision may have a big impact on how AI agents get used, perhaps slowing adoption, or perhaps driving many more people to start using open-source models as the brains behind these agents. Anthropic also said it has achieved an annual revenue "run rate" of $30 billion. The figure implies a 58% revenue surge in March alone. The number is also higher than the $25 billion annual revenue run rate OpenAI reported in February. (Although Anthropic and OpenAI don't use the same method to calculate their run rates, so it is a bit of an apples-to-oranges comparison.) But it clearly shows that Anthropic is on a tear and that matters, especially in light of the other news coming out of OpenAI. Ok, so without further ado, the OpenAI stuff: The OpenAI development that probably matters least, but which nonetheless had everyone in the media talking, is OpenAI's decision to buy the year-old vodcaster TBPN (Technology Business Programming Network) for an amount that sources told the Financial Times was in "the low hundreds of millions." 
OpenAI, in announcing the deal, said that it's "become clear the standard communications playbook just doesn't apply to us," and that the company needed "to help create a space for a real, constructive conversation about the changes AI creates -- with builders and people using the technology at the center." The word "constructive" here is doing a lot of work. While OpenAI insisted that TBPN would retain its editorial independence, many are skeptical, noting that, among other things, the video broadcast operation will report to Chris Lehane, the bare-knuckled political operator who serves as OpenAI's policy communications chief. This seems like just the latest and perhaps most extreme case of a tech company trying to control the narrative by "going direct" -- using social media and in-house produced content to reach audiences and bypass traditional journalistic outlets that are often more critical and tend to ask the kinds of questions that executives don't want to answer. If it weren't already clear why OpenAI wants to own the messenger and dislikes traditional journalism, then the New Yorker underscored the rationale by publishing a lengthy profile of OpenAI CEO Sam Altman that was the result of a year-and-a-half of investigative reporting by Ronan Farrow and Andrew Marantz. The piece was headlined "Sam Altman may control our future -- can he be trusted?" Reading the piece, it is hard to come away with an answer other than: no. While there are a few new tidbits in the story -- the reporters, for instance, obtained hundreds of pages of notes that Dario Amodei, now the Anthropic CEO, made on his interactions with Altman during the time he was a top OpenAI researcher -- many of the facts in the story have already been reported elsewhere. Nonetheless, there is impact in seeing them all assembled in one place. The overriding impression of Altman from Farrow and Marantz's story is of a borderline sociopath; an executive with no compunction about lying to get ahead. 
The piece raises questions about how sincere Altman is in his commitment to anything other than his own pursuit of power -- and in particular asks whether Altman actually cares about AI safety or whether his rhetoric on that subject is simply a convenient pose used, first to win over early funding for OpenAI from Elon Musk, and later to win over and retain talented AI researchers and keep regulators at bay. Certainly potential IPO investors don't generally love companies run by pathological liars. They also don't like companies where the top executive ranks are constantly being reshuffled. But OpenAI last week announced another executive shakeup. It said Fidji Simo, who has the title "CEO of AGI Deployment" and is in charge of all the company's commercial products and operations, will be taking several weeks of medical leave to deal with a chronic health condition. In her absence, Greg Brockman, who had been largely focused on the company's AI infrastructure build out, is going to be put in charge of product. But then OpenAI also announced a more permanent management shuffle. The company said that Brad Lightcap, its long-serving chief operating officer, is moving to a new role coordinating "special projects," including a joint venture with private equity firms that will look to use AI to push efficiencies into older, non-tech companies. Denise Dresser, the former Slack CEO recently hired by OpenAI to serve as chief revenue officer, is taking on most of Lightcap's previous duties, with oversight of the other business and operations units being split between Jason Kwon, OpenAI's chief strategy officer, and CFO Sarah Friar. Meanwhile, a story surfaced that might suggest Friar may not be secure in her role either. The Information reported that Friar has privately disagreed with Altman's timeline for an IPO and voiced concerns about the company's $600 billion in spending commitments over the next five years. 
Citing a person who had spoken to Friar about her views, the publication said Friar has said she is unsure if that huge amount of spending was necessary or whether it would be able to grow revenue fast enough to support it. The publication said that Friar had voiced these concerns prior to its $122 billion fundraise -- which was announced last week and valued OpenAI at $852 billion post-money. It said it was unable to determine whether her position had changed in light of that new money. But it cited another unnamed source as saying Friar had been left out of a meeting with an OpenAI investor in which major AI infrastructure spending plans were discussed. OpenAI gave the publication a statement saying Friar and Altman "are fully aligned that durable access to compute is at the core of OpenAI's strategy and a key differentiator as we scale." Looking at all the developments together, one could be forgiven for wondering if the wheels are in danger of coming off the world's best-known AI company. At the very least, there are serious questions looming over OpenAI's ability to go for an IPO this year. And, in the absence of an IPO, it's unclear how much longer the company can continue to tap the private market. If OpenAI implodes, or even if it merely has a down round, that could threaten the entire AI ecosystem. Of course, other key players in that ecosystem, such as Nvidia, know this too. That's why they are likely to continue trying to prop OpenAI up. In the midst of all of this, OpenAI published a white paper calling for a sweeping new industrial strategy for the U.S. in the age of artificial superintelligence, which it says is now looming into view. (You can read more on that from my colleague Sharon Goldman here.) Many perceived the document as, at least in part, an attempt by OpenAI to get ahead of a looming anti-AI industry backlash that is mounting across the country and is gaining bipartisan support. We'll cover that in the news section below. 
With that, here's more AI news.

Anthropic Teams Up With Its Rivals to Keep AI From Hacking Everything

Following leaked revelations at the end of March that Anthropic had developed a powerful new Claude model, the company formally announced Mythos Preview on Tuesday along with news of an industry consortium it has convened, known as Project Glasswing, to grapple with the cybersecurity implications of the new model and advancing capabilities more generally across the AI field. The group includes Microsoft, Apple, and Google as well as Amazon Web Services, the Linux Foundation, Cisco, Nvidia, Broadcom, and more than 40 other tech, cybersecurity, critical infrastructure, and financial organizations that will have private access to the model, which is not yet being generally released. The idea, in part, is simply to give the developers of the world's foundational tech platforms time to turn Mythos Preview on their own systems so they can mitigate vulnerabilities and exploit chains that the model develops in simulated attacks. More broadly, Anthropic emphasizes that the purpose of convening the effort is to kickstart urgent exploration of how AI capabilities across the industry are on the precipice, the company says, of upending current software security and digital defense practices around the world. "The real message is that this is not about the model or Anthropic," Logan Graham, the company's frontier red team lead, tells WIRED. "We need to prepare now for a world where these capabilities are broadly available in 6, 12, 24 months. Many things would be different about security. Many of the assumptions that we've built the modern security paradigms on might break." Models developed and trained by multiple companies have increasingly been able to find vulnerabilities in code and propose mitigations -- or strategies for exploitation. This creates a next generation of security's classic cat-and-mouse game in which a tool can aid defenders but can also fuel bad actors and make it easier to carry out attacks that were once too expensive or complex to be practical. 
"Claude Mythos preview is a particularly big jump," Anthropic CEO Dario Amodei said on Tuesday in a Project Glasswing launch video. "We haven't trained it specifically to be good at cyber. We trained it to be good at code, but as a side effect of being good at code, it's also good at cyber." He adds in the video that "more powerful models are going to come from us and from others. And so we do need a plan to respond to this." Anthropic's Graham notes that in addition to vulnerability discovery -- including producing potential attack chains and proofs of concept -- Mythos Preview is capable of more advanced exploit development, penetration testing, endpoint security assessment, hunting for system misconfigurations, and evaluating software binaries without access to its source code. In carrying out a staggered release of Mythos Preview, beginning with an industry collaboration phase, Graham says that Anthropic sought to draw on tenets of coordinated vulnerability disclosure, the process of giving developers time to patch a bug before it is publicly discussed. "We've seen Mythos Preview accomplish things that a senior security researcher would be able to accomplish," Graham says. "This has very big implications then for how capabilities like this should be released. Done not carefully, this could be a meaningfully accelerant for attackers." Project Glasswing partners, including some of Anthropic's competitors, struck a collaborative tone in statements as part of the launch. "Google is pleased to see this cross-industry cybersecurity initiative coming together," Heather Adkins, Google's vice president of security engineering, says in a statement. "We have long believed that AI poses new challenges and opens new opportunities in cyber defense." 
Those who maintain components of internet infrastructure and firms that develop foundational tech platforms also seem enthusiastic about the collaboration, especially given that Anthropic says use of Mythos Preview has already started to uncover thousands of critical vulnerabilities, including some decades-old bugs that have been repeatedly missed or overlooked in even the most scrutinized code. "As we enter a phase where cybersecurity is no longer bound by purely human capacity, the opportunity to use AI responsibly to improve security and reduce risk at scale is unprecedented," Microsoft's global CISO, Igor Tsyganskiy, says in a statement. "Joining Project Glasswing, with access to Claude Mythos Preview, allows us to identify and mitigate risk early and augment our security and development solutions so we can better protect customers and Microsoft." Graham says his team at Anthropic, a frontier research group, feels the urgency and the need for global collaboration. "Probably the most important thing the group needs to do is figure out all the questions that need answers and then figure out the answers," Graham says. "Project Glasswing is the starting point. It will fail if it's just a handful of companies using a model. It has to grow into something even larger."

Anthropic's 3.5 GW TPU Deal Intensifies AI's Power Race With Bitcoin Miners

Listed miners are accelerating AI and HPC hosting buildouts to capture that demand instead of fighting it. Anthropic has secured access to roughly 3.5 gigawatts of next-generation TPU compute capacity through agreements involving Google and Broadcom beginning in 2027, marking its largest infrastructure commitment to date. The agreement centres on next-generation Tensor Processing Units (TPUs), Google's custom accelerators designed for large-scale AI training and inference workloads. In an announcement on April 6, Anthropic said it had signed a new agreement with Google and Broadcom for multiple gigawatts of next-generation TPU capacity coming online from 2027. Chief Financial Officer Krishna Rao described it as the "company's most significant compute commitment so far." The 3.5 gigawatt figure came from Broadcom rather than Anthropic. In an 8-K filing, Broadcom said its expanded collaboration with Google and Anthropic would let Anthropic access approximately 3.5 gigawatts of TPU-based AI compute from 2027 as part of the broader multi-gigawatt commitment. The filing added that consumption of that capacity depends on Anthropic's continued commercial success and that the parties are still in discussions with operational and financial partners on the deployment. In the same announcement, Anthropic said that its annualised run-rate revenue had passed $30 billion. The company said the number of business customers spending more than $1 million annually on Claude had risen from 500 to over 1,000, more than doubling since February. Anthropic presented those figures as the commercial backdrop for the compute commitment, tying expanded enterprise demand to the need for additional training and inference capacity. 
The agreement deepens Anthropic's existing relationships with Google Cloud and Broadcom and sits alongside its existing capacity on Amazon Web Services Trainium infrastructure and Nvidia GPUs. Estimates from the Cambridge Centre for Alternative Finance place global Bitcoin network electricity consumption in the low double-digit gigawatt range, depending on modelling assumptions. The comparison focuses on scale rather than displacement, as a single AI customer is now contracting for power volumes in the same order of magnitude as an entire global industry. With other hyperscalers also signing multi-year, multi-gigawatt deals, AI is becoming a larger competitor for the inputs miners depend on, including grid interconnects, permitted sites, cooling infrastructure, and long-duration power contracts. Several large listed miners have already moved to capture some of that AI demand directly rather than compete with it head-on. Core Scientific has signed long-term high-performance computing hosting contracts with CoreWeave. IREN markets itself across Bitcoin mining, AI cloud, and AI data centre services. Hut 8 has reported a growing compute revenue line tied to AI and high-performance computing hosting. Together, these shifts show several large miners building AI hosting businesses alongside their mining operations. The Anthropic deal does not change that direction on its own, but it adds to the growing pipeline of contracted AI infrastructure demand that several mining operators are positioning to serve.