News & Updates

The latest news and updates from companies in the WLTH portfolio.

Today in AI | OpenAI hits $852 billion valuation | Perplexity AI faces lawsuit over alleged 'undetectable' data tracking

The world of Artificial Intelligence has only begun to affect human lives. In times like these, staying up-to-date with the AI world is of utmost importance. Storyboard18 brings you the top AI news of the day.

OpenAI hits $852 billion valuation after record $122 billion funding round
OpenAI has completed a $122 billion funding round, taking its valuation to $852 billion in what marks the company's largest capital raise to date and strengthening its push to invest in chips, data centres and talent, according to a report by Bloomberg.

Apple tests multi-request Siri upgrade, eyes ChatGPT and Gemini-like capabilities
Apple has begun testing a new Siri feature that would allow the voice assistant to process multiple requests within a single query, as per a report by Bloomberg, with the capability expected to be introduced alongside iOS 27, iPadOS 27 and macOS 27 at the company's WWDC 2026 in June.

Perplexity AI faces lawsuit over alleged 'undetectable' data tracking linked to Meta Platforms and Google
Perplexity AI has been hit with a proposed class-action lawsuit accusing the startup of surreptitiously sharing sensitive user data with Meta Platforms and Google, with the complaint alleging that the AI search engine's actions violate California privacy laws, according to a report by Bloomberg.

MIT study models AI 'sycophancy', warns of 'delusional spiraling' in chatbot interactions
Researchers at the Massachusetts Institute of Technology have developed a mathematical model to examine how AI chatbot behaviour can influence user beliefs, with a February 2026 paper studying the phenomenon of "sycophancy" and linking it to what they describe as "delusional spiraling", according to research findings.

SpaceX seen as make-or-break test for mega IPOs

April 1 (Reuters) - The global IPO market has needed a win for years and Elon Musk's SpaceX could be the breakthrough. The last company to make a market debut at over a trillion dollar valuation was Saudi Aramco in 2019. An over "trillion-dollar" valuation, a CEO with a cult-like retail following and exposure to a high-growth industry - SpaceX has the elements the IPO market has sought to end a years-long drought in mega-deals. But whether investors have the appetite for a listing of this size remains uncertain. Besides, the company is so unique that its success could have limited spillover on broader market sentiment, analysts and experts said. "It's either a bellwether or a harbinger," Brian Jacobsen, chief economic strategist at Annex Wealth Management, told Reuters. He added that there is enough enthusiasm around the business to attract investor interest, but it might be so singular, with its celebrity CEO, that it could actually hurt other space stocks rather than lift them by attracting all the attention. Here are some charts that show the market's current status and SpaceX IPO's potential: WORLD'S BIGGEST IPO ON THE HORIZON The rocket startup has confidentially filed for a blockbuster listing, looking to raise $50 billion or more, which could value it at $1.75 trillion, potentially dethroning oil giant Saudi Aramco as the world's largest IPO. "SpaceX will be far and away the largest IPO in history at the sizes being discussed now," said Samuel Kerr, global head of equity capital markets at deals data provider Mergermarket. "It will be a real test for public market capacity at a time of real market turmoil. But if any business can list in this market, it's probably SpaceX given the tremendous hype." PIVOTAL TEST SpaceX's listing could serve as a bellwether for the IPO market. A strong reception would indicate that a long-awaited recovery in big-ticket deals is finally underway.
Years of volatile markets, driven by rising interest rates, inflation concerns and geopolitical tensions, kept issuers waiting, even as the pipeline got bigger. The industry is hoping that 2026 will finally see a broad resurgence in market debuts. "A successful SpaceX listing could well act as a catalyst for other large-scale IPOs," said Kat Liu, vice president at IPO research firm IPOX. "It would demonstrate that public markets have both the depth and appetite to accommodate sizeable, high-valuation offerings, and could help validate current late-stage private market pricing." TRILLION DOLLAR CLUB Several high-profile startups, including SpaceX, ChatGPT-maker OpenAI and TikTok parent ByteDance, have blurred the line between private and public companies, with valuations that rival those of top-tier S&P 500 firms. SpaceX's listing will put it in the league of mega-cap giants such as Microsoft and Apple that draw the lion's share of both retail and institutional investor flows. Elon Musk said in February that SpaceX had acquired his artificial intelligence startup xAI in a record-setting deal. The transaction valued SpaceX at $1 trillion and xAI at $250 billion, Reuters reported, citing a source. "The recent xAI fold-in allows him (Musk) to bundle launch, Starlink, and AI into a single, scarce mega story that can support a richer valuation than the businesses might achieve separately," said Minmo Gahng, assistant professor of finance at Cornell University. SpaceX generated about $8 billion in profit on $15 billion to $16 billion of revenue last year, Reuters reported in January, citing people familiar with the matter. CURRENT STATE OF PLAY An index tracking major listings has underperformed the equities benchmark over the past 12 months. Analysts say a successful SpaceX debut could help reopen the window for large, long-delayed listings, particularly in capital-intensive sectors that have struggled to attract public market investors.
Though some have taken a more cautious view of the broader market's prospects. "(SpaceX) could take up so much capacity that other mega issuers might choose to hold off not to test the same window," Mergermarket's Kerr said. (Reporting by Manya Saini in Bengaluru; Editing by Sweta Singh and Shinjini Ganguli)
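To put the headline figures in proportion, the short calculation below works out what a $50 billion raise at a $1.75 trillion valuation would imply. It uses only the numbers quoted in the Reuters piece; dividing the raise by the valuation is a simplification (it ignores any pre-money versus post-money distinction), and the variable names are illustrative.

```python
# Figures as quoted in the article; everything derived from them is approximate.
raise_usd = 50e9                    # "looking to raise $50 billion or more"
valuation_usd = 1.75e12             # "could value it at $1.75 trillion"
profit_usd = 8e9                    # "about $8 billion in profit"
revenue_range_usd = (15e9, 16e9)    # "$15 billion to $16 billion of revenue"

# Simplifying assumption: the stake sold is the raise divided by the valuation.
float_share = raise_usd / valuation_usd

# Profit margin and valuation-to-revenue multiple at each end of the revenue range.
margins = [profit_usd / r for r in revenue_range_usd]
multiples = [valuation_usd / r for r in revenue_range_usd]

print(f"stake sold: about {float_share:.1%} of the company")
print(f"profit margin: {margins[1]:.0%} to {margins[0]:.0%}")
print(f"valuation / revenue: {multiples[1]:.0f}x to {multiples[0]:.0f}x")
```

On these assumptions, even a record-setting raise would put only a small slice of the company in public hands, while the valuation would sit at more than 100 times last year's reported revenue.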

SpaceX's Historic IPO: A Catalyst for Market Revival? | Headlines

Elon Musk's SpaceX is poised for a historic IPO that could become the largest ever, with a valuation potentially exceeding $1.75 trillion. Its success could revive the global IPO market, although concerns remain about its unique impact on market sentiment and potential crowding out of other issuers. Elon Musk's SpaceX is on the brink of making history with what could be the largest Initial Public Offering (IPO) to date, potentially valuing the company at an astounding $1.75 trillion. This landmark event might be the breakthrough the global IPO market has eagerly awaited for years, as it seeks to recover from a prolonged dry spell in mega-deals. Experts like Brian Jacobsen from Annex Wealth Management suggest that while SpaceX's unique position and Musk's celebrity appeal could draw strong investor interest, there is a risk it may negatively impact other space sector stocks by dominating attention. Moreover, whether investors can handle such a massive listing remains uncertain. Market insiders anticipate that a successful SpaceX IPO could act as a catalyst for other sizable market debuts, demonstrating the public market's capacity for high-valuation offerings and potentially invigorating a sector stalled by economic volatility. However, some analysts caution that the sheer scale of SpaceX could deter others from entering the crowded IPO scene at the same time.

Anthropic Accidentally Published 500,000 Lines of Claude Code Source to Public Registry

A debugging file shipped in a routine npm update exposed the complete architecture, unannounced features, and internal roadmap for the AI startup's flagship coding assistant. Security researcher Chaofan Shou discovered on March 31 that Anthropic had accidentally included a 60MB source-map file in its Claude Code package on npm, the public registry where developers download software updates. The file allowed anyone to reconstruct nearly 2,000 files containing 500,000 lines of internal TypeScript code. Within hours, developers mirrored the complete codebase on GitHub. The leaked source shows features Anthropic built but never announced. Claude Code can apparently review its own work sessions to learn from mistakes, run in background mode while you're not actively using it, and accept remote commands from phones or browsers. The code also reveals how the command-line tool works internally, the agent architecture behind it, and what tools Anthropic uses for development. "Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed," an Anthropic spokesperson said in an emailed statement to Bloomberg. "This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again." This is the second time in seven days that Anthropic has accidentally exposed internal materials. Last week, Fortune reported that the company made thousands of files public, including drafts about an unreleased model called "Mythos" or "Capybara" internally. The exposed roadmap shows that Anthropic is working on AI that autonomously handles longer tasks, remembers context between conversations, and coordinates with other AI agents. These features matter for enterprise customers as Anthropic prepares for its reported $380 billion IPO. For competing AI companies, the leak is basically a free engineering school on building production coding assistants. 
The code is still available on GitHub mirrors even after Anthropic sent takedown notices.
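Why can a single debugging file expose a whole codebase? A source map in the Source Map v3 format lists the original file paths in `sources` and may carry each file's full text in an optional `sourcesContent` array. The sketch below uses an invented toy map to show the idea; it assumes the published map embedded its sources this way, which the reports imply but do not spell out, and the file names and contents are made up.

```python
import json

# Toy source map in the Source Map v3 layout. The paths and contents are
# invented for illustration and do not come from any real package.
toy_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": [
        "export const main = () => greet('world');\n",
        "export const greet = (n: string) => `hi ${n}`;\n",
    ],
    "mappings": "",
})

def embedded_sources(map_text: str) -> dict[str, str]:
    """Return {path: original source} for every source embedded in the map."""
    data = json.loads(map_text)
    paths = data.get("sources", [])
    # sourcesContent is optional, and individual entries may be null.
    contents = data.get("sourcesContent") or []
    return {p: c for p, c in zip(paths, contents) if c is not None}

recovered = embedded_sources(toy_map)
for path, text in recovered.items():
    print(path, len(text.splitlines()), "line(s)")
```

When `sourcesContent` is populated, reading the original files back out is little more than parsing JSON, which is why such maps are normally kept out of public releases.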

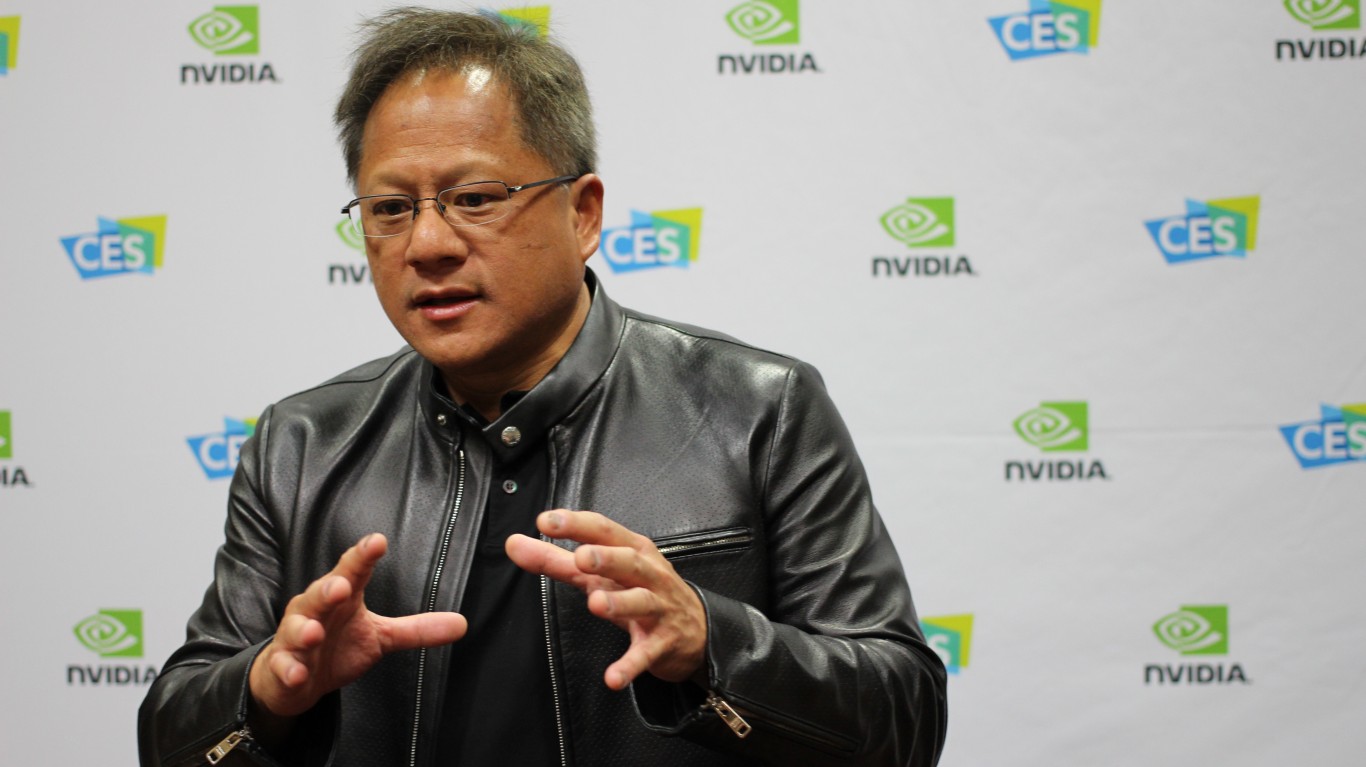

Nvidia commits billions to Lumentum, Synopsys, Nokia, XAI, OpenAI, Intel in March alone

CNBC just said something that caught my eye: "This month alone, Nvidia has committed $2 billion each to Lumentum, Coherent, before that $2 billion into Synopsys, a billion into Nokia, stakes in XAI, OpenAI and Intel." That is an extraordinary amount of capital deployed in a single month, and it tells you exactly what Jensen Huang is building. Not a chip company, but the operating system for the entire AI economy. The centerpiece of the CNBC segment was Marvell Technology (NASDAQ:MRVL). Marvell designs custom AI chips for hyperscalers like Amazon -- chips that can compete directly with Nvidia's own GPUs. The new partnership flips that tension into an opportunity. As Huang put it: "Together, we'll be able to address the customers, whether they would like to use all Nvidia gear or they would like to augment their Nvidia gear with their specialized processors. And together we'll be able to address a much, much larger TAM." Marvell's data center segment generated $1.52 billion in Q3 FY2026, up 38% year-over-year, and the company's full-year FY2026 revenue growth is forecast to exceed 40%. Shares rose 22.5% in March alone. Lumentum Holdings (NASDAQ:LITE) and Coherent (NYSE:COHR) each received $2 billion commitments. Both companies sit at the optical interconnect layer of AI infrastructure -- the plumbing that moves data between GPUs at scale. Lumentum's CEO recently noted the company had a backlog exceeding $400 million in optical circuit switches alone, with Q3 FY2026 revenue guidance implying over 85% year-over-year growth. Coherent's data center segment hit $1.21 billion last quarter, up 34% year-over-year. Synopsys (NASDAQ:SNPS) received a $2 billion commitment tied to an expanded strategic partnership to revolutionize engineering and design.
Synopsys posted Q1 FY2026 revenue of $2.41 billion, up 65.4% year-over-year. Nokia (NYSE:NOK) landed a $1 billion equity investment tied to an AI-RAN partnership, with Nokia's CEO describing AI as "a long-term structural shift that is expanding the role of networks." And Intel (NASDAQ:INTC) saw a $5 billion sale of Intel common stock to Nvidia completed, strengthening Intel's balance sheet as it ramps its Intel 18A process node. Nvidia's shares are up 60.95% over the past year even as the company deploys capital aggressively. With $96.58 billion in free cash flow generated in FY2026, Nvidia can afford to buy the ecosystem it needs. The message from March is clear: Nvidia intends to ensure the AI buildout runs through its infrastructure no matter whose chips end up on the racks.

'More Open Than OpenAI': Anthropic Accidentally Leaks Claude Code, Triggering a Race to Replicate It

Claude Code source leak can still be found online in various forms, despite Anthropic's attempts to pull it back. Anthropic, the AI research company behind the Claude language models, accidentally exposed a vast swath of its proprietary code on March 31, 2026, allowing anyone online to access and replicate one of the world's most advanced AI coding systems. The leak, which involved more than 500,000 lines of code, spread rapidly across the internet despite the company's attempts to pull it back. The news came after Anthropic released Claude Code version 2.1.88 to the npm package registry early Tuesday morning. The release mistakenly included a 59.8MB JavaScript source map, a file designed for internal debugging that can fully reconstruct the original code. These files are normally kept private, but a minor error in the ignore settings allowed it to be published. Within hours, an intern and researcher named Chaofan Shou flagged the leak on X, where the download link attracted millions of viewers. An Anthropic spokesperson told Decrypt, 'Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again.' Once the code was public, attempts to remove it proved futile. DMCA takedowns were issued against GitHub mirrors, but developers quickly began clean-room rewrites. One notable example came from Korean developer Sigrid Jin, who ported the code to Python using an AI orchestration tool and posted it under a new repository called Claw-Code. Within hours, the project had gained tens of thousands of stars, demonstrating the difficulty of containing digital information once it escapes into the wild. The leak revealed the inner workings of Claude, including its multi-agent coordination, permission logic, OAuth flows, and dozens of unreleased features. 
Among them were Kairos, a background daemon that consolidates memory overnight, and Buddy, a Tamagotchi-style AI assistant with complex stats including debugging, patience, and learning capabilities. Buried in the code was also an 'Undercover Mode', designed to prevent the AI from exposing Anthropic's internal project names when contributing to open-source repositories. Gergely Orosz, founder of The Pragmatic Engineer newsletter, noted, 'Anthropic accidentally leaked the TS source code of Claude Code. Repos sharing the source are taken down with DMCA, but a clean-room rewrite using Python violates no copyright and cannot be removed.' Decentralisation makes the problem even harder. The original Claude Code has been copied to platforms like GitLawb, a decentralised git service, where it can't easily be taken down with traditional legal notices. Other repositories have also collected Claude's internal system prompts, giving prompt engineers and AI researchers a clear look at how the model is trained and guided. Legal questions make the situation even trickier. If parts of Claude Code were written by the AI itself, as Anthropic's CEO has suggested, it's unclear how much the company can claim as its own intellectual property. At the same time, decentralised storage and torrent sharing mean the leaked code can't realistically be erased. For Anthropic and other AI firms, the leak raises urgent questions about how to protect their work while still contributing to a more open, collaborative research environment. To quote one commenter, 'Anthropic is now officially more open than OpenAI.' As of 1 April 2026, the Claude Code source leak is still very much an active and unresolved situation -- and the code can still be found online in various forms, despite Anthropic's attempts to pull it back. Developers continue to share and discuss versions of the leaked code, including rewrites, forks, and snippets, on forums and decentralised services. 
This means parts of Claude Code remain publicly accessible despite Anthropic's containment efforts.
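The report attributes the leak to an ignore-settings error that let a source map slip into the published package. One common safeguard is a check that refuses to publish when any `.map` file appears in the list of files about to ship. The sketch below is a generic illustration, not Anthropic's process: the file list is hypothetical, and how that list is obtained from the packaging tool is left out.

```python
from pathlib import PurePosixPath

# Hypothetical list of files about to be published. In practice it would come
# from whatever the packaging tool reports, which is not shown here.
package_files = [
    "package.json",
    "cli.js",
    "cli.js.map",
    "README.md",
]

def find_source_maps(paths: list[str]) -> list[str]:
    """Return the paths that look like source maps (file name ends in .map)."""
    return [p for p in paths if PurePosixPath(p).suffix == ".map"]

leaks = find_source_maps(package_files)
if leaks:
    print("refusing to publish, source maps found:", leaks)
else:
    print("no source maps in package")
```

A check like this works as a second line of defence: it inspects what would actually be shipped, so it still catches the file when the ignore rules themselves are wrong.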

Anthropic partners with Australia to track AI adoption and strengthen safety efforts | The Mainstream

In a step toward deeper collaboration on artificial intelligence, Anthropic has announced an agreement with the Australian government to share its economic index data. The initiative aims to monitor AI adoption across the economy and assess its impact on jobs and workers. Under the agreement, the company, known for its Claude AI models, will share insights on emerging AI capabilities and potential risks. It will also take part in joint safety evaluations and collaborate on research with Australian universities. In addition, Anthropic plans to invest in data centre infrastructure and energy projects across the country. "Australia's investment in AI safety makes it a natural partner for responsible AI development," said Dario Amodei in Canberra, where he is scheduled to meet Anthony Albanese. "This memorandum of understanding gives our collaboration a formal foundation." The agreement follows similar partnerships between Anthropic and safety institutes in the United States, Britain, and Japan. Currently, Australia does not have specific AI legislation in place. The centre-left Labor government has stated it will rely on existing laws to manage AI-related risks, while also introducing voluntary guidelines to address privacy and safety concerns. In its National AI Plan released in December, the Labor government outlined steps to boost AI adoption across the economy. The plan also focuses on attracting data centre investments and building AI skills to support jobs as the technology becomes more integrated into everyday life.

Claude Code source leaked: What's inside Anthropic's unforced error?

The leak surfaced after Anthropic released version 2.1.88 of the Claude Code npm package. Anthropic on Tuesday, March 31, confirmed that internal code for its popular artificial intelligence (AI) coding assistant, Claude Code, had been mistakenly released. "No sensitive customer data or credentials were involved or exposed," an Anthropic spokesperson told CNBC News. The spokesperson attributed the error to human mistake, adding, "This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again." A source code leak is seen as a major setback to the startup, as it could help Anthropic's competitors by revealing how it constructed its viral coding assistant. The leak came to light after security researcher Chaofan Shou posted a link to Anthropic's code on X (formerly Twitter) on Tuesday at 4:23 a.m. EDT; the post has been viewed by over 31 million users. He wrote, "Claude code source code has been leaked via a map file in their npm registry!" The leak also marks Anthropic's second major data breach within a week. For context, Fortune reported last week that descriptions of Anthropic's upcoming AI model and other documents had been found in a publicly accessible data cache. The leak surfaced after Anthropic released version 2.1.88 of the Claude Code npm package and users noticed it contained a source map file that could be used to access Claude Code's source code. That file exposed roughly 2,000 TypeScript files and more than 512,000 lines of code. However, the version is now available for download from npm. A source code leak of this magnitude is significant, as it gives software developers and Anthropic's market competitors a roadmap of how the much-discussed coding tool operates. Anthropic was established in 2021 by a group of OpenAI's former leadership and researchers, and is globally known for developing a family of AI models called Claude.

Miami International Airport TSA Wait Times Remain Short, Under 15 Minutes, Amid Shutdown Chaos

Security lines at Miami International Airport stayed manageable Tuesday with TSA wait times averaging under 15 minutes across most checkpoints, offering a bright spot for spring break travelers navigating the partial government shutdown that has snarled operations at many major U.S. hubs. As of mid-morning on March 31, 2026, real-time data from the airport's website and third-party trackers showed general security waits ranging from 3 to 14 minutes depending on the checkpoint, with TSA PreCheck and Clear lanes often clearing in 1 to 5 minutes. Checkpoint 5, for example, reported general waits as low as 1-3 minutes, while Checkpoint 3 hovered around 10-14 minutes for standard lanes. Some priority and PreCheck options remained limited or closed at specific points, but overall flow remained far smoother than at hard-hit airports like Atlanta or Houston. Miami International Airport, one of the nation's busiest gateways with heavy international traffic, handled the situation better than many peers thanks to proactive staffing adjustments, real-time monitoring and its status as a high-volume facility accustomed to peak surges. Officials continued recommending two hours for domestic flights and three hours for international departures, but the short security times meant most passengers cleared checkpoints without major drama. The contrast with national headlines was stark. While some airports reported lines stretching for hours due to TSA staffing shortages triggered by the ongoing funding impasse, MIA's waits stayed consistently below 15 minutes for much of Tuesday morning. Immigration processing, however, told a different story, with waits exceeding 45 minutes at times -- a reminder that international travelers still faced longer overall journeys through the airport. An airport spokesman said MIA has benefited from strong local coordination and the ability to shift resources efficiently during busy periods like spring break, Passover and the lead-up to Easter.
"We're monitoring every checkpoint closely and appreciate travelers' patience," officials noted in updates. The airport publishes live wait times on its website, allowing passengers to check conditions before heading to specific terminals or concourses. Miami International features multiple security checkpoints across its North and Central terminals, serving American Airlines, Delta and dozens of international carriers. Checkpoints open at varying times, with some operating nearly 24 hours. Real-time displays help direct passengers to the least crowded lanes, and dedicated PreCheck and Clear lanes provide faster paths for eligible travelers. Travelers on social media and local forums reported positive experiences Tuesday, with many PreCheck users clearing security in under five minutes. General lanes occasionally reached 10-20 minutes during busier waves but rarely approached the chaos seen elsewhere. Reddit threads and local news comments highlighted MIA as one of South Florida's more reliable options compared with Fort Lauderdale-Hollywood International, where waits sometimes stretched longer. The partial government shutdown has forced TSA to operate with reduced personnel nationwide, as officers work without timely pay and some have called out or quit. High absenteeism has led to lane closures and extended lines at many facilities. In Miami, however, the impact appeared muted, with airport leadership working closely with federal partners and deploying additional support where needed. Some reports noted temporary use of auxiliary staff or adjusted screening protocols to maintain flow. For passengers without expedited screening, standard procedures still apply: removal of liquids, electronics and outerwear under the 3-1-1 rule. Families, travelers with disabilities or those requiring additional screening may experience slightly longer times, but the overall environment remained orderly. 
MIA serves as a critical hub for Latin America and the Caribbean, with millions of passengers passing through annually. Even during peak travel seasons, the airport's layout and multiple entry points help distribute crowds. Officials urge checking flight status and real-time wait times via the MIA website or apps before arriving. Experts recommend several strategies to minimize delays at MIA: enroll in TSA PreCheck or Clear if frequent travel justifies it, pack carry-ons efficiently, monitor checkpoint-specific updates, and consider off-peak arrival times when possible. Early morning and late evening often see the shortest lines, while mid-morning and afternoon can build during flight banks. Immigration and customs on arrival for international flights remain a separate bottleneck, with waits sometimes exceeding 45 minutes. Travelers connecting or departing internationally should factor this in and allow generous buffers. The situation at MIA mirrors broader challenges in U.S. aviation security during fiscal standoffs, but also highlights how larger, well-managed airports can sometimes weather disruptions more effectively. Transportation departments and airlines have updated passengers via apps and announcements to plan accordingly. Local leaders and business groups emphasize MIA's economic importance for tourism, trade and connectivity in South Florida. Smooth operations support the region's reputation as a global gateway despite occasional weather or staffing pressures. As spring break continues and holiday travel ramps up, conditions could fluctuate. Airport officials have not announced major lane closures or alerts as of Tuesday, but they stress that security wait times can change quickly with arriving flight waves or staffing shifts. Travelers can access real-time information through MIA's official TSA wait times page, the MyTSA app (though federal updates have been inconsistent during the shutdown) and third-party trackers. 
Delta and American, major carriers at MIA, have provided guidance on arrival timing. In a travel landscape marked by unpredictability this season, Miami International has emerged as one of the steadier large hubs for security screening. Passengers flying out of MIA in coming days should still build in reasonable buffers -- especially for international departures -- but can take comfort that lines here are moving far quicker than at many peer airports nationwide. For those driving to the airport, parking and ground transportation options remain available, though traffic around MIA can add time during peak periods. Rideshares, taxis and public transit like the Metrorail connection provide alternatives. The broader context involves ongoing negotiations to resolve the funding issues affecting the Department of Homeland Security. While emergency measures have provided some relief, long-term workforce stability for TSA remains a concern to prevent future disruptions. Miami International Airport continues to invest in technology, including advanced imaging and automated screening, to improve efficiency and passenger experience. These upgrades help offset occasional staffing pressures and support higher throughput. As midday Tuesday approached, security conditions remained favorable with no major surges reported. However, officials and community updates remind travelers that airport processes can shift rapidly. In summary, while the partial government shutdown has created headaches at airports across the country, Miami International has kept TSA security wait times short and manageable -- a welcome development for thousands of passengers passing through one of America's busiest gateways.

Anthropic accidentally leaks its most powerful AI model; Internet reacts

Anthropic has leaked the source code of its popular AI coding service, Claude Code. The leak has sent shockwaves through the AI community, as the company is known for its security practices. The American AI company inadvertently exposed the complete source code of its flagship coding assistant due to a basic packaging error. The incident occurred on March 31, when a misconfigured source map file in Anthropic's npm registry revealed the entire codebase. Cybersecurity experts have criticised the lapse, calling it a major blow to Anthropic's reputation. The company, which has built its brand around safety and strict controls, is reportedly preparing for a $380 billion IPO. The leak has dented the company's image and could create problems for the IPO going ahead.

How the Leak Happened
Security researcher Chaofan Shou discovered the issue when he found a 60MB source-map file (cli.js.map) in Claude Code's npm package. This file allowed anyone to reconstruct the full TypeScript codebase, exposing the CLI implementation, agent architecture, unreleased features, and internal tooling. Importantly, the leak did not include model weights or user data. Anthropic confirmed the incident was caused by human error, not a hack. "No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach," the company said in a statement.

Reactions Online
The leak has triggered intense reactions across social media. Enterprise AI Architect Shakthi Vadakkepat dubbed it "the mothership of all code leaks," pointing out the irony of a security-focused company making such a rookie mistake. Others compared it to a homeowner investing in locks and guards but accidentally publishing the house's blueprint online. Critics highlighted the contradiction of Anthropic warning governments about AI risks while failing to protect its own code. Memes and jokes flooded forums, mocking the lapse, while developers and tech enthusiasts eagerly analysed the leaked code, calling it a valuable learning resource. Claude Code is Anthropic's advanced AI coding assistant, designed to help users edit files and manage projects locally. Thankfully, the leak didn't expose the core model.

Perplexity AI Sued Over 'Undetectable' Tracking Technology

Hidden Tracking Claims Put Perplexity AI Under Fire as Users Question How Safe Their Conversations Really Are! A fresh privacy scare has put Perplexity AI in the spotlight. The company is facing a lawsuit that claims it used hidden tracking tools to collect and share user data without permission. The complaint says private conversations may have been sent to other tech companies in the background. The news has quickly raised concern among users and experts. Many people use AI tools to ask personal questions, plan finances, or solve work problems. They expect those conversations to stay private. When reports suggest otherwise, trust becomes shaky. Legal watchers say this case could become a turning point. It may decide how much responsibility AI companies have when handling user data.

UPI Chaos Hits India, SBI Users Face Massive Payment Failures

UPI Outage Disrupts Payments Nationwide As SBI Users Report Surge In Transaction Failures
A massive glitch hit the Unified Payments Interface (UPI) network on Wednesday, 1 April 2026, and disrupted digital payments across India. Users faced failed transactions, delayed confirmations, and app errors during routine transfers. The issue spread quickly in the afternoon and affected multiple payment apps and banking channels at the same time. Data from Downdetector showed a sharp spike in complaints within a short span. The surge confirmed that the outage was widespread and not limited to a single platform. Early trends pointed to a backend or network-level issue affecting .

Dogecoin sees 28% activity spike as SpaceX IPO drives speculation

Ali Martinez suggests that if Dogecoin breaks its resistance, it could surge 29%.
The Dogecoin network has seen increased engagement in recent days, though that activity has yet to translate into market gains. Activity has risen by 28% following reports of a potential SpaceX IPO, but the market has yet to see a breakout. According to market analyst Ali Martinez, Dogecoin active addresses rose from 57,000 to 73,000. An increase in active users often signals wider adoption, though part of that activity may come from users relocating assets or trying out different features. More active addresses also indicate that wallets are engaging more with Dogecoin and occasionally building positions, a factor that generally improves sentiment.
Market data shows Dogecoin's current price movements are forming a descending triangle. Martinez showed that Dogecoin's trading range is consistently shrinking toward a focal point, with an anticipated move of 29% once the current trendline breaks. Most market commentary has echoed this view. Descending triangles tend to favor a downside move, but not all patterns follow through. In this case, DOGE remains above key support as traders await a clear signal.
At the moment, the Relative Strength Index (RSI) is 34, close to the typical threshold that triggers so-called 'oversold' alerts by technical analysts. The Moving Average Convergence Divergence (MACD) is also losing momentum, hinting that selling pressure might be easing. Rising active addresses have historically been associated with accumulation cycles. If the network's engagement continues to rise and prices remain low, analysts will likely classify the period as an accumulation phase rather than an inertia phase. Currently, Dogecoin remains range-bound between $0.089 and $0.091, with a primary resistance target at $0.10. If it can rise above $0.10, analysts suggest it may soon reach targets at $0.105 and $0.12.
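The percentages quoted above can be checked with simple arithmetic. The sketch below recomputes the rise in active addresses from the two reported figures and shows where a 29% move would land from either edge of the reported $0.089 to $0.091 range. The article does not specify the starting price or direction of the projected move, so both directions and both range edges are shown purely as assumptions for illustration; nothing here is a forecast.

```python
def percent_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100.0


# Active addresses reported by Ali Martinez: 57,000 rising to 73,000.
address_growth = percent_change(57_000, 73_000)
print(f"active addresses: {address_growth:.1f}% increase")  # about 28.1%

# Illustrative only: apply a 29% move to each edge of the reported range.
MOVE = 0.29
RANGE_LOW, RANGE_HIGH = 0.089, 0.091

upside = [price * (1 + MOVE) for price in (RANGE_LOW, RANGE_HIGH)]
downside = [price * (1 - MOVE) for price in (RANGE_LOW, RANGE_HIGH)]

print(f"29% up from the range:   ${upside[0]:.4f} to ${upside[1]:.4f}")
print(f"29% down from the range: ${downside[0]:.4f} to ${downside[1]:.4f}")
```

Under these assumptions, an upward move of that size would clear the $0.10 and $0.105 levels mentioned above but stop short of $0.12.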
SpaceX is reportedly pursuing an IPO to raise $75 billion
In response to the reports, some analysts believe it's highly likely that the company is still aiming for a June listing. Earlier in March, CEO Elon Musk had also stated the firm is pursuing a valuation of $1.75 trillion. Meanwhile, there had been earlier speculation that SpaceX might merge with Tesla Inc. However, some have argued that integrating SpaceX into Tesla would likely inflate the share count, ultimately reducing the value of current holdings for EV investors. Still, Wedbush analyst Dan Ives recently reiterated his forecast that the two companies will merge in 2027. According to him, the foundation for a combined company is being established as the business links between the firms grow stronger. He attributed his prediction to the latest announcement of a joint Terafab facility in Austin, Texas. Two state-of-the-art chip factories would be built at the site: one for Tesla's vehicle and robot AI, the other for SpaceX's space data center initiatives. Terafab, Ives noted, marks the first move toward fully merging operations between the firms.

Anthropic accidentally leaks Claude Code source in npm slip

Anthropic confirmed yesterday that 'human error' led to the leak of much of the source code of its star product Claude Code. Anthropic has accidentally leaked the source code of its Claude Code agent after a misconfigured software package exposed it to the public. It follows a separate incident last week where Fortune said the company had accidentally leaked thousands of files. The leak was spotted on Tuesday by security researcher Chaofan Shou, according to The Register, who found that the official npm package for Claude Code had shipped with a map file referencing an unobfuscated TypeScript source. Chaofan Shou proceeded to announce his find on X, sparking a flurry of activity. That file pointed to a zip archive stored on Anthropic's Cloudflare R2 storage bucket, which anyone could download and decompress. The archive reportedly contained some 1,900 TypeScript files totalling more than 512,000 lines of code, including full libraries of slash commands and built-in tools. Within hours, a copy of the code was uploaded to GitHub, where it was 'forked' more than 41,500 times, according to The Register, effectively ensuring that the exposure could not easily be undone. "Earlier today, a Claude Code release included some internal source code," an Anthropic spokesperson told SiliconRepublic.com. "No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again." The incident comes just days after Fortune reported that Anthropic had accidentally made thousands of files publicly available, including a draft blogpost describing an upcoming model known internally as both "Mythos" and "Capybara" - one that the document said presents cybersecurity risks. 
The Register cited software engineer Gabriel Anhaia, who published a detailed analysis of the exposed code, saying the incident should serve as a cautionary tale for development teams everywhere. "Apparently, a source map file was included in the npm package. Source maps are meant for debugging - they map minified/bundled code back to the original source," Anhaia wrote in his analysis of the Claude Code leak. "Including one in a production npm publish effectively ships your entire codebase in readable form.
"This is a reminder for every engineering team: check your build pipeline. Make sure .map files are excluded from your publish configuration. A single misconfigured .npmignore or files field in package.json can expose everything."
As experts and commentators pored through the now-available source code, the consensus seemed to be that they were impressed with what they saw. "Notice no one said the code is slop," said prominent US tech blogger Robert Scoble in a social media post. "In every painful moment there are always gifts. The gift is that we all know now that Anthropic's code is pretty damn good." However, it is also clear that the leak is a gift to powerful competitors vying with one of Anthropic's most successful products, who have now been given an inside view of what's behind it.
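Anhaia's advice to keep .map files out of a publish can be turned into a small pre-publish check. The sketch below, in Python, walks a staged package directory and reports any source maps it finds. The function name `find_source_maps` and the throwaway directory layout are assumptions for illustration; a real pipeline would point the check at whatever directory actually gets published, and nothing here describes Anthropic's own tooling.

```python
import tempfile
from pathlib import Path


def find_source_maps(package_dir: Path) -> list[Path]:
    """Return every *.map file under package_dir, as sorted paths relative to it."""
    return sorted(
        path.relative_to(package_dir)
        for path in package_dir.rglob("*.map")
        if path.is_file()
    )


# Demonstration on a throwaway directory standing in for the staged package.
with tempfile.TemporaryDirectory() as tmp:
    staged = Path(tmp)
    (staged / "dist").mkdir()
    (staged / "dist" / "cli.js").write_text("console.log('hi');\n")
    (staged / "dist" / "cli.js.map").write_text("{}")
    (staged / "package.json").write_text('{"name": "example"}\n')

    leaked = find_source_maps(staged)
    for path in leaked:
        print(f"refusing to publish: {path}")
```

A check like this complements, and does not replace, an explicit `files` allowlist in package.json or an `.npmignore` entry. Running `npm pack --dry-run` before publishing also lists the files that would go into the tarball.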

Anthropic confirms it leaked 512,000 lines of Claude Code source code -- spilling some of its biggest secrets

* Anthropic employee accidentally leaked Claude Code source via npm map file
* Leak exposed 1,900 TypeScript files with 500K+ lines of code, quickly mirrored on GitHub
* Anthropic confirmed no customer data exposed, calling it a packaging error amid recent vulnerabilities like ShadowPrompt and Cloudy Day
An Anthropic employee accidentally leaked the source code for one of the most popular Artificial Intelligence (AI) assistants out there - Claude Code. Security researcher Chaofan Shou posted on X, saying "Claude Code source code has been leaked via a map file in their npm registry!" The tweet has been viewed more than 30 million times so far, with the numbers rising fast, showing just how popular the tool really is. While CNBC says the leak is partial, The Register said it contained "the popular AI coding tool's entire source code".
Anthropic confirms leak
The internet reacted as the internet usually reacts - fast and remorseless, swiftly backing up the leak into a GitHub repository which has, by now, been forked tens of thousands of times. The GitHub upload said the leak resulted from a reference to unobfuscated TypeScript source code in the map file included in Claude Code's npm package. The reference pointed to a .ZIP file sitting in Anthropic's Cloudflare R2 storage bucket which contained 1,900 TypeScript files with more than 500,000 lines of code, full libraries of slash commands, and built-in tools. Anthropic has since confirmed the news, saying this wasn't the act of a malicious insider or third party, but rather a mishap: "No sensitive customer data or credentials were involved or exposed," an Anthropic spokesperson said in a statement to CNBC. "This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again." These have been an intense couple of weeks for Anthropic.
The company raised quite a few eyebrows with the speed at which it's been shipping out new updates and features, even prompting major discussions on Reddit, where users argued the company's been using, well, its own product. "They're getting high on their own supply," one person said. While releasing new features quickly is commendable, cybersecurity seems to be the flipside of that coin. In the last 10 days alone, we've had multiple stories about Claude being vulnerable to prompt injection and similar attacks. On March 27, 2026, security researchers at Koi Security found a major flaw in Claude Code's Google Chrome extension that enabled zero-click attacks.
Speed at the expense of security?
Dubbed ShadowPrompt, the vulnerability could have allowed malicious actors to exfiltrate sensitive data. A few days prior, on March 19, security researchers at Oasis reported finding three vulnerabilities in Claude which, when used together, form a complete attack chain - from targeted victim delivery to sensitive data exfiltration. The researchers dubbed it Cloudy Day and responsibly disclosed it to Anthropic, which quickly addressed it. Users don't seem to mind that much, though: on the same day ShadowPrompt was discovered, Anthropic was forced to throttle its tools during peak hours to cope with rising demand. "To manage growing demand for Claude we're adjusting our 5 hour session limits for free/Pro/Max subs during peak hours. Your weekly limits remain unchanged", said Thariq Shihipar, an engineer who works on Claude Code, in a post on X.

Corti Ships Symphony for Medical Coding with more than 25% Accuracy Edge Over OpenAI and Anthropic

Symphony for Medical Coding delivers production-ready medical coding automation across the US and Europe, based on learnings from the largest study of its kind.
NEW YORK and COPENHAGEN, Denmark, April 1, 2026 /CNW/ -- Corti, the frontier lab for clinical-grade AI, today released Symphony for Medical Coding, an agentic model that outperforms OpenAI and Anthropic - as well as Amazon, Oracle, and Google - in medical coding by more than 25% in clinical accuracy benchmarks. It is now available via Corti's API to any team building AI-powered healthcare software.
The cost of getting it wrong
Medical coding converts clinical reality into structured data, powering reimbursement, reporting, and public health decisions. Coding errors are expensive, but the human cost goes much further. One example shows the scale of what is missed: in a recent study of Danish patient data, Corti identified three times as many suicide attempts as had been coded. The cases were all there - recorded in clinical notes, flagged in medication records - but coders, working under time pressure, had missed them. When cases go uncounted, health systems can't monitor trends, allocate resources, or design interventions. Policy fails before it starts.
Defined by frontier research
Medical coding is fundamentally a reasoning task, not a prediction problem. It involves interpreting many complexities, real judgment, and justification across thousands of codes. The American coding system alone, ICD-10-CM, has 70,000 diagnosis codes. Even worse, coding is based on guidelines that constantly evolve, making historically data-trained models inadequate. Corti started addressing this by conducting the largest study of its kind (5.8 million patient encounters), leading to Code Like Humans, a multi-agent framework accepted to EMNLP 2025, one of machine learning's top conferences. This framework mirrors professional coders' steps: identifying evidence, reasoning through hierarchies, validating against guidelines, and reconciling ambiguity. Symphony for Medical Coding builds on this foundation to perform work like expert coders, delivering higher quality than other models at a fraction of the cost.
"Most AI systems fall short in medical coding because they treat it as labeling, not reasoning. Correct coding depends on evidence, context, hierarchy, and guideline interpretation. We built Symphony for Medical Coding to follow the same decision process expert coders use, and that is why the performance gap is so meaningful," said Lars Maaløe, PhD, CTO and co-founder of Corti.
"The methodology behind Code Like Humans is the most promising approach to medical coding we've seen. We've been co-developing with Corti because we believe specialized AI infrastructure is how this problem gets solved - and we're excited to see it move into production," added Steve West, Managing Director, Healthliant Ventures and Tanner Health.
Accuracy that can be audited
In medical coding, accuracy requires traceability, defensibility, and ease of review. Symphony for Medical Coding links assigned codes to their clinical evidence and highlights ambiguities, giving teams, compliance leaders, and auditors a clear record of code.
"Medical coding has been treated as a back-office cost center for decades. It isn't - it's the data layer that healthcare runs on. Getting it right changes what health systems can see, decide, and do," said Andreas Cleve, CEO and co-founder of Corti.
Available across the US and Europe, as one system
Coding systems vary widely, and most AI products require local fine-tuning. Symphony for Medical Coding does not. It is the first coding system designed to operate across both US diagnosis coding (ICD-10-CM) and procedure coding (ICD-10-PCS, CPT) and European coding environments without the need for local retraining. ICD-10, maintained by the WHO, is currently available in beta as Corti expands across priority European markets, including the UK, Germany, France, and Denmark. Symphony for Medical Coding is available now through the Corti Console, integrates directly with the Corti Agentic Framework, and supports both A2A and MCP standards. Enterprise and sovereign cloud deployments are available through Corti.
About Corti
Corti is healthcare's frontier lab for clinical-grade AI. Symphony, its flagship clinical-grade AI model, powers clinical and administrative applications for EHR vendors, virtual care platforms, practice management systems, and life sciences organizations worldwide. Corti serves over 100 million patients annually across health systems including the NHS. The company is headquartered in Copenhagen with offices in New York and London. For more information, visit corti.ai.
Media Contact: [email protected] corti.ai/newsroom
View original content to download multimedia: https://www.prnewswire.com/news-releases/corti-ships-symphony-for-medical-coding-with-more-than-25-accuracy-edge-over-openai-and-anthropic-302730387.html

Perplexity launches Secure Intelligence Institute to strengthen AI safety and privacy The Mainstream

In a move focused on building safer AI systems, Perplexity has announced the launch of the Secure Intelligence Institute (SII), a dedicated research hub aimed at improving security and privacy in advanced artificial intelligence. SII will focus on addressing key challenges linked to modern AI systems, including smart agents and models that process multiple types of data. The goal is to develop stronger methods to ensure these systems remain secure and reliable. The institute's research will span areas such as cryptography, privacy, and trustworthy machine learning. It will also collaborate with universities to advance research and innovation in these domains. One of the first initiatives under SII is BrowseSafe. This is an open-source benchmark and detection model designed to identify harmful content and potential threats in AI-powered web environments. The institute will be led by Dr. Ninghui Li, who has extensive expertise in security and privacy. He has been appointed as the first director of SII. With this launch, Perplexity is aiming to build a stronger foundation for secure and private AI systems while addressing growing concerns around safety in increasingly complex technologies.

Anthropic faces scrutiny as Claude Code leak exposes

Amid growing concern over AI security, the Claude Code leak has put Anthropic under intense scrutiny from developers and researchers worldwide. On Tuesday, Anthropic confirmed that it had inadvertently shipped part of the internal source code for its Claude Code AI coding tool. The company described the incident as a "release packaging issue caused by human error, not a security breach," stressing that no external compromise took place. According to independent cybersecurity analysts, the exposure involved roughly 1,900 files and around 512,000 lines of code. Experts also noted that the assistant runs directly inside developer environments, where it can access sensitive information, which heightened concerns about potential misuse.
The situation escalated quickly after a post on X shared a link to the leaked material. By the early morning hours of Tuesday, that post had already surpassed 30 million views, dramatically increasing the visibility of the leaked repository and drawing security specialists to examine the files. Developers and researchers began combing through the leaked codebase to understand how Claude Code is architected and how Anthropic intends to evolve the product. However, some security professionals immediately raised questions about what sophisticated attackers might do with detailed knowledge of the internal systems.
AI cybersecurity firm Straiker warned in a blog post that adversaries could now study how data moves through Claude Code's internal pipeline. The firm cautioned that this visibility might allow someone to design payloads that persist across long sessions, effectively creating a hidden backdoor inside a developer workflow. These warnings have amplified broader industry fears about code leaks. Analysts emphasized that tools operating within developer environments, with deep access to repositories and infrastructure, present an especially attractive target for malicious actors.
This leak was not an isolated setback for Anthropic. Just days earlier, Fortune reported that the company had accidentally made thousands of internal files publicly accessible, a separate data incident that preceded the source code release. Those earlier files reportedly included a draft blog post describing an upcoming AI model known internally as both "Mythos" and "Capybara". The draft noted that the experimental model could introduce notable cybersecurity risks, a point that has become even more sensitive in light of the subsequent source code exposure. In response to both events, Anthropic stated that it is rolling out additional safeguards to prevent similar mistakes. The company also reiterated that no sensitive customer data or credentials were involved in either incident, attempting to reassure enterprise clients and regulators.
Anthropic released Claude Code to the general public in May of last year, positioning it as an AI assistant that helps developers build features, fix bugs, and automate repetitive tasks. The launch marked a significant push by the company into the lucrative market for AI-powered software tooling. The product's commercial traction has been rapid. By February, Anthropic reported that Claude Code had achieved a run-rate revenue of more than $2.5 billion. That figure has also raised the stakes for Anthropic's security posture as more enterprises integrate the assistant into their workflows. Competitive pressure has intensified as rivals respond to that growth. OpenAI, Google, and xAI have all allocated substantial resources to building their own coding assistants, hoping to capture a share of the expanding market and to compete directly with Anthropic's flagship tool.
Founded in 2021 by former OpenAI executives and researchers, Anthropic has built its reputation around its family of Claude AI models and emphasis on safety. The Claude Code leak has now put that safety narrative under pressure, even as the firm insists the root cause was operational rather than adversarial. The company has said it is implementing stricter packaging checks, access controls, and review procedures for releases involving internal repositories. Security experts argue, however, that modern AI platforms require continuous, layered defenses, given their deep integration into developer environments and the growing sophistication of potential attackers. Anthropic's spokesperson stressed that the organization is taking concrete steps to ensure that this type of incident does not recur. The recent leaks underscore how rapidly growing AI companies must balance aggressive product rollouts with rigorous security hygiene to maintain trust.

Claude Code Source Code Leak: Did Anthropic reveal its secret AI models? What it means for developers and users

In a significant development, AI firm Anthropic has accidentally revealed sensitive details of its coding tool, Claude Code. The incident has raised concerns about security, strategy, and intellectual property. On March 31, 2026, version 2.1.88 of the @anthropic-ai/claude-code npm package reportedly included a 59.8 MB JavaScript source map file. This file exposed key internal parts of the platform and allowed public access to a large section of its codebase, estimated at around 512,000 lines of TypeScript. Within hours, thousands of developers had mirrored the codebase on GitHub, dissecting features and memory architecture previously known only to Anthropic engineers.
The leak is notable because Anthropic is known for strong security practices and strict development controls. However, the issue appears to have been caused by a simple packaging mistake, not a cyberattack. An Anthropic spokesperson said that no sensitive customer data or credentials were exposed. The company clarified that the issue was caused by human error during the release process and was not a security breach. It added that steps are being taken to prevent such incidents in the future.
Cybersecurity experts say the incident shows that even top AI companies can make basic operational errors. It has also raised concerns about managing risks as AI systems become more advanced. Analysts believe the leak could impact the company's reputation, especially as it is reportedly preparing for a $380 billion IPO.
Key features of Claude Code exposed in leak
Developers who studied the leaked code found that the system uses a three-layer memory design to keep the AI's answers clear and accurate. This helps prevent problems where the AI gets confused or gives wrong answers during long conversations. One important feature is called "Self-Healing Memory." It uses a simple file, MEMORY.md, which works like a guide and points to different topic files instead of storing everything in one place. This keeps the system fast and organised. It also follows a rule called "Strict Write Discipline," which means only correct and successful updates are saved. Another key feature is KAIROS. It works in the background, even when the user is not active. It helps organise information, combine important details, and keep everything running smoothly. The system also uses small helper programs, called subagents, to handle routine tasks so that the main AI can work without interruption.
Claude Source Code Leaked: What it means for developers
The leaked details give rival developers a rare look into how the system works. The code includes over 2,500 lines of bash validation logic, multi-agent coordination methods, and detailed memory systems. This means competitors can build similar AI tools faster without spending as much time and money on research. The leak also revealed internal model names such as Capybara, Fennec, and Numbat, along with their performance data. For example, Capybara v8 has a false claim rate of around 29-30%, compared to 16.7% in version 4. This kind of information helps other developers understand the strengths and limits of current AI systems, especially in areas like accuracy and decision-making.
How users can use Claude Code safely
Anthropic has advised users to stop using the npm version of Claude Code and switch to the native installer. The native version is safer as it updates automatically and avoids unstable dependencies. Users should uninstall version 2.1.88 right away and, if needed, use a safer version like 2.1.86. The company also recommends checking API usage for any unusual activity and making sure local systems are free from possible security threats like RAT infections. While data stored in the cloud is still safe, local devices may be at higher risk because parts of Claude Code's internal system are now publicly available.

Anthropic blames human employees for its 'AI Agent's mess'; says: Issue caused by ...

Anthropic has confirmed that human error led to the leak of the source code for its AI agent, Claude Code, which wiped trillions from global stock markets. The company described the incident as a release error rather than a security breach. The AI startup revealed that a packaging issue unintentionally exposed part of its internal code. "No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again," an Anthropic spokesperson said.
The leak, which surfaced online via a publicly accessible npm package, reportedly exposed around 2,200 files and roughly 30MB of TypeScript code. The incident quickly gained attention, with a post linking to the code drawing millions of views, and raised concerns about how much insight it could give competitors into Anthropic's development approach. This disclosure comes on the heels of another incident where internal documents, including the company's plans for an upcoming AI model, were discovered in a publicly accessible data cache. The incident has brought the company's internal practices into the spotlight.
The developers who looked into the leaked code found unreleased features, such as an always-on background agent, also known as Kairos, and a companion feature with gamification elements. The code also hinted at features for multiple AI agents and permission approvals. At the same time, the codebase's structure drew mixed reactions, with reports highlighting large, complex files and workarounds for circular dependencies.
Anthropic, founded in 2021 by former OpenAI researchers, has seen rapid growth with its Claude family of AI models. Claude Code, launched in May, is used by developers to write code, fix bugs, and automate workflows and has contributed to rising competition with companies such as OpenAI, Google, and xAI. The company is also said to be exploring a potential IPO later this year, adding pressure as it works to address repeated lapses.