News & Updates

The latest news and updates from companies in the WLTH portfolio.

Is SpaceX buying Globalstar?

* The companies aren't confirming anything, but new buzz suggests SpaceX is the frontrunner to acquire Globalstar
* The deal could strengthen SpaceX's position in satellite-to-phone services and deepen ties with partners like Apple
* It's all speculation at this point - and the AWS-3 auction adds more intrigue

Rumors that SpaceX will buy Globalstar were the buzz at SatShow 2026 last week. "I think everyone's convinced that there will be a sale of Globalstar," Tim Farrar, founder of the satellite research firm TMF Associates, told Fierce. "A lot of people talked about that being Amazon. My best guess is that SpaceX will probably carry the day."

Last year, a report surfaced that Globalstar Chairman Jay Monroe had talked with associates about potentially selling the company for more than $10 billion. "I don't know what the price is going to be," Farrar said, adding that the $10 billion floated last fall could very well be in the ballpark.

Globalstar declined to comment. "Globalstar does not comment on industry rumors and Globalstar has never been able to discuss anything outside of public information on our D2D relationship," a spokesperson told Fierce. Fierce reached out to SpaceX as well and did not immediately hear back.

Globalstar's shares, at around $66.29, were trading up more than 7% today. Its market cap is in the $7.95 billion range.

Starlink, Amazon and Apple too

Although the rumor mill was in high gear last week, no deal has since been announced, which could be for any number of reasons. With players the size of SpaceX at stake - and Apple too, because it uses Globalstar's satellites to provide SOS services - it's easy to see that negotiations could take more time than expected.

Farrar, who wrote about the sale speculation in his blog last week, said he believes Monroe was in talks with Amazon and then took its offer to SpaceX. It's conceivable that SpaceX agreed to up the ante in an effort to further cement Starlink's dominance of the satellite industry. 
Amazon is preparing to compete in the direct-to-device (D2D) and broadband market via Amazon Leo. "I think SpaceX did an awful lot to beat up on Amazon," Farrar said, including by opposing Amazon's request for a launch deadline extension at the Federal Communications Commission (FCC). "They're trying to win as many customers as possible before Amazon gets to market."

As for how all this went down, the thinking goes something like this: Amazon tried to team up with Apple to more directly challenge Starlink in the broadband and D2D space. Apple already has a deal with Globalstar and is invested in the company. SpaceX/Starlink then enters the picture and outbids Amazon. "That could be a pretty valuable outcome for Starlink, regardless of whether or not it's justified purely on the direct-to-device business and whether they need additional spectrum," he said.

SpaceX and AWS-3 auction

The other big scuttlebutt in the satellite/terrestrial world is what SpaceX will do in the AWS-3 auction, which starts on June 2. The AWS-3 auction will include a total of 200 licenses for markets scattered across the U.S. Reports surfaced last week (thanks Mike!) that SpaceX submitted an incomplete application to bid in the auction. The big question is what SpaceX plans to do in that auction, assuming it resubmits a completed application.

"I think leaping to the conclusion that they suddenly want lots of paired AWS-3 spectrum and they're going to spend lots of money to buy a terrestrial footprint - that seems like a big leap of assumptions," Farrar said. Instead, Farrar said he thinks SpaceX is primarily interested in two licenses for Moline, Illinois, and Cincinnati, Ohio, which it needs to pair up with spectrum SpaceX is buying from EchoStar. "They need them to complement what they're already buying. They need to fill in the gaps as much as possible," he said. "It's not a surprise that they are participating. 
I think everyone is getting a little bit carried away and assuming that this means that they want to buy lots of terrestrial spectrum," he said.

Terry Chevalier, managing director at Sunstone Associates, agreed. He said if SpaceX actually goes through with the AWS-3 auction, it's most likely to get the two remaining licenses that it didn't get from the EchoStar transaction. Still, "there is never real clarity on what people want or intend to do until the bidding begins ... and even then you don't really know until it's all over and the dust settles," he added.

FCC's anti-collusion rule

But Chevalier also raised some other issues. For example, the FCC has an anti-collusion rule that disqualifies both bidders from an auction if they have overlapping controlling interests - that threshold is 10% or more. "EchoStar's stake in SpaceX after the transaction closes would likely fall around 3%, per some of the reporting out there, likely keeping them below that bright line," he said.

However, the FCC's communication prohibition is a separate and broader rule that prohibits bidders from talking with one another. "It bars any applicant from sharing bids or bidding strategies with any other applicant in the same auction - regardless of ownership percentage. That creates some interesting questions about how SpaceX and EchoStar firewall that information among officers, directors and others given their ongoing commercial relationship and EchoStar's direct financial stake in SpaceX's success," he noted.

The added wrinkle: The AWS-3 auction exists in the first place because of the controlling interest question around EchoStar's original AWS-3 licenses. The licenses being sold in the upcoming auction are ones that EchoStar's Dish-backed entities Northstar and SNR had to give back to the FCC due to a ruling over their designated entity (DE) status. "So the entity that caused the auction to happen is now a co-bidder alongside the company it just sold $20 billion in spectrum to. 
That makes for some good reading," Chevalier concluded.

Anthropic to sign deal with Australia on AI safety and economic data tracking

SYDNEY, April 1 (Reuters) - Anthropic said on Wednesday it would sign an agreement to share its economic index data with the Australian government to help track artificial intelligence adoption across the economy, and its impact on workers and jobs. Under the agreement, the Claude maker will share findings on emerging AI model capabilities and risks, participate in joint safety evaluations, and collaborate on research with Australian universities. Anthropic said it would also target investments in data centre infrastructure and energy across Australia. "Australia's investment in AI safety makes it a natural partner for responsible AI development," Anthropic CEO Dario Amodei said in Canberra, where he is expected to meet Prime Minister Anthony Albanese on Wednesday. "This memorandum of understanding gives our collaboration a formal foundation." The deal mirrors similar agreements with safety institutes in the United States, Britain and Japan. Australia currently has no specific AI legislation. The centre-left Labor government has said it would rely on existing laws to manage emerging AI risks while introducing voluntary guidelines amid privacy and safety concerns. In its National AI Plan released in December, Labor outlined a roadmap to ramp up AI adoption across the economy, attract data centre investment, and build AI skills to support jobs as the technology becomes more integrated into daily life. Reporting by Renju Jose in Sydney; Editing by Edmund Klamann

Anthropic accidentally exposes Claude Code source code in npm packaging error - SiliconANGLE

Anthropic PBC has accidentally exposed the source code for its Claude Code command-line interface tool through a packaging error that led to the inclusion of sensitive files in a publicly distributed npm release. Claude Code is Anthropic's command-line tool that lets developers interact with its Claude artificial intelligence models directly from the terminal to write, edit and debug code. It's essentially an AI coding agent wrapped in a CLI that is designed to run tasks, manipulate files and automate development workflows without needing a full Integrated Development Environment interface. The exposure occurred due to the inclusion of a source map file in version 2.1.88 of the Claude Code npm package. The leak consisted of more than 500,000 lines of TypeScript code across nearly 2,000 files, with the exposed material including core components of the Claude Code system, such as its agent architecture, tool integrations and execution logic. Anthropic has acknowledged the incident, saying in a statement reported by CNBC that "this was a release packaging issue caused by human error, not a security breach" and that it's "rolling out measures to prevent this from happening again." The problem when source code like this is leaked is that you can't put the proverbial rabbit back into the hat - removal of the original source does not prevent continued distribution once copies have propagated. In this case, the code was quickly mirrored externally, making it difficult to fully contain. There is no indication that user data, prompts or customer information were exposed in the incident, which Anthropic has also confirmed, so the impact of the leak comes down to intellectual property exposure and the potential for deeper analysis of internal system design. Access to the source code can provide insight into how AI agents manage tool usage, permissions and workflows. 
Such visibility can also assist in identifying weaknesses or crafting more targeted exploits against similar systems. The incident also raises competitive considerations, as proprietary implementation details can and will give Anthropic's rivals a clearer understanding of how its coding tools are structured. While the models themselves remain closed, the surrounding orchestration layer represents a significant portion of product differentiation. The news that Anthropic has accidentally leaked Claude Code CLI source code comes after the details of the company's upcoming AI model called Claude Mythos and other documents were recently discovered in a publicly accessible data cache.

Anthropic Partners with Australia for AI Economic Insights | Technology

Anthropic has agreed to collaborate with the Australian government to share data on AI adoption and its effects on the economy and workforce. The partnership involves sharing AI model insights, investing in data infrastructure and energy, and collaborating with local universities. This follows similar deals elsewhere. Anthropic has announced a significant collaboration with the Australian government, centered on sharing its economic index data to track and analyze artificial intelligence (AI) adoption within the nation's economy. This partnership aims to assess AI's impacts on jobs and workers. The agreement outlines that the creator of the AI model Claude will provide insights on emerging AI capabilities and potential risks. Additionally, Anthropic will participate in joint safety evaluations and pursue collaborative AI research with Australian universities. Investment initiatives in data center infrastructure and energy development across Australia are also part of the proposal. In commenting on the collaboration, Anthropic CEO Dario Amodei highlighted Australia's commitment to AI safety as a foundational element for ethical AI progress. During his visit to Canberra, where he will confer with Prime Minister Anthony Albanese, Amodei affirmed the formal nature of this collaboration. This deal aligns with other international agreements in the US, UK, and Japan, as Australia maneuvers AI integration without specific legislative guidance, opting instead for existing laws and voluntary guidelines. Labor's National AI Plan advocates for widespread AI integration, emphasizing data center investment and skill-building across the economy.

AI giant Anthropic backs in Albanese government's data centre plan

Global artificial intelligence behemoth Anthropic has committed to the Albanese government's AI Plan to lure big tech to Australia, saying it will invest in renewable energy to power data centres, while investing $3 million in medical research across several universities. Dario Amodei, the chief executive of Anthropic, which owns the Claude AI agent, met with Prime Minister Anthony Albanese and Industry Minister Tim Ayres in Canberra on Wednesday morning to sign a memorandum of understanding between the $US380 billion ($555 billion) tech giant and the government.

I tried Replit, Lovable, and Claude Code to build the same app -- here's the only one worth using

Regardless of what you do, I'm sure at least one app idea has crossed your mind over the years. Perhaps a random frustration you faced at 2 AM and thought "how is there still not an app for this" and scribbled it into your Notes app. Or you saw someone else's app blow up and quietly muttered "I had that idea months ago." Maybe you have a running list of random app ideas somewhere -- ideas you swore you'd build when you have the time to learn how to actually code.

Well, in 2026, you don't really need to go the traditional route anymore. You don't need to commit hours to learning how to code or pay a handsome amount of money to an app developer to build their version of your vision. Hundreds of AI-powered app builders now exist that claim all you need to do is describe your idea in plain English, and the AI will go ahead and build it for you. Three of the biggest names leading this space right now are Replit, Lovable, and of course, Claude Code.

Now, if you've been wanting to finally bring your app ideas to life, you've likely seen people swearing by each of these tools. So, to see which one actually delivers the best results, I decided to put them to the test by using each to build the exact same app. Here's how it went...

I used the same exact prompt across all three tools
But I kept it intentionally vague

When I'm vibe-coding, I've found that the best way to get results that match my exact vision is having incredibly detailed prompts. I don't necessarily mean longer prompts, but I mean a value-rich prompt that clearly communicates what you want. The more context you give, the less the AI has to guess and the closer the output lands to what's in your head. But for this test, I deliberately went the other way. I kept the prompt a bit vague on purpose. 
If my prompt covered every detail down to the exact colors, the specific libraries, the precise layout of every page, then I'm doing most of the "thinking" part for the tool and any halfway decent AI would produce roughly the same result. I wanted to see how each tool interprets a rough idea I have, and then what it whips up. How functional is the app it creates on the very first go? Do the features work as I envisioned? Is it a solid enough first prototype to genuinely capture what I had in mind?

While a lot of the answers to these questions come down to the exact prompt I'm using, the average user who wants to build an app isn't going to go through all that hassle. They're going to describe their idea the way they'd explain it to a friend. So, that's exactly how I wrote the prompt.

The idea I had was an app where you upload your bank statement as a CSV and the app roasts your spending habits, categorizes your transactions, gives you a letter grade, and generates a brutally funny AI-powered breakdown of how you spend your money! It also lets you track your letter grade over time. So, while the idea was more or less an AI wrapper, it still touches enough real complexity to be a fair test! In my prompt, I mentioned the core features I wanted but I left out things like specific design direction and what tech stack to use.

Claude Code is the only one that passed the real-world test
Isn't that what really matters?

Let's begin with the tool that impressed me the most in this test, and the one I think is genuinely worth using for something like this (even if you're a beginner): Claude Code. All three of the tools handled the sample data I had asked for just fine, which isn't surprising at all. The sample data is quite literally fake data that the tool itself generated, so of course it knows how to parse it! The real test was what happens when you upload a bank statement as a CSV file! 
That's real-world data in a format that the tool isn't accustomed to, and it's what helps determine whether the app actually works or just looks like it does. I exported my own bank statement as a CSV and uploaded it to all three, and Claude Code was the only one that handled it properly. It did get the currency wrong, but that's something I should have specified in the prompt! For Replit and Lovable, I had to go in and follow up with additional prompts just to get them to parse the CSV correctly. On the other hand, Claude's version handled it perfectly on the first go! Claude's version of the roast was also the best out of the three!

The output was also the only version to have a neat graph of daily spending, and had an extremely interactive interface that was nice to look at! That said, I do think the UI looked very generic and you could tell it was vibe-coded. It had that classic look that every vibe-coded app ends up with these days, and I think this is something Replit and Lovable handled better. Claude Code was the tool that took the longest to complete building this app out, but given that the end result was the most functional and the most complete of the three, I'd say the extra time was well worth it.

Replit's output looked the best
But it didn't actually work

Honestly, when Replit's version first loaded, I thought it was going to win. The UI was the best out of the three. For example, it had this Mac-style window frame around the roast section, complete with the little traffic light dots at the top. The roast would appear as if it was being typed out in real-time, like a typewriter effect, which was a really nice touch and made the whole experience feel more dramatic and fun. Ironically enough, despite the roast section being my favorite design element of the app, it was also the most broken part. 
Instead of actually displaying the roast as readable text, it dumped the raw API response straight onto the screen! All it took was one more prompt to fix it, but that's exactly what I was trying to put to the test here! When you're comparing tools based on what they produce from a single prompt, details like this certainly matter. As I mentioned above, the file upload also didn't work with my actual statements on the first try. Again, fixable with follow-up prompts, but that's two core features that needed extra work.

Lovable was the fastest to build
But the output was just okay

Lovable was the first tool to finish building, and the app it produced had a working roast feature. The UI of the tool was definitely clean, but it didn't really stand out. It did sort of give the vibe-coded look I was talking about earlier, and just looked like every other AI-generated app you've seen. The roast itself actually worked, so it's clear that Lovable handled the AI integration better than Replit on the first go. However, just like Replit, it couldn't parse a real CSV file initially.

So essentially, Lovable didn't have Replit's design edge or Claude Code's functionality and sat somewhere between the two. It's definitely the tool I'd turn to when I need a quick working prototype given how fast it was to finish, but if I'm building something I actually want to use or show to people, I'd rather wait the extra time and go with either Claude or Replit. 
To be fair, Replit and Lovable are free to try

Now, while you can try Claude out completely free, Claude Code (which I used for this test) requires a paid subscription. So if you're just exploring and want to test the waters without spending anything, Replit and Lovable both have free tiers that'll let you build something like this without paying anything. But if you've got an idea you're actually serious about, Claude Code is the one I'd recommend!

Polymarket Promo Code COVERS: Get a $20 Bonus for Knicks vs Rockets Prediction Markets

The Polymarket promo code COVERS unlocks a $20 bonus for new users looking to trade on Tuesday's Knicks vs Rockets matchup. This welcome offer from one of the best prediction market apps requires entering the code COVERS during registration and making a $20 deposit. As of Tuesday, March 31, this promotion gives bettors access to the Houston Astros' crucial homestand opener against the New York Yankees. The Polymarket promo code COVERS provides a straightforward $20 welcome bonus that requires a matching $20 deposit to activate. Unlike traditional sportsbook offers, this prediction market bonus focuses on trading outcomes rather than standard betting lines. Users can apply this bonus toward markets related to the Knicks vs. Rockets game, such as player performance props or team statistics. Key terms include availability in all states except Nevada, mandatory ID verification with photo documentation, and the requirement to enter the code COVERS during registration. For example, if you trade $20 on Tari Eason recording over 12 points and win, your return includes both your stake and profit. If the trade loses, you only lose your initial stake, not the bonus funds. The promotion expires based on the timing of account creation, making Tuesday's game an ideal opportunity to maximize the offer's value. New users should compare this offer with other best prediction market promos to ensure they're getting optimal value for their trading activity. Claiming the Polymarket welcome offer requires completing several verification steps before you can access the bonus funds. Follow these steps to secure your $20 bonus for the Knicks vs. Rockets game:

Taylor says MSCS audit release will show 'colossal waste' in school district

MEMPHIS, Tenn. -- Lawmakers and the community are awaiting Wednesday's release of the forensic audit of Memphis-Shelby County Schools. The audit release could determine if Republican lawmakers' push for a state takeover of Tennessee's largest school district is warranted. Sen. Brent Taylor (R-Eads), one of the sponsors of that state takeover bill, says he's had meetings with auditors who describe the findings as "unprecedented," and said the community will be "shocked" by what they hear. "That's what you'll see in this audit tomorrow, is the colossal mismanagement, the colossal waste of taxpayer dollars," Taylor said Tuesday ahead of the audit's release. "What the audit will show is that the clown cartel has been in charge of the Memphis-Shelby County School district." Taylor says the MSCS forensic audit will reveal issues within the school district, specifically its usage of state dollars. "It was reported that the school district bought a building for $6 million. They spent $30 million renovating the building, then sold it to a former school board member for $5 million," Taylor said. "They built the Whitehaven STEM center, down in Whitehaven, they spent about $9 million of public and private money, they had a huge ribbon cutting, and then, it's sitting empty. They don't have any furniture, it's not wired for internet, computers, anything, just a total waste of money." Additionally, Taylor says he's been displeased with the series of leadership changes within the school district, specifically when it comes to superintendents. Ahead of the firing of former MSCS superintendent, Dr. Marie Feagins, Taylor and Rep. Mark White both warned that a state takeover would move forward, if the board proceeded with the termination. Additionally, Taylor says an oversight board is needed to improve the academic success and performance of MSCS students. 
The audit findings are expected to be released Wednesday morning at 9, and from there, we could see what the next steps are, as it pertains to the bills that call for a state takeover.

On LawNext: Mary Technology Wants to Solve Litigation's 'Fact Chaos' Problem

E-discovery platforms have gotten great at narrowing millions of documents down to manageable sets. But what happens next -- the grueling work of extracting facts, organizing them, and building a reliable case narrative -- has remained largely manual. In this episode of LawNext, host Bob Ambrogi talks with Daniel Lord-Doyle, cofounder and CEO of Mary Technology, about the Australian startup's bet that "fact management" is the missing layer in litigation technology. Mary's approach is distinct from the large AI platforms that store documents as embeddings in vector databases. Instead, the company extracts every individual fact, enriches it with metadata, and links it directly back to its source -- creating what Lord-Doyle calls a verifiable chain from work product to evidence. He makes a compelling case that in litigation, where fault tolerance is low and the stakes are high, the nuance lost by compression-based AI systems is exactly what matters most. The company just closed a $7 million (Australian) seed round led by OIF Ventures and is expanding into the U.S. with a new San Francisco office and a new self-serve platform that lets smaller firms try it without a sales process. Lord-Doyle also talks about the concept of "productive friction" -- why Mary deliberately won't let lawyers skip the verification step -- and what he's learned about bringing an Australian legal tech product to the American market.

SpaceX Falcon 9 successfully launches Transporter-16 mission from Vandenberg

On March 30, SpaceX conducted a successful launch of its Transporter-16 mission from Vandenberg Space Force Base, marking the 21st launch from Vandenberg SFB in 2026 and demonstrating again how dominant the company is in the commercial space industry. According to Vandenberg Space Force Base, Falcon 9 launched from Space Launch Complex 4 East (SLC-4E), and multiple microsatellites and cubesats were deployed into Sun-Synchronous Orbit on this dedicated rideshare mission. These satellites represent many international startups, research institutions, and government agencies that are using this mission as an affordable way to send their products into orbit.

The Falcon 9 first-stage booster performed a successful RTLS (Return-to-Launch-Site) landing at Landing Zone 4 shortly after completing its primary mission. This marks another successful recovery of a reusable booster by SpaceX.

According to SpaceX, the Transporter-16 mission was launched aboard a Falcon 9 rocket, delivering 119 payloads (large and small) into Sun-Synchronous orbit (SSO). Based on official rideshare launch integration information from SpaceX, the commercial and institutional payloads deployed came from over 20 countries and included a wide variety of CubeSats and microsats of various standardised form factors.

The rideshare model also creates an opportunity for smaller research institutions to share costs associated with launching on a single rocket. This shared rocket cost significantly decreases the barriers experienced by various research institutions when attempting to launch to space.

One of the government payloads of great importance was for the NGA's MagQuest project. The National Geospatial-Intelligence Agency (NGA) developed this project as a platform that utilises three different CubeSats to test the magnetic fields of the Earth. 
The information measured by these CubeSats is used for maintaining the World Magnetic Model (WMM), the system that provides accurate global navigation for GPS-enabled devices and commercial and ocean-going vessels. The mission is the first to deploy the Space-based PhOtonics for Quantum Communication (SPOQC) satellite. The SPOQC satellite is a '12U CubeSat' that will serve as a demonstrator for an 'unhackable' method of transmitting quantum-encrypted photons from space via orbiting satellites to ground stations. This is the first step in developing a robust, worldwide quantum secure internet capable of protecting against future cyber threats.

According to the Vandenberg Space Force Base, the Falcon 9 launched from Space Launch Complex 4 East (SLC-4E). The booster of the Falcon 9 launch vehicle (1st stage) successfully executed its Return to Launch Site (RTLS) landing at Landing Zone 4 (LZ-4) after separation from the 2nd stage of the launch vehicle. The RTLS landing of the 1st stage booster is unique to Vandenberg SFB missions and typically results in an audible sonic boom along the entire coastline of Central California from deceleration through the atmosphere of the booster.

From Insurance Mogul to Casino Cameras: John Cerasani's Unconventional Rise

Not everyone's path to Las Vegas notoriety starts in a corporate boardroom, but John Cerasani's did. Before the social media fame, before the blackjack tables, and before the millions of monthly views online, John Cerasani was building an insurance brokerage from the ground up after making a bold decision at 27 years old: he quit his stable corporate job and bet on himself. A decade later, that decision paid off when he sold his firm, Northwest Comprehensive, into private equity; a move that would later multiply significantly when the company became part of a massive acquisition by insurance giant Brown & Brown in a deal worth nearly $10 billion.

Starlink satellite breaks apart into "tens of objects"; SpaceX confirms "anomaly"

SpaceX's Starlink division confirmed yesterday that it lost contact with a satellite on Sunday and is trying to locate space debris that might have been produced by... whatever happened there. Starlink said there appeared to be "no new risk" to other space operations and did not use the word "explosion." But it seems that something caused a Starlink broadband satellite to break apart into at least tens of pieces.

LeoLabs, which operates a radar network that can track objects in low Earth orbit, said in an X post that it "detected a fragment creation event involving SpaceX Starlink 34343," one of the 10,000 or so Starlink satellites in orbit. "LeoLabs Global Radar Network immediately detected tens of objects in the vicinity of the satellite after the event, with a first pass over our radar site in the Azores, Portugal," LeoLabs said. "Additional fragments may have been produced -- analysis is ongoing." LeoLabs said the breakup was "likely caused by an internal energetic source rather than a collision with space debris or another object." Because of "the low altitude of the event, fragments from this anomaly will likely de-orbit within a few weeks," it said.

Two anomalies in orbit

Starlink said in an X post yesterday that "Starlink satellite 34343 experienced an anomaly on-orbit, resulting in loss of communications with the satellite at ~560 km above Earth." Starlink said its "analysis shows the event poses no new risk" to the International Space Station, its crew, or NASA's Artemis II mission that could launch as soon as Wednesday. "We will continue to monitor the satellite along with any trackable debris and coordinate with NASA and the US Space Force," Starlink said. "The event also posed no new risk to this morning's Transporter-16 mission, which was designed to avoid Starlink with payload deploys well above or well below the constellation. 
The SpaceX and Starlink teams are actively working to determine root cause and will rapidly implement any necessary corrective actions." LeoLabs said yesterday that the new event is similar to one from December 17, 2025, which also produced "tens of objects in the vicinity of the satellite" and appeared to be "caused by an internal energetic source" rather than a crash with another object. LeoLabs said it wants more information on the anomalies. "These events illustrate the need for rapid characterization of anomalous events to enable clarity of the operating environment," it said. Starlink provided a few details shortly after the December 2025 incident, saying on December 18 that an "anomaly led to venting of the propulsion tank, a rapid decay in semi-major axis by about 4 km, and the release of a small number of trackable low relative velocity objects." Starlink added that the satellite was "largely intact" but "tumbling," and would reenter the Earth's atmosphere and "fully demise" within weeks. In December, Starlink seemed confident that it could prevent future anomalies. "Our engineers are rapidly working to [identify the] root cause and mitigate the source of the anomaly and are already in the process of deploying software to our vehicles that increases protections against this type of event," Starlink said in the December 18 post. We asked SpaceX today whether it has determined the cause of the December anomaly or the one on Sunday, and will update this article if we get a response. Starlink reported near-crash after Chinese launch Starlink also had a near-crash in December, in a different incident about a week before the "tumbling" satellite. Starlink Senior VP Michael Nicolls wrote on December 12 that a Chinese company had launched nine satellites without coordinating with other space users. Lack of coordination increases the risk of collisions, he said. 
"As far as we know, no coordination or deconfliction with existing satellites operating in space was performed, resulting in a 200 meter close approach between one of the deployed satellites and STARLINK-6079 (56120) at 560 km altitude," Nicolls wrote at the time, referring to the Chinese launch. "Most of the risk of operating in space comes from the lack of coordination between satellite operators -- this needs to change." Coordination can only become more important if SpaceX goes through with its stated plan of launching a million satellites to create an orbital data center. Under normal circumstances, Starlink satellites reaching their end-of-life date follow "a targeted reentry approach to deorbit satellites over the open ocean, away from populated islands and heavily trafficked airline and maritime routes," Starlink says in a document on "satellite demisability." But satellites that fall to Earth unexpectedly should pose no risk to people on the ground because they are designed to "demise with extremely low impact energy," according to Starlink. "A critical aspect of sustainable satellite design is demisability, which ensures that satellites fully break up and burn up during atmospheric reentry," Starlink says in the document. "Any fragments that do not completely demise should have negligible impact energy."

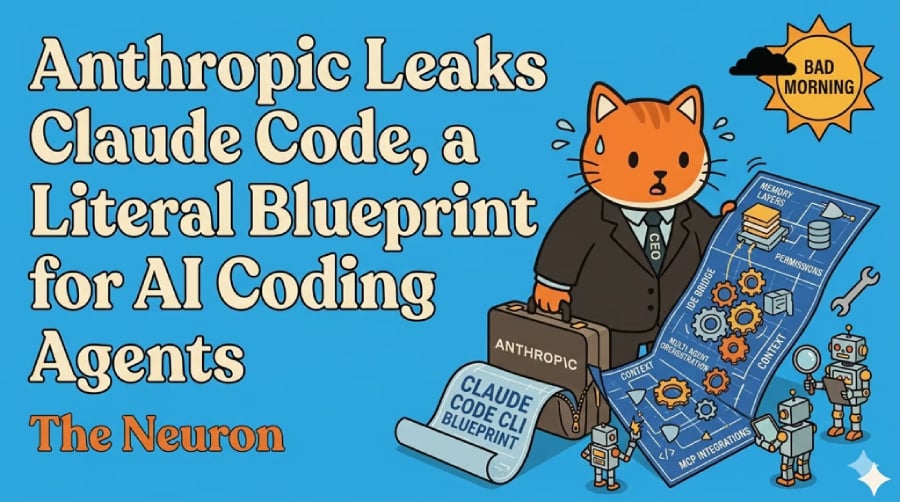

Anthropic Leaks Claude Code, a Literal Blueprint for AI Coding Agents

For all the talk about frontier AI, it's funny how often the really big reveals still come from the software equivalent of leaving your keys in the door. On March 31, 2026, developers noticed that a published npm package for Anthropic's Claude Code appeared to include a source map pointing to a downloadable archive of the tool's unobfuscated TypeScript source. The discovery was first amplified by Chaofan Shou on X, then quickly mirrored across GitHub, Reddit, and the broader AI-dev internet. Within hours, what had been a closed commercial coding agent was being picked apart in public like a new iPhone on teardown day. The obvious story is that Anthropic had a bad morning. The more interesting story is what the leak appears to reveal: Claude Code is not just "Claude, but in a terminal." It looks much closer to an operating system for software work, with permissions, memory layers, background tasks, IDE bridges, MCP plumbing, and multi-agent orchestration all stacked around the model. That matters because the AI coding race is no longer just about who has the smartest model. It's about who has the best harness. Not a chatbot wrapper According to the public mirror at nirholas/claude-code, the leaked Claude Code CLI spans roughly 1,900 files and more than 512,000 lines of code in strict TypeScript, built with Bun, React, and Ink for the terminal UI. The repo's architecture docs describe a sprawling system: a huge QueryEngine, a centralized tool registry, dozens of slash commands, persistent memory, IDE bridge support, MCP integrations, remote sessions, plugins, skills, and a task layer for background and parallel work. In other words: not a chatbot wrapper. A product stack. That's the real leak. We've spent the last year watching coding agents get pitched as if the magic were all in the model. 
Make the model better, give it a shell, sprinkle in a code editor, and voilà: software engineer. But the Claude Code mirror suggests the hard part is everything around the model. Tool permissions. Context loading. State management. Session recovery. Memory hygiene. Background jobs. Team coordination. The stuff that sounds boring right up until your agent nukes a repo, loses the thread, or hallucinates its way into a refactor from hell. The memory story is the real story And if one theme keeps surfacing from developers analyzing the leaked code, it's memory. One of the sharpest breakdowns came from Himanshu on X, who argued that Claude Code's memory system appears designed less like a giant notebook and more like a constrained retrieval architecture. The key idea is deceptively simple: memory is treated as an index, not storage. A lightweight MEMORY.md or CLAUDE.md-style layer stays resident, but it mostly points to knowledge rather than trying to contain it. Topic files are fetched on demand. Full transcripts aren't hauled back into context wholesale. If something is derivable from the codebase, it often shouldn't be stored at all. That's a subtle but important design choice. Most people imagine "agent memory" as a bigger backpack. Claude Code's memory, at least from the public analysis, looks more like a filing system with a strict librarian. The rest of the pattern is even more revealing. The memory system described by analysts appears to be bandwidth-aware, skeptical, and aggressively self-editing. It doesn't just append more notes forever. It rewrites. It deduplicates. It prunes contradictions. It treats stale memory as a liability, not an asset. And crucially, it seems to separate memory consolidation from the main agent context, which is exactly the kind of detail you only build after learning the hard way that autonomous systems can poison themselves with their own residue. That's the sort of thing competitors care about a lot more than Twitter does. 
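
To make the "index, not storage" idea concrete, here is a minimal sketch in Python. It is purely illustrative and is not taken from the leaked source: the class name `MemoryIndex`, its methods, and the in-memory dictionaries standing in for topic files are all assumptions made for this example. It shows only the pattern analysts describe: a small resident index of pointers, topic content fetched on demand, nothing stored that the codebase can re-derive, and a consolidation pass that rewrites instead of appending.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryIndex:
    """Hypothetical index-style memory: the resident part holds pointers, not content."""

    # topic -> one-line pointer kept in context (loosely analogous to a MEMORY.md entry)
    pointers: dict[str, str] = field(default_factory=dict)
    # topic -> full notes; a stand-in for topic files on disk (assumption)
    topic_files: dict[str, list[str]] = field(default_factory=dict)

    def remember(self, topic: str, note: str, derivable_from_code: bool = False) -> None:
        # Skip anything that can be re-derived from the codebase itself.
        if derivable_from_code:
            return
        self.topic_files.setdefault(topic, []).append(note)
        self.pointers[topic] = f"see topic file '{topic}'"

    def resident_context(self) -> str:
        # Only the small index is loaded by default, never the notes themselves.
        return "\n".join(f"- {t}: {p}" for t, p in sorted(self.pointers.items()))

    def fetch(self, topic: str) -> list[str]:
        # Topic content is pulled in on demand.
        return list(self.topic_files.get(topic, []))

    def consolidate(self, stale: set[str]) -> None:
        # Rewrite rather than append: drop duplicates and notes flagged as stale,
        # and remove the pointer for any topic left with no notes.
        for topic in list(self.topic_files):
            seen: set[str] = set()
            kept: list[str] = []
            for note in self.topic_files[topic]:
                if note in stale or note in seen:
                    continue
                seen.add(note)
                kept.append(note)
            if kept:
                self.topic_files[topic] = kept
            else:
                del self.topic_files[topic]
                self.pointers.pop(topic, None)
```

In this sketch, `consolidate` is a separate call and not part of `remember`, loosely mirroring the reported separation between memory consolidation and the main agent context. How the real system decides what counts as stale is not something the public analysis makes clear, so here the caller simply passes in the stale notes.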
Why this matters to everyone building agents Because this leak, if the mirror is broadly faithful, didn't just reveal some internal implementation trivia. It exposed how one serious AI coding product appears to be solving the central problem of agent reliability: how do you let an AI operate over long stretches of messy, changing work without drowning in context entropy? The answer seems to be: don't trust memory too much, don't store what you can re-derive, and never let the agent's internal scrapbook become more authoritative than the actual code. That's a much bigger deal than "Anthropic leaked code." The security irony It also comes at an awkward moment for Anthropic, because Claude Code has been marketed with a strong security-and-control story. Anthropic's own Claude Code docs emphasize permission prompts, read-only defaults, and sandboxing. Security researchers at Check Point had already disclosed serious Claude Code flaws in February 2026 involving malicious repo configuration and consent bypass. So when a packaging mistake appears to expose the internals of the product a month later, the irony is hard to miss: the company building secure autonomous coding agents may have tripped over one of the oldest problems in software shipping. Open by design, open by accident At the same time, the leak arrives in a market that is already moving toward a split between open-by-design and closed-until-it-breaks. That's where OpenAI's Codex changes the framing. OpenAI has been explicit for months that parts of its coding-agent stack are open source. In May 2025, OpenAI described Codex CLI as a "lightweight open-source coding agent" in its official launch post. Its openai/codex repo is public and Apache-2.0 licensed. By Oct. 6, 2025, OpenAI was saying GPT-5-Codex had been trained specifically for "the open-source agent implementation that powers the Codex CLI," and by Feb. 
2, 2026, it was still describing the Codex app as built on the same open-source sandboxing foundation. That doesn't make the Claude Code leak less significant. It makes it more legible. OpenAI is selectively open-sourcing parts of its harness on purpose. Anthropic appears to have disclosed comparable product architecture by accident. The difference isn't just PR. It tells you what each company thinks the moat is. If you open-source the harness, you're betting that the advantage lies in the model, product velocity, ecosystem, and distribution. If you keep the harness closed, you're implying the orchestration layer itself is part of the crown jewels. Claude Code's leak matters because it suggests Anthropic believed exactly that, and then had that layer dragged into daylight anyway. When the internet gets a teardown There's a second-order effect here, too: leaks like this don't just inform competitors. They educate the entire market. The public mirror didn't stay a dumb archive for long. It quickly turned into an explorable documentation project, complete with architecture guides, subsystem notes, and even an MCP server for navigating the leaked source. That's a weirdly perfect metaphor for the current AI era. The moment the black box cracks open, the community doesn't just stare at the guts. It productizes the teardown. The bigger lesson So yes, Anthropic leaked Claude Code. But the real significance is not that developers got to rubberneck. It's that we got a clearer picture of what a modern AI coding agent actually is: not a model with a shell, but a carefully engineered stack of permissions, retrieval, memory discipline, task management, UI design, and orchestration logic. The frontier is moving away from raw intelligence demos and toward systems that can keep their footing over long-running work. Or put differently: the future of coding agents may depend less on how much they can remember than on how well they forget. 
And if that turns out to be the enduring lesson of the Claude Code leak, Anthropic may have accidentally given the entire industry a free architecture review. Editor's note: This content originally ran in the newsletter of our sister publication, The Neuron. To read more from The Neuron, sign up for its newsletter here.

OpenAI Releases Codex Plugin That Runs Inside Anthropic's Claude Code

OpenAI published a Codex plugin on March 30 that installs directly inside Anthropic's Claude Code, letting developers run code reviews and delegate tasks to Codex without leaving their existing workflow. The open-source plugin, released under an Apache 2.0 license, is the first official OpenAI integration designed to run inside a rival's coding environment. The plugin provides six slash commands. One runs a standard read-only Codex code review. A second adds a steerable challenge mode that questions implementation decisions, tradeoffs, and failure modes. A third hands work off to Codex entirely, operating as a subagent that can investigate bugs, attempt fixes, or take a second pass at a problem. Three additional commands manage background jobs. Installation requires a ChatGPT subscription (including the free tier) or an OpenAI API key, plus Node.js 18.18 or later. Codex usage through the plugin counts against existing Codex usage limits. Rather than bundling a separate runtime, the plugin delegates through the local Codex CLI and Codex app server already installed on a developer's machine. It reuses the same authentication, configuration, environment variables, and MCP server setup that Codex uses directly. Both project-level and user-level settings apply. The plugin also includes an optional review gate feature. When enabled, Codex automatically reviews Claude's output before it finalizes. If the review finds issues, it blocks completion so Claude can address them first. OpenAI's documentation warns this feature can create long-running loops and drain usage limits quickly. The release comes days after OpenAI launched a broader plugin marketplace for Codex, which added integrations with Slack, Notion, Figma, Gmail, and Google Drive. That system lets developers package skills, app integrations, and MCP server configurations into installable bundles distributed across teams. 
The Codex plugin directory now lists more than 20 available plugins across the app, CLI, and VS Code extension. OpenAI building an official plugin for a competitor's platform marks a notable shift. The company has been consolidating its developer tools into a unified desktop experience, but this release extends Codex's reach into the workflow of developers who have chosen Anthropic's tooling instead. The move reflects a practical reality in the AI coding tool market: developers often use multiple assistants, and locking them into a single ecosystem has proven difficult. By making Codex available inside Claude Code, OpenAI gains visibility with developers who might not otherwise interact with its coding tools, while those developers get access to a second model's perspective on their code. The timing also coincides with Claude's surging popularity among paying users. Claude Code has become a significant distribution channel, and OpenAI appears to be meeting those developers where they already work rather than asking them to switch. The growing competition among AI coding tools has pushed companies toward interoperability rather than walled gardens. Whether other AI tool makers follow OpenAI's lead and build plugins for rival platforms remains an open question - but the precedent is now set.

Rachel Reeves to make £20m a day in tax from Iran war chaos

Rachel Reeves is in line for a multibillion-pound tax windfall as the war in the Middle East drives up energy prices. A new analysis has suggested that the Government is raising about £20million a day in extra revenue through levies and taxes linked to the price of oil and gas. The additional revenue also includes billions of pounds levied from North Sea oil and gas profits, power generators and VAT on petrol sales. If fuel prices were to remain at their elevated level for 12 months, the Treasury would bring in an extra £8billion a year, compared with the most recent forecasts, The Times revealed. Now, Starmer's Government is being urged by lobbying groups to use the cash to protect households and drivers from the spiralling cost of energy as war rages on between Donald Trump and Iran.

Transportation Goon Mocked For Trump Airport Suck-Up Amid Air Travel Chaos

Sean Duffy is focused on renaming a Florida airport code after his boss. Transportation Secretary Sean Duffy is being brutally mocked for prioritizing sucking up to President Donald Trump over addressing air travel chaos that includes a crash at a major U.S. airport. Palm Beach International Airport has officially been greenlit to be renamed the "President Donald J. Trump International Airport" after Florida Gov. Ron DeSantis quietly signed off on the legislation on Monday. Duffy posted, "The FAA is working on changing PBI's airport code RIGHT NOW... the name change to Donald J. Trump International Airport already official!" "Stay tuned," he added, along with the eyeballs emoji. Duffy was immediately called out for focusing more on the vain name change than on a fatal crash that occurred at New York's LaGuardia Airport last week. A collision with a fire truck left two Air Canada pilots dead after an understaffed control tower appeared to grant clearances that put a fire truck in the path of the landing regional jet. "Because that's the most important thing for the FAA to be focused on," one Florida-based user on X said of Duffy's airport post. "Not planes hitting fire trucks or helicopters." "You just had a plane fly into an airport crash truck, and this is what you are focused on," wrote another user. "Do you take this job serious or is all just about pandering to trump. FYI, I will never fly into that airport ever." A third posted "great!" and asked sarcastically, "How does this address the national air traffic controller shortage?" A fourth chimed in, "How about you address the whole crashing & ATC issues. You know, your job." The main road that leads from Palm Beach International Airport to the president's Mar-a-Lago club was already renamed Donald J. Trump Boulevard earlier this year. 
Mar-a-Lago is just five miles east of the airport, which boasts international flights to Canada and the Bahamas, but is significantly smaller than the region's major airports in Miami and Fort Lauderdale. Save for John F. Kennedy International Airport (JFK), other airports named after presidents have not had their codes changed. Washington, D.C.'s Ronald Reagan National Airport kept its code of DCA, Gerald R. Ford International Airport in Grand Rapids, Michigan, remains GRR, and George Bush Intercontinental in Houston is still known as IAH. The body that would have to officially rename the Palm Beach airport's code is the International Air Transport Association. The Canada-based organization has managed and recognized airport codes worldwide for decades and has given no indication whether it will approve a code change for the president or Duffy. The state legislation that changed the airport's name did not require its three-letter code to be altered. However, Rep. Brian Mast, a Republican who represents Trump in Congress, has put forward federal legislation in the House to change the airport code to DJT. If the IATA were to permit a change, the three-letter code DJT -- the president's initials -- is not in use elsewhere. Reached for comment, the FAA referred the Daily Beast to Duffy's post but did not say what specific efforts it is taking to have the airport's code changed.

Crypto Startup Uses Polymarket to Bet on Its Own Fundraise, Blindsiding Backers - Decrypt

The wagers were placed around the time that Polymarket updated its rules to prohibit insider trading, including by those who can influence markets' outcomes. P2P.me was established to push boundaries with stablecoins, but the startup has determined that wagering on itself via Polymarket may have been a bridge too far. On Saturday, the firm backed by Coinbase Ventures and Multicoin Capital apologized for speculating on its latest fundraising round using the prediction market, describing the move in a post on X as an inappropriate attempt at conveying conviction to the public. In total, the company that bills itself as a non-custodial service for converting between stablecoins and cash signaled that it notched less than $15,000 in profits on the prediction market move. Still, it recognized how a small payday could carry outsized consequences. "It created confusion and hurt trust," P2P.me said. "We should have let the work, the product, and the mission speak for themselves. That was our mistake." As prediction markets have exploded in popularity, so too have concerns that the platforms can be abused by insiders who have access to confidential information. Recent enforcement actions and arrests have focused on the behavior of individuals, but P2P.me's mea culpa signals that questionable choices can also arise at the company level. P2P.me's wagers centered on MetaDAO, a Solana-based fundraising and governance platform. Some of the company's bets stood to win if $140 million in funding was committed to P2P.me through MetaDAO, but the ones that hit hinged on a $6 million milestone. In a post on X, Prohp3t, a pseudonymous co-founder of MetaDAO, said the platform would've pushed P2P.me to steer clear of Polymarket had it known what was coming. They didn't support the behavior, but argued that it resembled "a guerrilla marketing stunt gone too far." 
In the name of investor protection, Prohp3t said that MetaDAO would facilitate refunds for investors who want out before P2P.me's public fundraise concludes on Tuesday. A spokesperson told Decrypt that $20,000 worth of refunds out of $6.7 million committed had been requested. For P2P.me's biggest backers, the conduct also came as a surprise. Some were unaware that the India-based stablecoin firm was betting on its own fundraise, two people familiar with the matter told Decrypt. Before it began soliciting funds on MetaDAO, P2P.me raised $2 million in a seed funding round led by Coinbase Ventures and Multicoin Capital. A Coinbase Ventures spokesperson told Decrypt that the firm hasn't allocated beyond the initial fundraise. At the time that P2P.me placed its bets on Polymarket, the firm said on X that it had only received a $3 million "oral commitment" from Multicoin, which wasn't binding. On top of that, the wagers were made 10 days before the public fundraising campaign went live, the company added. P2P.me said that it named its Polymarket account "P2P Team" for transparency's sake. In total, the account has made 27 predictions. Its biggest win so far, $8,173, came in January. The firm had wagered that another MetaDAO project wouldn't receive $100 million in commitments. A couple days before MetaDAO's fundraise went live on March 25, Polymarket said that it had updated its rules to prohibit insider trading. The platform made clear that it disavows trading on stolen information and illegal tips, as well as by individuals who "hold a position of authority or influence sufficient to affect the outcome of the underlying event." Decrypt has reached out to Multicoin and Polymarket for comment. A P2P.me spokesperson referred Decrypt to the firm's previous posts on X, including one that had gained more than 620,000 views. The firm said it didn't think it was "trading on a done deal," but would still implement a company policy on prediction market trading moving forward.

This Cult Book Mixed Race, Weed and American Chaos Long Before the Culture Caught Up. Now It's Back

Javier Hasse is a seasoned reporter and author with over a decade of experience focusing on cannabis, hemp, CBD and psychedelics. He currently serves as Editor-in-Chief... First published by Random House in 2003, Ghetto Celebrity is not a standard memoir and never really tried to be. Donnell Alexander traces the book back to Tupac Shakur's death, an LA Weekly story about his absent father, and a writing process shaped by weed, pop culture, race, Midwestern Black life and the kind of voice that either grabs you immediately or sends you running. More than two decades later, the book returns. Donnell Alexander did not arrive at Ghetto Celebrity the normal way, and that is part of the point. The book, first published in 2003, began with Tupac Shakur's death. In the new edition's introduction, Alexander traces the memoir back to an LA Weekly assignment on Tupac, then to a line from "Papa'z Song," then to a deeper reckoning with the father he had spent years keeping at a distance. That eventually became "The Delbert in Me," a reported piece that pushed him toward a full memoir, one that would move through Dave Eggers' literary orbit before landing at Random House. It was a strange path to a stranger book, and that strangeness still feels like part of its appeal. Because Ghetto Celebrity: Searching for My Father in Me was never built to behave itself. It is a memoir, yes, but not the polite, sanded-down version of memoir that tends to travel best through publishing. Alexander frames it instead as something more unruly: a story about fathers and sons, Black identity, small-town Ohio, style, dysfunction, media ambition and self-invention. It is also, unmistakably, a cannabis book, though not in the obvious way. 
In the introduction to the new edition, Alexander says cannabis consumption in the memoir is "persistent," a bottom-line truth rather than some decorative cultural accessory. That distinction matters. This is not a novelty weed memoir. It is a literary memoir that happens to be soaked in the same smoke, swagger and altered consciousness as the life that produced it. Maybe that helps explain why the book has lingered for years in a semi-underground way, more beloved by certain readers than fully absorbed into the larger culture. Alexander himself seems to understand that. In the new introduction, he calls the book odd, mentions its eight-page graphic interlude, and makes clear that even its original "Warning" was designed as a kind of litmus test, one that may have pushed some readers away as much as it pulled the right ones closer. In other words, this was never the sort of book engineered for broad comfort. It was made to hit the people who got it. That is part of why its return now feels interesting. Earlier this month, Alexander celebrated the paperback relaunch with a gathering in chef Wendy Zeng's garden in Northeast Los Angeles, and the event looked a lot more like the kind of scene this book should have had all along than the kind of stiff, timid literary rollout it might have gotten in another era. There was mezcal. There were infused elements. There was a tribute to the late novelist and educator Jervey Tervalon, one of the earliest readers to recognize what Alexander was doing. There were writers, friends, old comrades, and the sort of cultural overlap L.A. still occasionally gets right when nobody is trying too hard to turn it into "content." Which is to say: it did not feel like a bookstore signing. It felt like a resurrection. That matters because Ghetto Celebrity belongs to a world that publishing does not always know what to do with. 
It is too literary to be dismissed as pure counterculture ephemera, too raw and weed-soaked to fit neatly into prestige memoir conventions, and too interested in race, style and contradiction to flatten itself into some easy redemption arc. Alexander's gift is that he can write from the middle of all that mess without cleaning it up for the room. The voice is brash, funny, confrontational, and often genuinely electric. You do not really read a book like this for tidy lessons. You read it for voltage. And for the life inside it. The opening of the memoir makes that clear immediately. Before the book even settles into its larger sweep, Alexander is already writing with a confidence that feels less like memoir-as-testimony and more like memoir-as-performance, memoir-as-music, memoir-as a writer trying to pin a whole American frequency to the page. Elsewhere, he writes that he thought of the book as an arrangement of lines, not just a narrative. That feels right. Ghetto Celebrity is interested in sentences as much as scenes, rhythm as much as chronology, tone as much as confession. That sort of approach may have made the book harder to market in 2003. It also may be why it has held onto a cult-life long after first publication. Some books do not really disappear. They wait for the world to catch up, or at least for the right readers to find them in better conditions. That may be what is happening here. Alexander, who also publishes the West Coast Sojourn Substack, has been framing the return of Ghetto Celebrity not just as a reissue but as a second shot at public life. That does not mean rewriting history or pretending the first release never happened. It means recognizing that the culture has changed. Weed no longer carries the same stigma it did in 2003. Readers are more open to hybrid work, harder edges, and voices that do not sound workshop-approved. 
A book like this, which once may have seemed too slippery, too abrasive or too difficult to categorize, now has a better chance of being read on its own terms. And on its own terms, Ghetto Celebrity remains a compelling piece of work. It is funny. It is sharp. It is restless. It is often moving without begging for that response. It carries a deep sense of American drift and self-invention, while never losing sight of the local details, the bodily details, the humiliations, vanities and small absurdities that make a life feel lived instead of summarized. The book's backstory helps, sure. So does the literary pedigree. So does the Tupac origin point, the LA Weekly history, the McSweeney's brush, the Random House release. But none of that would matter much if the writing did not still hold up. It does. Which is why the Eagle Rock relaunch feels bigger than a nostalgic victory lap. It feels like an argument. Not just that Ghetto Celebrity deserved more the first time, though maybe it did. More that there is still room, even now, for messy, singular, cannabis-adjacent American books that do not ask permission to be themselves. That is a useful reminder. Especially at a time when so much publishing feels optimized to death, and so much weed culture coverage can drift toward either sterile commerce or empty lifestyle packaging. A book like Ghetto Celebrity belongs to a rougher lineage. It is closer to lived counterculture than cannabis branding. Closer to voice than market category. Closer to a writer trying to get free on the page than a product trying to find its lane. More than two decades after its first release, that still has power. And if this paperback really is the beginning of a second life for Ghetto Celebrity, Donnell Alexander found a good way to start it: not quietly, not apologetically, but in a garden full of people, stories, smoke, memory and the kind of L.A. energy that makes rediscovery feel less like marketing than justice.

Anthropic leaks part of Claude Code's internal source code

Anthropic leaked part of the internal source code for its popular artificial intelligence coding assistant, Claude Code, the company confirmed on Tuesday. "No sensitive customer data or credentials were involved or exposed," an Anthropic spokesperson said in a statement. "This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again." A source code leak is a blow to the startup, as it could help give software developers, and Anthropic's competitors, insight into how it built its viral coding tool. A post on X with a link to Anthropic's code has amassed more than 21 million views since it was shared at 4:23 a.m. ET on Tuesday. The leak also marks Anthropic's second major data blunder in under a week. Descriptions of Anthropic's upcoming AI model and other documents were recently discovered in a publicly accessible data cache, according to a report from Fortune on Thursday.

Typing mistake causes petrol station chaos

A petrol station in Perth has been forced to sell the most expensive diesel in Western Australia after an employee made a typing error that added a full dollar to the price of the already expensive fuel. Independent fuel retailer Burk was forced to sell diesel at nearly $4 a litre at its Cannington petrol station on Tuesday because of strict laws enforced by the WA petrol watchdog FuelWatch. Since the fuel crisis began, individual petrol stations have been forced to lock in the following day's petrol prices with FuelWatch in order to prevent artificial inflation. Burk managing director Umar Farooq told 9News that "multiple calls and emails" were sent to FuelWatch to try to rectify the issue, but nothing could be done. "We were told we can't sell it at the price other than what's been reported," Mr Farooq said. As a workaround, the petrol station chose to put a one-day discount of $1.04 on the diesel bowser. The employee responsible for the error is not expected to face any disciplinary action.