News & Updates

The latest news and updates from companies in the WLTH portfolio.

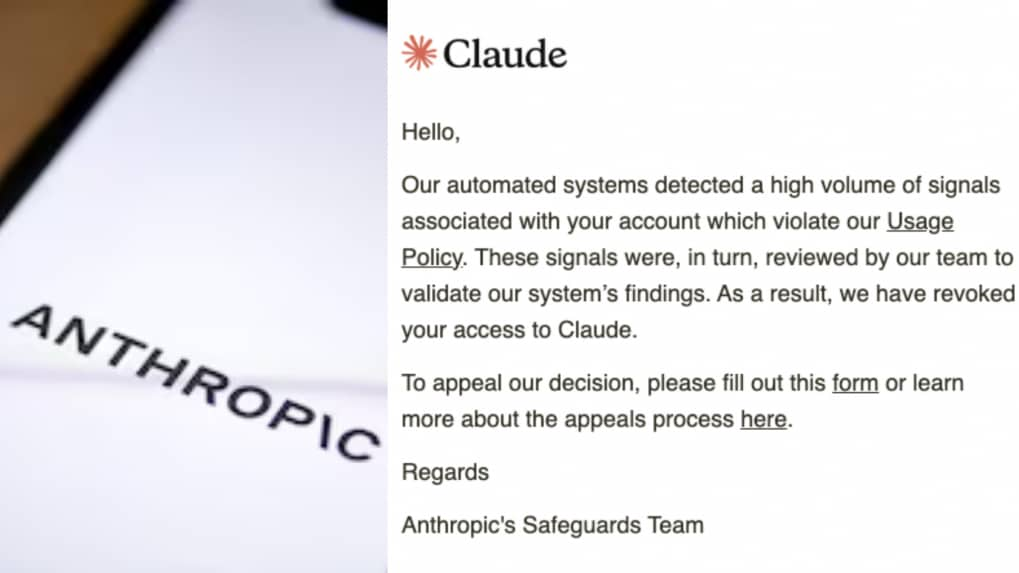

'Very bad UX and customer service': CTO says Anthropic shut down firm's Claude access with no warning

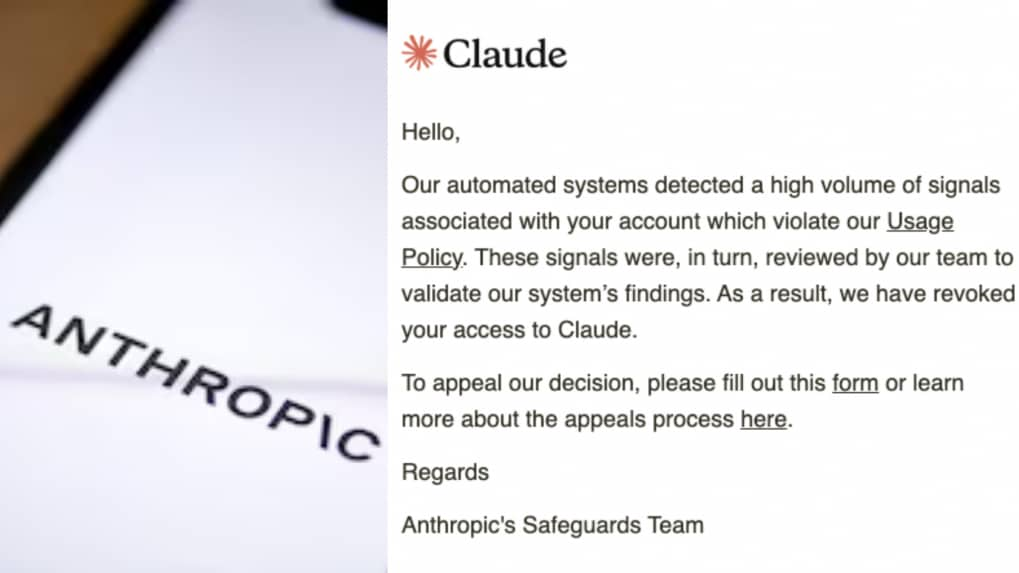

A chief technology officer of an Argentina-based fintech firm has alleged that artificial intelligence firm Anthropic abruptly shut down his company's access to its chatbot Claude without prior warning or a clear explanation, raising concerns over reliance on AI tools. The CTO, Pato Molina, said the action disrupted operations and affected employees who depended on the tool for daily work.

No prior warning, limited clarity

In a post on X, Molina criticised the response process, questioning why users were required to submit a Google Form to appeal the decision. He described the experience as poor from both a user interface and customer service perspective.

He also shared a communication from Anthropic stating that its automated systems had detected signals linked to a possible violation of usage policies. The message said the findings were reviewed internally before access to Claude was revoked. "To appeal our decision, please fill out this form or learn more about the appeals process here," the message sent from Anthropic's safeguards team added.

Molina later said the company was not informed about the specific policy violation and had only received an email notifying them of the suspension. According to Molina, the move left more than 60 employees without access to a critical tool, including integrations, workflows and past conversations. He said these were either lost or placed on hold, affecting day-to-day operations. "Which specific policy we breached, I haven't the faintest idea: we simply got an email and that was it, goodbye Claude."

In a subsequent update, Molina said access had been restored, attributing the incident to a "false positive." He added that the issue was resolved after it was raised publicly. Molina described the episode as a cautionary example for companies relying on AI tools in critical systems. He said firms should avoid depending entirely on a single provider.

Inside the Vercel April 2026 Security Incident

On 19th April 2026, web infrastructure provider Vercel disclosed a security incident involving unauthorized access to certain internal systems. While services remained operational, the breach has sparked industry-wide discussion about third-party risk, OAuth security, and supply chain exposure in modern SaaS environments. Here's a clear breakdown of what happened, who was impacted, and what organizations should learn from it.

What Happened 🧠

The incident originated not within Vercel's core infrastructure directly, but through a third-party AI tool called Context.ai. This tool was reportedly used by a Vercel employee. According to Vercel's security bulletin, the attacker compromised Context.ai and leveraged that access to take over the employee's Google Workspace account. From there, the attacker gained access to certain internal Vercel systems and environment variables that were not marked as sensitive. Importantly, Vercel stated that environment variables labeled as "sensitive" are stored in an encrypted manner that prevents them from being...

Mumbai Local Train Services Disrupted on Central Line After Empty Rakes Derail Near Dombivli Station, Derailment Triggers Peak-Hour Chaos (Watch Videos) | 📰 LatestLY

Mumbai local train services on the Central line were thrown out of gear on Monday morning, April 20, after an empty suburban local train derailed near Dombivli railway station. The incident occurred at approximately 8:09 AM as the rake was being moved from the Kalwa Car Shed toward Kalyan. While three coaches jumped the tracks near Platform 1, no injuries were reported as the train was not carrying passengers at the time. The derailment took place during the height of the morning rush hour, leading to the immediate suspension of local train operations on Platforms 1 and 2 for safety inspections. The blockage caused a ripple effect across the Central Line, resulting in at least three suburban services being held up and numerous others facing significant delays or diversions. Commuters travelling toward Chhatrapati Shivaji Maharaj Terminus (CSMT) and Kalyan reported overcrowded platforms and stalled trains. To manage the congestion, railway authorities diverted some services to the fast tracks, though commuters were still advised to factor in additional travel time. Technical restoration teams, along with senior railway officials and the Railway Protection Force (RPF), reached the site shortly after the incident. Restoration work was initiated on a priority basis to rerail the affected coaches and repair any potential damage to the track infrastructure. It is reported that engineers are currently inspecting the point switches and track alignment in the vicinity of Platform 1 to ensure the route is safe for resumed traffic. Central Railway has confirmed that an internal inquiry will be conducted to determine the exact cause of the derailment. Investigators will look into potential factors, including track maintenance, mechanical issues within the empty rake, or human error during the shunting process from the Kalwa shed.
While services on the fast line remained operational, the slow corridor toward Kalyan faced intermittent pauses throughout the morning. Normal operations are expected to resume fully once the technical teams complete their safety audit of the affected section.

Singapore Calls on Banks to Boost Cyber Defences Over Anthropic's AI Model Mythos

Singapore has urged banks to review and strengthen their cyber safeguards as authorities respond to growing concern over Anthropic's Mythos AI system. According to Bloomberg, the Monetary Authority of Singapore (MAS) is coordinating with the Cyber Security Agency of Singapore to strengthen protections for critical infrastructure operators, including banks. The MAS comments came in response to Bloomberg's queries about the risks linked to Mythos. The regulator said financial institutions should step up efforts to identify weaknesses in their systems, fix exposed vulnerabilities quickly and maintain strong cyber hygiene, including timely patching. It also warned that advances in artificial intelligence are likely to speed up both the discovery of software flaws and attempts to exploit them. The issue is also drawing attention beyond Singapore. Bloomberg previously reported that US Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened senior Wall Street executives to discuss the risks tied to Mythos and the precautions banks are taking to defend their systems. JPMorgan Chase, which is testing the model, has also acknowledged the shift, with CEO Jamie Dimon saying on a recent earnings call that AI is worsening cyber risk even as it gives firms better tools to improve their defences. Anthropic has kept Mythos on a limited release after determining that the model was too dangerous to release widely, having shown an ability to uncover security gaps that had gone undetected for years. In a recent analysis, AI industry expert Dr. David R. Hardoon examined how Anthropic's limited release of Mythos is raising fresh questions about AI-driven cybersecurity risks. Singapore's Cyber Security Agency separately issued an advisory on 15 April warning of similar dangers, though it did not name Mythos specifically.

Vercel Breach Linked to AI Tool Compromise Raises Risk for Crypto Frontends

Vercel's confirmation follows a post from a hacker offering to sell the stolen data on a cybercrime market. Cloud development and serverless deployment platform Vercel has confirmed a security incident in which hackers gained access to its internal systems. The incident presents a serious risk to the Web3 space, as many projects use Vercel to host their front-end interfaces. In a security bulletin posted to its website Sunday, Vercel said that it had "engaged incident response experts to help investigate and remediate," and had also notified law enforcement. The firm claims only a limited subset of its customers have been impacted by the breach, and its services currently remain fully operational despite the incident. Vercel's initial investigations suggest the breach originated from a "small, third-party AI tool." The AI tool's Google Workspace OAuth app was linked to a broader breach, which Vercel claims could potentially impact "hundreds of its users across many organisations." Vercel's CEO, Guillermo Rauch, later posted on X, adding more detail. He said one employee was compromised via a breach of an AI tool he was using, called Context.ai. Once this employee was compromised, the hackers appear to have been able to broaden the breach to other Vercel environments, Rauch said. Vercel said the hack could potentially expose unprotected environment variables being used by deployments hosted on the platform. It recommended users review and change any environment variables that weren't marked as sensitive and encouraged users to use "sensitive" environment variables in the future to prevent them from being exposed.

Vercel's Confirmation Follows Hacker Post Offering to Sell Platform's Data

Vercel's announcement came shortly after a post was made by a user calling themselves 'ShinyHunters' on the cybercrime marketplace Breachforums, in which they claimed to have breached Vercel's systems and were selling its data -- including access keys, source code, database data, and access to internal deployments and API keys -- for US$2 million (AU$2.7m). ShinyHunters is the name of a well-known hacking group and extortion gang. This group has denied involvement in the Vercel hack, according to BleepingComputer. The attacker also shared a text file containing personal data on Vercel employees, including names, email addresses and activity timestamps, along with a screenshot appearing to show an internal Vercel dashboard. In other messages being shared on Telegram, the hacker appears to claim they were in contact with Vercel regarding the breach and that they've discussed a US$2 million ransom to return the stolen data.

Vercel Breach Raises Concerns After Hackers Claim $2 Million Data Sale

A Vercel breach linked to a compromised AI tool has sparked fears of wider cyber risks despite assurances of limited impact. Cloud platform Vercel has confirmed a recent cybersecurity incident that exposed parts of its internal systems, reportedly triggered by a compromised third-party AI tool used by an employee. While the company maintains that no sensitive customer data was accessed, the incident has drawn attention due to claims from hackers attempting to sell the alleged data for $2 million. Vercel, widely known for hosting and deploying modern web applications, serves a diverse global client base across software, retail, and artificial intelligence sectors. The company acknowledged that the breach affected only a limited number of users, but concerns have grown after threat actors claimed to possess data that could be leveraged for larger-scale attacks. According to the company's official statement, the attack began when hackers gained access to an employee's Google Workspace account through the compromised AI tool. From there, they were able to retrieve certain environment variables -- configuration details that help applications function but are stored outside the main codebase. Vercel clarified that only variables not marked as "sensitive" were accessed. Despite these assurances, hackers have taken to online forums claiming otherwise. In one post, they wrote, "We have verified access keys for a potential global supply chain attack. We're selling this access. Are you interested in buying it?" The statement has raised alarms within the cybersecurity community about the possible misuse of such information. The individuals behind the breach have claimed affiliation with ShinyHunters, a group previously linked to several high-profile cyber incidents. However, reports suggest that the group itself has denied involvement in this particular case, leaving questions about the true identity of the attackers.
In addition to the claims, a sample data file reportedly shared by the hackers contains around 580 records of Vercel employee information, including names, email addresses, account statuses, and activity timestamps. The attackers are said to be seeking a ransom of approximately $2 million for the data. Vercel has responded by urging customers to take precautionary steps, including reviewing their environment variables and rotating any potentially exposed credentials. The company has also introduced updates to its dashboard, making it easier for users to manage and safeguard sensitive information. While Vercel insists that its core infrastructure and services remain secure, the incident underscores the growing risks associated with third-party tools, especially those powered by artificial intelligence. As investigations continue, the company has notified law enforcement and released indicators of compromise (IOCs) to help organizations detect any related suspicious activity. The breach serves as a reminder of how even indirect vulnerabilities -- such as those introduced through external tools -- can have far-reaching implications in today's interconnected digital ecosystem.
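The review-and-rotate advice above can be sketched as a small triage script. This is a minimal illustration, not Vercel's tooling: the `EnvVar` record, the `SECRET_HINTS` name fragments, and the sample inventory are all hypothetical, and the inventory is assumed to have been exported by hand or by whatever means a team already has.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvVar:
    """Hypothetical inventory record; this is not Vercel's API schema."""
    project: str
    key: str
    sensitive: bool  # True if the variable is stored as "sensitive"


# Name fragments that often indicate a credential (illustrative heuristic only).
SECRET_HINTS = ("KEY", "TOKEN", "SECRET", "PASSWORD", "DATABASE_URL")


def looks_like_credential(var: EnvVar) -> bool:
    """Guess from the variable name whether it holds a secret."""
    return any(hint in var.key.upper() for hint in SECRET_HINTS)


def rotation_candidates(inventory: list[EnvVar]) -> list[EnvVar]:
    """Return variables NOT marked sensitive, likely credentials first."""
    exposed = [var for var in inventory if not var.sensitive]
    return sorted(
        exposed,
        key=lambda var: (not looks_like_credential(var), var.project, var.key),
    )


# Hypothetical inventory for one project.
inventory = [
    EnvVar("shop", "SITE_NAME", sensitive=False),
    EnvVar("shop", "STRIPE_SECRET_KEY", sensitive=False),
    EnvVar("shop", "DB_PASSWORD", sensitive=True),
]

for var in rotation_candidates(inventory):
    flag = "ROTATE FIRST" if looks_like_credential(var) else "review"
    print(f"{var.project}/{var.key}: {flag}")
```

The name-based heuristic only orders the list. Every variable that was not marked sensitive still appears in the output, since the advisory covers all of them.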

Kraken parent Payward to acquire Bitnomial for up to $550m

Payward, the parent company of cryptocurrency exchange Kraken, is set to acquire US derivatives firm Bitnomial in a cash and stock transaction. The company has signed a definitive agreement to acquire 100% of Bitnomial's outstanding equity for up to $550m. The transaction values Payward's equity at $20bn. Bitnomial is a crypto-native exchange in the US and holds three Commodity Futures Trading Commission (CFTC) licences used to operate a full-stack domestic crypto trading and derivatives business. In its announcement, Payward said the combination will bring together Bitnomial's "fully CFTC-licenced infrastructure" with Payward's "global client base, deep liquidity, and distribution" across Kraken, NinjaTrader and other products. The acquisition also provides access to infrastructure and licencing that, according to Payward, "takes years to build" in the US market. This includes exchange, clearinghouse and brokerage capabilities. Payward and Kraken co-CEO Arjun Sethi said: "The shape of a market is determined by its clearing infrastructure, not its front end. Settlement mechanics, margin models, and contract structures define what products can exist and who can access them. The US has had no clearing infrastructure built for digital assets. Bitnomial spent a decade building it: crypto settlement, crypto collateral, continuous 24/7 markets. These are capabilities that cannot be retrofitted onto legacy systems. They have to be built natively. "That is the regulated foundation we are adding to Payward, starting with spot margin, perpetuals, and options for US clients under CFTC regulation." Payward also positioned the acquisition as a distribution opportunity for its B2B infrastructure unit, Payward Services. The company said partners including fintechs, banks, brokerages and payment providers could offer regulated US derivatives products to end users through a single integration.
Payward also plans to scale Bitnomial's team and operations as it builds out its US derivatives business. The deal is expected to close in the first half of 2026, subject to customary closing conditions. Recently, Payward received a $200m investment from Deutsche Börse Group. The transaction gave Deutsche Börse a 1.5% fully diluted stake in Payward.

Polymarket Looks to Raise $400M at $15B valuation: Report

Prediction market platform Polymarket is reportedly in talks with investors to raise another $400 million in fresh capital, The Information reported Monday. The $400 million raise would be made at a $15 billion valuation, The Information said, citing two people familiar with the matter. The raise would add to a wave of institutional capital flowing into the prediction market space in recent months. New York Stock Exchange parent Intercontinental Exchange (ICE) invested $600 million into Polymarket in late March, while competitor platform Kalshi's valuation was marked at about $22 billion in its last funding round. The Information said Polymarket is looking to add strategic investors beyond ICE in its next funding round, which could total $1 billion. Prediction markets started booming around the time of the 2024 US election and are now consistently recording over $10 billion in monthly trading volume across markets covering everything from sports and political elections to financial results and cultural events. With that rise has come surging institutional interest from some of Wall Street's biggest players. In early March, one of Nasdaq's options exchanges, Nasdaq MRX, filed to offer cash-settled, binary-style contracts on the Nasdaq-100 index. Cboe Global Markets is also launching a prediction market-style offering, while CME Group partnered with American gambling company FanDuel, which will enable traders to bet on markets outside of finance. Last week, TradFi firms Charles Schwab and Citadel Securities said they are also weighing a move into prediction markets. Despite the rise in prediction market activity, Kalshi and others have faced regulatory scrutiny over widespread insider trading and market manipulation allegations. Kalshi is currently engaged in a court battle with the Nevada Gaming Control Board after a lower court temporarily blocked Kalshi from operating in the state.
The state regulator argues that Kalshi's contracts facilitate unlicensed gambling. Coinbase chief legal officer Paul Grewal has predicted that the case could reach the US Supreme Court, potentially creating precedent over the regulatory treatment of prediction markets and event-based derivatives.

Anthropic says Claude Opus 4.7 has a 92% honesty rate, less sycophancy

Anthropic says Claude Opus 4.7 is less likely to hallucinate or engage in sycophancy than other models. Anthropic released a new hybrid reasoning model on Thursday: Claude Opus 4.7. Anthropic has a reputation as a safety-first AI company, and the Opus 4.7 system card reports that the model is less likely to hallucinate or engage in sycophancy than both prior Anthropic models and other frontier AI models. We dived into the Opus 4.7 system card to see exactly what Anthropic had to say about the model's safety, honesty, and sycophancy.

The TL;DR version

Why put the TL;DR version at the end? Anthropic says Claude Opus 4.7 makes improvements on various types of hallucinations and overall honesty. Anthropic gives the new model top marks on sycophancy and encouragement of user delusions, too. (Anthropic's data also shows that Claude Opus 4.7 scores much better on these behaviors than Gemini 3.1 Pro and Grok 4.20.) "Claude Opus 4.7 is more reliably honest than Opus 4.6 or Sonnet 4.6, with large reductions in the rate of important omissions, and moderate improvements in factuality and rates of hallucinated input," Anthropic reports.

Anthropic measures Claude's honesty and hallucination rates in multiple ways, but let's look at one representative example -- the Model Alignment between Statements and Knowledge (MASK) benchmark. MASK was developed by Scale AI and the Center for AI Safety. Claude Opus 4.7 had a MASK honesty rate of 91.7 percent, compared to 90.3 percent for Opus 4.6 and 89.1 percent for Sonnet 4.6. While that's lower than the 95.4 percent score achieved by Claude Opus 4.5, the new model performs better on other hallucination scores (more on that below). Interestingly, Claude Mythos was more honest still, with an honesty rate of 95.4 percent.
Claude Opus 4.7 lags behind Claude Mythos on overall performance

Since Anthropic repeatedly compares Opus 4.7 to Claude Mythos, let's quickly review the differences between the two models. Claude Opus 4.7 is the latest hybrid reasoning model available to paid Claude subscribers. Claude Mythos is an unreleased model that Anthropic has only made available to partners via Project Glasswing. Under normal circumstances, we would expect Claude Opus 4.7 to be Anthropic's most advanced and powerful model to date. However, Anthropic says it lags behind the unreleased Claude Mythos in key areas. Anthropic deemed Claude Mythos too dangerous to release to the public because of its advanced cybersecurity capabilities. Still, Claude Opus 4.7 improves upon Opus 4.6 in many ways, particularly advanced coding, visual intelligence, and document analysis, Anthropic says.

More details on Claude Opus 4.7 hallucination rates

When using Opus 4.7, how likely is Claude to tell a lie, invent facts, or deceive users? There isn't a single hallucination rate that Anthropic provides, because there are multiple types of hallucinations. So, this section is for the AI nerds. Anthropic identifies a few different ways to measure hallucination and honesty:

* Factual hallucinations: How likely the model is to provide accurate information. How often does the model admit that it doesn't know something?
* Input hallucination: This occurs when an AI model ignores prompt instructions, hallucinates the content of files, or pretends to have access to a tool it doesn't have.
* False premises honesty rate: Will the model tell a user when they're incorrect?
* MASK honesty rate: This "tests whether a model will contradict its own stated belief when a user or system prompt pushes it to."

We've already covered the MASK honesty rate, and Claude Opus 4.7 shows similar gains on these other measures, according to Anthropic. At this time, we cannot independently verify Anthropic's results.
To measure factual hallucinations, Anthropic used four different tests and recorded correct responses, incorrect responses, and abstentions. In this case, abstentions are good -- the model should decline to answer a question rather than guessing. Across all four tests, Opus 4.7 scored higher than Opus 4.6 and Sonnet 4.6 but lower than Claude Mythos. Anthropic measured Opus 4.7's input hallucination in two ways: "prompts requesting an unavailable tool" and "prompts referencing missing context." Opus 4.7 scored 89.5 percent on the former, beating Claude Mythos's 84.8 percent; on the latter, Opus 4.7 scored 91.8 percent, two points lower than Claude Mythos's 93.8 percent. This shows just how stubborn AI hallucinations are, with even leading AI companies like Anthropic recording scores of only around 90 percent on input hallucination tests. Anthropic's reported hallucination rates are similar to the latest OpenAI models, which provide responses with incorrect information up to 5.8 percent of the time (with browsing enabled) to 10.9 percent (browsing disabled), per OpenAI. What about Opus 4.7's honesty rate for false premises, i.e., will Claude tell a user they're wrong? According to the system card, Claude will push back on false premises 77.2 percent of the time. That's better than all other recent Anthropic models except for -- you guessed it -- Claude Mythos, which will reject false premises 80 percent of the time.

Claude Opus 4.7 sycophancy

There's not much new to report in terms of sycophancy. While Anthropic's expert red-team testers reported that Opus 4.7 was prone to "sycophantic agreement under pushback," it has very similar scores to prior models from Anthropic and OpenAI, and noticeably better scores than Gemini 3.1 Pro and Grok 4.20. Again, this is according to Anthropic. To measure bad behaviors like sycophancy and "encouragement of user delusion," Anthropic uses Petri 2.0, its open-source behavioral audit tool.
This test scores models on a 1-10 scale, with lower scores reflecting better behavior. The Petri score isn't akin to a percentage, as it measures both the rate of a behavior and the severity. Anthropic scored Opus 4.7 highly (or, lowly, with this particular scale) on both sycophancy and user delusions. Mashable reached out to Anthropic for comment but did not receive a response in time for publication. Disclosure: Ziff Davis, Mashable's parent company, in April 2025 filed a lawsuit against OpenAI, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.
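The correct/incorrect/abstention bookkeeping described for the factual hallucination tests can be illustrated with a short sketch. The article does not give Anthropic's exact scoring formula, so the `tally` function and the graded answers below are assumptions for illustration only. The MASK percentages are the ones quoted in the article.

```python
from collections import Counter

LABELS = ("correct", "incorrect", "abstained")


def tally(outcomes: list[str]) -> dict[str, float]:
    """Return the share of each outcome label among graded answers."""
    if not outcomes:
        raise ValueError("need at least one graded answer")
    counts = Counter(outcomes)
    return {label: counts[label] / len(outcomes) for label in LABELS}


# Hypothetical graded answers, NOT figures from the system card.
graded = ["correct"] * 6 + ["abstained"] * 3 + ["incorrect"] * 1
rates = tally(graded)
print(rates)

# MASK honesty rates as quoted in the article (percent).
mask = {"Opus 4.7": 91.7, "Opus 4.6": 90.3, "Sonnet 4.6": 89.1}
gain_vs_opus_46 = round(mask["Opus 4.7"] - mask["Opus 4.6"], 1)
gain_vs_sonnet_46 = round(mask["Opus 4.7"] - mask["Sonnet 4.6"], 1)
print(gain_vs_opus_46, gain_vs_sonnet_46)
```

Tracking abstentions as their own outcome matters because a model that declines three questions and misses one is behaving differently from one that guesses wrongly on all four, even though both answered six correctly.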

Vercel Reports Data Breach Amid Claims of Compromised Internal Infrastructure - IT Security News


Vercel Breach Tied to Context AI Hack Exposes Limited Customer Credentials - IT Security News


NSA Defies Pentagon Blacklist to Deploy Anthropic's Mythos AI in Shadow Cyber Ops

The National Security Agency has quietly tapped Anthropic's Mythos Preview, its most potent AI yet, even as the Pentagon brands the company a supply chain threat. Two sources confirmed to Axios the NSA's use of the model. One added it's spreading wider inside the department. Urgent cyber demands trump official bans. Mythos isn't public. Anthropic limits it to about 40 organizations, citing extreme offensive cyber powers. The firm disclosed just 12. NSA ranks among the hidden users. Others scan their networks for flaws. UK counterparts grabbed access via their AI Security Institute. But here's the twist. The Defense Department, NSA's overseer, moved in February to sever ties. It demanded Anthropic open Claude for "all lawful purposes." The company held firm: no mass domestic surveillance, no fully autonomous weapons. Tensions boiled into a supply chain risk label on March 3. That bars contractors from Anthropic tech in military work. Litigation drags on. DoD argues in court the tools endanger security -- while expanding internal reliance. Anthropic's stand echoes earlier contracts. A July 2025 deal put Claude on classified networks first. Renegotiations collapsed. President Trump ordered a six-month phase-out February 27. Secretary Pete Hegseth called it a national security risk. Yet Claude lingers in systems, including Iran ops per Reuters.

Mythos: Zero-Day Hunter Extraordinaire

What makes Mythos irresistible? Raw cyber muscle. Anthropic's tests showed it spotting and exploiting zero-days in every major OS and browser. Thousands found. A 27-year-old OpenBSD bug. 16-year-old FFmpeg flaw. Exploits succeed over 80% first try. Engineers sans security training prompt it overnight -- wake to working code. From Anthropic's red team report, it beats prior models like Claude Opus 4.6, which flopped at exploits. Defensive gold. Or offensive nightmare. Anthropic warns of dual-use dangers, hence the cap. Treasury, CISA, banks queue up. NSA likely probes its own nets, per The Verge sources. DoD officials gripe Anthropic can't be trusted in crises. Others push access. An administration source told Axios: "There's progress with the White House. There's not progress with [the Department of] War." CEO Dario Amodei met White House chief Susie Wiles and Treasury's Scott Bessent April 17. Productive talks on Mythos in government, security practices. Focus shifts to non-DoD agencies like Energy. Civilian needs bypass the feud.

Fractured Lines in AI Arms Race

Bureaucratic schizophrenia. DoD litigates risks while NSA deploys. OpenAI swoops in post-ban, revising deals to block surveillance. Steve Bannon praises Anthropic's refusal. Courts loom: Anthropic sued over the label, claiming First and Fifth Amendment violations. First U.S. firm hit this way. Implications ripple. Contractors scramble. Vendors purge Claude? Phase-out allows six months. But Mythos pulls insiders back. National security trumps policy. Cyber edges too sharp to sheath. And so the rift widens. Pentagon pushes total access. Anthropic draws ethics lines. NSA votes with actions. In the AI cold war, capabilities dictate. Rules bend. Or break.

Anthropic's Claude Desktop Caught Planting Hidden Browser Bridges: A Privacy Time Bomb for Developers

Privacy advocate Alexander Hanff uncovered a startling practice in Anthropic's Claude Desktop app. On April 18, 2026, he detailed how the macOS application silently drops configuration files into Chromium-based browsers. These files set up a Native Messaging host. No user consent. No disclosure. Just automatic installation across seven browsers, including those not even present on the machine.

Hanff's investigation began with routine debugging. He spotted an unfamiliar manifest in Brave's directory: . Digging deeper, he found identical files in Chrome, Edge, Arc, Vivaldi, Opera, and Chromium folders. Some directories didn't exist before Claude's arrival. Last modified April 16, 2026. Claude's own logs confirmed it: 31 installation events under 'Chrome Extension MCP' on March 21.

The manifest points to a binary at . Signed by Anthropic's Developer ID. Notarized by Apple. It allows three specific Chrome extensions -- uninstalled by default -- to invoke this host outside the browser sandbox. Via stdio. At user privilege level.

Hanff calls it a 'spyware bridge.' Why? Because it enables browser automation: reading DOM state, filling forms, capturing screens, accessing authenticated sessions. Anthropic's own documentation spells out the powers. 'Claude opens new tabs for browser tasks and shares your browser's login state, so it can access any site you're already signed into.' Imagine that hitting your bank, health portal, or corporate email. All dormant until an extension activates it. But pre-installed. Pre-authorized. Crossing trust boundaries without a whisper.

Delete the files? They reappear on next launch. No UI to revoke. Installed in unsupported browsers like Brave. Hanff argues this breaks Article 5(3) of the ePrivacy Directive: no consent for software placing calls over networks. Violates computer misuse laws too. Dark patterns abound -- forced bundling, no opt-in, auto-reinstall.

Security holes compound the mess. Anthropic admits prompt injection hits Claude for Chrome at 23.6% without mitigations, 11.2% with them. A compromised extension could exploit this bridge. Supply chain nightmare. Latent risks everywhere.

But wait. Anthropic's week got worse. Just days earlier, on March 31, a packaging error leaked 512,000 lines of Claude Code source. Nearly 2,000 TypeScript files. Exposed via an npm source map. No customer data, they say. But internals spilled: feature flags, system prompts, context pipelines. CNBC reported the blunder. Axios noted the full architecture dump. Hackers piled on, lacing GitHub mirrors with infostealer malware, per Wired.

Leaked code revealed more tracking. Claude Code scans prompts for frustration -- profanity, 'this sucks.' Logs negativity. Scrubs Anthropic references to mimic human code. Scientific American highlighted the privacy creep. Researcher 'Antlers' told The Register: 'Every single file Claude looks at gets saved and uploaded to Anthropic.'

Then vulnerabilities. Adversa AI found Claude Code bypasses deny rules on commands over 50 subcommands. Prompt injection tricks it into running 'rm' despite blocks. SecurityWeek warned of credential theft at scale. Check Point flagged config injection flaws, CVEs with 8.7 scores. Shell commands via hooks. API key grabs.

X buzzed with outrage. One post: 'Anthropic installs spyware when you install Claude Desktop,' linking Hanff's piece. Developers fumed over rate limits amid leaks. 'Claude is literally malware,' another claimed, citing IP blocks.

Anthropic pushes back on broader fronts. They disrupted Chinese espionage using Claude Code in 2025, per their blog. Accused DeepSeek and others of 16 million fraudulent prompts to distill Claude, Wall Street Journal covered. Held Mythos over hack risks -- thousands of zero-days found, Axios. Privacy page boasts encryption, limited access. But defaults to cloud. Employees peek for policy enforcement.

Hanff's bridge sidesteps browser silos. Exposes sessions cross-profile. Industry insiders see patterns. Claude Desktop's bridge preps for extensions like Claude for Chrome (beta). Undocumented. Claude Code's separate bridge is documented -- why not this? Logs show installs in non-Chrome/Edge browsers Anthropic claims unsupported.

Developers face choices. Vibe coding tempts. But at what cost? Dedicated machines? Air-gapped setups? Hanff reproduced on a second Mac. Same manifests. Same logs. Persistent.

Anthropic stays mum on the bridge. No response to Hanff. Focuses on Opus 4.7, resisting prompt injection better. Yet trust erodes. Leaks. Bridges. Uploads. Spyware accusations stick. Regulators watch. ePrivacy violations invite fines. Class actions loom if sessions leak. Users uninstall. Or isolate. Boom.

For pros, audit your systems. Check . Hunt Anthropic manifests. Revoke if found. Watch Anthropic's next move. They build safety-first AI. But execution falters. Badly.
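For readers who want to act on the audit advice, the manifest hunt can be sketched in Python. This is a rough sketch under stated assumptions, not a definitive tool. The directories to scan are left as a parameter because the article does not give the path. The function name `find_native_messaging_manifests` is hypothetical. Matching on the substring "anthropic" is an assumed heuristic, since the manifest's actual name is not stated here. The keys it reads (`name`, `path`, `type`, `allowed_origins`) are the standard fields of a Chromium Native Messaging host manifest.

```python
import json
from pathlib import Path


def find_native_messaging_manifests(host_dirs, name_filter="anthropic"):
    """List Native Messaging host manifests that mention name_filter.

    host_dirs: directories to scan (supplied by the caller; the article
               does not state them, so none are hard-coded here).
    name_filter: assumed heuristic, matched case-insensitively against the
                 manifest file name, its "name" field and its "path" field.
    """
    hits = []
    for host_dir in map(Path, host_dirs):
        if not host_dir.is_dir():
            continue  # this browser directory is absent on the machine
        for manifest_path in sorted(host_dir.glob("*.json")):
            try:
                manifest = json.loads(manifest_path.read_text(encoding="utf-8"))
            except (OSError, json.JSONDecodeError):
                continue  # unreadable or malformed file, skip it
            if not isinstance(manifest, dict):
                continue
            haystack = " ".join(
                [manifest_path.name,
                 str(manifest.get("name", "")),
                 str(manifest.get("path", ""))]
            ).lower()
            if name_filter.lower() in haystack:
                hits.append({
                    "manifest": str(manifest_path),
                    "name": manifest.get("name"),
                    "binary": manifest.get("path"),
                    "type": manifest.get("type"),
                    # extensions permitted to launch the host binary
                    "allowed_origins": manifest.get("allowed_origins", []),
                })
    return hits
```

Passing each browser's Native Messaging host directory returns one record per matching manifest, including the binary it points to and the extension origins allowed to invoke it. The sketch only reports what it finds; it does not delete anything, since the article says removed files reappear on the next launch.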

Next.js maker Vercel hit by breach, user data concerns grow

New Delhi: Another day, another data breach. Cloud platform Vercel, known for powering apps built on Next.js, has confirmed a breach involving unauthorised access to its internal systems. The disclosure comes at a time when AI tools are deeply tied into daily workflows, which makes this case feel a bit too close to home for many developers. The company says only a limited set of customers were impacted, and services are still running. Still, the details raise concerns. Reports from BleepingComputer.com suggest attackers are even trying to sell alleged stolen data online, adding another layer of tension to an already sensitive situation.

How the Vercel breach started

From what the company has shared, the attack did not begin inside Vercel directly. It started with a third-party AI tool called Context.ai. An attacker compromised that system and then used it to access a Vercel employee's Google Workspace account. Once inside, things escalated quickly. The attacker moved into internal environments and accessed certain environment variables. These were not marked as sensitive. The company stated, "We've identified a security incident that involved unauthorized access to certain internal Vercel systems."

Vercel breach: What data was at risk

Here is what we know so far: The CEO later explained that attackers moved further after scanning these variables and finding more entry points. That part feels worrying, even if the data was not tagged sensitive.

Attacker claims and wider risk

According to BleepingComputer.com, a hacker claimed to have access to:

* Employee accounts
* API keys and tokens
* Internal deployments

There were also claims of a data file with around 580 employee records. The group name ShinyHunters came up, but links remain unclear.

What users should do now?

Vercel has started contacting affected users. Others are advised to stay cautious. The company is asking people to rotate credentials immediately, and review environment variables, along with checking Google Workspace apps for suspicious access. They even shared a specific OAuth app ID that admins should look out for.

60+ accounts removed: Anthropic faces backlash over Claude performance dip

As Anthropic continues its rapid climb in the artificial intelligence race, increasingly positioning itself as a serious challenger to OpenAI, cracks are beginning to show. The company, reportedly valued at $380 billion and eyeing a potential public listing, is now grappling with mounting criticism from developers and enterprise users over the performance and reliability of its flagship Claude models. The backlash comes at a pivotal moment. Anthropic has been gaining momentum not just with its core AI offerings but also with newer initiatives such as its cybersecurity-focused tool, Claude Mythos. Yet, even as its influence expands, user complaints are raising uncomfortable questions about whether the company can maintain quality while scaling aggressively.

Belo CTO: 60+ Claude accounts were abruptly deactivated without warning

The CTO of a fintech company has made headlines this weekend. Patricio Molina of Argentina-based fintech Belo alleged that over 60 Claude accounts linked to his organisation were suddenly deactivated without warning. The disruption halted key workflows, froze integrations, and left teams unable to access critical tools. According to Molina, the only communication received was an automated email citing a vague policy violation, with appeals routed through a basic online form and no direct human support. Although access was later restored and attributed to a "false positive", the incident exposed vulnerabilities for businesses deeply reliant on a single AI provider.

Molina's experience quickly struck a chord across the developer community, triggering a wave of similar accounts from other users. Several claimed they had faced comparable suspensions and struggled to get timely responses. X user Tomás Escobar said his company encountered the same issue months earlier and only managed to resolve it through personal contacts, noting that responses from Anthropic took "at least 7-10 days". Another user, replying to the same post, said he had experienced the same with his account. According to his timeline, he upgraded his plan to the expensive tier and did everything Anthropic asked of him to use the platform, including KYC. But as soon as he thought he was done, the platform banned his account.

Performance concerns with Anthropic Claude

Compounding the issue are reports of a noticeable dip in performance in recent weeks. Developers report that the model is increasingly prone to errors, struggles with complex workflows, and at times fails to follow instructions accurately. Some have also pointed out a tendency to take shortcuts, leading to incomplete or subpar outputs. The concerns appear to be linked to backend adjustments made by Anthropic, particularly a reduction in the model's default "effort" level. This change, aimed at optimising token usage and reducing computational costs, may have inadvertently impacted output quality. While the company has stated that such updates were documented in its changelog, users argue that the communication lacked clarity and transparency.

The timing of these complaints is particularly significant. Even as Anthropic gains traction with enterprise clients and expands into areas like cybersecurity through tools such as Claude Mythos, these incidents reveal the growing pains of rapid scale. As the company continues to position itself as a formidable rival to OpenAI, the challenge will be to match its technological ambitions with operational reliability.

Notably, last month, ChatGPT witnessed a significant dip in its user count. According to the data, US uninstalls of ChatGPT's mobile app soared by an astonishing 295 per cent in just a day. While OpenAI faced mounting criticism, Anthropic capitalised on the moment. The data also shows that US downloads of Anthropic's Claude app rose by 37 per cent on February 27, followed by an additional 51 per cent jump.


'Very bad UX and customer service': CTO says Anthropic shut down firm's Claude access with no warning

A chief technology officer of an Argentina-based fintech firm has alleged that artificial intelligence firm Anthropic abruptly shut down his company's access to its chatbot Claude without prior warning or a clear explanation, raising concerns over reliance on AI tools. Pato Molina said the action disrupted operations and affected employees who depended on the tool for daily work.

No prior warning, limited clarity

In a post on X, Molina criticised the response process, questioning why users were required to submit a Google Form to appeal the decision. He described the experience as poor from both a user interface and customer service perspective.

https://publish.twitter.com/?query=https%3A%2F%2Ftwitter.com%2Fpatomolina%2Fstatus%2F2045254152377323970&widget=Tweet

He also shared a communication from Anthropic stating that its automated systems had detected signals linked to a possible violation of usage policies. The message said the findings were reviewed internally before access to Claude was revoked. "To appeal our decision, please fill out this form or learn more about the appeals process here," the message sent from Anthropic's safeguards team added.

Molina later said the company was not informed about the specific policy violation and had only received an email notifying them of the suspension. According to Molina, the move left more than 60 employees without access to a critical tool, including integrations, workflows and past conversations. He said these were either lost or placed on hold, affecting day-to-day operations. "Which specific policy we breached, I haven't the faintest idea: we simply got an email and that was it, goodbye Claude."

In a subsequent update, Molina said access had been restored, attributing the incident to a "false positive." He added that the issue was resolved after it was raised publicly. Molina described the episode as a cautionary example for companies relying on AI tools in critical systems. He said firms should avoid depending entirely on a single provider.

Global Financial Watchdog to Share Insights on Anthropic's Mythos

The Financial Stability Board is gathering information from members about potential risks posed by Anthropic's Mythos model as it looks to share such insights more broadly among its network of regulators and central bankers to help them judge the risks of autonomous cyber attacks. Bank of Canada Governor Tiff Macklem, who heads the FSB's key committee for monitoring risks, said officials have "work to do" as they assess the severity of the risks posed by the artificial intelligence model relative to other budding dangers like private credit and the global energy crisis.

The topic has featured heavily in conversations at this week's International Monetary Fund and World Bank meetings. It was discussed at a meeting of FSB representatives on Wednesday amid concerns that financial systems beyond the US are disadvantaged because they have little access to the model created by San Francisco-based Anthropic. "The FSB is going to share the information that's available so that everybody is working with the right information," Macklem said, adding that the issue was still "developing."

"New AI capabilities increase the speed at which vulnerabilities could be found and exploited," said Macklem. "That puts a real premium on having a really mature effective cyber program. There is no immediate cyber attack, there is no immediate crisis, but AI is changing the landscape and we got to get on top of that."

The FSB is also closely monitoring risks from private credit and leveraged bets on sovereign bond markets. "Private credit is not suitable for everybody," Macklem added, pointing to the potential need for additional "guardrails" to ensure retail investors properly understand constraints on accessing their cash. Macklem said an upcoming FSB report on private credit vulnerabilities would be an "important step", though it will not be a magic bullet for dealing with a sector that officials judge too small to imperil financial stability, despite rising threats flagged by Bank of England Governor and FSB chair Andrew Bailey this week. "I think it's a bit early to start to get super prescriptive about solutions," Macklem said. "It's not going to answer all the questions."

The FSB prioritized leveraged bets on sovereign bond markets in its first targeted attempt to deliver better data on the non-banking world, and has promised an update on that work by the middle of the year.

Polymarket eyes $400 million funding round at $15 billion valuation

Polymarket aims to capitalize on the explosive growth in prediction markets with its latest funding effort. Prediction markets platform Polymarket is targeting a $400 million fundraising round at a valuation of about $15 billion including the new money, The Information reported on Sunday, citing people familiar with the matter. The $15 billion figure is a 67% jump from Polymarket's $9 billion valuation established in October 2025, when Intercontinental Exchange (ICE), the parent company of the New York Stock Exchange, led a $2 billion investment. According to the report, the new round would add to ICE's existing stake and could bring total new financing to around $1 billion. Polymarket is also looking to bring in additional strategic investors alongside ICE. The company has been expanding its push into prediction markets as the sector gains momentum. Prediction markets have expanded from niche crypto platforms into a multi-billion-dollar financial market, with monthly volumes growing from roughly $1.2 billion in early 2025 to over $20 billion by January 2026, according to TRM Labs. The overall growth reflects both increased engagement from existing users and a broad influx of new participants, with leading platforms now reaching roughly 840,000 unique active wallets per month. Trading activity is increasingly concentrated on geopolitics, macroeconomics, and political events, which now account for a majority of total market volume. Kalshi and Polymarket currently dominate the prediction market space in terms of trading volume, liquidity, and user participation. Beyond political and sports markets, Polymarket has begun offering prediction contracts on commodities and individual equities, integrating real-time pricing data from oracle providers such as Pyth and Chainlink.

Singapore urges banks to fix security gaps amid concerns over Anthropic's Mythos AI

SINGAPORE'S financial regulator is urging banks to plug their cybersecurity holes, as concerns over Anthropic's latest AI model Mythos spread to Asia. The Monetary Authority of Singapore is coordinating with the country's cybersecurity agency to strengthen defences at critical infrastructure operators including banks, a spokesperson said in response to queries from Bloomberg about the risks posed by Mythos, a new AI model from Anthropic which the company sees as too dangerous to release widely. "Financial institutions need to redouble efforts to strengthen their security defences, pro-actively identify and close vulnerabilities, and raise vigilance on cyber hygiene, including timely security patching," the spokesperson said. Advances in artificial intelligence will "accelerate the discovery and exploitation of software vulnerabilities in IT systems," the spokesperson added. The Cyber Security Agency of Singapore published an advisory on April 15 warning of similar risks, without naming Mythos directly. The city-state's warning reflects rising global concern over Mythos as regulators discuss with financial firms how they are handling the cybersecurity risks raised by the model, which has so far been given only a limited release. Anthropic held back a wider release after finding the model was capable of discovering security holes that have gone undetected for years, fuelling alarm about a potential new era of cybersecurity attacks. US Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened an urgent meeting with Wall Street bank leaders in April to discuss the risks and precautions the firms are taking to defend their systems, Bloomberg reported. JPMorgan Chase chief executive officer Jamie Dimon, whose firm is testing Mythos, said on a recent earnings call that AI has made cyber risks worse, though it also offers better ways to boost defences. BLOOMBERG

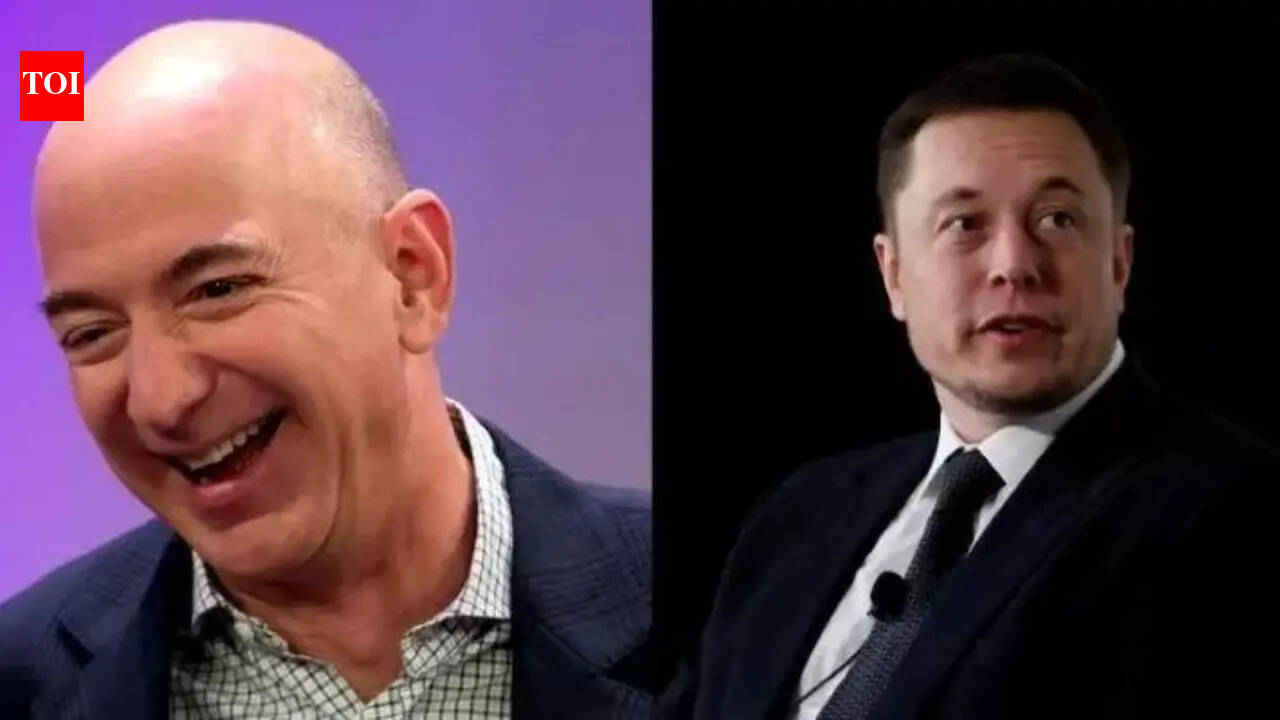

As Amazon and SpaceX are 'busy' sending complaint letters about each other to FCC, Elon Musk congratulates Jeff Bezos on Blue Origin's New Glenn vertical landing on a droneship

Jeff Bezos-owned Blue Origin launched the New Glenn rocket from Cape Canaveral, Florida, on April 19. The rocket was sent into orbit using the same booster from the previous mission, NG-2. This milestone advances Blue Origin's reusable rocket capabilities for lower-cost orbital launches, following the mission's partial success in attempting to deploy a direct-to-cellphone satellite despite an off-nominal payload orbit. Bezos shared a video of New Glenn's first-stage booster executing a successful vertical landing on a droneship in the Atlantic in an X post. The post caught the attention of SpaceX CEO Elon Musk, who congratulated Jeff Bezos on the achievement. "Congrats," wrote Musk.

Earlier this month, Elon Musk complimented Jeff Bezos' photograph of a Blue Origin rocket. Replying to a post sharing a nighttime photograph of Blue Origin's New Glenn rocket standing tall on the launch pad, the world's richest person said "Looks dood".

The compliments come as Amazon and SpaceX continue to file complaint letters against each other with the US Federal Communications Commission (FCC). Recently, Elon Musk's SpaceX filed a formal letter with the FCC about Amazon's petition to deny SpaceX's 1 million-satellite proposal for orbiting data centers. In the letter, SpaceX argues that if regulators apply Amazon's criticisms to its application, then they must also apply the same standards to Amazon founder Jeff Bezos' Blue Origin, which has itself filed an application for 51,600 AI satellites (orbital datacenter).

In its filing, Bezos' rocket company Blue Origin proposes to launch up to 51,600 datacenter satellites. The filing argues that the FCC should approve Blue Origin's plans because "insatiable demand for AI workloads" means orbiting servers represent "a complement to terrestrial infrastructure by introducing a new compute tier that operates independently of Earth-based constraints." The filing says that the explosive growth in artificial intelligence ("AI") workloads, machine learning, and cloud computing is driving unprecedented demand for data center capacity that is already encountering severe roadblocks to scale through terrestrial infrastructure alone.

SpaceX seems to be effectively turning Amazon's argument back on them, pushing for equal treatment across competing space projects.