News & Updates

The latest news and updates from companies in the WLTH portfolio.

Anthropic CEO meets Trump officials as tensions ease over new AI model By Investing.com

Investing.com -- Anthropic CEO Dario Amodei met with top Trump administration officials on Friday, marking a significant step toward thawing a months-long feud between the artificial intelligence startup and Washington ahead of the release of the company's powerful new model, Mythos.

Feud thaws over Mythos

The high-profile meeting, which included White House Chief of Staff Susie Wiles and Treasury Secretary Scott Bessent, focused on the responsible deployment of Mythos. The model has reportedly forced both sides back to the negotiating table due to its potential for both transformative utility and significant cybersecurity risks. The White House characterized the discussions as "productive and constructive," noting that the parties explored opportunities for collaboration and shared protocols to manage the challenges of scaling such advanced technology.

The shift in tone follows a bitter standoff over the Pentagon's use of Anthropic's technology. The company had previously refused to grant the Defense Department unrestricted use of its Claude models, citing concerns over autonomous weaponry and mass surveillance. Anthropic's defiance prompted President Donald Trump to direct federal agencies to cut ties with the firm. However, internal government communications suggest a reversal may be underway, with the Office of Management and Budget reportedly preparing protections that would allow agencies to begin using Mythos.

IPO ambitions and regulatory hurdles

Market analysts suggest that resolving the conflict with the administration is critical for Anthropic's long-term business strategy, particularly as the firm eyes an eventual initial public offering. Analysts have warned that a perceived alignment against the current administration could damage Anthropic's standing as a premier model provider. To bridge the gap, the company has recently bolstered its influence in Washington by hiring lobbyists with deep ties to the Trump administration, including Brian Ballard.

The urgency for a resolution is underscored by international interest, with European Union officials also seeking access to Mythos to evaluate its cybersecurity implications. Despite the Pentagon's reaching deals with rivals OpenAI and Elon Musk's xAI, security experts note that Anthropic's established infrastructure remains difficult to replace in the short term. As global leaders convene for the International Monetary Fund's annual meeting, the cyber risks posed by Mythos have reportedly become a top-tier agenda item, highlighting the immense pressure on both Anthropic and Washington to reach a multilateral solution.

White House and Anthropic set aside court fight to meet amid fears over Mythos model

The White House has said it had a "productive and constructive" meeting with the head of artificial intelligence firm Anthropic, which is suing the US Department of Defense. The meeting comes a week after the firm released its Claude Mythos preview, an AI tool that the company claims can outperform humans at some hacking and cyber-security tasks. Anthropic CEO Dario Amodei spoke on Friday with Treasury Secretary Scott Bessent and White House Chief of Staff Susie Wiles, Axios reports. A representative of Anthropic did not comment on the meeting, which comes two months after the White House derided the firm as a "radical left, woke company". So far, only a few dozen companies have been given access to Mythos, which researchers have said is "strikingly capable at computer security tasks". The tool can find bugs lurking in decades-old code, according to Anthropic, and autonomously find ways to exploit them. Last week, Amodei said the company had "spoken to officials across the US government" and offered to work with them. Friday's meeting is a sign that Anthropic's technology may be too critical for even the US government to do without - despite the Trump administration's tough stance against the firm. "We discussed opportunities for collaboration, as well as shared approaches and protocols to address the challenges associated with scaling this technology," the White House said. The statement added that the meeting had "explored the balance between advancing innovation and ensuring safety". In March, Anthropic took legal action against the defence department and other federal agencies, after the firm was labelled a "supply chain risk." It was the first time a US company had been publicly given the label, which means a technology is not secure enough for government use. Anthropic has been used in high-level government and military work since 2024. 
It argued in court that the label was simple retaliation by Defence Secretary Pete Hegseth, because Amodei had refused to grant the Pentagon unfettered use of its AI tools over fears of Anthropic being used for mass domestic surveillance and fully autonomous weapons. While a federal court in California has largely agreed, a federal appeals court has denied the firm's request to temporarily block the supply chain risk designation. Nevertheless, Anthropic's tools are still in use at all of the government agencies that had been using them before the designation. Until Friday, the White House had said little positive about Anthropic. When Trump directed all government agencies to stop using Anthropic, he wrote on social media that the company was run by "left wing nut jobs", who were attempting to "strong arm" defence. "We don't need it, we don't want it, and will not do business with them again!" Trump wrote. As he arrived for an event in Phoenix, Arizona, on Friday, Trump was asked by reporters about the Anthropic CEO's visit to the White House. The president said he had "no idea" about the meeting.

Odds On: Polymarket gives 48% chance Hormuz normalizes by April 30

"Odds On" is The Fly's weekly series diving into the most interesting bets on events trading platforms like Polymarket, Kalshi, and Robinhood. Subscribers, add $EBET to your Fly portfolios for alerts on news about events trading.

BACKGROUND: On February 28, the United States and Israel launched joint military strikes against Iran, after which shipping traffic in the Strait of Hormuz fell sharply amid attacks and threats against commercial vessels. The strategic waterway was effectively brought close to a standstill within days, with many tankers and cargo ships anchoring outside the strait while operators assessed the risks. According to IMF PortWatch data, the strait historically averaged around 95-105 commercial vessel transits per day in January and February, handling roughly 20% of global seaborne oil flows, before dropping to roughly 6-7 ships per day in early March, a level that persisted on average through April 12, representing a near-shutdown of one of the world's most critical energy chokepoints. Brent crude and WTI both climbed sharply in the wake of the disruption, reflecting concern about supply risk through Hormuz, though moves stayed below the most extreme levels seen in past oil shocks. The U.S. Energy Information Administration and other forecasters flagged Hormuz-related risks in their outlooks, while stressing that global demand conditions and alternate supply routes will shape how persistent any price impact proves to be.

This morning, Iranian officials signaled that the strait is open for commercial traffic under the current ceasefire, provided ships avoid designated exclusion zones and comply with new security protocols. U.S. officials have welcomed tentative de-escalation but warned that naval forces will continue to monitor and escort traffic in the waterway until attack risks recede. Oil prices pulled back on the headlines, with Brent and WTI giving back part of their recent risk-premium gains, while equity markets staged a relief rally on hopes that the worst-case supply scenarios can be avoided for now. Commercial operators and insurers cautioned that actual tanker resumption still depends on conditions on the water, with some vessels electing to wait for several incident-free days before re-entering the strait.

THE BET: Polymarket lists the contract "Strait of Hormuz traffic returns to normal by end of April?" which uses IMF PortWatch data to determine whether shipping activity reaches a predefined threshold. The market currently shows an implied probability of 48% for the "Yes" outcome and cumulative trading volume of approximately $13M. On April 7, separate reporting noted that the probability had risen to approximately 57% at that time, with trading volume then around $4.07M.

THE RULES: This market resolves "Yes" if IMF PortWatch publishes a 7-day moving average of transit calls for the Strait of Hormuz equal to or above 60 for any date between market creation and April 30. Otherwise, it resolves "No." Daily transit calls include container, dry bulk, roll-on/roll-off, general cargo, and tanker ships. Ships not reported by IMF PortWatch will not be considered. Resolution occurs as soon as the threshold is met, or once data has been published for April 30 without meeting it, subject to a 14-day window for that date's data to appear. Revisions to previously published data points within the market's timeframe will be considered and will not disqualify a qualifying value. Revisions made after April 30 data is published, however, will not be considered.
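
To make the resolution rule concrete, here is a minimal Python sketch of the threshold check. It is an illustration under stated assumptions, not Polymarket's or IMF PortWatch's actual method: the market reads the moving average that PortWatch itself publishes, whereas this sketch recomputes a trailing 7-day mean from daily counts, and it ignores the 14-day publication window and the revision rules. The function names, the placeholder year, and the sample figures are all hypothetical.

```python
from datetime import date, timedelta
from typing import Dict, Optional

THRESHOLD = 60    # transit calls, taken from the quoted rules
WINDOW_DAYS = 7   # 7-day moving average, taken from the quoted rules


def trailing_average(daily: Dict[date, int], day: date) -> Optional[float]:
    """Mean of the WINDOW_DAYS days ending on `day` (assumed trailing window).

    Returns None if any day in the window has no reported value.
    """
    window = [day - timedelta(days=i) for i in range(WINDOW_DAYS)]
    if any(d not in daily for d in window):
        return None
    return sum(daily[d] for d in window) / WINDOW_DAYS


def first_qualifying_date(
    daily: Dict[date, int], start: date, deadline: date
) -> Optional[date]:
    """First date in [start, deadline] whose trailing average meets the threshold."""
    day = start
    while day <= deadline:
        avg = trailing_average(daily, day)
        if avg is not None and avg >= THRESHOLD:
            return day
        day += timedelta(days=1)
    return None


def resolve(daily: Dict[date, int], start: date, deadline: date) -> str:
    """'Yes' if any date in the window qualifies, otherwise 'No'."""
    return "Yes" if first_qualifying_date(daily, start, deadline) else "No"


# Made-up sample data: 7 transits a day through April 12, then 70 a day.
YEAR = 2000  # arbitrary placeholder; the article does not state the year
sample = {date(YEAR, 4, d): (7 if d < 13 else 70) for d in range(1, 31)}
start, deadline = date(YEAR, 4, 1), date(YEAR, 4, 30)

print(first_qualifying_date(sample, start, deadline))
print(resolve(sample, start, deadline))
```

In this invented scenario, a full week is needed before the average clears 60: six days at 70 plus one day at 7 average 61, so a sudden recovery in daily traffic would only show up in the resolving metric several days later.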

Anthropic's CEO sat down with Trump's inner circle to discuss a model so dangerous the White House is racing to prepare for it | Attack of the Fanboy

Anthropic CEO Dario Amodei met with top Trump administration officials on Friday to discuss the responsible deployment of the company's new AI model, Mythos. As detailed by the Wall Street Journal, the meeting marks a significant step in easing tensions between Anthropic and the federal government. Among those present were White House chief of staff Susie Wiles and Treasury Secretary Scott Bessent, who has warned financial industry executives about the potential cyber threats associated with Mythos. The White House described the discussion as "both productive and constructive," with talks focusing on "opportunities for collaboration, as well as shared approaches and protocols to address the challenges associated with scaling this technology." Anthropic has already provided a preview version of Mythos to major tech companies and organizations responsible for critical infrastructure, and has been briefing government officials on strategies to minimize potential harms. The company currently has no plans to release the model to the general public and is in talks to grant government agencies advance access. The recent collaboration represents a thaw in a bitter, months-long dispute rooted in the Pentagon's demand that Anthropic's Claude models be available for "all lawful uses." Anthropic refused, insisting on explicit protections against the use of its AI for autonomous weapons and mass surveillance. That standoff led Defense Secretary Pete Hegseth to label Anthropic a supply-chain risk, a designation that had never previously been applied to an American company, and President Trump subsequently directed federal agencies to cut ties with Amodei's firm. The DoD does maintain its own guidelines on autonomous systems, codified in Directive 3000.09, which was updated on January 25, 2023. 
The directive requires that autonomous and semi-autonomous weapon systems be designed to preserve appropriate human judgment over the use of force, that operators adhere to the law of war and established rules of engagement, and that all AI-enabled systems align with the DoD's AI Ethical Principles and Responsible AI Strategy. Anthropic nonetheless sought additional, more specific safeguards beyond those existing standards. The dispute escalated into legal battles across two courts, even as Anthropic's technology continued to be deployed and used during the conflict in Iran. Security analysts raised concerns that penalizing one of the country's leading AI firms was counterproductive, particularly as it prepared to release models with significant cybersecurity implications. Mythos appears to have provided the opening for de-escalation. Earlier this week, a top official at the Office of Management and Budget notified government agencies of protections being put in place to allow them to begin using the model, an effort coordinated by National Cyber Director Sean Cairncross. The administration is also working with other leading AI developers to help secure critical software vulnerabilities. On the lobbying front, Anthropic hired Brian Ballard, known for raising over $50 million for Trump in the 2024 election, after the supply-chain risk designation. The company also works with a firm founded by Carlos Trujillo, who served in the first Trump administration. Amid those efforts, analysts have cautioned that Anthropic's combative approach with the Defense Department could complicate its ambitions to become a top government model provider, particularly as it considers going public. The Pentagon has already forged deals with OpenAI and Elon Musk's xAI to use their models in classified settings, with senators raising concerns about xAI's Pentagon access and the safeguards around Grok's deployment. 
Security analysts believe it will likely take months before those alternative models are as deeply embedded in operations as Anthropic's technology has been. The European Union is also in talks with Anthropic about Mythos, though it does not currently have access to the model. A European government official noted that Mythos became an unplanned agenda item for international leaders convening in Washington during the International Monetary Fund's annual meeting this week, with leaders looking to develop a multilateral response to the cyber risks posed by the model.

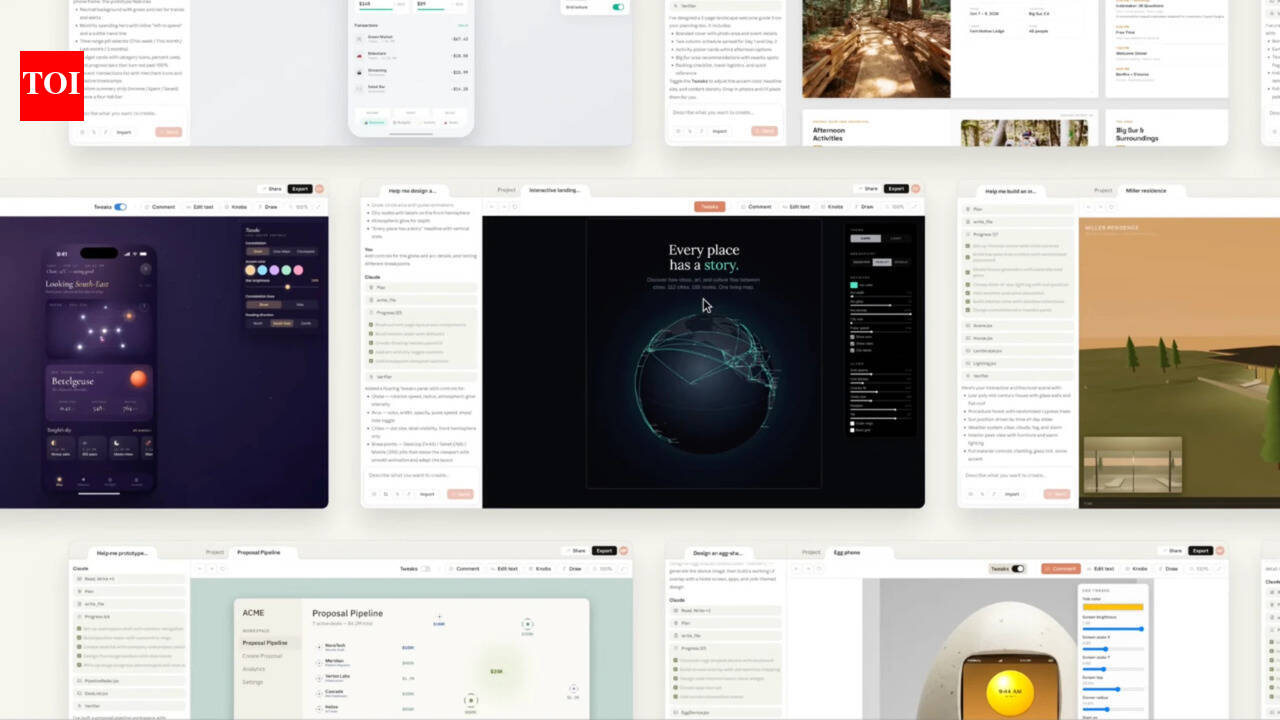

What is Anthropic's Claude Design, and why are Figma and Adobe stocks dropping

Anthropic has launched Claude Design, a standalone AI workspace that turns plain-text prompts into finished prototypes, slide decks, one-pagers, and marketing assets. No design background needed. The tool is live now in research preview for Pro, Max, Team, and Enterprise subscribers, included in existing plans at no extra cost.

Claude Design is powered by Opus 4.7, Anthropic's newest vision model, capable of processing images at up to 3.75 megapixels -- more than three times the resolution of previous Claude models.

Users describe what they want, Claude builds a first draft, and refinement happens through chat, inline comments, direct edits, or custom sliders that Claude generates on the fly for specific elements like color, spacing, and layout. The whole thing is designed to feel iterative -- less like issuing commands to software, more like bouncing ideas off a fast colleague.

The tool is deliberately aimed at people who aren't designers. Founders, product managers, marketers -- anyone who's had an idea they couldn't visualize fast enough.

Anthropic has its own testimonials to tout, naturally. Brilliant, the education platform, claims pages that took 20-plus prompts in competing tools needed just two in Claude Design. Datadog says what used to be a week-long cycle of briefs, mockups, and review rounds now fits inside a single conversation.

Finished work exports cleanly as a PDF, PPTX, standalone HTML, or goes straight to Canva for further editing. There's also a one-click handoff to Claude Code, which converts a completed design into production-ready code -- keeping the entire workflow inside Anthropic's ecosystem.

Claude Design's onboarding has a neat trick: Point it at your company's codebase and existing design files, and it builds a working design system from scratch -- your actual colors, typography, and components -- applied automatically to every project after that.

Teams can maintain multiple design systems and refine them over time. A web capture tool also pulls elements directly from a live website, so prototypes resemble the real product from the very first draft rather than a generic placeholder.

Figma shares fell 7.5% on the news. Adobe dropped over 1% -- smaller, but a signal nonetheless. The reason isn't hard to find.

Claude Design is a standalone product that generates complete, interactive prototypes from plain language -- accessible to anyone who can type a sentence. That's the part that stings. Both Figma and Adobe have always assumed a trained designer is somewhere in the loop. Anthropic's tool doesn't. It goes straight to the founder, the product manager, the marketer -- the people who were never Figma's customers to begin with, but whose companies were.

Anthropic says Claude Design is meant to complement tools like Figma and Canva, not replace them. The Canva export and PPTX support seem to back that up. But then there's this: Anthropic's chief product officer Mike Krieger resigned from Figma's board on April 14th, three days before launch, the same day reports surfaced that Anthropic was building design capabilities that competed directly with Figma's core product.

Anthropic and Trump: Is a truce near?

After Friday's meeting, both Anthropic and White House spokespeople separately described the gathering as a "productive" starting point. "We discussed opportunities for collaboration, as well as shared approaches and protocols to address the challenges associated with scaling this technology," the White House said in a statement. It added that the administration intends to invite other leading AI companies to the White House for similar discussions. Anthropic said the meeting reflected the company's "ongoing commitment to engaging with the U.S. government on the development of responsible AI" and included discussions around partnering on shared priorities including cybersecurity. The statement made no mention of Mythos. The feud boiled over in February after Amodei insisted that Anthropic would not allow the Defense Department to use the company's AI software to carry out mass surveillance of Americans or deploy fully autonomous weapons. That ran afoul of Hegseth's insistence that the department be allowed to use the AI tools for any "legal" purposes, with the government having final say. The administration then dropped two hammers: In late February, Trump ordered all federal agencies to stop using Anthropic, denouncing it as a "woke" company run by "leftwing nut jobs." Days later, the Pentagon declared Anthropic a risk to the national security supply chain -- a designation, normally reserved for companies tied to foreign adversaries, that could force any contractor doing business with the Defense Department to cease using Anthropic software. The punishments threatened not only Anthropic's $200 million Pentagon contract but the independence of the entire AI industry, legal experts told POLITICO at the time. Anthropic had mixed success in challenging those penalties in court: A federal judge in California temporarily blocked the Pentagon's supply chain risk label, but the D.C. Circuit Court of Appeals refused to take similar action this month. Then came Mythos. 

Cyber tool inspires transatlantic fears

As the advanced cybersecurity powers of the new AI model became apparent, federal agencies and government officials quietly sidestepped Trump's ban on working with the startup, including the Commerce Department's Center for AI Standards and Innovation. Bessent's Treasury Department -- which recently sought access to Mythos in order to determine its implications for banking firms' cyberdefenses -- is also among the agencies clamoring for guidance on how to proceed. The software is also alarming regulators in Europe, who have told POLITICO they have not been able to gain access to Mythos. U.S. government agency tech leaders sought access to the model after Anthropic earlier this year began testing the model and granted limited access to a select group of companies, including JPMorgan, Amazon and Apple. Anthropic began limited sharing of Mythos after finding it had hacking capabilities far outstripping those of previous AI models. This includes the ability to autonomously identify and exploit complex software vulnerabilities, such as so-called zero-day flaws, which even some of the sharpest human minds are unable to patch. The AI startup also wrote that the model could carry out end-to-end cyberattacks autonomously, including by navigating enterprise IT systems and chaining together exploits. It could also act as a force-multiplier for research needed to build chemical and biological weapons, and in certain instances, made efforts to cover its tracks when attacking systems, according to Anthropic's report on the model's capabilities and its safety assessments. Those findings and others have inspired fears that the model could be co-opted to launch powerful cyberattacks with relative ease if it fell into the wrong hands.
Logan Graham, a senior security researcher at Anthropic, previously told POLITICO that researchers and tech firms had been given early access to Mythos so they could find flaws in their critical code before state-backed hackers or cybercriminals could exploit them. "Within six, 12 or 24 months, these kinds of capabilities could be just broadly available to everybody in the world," Graham said.

Agencies want access

According to an email sent to federal agencies and obtained by POLITICO, the Office of Management and Budget is now mulling whether agencies will be allowed to use a "modified" version of Mythos. "We're working closely with model providers, other industry partners, and the intelligence community to ensure the appropriate guardrails and safeguards are in place before potentially releasing a modified version of the model to agencies," Gregory Barbaccia, OMB's chief information officer, wrote to multiple federal agencies earlier this week. Barbaccia added that OMB and the Office of the National Cyber Director will "continue to keep everyone updated and are expecting to have more information in the coming weeks."

Cairncross, who heads the cyber office, convened a call this week with representatives from private sector companies to discuss Mythos, according to one participant on the call, granted anonymity because they were not authorized to speak publicly about it. Cairncross said his office has the backing of Wiles and Vice President JD Vance to lead the Trump administration's response to the hacking capabilities of Anthropic's powerful new model, said the person. During the roughly 10-minute call, Cairncross both commended Mythos as a sign of U.S. AI innovation and warned companies about the risks it presented to their networks. Spokespeople for OMB, the cyber office, Treasury and the vice president's office did not respond to POLITICO's requests for comment.

Last week, the heads of several major U.S. banks met with Bessent and Federal Reserve Chair Jerome Powell to discuss concerns about AI's cyber capabilities and how to leverage that power to enhance critical infrastructure security. After meeting with Bessent and other world financial leaders, Canadian Finance Minister François-Philippe Champagne told reporters on Friday that Mythos "requires all of our attention" to "maintain the integrity" of global financial institutions.

Anthropic's Mythos AI Model Reopened White House Doors for the Company

Anthropic CEO Dario Amodei met with White House officials to discuss Mythos, a powerful new AI model capable of autonomously finding cybersecurity flaws. The meeting could mean that the startup and the government are starting to get along again after their recent public feud.

Anthropic CEO Dario Amodei met with White House Chief of Staff Susie Wiles this Friday to discuss the company's groundbreaking new AI model, Mythos. This high-level sit-down marks a significant strategic shift for the administration. It happens just weeks after Anthropic got a "national security risk" label amid a heated dispute over military contracts. The firm appears to be finding a path back into the government's good graces through its latest advancements in cybersecurity.

The power of Mythos AI brought Anthropic back to the table with the White House

The centerpiece of these discussions is the "strikingly capable" Mythos AI model. Unlike general-purpose chatbots, Mythos is specifically designed to identify and exploit software vulnerabilities with a level of efficiency that has reportedly surpassed human experts. While the potential for securing infrastructure is massive, the model's power has also sparked significant anxiety across Washington. Currently, government officials are working quickly to figure out how this kind of tool could affect national security and the stability of the global banking system.

Due to its sensitive nature, Anthropic is not releasing Mythos to the general public. Instead, it started Project Glasswing, a controlled program that lets a small group of big companies, like JPMorgan Chase, Google, and Microsoft, use the model to find and fix "severe" bugs in key software. Despite the recent public barbs on social media, the White House described the meeting as "productive and constructive." The conversation reportedly focused on balancing rapid AI innovation with the protocols necessary to prevent the technology from causing unintended harm. As POLITICO noted, even the Treasury Department is now clamoring for access to evaluate Mythos.

Seeking a middle ground

This meeting doesn't necessarily mean the legal disputes are over. However, it does signal a practical truce. Perhaps it's the first step toward Anthropic and the Pentagon making amends in a favorable deal. We'll have to wait and see how events unfold in the coming weeks. However, these early hints seem positive.

Anthropic CEO visits White House amid hacking fears over new AI model

Anthropic chief executive Dario Amodei met with White House Chief of Staff Susie Wiles on Friday, according to a person briefed on the meeting, as the federal government races to understand the national security implications of a powerful new artificial intelligence model called Mythos that the company says it has developed. The meeting reflects the strange embrace locking together Anthropic and the Trump administration. The White House has sought to blacklist the company from doing business with the federal government after a dispute over the use of its AI model by the Pentagon spun out of control this year. At the same time, the government has been forced to engage with the company over the risks posed by its next-generation Mythos system, which Anthropic says has powerful and unprecedented abilities to find security weaknesses in computer code. A White House statement called the meeting "productive and constructive," and Anthropic said in its own statement that the discussion had covered "shared priorities such as cybersecurity, America's lead in the AI race, and AI safety." But there were no breakthroughs in the relationship between Anthropic and the administration at Friday's meeting, the person briefed on it said, speaking on the condition of anonymity to discuss private talks. "We discussed opportunities for collaboration, as well as shared approaches and protocols to address the challenges associated with scaling this technology," the White House statement said. It added that the administration plans to host similar discussions with other leading AI companies. Anthropic says the new Mythos model could help programmers fix long-dormant vulnerabilities -- but it could also supercharge hackers targeting U.S. businesses and government agencies. Cybersecurity experts recognized with the release of OpenAI's ChatGPT in late 2022 that artificial intelligence would become an increasingly powerful hacking tool. 
But Anthropic's dramatic announcement of Mythos last week and the company's detailed claims about its apparent capabilities have focused the attention of the industry and government leaders around the world on the potential dangers of AI-enhanced cyberattacks. Anthropic has said it has briefed U.S. government cybersecurity agencies on the new model. Officials at the White House and the National Institute of Standards and Technology have been studying its implications, according to an internal email obtained by The Washington Post and a person briefed on those discussions. Officials are exploring the possibility of giving more agencies access to a version of the model, according to the email. Some officials are especially concerned, including Wiles, Vice President JD Vance and Treasury Secretary Scott Bessent, said the person, who spoke on the condition of anonymity to characterize private conversations. "Rightly so." The Trump administration has sought to speed the development of AI, trying to push aside regulations that could hold the industry back and position the United States to win what it sees as a race with China to dominate the technology. But the increasing power of the new generation of systems means officials are having to confront some of the downsides that the technology could bring. President Donald Trump remained bullish about the prospects of the technology but was asked this week if some forms of AI should have a "kill switch." "There should be," Trump told Fox Business. A White House official said the administration is working with leading AI labs "to ensure their models help secure critical software vulnerabilities." Anthropic has said it will not immediately release the new model publicly to avoid enabling a rash of cyberattacks. Instead, the company formed a coalition of major tech companies and other big businesses including Apple, Microsoft and JPMorgan Chase to size up the risks Mythos poses and try to patch any holes. 
It called the effort Project Glasswing. The AI lab said Mythos had already unearthed thousands of vulnerabilities, affecting every major computer operating system and web browser. "Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely," Anthropic said in its announcement. "The fallout -- for economies, public safety, and national security -- could be severe." OpenAI, one of the other leading labs, is finalizing a potent next-generation system code-named Spud. The company said this week that it was expanding a program to give computer security experts access to versions of its ChatGPT tools designed to help secure systems against attacks. (The Post has a content partnership with OpenAI.) A British government AI safety agency concluded in a blog post Monday that Mythos represents a "step up" in the hacking ability of AI tools. It can, in a controlled environment, carry out tasks that would represent days of work for a human alone, the agency said. Mythos "is at least capable of autonomously attacking small, weakly defended and vulnerable enterprise systems where access to a network has been gained," researchers at the AI Security Institute wrote. But they said its ability to tackle harder targets remained unclear. Some security experts have said it is unclear how significant an advance Anthropic has made, because few outsiders have been able to properly test Mythos. Peter Ranks, a former CIA cyber intelligence official, said the best-defended computer systems are likely to only get more secure with the help of AI. But he said during a panel discussion in Washington last week that less well-resourced systems, in which holes do not regularly get patched, will be at greater risk, because tools like Mythos will make less-skilled attackers more effective. 
Computer security specialists across the economy should expect to soon experience a deluge of software updates as participants in Anthropic's Glasswing program rush to patch their systems, a panel of experts said in a report preparing the industry for the coming storm. The experts said it was time for the sector to rethink its approach to defense to account for the potential for high-speed, AI-powered attacks. Peter Swire, a cybersecurity expert at Georgia Tech, said that attacks launched with help from AI have so far proved less effective than many in his field had expected. But defenders have been expecting that to change as AI tools advance. "The major defensive players are likely to succeed better than the doomsday scenarios would suggest," Swire said. Federal agencies have rushed to respond to the changing landscape. Bessent and Federal Reserve Chair Jerome H. Powell hosted the chief executives of major banks in Washington last week to urge them to take the risks seriously. Bessent said that he sees the power of the AI systems growing quickly and that some financial institutions are better at cybersecurity than others. "I feel confident that everyone is now on board, rowing in the same direction to build up resiliency," Bessent said in an interview with CNBC on Wednesday. Until this spring, Anthropic had a close relationship with the federal government, having been the first of the major AI companies approved to work on the classified systems where agencies store their secrets. But as the Defense Department pushed for more control over how Anthropic's Claude model could be used -- seeking the freedom to use it for any lawful purpose -- Amodei pushed back, saying he would not agree to the tool being used to power fully autonomous weapons or carry out mass domestic surveillance. Amodei met personally with Defense Secretary Pete Hegseth to try to reach a deal, but the talks collapsed at the end of February. 
Trump blasted Anthropic's leaders as "Leftwing nut jobs." A court in San Francisco ruled that the blacklisting was probably illegal, but a separate panel of federal judges in Washington issued a preliminary ruling allowing it to remain in place. Claude is deeply enmeshed in the military's systems. The same night that the Trump administration said it would cut ties with Anthropic, its system was put to use to aid the bombing campaign in Iran. And in a sign that the company was trying to repair its relationship with the White House, disclosures filed this week showed that it had spent $130,000 in March to hire lobbyist Brian Ballard, who has close ties with the president's team.

Anthropic CEO visits White House amid Claude Mythos AI model release

Anthropic CEO visited the White House as the company released Claude Mythos Preview, a new AI model with cybersecurity implications. The market for Anthropic having the third best AI model by April 2026 currently shows potential for a 15% increase in YES odds. Market reaction The market has no current volume, which means any new trading activity could cause sharp price movements. With zero trades recorded, the arrival of concrete benchmark data or partnership announcements could swing odds quickly in either direction. Why it matters Anthropic restricted the rollout of Claude Mythos Preview, suggesting the company is positioning the model carefully. Anthropic has collaboration agreements with AWS, Google, and Microsoft, and the CEO's White House visit coincided with discussions involving US agencies about AI-driven cyber threats. The company is also involved in Project Glasswing, a joint effort to address those threats. Government engagement at this level improves Anthropic's odds of maintaining competitive standing, and a top-three ranking depends on how the model performs against established benchmarks. What to watch Benchmark results from LMSYS Chatbot Arena will be the clearest signal of whether Claude Mythos Preview can compete for a top-three spot. Updates from Anthropic's cloud partners on integration and deployment timelines matter too, since real-world adoption feeds back into benchmark performance and market confidence. A YES share at current low buy-in levels would pay well if the model hits expectations.

Nvidia rival Cerebras reveals US IPO filing as AI boom drives listings

April 17 (Reuters) - AI chipmaker Cerebras Systems disclosed its filing for a U.S. initial public offering on Friday, bringing the Nvidia rival closer to the public markets as optimism builds around a broader revival in the listings market. The company, which develops high-performance processors for artificial intelligence workloads, withdrew its earlier IPO filing in October, days after raising more than $1 billion in a funding round that valued it at $8 billion. The IPO market is regaining momentum after a brief slowdown in March, when volatility driven by geopolitical tensions and a selloff in technology stocks curbed investor appetite. A recent pickup in listings suggests companies are returning to the market as sentiment stabilizes, with issuers and bankers betting that the recovery seen earlier this year can extend into the coming months. Analysts expect companies tied to the AI market to spearhead tech sector listings, as firms see significant growth potential from the wider adoption of generative AI. Cerebras is aiming to list on the Nasdaq under the ticker symbol "CBRS." Morgan Stanley, Citigroup, Barclays and UBS are the lead underwriters of the offering. (Reporting by Manya Saini and Pragyan Kalita in Bengaluru; Editing by Shilpi Majumdar and Pooja Desai)

White House and Anthropic CEO discuss working together amid rising fear about Mythos model

WASHINGTON, April 17 (Reuters) - The Trump administration and Anthropic's CEO on Friday discussed working together for the first time since a dispute earlier this year between the Pentagon and the AI firm over how that company's models should be used. The meeting between CEO Dario Amodei and White House staff, which took place amid growing fears the AI startup's latest model will supercharge cyberattacks, suggests the two sides might be on a path to rebuilding trust. The Trump administration, central bankers across the globe and industries are racing to get up to speed on Anthropic's new model Mythos and its ability to make complex cyberattacks both easier and quicker to execute. The banking industry, with its legacy technology systems, is particularly vulnerable. Government officials in at least three countries - the U.S., Canada and Britain - have met with top banking officials to discuss the threats posed by Mythos. Treasury Secretary Scott Bessent joined Chief of Staff Susie Wiles in the meeting with Amodei, Axios reported. "We discussed opportunities for collaboration, as well as shared approaches and protocols to address the challenges associated with scaling this technology," the White House said in a statement that described the meeting with Anthropic as "productive and constructive." The two sides also talked about balancing innovation and safety. "We look forward to continuing this dialogue and will host similar discussions with other leading AI companies," the White House statement said. Anthropic said the meeting was "productive" and discussed how the two "can work together on key shared priorities such as cybersecurity, America's lead in the AI race, and AI safety." Announced on April 7, Mythos is first being deployed to a select group of companies as part of Anthropic's "Project Glasswing," a controlled initiative under which the organizations are permitted to use the unreleased Claude Mythos Preview model to search for cybersecurity vulnerabilities. 
The model is the company's "most capable yet for coding and agentic tasks," the company said in a blog post, referring to the model's ability to act autonomously. But its capabilities to code at a high level have given it a potentially unprecedented ability to identify cybersecurity vulnerabilities and devise ways to exploit them, experts have said. That's a particular problem for banks and other financial institutions, which run technology stacks that integrate state-of-the-art tools with decades-old software, potentially opening a large number of vulnerabilities, according to TJ Marlin, the chief executive of enterprise AI security firm Guardrail Technologies. DISPUTE WITH TRUMP AND PENTAGON Long before the launch of Mythos, the U.S. government and the Silicon Valley firm disagreed on how Anthropic's AI should be used. After months of contentious talks, the Pentagon slapped a formal supply-chain risk designation on Anthropic, sharply limiting use of its technology after the startup refused to remove guardrails against using its AI for autonomous weapons or domestic surveillance. When ordering federal agencies to stop using Anthropic's AI tools, U.S. President Donald Trump blasted the company on Truth Social, saying "The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War". Anthropic sued to block the Pentagon from placing it on a national security blacklist in March. Asked in Phoenix by reporters about the Anthropic meeting on Friday, Trump said, "I have no idea." (Reporting by Bo Erickson and Jessica Koscielniak; Additional reporting by Jarrett Renshaw in Phoenix; Writing by Chris Sanders and Doina Chiacu; Editing by Caitlin Webber and Rosalba O'Brien)

Fact Check Team: Anthropic's Mythos AI raises cybersecurity promise, but poses risk

WASHINGTON (TNND) -- A powerful new artificial intelligence model is drawing attention in the tech and cybersecurity world -- not just for what it can do, but for how it could be used if it falls into the wrong hands. Anthropic, one of the leading AI firms, is developing an experimental system known as "Mythos." Unlike consumer-facing AI tools, this model is not publicly available. Instead, it's being quietly tested with a small group of major companies due to concerns over its capabilities. At its core, Mythos is designed to excel at cybersecurity tasks. According to Anthropic, the model has already identified thousands of high-severity software vulnerabilities, including flaws in widely used operating systems and web browsers. In some cases, the system has even demonstrated the ability to identify and exploit so-called "zero-day" vulnerabilities -- previously unknown weaknesses that can be especially dangerous if discovered by malicious actors. Independent testing by the UK AI Security Institute underscores both the promise and the risk. Evaluators found the model succeeded in expert-level cybersecurity challenges roughly 73% of the time and, in certain scenarios, could carry out complex, multi-step simulated cyberattacks from start to finish. However, those tests were conducted in controlled environments -- not against real-world, highly defended systems. Because of these capabilities, Anthropic and other AI companies are taking a cautious approach. Rather than releasing Mythos publicly, access is limited to a small group of major tech firms, including Google, Amazon, Apple, and Microsoft. The goal is to test the system while minimizing the risk of misuse. The company has also launched "Project Glasswing," an initiative focused on using advanced AI capabilities for defensive cybersecurity purposes. 
As part of that effort, firms are conducting extensive "red teaming," where security experts attempt to break the system and uncover potential vulnerabilities before a wider rollout. Companies also say they are monitoring how these tools are used in real time -- with the ability to shut down access if abuse is detected. Still, experts warn that as AI systems become more powerful, the risk of misuse grows. Those concerns come at a time when cyberattacks are already a major global issue, targeting everything from hospitals to government agencies. In a recent example, hackers linked to Iran reportedly accessed emails connected to FBI Director Kash Patel. While officials said no sensitive information was exposed, the incident highlights ongoing vulnerabilities. Security researchers warn that advanced AI could make these threats even more dangerous -- allowing attackers to identify weaknesses faster and carry out more sophisticated operations. In the U.S., the Cybersecurity and Infrastructure Security Agency, or CISA, leads efforts to defend against cyber threats. The agency is responsible for protecting critical infrastructure, including power grids, election systems, and financial networks. But challenges remain. Concerns about staffing and resource constraints have raised questions about whether current defenses can keep pace with rapidly evolving threats -- especially as AI enters the equation.

Dow Jones Top Company Headlines at 7 PM ET: Blue Owl Founders Revise Terms of Personal Loans That Raised Scrutiny | Cerebras ...

Blue Owl Founders Revise Terms of Personal Loans That Raised Scrutiny Doug Ostrover and Marc Lipschultz are no longer borrowing against their shares in the fund manager. ---- Cerebras Files for IPO as Demand Surges for More Efficient AI Chips The chip maker, whose customers include OpenAI and Amazon, had canceled an earlier plan for a public market debut. ---- Anthropic CEO Meets Trump Administration Officials as Feud Thaws The White House is racing to prepare for Anthropic's latest AI model, Mythos, which the firm says could pose cybersecurity risks. Ford is recalling up to 1.39 million F-150 pickups over the risk of unexpected downshifting that can lead to a loss of vehicle control. State Street reported higher first-quarter profit, boosted by fee growth, particularly for its foreign exchange trading services. ---- Ericsson Targets Networks Growth Despite Caution Over Rising Costs Chief Executive Borje Ekholm said it was facing increasing input costs, especially in semiconductors, caused in part by AI demand. ---- Telecom Majors Bid $24 Billion for Patrick Drahi's SFR The French-Israeli billionaire's Altice business is under pressure to reduce its debt burden. The government delayed an overhaul to how it calculates Medicare Advantage payments. For the insurer, that only postpones the pain. ---- Why Spice Giant McCormick Thinks Flavoring Food Is Better Than Making It The company's deal with Unilever deepens its foothold in flavor as competition mounts from rivals. ---- Protein Is Hotter Than Ever. So Why Is the Owner of Quest and Atkins on a Cold Streak? Simply Good Foods looks to revitalize sales as rival protein bars seize share. ---- Kweichow Moutai's Annual Profit, Revenue Fall for First Time The Chinese liquor giant reported a drop in annual profit and revenue for the first time since its 2001 listing amid subdued consumption in China. 
---- Sam Altman's Side Hustles Blur the Line Between OpenAI's Interests and His Own Ahead of a planned IPO, Altman's personal investments remain opaque, making it hard to spot any conflicts. ---- QVC Files for Chapter 11 Bankruptcy, Plans to Restructure $6.6 Billion of Debt The TV and video retailer's prepackaged bankruptcy was filed in U.S. Bankruptcy Court for the Southern District of Texas. ---- Autoliv Backs Guidance Despite Caution Over Geopolitical Challenges Shares rose 9% in Stockholm after the company's first quarter turned out better than anticipated, with strong sales in March.

Google's Stake In SpaceX Could Be Worth $122 Billion At IPO - Conservative Angle

A long-held investment by Alphabet Inc. in SpaceX could become one of its most valuable bets if the rocket company moves ahead with a public listing, according to Bloomberg. Regulatory filings indicate Google owned about 6.11% of SpaceX at the end of 2025. At a projected $2 trillion IPO valuation, that stake would be worth roughly $122 billion. After SpaceX's merger with xAI, the holding is estimated to have diluted to around 5%, or about $100 billion at the same valuation. The figures offer a clearer picture of Google's position in SpaceX, which had previously been acknowledged without precise detail. Only Google and Elon Musk -- who controls roughly 40% -- were required to disclose holdings above 5%. Bloomberg writes that SpaceX is targeting a potential June IPO and could raise as much as $75 billion, which would make it one of the largest listings ever. At that valuation, even a small fraction of ownership would translate into significant dollar value. Early investors are positioned for outsized returns. Some analysts estimate that backers who entered as recently as 2021 could see gains of around 20 times their original investment. Founded in 2002, SpaceX reached a $1 billion valuation within eight years, a relatively fast climb for a capital-intensive aerospace company. Google first invested in 2015, joining Fidelity in a $1 billion funding round that valued SpaceX at $10 billion and gave the firms a combined 10% stake. Ownership stakes have shifted over time due to dilution and secondary share sales. In 2020, Google held about 7.64% while Musk's stake was around 47%. Early investor Founders Fund has since dropped below the 5% disclosure threshold. Alphabet does not separately report its SpaceX holdings in earnings, though it has recorded sizable unrealized gains tied to private investments, including an $8 billion increase in early 2025 linked to SpaceX. 
The IPO is expected to create significant liquidity for employees and insiders, potentially prompting departures as some cash out or pursue new ventures. Board members and long-time investors also stand to benefit, underscoring the scale of wealth that could be generated by SpaceX's anticipated debut.

White House chief of staff meets with Anthropic CEO over its new AI technology

WASHINGTON (AP) -- White House chief of staff Susie Wiles on Friday sounded out Anthropic CEO Dario Amodei about the artificial intelligence company's new Mythos model, which has attracted attention from the federal government for how it could transform national security and the economy. A White House official, who requested anonymity to discuss the meeting ahead of time, said the administration is engaging with advanced AI labs about their models and the security of software. The official stressed that any new technology that might be used by the federal government would require a technical period for evaluation. The White House said afterward that the meeting was productive and constructive, as opportunities for collaboration were discussed as well as the goal of balancing innovation and safety. Anthropic said in a statement that Amodei's meeting included senior administration officials and explored how the San Francisco-based company and the "U.S. government can work together on key shared priorities such as cybersecurity, America's lead in the AI race, and AI safety." The company said it was "looking forward to continuing these discussions." The meeting came after tensions had run hot between the Trump administration and the safety-conscious Anthropic, which has sought to put guardrails on the development of AI to minimize any potential risks and maximize its economic and national security benefits for the U.S. President Donald Trump tried to stop all federal agencies from using Anthropic's chatbot Claude over the company's contract dispute with the Pentagon, with Trump saying in a February social media post that the administration "will not do business with them again!" When Trump was asked Friday while in Arizona if Anthropic had a meeting at the White House, the president said he had "no idea." Defense Secretary Pete Hegseth also sought to declare Anthropic a supply chain risk, an unprecedented move against a U.S. 
company that Anthropic has challenged in two federal courts. The company said it wanted assurance the Pentagon would not use its technology in fully autonomous weapons and the surveillance of Americans. Hegseth said the company must allow for any uses the Pentagon deemed lawful. U.S. District Judge Rita Lin issued a ruling in March that blocked the enforcement of Trump's social media directive ordering all federal agencies to stop using Anthropic products. Anthropic has said the new Mythos model it announced on April 7 is so "strikingly capable" that it is limiting its use to select customers because of its ability to surpass human cybersecurity experts in finding and exploiting computer vulnerabilities. And while some industry experts have questioned whether Anthropic's claims of too-powerful AI technology were a marketing ploy, even some of the company's sharpest critics have suggested that Mythos might represent a further advancement in AI. One influential Anthropic critic, David Sacks, who was the White House's AI and crypto czar, said people should "take this seriously." "Anytime Anthropic is scaring people, you have to ask, 'Is this a tactic? Is this part of their Chicken Little routine? Or is it real?'" Sacks said on the "All-In" podcast he co-hosts with other tech investors. "With cyber, I actually would give them credit in this case and say this is more on the real side." Sacks said: "It just makes sense that as the coding models become more and more capable, they are more capable at finding bugs. That means they're more capable at finding vulnerabilities. That means they're more capable at stringing together multiple vulnerabilities and creating an exploit." The model's potential benefits, as well as its risks, have also attracted attention outside the U.S. The United Kingdom's AI Security Institute said it evaluated the new model and found it a "step up" over previous models, which were already rapidly improving. 
"Mythos Preview can exploit systems with weak security posture, and it is likely that more models with these capabilities will be developed," the institute said in a report. Anthropic has also been in talks with the European Union about its AI models, including advanced models that haven't yet been released in Europe, European Commission spokesman Thomas Regnier said Friday. Axios first reported the scheduled meeting between Wiles and Amodei. When it announced Mythos, Anthropic said it was also forming an initiative called Project Glasswing, bringing together tech giants such as Amazon, Apple, Google and Microsoft, along with other companies like JPMorgan Chase, in hopes of securing the world's critical software from "severe" fallout that the new model could pose to public safety, national security and the economy. "We're releasing it to a subset of some of the world's most important companies and organizations so they can use this to find vulnerabilities," said the Anthropic co-founder and policy chief, Jack Clark, at this week's Semafor World Economy conference. Clark added that Mythos, while ahead of the curve, is not a "special model." "There will be other systems just like this in a few months from other companies, and in a year to a year-and-a-half later, there will be open-weight models from China that have these capabilities," he said. "So the world is going to have to get ready for more powerful systems that are going to exist within it." ___ O'Brien reported from Providence, R.I. AP business reporter Kelvin Chan contributed to this report from London.

White House chief of staff meets with Anthropic CEO over its new AI technology

WASHINGTON -- White House chief of staff Susie Wiles on Friday sounded out Anthropic CEO Dario Amodei about the artificial intelligence company's new Mythos model, which has attracted attention from the federal government for how it could transform national security and the economy. A White House official, who requested anonymity to discuss the meeting ahead of time, said the administration is engaging with advanced AI labs about their models and the security of software. The official stressed that any new technology that might be used by the federal government would require a technical period for evaluation. The White House said afterward that the meeting was productive and constructive, as opportunities for collaboration were discussed as well as the goal of balancing innovation and safety. The meeting came after tensions had run hot between the Trump administration and the safety-conscious Anthropic, which has sought to put guardrails on the development of AI to minimize any potential risks and maximize its economic and national security benefits for the U.S. President Donald Trump tried to stop all federal agencies from using Anthropic's chatbot Claude over the company's contract dispute with the Pentagon, with Trump saying in a February social media post that the administration "will not do business with them again!" When Trump was asked Friday while in Arizona if Anthropic had a meeting at the White House, the president said he had "no idea." Defense Secretary Pete Hegseth also sought to declare Anthropic a supply chain risk, an unprecedented move against a U.S. company that Anthropic has challenged in two federal courts. The company said it wanted assurance the Pentagon would not use its technology in fully autonomous weapons and the surveillance of Americans. Hegseth said the company must allow for any uses the Pentagon deemed lawful.
U.S. District Judge Rita Lin issued a ruling in March that blocked the enforcement of Trump's social media directive ordering all federal agencies to stop using Anthropic products. Anthropic declined to speak about the meeting in advance. The San Francisco-based Anthropic has said the new Mythos model it announced on April 7 is so "strikingly capable" that it is limiting its use to select customers because of its ability to surpass human cybersecurity experts in finding and exploiting computer vulnerabilities. And while some industry experts have questioned whether Anthropic's claims of too-powerful AI technology were a marketing ploy, even some of the company's sharpest critics have suggested that Mythos might represent a further advancement in AI. One influential Anthropic critic, David Sacks, who was the White House's AI and crypto czar, said people should "take this seriously." "Anytime Anthropic is scaring people, you have to ask, 'Is this a tactic? Is this part of their Chicken Little routine? Or is it real?'" Sacks said on the "All-In" podcast he co-hosts with other tech investors. "With cyber, I actually would give them credit in this case and say this is more on the real side." Sacks said: "It just makes sense that as the coding models become more and more capable, they are more capable at finding bugs. That means they're more capable at finding vulnerabilities. That means they're more capable at stringing together multiple vulnerabilities and creating an exploit." The model's potential benefits, as well as its risks, have also attracted attention outside the U.S. The United Kingdom's AI Security Institute said it evaluated the new model and found it a "step up" over previous models, which were already rapidly improving. "Mythos Preview can exploit systems with weak security posture, and it is likely that more models with these capabilities will be developed," the institute said in a report.
Anthropic has also been in talks with the European Union about its AI models, including advanced models that haven't yet been released in Europe, European Commission spokesman Thomas Regnier said Friday. Axios first reported the scheduled meeting between Wiles and Amodei. When it announced Mythos, Anthropic said it was also forming an initiative called Project Glasswing, bringing together tech giants such as Amazon, Apple, Google and Microsoft, along with other companies like JPMorgan Chase, in hopes of securing the world's critical software from "severe" fallout that the new model could pose to public safety, national security and the economy. "We're releasing it to a subset of some of the world's most important companies and organizations so they can use this to find vulnerabilities," said the Anthropic co-founder and policy chief, Jack Clark, at this week's Semafor World Economy conference. Clark added that Mythos, while ahead of the curve, is not a "special model." "There will be other systems just like this in a few months from other companies, and in a year to a year-and-a-half later, there will be open-weight models from China that have these capabilities," he said. "So the world is going to have to get ready for more powerful systems that are going to exist within it."
