News & Updates

The latest news and updates from companies in the WLTH portfolio.

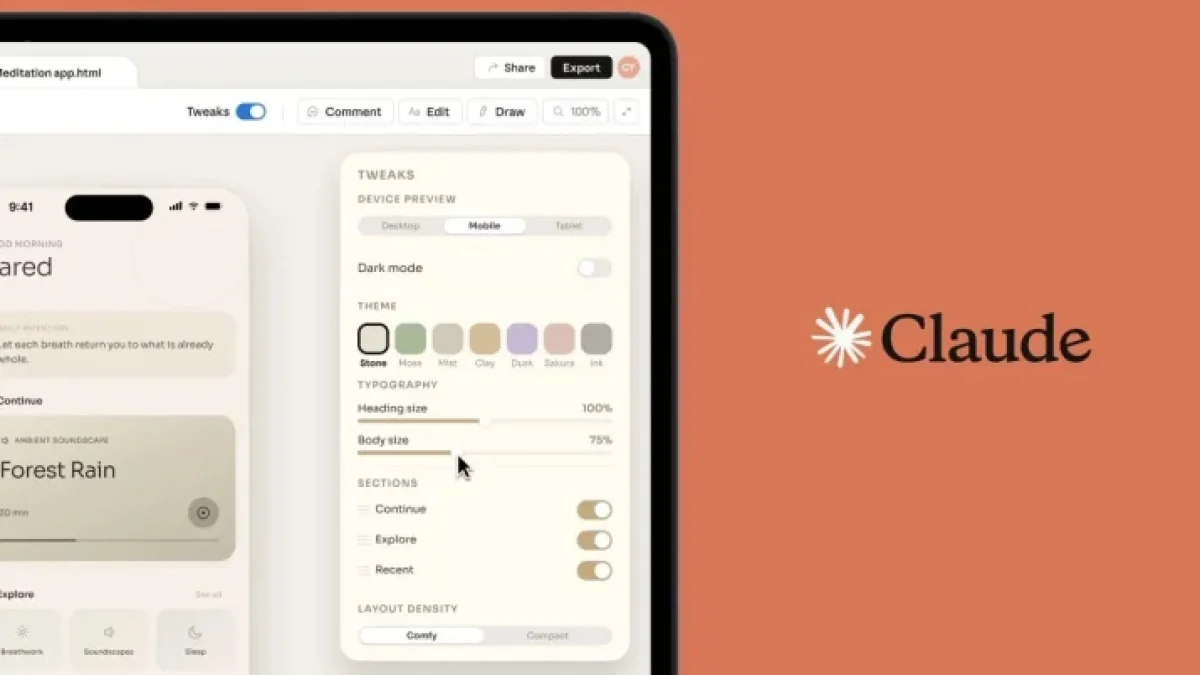

Anthropic Unveils Claude Design Post-Opus 4.7 Upgrade

Anthropic has launched a new product, Claude Design, expanding its suite of Mac tools for teams. The launch follows two earlier design-related updates from the company. Claude Design is built on Opus 4.7 and provides advanced features for teams working on design projects.

Overview of Claude Design

Claude Design streamlines the creation of design systems. During onboarding, it analyzes your codebase and design files to automatically generate a tailored design system that incorporates your team's colors, typography, and components for subsequent projects.

Key Features of Claude Design

* System Refinement: Teams can refine their design systems over time and maintain multiple systems simultaneously.
* Versatile Input Options: Start from a text prompt, upload documents in various formats, or use the web capture tool to extract elements from websites.
* Collaboration Tools: Claude Design simplifies collaboration among team members and makes exporting files easy.
* Integration with Claude Code: Work done in Claude Design can be transferred directly to Claude Code.

Availability and Rollout

Claude Design will roll out gradually throughout the day to Pro, Max, Team, and Enterprise subscribers. For Enterprise accounts, however, the feature is off by default and must be enabled by administrators.

Future Enhancements

Anthropic plans to add more integrations so teams can connect Claude Design to their existing tools, further streamlining workflows and making Claude Design more useful in teams' design processes.

For more information about Claude Design, visit El-Balad, which also carries details about Claude for Mac.

White House and Anthropic Hold 'Productive' Meeting, Aiming for a Compromise

Anthropic's chief executive met with White House officials on Friday for discussions that both sides described as "productive," as the Trump administration works to forge a compromise that would bring the artificial intelligence company's technology back into the government, according to U.S. officials and others briefed on the matter. Dario Amodei, Anthropic's chief executive, met with the White House chief of staff, Susie Wiles, Treasury Secretary Scott Bessent and others at the White House, the people said. The meeting was "both productive and constructive," the White House said in a statement, with conversations about how to collaborate and address the challenges of A.I. while exploring "the balance between advancing innovation and ensuring safety." Anthropic said in a statement that the discussions touched on "key shared priorities" such as cybersecurity and A.I. safety. Anthropic had been effectively cut off from working with the federal government after battling with the Pentagon earlier this year. The two sides had disagreed in negotiations over a $200 million contract about the use of A.I. in warfare, with the Pentagon later designating Anthropic a "supply chain risk." Friday's meeting was a potential first step to a deal. If officials reach a compromise with the company, it would likely exclude the Pentagon, two officials said. Some White House officials have argued that a fight with Anthropic is counterproductive and denies the United States some of the most powerful tech tools, officials said. This follows Anthropic's unveiling on April 7 of a powerful new A.I. model, Mythos, which is capable of identifying security vulnerabilities in software. Officials believe it is critical to access the model -- which Anthropic has made available only on a limited basis -- to help protect government networks from cyberattacks. 
Some White House officials were also frustrated that other officials failed to find a way to de-escalate the contract fight with Anthropic, especially given the potential for Mythos to wreak havoc on computer systems, officials said. "We look forward to continuing this dialogue and will host similar discussions with other leading A.I. companies," the White House said in its statement. Anthropic added that the meeting reflected its "ongoing commitment to engaging with the U.S. government on the development of responsible A.I." and that it was "looking forward to continuing these discussions." The White House meeting was earlier reported by Axios. Neither the Pentagon nor Anthropic had shown much willingness to settle their dispute. During contract negotiations, Anthropic had sought assurances that its powerful A.I. models -- which until recently were the only ones allowed on classified computers at the Defense Department -- would not be used for commanding autonomous lethal weapons or surveilling Americans. In response, the Pentagon said no private contractor could tell it how to use the technology. The fight came to a head on March 5, when Secretary of Defense Pete Hegseth labeled Anthropic a supply chain risk. The designation was previously used only for foreign companies that the government believed posed a risk to national security. Anthropic later sued the U.S. government over the designation in courts in California and Washington, D.C. Since then, Anthropic has told government officials that it is willing to give agencies access to Mythos so they can find and fix potential vulnerabilities in software and computer networks. At the Pentagon, engineers who work with Anthropic are petitioning the department to keep using the company's technology, according to two officials who have participated in meetings about A.I. technology this month. While the engineers were not averse to using new A.I. models from other companies, they did not want to be cut off from Anthropic's technology, which is used to analyze intelligence and handle sensitive data.
The engineers also urged the Pentagon to update to the newest Anthropic models available, the two officials said. Because the models are held on systems housed within the Pentagon, Anthropic cannot update them unless given access by defense officials, they said. Senior Pentagon officials declined to talk about Anthropic, citing the ongoing lawsuits. The department is using an older version of Anthropic's Opus A.I. model than it would be using had the dispute not occurred, current and former defense officials said. Other officials said they were pressing ahead with bringing models from OpenAI and Google to Pentagon computers, including a more advanced version of Google's Gemini that will be online for military use in the coming days. After Anthropic sued the U.S. government over the "supply chain risk" label, a judge in the U.S. District Court for the Northern District of California temporarily stopped the Pentagon from enforcing the designation in late March. In a separate ruling on April 8, the U.S. Court of Appeals for the District of Columbia Circuit denied Anthropic's request to stop the Pentagon from labeling the company a supply chain risk. Julian E. Barnes covers the U.S. intelligence agencies and international security matters for The Times. He has written about security issues for more than two decades.

Fact Check Team: Anthropic's Mythos AI raises cybersecurity promise, but poses risk

WASHINGTON (TNND) -- A powerful new artificial intelligence model is drawing attention in the tech and cybersecurity world -- not just for what it can do, but for how it could be used if it falls into the wrong hands. Anthropic, one of the leading AI firms, is developing an experimental system known as "Mythos." Unlike consumer-facing AI tools, this model is not publicly available. Instead, it's being quietly tested with a small group of major companies due to concerns over its capabilities. At its core, Mythos is designed to excel at cybersecurity tasks. According to Anthropic, the model has already identified thousands of high-severity software vulnerabilities, including flaws in widely used operating systems and web browsers. In some cases, the system has even demonstrated the ability to identify and exploit so-called "zero-day" vulnerabilities -- previously unknown weaknesses that can be especially dangerous if discovered by malicious actors. Independent testing by the UK AI Security Institute underscores both the promise and the risk. Evaluators found the model succeeded in expert-level cybersecurity challenges roughly 73% of the time and, in certain scenarios, could carry out complex, multi-step simulated cyberattacks from start to finish. However, those tests were conducted in controlled environments -- not against real-world, highly defended systems. Because of these capabilities, Anthropic and other AI companies are taking a cautious approach. Rather than releasing Mythos publicly, Anthropic has limited access to a small group of major tech firms, including Google, Amazon, Apple, and Microsoft. The goal is to test the system while minimizing the risk of misuse. The company has also launched "Project Glasswing," an initiative focused on using advanced AI capabilities for defensive cybersecurity purposes.
As part of that effort, firms are conducting extensive "red teaming," where security experts attempt to break the system and uncover potential vulnerabilities before a wider rollout. Companies also say they are monitoring how these tools are used in real time -- with the ability to shut down access if abuse is detected. Still, experts warn that as AI systems become more powerful, the risk of misuse grows. Those concerns come at a time when cyberattacks are already a major global issue, targeting everything from hospitals to government agencies. In a recent example, hackers linked to Iran reportedly accessed emails connected to FBI Director Kash Patel. While officials said no sensitive information was exposed, the incident highlights ongoing vulnerabilities. Security researchers warn that advanced AI could make these threats even more dangerous -- allowing attackers to identify weaknesses faster and carry out more sophisticated operations. In the U.S., the Cybersecurity and Infrastructure Security Agency, or CISA, leads efforts to defend against cyber threats. The agency is responsible for protecting critical infrastructure, including power grids, election systems, and financial networks. But challenges remain. Concerns about staffing and resource constraints have raised questions about whether current defenses can keep pace with rapidly evolving threats -- especially as AI enters the equation.

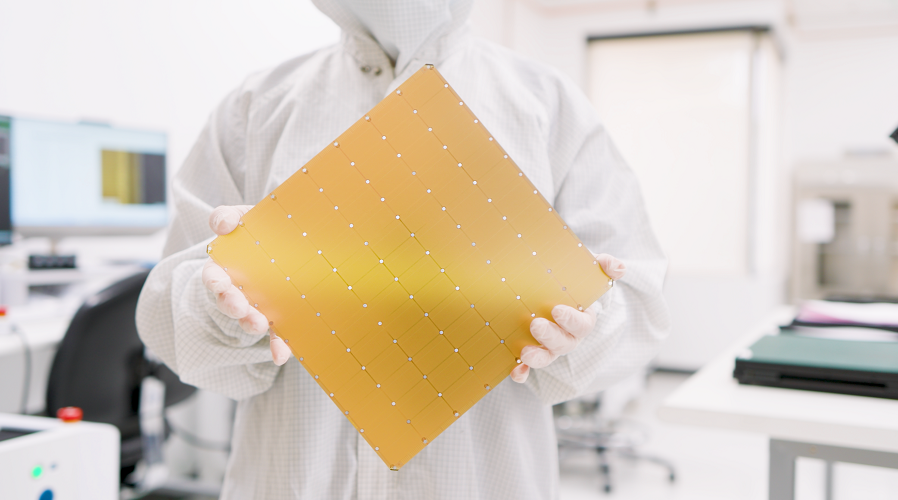

Breaking down AI chipmaker Cerebras' S-1 - PitchBook

From the large share of revenue coming from the UAE to special agreements with OpenAI, here's what you need to know about the Nvidia rival's IPO filing. After delays, AI chipmaker Cerebras has filed to go public. The Nvidia rival's S-1 shows revenue of $510 million in 2025, up 76% from the year before. The filing also discloses a $24.6 billion order backlog -- most of it tied to a December deal with OpenAI to supply 750 megawatts of AI compute through 2028, with options for nearly 3 gigawatts more by 2030. OpenAI lent Cerebras $1 billion and received warrants for 33 million near-free shares. But Cerebras' 2025 profit is driven in large part by a paper gain. In 2024, the company signed a deal to sell preferred shares to the Abu Dhabi tech company G42, which led it to record a $401 million loss that year. In 2025, however, the G42 deal came under US national security scrutiny and was eventually restructured. Cerebras was able to remove that liability from its balance sheet, recording a $363 million paper gain and making its 2025 financials look rosier. In reality, the company posted an operating loss of $75.7 million, wider than its 2024 operating loss of $21.8 million. Cerebras also remains heavily reliant on the United Arab Emirates, with entities in the country accounting for 86% of the company's revenue. MBZUAI, the Mohamed bin Zayed University of Artificial Intelligence, accounted for 62% of Cerebras' revenue in 2025, while G42 accounted for 24%. US business for the chipmaker shrank in 2025. Revenue from US-billed customers dropped from $282.7 million in 2024 to $187.6 million in 2025, a 34% decline. Founded in 2016 and based in Sunnyvale, Calif., Cerebras designs chips for AI-centric workloads. More recently, instead of selling its chips, the company began operating its own data centers powered by its chips, selling access to AI developers.
The S-1 disclosed that some of Cerebras' biggest VC backers are Alpha Wave, Benchmark, Foundation Capital, and Fidelity. In February, Cerebras raised a $1 billion Series H at a $23 billion valuation.
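The percentages in the PitchBook breakdown can be checked against the dollar figures it reports. The Python sketch below is only a back-of-the-envelope check, assuming the rounded figures quoted above (in millions of US dollars); the helper `pct_change` is a hypothetical name, and the results are approximate because the inputs are already rounded.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new (negative means a decline)."""
    return (new - old) / old * 100


# Rounded figures from the S-1 as reported above, in millions of US dollars.
revenue_2025 = 510.0
reported_growth_pct = 76.0
us_billed = {2024: 282.7, 2025: 187.6}
operating_loss = {2024: 21.8, 2025: 75.7}
uae_customer_share_pct = {"MBZUAI": 62, "G42": 24}

# Back out the 2024 revenue implied by the reported growth rate.
implied_revenue_2024 = revenue_2025 / (1 + reported_growth_pct / 100)

# Year-over-year change in revenue from US-billed customers.
us_change_pct = pct_change(us_billed[2024], us_billed[2025])

# How much wider the operating loss got from 2024 to 2025.
loss_widening = operating_loss[2025] - operating_loss[2024]

# Combined share of 2025 revenue from the two named UAE customers.
uae_total_pct = sum(uae_customer_share_pct.values())

print(f"Implied 2024 revenue:      ${implied_revenue_2024:.1f}M")
print(f"US-billed revenue change:  {us_change_pct:.1f}%")
print(f"Operating loss widened by: ${loss_widening:.1f}M")
print(f"UAE customers' share:      {uae_total_pct}%")
```

The implied 2024 revenue of roughly $290 million lines up with the $290.3 million SiliconANGLE reports for that year, and the US-billed drop works out to about 33.6%, which rounds to the 34% decline cited. The two named customers account for the full 86% UAE share.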

AI chip developer Cerebras Systems files to go public amid rapid revenue growth - SiliconANGLE

Cerebras Systems Inc., the developer of the wafer-size WSE-3 artificial intelligence chip, today filed to go public. The move comes about 18 months after the company's first attempt to list its shares. It filed for an initial public offering in September 2024 but withdrew the paperwork late last year. Cerebras explained at the time that its original IPO filing "no longer reflected the current state of our business." In 2024, the chipmaker lost $485 million on sales of $290.3 million. Last year, it swung to an $87.9 million profit, while revenue rose 76% to $510 million. The company disclosed in today's IPO filing that it has secured a $125 million revolving credit facility from Morgan Stanley. The funds will be used to finance deals with data center developers and operators. Cerebras is expanding its data center capacity to support the growth of its Training Cloud and Inference Cloud services, which provide access to hosted AI infrastructure. The company's services are powered by its flagship WSE-3 chip, an AI accelerator 58 times the size of the B200, a high-end Nvidia Corp. graphics card that debuted in 2024 and remains highly popular. The WSE-3 contains 4 trillion transistors organized into 900,000 cores. According to Cerebras, Morgan Stanley will expand its revolving credit line to as much as $850 million following the IPO. The company also disclosed in the filing that it has received a separate $1 billion loan from OpenAI Group PBC. Last December, the ChatGPT developer agreed to purchase 750 megawatts worth of inference infrastructure from Cerebras. The chipmaker revealed today that the deal is worth more than $20 billion. The agreement also gives OpenAI the option to add another 1.25 gigawatts of capacity through 2030. Cerebras has issued OpenAI warrants to purchase up to 33.4 million shares.
Those warrants will vest if the AI model developer goes through with its plan to purchase 2 gigawatts of computing capacity by 2030. Cerebras stated in its IPO filing that the contract "represents a substantial portion of our projected revenues over the next several years." Last month, Cerebras inked a chip deal with another high-profile customer: Amazon Web Services Inc. agreed to deploy the WSE-3 in its data centers as part of a new "disaggregated architecture." Large language models process prompts in two steps known as the prefill and decode stages. AWS' disaggregated architecture will use its internally developed AWS Trainium chips to perform prefill calculations, while the WSE-3 will be responsible for the decode phase. Decode calculations are similar to those used in prefill, but they require more memory bandwidth, a measure of how fast data can move between a chip's logic and memory circuits. The WSE-3 provides 27 petabytes per second of memory bandwidth, more than 200 times the amount offered by Nvidia's NVLink interconnect. Cerebras' product roadmap "includes the development of a disaggregated inference-serving solution," the company stated in its IPO filing. "Disaggregated inference would allow Cerebras to operate alongside other architectures, serving as the high-performance engine for decode while other systems handle prefill." Cerebras plans to list its shares on the Nasdaq under the ticker symbol "CBRS."
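The prefill/decode split described above can be illustrated with a toy model. This Python sketch is a simplified illustration under stated assumptions, not Cerebras' or AWS' implementation: `KVCache`, `PrefillWorker`, `DecodeWorker` and `serve` are hypothetical names, plain token IDs stand in for the attention key/value cache, and the "model" is a deterministic placeholder. It shows why decode is the bandwidth-heavy stage: prefill handles the prompt once, while every decode step re-reads the entire, growing cache to produce a single token.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class KVCache:
    """Toy stand-in for the attention key/value cache built during prefill."""
    entries: List[int] = field(default_factory=list)


class PrefillWorker:
    """Hypothetical prefill stage (the role AWS assigns to Trainium)."""

    def run(self, prompt_tokens: List[int]) -> KVCache:
        # The whole prompt is processed in one pass, producing the cache
        # that the decode stage will read on every step.
        return KVCache(entries=list(prompt_tokens))


class DecodeWorker:
    """Hypothetical decode stage (the role assigned to the WSE-3)."""

    def __init__(self) -> None:
        self.entries_read = 0  # proxy for memory traffic during decode

    def run(self, cache: KVCache, max_new_tokens: int) -> List[int]:
        generated: List[int] = []
        for _ in range(max_new_tokens):
            # Each step reads every cached entry to emit a single token,
            # which is why decode leans so heavily on memory bandwidth.
            self.entries_read += len(cache.entries)
            next_token = sum(cache.entries) % 50_000  # placeholder "model"
            cache.entries.append(next_token)
            generated.append(next_token)
        return generated


def serve(prompt_tokens: List[int], max_new_tokens: int) -> Tuple[List[int], int]:
    """Run prefill on one worker, then hand the cache to a separate decode worker."""
    cache = PrefillWorker().run(prompt_tokens)
    # In a real disaggregated deployment the cache would be transferred
    # between machines at this point.
    decoder = DecodeWorker()
    tokens = decoder.run(cache, max_new_tokens)
    return tokens, decoder.entries_read


tokens, reads = serve([1, 2, 3], max_new_tokens=3)
print(tokens, reads)
```

Running it prints `[6, 12, 24] 12`: three generated tokens, with decode reading 3 + 4 + 5 = 12 cache entries along the way. Decode's memory traffic grows with both the context length and the number of tokens generated, which is the workload the article says the WSE-3's memory bandwidth is meant to serve.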

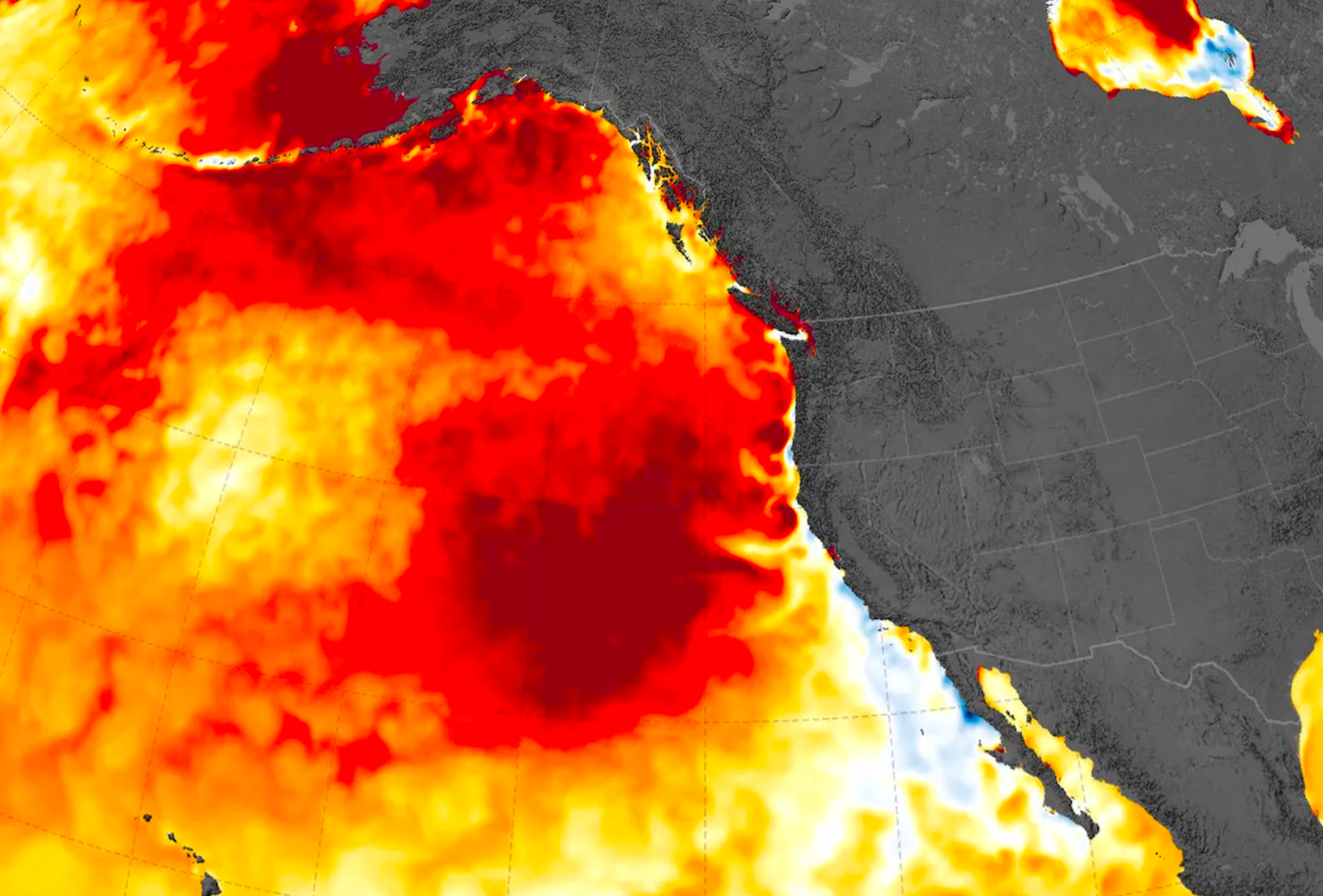

Marine Heatwave 'The Blob' Continues Record-Breaking Chaos off West Coast

NOAA notes unprecedented warming and shifts in marine wildlife; further impacts are expected.

The Blob is back. Or so it seems. A marine heatwave is wreaking havoc on the Pacific Ocean off California, and records keep falling, reminiscent of the extreme (and ominously named) ocean-warming event of 2015, dubbed "The Blob." And things might get worse. Add the approaching El Niño, which some forecasters say could become a "super" or even "Godzilla" event, and we could be in for a heater (literally) this summer.

Per the LA Times: "The ocean heat wave started forming at the end of last year but has worsened in recent weeks, according to readings from the Scripps Pier in La Jolla, which has broken more than 25 daily temperature records this year. The surface water temperature on Wednesday was 68.5 degrees -- 7.7 degrees above average for the date. The sea bottom was 67.6 degrees, the hottest April 15 in about 100 years of records."

Scripps' own numbers show records falling almost daily. Its most recent readings, for instance: "How does April 17, 2026 compare to other years? April 17th, 2026 is tied for the hottest April 17th on record."

So all indications are that the water will keep warming, potentially reigniting the menacing Blob of 2015. And what was that, exactly? Here's NOAA:

"You had a number of things occurring that by themselves were just astounding," said Nate Mantua, an atmospheric scientist at NOAA Fisheries' Southwest Fisheries Science Center. "When you put it all together you could hardly believe it."

Beyond the anomalies in marine wildlife (for example, "subtropical species such as tuna and swordfish appeared hundreds of miles beyond their typical range"), the 2015 heatwave was one for the books. And this next one could be bigger. NOAA added:

"It was unlike any the West Coast had ever experienced before. Starting in the fall of 2013, a ridge of high pressure dampened the normal winter winds across the eastern Pacific Ocean. The sun warmed the sea surface into an ever-expanding hot spot that soon became known as 'the Blob.'"

Stay tuned. There could be a lot of action on the West Coast this summer.

Security chaos | Patrol shot at in Homs, two Internal Security members injured

Homs province: Two members of the Internal Security Forces were injured when their patrol came under fire as they intervened to break up a fight in Al-Waleed district in Homs City. According to sources, the patrol was shot at directly while trying to contain the situation, and the two injured members were taken to hospital for treatment. Security forces arrested the shooter, who was wounded during the operation; he was also taken to hospital for treatment before facing legal proceedings.

Ex-Kennedy Center staffer alleges chaos and cronyism under Trump leadership

Unless courts intervene, the Kennedy Center will shut down this July for two years, as part of a roughly $250 million renovation. In the lead-up, there's been a wave of layoffs and a controversial rebranding by President Trump's allies. Josef Palermo was among those laid off and wrote "What I Saw Inside the Kennedy Center" for The Atlantic. Palermo joined Geoff Bennett to discuss more.