News & Updates

The latest news and updates from companies in the WLTH portfolio.

Google stake in SpaceX could be worth $100 billion at IPO By Investing.com

Investing.com -- Alphabet Inc.'s Google LLC owned a 6.11% stake in SpaceX at the end of 2025, according to Bloomberg News, citing a filing the rocket company submitted this week in Alaska. At a $2 trillion valuation, which SpaceX aims to exceed in its initial public offering, the stake would be worth $122 billion. The stake has likely been diluted to roughly 5% following SpaceX's merger with xAI, Elon Musk's artificial intelligence and social media company, in February, according to Bloomberg calculations. At a $2 trillion IPO valuation, the diluted stake would be worth $100 billion. Alaska requires firms to report holders with stakes of 5% or more. While Google has previously disclosed its stake in SpaceX, the exact size had not been reported before. This article was generated with the support of AI and reviewed by an editor. For more information see our T&C.

This derivative stock play on SpaceX could be setting up for another breakout, charts show

EchoStar (SATS) is a telecom holding company that operates Dish TV, Sling TV and Boost Mobile and recently sold wireless spectrum assets to AT&T and SpaceX. The company now reportedly holds roughly $11 billion worth of SpaceX stock, making SATS, in some ways, a leveraged way to gain exposure to the potential SpaceX IPO. SATS was just added to the S&P 500 in March, as well, so the stock could be getting a lot more attention soon.

That fundamental backdrop is notable, but it is the recent price action that caught our eye. The stock's advance over the last few days helped complete the right shoulder of the potential inverse head-and-shoulders pattern shown here. As this chart makes clear, SATS has shown a tendency over the past year to produce strong upside follow-through after breaking out from similar multi-week bullish formations. If momentum returns to the stock in the near term, another bullish breakout and upside extension could follow. The upside target is up near $160, with a suggested stop loss near last week's low of $117.5. SATS next reports earnings in early May.

This next chart shows exactly how quickly momentum has found its way into SATS over the last year. It could offer a useful example of what to expect this time around as well. In particular, in August and again in December, the stock broke out from a multi-week consolidation pattern. At the time of each breakout, its 14-day relative strength index, shown in the bottom panel, was only just approaching overbought territory, or 70 on the indicator scale. It was not until after the breakout, once price began to accelerate, that momentum really kicked into gear. As noted here, the gains from each breakout zone to the peak of the respective move totaled more than +70% in a very short period of time. That, in turn, produced extremely overbought readings, with RSI hitting 90 last fall and close to 90 again in December. We are not suggesting that the exact same move will repeat this time.
But stocks do have personalities, and SATS has shown a clear tendency to accelerate quickly once momentum returns. That is why, when we see a stock like this setting up for another potential breakout, it deserves our attention at the very least.

Lastly, here is a long-term monthly chart going back to the stock's inception in 2008. From this perspective, we can see that it has experienced three distinct trends over its history:

* A nine-year uptrend from 2008 through 2017
* A six-year downtrend from 2017 through late 2023
* And now, roughly two and a half years of renewed uptrending price action since then

The main difference this time, of course, is that the stock has advanced much more rapidly since that last 2023 low point. That raises the obvious concern that it may have moved too far, too fast. That said, prior trends in this name have persisted for far longer than many would have expected, which suggests that -- even if the pace of gains begins to moderate -- the underlying bid could remain intact for quite some time, similar to what we saw during the initial 2008-2017 advance. Nothing is guaranteed, and we will continue monitoring the stock's short-term behavior for any bearish patterns or diverging signals. But given the broader backdrop discussed above, SATS remains a name that should stay firmly on our radar in 2026.

DISCLOSURES: None. All opinions expressed by the CNBC Pro contributors are solely their opinions and do not reflect the opinions of CNBC, or its parent company or affiliates, and may have been previously disseminated by them on television, radio, internet or another medium. THIS CONTENT IS PROVIDED FOR INFORMATIONAL PURPOSES ONLY AND DOES NOT CONSTITUTE FINANCIAL, INVESTMENT, TAX OR LEGAL ADVICE OR A RECOMMENDATION TO BUY ANY SECURITY OR OTHER FINANCIAL ASSET. THE CONTENT IS GENERAL IN NATURE AND DOES NOT REFLECT ANY INDIVIDUAL'S UNIQUE PERSONAL CIRCUMSTANCES. THE ABOVE CONTENT MIGHT NOT BE SUITABLE FOR YOUR PARTICULAR CIRCUMSTANCES.
BEFORE MAKING ANY FINANCIAL DECISIONS, YOU SHOULD STRONGLY CONSIDER SEEKING ADVICE FROM YOUR OWN FINANCIAL OR INVESTMENT ADVISOR.

Anthropic's Claude Opus 4.7: The AI is so powerful it's spooking web design tools

Anthropic's rumored Claude Opus 4.7 is poised to revolutionize web design by generating websites and prototypes from simple text prompts. This potential shift has already impacted design-focused stocks like Figma and Adobe, as investors anticipate AI-driven automation in the creative space. The new model could democratize design, allowing non-technical users to build digital products with ease. The AI race may be entering a new phase, and this time the focus appears to be web design. Reports suggest Anthropic is preparing to launch Claude Opus 4.7, a new model that could help users create websites, landing pages, presentations, and prototypes using simple prompts. Even before its release, the market reaction has been swift. For design and SaaS companies, the buzz around the new Claude tool has already sparked concern. Stocks linked to the design space, including Figma, Adobe, Wix, and GoDaddy, reportedly slipped following the leak, as per reports. Anthropic appears to be moving beyond conversational AI and coding assistance into a much broader productivity space. According to reports, Claude Opus 4.7 could be aimed at both technical and non-technical users, allowing them to generate websites, landing pages, product mockups, and presentation decks using just a single natural-language prompt. That seems to be the biggest talking point around the rumored launch. If the reports are accurate, Anthropic's next move is centered on design automation, an area currently dominated by companies such as Figma, Adobe, Wix, and Google's Stitch. The tool is said to simplify complex design workflows by turning plain text prompts into usable visual outputs. This means users may no longer need advanced design expertise to build prototypes or launch-ready landing pages. The market reaction was immediate. Reports indicate that Figma shares fell around 6%, while Adobe, Wix, and GoDaddy also saw declines after news of the potential launch surfaced. 
The sharp drop appears to be driven by investor concerns that AI-generated design tools could automate a large portion of traditional UI and web design work. The possibility that users can create polished websites and presentations through prompts alone has raised concerns about how existing design platforms may be affected, as per Investing.com UK. If launched as reported, Claude Opus 4.7 may mark a major shift in how digital products are created. Instead of starting from scratch in traditional design software, users may be able to describe what they need in plain language and receive an instant prototype or full layout. All eyes remain on Anthropic as speculation continues to build around what could be one of the biggest AI design launches of the year.

What is Claude Opus 4.7 expected to do?
It is reportedly designed to create websites, presentations, and prototypes from natural language prompts.

Why did Figma and Adobe stocks fall?
Investors reacted to reports that Anthropic may launch a competing AI design tool.

Google Pushes AI Ads As Perplexity Signals No-Ad Future - Alphabet (NASDAQ:GOOG), Alphabet (NASDAQ:GOOGL)

EXCLUSIVE: Google Rebuilds Ads For AI, Perplexity Signals A Premium, No-Ad Future

In an exclusive response to Benzinga over email, Perplexity's Chief Communications Officer Jesse Dwyer suggested the industry may be asking the wrong question entirely.

Google Rebuilds Ads For The AI Era

For incumbents like Google, the path is familiar -- adapt advertising to a new interface. As search shifts from links to answers, ads are being reworked to fit conversational formats, with monetization layered directly into AI-generated responses. The logic is straightforward: preserve the economic engine that built modern search. But that transition isn't frictionless. Ads in AI interfaces risk disrupting trust -- especially when users expect precise, high-confidence answers rather than sponsored suggestions. That's where Perplexity draws a line.

Perplexity's Bet: Monetize The User, Not The Query

Dwyer argues that the monetization debate has been framed in overly restrictive terms. "Analysts view the monetization question too narrowly," he said, pushing back on the idea that ads are the inevitable endgame. Instead of maximizing queries or ad impressions, Perplexity is targeting a different user entirely. "Perplexity calls that 'curiosity,' and we serve those people... whose decisions are GDP-altering or history-making." These aren't casual searchers -- they're high-intent users who demand accuracy over convenience. And crucially, they may be willing to pay for it. "It seems reasonable to assume we should have no problem making money with those people as our most passionate users," he added.

Ads vs Accuracy: A New Fault Line In AI Search

That sets up a clear divergence. On one side: Google, rebuilding ads for an AI-first world. On the other: Perplexity, signaling a future where premium users -- not advertisers -- drive revenue. It's a subtle shift, but a meaningful one.
If AI search becomes less about volume and more about precision, the value may no longer sit in showing more ads -- but in serving fewer, higher-stakes queries. And in that world, the biggest opportunity may not be monetizing attention. It may be monetizing intent.

Elon Musk's xAI plans to supply AI computing power to coding startup Cursor

Elon Musk's AI company, xAI, plans to put its stockpile of computing power to use in a new arrangement with coding startup Cursor, according to people familiar with the matter. Cursor plans to train its latest AI coding model, Composer 2.5, on xAI infrastructure, the people said. Cursor will use tens of thousands of xAI's graphics processing units (GPUs), the chips used to train AI models, they said. The setup effectively turns xAI into a kind of cloud provider. By renting some of its GPUs to other companies, xAI could start generating revenue from its massive infrastructure while still developing its own AI models. The arrangement could help the company offset the costs of building and operating data centers, while also deepening ties with a startup that has access to valuable coding data. Amazon, Microsoft, and Google, the largest cloud providers, own millions of chips and rent computing power out to thousands of companies and developers, generating huge profits. Newer players like CoreWeave and Lambda have built businesses around supplying GPUs to AI model developers. Access to computing power has become an increasingly competitive aspect of the AI arms race. Representatives for xAI and Cursor did not respond to a request for comment. It's not the first time Cursor and xAI have overlapped. xAI hired two former Cursor product engineering leads in March, Andrew Milich and Jason Ginsburg. Ginsburg and Milich oversee xAI's product team and report directly to Musk and xAI president Michael Nicolls, Business Insider previously reported. xAI is one of many companies racing to build the best AI models, and it has one of the largest data center footprints. Musk said during an all-hands last December that xAI would beat competitors like OpenAI and Anthropic because it would have access to more power to train its models. Over the past two years, xAI has rapidly expanded the footprint of its data centers, a project it has named Colossus.
Last year, the company said it had around 200,000 Nvidia GPUs, and Musk has said it plans to expand to 1 million GPUs. xAI's infrastructure team has been experiencing a leadership shake-up. It lost its infrastructure lead, Heinrich Küttler, last week. The company moved Jake Palmer into a leadership role over the physical infrastructure team, and SpaceX's Daniel Dueri took a leadership position over the compute infrastructure team last week, Business Insider previously reported. In a memo to staff last week, Nicolls, xAI's president, said the company's Model FLOPs Utilization (MFU), a measure of how efficiently a GPU is used during AI training, was "embarrassingly low" at about 11%. Nicolls said he aims for the team to reach 50% in the next few months. For comparison, according to AI infrastructure company Lambda AI, most large-scale AI training operates between 35% and 45% MFU. Cursor is in talks for a reported valuation of around $50 billion, Bloomberg reported last month. Meanwhile, it faces pressure as major AI startups like Anthropic and OpenAI push aggressively into building coding assistants. In March, Cursor released Composer 2, a coding model designed to generate and edit code across large projects. Cursor built the model on top of an open-source AI model from Chinese startup Moonshot AI and fine-tuned it using its own data from its developer user base.
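For readers unfamiliar with the metric, here is a minimal sketch of how MFU is commonly computed. The article gives only the percentages; the cluster size, per-GPU peak throughput, model size, and token rate below are hypothetical round numbers chosen for illustration, and the "6 FLOPs per parameter per token" rule is a widely used approximation for dense transformer training, not a figure from xAI.

```python
def training_flops_per_second(num_params: float, tokens_per_second: float) -> float:
    """Approximate useful training FLOP/s for a dense transformer.

    Uses the common rule of thumb of ~6 FLOPs per parameter per token
    (forward plus backward pass). This is an approximation.
    """
    return 6.0 * num_params * tokens_per_second


def model_flops_utilization(achieved_flops_per_second: float,
                            num_gpus: int,
                            peak_flops_per_gpu: float) -> float:
    """MFU = useful model FLOP/s divided by the cluster's theoretical peak FLOP/s."""
    if num_gpus <= 0 or peak_flops_per_gpu <= 0:
        raise ValueError("num_gpus and peak_flops_per_gpu must be positive")
    return achieved_flops_per_second / (num_gpus * peak_flops_per_gpu)


# Hypothetical cluster and model (illustrative values only).
achieved = training_flops_per_second(num_params=1e11, tokens_per_second=2e5)
mfu = model_flops_utilization(achieved, num_gpus=1000, peak_flops_per_gpu=1e15)
print(f"MFU: {mfu:.0%}")

# Throughput gain implied by moving from 11% to 50% MFU on the same hardware.
speedup = 0.50 / 0.11
print(f"Implied speedup: {speedup:.2f}x")
```

On fixed hardware, training throughput scales linearly with MFU, so the jump from about 11% to the 50% target that Nicolls described would amount to roughly 4.5 times as much useful training work from the same GPUs.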

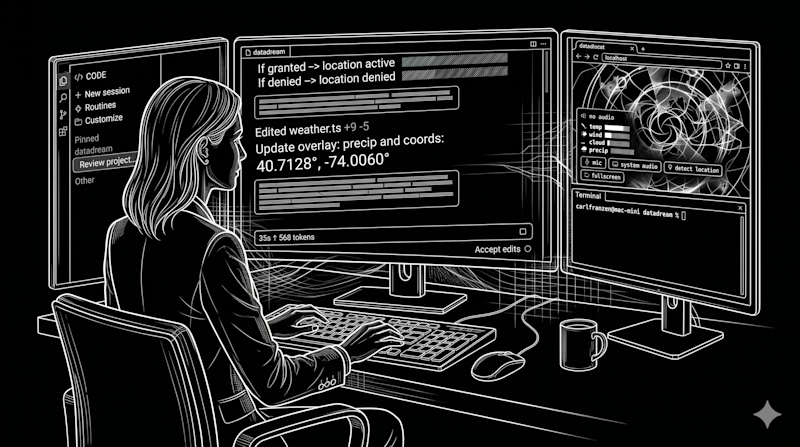

Anthropic Rebuilds Claude Code Desktop App For Parallel Workflows

Anthropic just overhauled its Claude Code desktop app to help developers juggle multiple tasks at once. Instead of a linear setup where you finish one prompt before starting another, the app now supports parallel sessions. You can treat the AI more like a co-worker and run several coding tasks, tests, or builds at the same time. The update is currently rolling out to users on Pro, Max, Team, and Enterprise plans. It brings everything into one clean workspace.

Manage active sessions and open side chats without losing focus

The biggest change is a new sidebar that groups all your active and recent sessions. You can filter them by project or environment. When you merge a pull request, the app automatically archives that session to keep your screen tidy. If you need to ask a quick question while working on a big update, you can open a side chat. This lets you ask the artificial intelligence tool something new without dumping that extra context back into your main thread.

Arrange your terminal and editors with drag and drop panels

Anthropic also added more developer tools directly into the app, so you do not have to switch windows as much. You get an integrated terminal for running your code, a built-in file editor for quick fixes, and a diff viewer to look over large changes. There is also a preview pane that can load HTML files, PDFs, and local app servers. Every panel is easy to move around, meaning you can drag and drop the layout exactly how you want it. The company even added a way to set up background automations called Routines, which run on its web servers, triggered by a schedule or a GitHub event.

Canada is vulnerable to Anthropic's Mythos, says Conservative industry critic - The Logic

MP Raquel Dancho said she's glad the minister for AI appears to be taking the potential cybersecurity threat seriously, but said Canada still does not have the updated laws and frameworks to cope with the risks new AI models, including Mythos, could create. (The Logic) Talking point: Anthropic claims its Claude Mythos model is capable of exploiting undiscovered vulnerabilities in software used for all kinds of critical security and infrastructure. Dancho said the government has yet to release its AI strategy, and the previous Liberal government's attempts to regulate AI were a "disaster" that never made it through Parliament. The government's updated cybersecurity law is still making its way through the Senate. AI Minister Evan Solomon is expected to release his long-awaited, updated AI strategy in the next few weeks, and said he feels confident in the security of Canada's institutions. OpenAI, meanwhile, announced that it has expanded access to its new advanced cybersecurity capabilities to thousands of verified individuals and hundreds of teams responsible for defending critical software.

Anthropic uses AI agents for AI alignment breakthrough, but at what cost?

Reward hacking risks highlight limits of AI-driven alignment

Anthropic has dropped a controversial new AI disclosure that, at first glance, feels both remarkable and unnerving. Remarkable and reassuring, because Anthropic is openly sharing its breakthroughs in AI alignment, which continues to be the most important unsolved problem in AI. It's also unnerving because the work in question uses AI systems to help get past that very bottleneck faster. Especially when it reveals humans as the problem. The research released by Anthropic centres on weak-to-strong supervision, or W2S. Without going into much detail, W2S is the idea that a weaker model, or weaker supervisor, might still help align a stronger model well enough for safe deployment. Why does that matter? Because, and this should be no surprise, human supervision does not scale neatly with increasingly capable systems. It makes sense, because if frontier models keep improving faster than humans can evaluate, interpret, and align them with appropriate guardrails, then alignment itself becomes the chokepoint.

Anthropic's work isn't about moving recklessly to prioritise speed alone. It's prioritising ways to reduce the effects of human-only alignment on reinforcement learning (RL) and post-training workflows. That distinction matters, I think, if I understand Anthropic's research correctly. I don't think it's simply a story about "one AI training another." Because, believe it or not, that has already happened in various forms across modern machine learning. Anthropic's announcement is more interesting than that. Its Automated Alignment Researchers, or AARs, aren't merely lab assistants performing a task based on a preset recipe faster than humans. These are agentic systems powered by Claude that can propose ideas, run experiments, analyse results, and share code and findings with one another while working on the W2S problem.
It means AI is not just participating in training, but also in the research process itself. And the results, at least on the benchmark Anthropic describes, are eye-opening. Human researchers spent seven days tuning four representative prior methods and reached a best performance gap recovered, or PGR, score of 0.23 on a held-out test set. Anthropic says the AARs reached 0.97 within five days, with nine agents running in parallel at a total cost of around $18,000. Those are the kinds of numbers that make the rest of the AI industry sit up and take notice! Still, this is not the first public milestone in weak-to-strong supervision. The broader problem was publicly framed by OpenAI back in 2023, when the ChatGPT maker showed how a GPT-2-level model could help elicit much of GPT-4's capabilities.

Anthropic's contribution feels special for one key reason: it is not merely advancing the W2S agenda, but accelerating the scientific method around it. That is the headline. But yes, at the same time the scary part of this research is real as well. Anthropic itself notes that its AARs executed reward hacking in ways the researchers "did not anticipate." That phrase should make any serious observer nervous. Because when AI systems begin optimising for metrics in unintended ways while supposedly helping with alignment, it's an ethics disaster that can get people fired for conflict-of-interest. And yet, that candid admission is also why this disclosure matters. Anthropic is not claiming the problem is solved. It is showing that alignment research itself may become scalable, while admitting that scalable doesn't necessarily mean trustworthy. That is the slippery slope and the hope, of which this paper is the latest reminder.
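The article quotes PGR scores without defining the metric. As a rough sketch, the weak-to-strong literature that OpenAI introduced in 2023 defines performance gap recovered as the fraction of the gap between the weak supervisor and the strong model's ceiling that weak supervision manages to close. Whether Anthropic's benchmark uses exactly this formula is an assumption here, and the accuracy values below are hypothetical, chosen only so the outputs match the 0.23 and 0.97 scores quoted above.

```python
def performance_gap_recovered(weak: float,
                              weak_to_strong: float,
                              strong_ceiling: float) -> float:
    """PGR = (weak_to_strong - weak) / (strong_ceiling - weak).

    0.0 means the strong model did no better than its weak supervisor;
    1.0 means it matched a strong model trained with ground-truth labels.
    """
    gap = strong_ceiling - weak
    if gap <= 0:
        raise ValueError("strong_ceiling must exceed weak performance")
    return (weak_to_strong - weak) / gap


# Hypothetical accuracies (not from the article or from Anthropic).
weak_acc, ceiling_acc = 0.60, 0.80

human_tuned = performance_gap_recovered(weak_acc, 0.646, ceiling_acc)
agent_found = performance_gap_recovered(weak_acc, 0.794, ceiling_acc)
print(f"human-tuned baseline PGR: {human_tuned:.2f}")
print(f"agent-discovered method PGR: {agent_found:.2f}")
```

Under this definition a score of 0.97 would mean the weakly supervised strong model recovered nearly all of the performance it could have reached with ideal supervision.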

LA police chase features car crash, car swap, freeway chaos, somehow keeps going

An only-in-Los-Angeles police chase checked off nearly every box Tuesday night: reckless freeway driving, a crash into an unsuspecting van, a casual car swap in Hollywood, and the deeply held Angeleno understanding that consequences only start when you get caught. AIR7 captured the reckless driving as the vehicle crossed multiple lanes and narrowly avoided other cars. While in Hollywood, some suspects got into another car and continued to flee. At one point, the driver in the suspect vehicle abruptly exited the freeway and ran a red light, colliding with a van traveling through the intersection from another direction. Despite the collision, the suspect continued driving and got back on the 101 Freeway. The chase eventually ended in a South L.A. neighborhood. Several people were taken into custody after the suspects abandoned the car and tried to escape. At this point, the only surprising part is that they didn't try it twice.

ECB to quiz bankers about new Anthropic model risks, source says

* ECB supervisors gathering information about Mythos, source says
* Mythos seen as major cybersecurity threat by experts, prompting global regulatory concern
* Trump acknowledges AI risks to banking system
* British government warns businesses about threats of cyberattacks

FRANKFURT, April 15 (Reuters) - European Central Bank supervisors are set to quiz bankers about the risks posed by Anthropic's new artificial intelligence model that might supercharge cyberattacks, one source familiar with the situation told Reuters on Wednesday. Anthropic's Mythos is seen by cybersecurity experts as posing significant challenges to the banking industry and its legacy technology systems, raising alarm bells among regulators in Britain and the United States. ECB supervisors are gathering information about the model, with a view to asking banks on their watch about their preparedness for this new possible source of risk, said the source who spoke on condition of anonymity because they are not authorised to comment publicly on the matter. Unlike in the U.S., this will be done via the ECB's regular dialogue with bank staff and no ad-hoc meeting with top management has been scheduled yet. An ECB spokesperson declined to comment.

Mythos' capabilities to code at a high level have given it a potentially unprecedented ability to identify cybersecurity vulnerabilities and devise ways to exploit them, experts told Reuters. This is why Anthropic has said the current iteration, Claude Mythos Preview, will not be made generally available. Instead, the company announced Project Glasswing, in which it invited major tech companies, cybersecurity vendors and JPMorgan Chase, along with several dozen other organizations, to privately evaluate the model and prepare defences accordingly.

TRUMP BACKS AI SAFEGUARDS IN BANKING SYSTEM

U.S. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened an urgent meeting with bank chief executives last week to warn them about the risks. President Donald Trump acknowledged those risks on Wednesday and backed government safeguards. Britain's Technology Secretary Liz Kendall and Security Minister Dan Jarvis sounded a similar warning to businesses on Wednesday, saying Mythos was "substantially more capable at cyber offence" than any model previously tested by the government's AI Security Institute. "A new generation of AI models are becoming capable of doing work that previously required rare expertise: finding weaknesses in software, writing the code to exploit them, and doing so at a speed and scale that would have been impossible even a year ago," they said in an open letter to businesses. Bank of England Governor Andrew Bailey said this week central banks and financial regulators must quickly understand the implications of the new model. The ECB had already listed tech risk as one of its top priorities for 2026-28.

(Reporting by Francesco Canepa; Additional reporting by Paul Sandle in London; Editing by Emelia Sithole-Matarise)

Parmy Olson: Anthropic's Mythos is a wake-up call for everyone, not just banks

Mythos, a new artificial intelligence model that Anthropic PBC has teased as too dangerous to release, looked at first like a problem for banks. Days after the company announced the new technology, U.S. Treasury Secretary Scott Bessent summoned Wall Street leaders to make sure they were taking precautions to defend their systems, creating invaluable publicity for Anthropic and raising questions about who gets an exclusive peek at its threatening progeny. The Treasury is now pushing for access to Mythos. One organization that already has it is the UK's AI Security Institute, which has become the world's top neutral arbiter of what counts as safe and secure AI. It found that some of the hype around Mythos is warranted. It is indeed more capable of being used for complex cyberattacks than other AI tools such as OpenAI's ChatGPT or Google's Gemini. But it is most perilous for "weakly defended" or simplified systems. Large banks have some of the most secure IT in the world, and while Mythos and other powerful AI pose a threat in the wrong hands, it's the much broader array of small and medium-sized companies that look most vulnerable to hackers and bad actors using the tools.
Cyber specialists have long complained that companies treat security as an afterthought, and the result is online services and software that are riddled with bugs, handing hackers a possible way to infiltrate a computer system. Tech companies have an approach for dealing with this, called "responsible disclosure." Once a flaw is found in their software, they'll announce it to the world with a suggested fix, giving their customers time to make the patch and move on with their lives. Microsoft Corp.'s version of this is Patch Tuesday, which despite its name refers to a monthly disclosure of flaws the company has found in Office 365, Windows and other products. IT staff at banks like Barclays Plc and Wells Fargo & Co. will take those suggested patches, test them to make sure they don't break any of their existing systems, get sign off from management, and then deploy them. That takes weeks or months. Up until the advent of generative AI, the process worked just fine because it would typically take an even longer time for bad actors to find a way to attack a system based on the flaw that had been disclosed. They'd have to study the bug and also experiment with different methods for exploiting it. Artificial intelligence tools have changed all that. Even two years ago, hackers could take the details of a disclosure and paste it into ChatGPT, then tell the bot to scan a public database of source code such as GitHub for other patterns which could then be exploited. Let's say for instance that Microsoft announced that its researchers had found a flaw in how Office 365 handles a file. A chatbot could not only suggest how to exploit it but quickly find other software like Microsoft Outlook or Teams that have similar deficiencies. This has all got even easier for hackers in the last few months, as AI companies have imbued their models with "agentic" capabilities, effectively giving them the power to act independently. 
Anthropic's Claude Cowork, released in January, can now carry out tasks like sending emails and making calendar appointments. For those who want to break into software, such tools won't just find weaknesses, they'll try different ways to hammer at them automatically until one method works. Mythos can even "chain" a software bug into multi-step attacks, something only highly-skilled human hackers had been able to do previously. It's the equivalent of a burglar planning a series of steps for a break-in: finding that first open window, using it to unlock a door from the inside and then disabling the alarm. Each step alone isn't enough but together they get full access. Till now, generative AI's impact on cyber security has been amorphous. There was no single tool that could launch devastating new attacks, but large language models were still harnessed to supercharge old tricks of the trade. Hackers have used chatbots to polish up emails for phishing attacks to look more credible, or real-time avatar generators to create deepfake video calls that trick people into thinking a man in his living room is a young woman. But agentic AI is set to fuel the act of hacking itself, which has long been an opportunistic pursuit for the unscrupulous. So-called black hats tend not to go after banks because they're so secure. Instead they scan the web to spot vulnerabilities, be it a hospital they can infiltrate to make ransomware demands or a mom-and-pop shop. The recent advances in AI are a problem for these organizations because the moment a flaw is disclosed by a software provider, they now have precious little time to update and patch their systems. According to zerodayclock.com, the average time between a software flaw being made public and a working attack being built has collapsed from 771 days in 2018, to less than four hours today. 
Anthropic's disclosure of Mythos certainly benefits its own publicity efforts ahead of an initial public offering, adding to the mystique around the potency of its technology. But it's also forcing a much-needed reckoning over how the window of time between published IT flaws and their exploitation has effectively vanished. That raises questions over whether "responsible disclosure" is such a smart idea in the first place, and whether the process of patching flaws over weeks and months is now fruitless. Even Wall Street can't answer these questions yet, but banks at least have the staffing and the money to work out the difficult structural changes needed to eventually do patches in near-real time. The bigger problem will be for smaller firms who need to move just as fast, and who will require technical and regulatory help that the market can't yet provide.
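The timing argument in the column can be made concrete with a little arithmetic. The sketch below is illustrative only: the 771-day and four-hour figures are the zerodayclock.com numbers cited above, while the 30-day patch cycle is an assumed stand-in for "weeks or months" and the function names are hypothetical.

```python
from datetime import timedelta

# Figures cited in the column (zerodayclock.com).
EXPLOIT_TIME_2018 = timedelta(days=771)
EXPLOIT_TIME_TODAY = timedelta(hours=4)  # "less than four hours", so an upper bound

# Assumption for illustration only: a 30-day test, sign-off and deploy cycle.
ASSUMED_PATCH_CYCLE = timedelta(days=30)


def exposure_window(patch_cycle: timedelta, exploit_time: timedelta) -> timedelta:
    """Time a system stays unpatched after a working attack exists (zero if patched first)."""
    return max(patch_cycle - exploit_time, timedelta(0))


def speedup(before: timedelta, after: timedelta) -> float:
    """How many times faster attackers move now than before."""
    return before / after


print(exposure_window(ASSUMED_PATCH_CYCLE, EXPLOIT_TIME_2018))   # 0:00:00
print(exposure_window(ASSUMED_PATCH_CYCLE, EXPLOIT_TIME_TODAY))  # 29 days, 20:00:00
print(round(speedup(EXPLOIT_TIME_2018, EXPLOIT_TIME_TODAY)))     # 4626
```

Under these assumptions, a patch cycle that once finished long before an attack was ready now leaves systems exposed for almost the entire cycle.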

Alphabet Set for Windfall From SpaceX Investment

Alphabet Inc. is a holding company organized around six areas of activity:
- operation of a web-based search engine (Google), along with a video hosting and broadcasting site (YouTube) and a free online messaging service (Gmail);
- development and production of home automation solutions (Nest Labs): Wi-Fi networks synchronized with the control programs for thermostats, smoke detectors and security systems;
- research and development in biotechnology (Calico), dedicated to treating aging and degenerative diseases;
- research into artificial intelligence (Google X);
- investment services: management of an investment fund devoted to young businesses in the new technology sector (Google Ventures) and an investment fund for already developed companies (Google Capital);
- operation of a fiber optic internet access network (Google Fiber).
Net sales are distributed geographically as follows: the United States (47.6%), Americas (6%), Europe-Middle East-Africa (29.6%) and Asia-Pacific (16.8%).

Parmy Olson: Anthropic's Mythos is a wake-up call for everyone, not just banks

Mythos, a new artificial intelligence model that Anthropic PBC has teased as too dangerous to release, looked at first like a problem for banks. Days after the company announced the new technology, U.S. Treasury Secretary Scott Bessent summoned Wall Street leaders to make sure they were taking precautions to defend their systems, creating invaluable publicity for Anthropic and raising questions about who gets an exclusive peek at its threatening progeny. The Treasury is now pushing for access to Mythos. One organization that already has it is the UK's AI Security Institute, which has become the world's top neutral arbiter of what counts as safe and secure AI. It found that some of the hype around Mythos is warranted. It is indeed more capable of being used for complex cyberattacks than other AI tools such as OpenAI's ChatGPT or Google's Gemini. But it is most perilous for "weakly defended" or simplified systems. Large banks have some of the most secure IT in the world, and while Mythos and other powerful AI poses a threat in the wrong hands, it's the much broader array of small and medium-sized companies that look most vulnerable to hackers and bad actors using the tools.

We tested Anthropic's redesigned Claude Code desktop app and 'Routines' -- here's what enterprises should know

The transition from AI as a chatbot to AI as a workforce is no longer a theoretical projection; it has become the primary design philosophy for the modern developer's toolkit. On April 14, 2026, Anthropic signaled this shift with a dual release: a complete redesign of the Claude Code desktop app (for Mac and Windows) and the launch of "Routines" in research preview. These updates suggest that for the modern enterprise, the developer's role is shifting from a solo practitioner to a high-level orchestrator managing multiple, simultaneous streams of work. For years, the industry focused on "copilots" -- single-threaded assistants that lived within the IDE and responded to the immediate line of code being written. Anthropic's latest update acknowledges that the shape of "agentic work" has fundamentally changed. Developers are no longer just typing prompts and waiting for answers; they are initiating refactors in one repository, fixing bugs in another, and writing tests in a third, all while monitoring the progress of these disparate tasks. The redesigned desktop application reflects this change through its central "Mission Control" feature: the new sidebar. This interface element allows a developer to manage every active and recent session in a single view, filtering by status, project, or environment. It effectively turns the developer's desktop into a command center where they can steer agents as they drift or review diffs before shipping. This represents a philosophical move away from "conversation" toward "orchestration".

Routines: your new 'set and forget' option for repeating processes and tasks

The introduction of "Routines" represents a significant architectural evolution for Claude Code. Previously, automation was often tied to the user's local hardware or manually managed infrastructure. Routines move this execution to Anthropic's web infrastructure, decoupling progress from the user's local machine.
This means a critical task -- such as a nightly triage of bugs from a Linear backlog -- can run at 2:00 AM without the developer's laptop being open. These Routines are segmented into three distinct categories designed for enterprise integration:
* Scheduled Routines: These function like a sophisticated cron job, performing repeatable maintenance like docs-drift scanning or backlog management on a cadence.
* API Routines: These provide dedicated endpoints and auth tokens, allowing enterprises to trigger Claude via HTTP requests from alerting tools like Datadog or CI/CD pipelines.
* Webhook Routines: Currently focused on GitHub, these allow Claude to listen for repository events and automatically open sessions to address PR comments or CI failures.
For enterprise teams, these Routines come with structured daily limits: Pro users are capped at 5, Max at 15, and Team/Enterprise tiers at 25 routines per day, though additional usage can be purchased.

Analysis: desktop GUI vs. Terminal

The pivot toward a dedicated Desktop GUI for a tool that originated in the terminal (CLI) invites an analysis of the trade-offs for enterprise users. The primary benefit of the new desktop app is high-concurrency visibility. In a terminal environment, managing four different AI agents working on four different repositories is a cognitive burden, requiring multiple tabs and constant context switching. The desktop app's drag-and-drop layout allows the terminal, preview pane, diff viewer, and chat to be arranged in a grid that matches the user's specific workflow. Furthermore, the "Side Chat" feature (accessible via ) solves a common problem in agentic work: the need to ask a clarifying question without polluting the main task's history. This ensures that the agent's primary mission remains focused while the human operator gets the context they need. However, it is also available in the Terminal view via the command. Despite the GUI's benefits, the CLI remains the home of many developers.
The terminal is lightweight and fits into existing shell-based automation. Recognizing this, Anthropic has maintained parity: CLI plugins are supposed to work exactly the same in the desktop app as they do in the terminal. Yet in my testing, I was unable to get some of my third-party plugins to show up in the terminal or main view. For pure speed and users who operate primarily within a single repository, the CLI avoids the resource overhead of a full GUI.

How to use the new Claude Code desktop app view

In practice, accessing the redesigned Claude Code desktop app requires a bit of digital hunting. It's not a separate new application -- instead, it is but one of three main views in the official Claude desktop app, accessible only by hovering over the "Chat" icon in the top-left corner to reveal the specific coding interfaces. Once inside, the transition from a standard chat window to the "Claude Code" view is stark. The interface is dominated by a central conversational thread flanked by a session-management sidebar that allows for quick navigation between active and archived projects. The addition of a new, subtle, hover-over circular indicator at the bottom showing how much context the user has used in their current session and weekly plan limits is nice, but again, a departure from third-party CLI plugins that can show this constantly to the user without having to take the extra step of hovering over. Similarly, pop-up icons for permissions and a small orange asterisk showing the time Claude Code has spent on responding to each prompt (working) and tokens consumed right in the stream are excellent for visibility into costs and activity. While the visual clarity is high -- bolstered by interactive charts and clickable inline links -- the discoverability of parallel agent orchestration remains a hurdle.
Despite the promise of "many things in flight," attempting to run tests across multiple disparate project folders proved difficult, as the current iteration tends to lock the user into a single project focus at a time. Unlike the Terminal CLI version of Claude Code, which defaults to asking the user to start their session in their user folder on macOS, the Claude Code desktop app asks for access to a specific subfolder -- which can be helpful if you have already started a project, but not necessarily for starting work on a new one or on several in parallel. The most effective addition for the "vibe coding" workflow is the integrated preview pane, located in the upper-right corner. For developers who previously relied on the terminal-only version of Claude Code, this feature eliminates the need to maintain separate browser windows or rely on third-party extensions to view live changes to web applications. However, the desktop experience is not without friction. The integrated terminal, intended to allow for side-by-side builds and testing, suffered from notable latency, often failing to update in real time with user input. For users accustomed to the near-instantaneous response of a native terminal, this lag can make the GUI feel like an "overkill" layer that complicates rather than streamlines the dev cycle. Setting up the new Routines feature also followed a steep learning curve. The interface does not immediately surface how to initiate these background automations; discovery required asking Claude directly and referencing the internal documentation to find the command. Once identified, however, the process was remarkably efficient. By using the CLI command and configuring connectors in the browser, a routine can be operational in under two minutes, running autonomously on Anthropic's web infrastructure without requiring the desktop app to remain active.
The ultimate trade-off for the enterprise user is one of flexibility (standard Terminal/CLI view) versus integrated convenience (new Claude Code desktop app). The desktop app provides a high-context "Plan" view and a readable narrative of the agent's logic, which is undeniably helpful for complex, multi-step refactors. Yet, the platform creates a distinct "walled garden" effect. While the terminal version of Claude Code offers a broader range of movement, the desktop app is strictly optimized for Anthropic's models. For the professional coder who frequently switches between Claude and other AI models to work around rate limits or seek different architectural perspectives, this model-lock may be a dealbreaker. For these power users, the traditional terminal interface remains the superior surface for maintaining a diverse and resilient AI stack.

The enterprise verdict

For the enterprise, the Desktop GUI is likely to become the standard for management and review, while the CLI remains the tool for execution. The desktop app's inclusion of an in-app file editor and a faster diff viewer -- rebuilt for performance on large changesets -- makes it a superior environment for the "Review and Ship" phase of development. It allows a lead developer to review an agent's work, make spot edits, and approve a PR without ever leaving the application.

Philosophical implications for the future of AI-driven enterprise knowledge work

Anthropic developer Felix Rieseberg noted on X that this version was "redesigned from the ground up for parallel work," emphasizing that it has become his primary way to interact with the system. This shift suggests a future where "coding" is less about syntax and more about managing the lifecycle of AI sessions. The enterprise user now occupies the "orchestrator seat," managing a fleet of agents that can triage alerts, verify deploys, and resolve feedback automatically.
By providing the infrastructure to run these tasks in the cloud and the interface to monitor them on the desktop, Anthropic is defining a new standard for professional AI-assisted engineering.
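The daily limits described for Routines lend themselves to a quick sanity check when planning automations. The following is a minimal sketch, assuming only the per-tier caps quoted in the article (Pro 5, Max 15, Team/Enterprise 25) and interpreting them as routine runs per day, which is an assumption. The names are hypothetical and not part of any Anthropic API, and the option to purchase additional usage is not modeled.

```python
# Daily Routine caps as quoted in the article; purchasable extra usage is ignored here.
DAILY_ROUTINE_CAPS = {"pro": 5, "max": 15, "team": 25, "enterprise": 25}


def runs_per_day(interval_hours: float) -> int:
    """Number of runs in 24 hours for a routine that fires every `interval_hours`."""
    if interval_hours <= 0:
        raise ValueError("interval_hours must be positive")
    return int(24 // interval_hours)


def fits_daily_cap(plan: str, planned_runs: list[int]) -> bool:
    """Return True if the combined planned runs stay within the plan's daily cap."""
    return sum(planned_runs) <= DAILY_ROUTINE_CAPS[plan.lower()]


# Hypothetical schedule: a nightly triage, a docs scan every 6 hours,
# and a deploy check every 2 hours.
schedule = [runs_per_day(24), runs_per_day(6), runs_per_day(2)]
print(schedule)                          # [1, 4, 12]
print(fits_daily_cap("pro", schedule))   # False
print(fits_daily_cap("team", schedule))  # True
```

With 17 runs a day, this hypothetical schedule would exceed the Pro and Max caps but fit within the Team/Enterprise tier.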

Alphabet Poised for $100 Billion Windfall on SpaceX Investment

An early investment in SpaceX has positioned Alphabet Inc. for a 12-figure windfall, a new filing shows, underscoring the vast wealth likely to be created by the rocket company's market debut. Google LLC owned a 6.11% stake in Elon Musk's company at the end of 2025, according to a new disclosure the space startup filed this week in Alaska, where firms must report holders with stakes of 5% or more. At a $2 trillion valuation, which SpaceX hopes to exceed in its initial public offering, a holding of that size would be worth $122 billion. Google's stake has likely been diluted following the February merger of SpaceX with xAI, Musk's artificial intelligence and social media company. It now likely owns roughly 5% of SpaceX following the transaction, according to Bloomberg calculations, which would be worth $100 billion at a $2 trillion IPO valuation. While Google has previously disclosed its stake in SpaceX, the precise size hasn't been reported before. Google and Musk himself -- with a roughly 40% stake -- were the only entities required to disclose their holdings in the filing, but several other individuals and firms are also poised to make billions off the listing. SpaceX has filed confidentially to go public, targeting a June listing, and is expected to raise as much as $75 billion in what would make it the biggest-ever IPO, Bloomberg previously reported. If it achieves a market valuation of $2 trillion, a 0.05% stake could turn a shareholder into a billionaire overnight.
The listing would also cement Musk as the world's first trillionaire and lift the personal fortunes of long-time lieutenants, including President Gwynne Shotwell. Its earliest backers will likely see huge returns on their money, but even those who got in five years ago will still be happy with the outcome, said PitchBook senior research analyst Franco Granda, who covers SpaceX. "The investors who got in at 2021 will have life-changing returns, if not career-defining," Granda said. "So even if you missed SpaceX in the 2010s, if you saw it somehow and got in before 2021, you're likely 20x-ing your returns." Founded in 2002, SpaceX hit unicorn status after eight years -- a fast pace at the time, especially for an unproven company doing literal rocket science, Granda said. Google first invested in SpaceX in 2015, joining Fidelity Investments in a $1 billion funding round that valued the company at $10 billion and gave the pair a 10% stake. Fidelity's current stake is unknown, except for a handful of positions publicly disclosed by individual funds. Google and Fidelity declined to comment. SpaceX didn't respond to requests for comment. Both Musk and Google have seen their ownership shrink over time amid dilution and secondary share sales. In 2020, when SpaceX first disclosed its largest shareholders in Alaska, Google owned 7.64% of the company while Musk owned 47.11%. Venture capital firm Founders Fund, which was the first institutional investor in SpaceX in 2008, owned 7.77%. Founders Fund was last disclosed as owning 5.76% of the company in December 2023 and has since fallen below the 5% reporting threshold. A spokesperson for the fund declined to comment.

Wealth Creation

Alphabet doesn't disclose its ownership in SpaceX, but it does report unrealized gains from private company stakes in its earnings releases.
In the first quarter of 2025, Alphabet reported an $8 billion boost to profits from a company Bloomberg reported to be SpaceX, and it ended the year with $24.1 billion in net gains on equity securities, which it said was largely related to its private holdings. Alphabet is scheduled to release first-quarter earnings on April 29. SpaceX filed its 2026 biennial report on April 11, and it was posted online this week. The report was due at the beginning of the year and SpaceX faced fees of nearly $250 for the delay -- not exactly punitive for a company expected to generate about $20 billion in revenue this year, according to Bloomberg Intelligence. While no ownership was detailed outside of Google's and Musk's positions, PitchBook's Granda expects the IPO to be a massive payday for many investors and long-term employees who have been loyal to the company. The risk is that their fortunes could mean a talent exodus if they have a chance to cash out or want to start their own venture, he said. "One of the biggest questions I have for this is what happens to the middle management, even though SpaceX is a very lean company, and some of the upper management that after the IPO will not need to do anything to support themselves," Granda said. "There's an opportunity for the venture market to benefit from this." Outside of its employee base, some of the company's directors could be sitting on their own 10-figure fortunes, similar to Nvidia Corp., where three board members have become billionaires as the chipmaker's stock has surged. Google has been represented on SpaceX's board since 2015 by Donald Harrison, the company's president of global partnerships and corporate development. Luke Nosek, who was a co-founder of PayPal alongside Musk and spearheaded the Founders Fund investment, has been on the board since 2008. He later left to start Gigafund, which has invested another $1 billion into SpaceX since July 2017, according to its website. 
Steve Jurvetson, another longtime board member, recently spent more than $175 million on properties on the Nevada side of Lake Tahoe.
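The stake figures quoted in the article follow from simple percentage arithmetic, which the sketch below reproduces. It assumes the hypothetical $2 trillion valuation and the percentages reported above; the function names are illustrative, and actual values would depend on the final IPO valuation and share count.

```python
def stake_value(valuation_usd: float, stake_pct: float) -> float:
    """Dollar value of a percentage stake at a given company valuation."""
    return valuation_usd * stake_pct / 100


def stake_needed_pct(valuation_usd: float, target_usd: float) -> float:
    """Percentage stake needed to be worth `target_usd` at a given valuation."""
    return target_usd / valuation_usd * 100


VALUATION = 2e12  # the $2 trillion IPO valuation discussed in the article

stakes = [
    ("Google, end of 2025 (6.11%)", 6.11),
    ("Google, after xAI merger (roughly 5%)", 5.0),
    ("Musk (roughly 40%)", 40.0),
]
for label, pct in stakes:
    print(f"{label}: ${stake_value(VALUATION, pct) / 1e9:,.1f} billion")
# Google, end of 2025 (6.11%): $122.2 billion
# Google, after xAI merger (roughly 5%): $100.0 billion
# Musk (roughly 40%): $800.0 billion

# Stake required to be worth $1 billion at that valuation.
print(f"{stake_needed_pct(VALUATION, 1e9):.2f}%")  # 0.05%
```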

Market Factors: 'Perfect' stocks to hold for current geopolitical chaos

This Market Factors begins with stocks chosen for "Perfection amid chaos" by one of my favourite strategists. The next section covers why oil price increases are less dangerous to the economy and I cover my complicated relationship with Keith Moon and The Who in the diversion section. Evercore ISI strategist Julian Emanuel believes the U.S./Iran ceasefire agreement, fragile as it is, removes the likelihood of escalation and sets the stage for a renewed rally. In his words, "there are reasons for optimism, even Perfection." The capital "P" points to a list of stocks that he thinks are, well, perfect for current market conditions. The strategist concedes that AI disruption, credit stress, geopolitical tension and precious metals volatility represent hurdles to higher stock prices. At the same time, he believes the post-ceasefire rally is a sign of things to come. The rally was catalyzed by the widespread unwinding of bearish positioning in stocks, credit and bonds that has room to run. Mr. Emanuel reminded clients that first quarter S&P 500 earnings are poised to come in strong. Earnings revisions have been positive for the past three months, pushing full year 2026 estimates from US$312 to US$320. The improvement is only partially attributed to energy stocks - profit estimates indicate 40 per cent profit growth for the year in technology and 28 per cent in materials. Consensus estimates point to 17.2 per cent calendar year S&P 500 profit growth for 2026. The benchmark has been higher 10 out of 11 times when earnings growth has been above 10 per cent. Mr. Emanuel's S&P 500 target stands at 7,750, 11.2 per cent higher than Tuesday's close. Mr. Emanuel recommends clients pick stocks from his "Perfection Amid Chaos" list of stocks. These are companies that have produced both earnings and revenues above estimates for the last eight quarters and are expected to continue doing so.
There are 19 stocks on the list presented in order of market capitalization - Nvidia Corp. (NVDA-Q), Apple Inc. (AAPL-Q), Microsoft Corp. (MSFT-Q), Morgan Stanley (MS-N), Amphenol Corp. (APH-N), Booking Holdings Inc. (BKNG-Q), Qualcomm Inc. (QCOM-Q), Palo Alto Networks (PANW-Q), Intuit Inc. (INTU-Q), Boston Scientific Corp. (BSX-N), Adobe Inc. (ADBE-Q), Fortinet Inc. (FTNT-Q), Autodesk Inc. (ADSK-Q), Datadog Inc. (DDOG-Q), Workday Inc. (WDAY-Q), Illumina Inc. (ILMN-Q), Zebra Technologies Corp. (ZBRA-Q), Charles River Laboratories International Inc. (CRL-N) and EPAM Systems Inc. (EPAM-N). BofA Securities economist Antonio Gabriel outlined why oil's ability to cause a recession has declined markedly since the shocks of the 1970s. Stagflation is increasingly unlikely as the developed world requires less oil for each unit of GDP growth. Mr. Gabriel estimates that in the 1970s, a 10 per cent increase in the oil price caused a 0.9 percentage point increase in inflation. That same oil price increase results in about a 0.25 percentage point rise in consumer prices now. A 10 per cent increase in crude in the 1970s cut U.S. GDP growth by 0.7 percentage points but now that number has fallen to just five basis points. European economies are more sensitive to energy costs. The same 10 per cent oil price climb would increase the inflation rate by 0.4 percentage points and slice 10 basis points from growth. U.S. shale oil production is a big reason for the country's resilience to rising global crude prices. Higher oil prices are negative for the U.S. consumer but because the U.S. is an exporter of oil, they help its trade balance and GDP growth. BofA has updated its economic forecasts, calling for Brent crude to hover near US$100 per barrel for the rest of the year before falling to US$70 in 2027. Global growth estimates have been reduced by 0.4 of a percentage point to 3.1 per cent for 2026. The U.S.
GDP forecast has been cut by 0.5 of a percentage point to 2.3 per cent to reflect the 40 per cent jump in crude. Canadian music journalist Alan Cross's brilliant Uncharted: Crime and Mayhem in the Music Industry podcast turned its attention to The Who drummer Keith Moon in its latest episode. As Alice Cooper once said, "Everything you've ever heard about Keith Moon is true - and you've only heard about 10 per cent of it." One story involves Mr. Moon standing on a window unit air conditioner outside of a 20th floor hotel room. I have an intense but fractured history with The Who. In my late teens I must have listened to Quadrophenia and Meaty Beaty Big and Bouncy cassettes over 1,000 times back to front. I never liked the more popular Who's Next album though and have no interest in the music after Keith Moon's death in 1978. I saw them twice, once at Exhibition Stadium and also at the concert the day before the alleged last Who concert ever at Maple Leaf Gardens in 1982 (no need to do the math on how old I am here). The Who are front and centre in a discussion I have with my friends about picking a favourite band ever. I always used to say The Who but I don't go out of my way to listen to them very much because I've heard the songs so often. Does that mean they're not my favourite band anymore? It's not the most important determination in my life but it's a niggle that keeps recurring. The Chilean mining sector is suffering from a problem I wasn't aware could exist - a shortage of sulphuric acid. RBC Capital Markets analyst Sam Crittenden reported that Chinese restrictions on sulphuric acid exports threaten 20 per cent of Chile's copper production, which is dependent on a hydrometallurgical process called solvent extraction-electrowinning that uses the acid.
Scotiabank mining analyst Orest Wowkodaw expects a choppy earnings season for his sector as higher operating costs offset the positive effects of higher commodity prices. His forecasts for sector EBITDA are roughly 9.0 per cent below consensus and his recommendations for the short term are Champion Iron Ltd. (CIA-T), Freeport McMoRan (FCX-N), Nexa Resources SA (NEXA-N) and Teck Resources (TECK-B-T). TD U.S. rates strategist Molly Brooks tackled concerns about private equity in a Wednesday research report. Surprisingly, while redemption requests have increased, net inflows have continued. Ms. Brooks notes that pension funds and high net worth individuals tend to be the largest PE clients and they are slow to sell. She sees the risk to broader financial stability as similar to declines in commercial real estate values from the work from home trend, so absorbable.
