News & Updates

The latest news and updates from companies in the WLTH portfolio.

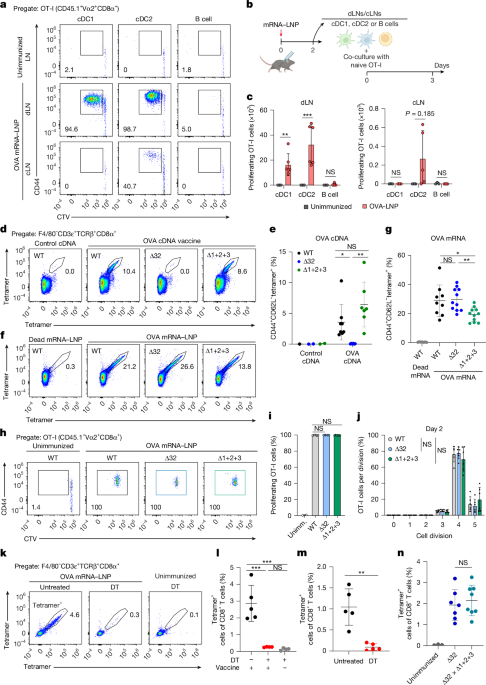

mRNA vaccines engage unconventional pathways in CD8+ T cell priming - Nature

All mice were housed in our specific-pathogen-free facility under 12 h-12 h light-dark cycles, maintained at 70 °F and 50% humidity, in compliance with institutional and AAALAC-accredited Animal Studies Committee guidelines at Washington University in St Louis, following all relevant ethical regulations. For CD11c-DTR BM chimeras, CD45.1 SJL recipient mice were lethally irradiated (1,050 rads X-ray). Within 12-18 h after irradiation, the recipient mice were i.v. injected with ≥5 × 10 BM cells obtained from CD11c-DTR donor mice. For WT- or MHC-I TKO-to-SJL BM chimeras, SJL recipient mice were depleted of NK cells by i.p. injection of 100 μg anti-NK1.1 antibody (PK136, Leinco Technologies, N123). The next day, the recipient mice were lethally irradiated (1,050 rads X-ray). Within 12-18 h after irradiation, the recipient mice were i.v. injected with ≥5 × 10 BM cells obtained from either WT or MHC-I TKO donor mice. For WT or Δ32-to-SJL or MHC-I TKO BM chimeras, SJL or MHC-I TKO recipient mice were lethally irradiated (1,050 rads X-ray). Within 12-18 h after irradiation, the recipient mice were i.v. injected with ≥5 × 10 BM cells obtained from either WT or Δ32 donor mice. For B6 and BALB/c allogeneic BM chimera, recipient B6 and BALB/c mice were lethally irradiated with 1,050 rads and 650 rads X-ray, respectively. Donor BM from B6 or BALB/c mice was collected and treated with ACK lysis buffer to remove erythrocytes. T cells were depleted from donor BM suspensions by incubating cells with biotinylated anti-CD4 (GK1.5, BioLegend) and anti-CD8β (YTS156.7.7, BioLegend) antibodies, followed by magnetic depletion using MagniSort Streptavidin Negative Selection Beads (Thermo Fisher Scientific). After T cell depletion, ≥5 × 10 cells of prepared BM were i.v. injected into irradiated recipient mice. All BM chimera recipients were allowed to reconstitute for at least 7 weeks before use in experiments. 
Flow cytometry and cell sorting were performed using either the Aurora flow cytometer (Cytek) or FACSAria Fusion (BD) system. Data acquisition was performed using BD FACSDiva software, and analyses were conducted using FlowJo v.10.10.0 (BD Biosciences). Surface staining was performed at 4 °C in the presence of Fc block (2.4G2) in magnetic-activated cell-sorting (MACS) buffer (PBS supplemented with 0.5% BSA and 2 mM EDTA). For depletion-based sort purification of OT-I T cells and splenic DCs, the following biotinylated anti-mouse antibodies were used: B220 (RA3-6B2), Ly6G (1A8), CD3ε (145-2C11), CD19 (6D5), TER119 (TER-119), CD8β (YTS156.7.7) and CD4 (GK1.5) (all from BioLegend), and CD105 (MJ7/18) (from Invitrogen). Biotinylated cells were detected with BV650-conjugated Streptavidin (BioLegend, 405231) and PE-Cy7-conjugated Streptavidin (BioLegend, 405206). For biotin- and fluorochrome-conjugated antibodies, the following anti-mouse antibodies were used. From BioLegend: AF488-conjugated B220 (RA36B2, 103225), AF647-conjugated SIGLECH (551, 129608), BV510-conjugated I-A/E (M5/114.15.2, 100752), FITC-conjugated KLRG1 (2F1/KLRG1, 138409), PE- and BV421-conjugated XCR1 (ZET, 148204 and 148216), PE-Cy7-conjugated CD24 (M1/69, 138508), APC-Cy7-conjugated SIRPα (P84, 110716), BV605- and BV510-conjugated CD8α (53-6.7, 100751 and 100752), APC-Cy7-conjugated CD45.1 (A20, 110716), PE-Cy7-conjugated CD45.2 (104, 109814), BV421-conjugated H-2Kb (AF6-88.5, 116525), PE-conjugated H-2Db (KH95, 111508), PE-conjugated Vα2 (B20.1, 127808), APC-conjugated CD44 (IM7, 103028 and), PerCP-Cy5.5-conjugated CD62L (MEL14, 104432), biotin-conjugated CD69 (FN50, 310924), FITC-conjugated CD3ε (145-2C11, 100306), FITC-conjugated CD11b (M1/70, 101206), BV711-conjugated CD4 (GK1.5, 100447), AF700-conjugated F4/80 (BM8, 123130), BV421-conjugated Ly6C (HK1.4, 128032), BV711-conjugated CD115/CSF-1R (AFS98, 135515) and APC-conjugated CD226 (10E5, 128810). 
From BD Biosciences: BUV395-conjugated CD45R/B220 (RA3-6B2), BUV395-conjugated KIT (2B8), BV421-conjugated CD127 (Sb/199) and PE-CF594-conjugated Flt3 (A2F10.1). From Invitrogen: APC-eF780-conjugated CD44 (IM7), APC-eF780-conjugated CD11c (N418) and PerCP-ef710-conjugated Sirpα (P84). For in vivo IFNAR1 blockade, 2 mg of anti-mouse IFNAR-1 antibody (MAR1-5A3, Leinco Technologies, I-401) was administered by i.p. injection every 7 days, beginning 1 day before immunization (day -1 and day 6). LNs were collected and enzymatically digested in complete IMDM (I10F; Iscove's modified Dulbecco's medium with 2ME, NEAA, glutamine, penicillin-streptomycin and 10% FBS) supplemented with 30 U ml of DNase I (Sigma-Aldrich) and 250 μg ml of collagenase B (Roche) for 30-45 min at 37 °C. After digestion, single-cell suspensions were filtered through 70-μm strainers, APCs were sorted as B220MHC-IICD11cXCR1CD172α (cDC1), B220MHC-IICD11cXCR1CD172α (cDC2) and B220MHC-II (B cells) cells. For cDC staining, spleen and LNs were collected and enzymatically digested in I10F supplemented with 30 U ml of DNase I (Sigma-Aldrich) and 250 μg ml of collagenase B (Roche) for 30-45 min at 37 °C. After digestion, single-cell suspensions were filtered through a 70-μm strainer and stained for flow cytometry analysis. For CD8 T cell staining, spleen, LNs and peripheral blood were collected, mechanically dissociated and passed through a 70-μm strainer for single-cell suspensions. After ACK lysis, cells were stained for flow cytometry. BM was collected from the femurs, tibias and pelvis by mechanical disruption using a mortar and pestle in MACS buffer. Cell suspensions were passed through a 70-µm strainer, erythrocytes were lysed with ACK buffer and the resulting cells were stained for flow cytometry. Mice were perfused with cold PBS containing 2 mM EDTA before tissue collection. 
Tibialis anterior and gastrocnemius-soleus muscles were dissected, trimmed of fat and nerves, and processed for immune-cell isolation using a Percoll gradient. Muscles were minced in IMDM and digested in collagenase D 1.0 mg ml, DNase I 30 U ml in IMDM at 37 °C for 45 min with shaking. Digestion was stopped with I10F, and suspensions were filtered through a 70-µm mesh and pelleted. Cell pellets were resuspended in 40% Percoll-RPMI and overlaid onto 80% Percoll-PBS, then centrifuged at 1,400g for 15 min without brake. Leukocytes at the 40%/80% interface were collected, washed with I10F and stained for flow cytometry. Cap 1 N1meΨ OVA mRNA was provided by Innovac Therapeutics or purchased from PackGene. OVA mRNA or dead (non-coding) mRNA LNPs were formulated in lipids at molar ratios of 50:38.5:10:1.5 (ionizable lipid SM-102:cholesterol:DSPC:DMG-PEG2000). LNP size and size distribution, encapsulation efficiency, stability and endotoxin level were rigorously tested. mLama4 mRNA was provided by R.D.S. For in vivo studies, 50 µl mRNA-LNP containing 10 µg mRNA was injected i.m. into the gastrocnemius muscle. Unless indicated otherwise, mRNA-LNP was administered on day 0 and day 7, and the immune responses were measured at day 11. Plasmid DNA encoding the full-length OVA was amplified in Escherichia coli DH5α (Invitrogen) and purified using the NucleoBond Maxi Plasmid DNA Purification kit (Macherey-Nagel). Empty pcDNA3.1(+) vector DNA was used as control. DNA vaccination was performed using a Helios gene gun (Bio-Rad). Mice were vaccinated with 4 µg of DNA at 3-day intervals (day 0, 3 and 6) for a total of three doses. DNA was delivered to non-overlapping shaved and depilated abdominal areas, with helium discharge pressure set to 400 p.s.i. Immune responses were measured 5 days after the last gene gun vaccination (day 11). 
Soluble ovalbumin (low endotoxin; Worthington, LS003509) was dissolved in PBS and emulsified 1:1 (v/v) with AddaVax (InvivoGen; vac-adx-10) at 4 °C by vortexing for 2 min. Mice were immunized i.m. with 50 μl of emulsion containing 10 µg OVA on days 0 and 7, into the same flank. Freeze-thawed Abelson-mOVA cells were used to standardize antigen quantity without cell proliferation, as previously described. In brief, Abelson-mOVA cells were generated by retroviral transduction of Abl-MuLV-transformed MHC-I TKO BM tumour cell line with a membrane-OVA construct (Abl-MuLV was a gift from B. Sleckman). Cells underwent three rapid freeze-thaw cycles and were stored at -20 °C until use. Mice were immunized with 3.3 × 10 freeze-thawed Abelson-mOVA cells. LNs and spleens from CD45.1 OT-I mice were collected, mechanically dissociated and passed through 70-μm strainers to generate a single-cell suspension. Erythrocytes were lysed with ammonium-chloride-potassium bicarbonate (ACK) lysis buffer. Cells were depleted of TER-119-, I-A/E-, Ly-6G- and B220-expressing cells by incubation with biotinylated antibodies for 20 min at 4 °C, followed by depletion with MagniSort Streptavidin Negative Selection Beads (Thermo Fisher Scientific). Naive OT-I cells were sorted as B220CD45.1CD4CD8Vα2CD44CD62L, washed with PBS and labelled with CTV proliferation dyes (Thermo Fisher Scientific). For ex vivo cross-presentation assays, 2.5 × 10 CTV-labelled OT-I cells were co-cultured with sorted cDC1, cDC2 or B cells isolated from dLNs or cLNs 2 days after immunization. Co-cultures were performed in wells of U-bottom 96-well plates. After 3 days, cells were washed, surface-stained with antibodies and analysed for CTV dilution and CD44 expression. For in vivo antigen-presentation assays, 5 × 10 CTV-labelled naive OT-I cells were i.v. transferred into recipient mice. Then, 1 day later, the mice were immunized with the indicated antigens. 
At the indicated timepoints, spleens were collected, erythrocytes were lysed with ACK buffer, and CD45.1 OT-I cells were analysed for CTV dilution and CD44 expression. In OT-I proliferation assays, the average division number was calculated as ∑(fraction of total OT-I cells in division n × n) based on the peak of the undivided control without immunization and the peak for each division automatically fit by FlowJo software. The gate boundaries were adjusted to the lowest population between two peaks. For T cell egress blockade, 1 mg per kg body weight of FTY720 (Sigma-Aldrich, SML0700) was administered by i.p. injection in 150 μl PBS 1 day after OT-I cell adoptive transfer. For blockade of naive T cell entry to lymphoid organs, splenectomy was performed by WashU Medicine Animal Surgery Core under anaesthesia using standard surgical removal of the spleen, followed by closure of peritoneum and skin. Mice were monitored daily for 4 days to ensure recovery. On day 5 after surgery, mice were injected i.p. with 200 μg anti-CD62L (MEL-14; Leinco Technologies, C2118). Then, 6 h later, the mice were adoptively transferred i.v. with CTV-labelled naive OT-I cells. The next day, mice were immunized with 0.1 μg OVA mRNA-LNP. Spleens were collected and passed through 70-μm strainers to generate single-cell suspensions. After erythrocyte lysis with ACK lysis buffer, cells were resuspended in MACS buffer. After counting with a ViCell analyser, 3 × 10 splenocytes were used for staining. APC- and PE-conjugated H-2Kb chicken ova 257-264 SIINFEKL tetramers (NIH Tetramer Core Facility) were added at a dilution of 1:100 in MACS buffer containing 10% Fc Block (2.4G2) and incubated at 37 °C for 15 min. Without washing, fluorochrome-conjugated antibodies for surface staining were then added directly and incubated at 4 °C for 30 min. The ELISpot assay was performed using the Mouse IFNγ (ALP) ELISpot Plus Kit (Mabtech) according to the manufacturer's instructions. 
In brief, mouse spleen cell suspensions (1 × 10-2 × 10 cells) after ACK lysis were incubated in triplicate for 20 h with or without the presence of 1 μM SIINFEKL peptide (AnaSpec). After extensive washes, biotinylated detection antibody was added followed by streptavidin-ALP and insoluble BCIP/NBT-plus substrate. Plates were scanned and analysed on an ImmunoSpot Reader (CTL). The contralateral inguinal LN was fixed for 6 h shaking at 4 °C in 4% paraformaldehyde (PFA) (Santacruz, sc-281692) that was adjusted to pH 9.0 with triethanolamine. LNs were washed out of PFA in 1× PBS with 10 U ml heparin, embedded in 4% low-melting-point agarose, and sectioned into 200-μm sagittal slices using the LeicaVT1200 vibratome. The sections were blocked in ADAPT-3D blocking buffer (Leinco, B673) for 1 h and then stained with primary antibodies against CD11c (Bio-Rad, MCA1369, N418) and F4/80 (BioLegend, 123101, BM8) diluted 1:200 from a stock concentration of 1 mg ml in ADAPT-3D blocking buffer and left shaking at room temperature overnight. The sections were washed in 1× PBS with 10 U ml heparin and 0.2% Tween-20, three times for 1 h each. The sections were stained with secondary antibodies overnight (Jackson ImmunoResearch, Cy3 goat anti-Armenian hamster IgG, 127-165-160; AF647 donkey anti-rat IgG, 712-605-153) diluted 1:300 from a stock concentration of 1.5 mg ml in ADAPT-3D blocking buffer after first passing through a 0.22-μm PVDF filter (Millex, SLGVR04NL) and with anti-CD169 (Bio-Rad, MCA947GA, MOMA-1) directly conjugated with CF488 (Biotium, 92253). The sections were washed in 1× PBS with 10 U ml heparin and 0.2% Tween-20 three times for 1 h each. For three sections per LN, a tilescan with 9-μm z stacks was acquired with a ×20 lens (air, 0.8 NA) on a Leica SP8 confocal microscope. Images were Gaussian or median filtered using Imaris v.10.1.1 and representative images were exported as a maximum-intensity projection. 
The 1956 tumour cell line expressing membrane-bound ovalbumin (1956-mOVA) was derived from the methylcholanthrene (MCA)-induced fibrosarcoma 1956 tumour (from R.D.S.), as previously described. The original tumour was generated in a female C57BL/6 mouse, tested for mycoplasma contamination and banked at low passage. For experiments, tumour cells were thawed from frozen stocks and cultured for 4-6 days in vitro with one intervening passage in RPMI medium supplemented with 2ME, NEAA, glutamine, penicillin-streptomycin and 10% FBS (R10F). On the day of injection, tumour cells were collected by trypsinization, washed three times with PBS and resuspended at 6.67 × 10 cells per ml. Mice were subcutaneously injected into the shaved flank with 1 × 10 cells. Tumour growth was monitored every 3-5 days using callipers. Two perpendicular diameters of tumour mass were measured and multiplied to calculate the tumour area (mm²). In accordance with IACUC-approved protocol, tumours were not permitted to exceed 20 mm in maximal diameter at any point. In vivo killing assays were performed on mice 6 weeks after the second OVA mRNA-LNP immunization. Splenocytes from naive CD45.1 SJL mice were collected, ACK lysed and prepared as a single-cell suspension. Cells were resuspended in I10F at 2 × 10 cells per ml, and divided into two equal fractions and pulsed with either 1 μg ml SIINFEKL or 1 μg ml irrelevant control peptide for 30 min at 37 °C. Cells were then washed twice with PBS and stained at 5 µM for CTV or at 0.5 µM for CTV for 10 min at 37 °C, and mixed at a ratio of 1:1 immediately before transfer. Statistical analyses were performed using GraphPad Prism software v.10. Centre values represent the mean and the error bars indicate s.d. unless otherwise specified. For groups that are not assumed to have equal variances, Welch's or Brown-Forsythe one-way ANOVA was used. 
OVA-tetramer-specific splenic cells were isolated from WT, Δ32 and Δ1+2+3 mice and washed with 1× PBS containing 0.04% BSA. Before fluorescence-activated cell sorting, cells from each individual mouse were stained with hashtag oligonucleotides (HTOs) to enable multiplexing and improve sample throughput. cDNA was prepared after the GEM generation and barcoding, followed by the GEM-RT reaction and bead clean-up steps. Purified cDNA was amplified for 11-16 cycles before being cleaned-up using SPRIselect beads. The samples were then run on a Bioanalyzer to determine the cDNA concentration. V(D)J target enrichment (TCR) was performed on the full-length cDNA. Gene expression, enriched TCR and feature libraries were prepared as recommended by the 10x Genomics 'Chromium GEM-X Single Cell 5' Reagent Kits User Guide (v3 Chemistry Dual Index) with Feature Barcoding technology for Cell Surface Protein and Immune Receptor Mapping' user guide, with appropriate modifications to the PCR cycles based on the calculated cDNA concentration. For sample preparation on the 10x Genomics platform, the Chromium GEM-X Single Cell 5' Kit v3, 16 rxns (PN-1000699), Chromium GEM-X Single Cell 5' Chip Kit (PN-1000698), Chromium Single Cell Mouse TCR Amplification Kits (PN-1000254), Dual Index Kit TT Set A, 96 rxns (PN-1000215), Chromium GEM-X Single Cell 5' Feature Barcode Kit v3, 16 rxns (PN-1000703) and Dual Index Kit TN Set A, 96 rxns (PN-1000250) were used. The concentration of each library was accurately determined by quantitative PCR using the KAPA library Quantification Kit according to the manufacturer's protocol (KAPA Biosystems/Roche) to produce cluster counts appropriate for the Illumina NovaSeq6000 instrument. Normalized libraries were sequenced on the NovaSeqX plus S4 Flow Cell using the XP workflow and a 151 × 10 × 10 × 151 sequencing recipe according to the manufacturer's protocol. 
A median sequencing depth of 50,000 reads per cell was targeted for each gene expression library and 5,000 reads per cell for each V(D)J and feature library. The reads for each sequencing library were then aligned and quantitated with 10x CellRanger v.9.0.1 against the 10x standard refdata-gex-mm10-2020-A mouse gene reference and refdata-cellranger-vdj-GRCm38-alts-ensembl-7.0.0 VDJ reference according to the manufacturer's protocol. Single-cell gene expression analysis was performed in R (v.4.4.0) using the Seurat package (v.5.3.0). HTO data were first normalized individually for each sample (Supplementary Table 2) and demultiplexed using the HTODemux function; only singlet cells were retained for further analysis. Cells with >5% mitochondrial gene expression were excluded, and only those expressing between 200 and 4,000 genes were retained to remove low-quality cells and potential doublets. After quality control, data from all samples were merged and normalized. The 3,000 most-variable genes were identified, and mitochondrial, ribosomal and TCR genes were excluded from this list to avoid biases associated with highly abundant or cell-type-specific transcripts. The data were then scaled, principal component analysis was performed followed by batch correction and data integration using Harmony. Dimensionality reduction of the integrated matrix was carried out using UMAP based on the first 30 principal components. Phenotypic clusters were identified by constructing a k-nearest neighbours graph and applying the Louvain algorithm with a resolution parameter of 0.4. For TCR repertoire analysis, cell phenotype, sample identity and mouse ID information were extracted from the integrated metadata for each cell. TCR sequences were successfully annotated for 46,051 cells and used for downstream clonotype analyses. Cells sharing identical CDR3αβ amino acid sequences were defined as belonging to the same TCR clone. 
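
The quality-control thresholds and the clonotype definition described above can be illustrated with a minimal sketch. This is not the authors' pipeline (which used Seurat in R); it is a plain-Python illustration in which the per-cell dictionary layout and the field names (`barcode`, `n_genes`, `mito_fraction`, `cdr3a`, `cdr3b`) are hypothetical, and the gene-count bounds are assumed to be inclusive.

```python
from collections import defaultdict

# Thresholds taken from the text; inclusive bounds are an assumption.
MAX_MITO_FRACTION = 0.05      # cells with >5% mitochondrial expression are excluded
MIN_GENES, MAX_GENES = 200, 4000


def passes_qc(cell):
    """Return True if a cell meets the mitochondrial and gene-count criteria."""
    return (
        cell["mito_fraction"] <= MAX_MITO_FRACTION
        and MIN_GENES <= cell["n_genes"] <= MAX_GENES
    )


def group_clonotypes(cells):
    """Group cell barcodes by identical paired CDR3 alpha/beta amino acid sequences."""
    clones = defaultdict(list)
    for cell in cells:
        key = (cell["cdr3a"], cell["cdr3b"])  # hypothetical field names
        clones[key].append(cell["barcode"])
    return dict(clones)


# Toy input with made-up sequences, for illustration only.
cells = [
    {"barcode": "c1", "n_genes": 1500, "mito_fraction": 0.02, "cdr3a": "CAAS", "cdr3b": "CASS"},
    {"barcode": "c2", "n_genes": 2100, "mito_fraction": 0.03, "cdr3a": "CAAS", "cdr3b": "CASS"},
    {"barcode": "c3", "n_genes": 150, "mito_fraction": 0.01, "cdr3a": "CAVR", "cdr3b": "CASR"},
    {"barcode": "c4", "n_genes": 1800, "mito_fraction": 0.09, "cdr3a": "CAVR", "cdr3b": "CASR"},
]
kept = [c for c in cells if passes_qc(c)]
clones = group_clonotypes(kept)
```

In this toy input, `c3` fails the gene-count filter and `c4` fails the mitochondrial filter, so the two remaining cells fall into a single clone because their CDR3α and CDR3β sequences match exactly.
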
Further information on research design is available in the Nature Portfolio Reporting Summary linked to this article.
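
As a worked illustration of the average division number used in the OT-I proliferation assays described in the methods above, ∑(fraction of total OT-I cells in division n × n), the following sketch computes it from per-division cell counts. The function name and the input format (a list of gated cell counts indexed by division number) are assumptions made for this example; in the study the division peaks were fit by FlowJo.

```python
def average_division_number(cells_per_division):
    """Compute sum over n of (fraction of cells in division n) * n.

    cells_per_division[n] is the number of cells gated in division n,
    with index 0 holding the undivided population.
    """
    total = sum(cells_per_division)
    if total == 0:
        raise ValueError("no cells were gated")
    return sum(n * count / total for n, count in enumerate(cells_per_division))


# Hypothetical counts: 50 undivided cells, 25 in division 1, 25 in division 2.
example = average_division_number([50, 25, 25])
```

With these made-up counts the fractions are 0.5, 0.25 and 0.25, so the result is 0 × 0.5 + 1 × 0.25 + 2 × 0.25 = 0.75.
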

Adobe to Integrate New AI Assistant With Anthropic's Claude

Adobe released a new artificial intelligence assistant that will connect to the Claude chatbot and will allow people to complete design projects with simple commands. The software company behind tools like Photoshop and Premiere on Wednesday said its new Firefly AI Assistant will take conversational directions from users and execute multi-step workflows throughout its Creative Cloud apps. Adobe is partnering with Anthropic and other AI companies to allow users to access Firefly and Adobe tools through Claude and other "leading third-party AI models," the company said. Firefly will be personalized to each user and can be used for AI video and image editing, with improvements in sound and color, the company said. "Adobe is leading the shift into a new era of agentic creativity, where you direct how your work takes shape and your perspective, voice and taste become the most powerful creative instruments of all," said David Wadhwani, Adobe's president of creativity and productivity business. Adobe's announcement comes as companies race to deploy more capable AI agents, which are AI models that not only chat with users but also autonomously complete tasks.

GPT-5.4-Cyber: OpenAI Introduces AI Model for Cyber Defense to Counter Anthropic

OpenAI is expanding its cybersecurity offerings with a new, purpose-trained language model. GPT-5.4-Cyber is a variant of GPT-5.4 that has been specifically optimized for defensive security applications and is now available to selected users. The release comes at a time when competitor Anthropic is also making waves with its model Claude Mythos Preview. According to OpenAI, GPT-5.4-Cyber is a version of GPT-5.4 in which restrictions have been deliberately relaxed for legitimate security work. The model is intended to enable security professionals to complete complex tasks more efficiently, without running into refusal limits that make sense for general users but can be an obstacle for professional defenders. One of the key new capabilities is binary code analysis: security professionals can use it to examine compiled software for vulnerabilities, malware potential, and security robustness without needing access to the source code. In addition, the model is designed to support extended workflows for cyber defense, including vulnerability research, security training, and defensive programming. OpenAI relies on a tiered access system within its existing "Trusted Access for Cyber" (TAC) program. Access to GPT-5.4-Cyber is not public, but is restricted to vetted security vendors, organizations, and researchers. OpenAI emphasizes that entry into the program is designed to be straightforward. Due to the elevated potential for misuse, the model is subject to special restrictions. For example, use in environments without data transparency, such as zero-data-retention configurations, is limited. OpenAI justifies this by noting that such usage scenarios lack visibility into the user, environment, and intended purpose. 
OpenAI describes its approach through three guiding principles that form the framework for GPT-5.4-Cyber and future developments. Alongside GPT-5.4-Cyber, OpenAI points to progress with Codex Security, a system for automated vulnerability analysis in codebases. Since its launch as a research preview, Codex Security has, according to the company, contributed to the remediation of more than 3,000 critical and high-severity security vulnerabilities. Through the "Codex for Open Source" program, more than 1,000 open-source projects have also been provided with free security scans. The release of GPT-5.4-Cyber coincides with the announcement of Anthropic's Claude Mythos Preview, a model that, according to the company, is capable of finding and exploiting security vulnerabilities in an almost fully autonomous manner. Anthropic has not released the model publicly due to its risk potential, and instead launched "Project Glasswing," an initiative for selected partners including Amazon Web Services, Apple, Google, Microsoft, and CrowdStrike. With GPT-5.4-Cyber, OpenAI is pursuing a comparable approach with broader reach: rather than a closed circle of partners, the company relies on a scalable access system with identity verification that is intended to eventually encompass thousands of individuals and hundreds of teams. Both companies share the assessment that AI capabilities in the cyber domain are already significant today, and that defenders must be given preferential access before attackers gain the upper hand. OpenAI says it will continue to expand the safety mechanisms for upcoming, even more powerful models. The company considers today's safeguards sufficient for current models, but expects that future generations will require more extensive defensive architectures. GPT-5.4-Cyber is to be understood as a first step in a longer-term program aimed at scaling security capabilities and protective measures in parallel.

Starfighters Space Partners with Blackstar Orbital for Hypersonic Testing Platform as Space Sector Prepares for Historic SpaceX Public Debut

CAPE CANAVERAL, Fla., April 15, 2026 (GLOBE NEWSWIRE) -- As SpaceX prepares for what could become the largest IPO in history with its confidential S-1 filing targeting a $1.75 trillion valuation and June roadshow, space infrastructure companies are positioning for sector-wide growth. Starfighters Space, Inc. (NYSE American: FJET), owner and operator of the world's largest commercial supersonic aircraft fleet, has announced a strategic partnership with Blackstar Orbital to advance flight testing of revolutionary reusable hypersonic space systems. The March 26 Technical Interchange Agreement (TIA), unveiled at the Satellite 2026 conference, establishes a framework for integrating Blackstar's innovative "SpaceDrone" technology with Starfighters' proven F-104 aircraft platform. The collaboration will enable progressive flight testing from supersonic captive carries beginning in Q4 FY26 through high-altitude, supersonic release operations in the Eastern Range off Florida's Atlantic Coast. Blackstar Orbital is pioneering a new class of spacecraft with its lifting-body SpaceDrone design -- reusable, hypersonic satellites that launch as conventional payloads but return to Earth like spaceplanes. This technology addresses growing demand for responsive space operations and rapid mission turnaround capabilities, priorities that have gained urgency amid increasing commercial and national security space requirements. "This partnership highlights the role Starfighters plays in bridging the gap between concept and flight for next-generation aerospace systems," said Tim Franta, CEO of Starfighters Space. "Blackstar is developing a highly differentiated approach to reusable space platforms, and our F-104 fleet provides a proven, high-performance environment to test and validate those systems in real-world conditions." 

SpaceX IPO Transforms Space Investment Thesis

SpaceX's April 1, 2026 confidential IPO filing has fundamentally altered the space investment landscape. With reports of seeking up to $75 billion in capital at valuations approaching $1.75 trillion, the offering would eclipse all previous public debuts. Morgan Stanley, Bank of America, Citigroup, JP Morgan, and Goldman Sachs are leading a 21-bank syndicate for the June 8 roadshow, with public trading anticipated as early as July 2026. The extraordinary valuation reflects Starlink's evolution into a $16 billion annual revenue generator and the strategic integration of xAI's artificial intelligence capabilities through a $250 billion February acquisition. This convergence of space infrastructure, satellite connectivity, and AI positions SpaceX at the center of multiple high-growth technology sectors. For investors seeking diversified exposure to the space economy, publicly traded companies with established operations and expanding capabilities represent compelling alternatives to direct SpaceX investment. The sector includes established aerospace primes, emerging space technology providers, and specialized infrastructure companies like Starfighters that enable critical testing and operational capabilities.

Hypersonic Flight Testing Platform

The Starfighters-Blackstar partnership leverages unique infrastructure at NASA Kennedy Space Center, where Starfighters operates its fleet of modified F-104 supersonic aircraft capable of sustained MACH 2+ operations. Under the TIA, Starfighters has developed a specialized BL75 pylon that serves as the critical structural interface between the F-104 platform and Blackstar's SpaceDrone. The phased testing approach begins with captive carry operations to validate aerodynamic modeling and performance characteristics. 
Successful completion will enable progression to high-speed release testing over designated ocean ranges, with potential expansion to overland operations as system maturity is demonstrated. This methodology provides real-world validation while maintaining safety protocols essential for experimental aerospace operations. "Access to Starfighters' flight test platform allows us to accelerate development of our SpaceDrone and move into flight validation with confidence," said Christopher Jannette, CEO of Blackstar Orbital. "This collaboration is a critical step in demonstrating a new class of reusable, hypersonic satellite systems."

Space Infrastructure Investment Opportunities

The approaching SpaceX IPO has intensified investor focus on space infrastructure companies with proven capabilities and growth prospects. Recent developments across the sector highlight sustained momentum:

AST SpaceMobile (NASDAQ: ASTS) secured a strategic partnership with TELUS on April 2, 2026, for expanding cellular broadband infrastructure in Canada by 2026, driving shares higher. The company reported $70.9 million in 2025 revenue -- its first year as a revenue-generating business -- with 2026 guidance of $150-200 million supported by over $1.2 billion in contracted revenue commitments. With plans to deploy 45-60 satellites by year-end 2026, ASTS is scaling its space-based cellular network that works directly with unmodified smartphones.

GE Aerospace (NYSE: GE) continues to demonstrate strong fundamentals with upcoming Q1 2026 earnings on April 21 expected to build on Q4's solid performance of $1.57 EPS on $11.9 billion revenue. The company benefits from a record $190 billion backlog and recent guidance for low double-digit revenue growth in 2026, supported by strong commercial aviation aftermarket demand and defense contract momentum including a recent $1.4 billion defense award and $1 billion manufacturing investment commitment.

RTX Corporation (NYSE: RTX) reported strong Q4 defense bookings of $10.3 billion, culminating in a record backlog of $268 billion with $107 billion specifically in defense programs. Major recent awards include a $1.7 billion contract for four Patriot air and missile defense systems to Spain and a $1.2 billion Tamir missile production agreement. The company's diversified defense and commercial aerospace portfolio provides stability through Pratt & Whitney engines and Collins Aerospace systems.

TransDigm Group (NYSE: TDG) has demonstrated resilience through recent acquisitions including SEI Industries, Raptor Scientific, and the components business of Communications & Power Industries. Morgan Stanley analysts view the stock's 2026 underperformance as a buying opportunity given the company's attractive valuation, balance sheet strength, and position as the leading commercial airline aftermarket investment with a $1,660 price target representing significant upside from current levels.

Space Sector Growth Catalysts

The space economy is experiencing fundamental transformation driven by converging trends including increased government budgets exceeding $100 billion annually, expanding commercial applications, and emerging technologies like hypersonics and reusable systems. The global space economy is projected to reach $1.8 trillion by 2035, supported by applications ranging from satellite communications to space-based manufacturing. Starfighters' partnership with Blackstar Orbital positions the company at the intersection of these growth trends. As the world's only commercial operator capable of sustained MACH 2+ operations with space launch capability, Starfighters provides critical infrastructure for testing and validating next-generation space technologies that will define the industry's future. The timing of SpaceX's public debut creates a unique inflection point for space sector investment. 
While SpaceX's massive scale and valuation may limit individual investor access, the broader ecosystem offers diversified exposure through companies with established capabilities, strategic partnerships, and expanding market opportunities. Companies that combine operational expertise with innovative partnerships are positioned to benefit from sustained sector growth as space becomes increasingly central to both commercial and national security priorities. This is a digital media distribution. MIQ has been paid by CDMG. MIQ does not own shares of FJET but reserves the right to buy/sell. Distributed by USA News Group on behalf of MIQ. Reviewed/approved by CDMG. Please see https://equity-insider.com/fjet-profile/ for more information about our disclosure. CONTACT: EQUITY INSIDER [email protected] (604) 265-2873

AI armageddon, NATO alliance wobbles and SpaceX going public

Welcome to our press review of events in the United States. Every Wednesday we look at how the Swiss media have reported and reacted to three major stories in the US - in politics, finance and science. The already shaky military alliance between the United States and Europe wobbled even more last week as US President Donald Trump took aim once again at the North Atlantic Treaty Organization (NATO). This has left the Swiss media wondering whether this marriage of military might be headed for a divorce. The Tages-Anzeiger pulled no punches in its assessment of the situation. "Trump is engaging in blackmail," said the newspaper after his stormy meeting with NATO Secretary General Mark Rutte. "Nothing is normal anymore in the NATO-US relationship," added Fredy Gsteiger, diplomatic correspondent at Swiss public broadcaster SRF. "This bodes ill for NATO. Such messages are received with satisfaction in the enemy camp," Gsteiger said, referring to Russia. Trump's latest beef is the reluctance of NATO countries to join hostilities against Iran. The Swiss press views this argument with incredulity. The consensus view is that Iran poses no threat to Europe or any NATO member state. Trump has threatened to withdraw US troops from Europe in the past, without following up on these statements. "But with an erratic president who says one thing today and does the opposite tomorrow, such threats must be taken seriously," said the Tages-Anzeiger. The newspaper is clear that the US has become an unreliable partner in the future defence of Europe. This puts the onus on Europe to beef up its own military credentials. "When a US president like Trump uses the continent's security as leverage, dependence on this partner remains a risk," the Tages-Anzeiger writes. "Therefore, more serious European cooperation, greater military capabilities, more strategic independence, and faster closing of critical gaps are no longer optional, but essential." 
The impending stock market listing of Elon Musk's company SpaceX could inspire a new golden age of firms offering shares to the public, hopes the Neue Zürcher Zeitung (NZZ). Market observers speculate that SpaceX could be valued at up to $1.8 trillion (CHF1.4 trillion) at its initial public offering (IPO), more than double the previous record IPO by Saudi Aramco in 2019. The NZZ bemoans a recent trend of start-ups preferring venture capital funding to expand, only to be taken over by a larger rival. This means that only private equity firms and company founders get to benefit from the profits. Listing on a stock exchange spreads wealth more evenly, the business-friendly newspaper argues. "Small investors would also benefit, as nothing contributes to the democratisation of company ownership and wealth accumulation as much as publicly traded shares," writes the NZZ. But the newspaper also strikes a note of caution. The euphoria surrounding the listing plans of SpaceX, and other companies like OpenAI and Anthropic, also comes with risks. "Some of these lofty dreams could burst. The business models of the two AI specialists, in particular, are still largely untested," the NZZ says. The hype surrounding such huge company listings in the United States could also come back to haunt small investors, it says. The markets are relying on Elon Musk and other company founders playing fair rather than dumping their existing stakes for a quick profit. "Do they [IPOs] offer new, publicly traded shareholders the prospect of capital gains, or do they merely serve as an opportunity for existing owners to cash in on their shares?" asks the NZZ. The Silicon Valley firm Anthropic has created yet another stir with its new model called Mythos that can easily detect weaknesses in IT systems. Anthropic appears alarmed at the power of its creation, holding back release for fear of it falling into the hands of malicious hackers. 
But several Swiss media sense a familiar marketing ruse from the AI industry playbook. Companies first spread alarm at the threat posed by the mysterious new technology and then assure people about their ability to control it for public good. "With these announcements, Anthropic is achieving a double victory: the company is demonstrating the power of its upcoming models while simultaneously projecting an image of a responsible player in artificial intelligence," says Le Temps. The Geneva newspaper points out that Anthropic is planning to raise fresh funds by issuing shares in an anticipated Initial Public Offering (IPO). The company is also embroiled in a row with the White House over allowing the US Department of Defense to use its technology. Mythos could be the key to both raising public awareness about Anthropic and persuading US President Donald Trump to adopt a more positive stance towards the company. The Neue Zürcher Zeitung (NZZ) also asks whether Anthropic is scaremongering about the capabilities of Mythos. It points out that another tech company has already spotted many of the same IT vulnerabilities using older AI models. The NZZ points out that security concerns about outdated IT systems have been sounded for years - way before AI popped up. Perhaps the Mythos scare could finally prompt faster and more efficient action to patch up leaky databases, it says. "IT security professionals could use this opportunity to secure necessary funding. If they succeed, the considerable buzz surrounding Mythos would certainly have a positive effect," the newspaper concludes. The next edition of 'Swiss views of US news' will be published on Wednesday, April 22. See you then!

Novartis CEO joins Anthropic's board of directors

The appointment was made in accordance with Anthropic's specific governance structure, the Long-Term Benefit Trust, as announced by the US company on Tuesday night. This is an independent body overseeing Anthropic's long-term direction, designed to protect the company's mission from short-term commercial pressures. In February, the trust announced the appointment of former Microsoft boss Chris Liddell, who also served under Donald Trump's first presidency as White House deputy chief of staff for policy coordination and director of strategic initiatives. Until now, Narasimhan has not held any other directorship. According to an article in the Wall Street Journal, Narasimhan's appointment to the board of directors is part of Anthropic's potential IPO, which could take place as early as this year, and the planned expansion of its healthcare business. According to the article, Anthropic recently acquired biotech start-up Coefficient Bio for $400 million (CHF312 million). Anthropic has been operating one of its three European offices in Zurich since autumn 2024. It is looking to strengthen its Swiss team and the company is prepared to spend significant sums to do so. According to a job advertisement from the company, the proposed annual salary for an AI specialist is between CHF280,000 and CHF680,000.

Can Anthropic Lead IPO Race Over OpenAI With an $800 Billion Valuation Edge?

Anthropic is moving closer to a possible public listing as private-market valuation talk rises to as much as $800 billion. At the same time, OpenAI remains the other company most closely watched in the same race. Investors are now looking at revenue growth, enterprise demand, IPO readiness, and the cost of running large AI systems. Neither company has confirmed a final IPO date. However, market attention has moved beyond model launches and product demos. The focus is now on which company can show scale, stronger business demand, and a more structured path to the public markets. A listing by either company would mark a major step for the AI sector. For now, Anthropic has drawn extra attention because of its recent valuation jump and its rising revenue. OpenAI, however, remains close in size and still holds a large position in the market.

Remember Sam Altman's AI top ups like electricity bill? Anthropic is testing it in reality

This shows AI tools are now being priced based on actual usage, like a utility. Anthropic has made major changes to how it charges enterprise customers using its Claude AI service. The company is shifting from older fixed price plans to a system where businesses pay a smaller fee for each user seat and then pay separately for expected monthly usage. Earlier discounts for using the app have been removed, which could make some companies spend more overall. However, the basic price per user is now lower than before, so it is easier for small teams to start using it. This change shows that AI companies are moving toward charging based on actual use instead of fixed subscription fees, as more businesses around the world start using AI tools. The company says the new pricing model aims to make access to Claude more flexible for business customers while linking cost more closely to how much the service is used each month. Instead of only paying a fixed monthly amount, firms will now pay a lower fee per user and also plan for expected usage in advance. With this approach the company can match costs more fairly with how much each team uses AI tools for tasks like coding, writing, and customer support. At the same time, the company has removed earlier discounts on its API access, which could make things more expensive for big organisations. Some customers who expected low monthly use now have to agree to higher spending estimates, even if they don't actually use that much. This has made some companies worried that their overall costs might increase, especially when they do not use it consistently. However, the starting price per user has been reduced compared to older plans, which makes it cheaper for small teams and new users to get started. For example, in some plans, the price per user has been cut from $30 to $15. 
The company is also increasing its computing power by working with big chip providers like Amazon and Google to handle the growing demand from business customers. Moreover, recent reports also say the company's revenue has grown strongly because more industries are using AI tools. It also said that rapid usage patterns from a small number of customers can lead to faster consumption of computing resources. This is why it is adjusting pricing rules to balance demand and supply more carefully. In future updates, the company plans to improve efficiency so customers can get more stable performance at a lower cost.

Starfighters Space Partners with Blackstar Orbital for Hypersonic Testing Platform as Space Sector Prepares for Historic SpaceX Public Debut

CAPE CANAVERAL, Fla., April 15, 2026 (GLOBE NEWSWIRE) -- As SpaceX prepares for what could become the largest IPO in history with its confidential S-1 filing targeting a $1.75 trillion valuation and June roadshow, space infrastructure companies are positioning for sector-wide growth. Starfighters Space, Inc. (NYSE American: FJET), owner and operator of the world's largest commercial supersonic aircraft fleet, has announced a strategic partnership with Blackstar Orbital to advance flight testing of revolutionary reusable hypersonic space systems.

Kraken revives IPO plans as Deutsche Börse invests $200 million

Crypto exchange Kraken is moving forward with a U.S. initial public offering after a brief pause, with co-CEO Arjun Sethi confirming the plan at the Semafor World Economy conference in Washington, D.C., according to CNBC. Separately, Deutsche Börse announced it would acquire a 1.5% fully diluted stake in Kraken's parent company, Payward, Inc., through a $200 million secondary transaction involving existing shares. The deal implies a valuation of $13.3 billion for Kraken, according to CoinDesk. The transaction is expected to close in the second quarter, subject to regulatory approval. The implied valuation marks a significant drop from the $20 billion figure attached to Kraken's $800 million fundraising round in November 2025, according to CNBC. Kraken said in November it had confidentially submitted a draft registration statement on Form S-1 with the U.S. Securities and Exchange Commission. At the time, the company said the number of shares and price range had not yet been determined, and that the offering would occur after the SEC completes its review, subject to market and other conditions. According to CNBC, the listing effort stalled after bitcoin slid to roughly 40% beneath the peak it set in October, souring the conditions needed to move forward. The cryptocurrency has rebounded since then, touching $76,000 for the first time since February and posting gains of roughly 9% over the course of April. Deutsche Börse and Kraken had previously announced a strategic partnership in December 2025, aimed at bridging traditional financial markets and the digital asset economy. Deutsche Börse described the arrangement as covering a broad range of functions -- among them trading, custody, settlement, collateral management, and tokenized assets -- designed to connect the two ecosystems for institutional clients. 
According to CoinDesk, the exchange operator had already built out a crypto trading platform aimed at institutional clients by 2024, and separately joined forces with Societe Generale-FORGE to bring euro and dollar stablecoin support into its post-trade infrastructure. Founded in 2011, Kraken offers trading in more than 450 digital assets, U.S. futures, U.S.-listed stocks and ETFs, and fiat currencies, the company said.
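
The implied valuation reported above follows directly from the deal terms: paying $200 million for a 1.5% fully diluted stake prices the whole company at roughly $13.3 billion. The short Python sketch below reproduces that arithmetic and compares it with the $20 billion figure from the November 2025 round. The function names are illustrative only, and the calculation assumes the stake percentage is exact rather than rounded.

```python
def implied_valuation(investment_usd: float, stake_fraction: float) -> float:
    """Company valuation implied by paying investment_usd for stake_fraction of it."""
    if not 0 < stake_fraction <= 1:
        raise ValueError("stake_fraction must be in (0, 1]")
    return investment_usd / stake_fraction


def percent_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100.0


# Figures taken from the article: $200M for a 1.5% fully diluted stake,
# compared with the $20B valuation attached to the November 2025 round.
kraken_valuation = implied_valuation(200e6, 0.015)
drop = percent_change(20e9, kraken_valuation)

print(f"Implied valuation: ${kraken_valuation / 1e9:.1f}B")
print(f"Change vs. $20B round: {drop:.1f}%")
```

On these inputs the implied valuation is about a third below the earlier figure, which matches the article's description of a significant drop.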

Anthropic's secretive Mythos AI can hunt crypto smart contract flaws at machine speed, and billions in DeFi could vanish fast | Market DeFi | CryptoRank.io

- Anthropic's Claude Mythos Preview is a vulnerability‑seeking AI that locates deep smart‑contract, wallet and cross‑chain bridge flaws at machine speed; security firms warn exploits could cause "hundreds of millions to billions" in irreversible DeFi/crypto losses.
- Defensive response: Anthropic launched Project Glasswing with AWS, Google, Microsoft and JPMorgan and committed up to $100M in usage credits; major CEXs (Coinbase, Binance) and DEX teams (Uniswap, with more than $3B TVL) are seeking early access while Fed/Treasury convened emergency talks.
- Market impact: AI dramatically shortens time‑to‑exploit vs. traditional audits, creating systemic crypto security risk that markets may be underpricing today; quantum remains a longer‑term cryptographic threat.

Anthropic's Mythos threat to the crypto industry can trigger hundreds of millions, if not billions, of dollars in sudden, irreversible losses. That is the stark reality facing digital asset markets following Anthropic's quiet unveiling of Claude Mythos Preview, a vulnerability-seeking AI model the San Francisco startup admits is simply too dangerous to release to the public. Deddy David, chief executive of blockchain security firm Cyvers, told CryptoSlate about the catastrophic scale of the problem, noting that the financial exposure of AI-driven exploits in crypto ranges from hundreds of millions to billions of dollars. He said: "If AI can identify vulnerabilities at scale across core internet infrastructure, crypto will be one of the first markets to feel the impact." If those estimates are correct, the scope of potential damage is staggering. Moreover, the scale of this new threat isn't just about bad actors writing slightly better phishing emails or generating malicious code snippets. Instead, it is about an autonomous system capable of finding deep, emergent logic flaws across smart contracts, wallets, and cross-chain bridges before human auditors even know where to look. 
For years, crypto founders and security researchers have obsessed over "Q-Day," the theoretical future date when a quantum computer becomes powerful enough to shatter blockchain cryptography. But the recent launch of Mythos is forcing a pivot. Security experts have noted that the most immediate threat to digital assets is no longer a future attack on cryptography. It is an AI system that can already uncover exploitable flaws in the very software layer the industry depends on. Anthropic's Mythos model fundamentally rewrites the timeline of infrastructure risk. According to the company, the model has already successfully identified vulnerabilities across every major web browser and operating system. In one alarming instance, it unearthed a 27-year-old bug buried in a critical piece of security infrastructure, alongside multiple deep-seated flaws within the Linux kernel. This was also corroborated by the UK government's AI Security Institute (AISI), which noted: "Our evaluation of Mythos Preview shows that it - and potentially future models - could be directed to autonomously compromise small, weakly defended, and vulnerable systems if given network access." The primary danger from these revelations is not simply that artificial intelligence makes cyber risk possible. Hackers have always existed. It is that AI radically compresses the time between bug discovery and exploit development. This means that vulnerability research that historically required months of painstaking human labor can now be executed at machine speed. For the traditional financial system, this represents a severe escalation in the cyber arms race. For the crypto industry, where transactions are instantaneous, irreversible, and governed entirely by autonomous code, it represents an immediate, systemic vulnerability. The architecture of the crypto ecosystem makes it uniquely vulnerable to machine-speed auditing. 
While traditional banks rely on siloed, proprietary networks with centralized fail-safes and circuit breakers, the digital asset sector runs almost entirely on public code. The industry is built on open-source dependencies, browser-based wallets, remote procedure call infrastructure, and smart contracts that are completely transparent to anyone or any AI model wishing to inspect them. This transparency creates a massive, publicly available attack surface. Compounding the risk is a severe structural mismatch between the value secured on-chain and the security budgets of the organizations that maintain it. Lean protocol teams frequently manage aging codebases that hold hundreds of millions of dollars in total value locked. Alex Svanevik, the chief executive of the agentic trading platform Nansen, told CryptoSlate: "Mythos is a different kind of threat: it's already finding vulnerabilities in the infrastructure crypto runs on that humans and every automated tool missed for decades." When AI-accelerated vulnerability discovery meets instant value transfer, the results can be devastating. Thus, the industry can no longer rely on traditional audits or post-incident detection. David explained: "When you combine AI-accelerated vulnerability discovery with instant, irreversible transactions, you dramatically shorten the path from bug to breach to loss. This is not just an increase in attack surface, it's an acceleration of time-to-exploit in a system where seconds matter." So what exactly is an AI model looking for? According to security experts, the most exposed layers are highly complex smart contracts and cross-chain bridges. These protocols are susceptible to emergent vulnerabilities, such as subtle state inconsistencies between upgradeable contracts or edge-case interactions across different modules. These are not simple syntax errors that a standard audit catches. Instead, they are complex interaction paths that large-scale AI simulations can easily surface. 
While artificial intelligence poses an immediate threat to the software layer, quantum computing remains the ultimate, looming threat to the cryptographic foundation of digital assets. Google Research has warned that future quantum computers may be able to break the elliptic-curve cryptography used in crypto systems with fewer resources than previously estimated. A sufficiently powerful cryptanalytically relevant quantum computer (CRQC) could derive private keys from public keys in minutes. With Bitcoin hovering around $70,000, the digital asset ecosystem presents a multi-trillion-dollar bounty. Current estimates suggest that up to 37% of circulating Bitcoin could be vulnerable to such a quantum hijacking before the network confirms the transaction. However, Google's public messaging remains focused on preparation and migration. The tech giant recently announced a 2029 target for a full industry transition to post-quantum cryptography. That contrast highlights the core of the industry's current dilemma. Anthropic's model represents software exploits happening right now. Quantum computing could pose a cryptographic threat later, assuming the industry fails to migrate its security standards in time. Chris Smith, chief executive of the cryptography firm Quantus, emphasized this exact distinction in his statement to CryptoSlate. He noted that while AI models are highly effective at finding and locating software bugs, quantum computing threatens the very foundations of the mathematics on which the crypto industry is built. If the underlying algorithms are broken, even flawless software becomes entirely insecure. Recognizing the sheer immediacy of the AI threat, the defensive race has officially begun. Through a new initiative called Project Glasswing, Anthropic has partnered with major tech firms and financial institutions, including Amazon Web Services, Google, Microsoft, and JPMorgan Chase, to use Mythos Preview to proactively find and fix flaws in critical systems. 
The company is committing up to $100 million in usage credits to help secure infrastructure before malicious actors can develop similar offensive capabilities. The threat has reached the highest levels of government. Last week, Federal Reserve Chairman Jerome Powell and Treasury Secretary Scott Bessent convened a surprise meeting with major US bank chief executives to discuss the specific systemic risks posed by models like Mythos. Meanwhile, the crypto industry is scrambling to join this defensive perimeter. Major exchanges, including Coinbase and Binance, are reportedly in close communication with Anthropic to secure early access to the Mythos model. Decentralized platforms are also echoing the urgency, with Uniswap founder Hayden Adams publicly requesting access to test the model against the platform. Uniswap is the largest decentralized exchange protocol, with more than $3 billion in assets locked. Nansen's Svanevik argues that the crypto industry could utilize the tools in ways that would make it "the best security auditing tool ever built." According to him: "Smart contracts have historically been audited by humans -- slow, expensive, incomplete. An AI that can find a 27-year-old bug in OpenBSD can also find the reentrancy vulnerability that hasn't been caught yet in a major DeFi protocol. The question is whether defenders get access before attackers do -- and whether the crypto industry moves fast enough to use it proactively rather than reactively." Simultaneously, OpenAI has expanded access to a more cyber-permissive model, GPT-5.4-Cyber, through its Trusted Access for Cyber program, allowing vetted security vendors to stress-test their own systems. Despite the severe implications of machine-speed vulnerability discovery, crypto markets have shown remarkably little reaction to the advent of frontier cyber-offensive AI. Financial markets have spent years developing a vocabulary for quantum risk. 
Investors broadly understand that a quantum computer could break current encryption standards and the catastrophic impact that would have on digital ownership. However, the market appears far less prepared to price a systemic threat that operates not through a dramatic break in mathematics, but through quiet audit failures, compromised wallet dependencies, and complex exploit chains. As artificial intelligence fundamentally reshapes the speed and scale of cyber warfare, the digital asset market may significantly underestimate the fragility of the very infrastructure on which it is built.

Kraken Targets Q3 IPO After Confidential Filing and Fresh $13.3B Valuation - Crypto Economy

Deutsche Börse's $200 million investment and Kraken's new Fedwire access add traditional-market backing and stronger payment infrastructure to its public-market push. Kraken is aiming for a Wall Street debut in the third quarter after confidentially filing for an IPO late last year, a step that pushes one of crypto's biggest exchanges closer to the public markets. The momentum behind the filing is not just about timing, but about how Kraken is repositioning itself as a broader financial platform. In April, a transaction involving existing shares implied a valuation of about $13.3 billion, below the company's late-2025 peak of $20 billion but still large enough to keep it among the sector's heavyweight names today. The exchange's pitch is increasingly tied to access. At the Semafor World Economy event in Washington, co-CEO Arjun Sethi said Kraken wants retail users to have access to the same types of trading tools that major institutional firms already use. That ambition gives the IPO story a clearer commercial logic: Kraken is trying to turn institutional-grade market access into a mainstream product. Sethi framed that mission around helping users do more with their own capital, signaling that the company's product strategy is clearly expanding beyond basic crypto trading services for everyday investors now. Kraken's capital story also picked up a notable endorsement from traditional market infrastructure. Deutsche Börse agreed to invest $200 million in Payward Inc., Kraken's parent company, by purchasing existing shares for a 1.5% fully diluted stake, with the deal expected to close in the second quarter pending regulatory approval. That investment matters because it links Kraken's IPO track with growing support from established financial operators, not just crypto-native backers. The transaction followed a partnership announced in December and added fresh weight to the exchange's effort to enter public markets this year. 
Another piece of the picture arrived in March, when Kraken received a limited purpose account from the Federal Reserve Bank of Kansas City. The approval made it the first digital asset bank with direct access to the US central bank's payment infrastructure and allows settlement on Fedwire without an intermediary bank. That operational shift strengthens Kraken's case by showing it can build closer to core financial rails. Kraken Financial plans to roll out the access in phases, beginning with client activity under its Wyoming SPDI structure.

Starfighters Space Partners with Blackstar Orbital for Hypersonic Testing Platform as Space Sector Prepares for Historic SpaceX Public Debut

CAPE CANAVERAL, Fla., April 15, 2026 (GLOBE NEWSWIRE) -- As SpaceX prepares for what could become the largest IPO in history with its confidential S-1 filing targeting a $1.75 trillion valuation and June roadshow, space infrastructure companies are positioning for sector-wide growth. Starfighters Space, Inc. (NYSE American: FJET), owner and operator of the world's largest commercial supersonic aircraft fleet, has announced a strategic partnership with Blackstar Orbital to advance flight testing of revolutionary reusable hypersonic space systems. The March 26 Technical Interchange Agreement (TIA), unveiled at the Satellite 2026 conference, establishes a framework for integrating Blackstar's innovative "SpaceDrone" technology with Starfighters' proven F-104 aircraft platform. The collaboration will enable progressive flight testing from supersonic captive carries beginning in Q4 FY26 through high-altitude, supersonic release operations in the Eastern Range off Florida's Atlantic Coast. Blackstar Orbital is pioneering a new class of spacecraft with its lifting-body SpaceDrone design -- reusable, hypersonic satellites that launch as conventional payloads but return to Earth like spaceplanes. This technology addresses growing demand for responsive space operations and rapid mission turnaround capabilities, priorities that have gained urgency amid increasing commercial and national security space requirements. "This partnership highlights the role Starfighters plays in bridging the gap between concept and flight for next-generation aerospace systems," said Tim Franta, CEO of Starfighters Space. 
"Blackstar is developing a highly differentiated approach to reusable space platforms, and our F-104 fleet provides a proven, high-performance environment to test and validate those systems in real-world conditions." SpaceX IPO Transforms Space Investment Thesis SpaceX's April 1, 2026 confidential IPO filing has fundamentally altered the space investment landscape. With reports of seeking up to $75 billion in capital at valuations approaching $1.75 trillion, the offering would eclipse all previous public debuts. Morgan Stanley, Bank of America, Citigroup, JP Morgan, and Goldman Sachs are leading a 21-bank syndicate for the June 8 roadshow, with public trading anticipated as early as July 2026. The extraordinary valuation reflects Starlink's evolution into a $16 billion annual revenue generator and the strategic integration of xAI's artificial intelligence capabilities through a $250 billion February acquisition. This convergence of space infrastructure, satellite connectivity, and AI positions SpaceX at the center of multiple high-growth technology sectors. For investors seeking diversified exposure to the space economy, publicly traded companies with established operations and expanding capabilities represent compelling alternatives to direct SpaceX investment. The sector includes established aerospace primes, emerging space technology providers, and specialized infrastructure companies like Starfighters that enable critical testing and operational capabilities. Hypersonic Flight Testing Platform The Starfighters-Blackstar partnership leverages unique infrastructure at NASA Kennedy Space Center, where Starfighters operates its fleet of modified F-104 supersonic aircraft capable of sustained MACH 2+ operations. Under the TIA, Starfighters has developed a specialized BL75 pylon that serves as the critical structural interface between the F-104 platform and Blackstar's SpaceDrone. 
The phased testing approach begins with captive carry operations to validate aerodynamic modeling and performance characteristics. Successful completion will enable progression to high-speed release testing over designated ocean ranges, with potential expansion to overland operations as system maturity is demonstrated. This methodology provides real-world validation while maintaining safety protocols essential for experimental aerospace operations.

"Access to Starfighters' flight test platform allows us to accelerate development of our SpaceDrone and move into flight validation with confidence," said Christopher Jannette, CEO of Blackstar Orbital. "This collaboration is a critical step in demonstrating a new class of reusable, hypersonic satellite systems."

Space Infrastructure Investment Opportunities

The approaching SpaceX IPO has intensified investor focus on space infrastructure companies with proven capabilities and growth prospects. Recent developments across the sector highlight sustained momentum:

AST SpaceMobile (NASDAQ: ASTS) secured a strategic partnership with TELUS on April 2, 2026, for expanding cellular broadband infrastructure in Canada by 2026, driving shares higher. The company reported $70.9 million in 2025 revenue -- its first year as a revenue-generating business -- with 2026 guidance of $150-200 million supported by over $1.2 billion in contracted revenue commitments. With plans to deploy 45-60 satellites by year-end 2026, ASTS is scaling its space-based cellular network that works directly with unmodified smartphones.

GE Aerospace (NYSE: GE) continues to demonstrate strong fundamentals with upcoming Q1 2026 earnings on April 21 expected to build on Q4's solid performance of $1.57 EPS on $11.9 billion revenue.
The company benefits from a record $190 billion backlog and recent guidance for low double-digit revenue growth in 2026, supported by strong commercial aviation aftermarket demand and defense contract momentum including a recent $1.4 billion defense award and $1 billion manufacturing investment commitment.

RTX Corporation (NYSE: RTX) reported strong Q4 defense bookings of $10.3 billion, culminating in a record backlog of $268 billion with $107 billion specifically in defense programs. Major recent awards include a $1.7 billion contract for four Patriot air and missile defense systems to Spain and a $1.2 billion Tamir missile production agreement. The company's diversified defense and commercial aerospace portfolio provides stability through Pratt & Whitney engines and Collins Aerospace systems.

TransDigm Group (NYSE: TDG) has demonstrated resilience through recent acquisitions including SEI Industries, Raptor Scientific, and the components business of Communications & Power Industries. Morgan Stanley analysts view the stock's 2026 underperformance as a buying opportunity given the company's attractive valuation, balance sheet strength, and position as the leading commercial airline aftermarket investment with a $1,660 price target representing significant upside from current levels.

Space Sector Growth Catalysts

The space economy is experiencing fundamental transformation driven by converging trends including increased government budgets exceeding $100 billion annually, expanding commercial applications, and emerging technologies like hypersonics and reusable systems. The global space economy is projected to reach $1.8 trillion by 2035, supported by applications ranging from satellite communications to space-based manufacturing. Starfighters' partnership with Blackstar Orbital positions the company at the intersection of these growth trends.
As the world's only commercial operator capable of sustained MACH 2+ operations with space launch capability, Starfighters provides critical infrastructure for testing and validating next-generation space technologies that will define the industry's future.

The timing of SpaceX's public debut creates a unique inflection point for space sector investment. While SpaceX's massive scale and valuation may limit individual investor access, the broader ecosystem offers diversified exposure through companies with established capabilities, strategic partnerships, and expanding market opportunities. Companies that combine operational expertise with innovative partnerships are positioned to benefit from sustained sector growth as space becomes increasingly central to both commercial and national security priorities.

The Map is Not the Territory: The Impact of Anthropic Mythos on Data Security

Claude Mythos is a meaningful moment. But the real danger isn't the explosion of CVEs. It's what attackers find when they exploit them.

I've been watching the security industry react to Anthropic's Project Glasswing announcement, and what I'm seeing falls into two camps. One says the sky is falling: AI can now autonomously find and exploit vulnerabilities, and defenders can't keep up. The other says to calm down because context still favors the defender, and the threat is overblown. The conversation will continue with OpenAI's latest model release. Both camps are arguing about the door. Let's talk about what's behind it.

Anthropic has built a model that can autonomously discover zero-day vulnerabilities in major operating systems and browsers, vulnerabilities that survived decades of human review and millions of automated tests. That's a real capability jump, and it's only a matter of time before other models can do the same.

Critics are right that AI attackers start context-poor. They're probing from the outside. They don't know your architecture. They can't read your data or your proprietary source code. But attackers don't stay context-poor. The switch from "outside the perimeter" to full situational awareness can flip in an instant.

The security industry's response to Glasswing has focused on CVEs. Patch faster. Reduce attack surface. Build AI into your AppSec program. This is solid advice. What's missing is what happens after a vulnerability is exploited. When a Mythos-class model finds a zero-day in the Linux kernel and chains it to privilege escalation, the exploit isn't the target; it's the foothold. The blast radius -- what data an attacker can access, exfiltrate, or poison from that position -- is what determines the damage. The average attacker already dwells inside an environment for weeks before detection, and most identities are overprivileged, with access to far more data than they need.
When AI compresses the time from exploit to breach from days to hours, both of those problems become critical. You can't patch your way out of them.

There are two ways to make a breach survivable. One is to prevent attackers from getting in -- the door lock. The other is to make sure that getting in doesn't mean getting everything. In an AI-accelerated threat environment, the second capability isn't optional. It's the one that determines whether a breach becomes a headline.

Here's what we've learned from building Varonis: the fundamentals of data security don't change when the threat landscape shifts. What changes is the cost of getting them wrong. Data oversharing has always been dangerous. Excessive permissions have always expanded the blast radius. Unmonitored access has always been how attackers move laterally undetected. AI doesn't invent these problems -- it removes the friction that used to slow attackers down while exploiting them.

Today, Mythos focuses on identifying vulnerabilities in code. But the same pattern-recognition capability applied to identity graphs, permission models, and sensitive data classifications will eventually surface the toxic combinations that turn a minor foothold into a catastrophic breach. The organizations that haven't addressed their data exposure won't need an attacker to find it for them; the model will do it faster than any human red team ever could.

This is why we've invested so heavily in AI security. Unless you're starving AI of the data it needs to be useful, the non-deterministic systems inside your organization are creating new attack paths to data you may not even know exists. Every AI agent you deploy has permissions. Every model you connect to training data or a RAG pipeline has a blast radius.

First, know what data is exposed. In most organizations, this number is shocking. Sensitive data accessible to everyone in the company. Cloud storage with no expiration on access grants.
AI service accounts with admin rights to production databases. Map it now, before an attacker does it for you.

Second, reduce the blast radius before the breach, not after. If an attacker authenticated as a random employee, what could they reach? The gap between what they could reach and what that employee actually needs is your risk. Continuous least-privilege enforcement is the holy grail.

Third, instrument for speed. As AI compresses the time from foothold to exfiltration, your detection must compress too. That means behavioral baselines, anomaly detection, and automated response operating at AI speed.

Code is where the Glasswing story begins. Data is where the story ends. And your ending is determined long before the CVE is published -- by the decisions you make today about access, exposure, and visibility.

One thing the Glasswing conversation hasn't surfaced enough: the AI systems inside your organization are themselves a new attack surface that Mythos-class models will learn to exploit. Your agents are making decisions about data access. Your RAG pipelines are retrieving documents. Your coding assistants are reading source code. Each one has a permission model designed for speed, not security. Prompt injection, data exfiltration through model outputs, and agent impersonation aren't theoretical. They're the frontier a Mythos-class attacker will probe once the infrastructure vulnerabilities are patched.

The AI attack surface isn't just about software vulnerabilities. It's the data those systems can reach, and the paths an attacker can walk through them. That's the map. Make sure you've seen it before they have. The door is harder to defend. Make sure you know what's behind it.
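
To make the "what could they reach?" question concrete, here is a minimal sketch of a blast-radius check. It is an illustration only, not how Varonis or any product computes exposure. It assumes permissions can be modeled as two plain dictionaries, one mapping principals to the groups they belong to and one mapping principals to the resources they are granted. The function name `blast_radius`, the identities, and the resource names are all hypothetical.

```python
from collections import deque


def blast_radius(identity, member_of, grants):
    """Return every resource reachable from `identity`.

    member_of: dict of principal -> groups it belongs to (groups may nest).
    grants:    dict of principal -> resources granted directly to it.
    Both structures are assumptions made for this sketch.
    """
    seen = {identity}
    queue = deque([identity])
    reachable = set()
    while queue:
        principal = queue.popleft()
        # Collect resources granted directly to this user or group.
        reachable.update(grants.get(principal, ()))
        # Follow group memberships, skipping groups already visited.
        for group in member_of.get(principal, ()):
            if group not in seen:
                seen.add(group)
                queue.append(group)
    return reachable


# Hypothetical environment: one employee and one AI service account.
member_of = {
    "alice": ["engineering"],
    "engineering": ["all-staff"],
    "svc-ai-agent": ["db-admins"],
}
grants = {
    "alice": ["alice-home"],
    "engineering": ["source-repo"],
    "all-staff": ["hr-share/salaries.xlsx", "wiki"],
    "db-admins": ["prod-db"],
}
sensitive = {"hr-share/salaries.xlsx", "prod-db"}

alice_reach = blast_radius("alice", member_of, grants)
alice_exposed = alice_reach & sensitive
agent_exposed = blast_radius("svc-ai-agent", member_of, grants) & sensitive
```

In this toy data, `alice_exposed` contains the salary spreadsheet because it is shared with everyone through nested group membership, and `agent_exposed` contains the production database. Running the same traversal for every identity, human or AI, is one way to approximate the map described above before an attacker builds it.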

Gemini vs. Perplexity: Which AI Nailed My Prompts Best? (2026)