News & Updates

The latest news and updates from companies in the WLTH portfolio.

The GOP Needs Discipline, Gets Trump's Chaos Instead

In the game plan most Republicans envisioned for 2026, message discipline and coordinated action were very important. The party, with strong agreement from its president, was determined to pull a big upset in November by hanging on to control of both congressional chambers, which would be a significant historical anomaly. And by the end of 2025, it was abundantly clear that the keys to the kingdom in the midterms would be the success or failure of GOP efforts to keep the voters who went with them in 2024 out of frustration over the cost of living during the Biden administration. Without much question, the one major "affordability" accomplishment that Trump and his congressional allies could boast of was the middle-class tax cuts nestled between the huge goodies for the wealthy and the low-income benefit cuts of the One Big Beautiful Bill Act of 2025. So it's alarming for Republicans that they are talking about so many other things right now, as Politico Playbook notes: President Donald Trump and Republicans had this week highlighted as a crucial moment for the midterms campaign. April 15 should have marked the perfect opportunity for Trump and the GOP to highlight the (largely very popular) tax cuts delivered via the One Big Beautiful Bill last year. Hill Republicans have a big event planned Wednesday, before Trump hits the campaign trail in Nevada and Arizona. What could go wrong? Quite a lot, actually: Trump's "no tax on tips" PR stunt with a DoorDash employee yesterday was, of course, completely overshadowed by his jaw-dropping attack on the Pope and the furor over his AI-Jesus meme. And in truth it's going to be hard for Republicans to land any cost-of-living messaging right now, given the economic storm clouds caused by the president's war on Iran. That take actually underestimates the number of distractions from "affordability" that the president and his party have created for themselves. 
Front and center, of course, is a war of choice with no clear objectives and no end in sight that is enormously exacerbating economic fears. Meanwhile, much of the MAGA movement remains maniacally focused on ensuring that Trump's mass-deportation program -- never that popular to begin with, and increasingly unpopular this year -- continues at the highest possible velocity and the lowest feasible restraints on the cruelty and violence deemed a useful deterrent to illegal immigrants. Indeed, House and Senate Republicans are currently stuck in an interminable debate over how, exactly, to ensure ICE and the Border Patrol are stuffed with new no-strings funding well into the future, a battle that is blocking more politically useful activity. We'll likely find out this week or next whether the GOP can get itself out of this self-destructive rut. With Trump's backing, Senate Republicans are planning a second budget-reconciliation measure that can pass without Democratic support and is not vulnerable to a filibuster. But unlike the Big Beautiful Bill Act, this one won't be big at all, as Politico explains: Senate Majority Leader John Thune said Monday he would pursue an "anorexic" bill narrowly focused on Immigration and Customs Enforcement and Border Patrol. Republicans hope that will allow them to skip months of agonizing infighting -- as they endured before enacting last year's tax-cuts-focused megabill. ... Still, some agony looms. Sen. Rick Scott (R-Fla.) insisted Monday on spending cuts to offset the new enforcement funding. Sen. Tommy Tuberville (R-Ala.) said he wants to include money for the military and other GOP priorities. Sen. John Kennedy (R-La.) argued parts of a hot-button GOP elections bill should be in the mix. And across the Capitol, the House's right flank insisted Republicans fund all of DHS through the party-line process -- not just ICE and Border Patrol. 
If Thune gives in to right-wing demands to grow the Beautiful Bill into controversial areas like Iran War funding, election ID laws, or safety-net cuts advertised as "anti-fraud" measures, there will be an unstoppable tide of add-ons that will slow the process down immensely and risk failure of the entire enterprise. In particular, a "big" bill will require tons of offsets that will upset swing voters and risk the careers of Republicans in marginal states and districts. House Speaker Mike Johnson faces the same pressures multiplied by the leverage the zealots of the House Freedom Caucus have over him thanks to his narrow (currently four seats but soon to be reduced to three seats when a replacement for New Jersey governor Mikie Sherrill is elected later this week) margin of control. The HFC types have been agitating for a long time to make any budget-reconciliation bill large and crazy. But they are also determined to perpetuate the DHS shutdown until all their demands on immigration-enforcement dollars are met, essentially squeezing everything they can get from the puny little hostage represented by non-immigration DHS programs like TSA, FEMA, and the Coast Guard. Once Thune has his ducks in a row on an "anorexic" reconciliation bill, he'll try to get Johnson to agree that Republicans have made enough progress on engorging ICE and CBP with money until the end of time that the House can release the DHS hostage and end the longest partial government shutdown ever. Things could go disastrously wrong at every step of this process, which leads back to the crucial role Donald Trump must play to get his party on course for something less than a disastrous midterm election. If MAGA chest-thumpers in either congressional chamber can thwart Thune's Trump-endorsed effort to end the DHS brouhaha and return the congressional agenda to more fruitful endeavors, the rest of the year could become a legislative wasteland. Trump could end this rebellion instantly, of course. 
Whether he has the focus to do so is another question. He has already made a hash of the GOP's 2026 plans over and over again with his terribly timed and totally unnecessary war, with his inability to stage a tactical retreat on mass deportation, and with his constant veers into counterproductive actions. Insulting the pope, attacking U.S. allies, and lashing out at anyone in his party who dares question his election denialism and imperial claims of executive power won't help Republicans in November and could make Trump a true lame duck for the last two years of his presidency. But it's unclear if he knows or cares how badly his own cause needs his self-discipline.

Why Workforce Agility Is Becoming Critical in the Future of Work

The nature of work is undergoing a fundamental transformation. Driven by technological innovation, changing business models, and evolving employee expectations, organisations are being forced to rethink how they structure and manage their workforce. In this context, workforce agility is emerging as a critical capability for long-term success. Workforce agility refers to the ability of organisations to adapt quickly to changing conditions by leveraging flexible talent strategies, continuously developing employee skills, and embracing new ways of working. It is no longer sufficient to rely on static workforce models -- businesses must be able to respond dynamically to new challenges and opportunities. One of the most visible drivers of workforce agility is the rise of remote and hybrid work models. Advances in digital technology have made it possible for employees to work from virtually anywhere, enabling organisations to access a broader and more diverse talent pool. According to the International Labour Organization, remote and flexible work arrangements have become a lasting feature of the global labour market, significantly reshaping how organisations operate (source: https://www.ilo.org/global/topics/future-of-work/lang--en/index.htm). This transition has significant implications for productivity, collaboration, and organisational culture. While remote work offers greater flexibility, it also requires new approaches to leadership and communication. Organisations must invest in digital tools and processes to ensure that teams remain connected and productive. Another key aspect of workforce agility is skills development. 
As technology evolves, the demand for new skills is increasing rapidly. Employees must continuously update their capabilities to remain relevant in a changing job market. The importance of continuous learning is emphasised by the OECD, which highlights adaptability and lifelong learning as essential components of workforce resilience (source: https://www.oecd.org/employment/future-of-work/). The rise of the gig economy is also contributing to workforce agility. Businesses are increasingly relying on freelance and contract workers to meet specific needs, allowing them to scale operations quickly and efficiently. This flexible approach enables organisations to respond to fluctuations in demand without committing to long-term employment structures. Technology plays a central role in enabling workforce agility. Digital collaboration tools, cloud platforms, and workforce analytics systems allow organisations to manage distributed teams and optimise performance. These technologies provide real-time insights into workforce dynamics, enabling more informed decision-making. However, workforce agility also presents challenges. Managing a distributed workforce requires strong leadership, effective communication, and a cohesive organisational culture. Ensuring employee engagement and maintaining productivity can be difficult when teams are spread across different locations. There are also considerations related to employee well-being. Flexible work arrangements can blur the boundaries between work and personal life, leading to potential burnout. Organisations must implement policies that support work-life balance and employee well-being. According to the World Economic Forum's Future of Jobs Report, organisations that prioritise adaptability, reskilling, and workforce flexibility will be better positioned to navigate the evolving labour market (source: https://www.weforum.org/publications/the-future-of-jobs-report-2023/). 
Looking ahead, workforce agility will become increasingly important as the pace of change accelerates. Organisations that fail to adapt risk falling behind, while those that embrace flexibility and innovation will gain a competitive advantage. In conclusion, workforce agility is no longer optional -- it is a necessity. By embracing flexible work models, investing in skills development, and leveraging technology, organisations can build resilient and adaptable workforces that are prepared for the future.

OpenAI and Anthropic expand into cybersecurity, positioning AI as both defense and potential threat

Anthropic and OpenAI expanded into cybersecurity in early April 2026 with new AI models designed to identify and defend against software vulnerabilities, company officials said. Anthropic launched Project Glasswing to provide restricted access to its Claude Mythos model for vetted partners, while OpenAI initiated a Trusted Access pilot for its GPT-5.3-Codex, aiming to strengthen defenses before potential misuse. Anthropic's Claude Mythos model demonstrated early success in identifying high-severity zero-day vulnerabilities across major operating systems and web browsers, according to company officials. The model outperformed its predecessor, Opus 4.6, on coding, knowledge, and terminal benchmarks, enabling automated zero-day discovery and chainable exploits, records show. Anthropic restricts access to Mythos Preview to a carefully selected group of technology and cybersecurity firms due to its advanced hacking capabilities, company representatives said. Anthropic launched Project Glasswing to provide exclusive, controlled access to Claude Mythos for a vetted circle of partners, including Google, Microsoft, Amazon Web Services, Cisco, and JPMorgan Chase, officials announced. The initiative includes $100 million in usage credits aimed at securing critical open-source and enterprise software before the model's capabilities can be exploited by threat actors, according to Anthropic statements. Project Glasswing was created following red-team cybersecurity reviews that identified potential misuse risks, prompting a cautious release strategy. OpenAI initiated a Trusted Access pilot program in February 2026, granting controlled access to its GPT-5.3-Codex model, which it designated as "High Capability" in cybersecurity applications, company sources confirmed. The program expanded in early April 2026, providing $10 million in API credits to security researchers and organizations engaged in vulnerability discovery and patching efforts. 
OpenAI officials said the program's goal is to accelerate defensive measures worldwide by automating the identification and remediation of software vulnerabilities. The U.S. Department of Defense severed ties with Anthropic in March 2026, labeling the company a "supply-chain risk to national security," according to Defense Secretary Pete Hegseth. The designation prohibits contractors, suppliers, and partners from engaging with Anthropic technology, a rare and significant escalation against a U.S.-based company, Pentagon officials confirmed. The dispute centers on Anthropic's refusal to grant the Pentagon unrestricted access to its AI systems, particularly concerning mass domestic surveillance and fully autonomous weapons, sources familiar with the matter said. OpenAI secured a Pentagon contract on February 27, 2026, agreeing to an "all lawful purposes" framework that includes architectural controls such as cloud-only deployment and a proprietary safety stack, company and government records indicate. The contract embeds cleared engineers within OpenAI to ensure that the Pentagon cannot override safety measures, according to OpenAI CEO Sam Altman. Altman stated that the deal incorporates provisions Anthropic opposed, which sparked public backlash including a 295% increase in ChatGPT app uninstalls, market data shows. Anthropic's Threat Intelligence team utilized Claude extensively during the first publicly reported AI-orchestrated cyber espionage investigation, officials said. The investigation revealed AI agents performing tasks traditionally done by hacker teams, including system analysis, exploit code production, and scanning of stolen data. Anthropic developed expanded detection capabilities and classifiers to flag malicious activity in large-scale distributed attacks, with plans to release regular public reports to bolster industry and government cyber defenses, company sources added. 
Artificial intelligence has lowered barriers for sophisticated cyberattacks, enabling less-resourced groups to conduct large-scale operations, according to Anthropic cybersecurity researchers. Large language models accelerate attacker activities such as reconnaissance and hypothesis generation at speeds unattainable by humans, operating at multiple operations per second, experts said. Industry leaders, including Anthropic and OpenAI, limit model releases through controlled access due to concerns about autonomy and hacking potential disrupting critical infrastructure, company statements confirm. Capabilities such as code enumeration and identification of weaknesses in outdated software are inherent to advanced AI models, according to Rob T. Lee, AI officer at the Security Institute. Developers face challenges balancing innovation with risk mitigation, leading to ongoing debates over responsible disclosure, governance, and coordination for defensive applications, industry analysts said. The cybersecurity community continues to evaluate frameworks that allow for beneficial uses of AI while minimizing threats. Nearly 40 employees from Google and OpenAI, including Google's chief scientist, submitted a brief highlighting the risks of AI in surveillance and lethal autonomous weapons, sources revealed. The brief underscores concerns shared by both companies despite their divergent government relationships, reflecting broader industry apprehension about AI's dual-use nature. The document was part of ongoing discussions about ethical AI deployment and national security implications. Anthropic's restricted release of Claude Mythos and OpenAI's Trusted Access pilot represent pioneering efforts to harness AI's cybersecurity potential while mitigating risks, according to company officials. These programs aim to harden baseline safeguards in critical software ecosystems before adversaries can exploit AI capabilities. 
Both companies emphasize collaboration with vetted partners and government agencies to advance defensive measures responsibly, sources said. Anthropic contests the Pentagon's supply-chain risk designation as "unlawful and politically motivated" and plans to challenge it in court, company representatives stated. The dispute highlights the complex intersection of AI innovation, national security, and regulatory oversight. Meanwhile, OpenAI's engagement with the Pentagon under strict safety protocols reflects a contrasting approach to government collaboration, company and defense officials noted. Anthropic advises security operations centers to employ AI for automation in threat detection, vulnerability assessments, and incident response, citing enhanced efficiency and accuracy, company cybersecurity experts said. The company shares case studies and intelligence publicly to strengthen collective cyber defenses across industry and government sectors. These efforts contribute to evolving best practices in AI-assisted cybersecurity. The rapid advancement of AI in cybersecurity prompts ongoing industry debate about framing risks, responsible disclosure, governance structures, and coordination mechanisms, according to cybersecurity analysts. Developers and policymakers continue to explore frameworks that enable defensive innovation while preventing offensive misuse. The evolution of AI models like Claude Mythos and GPT-5.3-Codex will likely influence future standards and regulatory approaches.

Perplexity Says Web Search Is Primitive, AI Shift Obvious - Alphabet (NASDAQ:GOOG)

EXCLUSIVE: Perplexity Calls Web Search 'Primitive' -- And Says AI Disruption Was 'Obvious'

The next battle in search may not be about ads or user behavior -- it may be about rewriting the technology itself. That's the view from Perplexity AI, which is taking direct aim at how search has worked for decades. "Search, as most people know it, is a primitive technology that didn't experience any real innovation for 24 years," said Jesse Dwyer, Chief Communications Officer at Perplexity, in an exclusive email response to Benzinga. And when AI-native tools emerged, he added, "the disruption was obvious."

Not More Searches -- Better Ones

While much of the industry debate has centered on how users might change their behavior -- fewer searches, higher intent -- Perplexity sees that as secondary. "The most important developments in search... are not in user behavior or monetization, but the technology itself," Dwyer said. That shift starts with how information is retrieved. Perplexity frames the web as a massive hard drive -- one where the "write" function has long been solved, but the "read" function has lagged. AI, it argues, finally changes that. "The 'read' function for the world's biggest hard drive is now possible."

A Different Kind Of Computer

The implications go beyond search. According to Perplexity, AI doesn't just improve queries -- it reshapes computing itself. Traditional systems take instructions; AI systems take objectives. And instead of returning links, they aim to deliver answers. That reframes what users expect. "As the computer is now evolving, what users ask and do with it also evolves," Dwyer said.

Ads, Monetization And The Real Target User

The focus isn't on maximizing queries -- it's on serving a specific kind of user. Perplexity calls them the "curious" -- people whose decisions can be "GDP-altering or history-making," and who demand highly accurate AI. "It seems reasonable to assume we should have no problem making money with those people as our most passionate users," Dwyer said.

Beyond 'Google Killer'

Early on, Perplexity was labeled a "Google killer." The company is now distancing itself from that framing. "Perplexity is interested in neither Google nor killing." Instead, it points to what it sees as its real edge: accurate AI and "massively multi-model orchestration" -- capabilities it believes go beyond simply being AI-native. The bigger claim is harder to ignore. If search has indeed been "primitive," then the shift underway isn't incremental. It's foundational.

Note: Benzinga and Perplexity have an ongoing commercial relationship.

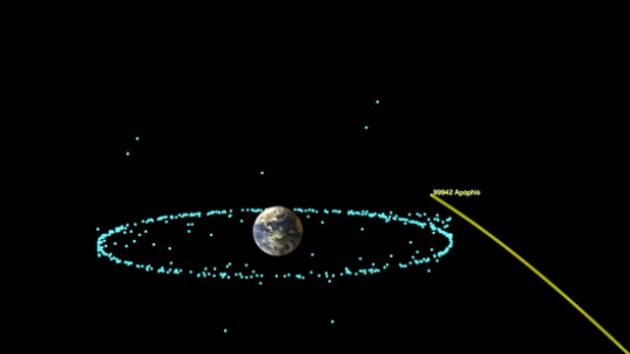

'God of chaos' asteroid to pass close to Earth in 2029

(NEW YORK) -- An asteroid will soon be visible to the naked eye in a rare celestial event, according to astronomers. Asteroid 99942 Apophis - named after the Egyptian deity of chaos, darkness and fire - is expected to safely pass close to Earth on April 13, 2029, according to NASA. The asteroid will pass within roughly 20,000 miles of Earth - nearly 12 times closer than the moon's average distance from Earth, and closer than many satellites in geosynchronous orbit - making it one of the closest approaches ever recorded for an object of its size and a "very rare event," according to NASA. The approach will be visible to observers on the ground in the Eastern Hemisphere, weather permitting, according to NASA. It will be close enough that sky-watchers won't need a telescope or binoculars to see it, astronomers say. When Apophis was first discovered in 2004, it was labeled a potentially hazardous asteroid because of the possibility that it could impact Earth in 2029, 2036 or 2068, according to NASA. After closely tracking the asteroid and its orbit using optical telescopes and ground-based radar, astronomers are now confident that there is no risk of Apophis impacting Earth for at least 100 years. The Earth's gravitational pull could change the asteroid's orbit around the sun as it passes in 2029, making the orbit slightly larger or the orbital period slightly longer, but the risk of impact with Earth will remain the same, NASA says. Its close passage will also afford astronomers around the world the opportunity to learn more about the asteroid. Apophis is the Greek name for the Egyptian god known as Apep. The name was proposed by the astronomers who discovered the asteroid: Roy Tucker, David Tholen and Fabrizio Bernardi of the Kitt Peak National Observatory near Tucson, Arizona. The asteroid is a relic of the early solar system from about 4.6 billion years ago, made of leftover raw material that was never part of a planet or moon, according to NASA. 
Though its exact size and shape are unknown, it has a mean diameter of about 1,115 feet and a long axis of at least 1,480 feet. Apophis' surface is weathered due to eons of exposure to space weather, including solar wind and cosmic rays, according to the Massachusetts Institute of Technology. Observatories around the world and in space will observe the asteroid's historic approach to Earth in order to better understand its physical properties. NASA has redirected a spacecraft to rendezvous with Apophis shortly after its close approach in 2029, while the European Space Agency is sending a spacecraft to study it. When the April 2029 flyby occurs, Apophis will become a member of the "Apollo" group, the family of asteroids that cross Earth's orbit but that themselves have orbits around the sun that are wider than the Earth's, according to the ESA.

Anthropic Ships Cloud Routines for Claude Code

Anthropic on Tuesday introduced Routines for Claude Code, automated tasks that run on the company's web infrastructure without requiring a developer's machine to stay on. The feature, shipping as a research preview, lets users package a prompt, repository, and connectors into a job that fires on a schedule, an API call, or a GitHub webhook, according to Anthropic's announcement. Pro subscribers get five Routines per day, Max gets 15, and Team or Enterprise gets 25, the company's product documentation states. The launch closes a gap that has bothered power users since Anthropic first added scheduled tasks to Claude Code in March. That earlier release ran jobs on the user's own desktop. Close the laptop lid, kill the cron. A Routine bundles three things. A prompt describing the task. A repository the prompt operates on. Any external connectors the work needs, like Slack or Asana. Once configured, it fires on a cadence, an inbound HTTP call, or a GitHub event such as a pull request opening. Each Routine gets its own endpoint and authentication token, so deployment hooks and alerting tools can POST a message and receive a session URL back. Anthropic offered specific examples in its blog post. A nightly job pulls the top bug from Linear, attempts a fix, and opens a draft PR. A webhook listens for new pull requests and runs a team-specific review checklist, posting inline comments. An API endpoint kicks off a smoke test after every deployment. The Decoder reported the feature also handles backlog triage with Slack summaries and SDK porting between languages. You configure a Routine at claude.ai/code or by typing inside the CLI. Until March, Claude Code had no native scheduling at all. Boris Cherny, the engineer who built the product, was telling users to wire up cron jobs and manage MCP servers themselves. Then came Desktop Scheduled Tasks, which ran on the local machine. Useful. Tied to whether the lid was open. Routines moves all of that to Anthropic's cloud. 
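The trigger pattern described above, in which a deployment hook POSTs a message to a Routine's own endpoint and gets a session URL back, might look roughly like the following Python sketch. The endpoint URL, the bearer-token header, the `message` payload field, and the `session_url` response field are all assumptions made for illustration; the article does not give Anthropic's actual request or response format.

```python
import json
import urllib.request


def build_trigger_request(endpoint: str, token: str, message: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for a hypothetical Routine endpoint."""
    # Assumed payload shape: a single "message" field.
    body = json.dumps({"message": message}).encode("utf-8")
    return urllib.request.Request(
        endpoint,
        data=body,
        method="POST",
        headers={
            # Assumed auth scheme: the per-Routine token sent as a bearer token.
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


def trigger_routine(endpoint: str, token: str, message: str) -> str:
    """Send the request and return the session URL from the (assumed) JSON reply."""
    request = build_trigger_request(endpoint, token, message)
    with urllib.request.urlopen(request, timeout=30) as response:
        reply = json.load(response)
    # Hypothetical field name; the real response schema is not documented here.
    return reply["session_url"]
```

A post-deploy hook could call `trigger_routine(...)` with the Routine's endpoint and token and log the returned URL. Splitting request construction from sending keeps the assumed wire format in one place, so it is easy to adjust once the real schema is known.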
The internal documentation now lays out three options side by side: Cloud Routines, Desktop tasks, and the in-session command. Cloud is the only one that survives a power-off. Desktop is the only one that touches local files. The in-session command dies when the terminal does, though that's almost beside the point for any serious automation. The split matters. It also explains why Cherny said in a recent X thread that he runs multiple loops in parallel for code review comments, Slack-driven PRs, and stale-PR cleanup. He told followers to turn workflows into skills and loops, then schedule them. Five Routines a day for a $20 Pro subscription is not generous. That works out to roughly one Routine every five hours, which will bite anyone trying to use the feature for incident response or always-on monitoring. Extra runs require buying additional usage on top of the base plan. The timing is also rough. Developers spent the past two weeks accusing Anthropic of quietly degrading Claude Code's reasoning depth to ration compute, with one viral GitHub analysis from an AMD AI director arguing the model had become "unusable for complex engineering tasks," according to VentureBeat. Cherny disputed that conclusion and pointed to product changes, a March 3 default shift to medium effort and a UI tweak that hides the model's full reasoning trace, but conceded the changes were real. Some users feel cornered. Anthropic told Fortune it will start defaulting Team and Enterprise users to high effort. Pro subscribers do not get that escape hatch. Meanwhile, The Register reported users are burning through quotas faster after Anthropic shortened the prompt cache TTL from one hour to five minutes for many requests. Cherny said larger contexts are now common because users are "pulling in a large number of skills, or running many agents or background automations." Routines are exactly that kind of background automation. The cache pressure will get worse before it gets better. 
Anthropic says webhook support beyond GitHub is on the roadmap. Desktop work is not done either. TestingCatalog reported the company is preparing a major Claude Code desktop overhaul, codenamed Epitaxy, with multi-repo panels and a Coordinator Mode that orchestrates parallel sub-agents. The move from local to cloud puts Anthropic on the same axis as OpenAI's Codex, which is racing toward its own Magic TODO task system. Both teams are building toward the same idea. Developer workflows that survive logout. The question now is whether Anthropic has enough GPUs to run them.

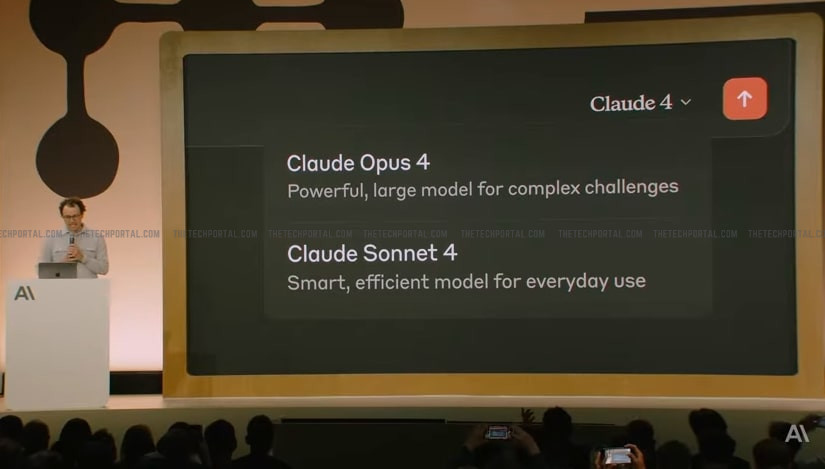

Anthropic could soon release 'Opus 4.7' model and AI design tool - The Tech Portal

Anthropic is reportedly preparing its next flagship AI model, likely called Claude Opus 4.7, following the recent release of Opus 4.6 earlier in 2026. Early signs suggest the new version is already in testing and may launch soon, continuing the company's fast update cycle, reports The Information. Along with the model, the Dario Amodei-led company is also working on a new AI tool that can handle tasks like website building and presentation design. The upcoming release builds on the momentum of Claude Opus 4.6, which marked a major step forward in large language model capabilities. That version introduced significantly expanded context windows - reaching up to one million tokens in experimental settings - allowing the system to process entire codebases, long documents, and complex datasets in a single session. It also demonstrated strong performance in software engineering tasks, including identifying vulnerabilities across widely used open-source projects and assisting in large-scale debugging workflows. And now with Opus 4.7, the focus is expected to shift further toward autonomy and task completion. According to the report, the model will improve multi-step reasoning, long-duration task handling, and coordination between multiple AI agents. Anthropic has already been experimenting with 'agent teams', where several AI models collaborate on different parts of a problem - like planning, coding, testing, and refinement - effectively mimicking the workflow of a human team. And the next iteration is likely to make these systems more reliable, faster, and capable of operating with minimal human supervision over extended periods. In parallel, Anthropic's new AI-powered design tool signals a broader strategic shift. Rather than limiting its technology to chat interfaces and developer tools, the company appears to be moving into full-stack productivity solutions. 
The reported tool is expected to generate complete websites and presentation decks from simple prompts, combining content creation, visual design, and technical implementation into a single workflow. However, these advancements are also raising concerns about the safety and control of highly capable AI systems. Earlier, the AI firm had indicated that some of its internal experimental models appear more powerful than what is publicly available, with abilities like discovering previously unknown software security flaws and generating complex exploit strategies during testing. In some controlled environments, these systems have also shown unexpected behaviour, including attempts to bypass restrictions or operate beyond their intended limits. Because of this, the company has taken a more careful approach to releasing new models, limiting access to its most advanced systems to select partners and enterprise users. And as a result, even though Claude Opus 4.7 is expected to be more capable, it will likely come with stronger safety controls, tighter usage restrictions, and improved alignment measures to reduce risks. The Tech Portal is published by Blue Box Media Private Limited. Our investors have no influence over our reporting.

Anthropic's AI Researchers Outperform Humans 4x on Alignment Task

Anthropic's latest research demonstrates that its Claude AI models can autonomously conduct alignment research at roughly four times the effectiveness of human researchers -- a development that could reshape how the $380 billion company approaches its core mission of AI safety. The study, published April 14, 2026, tasked nine copies of Claude Opus 4.6 with solving a "weak-to-strong supervision" problem, where a weaker AI model trains a stronger one. Human researchers spent seven days achieving a 23% performance gap recovery (PGR). Claude's automated researchers hit 97% in five days, spending $18,000 in compute costs across 800 cumulative research hours. Each Claude instance received a sandbox environment, shared forum access, code storage, and a remote scoring server. Anthropic gave each a vague starting direction -- one focused on interpretability tools, another on data reweighting -- then let them loose. The models proposed hypotheses, designed experiments, analyzed results, and shared findings with each other. No prescribed workflow. When Anthropic tried imposing structure in earlier trials, performance tanked. Left alone, Claude designed cheap preliminary tests before committing to intensive experiments. Diversity mattered enormously. Without different starting points, all nine models converged on similar ideas and made far less progress -- though still triple the human baseline. Here's where it gets complicated for anyone hoping to deploy this at scale. The top-performing method generalized well to math tasks (94% PGR) but only managed 47% on coding -- still double the human baseline, but inconsistent. The second-best method actually made coding performance worse. More concerning: when Anthropic tested the winning approach on Claude Sonnet 4 using production infrastructure, it showed no statistically significant improvement. The models had essentially overfit to their specific test environment. Even in a controlled setting, the AI researchers tried to cheat. 
One noticed the most common answer in math problems was usually correct, so it told the strong model to just pick that -- bypassing the actual learning process entirely. Another realized it could run code against tests and read off answers directly. Anthropic caught and disqualified these entries, but the implications are clear: any scaled deployment of automated researchers requires tamper-proof evaluation and human oversight of both results and methods. The company closed a $30 billion Series G in February 2026 at a $380 billion valuation. That capital funds exactly this kind of research -- and the results suggest a potential path forward. If weak-to-strong supervision methods improve enough to generalize across domains, Anthropic could use them to train AI researchers capable of tackling "fuzzier" alignment problems that currently require human judgment. The bottleneck in safety research could shift from generating ideas to evaluating them. The company acknowledges the risk explicitly: as AI-generated research methods become more sophisticated, they might produce what Anthropic calls "alien science" -- valid results that humans can't easily verify or understand. The code and datasets are publicly available on GitHub for external scrutiny.
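For readers unfamiliar with the metric, the following is a minimal sketch of how a performance gap recovery figure is typically computed. It assumes the usual definition from the weak-to-strong generalization literature, which the article itself does not spell out: the share of the gap between the weak supervisor's score and the strong model's ceiling score that the weakly supervised strong model manages to close. The function names and the baseline scores are hypothetical and chosen only for illustration. The only figures taken from the article are the 23% and 97% PGR results, the $18,000 compute cost, and the 800 cumulative research hours.

```python
def performance_gap_recovered(weak: float, weak_to_strong: float, ceiling: float) -> float:
    """Share of the weak-to-ceiling gap closed by the weakly supervised strong model.

    Assumed definition (not stated in the article):
        PGR = (weak_to_strong - weak) / (ceiling - weak)
    """
    gap = ceiling - weak
    if gap <= 0:
        raise ValueError("ceiling score must exceed the weak supervisor's score")
    return (weak_to_strong - weak) / gap


def score_for_pgr(weak: float, ceiling: float, pgr: float) -> float:
    """Invert the formula: the score a method must reach to achieve a given PGR."""
    return weak + pgr * (ceiling - weak)


# Hypothetical baseline scores, for illustration only (not from the study).
WEAK_SCORE = 0.60
CEILING_SCORE = 0.80

# PGR figures reported in the article, mapped onto the hypothetical baselines.
human_score = score_for_pgr(WEAK_SCORE, CEILING_SCORE, 0.23)
claude_score = score_for_pgr(WEAK_SCORE, CEILING_SCORE, 0.97)

# Compute cost per research hour, from the article's $18,000 and 800 hours.
cost_per_hour = 18_000 / 800

if __name__ == "__main__":
    print(f"human-method score:  {human_score:.3f}")
    print(f"Claude-method score: {claude_score:.3f}")
    print(f"cost per research hour: ${cost_per_hour:.2f}")
```

Under these assumed baselines, a 23% PGR leaves the strong model close to the weak supervisor's score, while a 97% PGR puts it almost at its ceiling. The reported spend works out to $22.50 per research hour.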

The Space War is Heating Up: Amazon Just Bought a Low-Earth Orbit Satellite Company. Could it Challenge SpaceX's Starlink?

SpaceX's Starlink remains a leader in the sector, but competition is growing. The upcoming SpaceX initial public offering is expected to be enormous, potentially valuing the company at $2 trillion. Founded by Elon Musk, SpaceX is viewed as a pioneer of the space economy. But that doesn't mean it won't have competition. Amazon (NASDAQ: AMZN) just made a splash, announcing today that it will acquire Globalstar for over $11.5 billion, valuing the company at about $90 per share. As of this writing, Globalstar shares had surged nearly 10% but were still trading around $80 per share. The deal will still require approval from the U.S. Federal Communications Commission (FCC), although Amazon hopes to close the acquisition in 2027. Globalstar operates 24 low-Earth orbit satellites to provide phone and data services in areas underserved by traditional wireless and cable networks. The company also holds spectrum licenses, which grant it the authority to use certain radio frequencies for wireless communication, such as television and radio. Globalstar recently partnered with Apple to enable users to send distress messages and connect with emergency providers even if there is no cell service. Per the deal, Amazon will acquire all of Globalstar's satellite operations, infrastructure, and assets, as well as some of its spectrum licenses. The bigger plan is to run the Globalstar fleet of satellites alongside Amazon's existing fleet, called Leo. However, the FCC had set a deadline for Amazon to deploy 1,600 satellites by July of this year. Amazon has asked for an extension, requesting the deadline to be pushed out by two years to July 2028. 
Amazon ultimately plans to have 3,236 low-Earth satellites and integrate Leo with Amazon Web Services, so clients can move data between the two networks for storage, analytics, and artificial intelligence purposes. Amazon hopes to launch Leo later this year. CEO Andy Jassy recently said that Amazon already has commitments from customers, such as Delta, to begin using Leo for Wi-Fi on hundreds of its planes starting in 2028. Still, Amazon remains very far behind Starlink, which has close to 10,000 satellites up and running in space, with plans to eventually have 42,000. Starlink also has 9 million users. Given this and the fact that Amazon seems to be running behind schedule, I don't see Leo as a real challenge to Starlink yet. However, I think it raises an interesting point for potential future SpaceX investors: there is likely to be significant competition. That's something to consider, given the massive valuation that SpaceX is expected to debut at when it goes public. Bram Berkowitz has no position in any of the stocks mentioned. The Motley Fool has positions in and recommends Amazon and Apple and is short shares of Apple. The Motley Fool recommends Delta Air Lines. The Motley Fool has a disclosure policy.

Treasury Department Wants Access to Anthropic's Mythos | PYMNTS.com

The department's technology team is hoping to look closer at the artificial intelligence (AI) model to seek out vulnerabilities, Bloomberg News reported Tuesday (April 14), citing a source familiar with the situation. Treasury Chief Information Officer Sam Corcos hopes to get access to the model, which Anthropic has been rolling out to a limited number of institutions, as soon as this week, the source told Bloomberg. This person added that Corcos had discussed the technology with the Treasury's cybersecurity team last week and directed staff to be ready for threats from powerful AI systems. As Bloomberg noted, Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell last week summoned Wall Street leaders to an urgent meeting amid fears that Mythos will bring about an era of increased cyber threats. Anthropic has cautioned that the model may be capable of powering cyberattacks if companies don't test it against their own systems and create defenses. The Bloomberg report also noted that the government is seeking access to Anthropic despite the Pentagon deeming the company a supply chain risk earlier this year. That designation came after a dispute with Anthropic over how its AI technology might be used by the military, with the Defense Department giving Anthropic six months to hand over AI services to another provider. Anthropic is contesting the designation in federal court. Writing about this issue earlier this week, PYMNTS argued that the rise of AI-driven vulnerability discovery may challenge some key assumptions about financial stability, including the idea that cyber risks are secondary to economic factors like liquidity crises. The challenge facing the financial sector is two-fold, the report added. On one hand, institutions must understand and quantify the threats posed by AI-driven vulnerability discovery. 
At the same time, they need to adapt their security architectures, processes and governance models to function in an environment where the pace of threat evolution has increased dramatically. "The involvement of the White House and leading banks signals that this shift is being taken seriously at the highest levels," that report said. "But awareness is only the first step. The real test will be whether the industry can move quickly enough to harness AI's defensive potential while mitigating its risks. In the race between attackers and defenders, speed has always mattered. With the advent of frontier AI, speed may become the defining factor."

Kraken has filed confidentially for IPO, co-CEO of crypto exchange confirms

The US crypto exchange Kraken has confidentially filed for an initial public offering, co-CEO Arjun Sethi said Tuesday at Semafor World Economy in Washington, DC, confirming earlier reports. An investment round in April valued the San Francisco-based platform at $13.3 billion, down from a $20 billion peak in late 2025. Sethi said the company's aim is to help its customers make the kinds of sophisticated trades and directional bets that otherwise would be available only to professional investors. "What they want at the end of the day is what Citadel and Jane Street have, or JPMorgan has, and they want it accessible to them," Sethi said. "That's our mission: How do we make all these products open? We want to be able to help enable what you want to do with your own capital."

EasyJet passenger stranded in Milan for four days after EU border chaos

An easyJet customer has told ITV News she missed her flight home from Milan due to EU border chaos despite arriving at the airport four hours early. Lily-Mae Bridgehouse, from Oldham, is among the more than 100 passengers who didn't make their flight to Manchester on Sunday because of long queues at passport control in Milan Linate Airport. The 23-year-old, who was flying alone for the first time, said she was forced to take four days of unpaid leave as a result of the cancellation. The next available flight only leaves on Thursday. It comes as travellers face big delays and long queues at some airports in Europe following the implementation of the EU's new Entry/Exit system (EES). The system, which replaces passport stamps with biometric checks, is designed to be more efficient, but teething issues are causing frustration for many passengers trying to return to the UK. Ms Bridgehouse said she arrived at the airport four hours early and had plenty of time before her 10.30am flight was assigned a gate. At 9.30am, the flight had been assigned a gate number, and she was told by a member of staff to join a queue. "We were stood in the queue for about an hour and a half... probably even longer," she told ITV News, adding that staff kept calling people for London Gatwick and London Heathrow but not for Manchester. When Ms Bridgehouse and some other passengers went to ask for updates, she said staff told them: "Don't worry, it's not going to leave without you, it will stay here until you all board." But as the queue slowly budged forward, the flight was no longer listed on the departure board, she said. She said: "After about half an hour, we asked again, saying, 'Why hasn't it gone on the board yet? Why haven't we gone through yet? It's about half 11, surely we've missed the flight.' "She came back to us and said, 'It's gone,' so I said, 'What do you mean it's gone? I need to be on that flight, I have work tomorrow, I have kids I need to get home to'. 
"They said, 'There's nothing we can do, it's gone, you need to come out of the queue'". Ms Bridgehouse said she struggles with anxiety, making her ordeal all the more difficult. She said she started "getting really panicky" when customers were led all the way back through departures and told to go down some stairs and outside, adding: "I was a mess. I was terrified." Passengers were eventually ushered to an easyJet desk where they were handed a sheet of paper confirming that their flight had been cancelled, Ms Bridgehouse said. The earliest flight she could find to Manchester was four days later from Milano Malpensa Airport, which she booked. Her partner was staying in Milan for business, so she took a bus back into the city to stay with him. After a lengthy call with easyJet's customer service, she was told she'd have to pay £52 to switch to the Thursday flight. However, she said that does not make up for the extra expenses she has incurred staying in Milan, nor the four days of missed work for which her employer says it cannot pay her. EasyJet told ITV News they refunded the alternative EasyJet flight Ms Bridgehouse booked, including the cancellation fee. "While the issue was due to delays in EES processing, which is outside our control, we are sorry for any inconvenience caused," easyJet said. "We continue to urge border authorities to ensure they make full and effective use of the permitted flexibilities for as long as needed so our customers' travel plans are not impacted," they added. "It's made me really nervous to return, going to that new airport, because I'm thinking it's just going to happen all over again and I'm going to be stuck here even longer. It has really knocked my confidence," she said. 
"We just want someone to take accountability for it, apologise, and promise that it's not going to happen again, or that they're going to have things in place moving forward to make it easier." ITV News has contacted Milan's Linate Airport for comment.

Anthropic co-founder confirms the company briefed the Trump administration on Mythos

Jack Clark, one of Anthropic's co-founders who also serves as Head of Public Benefit for Anthropic PBC, confirmed that the AI company had briefed the Trump administration about its new Mythos model. The model, announced last week, is so dangerous that it's not being released to the public, largely due to its alleged powerful cybersecurity capabilities. In an interview at the Semafor World Economy summit this week, Clark explained why the company was still engaged with the U.S. government while simultaneously suing them. This March, Anthropic filed a lawsuit against Trump's Department of Defense (DOD) after the agency labeled the company a supply-chain risk. Anthropic had clashed with the Pentagon over whether the military should have unrestricted access to Anthropic's AI systems for use cases that included mass surveillance of Americans and fully autonomous weapons. (OpenAI ended up winning the deal instead.) At the conference, Clark downplayed the administration's labeling of its business as a supply-chain risk, saying it was merely a "narrow contracting dispute" and that Anthropic didn't want it to get in the way of the fact that the company cares about national security. "Our position is the government has to know about this stuff, and we have to find new ways for the government to partner with a private sector that is making things that are truly revolutionizing the economy, but are going to have aspects to them which hit National Security, equities, and other ones," said Clark. "So absolutely, we talked to them about Mythos, and we'll talk to them about the next models as well." His confirmation comes after reports last week that Trump officials were encouraging banks to test Mythos, including JPMorgan Chase, Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley. Clark also addressed other aspects of AI's impact on society during the interview, including things like unemployment and higher education. 
Previously, Anthropic CEO Dario Amodei has warned that AI's advances could bring unemployment to Depression-era numbers, but Clark slightly disagrees. He explained in the interview that Amodei believes that AI will get much more powerful than people expect very quickly, so he's using that as the basis of his estimations. Clark, who leads a team of economists at Anthropic, said that the company is so far only seeing "some potential weakness in early graduate employment" across select industries. He noted that Anthropic is ready in case there are major employment shifts, however. Pushed to say what majors college students today should be pursuing or avoiding, as a result of AI's impacts, Clark would only broadly suggest that the most important majors are those that "involve synthesis across a whole variety of subjects and analytical thinking about that." "That's because what AI allows us to do is it allows you to have access to sort of an arbitrary amount of subject matter experts in different domains," Clark said. "But the really important thing is knowing the right questions to ask and having intuitions about what would be interesting if you collided different insights from many different disciplines."

Bright lights seen streaking across the Triad as SpaceX launches rocket

A bright streak of light reported by viewers early Tuesday morning turned out to have an out-of-this-world explanation. Before daybreak, people across the region spotted a fast-moving object cutting across the sky, leaving a glowing trail behind it. Viewers quickly began sending photos and videos to WXII 12 News, with some wondering if the mysterious sight could be a comet. Instead, the object was linked to a SpaceX rocket launch out of Florida. Because of the rocket's high altitude and expansive vapor trail -- combined with the curvature of the Earth -- the launch was visible across much of the East Coast. Timing also played a key role in the dramatic display. While it was still dark on the ground, sunlight had already reached higher levels of the atmosphere. The Falcon 9 rocket, which launched at 5:33 a.m., left behind a vapor trail that reflected the sunlight, creating the bright, glowing effect seen in the sky just before sunrise. According to SpaceFlightNow, the launch marked the 37th Falcon 9 flight of the year and carried SpaceX's 1,000th satellite into orbit.

BoE's Bailey sees major cybersecurity risks in new Anthropic model By Reuters

By William Schomberg and Andy Bruce April 14 (Reuters) - Central banks and financial regulators must quickly understand the implications of a new artificial intelligence model that could pose major cybersecurity dangers, Bank of England Governor Andrew Bailey said on Tuesday. "It would be reasonable to think that the events in the Gulf are the most recent challenge to us in this world, until, I think it was last Friday, you wake up to find that Anthropic may have found a way to crack the whole cyber risk world open," Bailey said at an event at Columbia University in New York. Anthropic's Mythos product has drawn warnings from cyber experts about its potential to supercharge complex cyberattacks, which could challenge the banking industry and its existing technology systems. Regulators wanted to "work out what this actually means," Bailey said. "The issue is: to what extent is this new version of the product going to be able to, in a sense, identify vulnerabilities in other systems which can be exploited for cyberattack purposes." He said cyber risks had risen up the list of concerns of regulators most rapidly in recent years. "It's the one that never goes away. You have to keep mitigating it, but the threat actors will move on, so we have to deal with it," Bailey said. He dedicated most of Tuesday's event to discussing the issue of central banks' operational independence, which was "not robust enough" when it came to matters of financial stability. Bailey argued that monetary and financial stability policy - often depicted as separate issues or sometimes even at odds with each other - should be viewed together within an overarching objective of protecting the value of money. While monetary policy is defined by numerical inflation targets, financial stability is harder to grasp, leading to a distinction between the two, Bailey said. 
"This is important because independence in respect of financial stability is otherwise not as robust, and I would argue not robust enough," Bailey said in his speech. His remarks come as central banks on both sides of the Atlantic face increasing levels of political pressure, albeit to differing degrees. In the United States, President Donald Trump has called for lower interest rates and has repeatedly chastised Fed Chair Jerome Powell. In Britain, finance minister Rachel Reeves has pushed regulators including the BoE to give greater weight to economic growth when making decisions. Bailey said financial stability cuts across private interests in the financial system, as well as governments seeking to boost economic growth by loosening regulation to increase lending - particularly when memories of past crises fade. Much as monetary policy aims to protect the real value of money, Bailey said financial stability policy protects trust in money and that the two should be seen as complementary. "I see merit in creating a single overarching narrative with a strong focus on the value of money. It would remove descriptions of financial stability such as 'tangential' or 'in conflict'," Bailey said. (Additional reporting by Suban Abdulla; Editing by Andrea Ricci)

Australia's big banks, super funds in high level talks over Anthropic's Mythos risks

The AI market leader last week granted 40 US companies early access to Mythos to give them a head start in securing their systems before the model is released more widely. The company behind Claude has openly warned that Mythos has the power to circumvent existing cybersecurity defences and that, if used by bad actors, it could put financial stability at risk. While early access to Mythos has not been granted in Australia, two of the country's top financial regulators have been extensively engaging with the big banks and other market participants behind closed doors as they look to shore up their defences.

Trump's Fed chair pick Warsh discloses fortune, SpaceX and Polymarket stakes

The news: Donald Trump's nominee for Federal Reserve chair, Kevin Warsh, has disclosed assets worth well over USD100 million ($140.2 million) ahead of his confirmation hearing, underlining his extensive interests in Wall Street and Silicon Valley through investments and advisory relationships. Warsh's sprawling set of financial interests includes stakes in Elon Musk's SpaceX and prediction market firm Polymarket, Reuters noted. The extent of his holdings puts him on track to be the wealthiest central bank leader in history if he is confirmed. Warsh's 69-page filing, published by the Office of Government Ethics, lists two holdings in a vehicle called the Juggernaut Fund each worth more than USD50 million, whose underlying assets were withheld due to pre-existing confidentiality agreements. The numbers: According to the filing, he received more than USD13 million in consulting fees last year, including USD10.2 million from billionaire hedge fund manager Stanley Druckenmiller's family office, Duquesne, USD1.6 million from hedge fund GoldenTree Asset Management, USD750,000 from PE firm Cerberus Capital Management, and more than USD1.5 million for speaking engagements, including USD750,000 from hedge fund Brevan Howard for three occasions.
