News & Updates

The latest news and updates from companies in the WLTH portfolio.

Prediction: SpaceX Stock Will Crash This Year. Here's Why. | The Motley Fool

Elon Musk may seem to have the Midas touch when it comes to business. With his track record of beating the odds and creating successful businesses that can disrupt entire industries, it is tempting for investors to bet on any company that has his name attached to it. Past success, however, doesn't guarantee future results. And there are several reasons SpaceX might not live up to expectations after its initial public offering (IPO) planned for next month. Let's dig deeper into how unprofitable artificial intelligence (AI) exposure and a highly speculative business strategy could cause the stock to underperform after its public debut.

SpaceX could become the largest public stock debut in history with an expected valuation of $2 trillion. To put that number in perspective, it would make SpaceX worth more than all but six public companies on the planet. Furthermore, SpaceX's potential market capitalization isn't well supported by its business fundamentals.

This month, SpaceX filed its S-1 with the Securities and Exchange Commission (SEC). This document is required in the pre-IPO process, and it gives the market its first peek inside the financials of the privately held company. In 2025, SpaceX's revenue jumped 33% year over year to $18.7 billion, which is quite impressive for a company of its size. On the other hand, expenses (particularly for research and development) are also ballooning at an even faster clip, which led to operating income collapsing from a positive $466 million to a loss of $2.6 billion in the period.

Investors shouldn't be too surprised that a rocket company is spending huge amounts on R&D. After all, this is a complex technology with huge regulatory and testing requirements. However, a rising portion of SpaceX's spending is going toward a much more speculative and arguably less beneficial part of its business -- generative AI. According to the S-1 filing, SpaceX's AI segment generated an operating loss of $6.36 billion in 2025.
This figure gets even more alarming when you remember that this happened before the acquisition of xAI in February 2026. The new subsidiary will likely make the cash burn worse because of the need to build and maintain data-center capacity and keep up with rivals like OpenAI and Anthropic.

There are signs that xAI may already be falling behind. Although the company claims capacity and energy are its primary constraints, lack of demand may play an even bigger role. The company is actually renting out excess capacity to rivals, with Anthropic reportedly paying $1.25 billion per month for access to xAI's Colossus data centers. In the near term, this deal sounds like good news for SpaceX because of the enormous revenue opportunity. However, it represents training and inference capacity that won't be going to the company's in-house large language model (LLM) Grok. Furthermore, Anthropic can exit the deal before it expires in 2029. And over time, SpaceX's data centers could struggle to compete with hyperscalers like Amazon, which plans to make $200 billion in AI-related capital expenditures this year alone.

Elon Musk's proposed space-based data centers could eventually give the company an edge by enabling access to abundant solar energy and dramatically reducing cooling costs. But this strategy could run into problems ranging from space debris to maintenance challenges and should not be seen as a realistic business plan with current technology.

Despite his entrepreneurial success, Elon Musk has developed a track record of overpromising and underdelivering. And the SpaceX IPO is shaping up to be one of the biggest disappointments yet.
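As a quick check, the SpaceX figures above can be run through a few lines of Python. This is a back-of-envelope sketch that uses only the rounded numbers quoted in this article. It assumes "expenses" means total operating costs, i.e. revenue minus operating income, because the S-1's detailed cost breakdown is not reproduced here.

```python
# Back-of-envelope check of the 2025 SpaceX figures quoted above (USD billions).
# Assumption: "expenses" = revenue - operating income (total operating costs).

revenue_2025 = 18.7
revenue_growth = 0.33                               # 33% year over year
revenue_2024 = revenue_2025 / (1 + revenue_growth)  # implied prior-year revenue

op_income_2024 = 0.466
op_income_2025 = -2.6

expenses_2024 = revenue_2024 - op_income_2024
expenses_2025 = revenue_2025 - op_income_2025
expense_growth = expenses_2025 / expenses_2024 - 1

ai_segment_loss_2025 = 6.36

print(f"Implied 2024 revenue:      ${revenue_2024:.1f}B")
print(f"Implied expense growth:    {expense_growth:.0%} (revenue grew {revenue_growth:.0%})")
print(f"Operating income swing:    ${op_income_2024 - op_income_2025:.2f}B")
print(f"AI segment loss / revenue: {ai_segment_loss_2025 / revenue_2025:.0%}")
```

Under that assumption, costs grew by roughly 57% while revenue grew by 33%. That is what "an even faster clip" looks like in numbers.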

What Anthropic Becoming Top Private AI Firm Means for Investors | Investing.com

Anthropic is closing in on a $900 billion valuation this week. That number is not a typo. According to Bloomberg and the Financial Times, the Claude maker is finalizing a funding round exceeding $30 billion. If it closes as reported, Anthropic will surpass OpenAI as the world's most valuable private AI company. For investors, this is not just a headline. It is a signal about where institutional money believes the AI race is headed.

Four firms are co-leading the round: Sequoia Capital, Dragoneer Investment Group, Altimeter Capital, and Greenoaks Capital Partners. Each is reportedly committing roughly $2 billion. Existing backers, including Peter Thiel's Founders Fund and General Catalyst, are also expected to participate. The deal is structured at a pre-money valuation above $900 billion. That figure is nearly 2.4 times Anthropic's $380 billion valuation from February 2026. It also edges past OpenAI's most recent private market valuation of $852 billion. The round was reportedly arranged in a matter of weeks. That pace matters. It tells you this was not a deliberate fundraising process. It tells you investors pushed their way in.

The valuation escalation is not arbitrary. Anthropic's annualized revenue run rate stood at roughly $9 billion at the end of 2025. By early April 2026, it had crossed $30 billion, according to Bloomberg. CEO Dario Amodei described the pace as "80x growth" in annualized revenue within the first quarter of 2026 alone. For context, Salesforce took approximately two decades to reach $30 billion in annual revenue. Anthropic did it in under three years from a standing start.

Enterprise customers are the engine behind this growth. More than 1,000 businesses now spend over $1 million annually on Claude. Enterprise clients represent roughly 80% of total revenue, according to Sacra research. That is not consumer hype. That is recurring institutional spending with high switching costs.
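The multiples implied by these reported figures can be checked in a few lines of Python. This sketch uses only the numbers quoted above. The round is treated as exactly $30 billion even though it is reported as "exceeding" that amount, so the co-leads' share is an upper bound. The valuation-to-run-rate ratio is a simple quotient, not a formal valuation metric.

```python
# Ratios implied by the Anthropic figures reported above (USD billions).
pre_money = 900                # reported as "above $900 billion"
feb_2026_valuation = 380
openai_valuation = 852
run_rate_end_2025 = 9
run_rate_apr_2026 = 30         # "crossed $30 billion"
co_leads, per_co_lead = 4, 2   # four co-leads at roughly $2B each
round_size = 30                # "exceeding $30 billion": treated as a floor

step_up = pre_money / feb_2026_valuation
premium_vs_openai = pre_money / openai_valuation - 1
run_rate_multiple = run_rate_apr_2026 / run_rate_end_2025
co_lead_share_max = co_leads * per_co_lead / round_size
valuation_to_run_rate = pre_money / run_rate_apr_2026

print(f"Step-up since Feb 2026:          {step_up:.2f}x")
print(f"Premium over OpenAI:             {premium_vs_openai:.1%}")
print(f"Run-rate growth, end-2025 to Apr: {run_rate_multiple:.1f}x")
print(f"Co-leads' share of the round:    at most {co_lead_share_max:.0%}")
print(f"Valuation / run rate:            {valuation_to_run_rate:.0f}x")
```

Every dollar raised above $30 billion shrinks the co-leads' share further, which is why the script reports it as a ceiling.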
Claude Code, the company's agentic coding tool, has also emerged as a breakout product. It crossed $2.5 billion in annualized revenue and has more than doubled since January 2026, according to Anthropic's own disclosures.

This funding round is likely Anthropic's last major private raise. Bloomberg has reported that the company is weighing an IPO as early as October 2026. Goldman Sachs, JPMorgan Chase, and Morgan Stanley are already in early discussions as lead underwriters, according to multiple reports. The raise is designed to bridge a compute gap before a public listing. Anthropic recently secured agreements with Google and Broadcom for approximately 3.5 gigawatts of TPU compute capacity starting in 2027. Amazon has separately committed up to $25 billion in total investment, securing additional compute for Claude model training and deployment. At a $900 billion pre-money valuation, a successful IPO would place Anthropic among the most valuable publicly traded companies on earth. That matters for retail investors who cannot access private rounds today but will face a decision when a prospectus drops.

There are real risks here. Anthropic reports revenue from cloud resellers like Amazon Web Services and Google on a gross basis, counting total end-customer spend as revenue. That accounting method inflates top-line figures relative to companies that report net of reseller payouts. Investors comparing Anthropic's revenue to public-company peers should apply that caveat carefully.

The company also faces active legal disputes. A federal designation labeled Anthropic as a supply chain risk after it declined to allow its technology to be used for autonomous weapons. A preliminary injunction is blocking enforcement, but the case remains live. Anthropic estimated that the dispute put hundreds of millions to several billion dollars of 2026 revenue at risk. Furthermore, Anthropic faces staggering infrastructure liabilities to maintain its technological lead.
A prime example is an agreement to pay xAI $1.25 billion per month for compute capacity through May 2029, a massive ongoing expense that highlights the intense burn rate required before the company hits the public markets.

None of that has slowed investor demand. The question now is whether public market investors will price Anthropic with the same urgency that private markets clearly have. The answer, expected as early as October, will reset valuation benchmarks across the entire AI sector.
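The gross-versus-net caveat is easier to see with numbers. Below is a minimal Python sketch. The 20% reseller share is a purely hypothetical figure chosen for illustration, because the article does not disclose what share AWS or Google retain. `reported_revenue` is an illustrative helper, not a formula taken from any accounting standard.

```python
def reported_revenue(end_customer_spend: float, reseller_share: float, gross: bool) -> float:
    """Revenue recognized on a sale routed through a cloud reseller.

    gross=True  -> count the full end-customer spend as revenue.
    gross=False -> count only what is left after the reseller's cut.
    reseller_share is a hypothetical fraction in [0, 1).
    """
    if not 0 <= reseller_share < 1:
        raise ValueError("reseller_share must be in [0, 1)")
    if gross:
        return end_customer_spend
    return end_customer_spend * (1 - reseller_share)


spend = 10.0   # hypothetical $10B of end-customer spend via resellers
share = 0.20   # hypothetical 20% reseller cut

gross_rev = reported_revenue(spend, share, gross=True)
net_rev = reported_revenue(spend, share, gross=False)
print(f"Gross: ${gross_rev:.1f}B  Net: ${net_rev:.1f}B  "
      f"Gross exceeds net by {gross_rev / net_rev - 1:.0%}")
```

With a 20% cut, gross revenue comes out 25% larger than net revenue. The gap looks bigger than the cut itself because it is measured against the smaller net figure.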

The SpaceX IPO and Data Centers in Space

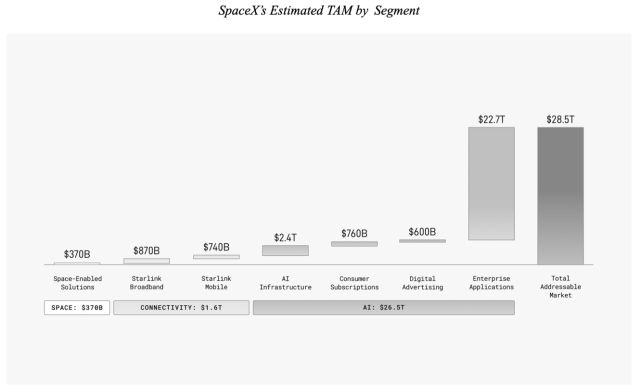

It's hardly the biggest problem in the world -- or perhaps the height of privilege to consider it a problem at all -- but one of the most annoying consumer experiences is booking an Uber Black and realizing you got assigned a Tesla Model Y (Uber finally stopped allowing new Model Ys onto Black last year). Buckle up for an uncomfortable back seat, basic plastic finishes, and, all too often, potential car sickness from a driver who hasn't completely mastered the Tesla's aggressive regenerative braking.

Still, the fact that the Model Y ever made it to the Black level is a testament to the brand Elon Musk built. Back in 2016, when 300,000 people dropped $1,000 each in a matter of hours to reserve an as-yet-unreleased Model 3, I explained in "It's a Tesla" why it happened:

> The real payoff of Musk's "Master Plan" is the fact that Tesla means something: yes, it stands for sustainability and caring for the environment, but more important is that Tesla also means amazing performance and Silicon Valley cool. To be sure, Tesla's focus on the high end has helped them move down the cost curve, but it was Musk's insistence on making "An electric car without compromises" that ultimately led to 276,000 people reserving a Model 3, many without even seeing the car: after all, it's a Tesla.

This is the same brand halo that landed what is, if we're honest, a pretty basic car on the Uber Black list. What actually makes these cars compelling is the extent to which they are computers on wheels: I know plenty of very rich people who drive a Tesla not for the finishes but rather the Full Self-Driving (Supervised); there is nothing like it on the market, at least when it comes to cars you can own. Tesla appears to be doubling down on this point of differentiation: the company stopped production of the Models S and X earlier this year, focusing production resources on the CyberCab and robots; if you want your car to drive itself, you'll get the same model as everyone else.
It reminds me of Andy Warhol's famous quote:

> What's great about this country is that America started the tradition where the richest consumers buy essentially the same things as the poorest. You can be watching TV and see Coca-Cola, and you know that the President drinks Coke, Liz Taylor drinks Coke, and just think, you can drink Coke, too. A Coke is a Coke and no amount of money can get you a better Coke than the one the bum on the corner is drinking. All the Cokes are the same and all the Cokes are good. Liz Taylor knows it, the President knows it, the bum knows it, and you know it.

That "tradition" is scale, and America is indeed better at it than any other country in the world; and, amongst Americans, no one pursues and seeks to leverage scale quite like Musk. From a press release from American Airlines:

> American Airlines today announced a sweeping modernization of its narrowbody inflight customer experience with the installation of Starlink, the fastest Wi-Fi in the sky, on more than 500 narrowbody aircraft beginning in Q1 2027. Starlink is widely regarded as the world's most advanced satellite constellation using a low Earth orbit to deliver broadband Internet capable of supporting inflight streaming, online gaming, collaborative meeting tools and more. With thousands of satellites in low Earth orbit, Starlink can deliver multigigabit connectivity to aircraft using its Aero Terminal, which can support up to 1 Gbps per antenna.
>
> "As a premium global airline, we are continuously seeking out world-class partners like Starlink to deliver what our customers need and want," said American Airlines Chief Customer Officer Heather Garboden. "The addition of Starlink solidifies American as a leading airline in keeping passengers connected in flight."
>
> As part of American's commitment to an elevated onboard experience, Starlink will enable seamless streaming, browsing and real-time communication capabilities across American's domestic and short-haul international routes.
I quoted the press release purely for the amusement of watching American Airlines, which has in recent years built its strategy around offering anything-but-premium service on routes you need, bill its Starlink deal as a commitment to "an elevated onboard experience." That may have been the argument for United's Starlink deal when it was announced in 2024, but by this point it's table stakes, which is surely exactly how Musk wants it.

Starlink is the consumer-facing business of SpaceX, generating $8.7 billion in revenue last year and $4.4 billion in profit; while it's not totally clear exactly how SpaceX accounts for launch costs, obviously Starlink benefits greatly from the fact that it has access to SpaceX's launch capacity. That launch capacity has resulted in over ten thousand active satellites in low Earth orbit, delivering low-latency, high-speed Internet anywhere in the world -- including in the air. That's the carrot for airlines; the stick is the prospect of everyone else having the same service, and customers making flight decisions based on the quality of Internet access available.

There is a similarity to Tesla in this way. Musk companies at their best don't win the game; they change the rules through scale, such that billionaires buy economy cars because they actually drive themselves (with supervision), and airlines transform the consumer experience on their own dime. Musk makes all-in bets -- whether that be in terms of launch capacity or in autonomous driving -- not by making rational short-term business decisions, but by starting with the desired end state and working backwards.

Tech has a long history of silly charts -- there is an entire category known as Bezos charts -- and the SpaceX S-1 has one that made me laugh. It came in the discussion of SpaceX's total addressable market:

> We believe we have identified the largest actionable total addressable market ("TAM") in human history.
> We estimate that our quantifiable TAM is $28.5 trillion, consisting of $370 billion in Space from space-enabled solutions; $1.6 trillion in Connectivity across $870 billion in Starlink Broadband and $740 billion in Starlink Mobile as well as additional opportunities in enterprise and government; $26.5 trillion in AI across $2.4 trillion in AI infrastructure, $760 billion in consumer subscriptions, $600 billion in digital advertising, and $22.7 trillion in enterprise applications. For illustrative purposes of sizing our addressable market opportunity, we exclude China and Russia from our global estimates.

This image is approximately to scale vertically, but certainly not horizontally: I could use the help in really wrapping my mind around the $26.5 trillion AI opportunity, given it's more than 13 times the space and connectivity opportunity combined!

In all seriousness, the numbers are obviously absurd, but then again, everything about this IPO is absurd. SpaceX is seeking a $2 trillion valuation on a mere $18.67 billion in revenue with $4.9 billion in losses last year, and growth actually slowed from 35% to 33%. That slowdown happened despite the addition of xAI (and thus also X), which tipped the company from a small profit to that massive loss, thanks to $5.1 billion in AI R&D expense. That R&D, keep in mind, went towards building a model that is in 5th place, and whose entire founding team recently left the company. But sure, $26.5 trillion AI opportunity!

This is not to say that SpaceX won't get its desired valuation. Tesla's valuation never made any sense right up until the Models 3 and Y actually worked out, causing Tesla's share price to soar (and even then it was hard to ever build a financial model that justified the new share price).
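The S-1 arithmetic can be sanity-checked in a few lines of Python. This sketch uses only the figures in the quoted passage and in this article. It confirms three things: the component figures round to the stated segment totals, the AI segment is about 13.5 times space plus connectivity, and a $2 trillion valuation is roughly 107 times last year's revenue.

```python
# S-1 TAM components as quoted above (USD trillions).
space = 0.370
connectivity_parts = {"Starlink Broadband": 0.870, "Starlink Mobile": 0.740}
ai_parts = {
    "AI infrastructure": 2.4,
    "consumer subscriptions": 0.760,
    "digital advertising": 0.600,
    "enterprise applications": 22.7,
}
# Segment totals as stated in the filing.
connectivity_stated, ai_stated, tam_stated = 1.6, 26.5, 28.5

connectivity_sum = sum(connectivity_parts.values())     # components of Connectivity
ai_sum = sum(ai_parts.values())                         # components of AI
segments_sum = space + connectivity_stated + ai_stated  # stated segments added up

ai_vs_rest = ai_stated / (space + connectivity_stated)

valuation = 2.0          # target valuation, USD trillions
revenue_2025 = 0.01867   # $18.67B expressed in USD trillions
price_to_sales = valuation / revenue_2025

print(f"Connectivity parts: {connectivity_sum:.2f}T (stated {connectivity_stated}T)")
print(f"AI parts:           {ai_sum:.2f}T (stated {ai_stated}T)")
print(f"Segment total:      {segments_sum:.2f}T (stated {tam_stated}T)")
print(f"AI / (space + connectivity): {ai_vs_rest:.1f}x")
print(f"Valuation / 2025 revenue:    {price_to_sales:.0f}x")
```

The totals reconcile to within rounding. In other words, the absurdity lies in the inputs, not in the addition.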
Musk's ability to make his own reality starts with investors. Here is what I wrote in 2021's "Mistakes and Memes," comparing Apple and Tesla:

> This comparison works as far as it goes, but it doesn't tell the entire story: after all, Apple's brand was derived from decades building products, which had made it the most profitable company in the world. Tesla, meanwhile, always seemed to be weeks from going bankrupt, at least until it issued ever more stock, strengthening the conviction of Tesla skeptics and shorts. That, though, was the crazy thing: you would think that issuing stock would lead to Tesla's stock price slumping; after all, existing shares were being diluted. Time after time, though, Tesla announcements about stock issuances would lead to the stock going up. It didn't make any sense, at least if you thought about the stock as representing a company.
>
> It turned out, though, that TSLA was itself a meme, one about a car company, but also sustainability, and most of all, about Elon Musk himself. Issuing more stock was not diluting existing shareholders; it was extending the opportunity to propagate the TSLA meme to that many more people, and while Musk's haters multiplied, so did his fans. The Internet, after all, is about abundance, not scarcity. The end result is that instead of infrastructure leading to a movement, a movement, via the stock market, funded the building out of infrastructure.

I explained in that Article why I generally did not cover Tesla's financial results, and the reasoning extends to why I don't expect to cover SpaceX's: Musk is the master of memes, and is himself a meme. He offers a dream -- Mars, fully autonomous vehicles, an addressable market of $28.5 trillion -- and positions his companies and their stock as access to that dream, and through the alchemy of capital markets, transforms shared delusion into mass-market reality. Musk's track record matters in this regard.
Building an electric car company was possible, as was full self-driving (supervised); at the same time there were ever-increasing government mandates and programs around decreasing emissions that acted as the stick to Tesla's carrot. Similarly, landing rockets was possible, and the new market creation downstream from correspondingly lower launch costs was comprehensible. That Musk succeeded in both instances gives him the benefit of the doubt. The question that matters, then, is not if the numbers make sense right now (they absolutely do not); what matters is if the dream is even possible, and if there are actual reasons to think it might happen. I think that data centers in space meet these conditions.

The first question about data centers in space is if they are even possible, and I think the answer is clearly yes. The key thing to consider is that there is no requirement that these data centers look anything like data centers on earth. On earth we build massive buildings full of GPUs with massive infrastructure for cooling those GPUs and massive power plants (or a connection to a grid which connects to massive power plants) to power those GPUs. The idea of transporting these massive structures to space sounds implausible, and it is! However, there is no reason that space data centers would look like data centers on earth.

What makes far more sense is to think about an individual satellite as something akin to a rack. Right now the largest Starlink satellite in orbit is the V2 Mini Direct-to-Cell, which measures 7.4 meters by 2.7 meters by 0.3 meters (estimated); an NVL72 rack from Nvidia, meanwhile, measures 2.2 meters by 1.1 meters by 0.6 meters, so we're already in the right size range. The V2 Mini Direct-to-Cell consumes (and dissipates) up to an estimated 25kW of power; the NVL72 up to 135kW, and it can fit a 1-trillion-parameter model quantized to FP4.
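The size and power comparison can be made explicit in a short Python sketch. The dimensions and power figures are the estimates quoted in the text. The FP4 memory figure counts weights only, at 4 bits each, and ignores KV cache, activations, and quantization scales. The radiator estimate is an added, heavily simplified assumption that cross-checks the radiator figure the text gives next (200+ square meters per rack). It applies the Stefan-Boltzmann law to a single radiating face at an assumed 300 K with an assumed emissivity of 0.9. It also ignores absorbed sunlight and Earth's infrared, both of which would push the required area up.

```python
# Rough sizing of a "rack-satellite", using the estimates quoted in the text.
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)


def volume_m3(dims):
    length, width, depth = dims
    return length * width * depth


satellite_dims = (7.4, 2.7, 0.3)  # V2 Mini Direct-to-Cell (estimated), meters
nvl72_dims = (2.2, 1.1, 0.6)      # NVL72 rack, meters
satellite_kw, nvl72_kw = 25, 135

# Weights-only memory for a 1-trillion-parameter model at FP4 (4 bits per weight).
fp4_weights_gb = 1e12 * 4 / 8 / 1e9


def radiator_area_m2(power_w, temp_k=300.0, emissivity=0.9):
    """Area needed to radiate power_w to deep space from one face.

    Assumptions (not from the text): a single radiating face at temp_k,
    the given emissivity, and no absorbed sunlight or Earth infrared.
    """
    return power_w / (emissivity * SIGMA * temp_k ** 4)


print(f"Satellite volume: {volume_m3(satellite_dims):.2f} m^3")
print(f"NVL72 volume:     {volume_m3(nvl72_dims):.2f} m^3")
print(f"Power gap:        {nvl72_kw / satellite_kw:.1f}x")
print(f"FP4 weights:      {fp4_weights_gb:.0f} GB")
for kw in (100, 135):
    print(f"Radiator for {kw} kW: ~{radiator_area_m2(kw * 1000):.0f} m^2")
```

Under these assumptions, rejecting about 100 kW takes roughly 240 square meters of radiator, which is in line with the text's 200+ square meter figure. Required area falls with the fourth power of radiator temperature, so running the radiator hotter is the main lever.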
The big shortcoming for a rack-satellite is power and its dissipation, but going from 25kW to 135kW is certainly within the realm of possibility -- and given that you don't need much of the cooling and power-distribution overhead required on earth, something closer to 100kW might deliver similar performance. There are other issues to address, including the problem of radiation screwing with calculations, reliability, etc., although those two concerns could be addressed in part by using larger chips (which are less efficient, but also use less power); these rack-satellites will also be disposable, like Starlink satellites, ameliorating reliability issues. The key factor, however, is that a fleet of racks, interconnected with lasers (as Starlink satellites already are), each with their own solar panels and radiator arrays for cooling (deploying 200+ square meters of radiators per rack will be a huge challenge), is possible.

The next question about data centers in space is if there is a use case for them -- the carrot -- and I already made the argument that there is in "The Inference Shift." Specifically, there are three types of workloads developing around LLMs: training, answer inference, and agentic inference. From the section making the case for "agentic inference":

> Critically, this articulation of an agentic-specific memory hierarchy implies a necessary trade-off of speed for capacity. Here's the thing, though: lower speed isn't nearly as important a consideration if there isn't a human in the loop. If an agent is waiting around for a job that is being run overnight, the agent doesn't know or care about the user experience impact; what is most important is being able to accomplish a task, and if entirely new approaches to memory make that possible, then delays are fine.
> If delays are fine, then all of the focus on pure compute power and high-bandwidth memory seems out of place: if latency isn't the top priority, then slower and cheaper memory -- like traditional DRAM, for example -- makes a lot more sense. And if the entire system is mostly waiting on memory, then chips don't need to be as fast as the cutting edge either.
>
> This represents a profound shift in future architectures, but it also doesn't mean that current architectures are going away:
>
> At the same time, these categories won't be equal in size or importance. Specifically, agentic inference will be the largest market by far, because that is the market that won't be limited by humans or time. Today's agents are fancy answer inference; in the future true agentic inference will be work done by computers according to dictates given by other computers, and the market size scales not with humans but with compute.

It's agentic inference that makes the most sense for racks in space, and conveniently enough, that is also the market that is likely to be the largest in the long run.

The third question about data centers in space is if there is a stick. Specifically, while I think that racks-in-space are both a lot more viable than people think, and a lot more relevant to agentic inference than current modes of compute, it is at the end of the day cheaper and easier to build on earth, all things being equal. All things are not equal, however: right now we are at the very beginning of the AI buildout and already one of the biggest constraints is not just power (expected), but zoning (unexpected). I wrote in an Update last week:

> That leads to an interesting contrast to globalization: when companies were closing down American factories and laying off workers and moving operations to China, none of the affected towns or workers had a say. They just suddenly no longer had a job, and a huge number of cities across the Rust Belt no longer had a reason to exist.
> People simply had to move, or worse, retreat to things like alcohol or drugs. AI, however, is the opposite: building data centers requires permission, which is to say that people actually have a say. Again, I am not at all saying that these people are well informed about data centers, or about the economic impact on their communities, much less the economic impact of AI generally; what I am noting is that people who didn't have a say in globalization are suddenly finding they do have a say about AI, and it's not a surprise they are expressing their disapproval by blocking data centers.

In that Update I made the case that data center builders -- and by extension the companies that use them -- should straight up pay people for permission to build data centers in their communities. At a minimum, however, that increases the costs of terrestrial data centers. What seems very plausible in the long run is that the demand for compute ends up being so large that there eventually is nowhere left to build, making the vast expanses of space not just an alternative but in fact the only choice.

If all of this happens -- and there are a lot of "if"s here! -- then suddenly that $2 trillion valuation starts looking reasonable. SpaceX is already monetizing xAI's first data center, Colossus 1, to the tune of $15 billion/year for 300MW of capacity; at 100kW per rack, that's 3,000 racks-in-space. Anthropic, meanwhile, will probably make 3x the revenue on that capacity; it remains to be seen if xAI can get back in the state-of-the-art game, but if so then the amount of revenue it can generate per rack-in-space will be commensurately higher. Even without xAI, however, SpaceX has the potential to be a monopoly provider of marginal compute capacity.
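The "3,000 racks-in-space" figure comes from dividing Colossus 1's 300MW by the roughly 100kW per rack-satellite suggested earlier. Below is a minimal Python sketch of that arithmetic and the per-rack revenue that follows from it. The 3x multiple is the article's own guess for Anthropic, not a reported number.

```python
# Revenue per hypothetical rack-satellite, from the Colossus 1 figures above.
capacity_mw = 300          # Colossus 1
rack_kw = 100              # assumed power per rack-satellite (from the text)
annual_revenue = 15e9      # USD per year SpaceX reportedly charges for the capacity
anthropic_multiple = 3     # the text's "probably 3x" estimate

racks = capacity_mw * 1_000 / rack_kw
revenue_per_rack = annual_revenue / racks
monthly_revenue = annual_revenue / 12

print(f"Rack-satellites:         {racks:,.0f}")
print(f"SpaceX revenue per rack: ${revenue_per_rack / 1e6:.1f}M per year")
print(f"Monthly revenue:         ${monthly_revenue / 1e9:.2f}B")
print(f"Anthropic revenue per rack at {anthropic_multiple}x: "
      f"${anthropic_multiple * revenue_per_rack / 1e6:.1f}M per year")
```

The monthly figure matches the $1.25 billion per month Anthropic reportedly pays for Colossus capacity, so the numbers reported elsewhere on this page line up.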
There are, needless to say, a massive number of assumptions baked into this argument, including assuming a huge number of engineering challenges are solved, Starship actually works, SpaceX gets sufficient supply of the right kinds of chips, compute demand is massively larger, agentic inference unbundles current architectures, and data center opponents are successful. The risk attached to all of these assumptions should discount the valuation you put on this business, which is to say I still think this IPO is nuts. At the same time, I'm glad it exists, for multiple reasons. The first one is the most obvious one: Musk, for all of his faults, has already pushed humanity forward on multiple vectors, including electric cars, self-driving, reusable rockets, satellite Internet, etc., and I'm excited to see him try and do more. The second is that I am in fact concerned about our ability to muster enough compute to fully realize the gains from AI, and am very worried about a replay of nuclear power, where our failure to build denied us the opportunity to even imagine what could be invented in a world of unlimited energy; the fact Musk is proposing an alternative path to unlimited compute is a relief. The third is that I appreciate the extent to which this IPO is a return to what an IPO should be: the opportunity for people to contribute capital to actually build the business, and to benefit if it works out. As I noted, I can't make a financial model that necessarily justifies this valuation, particularly based on current financials, but neither can a VC investing in the Series A of a company. SpaceX has already invented a lot, and its early investors are going to make a lot of money with this IPO; at the same time, there is still so much more to invent that there remains a lot of upside -- and, to be very clear, a lot of risk. It's a testament to SpaceX's ambitions that retail investors get to play VC.

Meet Sangeeta Bavi, the former Microsoft executive now leading Anthropic's India startup strategy

Anthropic's India division has hired new talent from Microsoft as the American AI firm looks to expand its footprint in India's highly competitive AI market. Sangeeta Bavi, who previously worked at Microsoft for almost a decade, is now heading Anthropic's sales division for startups and digital natives. Officially appointed as the Head of Sales for Digital Natives, Startups, and Mid-Market for India, Bavi will play a key role in Anthropic's efforts to accelerate startup growth in the country. In this capacity, she will lead Anthropic's efforts to support Indian startups and mid-market companies adopting Claude, the company's flagship AI model, for their business needs.

In a detailed post on LinkedIn, Bavi expressed her respect for startup founders, stating, "India is at an extraordinary inflection point. Our founders and growth-stage companies are not just adopting AI, they are building with it, scaling with it, and taking it to every corner of the economy." "Being part of that journey, with AI that is genuinely built to be safe and beneficial, is something I have been looking forward to for a long time," she added.

A quick look at Bavi's career journey

As Bavi begins her stint at Anthropic, one of the world's most valuable AI firms and one expected to file for an IPO in the US, she brings roughly 25 years of experience across global tech giants, with a strong track record of building high-impact teams and driving scale.

Bavi's Microsoft era (2014-2025)

Before Anthropic, Bavi had a decade-long tenure at Microsoft, which saw her rise through multiple leadership positions:

- As Executive Director, Digital Natives (Feb 2022 - Jan 2025), Bavi built the vertical from the ground up, managed P&L for India & South Asia, and oversaw programs for early-stage growth and unicorn ventures. She engaged with stakeholders across the startup ecosystem, enabling startups to scale locally and globally using Microsoft's technology and network.
She publicly stated that "India is becoming the world's startup capital" during a 2022 interview, highlighting the country's generational shift toward entrepreneurship.

Her earlier years at Microsoft were spent in various roles working with startups and partner businesses. Bavi joined Microsoft in October 2014 as a Product Marketing Manager, Consumer Apps (Oct 2014 - Jul 2015), where she handled Windows apps product marketing.

Stint at Nokia (2007-2014)

Sangeeta Bavi also spent nearly seven years at Nokia, prior to Microsoft, in various roles.

- She rose through the ranks to senior product management and R&D roles. Bavi had joined Nokia in December 2003 as a Senior Software Engineer.
- Her peak at Nokia was as the Head of Developer Outreach, where Bavi led developer outreach programs, fostering partnerships with the developer community and scaling the program to Tier 1 and Tier 2 towns across India.

A short stint at YourStory Media

After leaving Microsoft in January 2025, Bavi joined YourStory Media as Chief Operating Officer, overseeing all business operations and driving revenue growth. She reported to Shradha Sharma, Founder and CEO of YourStory. Her time at YourStory brought a different perspective to the Forbes India Leadership Awards 2025.

Sangeeta Bavi's education and accolades

Bavi's academic credentials reflect her strong technical and business foundation:

- She earned her B.Tech in Computer Science from the National Institute of Technology (NIT) Warangal, completing it in January 2000.
- Bavi then pursued her MBA at the Indian Institute of Management (IIM) Bangalore (PGSEM 2010 alumna, completed in January 2011).

Bavi's leadership has been recognised with prestigious awards, the most notable being the SheSparks Corporate award in 2023.
The SheSparks 2023 Awards honoured 24 women across politics, policymaking, business, entertainment, social impact, and sports, with Bavi receiving the Corporate category award alongside notable leaders like Vani Kola (Equity category). She also served as a distinguished jury member for the Forbes India Leadership Awards 2025 (FILA 2025), chaired by Harsh Mariwala, Founder and Chairman of Marico. Editorial Note: This is an independent profile. Sangeeta Bavi and their representatives were contacted but did not respond prior to the time of publication. In the absence of direct comment, this article was reported using publicly available records and regulatory filings, where applicable. This content is not sponsored and was produced in accordance with FinancialExpress.com's editorial guidelines.

India, tech firms examine cyber risks linked to Anthropic's next-generation Mythos AI

India is assessing the cybersecurity risks posed by Anthropic's advanced AI model Mythos, with government agencies and major technology firms examining vulnerabilities in crucial software systems used across banking and public infrastructure. According to a Bloomberg report, India has started testing some of its most sensitive public-facing financial and government software systems to understand how vulnerable they could be to Mythos, Anthropic's next-generation artificial intelligence model. The report stated that Indian IT companies Infosys and Tata Consultancy Services (TCS) are among the firms carrying out tests in secure environments. Infosys is reportedly working on identifying and patching vulnerabilities in its widely used Finacle banking software. India's cybersecurity agency CERT-In is testing key digital infrastructure, including Aadhaar-related systems and government login platforms, Bloomberg reported, citing officials familiar with the matter.
What is Mythos AI and why is it causing concern?
Anthropic recently revealed Mythos as its most powerful AI model to date. According to a report by The Indian Express, the company has deliberately restricted public access to the model because of concerns over its extraordinary capacity to autonomously identify serious software vulnerabilities. Anthropic has said Mythos can detect flaws across major operating systems, browsers and widely used software infrastructure. The company fears such capabilities could be misused for cyber attacks if released widely without safeguards. The Indian Express reported that Anthropic is in talks with several governments, including India's, on securing critical infrastructure such as telecom, banking, and energy systems against emerging AI-linked cybersecurity risks. "There is a belief within Anthropic that allied democracies need access to defence capabilities against powerful AI models," a source told The Indian Express.
The discussions between Anthropic and Indian authorities were reportedly initiated through India's Ministry of External Affairs.
Indian government steps up vigilance
Concerns related to Mythos have already reached the highest levels of the Indian government. According to The Indian Express, Union Finance Minister Nirmala Sitharaman recently chaired a high-level meeting to review potential risks to India's financial sector from advanced AI systems capable of weaponising software vulnerabilities. In a statement quoted by The Indian Express, the Finance Ministry said the emerging threat from AI models was "unprecedented" and required a "high degree of vigilance, preparedness, and better coordination" among financial institutions and banks. While the ministry did not explicitly name Mythos, the discussion centred on concerns about the model's capabilities.
Global debate over Mythos threat
Despite ongoing global concern, some cybersecurity experts believe fears around Mythos may be overstated. According to a report by Reuters, security researchers acknowledge that Mythos represents a major advancement in vulnerability detection, but many argue that AI-assisted cybersecurity testing has existed for years. "I think there's a really big communication gap between practitioners and policy makers," Isaac Evans, founder of software security firm Semgrep, told Reuters. Reuters reported that Mythos can identify software flaws faster and with simpler prompts than earlier AI systems, potentially lowering the barrier for cybercriminals. However, experts also noted that finding vulnerabilities is only the first step, while validating and exploiting them remains far more complex. Cisco executive Anthony Grieco told Reuters that Mythos can help defenders scan large amounts of code more quickly and reduce false positives, allowing cybersecurity teams to focus on major threats more efficiently.

SpaceX Lands $2.3B USSF Contract for Space Data Network Backbone Prototype

SpaceX must deliver a fully operational prototype capability by the end of 2027
The U.S. Space Force has awarded SpaceX a $2.29 billion other transaction authority agreement to accelerate the delivery of the Space Data Network, or SDN, Backbone. The Space Systems Command said Tuesday the firm-fixed-price delivery order supports development of a resilient, high-speed data transport layer intended to provide secure global military connectivity. Under the OTA agreement, SpaceX is expected to deliver a fully operational prototype capability by the end of 2027. The award builds on SpaceX's expanding portfolio of Space Force contracts supporting proliferated low Earth orbit communications, including the company's recent Link-182 award to develop resilient space-to-space communications technologies, as well as Space Development Agency satellite deployments tied to missile tracking and national security missions under the National Security Space Launch Phase 3 program. The SDN Backbone is a proliferated LEO satellite constellation designed to provide low-latency, high-capacity data transport for the Joint Force through an optically interconnected mesh network for worldwide tactical and broadband communications. The system will operate alongside the SDA's Transport Layer as part of a broader hybrid mesh architecture supporting current and future War Department missions. Col. Ryan Frazier, acting Space Force portfolio acquisition executive for space-based sensing and targeting, said the program leverages commercial technologies to establish a foundational communications layer that keeps Space Force sensors and shooters continuously connected through secure global networks.

Cerebras Surged 68% on Its First Day of Trading. Here's What That Tells Us About SpaceX's Upcoming Monster IPO

But these players are best suited to investors who don't mind risk.
Artificial intelligence (AI) stocks have driven the stock market higher in recent years -- and investors have piled into companies that have been on the market for decades, from Nvidia to Microsoft. More recently, investors have had the chance to buy into younger companies in the space through their initial public offerings, from CoreWeave last year to Cerebras Systems (NASDAQ: CBRS) earlier this month. And next up may be SpaceX. The company owned by Elon Musk recently released its prospectus, and news reports indicate a roadshow will begin the week of June 8, which suggests an IPO could happen as soon as next month. Investors clearly are eager to see how the SpaceX offering unfolds, particularly since it may be the biggest IPO ever, at a valuation of almost $2 trillion. For some clues, we can look to Cerebras, which surged 68% in its first day of trading. Here's what that tells us about SpaceX's upcoming monster IPO. So, first, a quick note about Cerebras. The company is a player in the AI chip space, rivaling leaders Nvidia, Advanced Micro Devices, and other tech giants. Cerebras has designed a chip that's 58 times bigger than an Nvidia chip, and the company says this large size allows for incredible memory bandwidth and speed. In fact, in certain situations, it's delivered much faster results than GPU-based systems. All of this has translated into explosive revenue growth for Cerebras and even a $20 billion, multi-year contract with AI lab OpenAI. Though Cerebras still operates at a loss, this is pretty standard for a young company in the space as it invests to build out its technology and gain customers.
Investors clearly liked the story as the stock opened at $350 on May 14 -- higher than the IPO price of $185 -- and went on to jump 68% in that first day of trading. Now, let's consider what that means for the SpaceX IPO. It's clear that investors are interested in new AI investing opportunities as well as opportunities to get involved in other exciting areas. SpaceX, with its businesses of rocket launches, AI, and satellite internet service, offers investors a cocktail of such technologies. In fact, in its prospectus, SpaceX says it's "identified the largest actionable total addressable market in human history," at $28.5 trillion. This includes $370 billion in space opportunities, $1.6 trillion in connectivity, and more than $26 trillion in AI. It's important to note, though, that profitability and the attainment of goals won't happen overnight -- and certain goals may never be reached. SpaceX reminds investors of this: "Many of the innovative products and services described elsewhere in this prospectus may ultimately be unsuccessful and may require great expense, innovations not yet achieved or technologies not yet developed." Meanwhile, SpaceX's revenue has climbed 79% since 2023 to reach $18 billion last year, but its merger with xAI earlier this year added significant weight to the financial picture: The AI business posted a loss from operations of more than $6 billion last year, and its capital expenditures of more than $12 billion surpassed those of the company's other businesses. So, the bottom line is that AI investments may stand in the way of profitability in the quarters to come. Though SpaceX is distinctly different from Cerebras, we can draw some parallels: Both are involved in the high-potential -- but high-investment -- business of AI, and neither is yet profitable on an operating basis in that business.
While they involve risk, they also may offer growth investors the opportunity to get in on a new and exciting AI and general technology story. Though these stocks may not be the best choices for every investor, aggressive investors may be intrigued. Risk-tolerant investors looking for a new AI or tech bet clearly turned to Cerebras during its IPO, driving a huge first-day gain. And we may see the same thing happen when SpaceX makes its record-breaking market debut.
Adria Cimino has no position in any of the stocks mentioned. The Motley Fool has positions in and recommends Advanced Micro Devices, Microsoft, and Nvidia. The Motley Fool has a disclosure policy.

Discord's Quiet Native ARM64 Release Transforms Experience on Snapdragon Windows Laptops

Discord has slipped a native ARM64 build of its desktop client onto its download page without fanfare. The move arrives at a moment when Windows on Arm devices from Qualcomm have gained real traction among professionals and gamers who value long battery life. And the difference feels immediate. Users on Snapdragon X Elite or X Plus machines no longer need to accept the translation tax imposed by Microsoft's Prism emulator. That layer worked. But it carried costs. Startup took longer. CPU usage climbed during voice calls or screen sharing. Battery drained faster than it should on hardware designed for efficiency. The new build sidesteps all of that. Neowin first noted the availability on May 25, 2026. The official Discord download page now presents a clear choice: x64 or ARM64 when selecting the Windows installer. No announcement accompanied the addition. No press release explained the timing. Yet the option sits there, ready for anyone to grab. This marks the end of a wait that stretched nearly two years. About a year earlier, Discord had confirmed development of the native client. The company followed through after watching the Snapdragon X series gain momentum and after Microsoft spent years coaxing developers to compile for ARM64. The result lands at a point when more hardware vendors prepare Snapdragon X2 machines and new Surface models. Performance gains appear across routine tasks. Scrolling through servers feels snappier. Voice channels launch without the slight hesitation that plagued the emulated version. Battery impact drops because the app no longer runs translated instructions. One early tester who used the x86 client on a Snapdragon X Elite device described constant sluggishness and lag before switching to an unofficial client out of frustration. The native option eliminates that pain. MakeUseOf highlighted the practical upgrades. The web version of Discord offered an alternative but sacrificed features. 
Global hotkeys for push-to-talk refused to work when the browser tab lost focus. Screen sharing behaved unpredictably. Notifications sometimes failed to appear. Game activity status simply did not register. The native ARM64 app restores every capability without compromise. But the story runs deeper than one application. Windows on Arm spent years fighting an uphill battle for app support. Early Surface Pro X devices suffered from spotty compatibility. Emulation improved with Prism, yet many background tools like Discord stayed stuck in x86 form. That created a persistent drag on what should have been class-leading efficiency. Discord's decision signals broader acceptance. Other holdouts now face pressure to follow. Claudia Fellerman, a Discord spokesperson, told The Verge in 2025 that the company had begun work on the ARM64 client. At the time the project remained in early stages. An experimental build already showed promise. One reviewer who installed a Canary preview reported behavior indistinguishable from the Intel version. "There is no lag navigating around and the performance is a lot better," the tester observed. The public stable release has now caught up to those early experiments. Installation remains straightforward. Visit discord.com/download, select Windows, then choose the ARM64 option. The Microsoft Store listing still lacks explicit ARM64 labeling, so the direct download serves as the reliable path. After installation, users may want to clear the existing cache to avoid any residual data from the previous version. The app itself looks and functions exactly as before. Only the underlying architecture has changed. Battery life stands out as the most tangible benefit on laptops. Voice calls that once pushed fans into audible spin now run cooler and quieter. Streaming or screen sharing sessions consume fewer resources. For professionals who keep Discord open all day in the background, the efficiency gain accumulates. Less heat. 
Longer unplugged sessions. Fewer complaints about sluggishness during meetings. The timing aligns with maturing Windows on Arm hardware. Qualcomm's Snapdragon X series delivered strong initial results. Successive generations promise even better performance. Microsoft has expanded native app support across its own products. Developers have responded. Discord joins a growing list that includes major productivity tools and creative applications. The platform no longer feels like an afterthought on Arm machines. Unofficial clients had filled the gap for impatient users. Some offered ARM64 builds ahead of the official release and gained popularity precisely because the emulated Discord felt inadequate. Their existence underscored the demand. Now the official client matches or exceeds those workarounds while delivering full feature parity, security updates, and Nitro benefits. Task Manager provides an easy way to verify the change. Switch to the Details tab and examine the Architecture column. Native ARM64 processes display clearly. Microsoft continues to refine this visibility, but the information already helps users confirm they run the optimized version. So what comes next? More apps will likely shed their emulation layer in coming months. The success of Copilot+ PCs and the steady improvement in Prism have created conditions where native development delivers clear returns. Discord's silent rollout sets a quiet precedent. No marketing blitz. Just a better product made available to users who need it. Early reactions on X reflect relief. One user noted the elimination of random lag, reduced CPU spikes, and noticeably better battery during long sessions. Another pointed out that the hardware was never the limitation. The software simply had not caught up. That gap has narrowed. Discord did not comment publicly on the release timing. The company has focused instead on features like improved audio, video, and server tools.
Yet this architectural update may matter more to a segment of its user base than any flashy new interface change. For owners of ARM-based Windows laptops, the chat app that once felt like a burden now fades into the background where it belongs. The shift also carries implications for developers watching the platform. When a service as widely used as Discord makes the investment in native ARM64 support, it validates the architecture for broader adoption. Gamers who rely on the Discord overlay during play on Snapdragon handhelds or laptops stand to benefit. Professionals who manage multiple voice channels during work calls gain smoother operation. The entire experience moves closer to what users on traditional x86 systems have taken for granted. Expect further refinement. Future updates will likely optimize additional subsystems for ARM64. Yet the foundation now exists. The app runs natively. Performance and efficiency improve as a direct result. And the long wait has ended.

xAI's Aggressive $3B Bid for Cursor Sparks Antitrust Scrutiny

Elon Musk's xAI has stirred fresh controversy after reports surfaced that the company approached Cursor's developers with an unusually aggressive acquisition offer. According to reporting from The Next Web, xAI extended a bid that valued the AI-powered code editor at roughly $3 billion, a figure that raised eyebrows across Silicon Valley. The proposal reportedly included demands for immediate access to Cursor's proprietary data and engineering talent before any formal agreement had been signed. Such tactics, often described as gun-jumping in merger circles, risk drawing regulatory attention at a time when Musk's expanding business empire already faces multiple antitrust examinations. Cursor, a startup that integrates large language models directly into a familiar code-editing interface, has grown rapidly since its launch. Developers praise its ability to generate, refactor, and debug code with minimal friction. The company's valuation climbed from an initial post-seed range near $400 million to the $3 billion mark that xAI apparently considered fair. Yet the manner in which xAI pursued the deal has unsettled observers. Sources familiar with the negotiations told The Next Web that xAI requested early data transfers and technical integration while the two sides were still exchanging term sheets. In antitrust parlance, this premature integration can create irreversible information flows that complicate later regulatory review. The incident arrives as Musk's companies attract heightened scrutiny from both American and European regulators. The Federal Trade Commission continues to examine whether Tesla's access to xAI's models creates unfair advantages in autonomous driving. Meanwhile, the European Commission has signaled interest in how SpaceX's Starlink contracts with governments might intersect with xAI's data-collection practices. A premature acquisition attempt involving Cursor could add another thread to an already complex web of inquiries. 
Antitrust lawyers note that gun-jumping violations, even when unintentional, carry civil penalties and can force parties to unwind completed integrations at considerable expense. xAI itself maintains that its interest in Cursor stems from a genuine desire to accelerate development of tools that help engineers build artificial intelligence systems faster. The company's stated mission centers on understanding the true nature of the universe, a goal that requires sophisticated software infrastructure. Musk has repeatedly argued that current coding environments lag behind the capabilities of modern models. By acquiring a leading AI coding assistant, xAI could theoretically close that gap and produce better training data for its own Grok models. Yet the speed and intensity of the approach have prompted accusations that xAI is less interested in organic growth than in removing competitive obstacles. Cursor's founders have not commented publicly on the overture. However, people close to the startup say the team felt pressure to respond quickly or risk losing key personnel to xAI's competing offers. High salaries and equity packages reportedly circulated among Cursor's engineering staff, a common tactic when larger players attempt to weaken smaller rivals without completing a full acquisition. This pattern echoes past disputes involving other Musk-led companies. When Twitter, now X, sought to hire engineers from rival social platforms, similar complaints about talent poaching surfaced. The potential deal also intersects with broader questions about Musk's plans for an initial public offering of SpaceX. Recent private share sales have valued the rocket company at more than $200 billion, and rumors of a public listing have circulated for months. Some analysts speculate that xAI's aggressive moves could serve a dual purpose: strengthening its own technology while creating synergies that make SpaceX more attractive to public investors. 
If xAI can demonstrate superior coding tools that improve Starlink's satellite software or Tesla's vehicle firmware, the combined value proposition grows. Yet such linkages also invite regulators to examine whether Musk's various enterprises function as a single economic unit rather than independent entities. Legal experts point out that gun-jumping cases often turn on specific evidence of information exchange. Did xAI receive Cursor's customer lists, pricing algorithms, or model weights before signing a definitive agreement? If so, antitrust enforcers could argue that the companies effectively merged operations ahead of approval. The Hart-Scott-Rodino Act in the United States requires parties to observe waiting periods precisely to prevent this kind of premature coordination. Regulators also retain authority to investigate anticompetitive behavior whether or not a deal triggers formal pre-merger notification requirements. Beyond the immediate legal risks, the episode highlights growing tension between rapid innovation and traditional competition policy. AI coding tools have compressed development cycles from months to days. Startups like Cursor, Replit, and others have captured significant mindshare among software engineers who now expect autocomplete suggestions to understand entire codebases rather than single lines. When a well-funded player such as xAI enters the arena with vast computational resources, smaller companies face an existential choice: sell early or compete against an opponent with seemingly unlimited capital. This dynamic repeats across multiple sectors where Musk operates. Similar concerns have been raised about Tesla's dominance in electric vehicles, SpaceX's position in commercial rocketry, and X's role in digital communication. Industry veterans recall that Microsoft faced comparable accusations when it integrated GitHub's Copilot into its developer tools.
Critics argued that access to billions of lines of public code gave Microsoft an unfair training advantage. A federal judge later dismissed most of the claims in a class-action lawsuit over Copilot. Yet the Cursor situation differs because xAI sits outside the traditional software establishment. As a relatively new entrant backed by Musk's personal fortune, xAI lacks the decades of regulatory goodwill that Microsoft has accumulated. Every move therefore draws sharper inspection. Observers also question whether xAI's $3 billion valuation offer for Cursor accurately reflected market conditions or represented an attempt to preempt rival bids. Anthropic, OpenAI, and Google have all explored investments in developer tooling. A bidding war could have driven Cursor's price even higher. By approaching the company early and insisting on immediate data access, xAI may have sought to lock in favorable terms before other suitors arrived. Such strategies sometimes succeed but frequently leave a trail of resentment among founders and employees who feel strong-armed. The controversy arrives at a delicate moment for Musk's public image. After acquiring Twitter and rebranding it as X, he faced accusations of undermining competition in digital advertising. Regulatory filings related to that transaction remain under review. Adding an aggressive pursuit of a promising AI startup to the list of concerns could complicate efforts to take SpaceX public. Investment banks preparing a potential IPO will need to address how regulators view the interconnectedness of Musk's companies. Any perception that xAI functions as an extension of SpaceX or Tesla rather than a standalone venture could affect valuation multiples. For developers who rely on Cursor daily, the situation carries practical implications. Many worry that an acquisition by xAI would change the product's direction.
Cursor currently supports models from multiple providers, including OpenAI's GPT series and Anthropic's Claude. Integration with xAI's Grok might reduce that flexibility and steer users toward a single ecosystem. Others fear that sensitive codebases uploaded to Cursor during the evaluation period could end up training xAI's models without clear consent. These concerns reflect broader unease about data ownership in the age of foundation models. xAI has responded to media inquiries by emphasizing its commitment to building tools that benefit all of humanity. The company points to Grok's availability on X as evidence of its open approach. Yet actions speak louder than statements. The reported pressure tactics during the Cursor negotiations suggest a more hard-edged strategy than the public rhetoric implies. Whether those tactics cross legal lines remains a question for antitrust authorities to examine. As the story develops, several outcomes appear possible. Regulators could open a formal investigation into the exchange of information between xAI and Cursor. The startup might reject the overture and seek funding from alternative sources to maintain independence. Or the two companies could reach an agreement that satisfies both shareholders and regulators. Each path carries consequences for the wider AI industry. The episode underscores a central tension in artificial intelligence development. On one side stands the need for massive computational resources and talent concentration to push model capabilities forward. On the other lies the principle that competitive markets produce better outcomes than consolidated power. Musk has long argued that humanity must accelerate toward artificial general intelligence to ensure its long-term survival. Critics counter that concentrating too much capability in too few hands creates unacceptable risks, regardless of stated intentions. Cursor represents one small but significant node in this larger debate.
Its technology directly affects how quickly new AI systems can be built. Whoever controls that workflow gains influence over the pace and direction of progress. When a company backed by one of the world's wealthiest individuals pursues that control with particular intensity, the technology community takes notice. The reports from The Next Web have crystallized those concerns into a concrete narrative that regulators, investors, and engineers will watch closely in coming months. The coming weeks may reveal whether xAI's approach was an isolated misstep or part of a consistent pattern. Antitrust officials possess broad discretion to request internal communications and interview participants. Their findings could shape not only the fate of this particular transaction but also the ground rules for future AI acquisitions. In an industry where talent and data move at extraordinary speed, traditional legal frameworks sometimes struggle to keep pace. Yet the core principle remains: competition drives innovation, and premature consolidation can stifle it. For now, Cursor continues operating independently while fielding interest from multiple parties. xAI presses forward with its ambitious roadmap, undeterred by the controversy. Musk's constellation of companies -- spanning electric cars, rockets, satellite internet, social media, and artificial intelligence -- grows more tightly interwoven with each passing quarter. How regulators choose to disentangle or accommodate those connections will influence the technological trajectory of the next decade. The Cursor episode offers an early test of their willingness to draw clear boundaries around one of technology's most ambitious entrepreneurs.

SpaceX Starship IPO timeline: V3 test ahead of roadshow

SpaceX just gave investors a vivid new image to study ahead of its public offering: a Starship launch. On May 22, 2026, the company flew Starship for the 12th time, sending the first Version 3 configuration up from Starbase, Texas, only two days after releasing its IPO prospectus. That timing makes the SpaceX Starship IPO story about more than finance. It ties the company's biggest fundraising pitch directly to its biggest long-term technology bet. That connection is hard to miss. A rocket test can look like engineering progress to space fans and a growth narrative to Wall Street at the same time. In this case, the flight did both, giving SpaceX a fresh proof point just as the countdown to its roadshow begins. And yet the message is not simple. The V3 flight ended with a splashdown in the Indian Ocean, while full rapid reusability for the new design remains unproven. So the pitch to investors is powerful, but incomplete. The 12th Starship launch arrived at a moment that appears carefully aligned with SpaceX's IPO timeline. That sequence matters because Starship is not just another project inside SpaceX. It sits at the center of the company's future growth narrative. By putting a V3 vehicle in the air days before investor meetings are set to begin, SpaceX can point to live hardware progress rather than a distant roadmap. In practice, that gives the company something concrete to discuss as it pushes the SpaceX Starship IPO toward the market. Investors are not only being asked to buy into a launch company with a huge valuation target. They are also being asked to believe that Starship V3 can eventually become a repeatable, faster-turnaround system that supports a much bigger business than today's launch cadence alone would suggest.
The schedule now gives investors a clear set of dates to watch, running from June 4 to a potential Nasdaq listing on June 12. That is a tight window, and it means the May 22 flight landed at a useful moment for investor relations. The company now heads toward the roadshow with a recent test behind it, not just a concept deck. Why this matters: public market buyers often want a simple answer to a hard question. In this case, the question is whether SpaceX deserves one of the biggest valuation ranges ever targeted in a public offering. A fresh Starship V3 flight does not settle that debate, but it gives bankers and executives a concrete event to discuss with institutions as they make the case. SpaceX is targeting an IPO valuation between $1.5 trillion and $1.75 trillion. That alone would put the offering in rare territory and instantly make the SpaceX Starship IPO one of the biggest market events of the year. But the governance structure may be nearly as important as the price tag. Elon Musk is expected to retain approximately 85% of voting power after the IPO. For public investors, that means economic exposure is not the same thing as real influence. Dual-class structures are not unusual in major listings, but 85% voting control would leave outside shareholders with very little say over the company's direction. That makes this less like a conventional public company and more like a tightly controlled business that offers outside investors access without much authority. Why this matters: when a company asks the market to support a valuation as high as $1.75 trillion, governance becomes part of the investment case. Some investors may accept limited influence if they believe SpaceX can keep executing at a pace few companies can match. Others may see Musk's voting power as a reason to take a more careful look at the terms. The most important technical question did not disappear because Flight 12 got off the ground. 
The V3 mission ended with a splashdown in the Indian Ocean, and full rapid reusability for the design remains unproven. That point sits at the center of the company's long-term story. A Starship system that can be turned around quickly and flown again with minimal delay would support a very different financial outlook than one that still needs major work between missions. The first V3 configuration flying at all is meaningful. However, the harder proof investors may want has not arrived yet. That distinction matters because the market is being asked to price future capability, not just current achievement. A splashdown in the Indian Ocean may show progress in testing, but it does not close the loop on the company's most important operating promise. The immediate effect of Flight 12 is not that it solved every open question around Starship. It is that SpaceX enters the June roadshow with momentum. In practical terms, the company can tell investors that its next-generation vehicle has already reached flight status in Version 3 form. Still, the core tension remains. The IPO target is massive, the Nasdaq listing window is near, and governance is expected to stay firmly in Musk's hands. At the same time, the technical foundation behind the biggest part of the growth story is still being tested in public. That is why the next stretch matters so much. Between June 4 and the potential June 12 listing, investors will be weighing two things at once: whether the latest Starship V3 flight strengthens belief in the future, and whether belief alone is enough to justify a valuation between $1.5 trillion and $1.75 trillion.

Musk to merge Tesla with SpaceX?

Not for the first time, there are rumours, wholly unconfirmed, that once the SpaceX IPO is floated and wrapped up, Elon Musk will merge SpaceX with Tesla. The SpaceX IPO is scheduled for June 12th. The latest report originated on CNBC and suggested:
· There is already massive operational overlap between the two companies.
· They are separate entities only on paper.
· The speculation, again, gave Tesla shares a lift.
CNBC added that a merger between the two would create a business with a value of more than $3 trillion. Recent analyst commentary, including from Wedbush's Dan Ives citing synergies in AI, robotics, and manufacturing, sees a high probability of a 2027 merger, though SpaceX IPO filings indicate no immediate plans. SpaceX (and by extension its xAI division) is a major customer of Tesla's energy business, using Megapacks for robotics power and data centre needs. This has been an ongoing commercial relationship, not a one-off, Business Insider stated. In early 2026, Tesla and SpaceX announced the 'Terafab' project: a sprawling chip complex in Austin, Texas, with two advanced factories. One focuses on chips for Tesla cars and Optimus robots; the other produces radiation-hardened processors for AI data centres in space (tied to SpaceX's orbital ambitions). Musk described this as a massive shared effort to scale computing capacity.

Cerebras Surged 68% on Its First Day of Trading. Here's What That Tells Us About SpaceX's Upcoming Monster IPO | The Motley Fool

Artificial intelligence (AI) stocks have driven the stock market higher in recent years -- and investors have piled into companies that have been on the market for decades, from Nvidia to Microsoft. More recently, though, investors have had the opportunity to buy into younger companies in the space through their initial public offerings, from CoreWeave last year to Cerebras Systems (CBRS) earlier this month. And next up may be SpaceX. The company owned by Elon Musk recently released its prospectus, and news reports indicate a roadshow will begin the week of June 8. This suggests an IPO could happen as soon as next month. Investors are clearly eager to see how the SpaceX offering unfolds, particularly considering the IPO may be the biggest ever at almost $2 trillion. For some clues, we can look to Cerebras, which surged 68% in its first day of trading. Here's what that tells us about SpaceX's upcoming monster IPO. So, first, a quick note about Cerebras. The company is a player in the AI chip space, rivaling leaders Nvidia, Advanced Micro Devices, and other tech giants. Cerebras has designed a chip that's 58 times bigger than an Nvidia chip, and the company says this large size allows for incredible memory bandwidth and speed. In fact, in certain situations, it's delivered much faster results than GPU-based systems. All of this has translated into explosive revenue growth for Cerebras and even a $20 billion, multi-year contract with AI lab OpenAI. Though Cerebras still operates at a loss, this is pretty standard for a young company in the space as it invests to build out its technology and gain customers. Investors clearly liked the story: the stock opened at $350 on May 14, well above the IPO price of $185, and went on to jump 68% in that first day of trading. Now, let's consider what that means for the SpaceX IPO. 
It's clear that investors are interested in new AI investing opportunities as well as in other exciting areas. SpaceX, with its businesses of rocket launches, AI, and satellite internet service, offers investors a cocktail of such technologies. In fact, in its prospectus, SpaceX says it's "identified the largest actionable total addressable market in human history," at $28.5 trillion. This includes $370 billion in space opportunities, $1.6 trillion in connectivity, and more than $26 trillion in AI. It's important to note, though, that profitability and the attainment of goals won't happen overnight -- and certain goals may not even be reached over time. SpaceX reminds investors of this: "Many of the innovative products and services described elsewhere in this prospectus may ultimately be unsuccessful and may require great expense, innovations not yet achieved or technologies not yet developed." Meanwhile, SpaceX's revenue has been climbing, rising 79% from 2023 to reach $18 billion last year, but its merger with xAI earlier this year added significant weight to the financial picture: The AI business delivered a loss from operations of more than $6 billion last year, and its capital expenditures of more than $12 billion surpassed those of the company's other businesses. So, the bottom line is that AI investments may stand in the way of profitability in the quarters to come. Though SpaceX is distinctly different from Cerebras, we can draw some parallels: Both are involved in the high-potential -- but high-investment -- business of AI and aren't yet profitable on an operational basis in this business. While they involve risk, they also may offer growth investors the opportunity to get in on a new and exciting AI and general technology story. These stocks may not be the best choices for every investor, but aggressive investors may be intrigued. 
Investors who don't mind some risk and are looking for a new AI or tech bet clearly turned to Cerebras during its IPO, driving a huge initial gain. We may see the same thing happen as SpaceX makes its record-breaking market debut.

Spain Blocks Kalshi and Polymarket, Citing Lack of Gambling Licences

Spain has initiated proceedings against the two US-based prediction market platforms Polymarket and Kalshi and has provisionally blocked their websites. The authorities accuse the companies of operating in the Spanish market without the required gambling licence. Similar measures have since been taken in other EU countries as well as in several US states. The Spanish Ministry of Social Rights, Consumer Affairs and the 2030 Agenda has opened official sanctions proceedings against Polymarket and Kalshi through the Dirección General de Ordenación del Juego (DGOJ). Both platforms are alleged to be operating in Spain without the mandatory regulatory authorisation, which constitutes a violation of Spanish gambling law. As an immediate measure, the Ministry has issued an order to block the websites of both platforms. This block serves as a provisional precautionary measure and remains in effect until a final decision is reached in the sanctions proceedings. The proceedings themselves are expected to last three to four months. Notice of the initiation of proceedings was served via the Official State Gazette (BOE), as direct attempts to serve the foreign-based operators had been unsuccessful. The DGOJ emphasises that unlicensed operators fail to meet essential protection standards, including identity verification, access controls for minors, and mechanisms to protect individuals who have self-excluded from gambling. On prediction markets, users buy and sell shares tied to the outcome of future events. The prices of these shares reflect the collectively estimated probability of a particular outcome. Trading takes place on events in the areas of politics, economics, sports, or weather. The platforms do not see themselves as traditional betting providers, but rather as exchanges where buyers and sellers face each other, similar to stock or derivatives markets. 
However, regulatory authorities in numerous countries do not share this view and classify the business model as gambling. Spain is not the only European country taking action against Polymarket and Kalshi; several other EU member states have taken measures in recent months. In the United States, regulatory measures against the industry are also mounting. The Nevada Gaming Control Board has filed a civil enforcement action and applied for a preliminary injunction to prevent Polymarket from offering unlicensed bets. As early as the beginning of January, the sports betting regulator of the state of Tennessee ordered Polymarket, Kalshi, and Crypto.com to shut down their sports prediction markets and refund bets placed. In addition, Kalshi faces a class action lawsuit in the Southern District of New York, in which the company is accused of operating an illegal and unlicensed betting operation. Polymarket and Kalshi have grown significantly in importance over the past two years, particularly around the 2024 US presidential election. According to a report by Dune and Keyrock from November 2025, the two platforms together record monthly trading volumes of over 13.5 billion US dollars and more than 43 million transactions per month. This growth, however, is increasingly attracting the attention of authorities. In addition to the fundamental question of licensing requirements, the issue of insider trading is also coming into focus. At the beginning of January, a Polymarket user earned over 436,000 US dollars after correctly betting on Nicolás Maduro's loss of power shortly before a US intervention in Venezuela. The incident prompted Democratic Congressman Ritchie Torres to draft a bill that would prohibit federal employees with relevant insider knowledge from using such platforms. While the platforms present themselves as exchanges, regulatory authorities in Europe and the United States take a fundamentally different view and are increasingly tightening their stance towards the industry. 
Whether prediction markets will establish themselves in the long term as an independent asset class or fall under gambling law is likely to be decided in numerous proceedings in the coming months.
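The idea that prediction-market share prices stand in for a collectively estimated probability can be illustrated with a short calculation. This is a minimal sketch under stated assumptions, not how Polymarket or Kalshi actually price or settle contracts: it assumes a hypothetical yes/no contract that pays $1 per winning share, ignores fees, and treats any amount by which the "yes" and "no" prices sum to more than $1 as a spread to be normalised away. The class `BinaryContract` and its fields are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class BinaryContract:
    """Hypothetical yes/no contract paying `payout` dollars per winning share.

    Assumption: fees are ignored and settlement is exactly `payout`.
    """
    yes_price: float      # last traded price of a "yes" share, in dollars
    no_price: float       # last traded price of a "no" share, in dollars
    payout: float = 1.0   # assumed settlement value of a winning share

    def implied_probability(self) -> float:
        """Market-implied probability of "yes", normalising away any spread."""
        total = self.yes_price + self.no_price
        if self.yes_price < 0 or self.no_price < 0 or total <= 0:
            raise ValueError("prices must be non-negative and not both zero")
        return self.yes_price / total

    def expected_profit(self, my_probability: float) -> float:
        """Expected profit per "yes" share for a trader who believes my_probability."""
        if not 0.0 <= my_probability <= 1.0:
            raise ValueError("probability must be between 0 and 1")
        return my_probability * self.payout - self.yes_price


contract = BinaryContract(yes_price=0.62, no_price=0.40)
print(round(contract.implied_probability(), 3))  # 0.608
print(round(contract.expected_profit(0.70), 2))  # 0.08
```

In this sketch, a trader who thinks the true probability is 70% expects roughly 8 cents of profit per $0.62 "yes" share before fees; in principle, that incentive to buy underpriced outcomes and sell overpriced ones is what pushes prices towards the traders' collective estimate.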

USSF Gives SpaceX $2.29B for New Data Network 'Backbone'