News & Updates

The latest news and updates from companies in the WLTH portfolio.

Unconventional magnon-mediated spin torque enabled by ferroelectric domain engineering in multiferroic BiFeO3 - Nature Communications

Spin current provides an energy-efficient approach for manipulating magnetization when its spin polarization aligns with the magnetization direction. However, conventional spin-source materials possess high crystalline symmetry, restricting spin polarization to be orthogonal to both spin and charge current directions. Here, we overcome this limitation by utilizing the concept of magnon-mediated spin-orbit torque through integration of the insulating multiferroic BiFeO3 with a conventional spin-source material. We observe that spin polarization generated by the conventional spin-source material can excite unconventional magnon polarization due to the interplay between cycloidal antiferromagnetic order and the ferroelectric domain structure in BiFeO3. This produces an unconventional magnon torque that allows deterministic, field-free switching of in-plane magnetization collinear with the current direction, unattainable with conventional spin-source materials. Our results establish multiferroic-based heterostructures as a symmetry-engineered magnon spin source, paving the way for low-power spintronic devices.

Inside Hurricane Andrew: Former Public Health Leader Reflects on Chaos, Mental Strain, and Lessons Learned - HSToday

A new podcast episode is shedding light on the often-overlooked human and operational challenges behind major disaster response efforts, starting with one of the most devastating storms in U.S. history. In the debut episode of Disaster & After, host Seth Hassett speaks with Dr. Brian Flynn, a retired Rear Admiral in the U.S. Public Health Service who played a key role in shaping disaster behavioral health response nationwide. Flynn's career spans some of the country's most significant crises, including Hurricane Andrew, the Oklahoma City bombing, and the Columbine school shooting. In each case, he helped lead or support efforts focused on the mental health needs of survivors, responders, and affected communities. The first episode centers on Hurricane Andrew, which struck Florida in 1992 and is widely regarded as a turning point in modern disaster response. Flynn describes arriving on scene to an environment marked by confusion, lack of coordination, and strained systems -- conditions that tested both leadership and resilience. Rather than focusing on formal after-action findings, the conversation offers a firsthand account of operating in a response environment where established systems were overwhelmed. Flynn recounts the challenges of working through uncertainty, navigating competing priorities, and managing the personal toll that comes with prolonged exposure to crisis conditions. The discussion also highlights broader lessons that continue to shape disaster response today. Among them: how leaders operate when clear direction is absent, the cumulative stress placed on responders, and the importance of self-awareness and self-care in high-pressure environments. Flynn's experience contributed to the development of disaster behavioral health as a recognized component of emergency management, emphasizing that response efforts must address not only physical impacts but also the psychological effects on both victims and responders. 
Future episodes in the series will explore Flynn's work following the Oklahoma City bombing and his involvement in federal response efforts after the Columbine school shooting. The episode provides a closer look at how disaster response systems function under extreme stress -- and what lessons continue to inform preparedness and recovery efforts today.

Anthropic's Glasswing Highlights AI's Security Paradox

A new initiative arrives amid growing concerns about AI's ability to identify vulnerabilities, pointing to a broader shift toward AI-driven security. Anthropic this week launched Project Glasswing, a project focused on using AI to identify and mitigate software vulnerabilities. The launch comes just two weeks after the generative AI vendor's unreleased Claude Mythos model raised concerns that advanced AI models might be approaching, or in some cases exceeding, human capability in discovering and exploiting security flaws. Project Glasswing brings together more than 40 organizations, including Apple, Google, Amazon and Nvidia, giving them early access to the Claude Mythos model to identify and address software vulnerabilities. The goal is to enable those responsible for critical systems to detect, test and mitigate potential weaknesses earlier, before they can be exploited at scale. It's been a notably active stretch for Anthropic as it races against OpenAI toward an IPO. The vendor this week introduced a tool aimed at accelerating enterprise AI agent development, while separately moving to secure one of the largest infrastructure expansions in the AI market with a multi-gigawatt compute deal with Google and Broadcom. Together, the moves highlight how quickly Anthropic is scaling across the stack, from enterprise applications to the infrastructure required to support increasingly capable models. Anthropic is also in a legal fight against the U.S. government over its designation as a supply-chain risk after refusing to allow its technology to be used in a narrow set of use cases, including mass domestic surveillance, stating that they're "outside the bounds of what today's technology can safely and reliably do." This week, a federal appeals court judge denied Anthropic's request to suspend the government's labeling of it as a supply-chain risk. 
In a separate case, a judge granted the company a preliminary injunction blocking the government from enforcing a ban on the use of the Claude model. Against that backdrop, the timing of Project Glasswing becomes more telling. While it is positioned as a defensive cybersecurity initiative, its significance lies less in the technology itself than in what it represents. The same class of models that raised concerns about their growing ability to identify and even exploit vulnerabilities is now positioned as a tool to help defend against them. That tension points to a broader shift already taking shape across the industry: AI is becoming both the source of emerging security risk and a key part of the response. This marks a move away from theoretical discussions about AI risk toward early-stage efforts to operationalize it. Rather than asking whether AI can introduce new vulnerabilities, companies are beginning to build around that reality, using AI to detect, prioritize and potentially remediate issues at a scale that would be difficult to match manually. The result is the early formation of an "AI versus AI" security dynamic. As models become more capable, enterprises might increasingly rely on AI systems not just for productivity but also to keep pace with the risks created by AI itself. Glasswing doesn't resolve that tension, but it signals where the market is heading next.

Also in AI This Week
This week's stories point in the same direction. Companies are moving quickly on AI, but many of the fundamentals are still catching up. Enterprises are deploying AI tools without clear strategies, employees are still trying to understand what AI means for their roles, and some of the bigger governance questions remain unresolved. At the same time, the infrastructure needed to support that momentum is starting to feel stretched.

US Treasury sounds alarm on Anthropic AI as experts warn Mythos could accelerate cyber threats

US officials and cyber leaders warn Anthropic's Mythos shows AI could rapidly expose software flaws, outpacing patching and traditional defenses. Earlier this week, the Financial Times reported that senior US officials had raised concerns about the cybersecurity implications of a newly released artificial intelligence model from Anthropic, including its ability to identify software vulnerabilities at a scale that could outpace traditional defensive approaches. The report said US Treasury Secretary Scott Bessent convened a meeting with chief executives from several major US banks - including Bank of America, Citigroup, Goldman Sachs, Morgan Stanley and Wells Fargo - alongside Federal Reserve Chair Jerome Powell, to discuss emerging AI-driven cyber risks. JPMorgan Chase was invited but unable to attend, the report added.

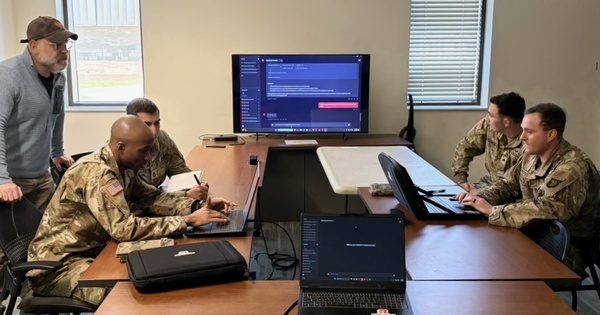

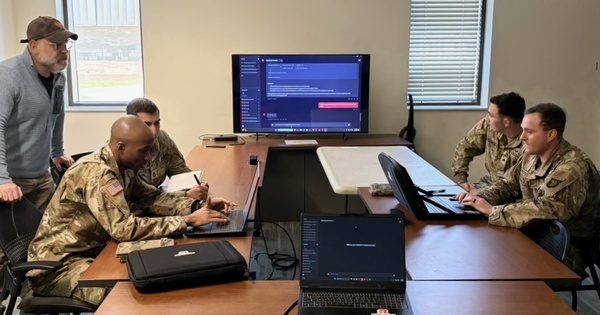

US military turns to smaller AI firms after Anthropic row

WASHINGTON, D.C.: A rift between the Pentagon and its former top artificial intelligence provider, Anthropic, is rapidly reshaping the U.S. military's AI ecosystem, with smaller startups seeing a surge in demand, funding interest, and contract momentum. In recent weeks, defense-focused AI firms say senior military officials and investors have approached them at a pace rarely seen before, as the U.S. Department of Defense moves to reduce reliance on a single vendor. The shift follows the Pentagon's decision in March to classify Anthropic's products as a "supply-chain risk," triggering a breakdown in relations and a broader push to diversify AI suppliers. A judge later temporarily blocked the Pentagon's blacklisting of the company. Startups, including Smack Technologies and EdgeRunner AI, say they have seen a marked change in engagement from the military and investors. "We've seen a massive increase in demand from customers and the government to get AI solutions fielded since Anthropic was declared a supply-chain risk," said Tyler Sweatt, CEO of Second Front, which helps firms operate on secure Pentagon networks. "Our customers are turning to us as the Pentagon turns to them to deploy quickly in the wake of the Anthropic blowup." For smaller firms, access to Pentagon contracts is a major milestone, often unlocking further government work and signaling credibility to commercial clients. Andrew Markoff, co-founder and CEO of Smack Technologies, said his company had been invited to multiple meetings with military officials following the Anthropic fallout, with a focus on accelerating deployment timelines. "We want more, we want demos, let's talk about how we can move faster," he said, describing the Pentagon's outreach. Tyler Saltsman, CEO of EdgeRunner AI, reported a similar experience. His company had been waiting over a year for a Space Force contract to be approved, but it was finalized within weeks of the Anthropic dispute becoming public.
"I can't prove that the Anthropic drama sped this up," Saltsman said, "but I have a sneaky suspicion it did." A Pentagon official said the department would continue to deploy advanced AI capabilities through partnerships with multiple industry players. The urgency reflects a broader reassessment within the Defense Department. A Pentagon technologist previously told Reuters that the fallout with Anthropic highlighted the risks of overdependence on a single AI provider and underscored the need for a more diversified supplier base. The impact is already visible in specific programs. Smack Technologies secured a Marine Corps contract in March 2025 and delivered a prototype by October that compresses operational planning from months to about 15 minutes. Despite initial delays, progress has accelerated sharply since the Anthropic controversy. Markoff said there was "very specific guidance and movement and energy" to push the system into production for combat use in 2026, more than a year ahead of earlier expectations. The company has also seen increased interest from other branches, including the Navy, Air Force, and U.S. Special Operations Command. EdgeRunner AI said engagement from the Navy has intensified, with meetings now taking place several times a week instead of monthly. Both companies are now working to meet higher security classification standards required for sensitive military applications. EdgeRunner said it had been told it could achieve IL-6 clearance, allowing access to secret and top-secret data, within three months, a process that typically takes 18 months or longer. Saltsman said the acceleration reflects both pressure from Pentagon leadership to streamline procurement and the urgency created by the Anthropic situation.

Google Pixel is growing in 2026 as almost everyone else struggles in the chaos

2026 is going to be a bad year for smartphones, but Google Pixel continues to look like a bright spot, with a new report revealing that Pixel is already seeing growth this year as the rest of the industry struggles. Counterpoint Research reports that the global smartphone market in Q1 2026 dropped by 6% year-over-year. Apple, leading the market right now at 21% share, saw 5% growth thanks to iPhone 17 demand. Every other major brand dropped. Samsung didn't lose any share of the market, but saw shipments fall by 6% YoY. That's despite strong demand for the Galaxy S26 series. Xiaomi saw a bigger drop of 19%, while Oppo and Vivo dropped by 4% and 2%, respectively. The report highlights two bright spots in Android's "other" market share, though. One of those is Nothing, which saw 25% YoY growth in Q1 2026, which Counterpoint attributes to "its distinctive design, niche positioning and growing consumer awareness." Google Pixel also grew, seeing 14% YoY growth in Q1 2026. Counterpoint says that Google is "strengthening its presence across key mature markets" while pointing to "edge AI capabilities, computational photography, and clean, user-friendly software" as key reasons why Pixel is growing. Pixel 10a's launch in Q1 also likely helped bolster these numbers. What lies ahead for Pixel this year remains to be seen, but this is a pretty good start.

From Star Power To Street Chaos: Vijay's Campaign Rallies See Huge Crowds And Controversy

Campaign rallies led by Vijay, the chief of Tamilaga Vettri Kazhagam (TVK), are drawing huge attention ahead of the 2026 Assembly Elections. While these events are attracting massive crowds, they are also becoming known for repeated chaos and security concerns. From emotional fan moments to controversial visuals, Vijay's rallies are turning into high-energy political spectacles, but not without challenges.

Viral Video Sparks Fresh Controversy
A recent rally in Karaikudi, located in Tamil Nadu's Sivaganga district, triggered widespread debate after a video went viral online. The clip showed private security personnel, reportedly part of Vijay's convoy team, using force to control the crowd. In the video, bouncers were seen kicking the hands of supporters who tried to climb onto or hold the moving campaign vehicle. The visuals quickly spread across social media, raising questions about crowd management and the safety of both supporters and the candidate.

The "Reel Craze" Driving Risky Behaviour
One of the main reasons behind the chaotic scenes is what many are calling the "reel craze." A large number of young fans attend Vijay's rallies not just to listen to him, but to capture close-up videos and selfies for social media platforms like Instagram. In their excitement, many take dangerous risks, such as running alongside moving vehicles or trying to climb onto them. This growing trend is making it difficult for organisers and security teams to maintain order during rallies.

Massive Crowds Lead to Traffic and Legal Issues
Wherever Vijay goes, from Madurai to Chennai, huge crowds gather to catch a glimpse of the actor-turned-politician. While this shows his popularity, it also creates major problems. Roads often get blocked, traffic comes to a standstill, and emergency movement gets affected. Authorities have also registered multiple cases against party organisers for violating crowd limits and causing public inconvenience.

Concerns Over Aggressive Security Tactics
This is not the first time security personnel at Vijay's rallies have faced criticism. Similar incidents have been reported earlier in cities like Madurai and Chennai. In some cases, bouncers were accused of pushing, manhandling, and even using force against fans, including elderly individuals. While security teams argue that strict action is needed to control the crowd, critics say such behaviour is excessive and unsafe.

A Balancing Act Between Popularity and Safety
Vijay's rallies clearly reflect his growing influence in Tamil Nadu politics. However, the repeated chaos also highlights the need for better planning and safer crowd management. As the 2026 elections approach, both the party and authorities may need to find a balance, ensuring that supporters can participate enthusiastically while also maintaining safety and order.

SpaceX Ticker Speculation Is Heating Up as Tuttle Drops 'SPCX'

A stock ticker that befits SpaceX ahead of its blockbuster IPO just became available. Matt Tuttle of Tuttle Capital Management has changed the ticker on his Nasdaq-listed SPAC fund from SPCX to SPCK, freeing up a ticker that SpaceX could claim for an upcoming public listing expected to value Elon Musk's rocket company at more than $2 trillion. SpaceX hasn't publicly filed paperwork specifying a ticker. But SPCX looks like a clear candidate, and as of this week, it's free. There's precedent. Mark Zuckerberg acquired the META ticker from Roundhill Investments in 2022 when Facebook rebranded, taking over a symbol that had belonged to a small metaverse ETF. The episode helped establish an informal secondary market for stock tickers, where ETF issuers sometimes squat on symbols tied to high-profile private companies in hopes of cashing out when they go public. "Tuttle knows what he is doing. He saw what happened with Roundhill and had the wherewithal to keep his languishing SPAC ETF alive in the off chance Elon called," said Eric Balchunas, senior ETF analyst at Bloomberg Intelligence. The fund has just $7 million in assets and was launched in 2020. "Sometimes it's better to be lucky than good." SpaceX did not respond to a request for comment. Research suggests that memorable, intuitive tickers enjoy advantages like lower spreads and greater liquidity -- and are more popular with retail investors. Tickers that are easy to process can increase attention and buying pressure, leading to short-term price increases. The company could ultimately choose a different symbol -- X, SPAX or MARS among the alternatives drawing bets on prediction markets, though the latter is an existing ETF run by Roundhill. But with SPCX now available, the speculation has a fresh data point. Tuttle, who is CEO of Tuttle Capital, declined to comment, citing business confidentiality.
Over on Polymarket, users are already betting on what SpaceX's future ticker will be, with about $4.8 million in contracts traded. None of the specific options Polymarket listed have proved popular, with the contract for "Other" -- an outcome that would include SPCX -- at 58% odds on Friday.

Anthropic's making AI boom again -- and picking the winners

More compute = more revenues, and going custom has its own particular consequences for different AI stocks. Anthropic is boosting the AI hardware trade today, and it's not just because of its AI compute deal with CoreWeave. This announcement came after Bloomberg reported that OpenAI pitched investors on its competitive advantage over the Claude developer due to having secured more computing power. If you buy into the idea that "compute equals revenues," as Nvidia CEO Jensen Huang has argued, that gap matters. And it means Anthropic has some work to do to catch up. Hence today's pact with CoreWeave, which is further entrenching demand for compute and the AI accelerators, networking equipment, memory, and power needed to provide it. That's one reason sorted, and helps explain why the likes of Applied Optoelectronics, POET Technologies, IREN, Coherent, Nebius, Oklo, Applied Digital, Cipher Digital, Super Micro Computer, and more are ripping today. Another reason may be tied to how Anthropic could go about sourcing compute, with Reuters reporting that the firm is "exploring the possibility of designing its own chips." The ripple effects from "going custom" seem to be leaving their mark within tech stocks on Friday. Astera Labs is the belle of the ball, up more than 14%. The silicon connectivity company's offerings enable chips to communicate with each other within racks (scaling up) as well as scale-out solutions. Shares are still down on the year, however, with traders more attracted to pure-play photonics opportunities. Astera has a tight relationship and partnership with Amazon; Anthropic's latest models are trained on Trainium chips, according to AWS's CEO, and Astera's offerings are used to scale those up in data center environments. Custom chip specialist Marvell Technology is also a Trainium designer and outperforming peers on Friday. 
And, of course, Anthropic announced an expansion of its partnership with Google and Broadcom earlier this week, which will see the firm access 3.5 gigawatts of TPU-based AI compute capacity beginning in 2027.

Anthropic is weighing building its own artificial intelligence chips, sources say - Taipei Times

Artificial intelligence (AI) lab Anthropic is exploring the possibility of designing its own chips, three sources said, as the company and its rivals respond to a shortage of AI chips needed to power and develop more advanced AI systems. The plans are in early stages and the company might still decide to only buy AI chips and not design any, said two people with knowledge of the matter and one person briefed on Anthropic's plans. The company has yet to commit to a specific design or put together a dedicated team to work on the project, one of the sources said. Demand for its AI model Claude has accelerated this year, with the start-up's run-rate revenue now surpassing US$30 billion, up from about US$9 billion at the end of last year, Anthropic said. Anthropic uses a range of chips, including tensor processing units (TPUs) designed by Alphabet's Google and Amazon's chips to develop and run its AI software and chatbot Claude. Earlier this week, Anthropic signed a long-term deal with Google and Broadcom, which helps design the TPUs. That deal builds on the company's commitment to invest US$50 billion in bolstering US computing infrastructure. Anthropic's discussions mirror similar efforts underway at large tech companies that are seeking to design their own AI chips, including Meta and OpenAI. Designing an advanced AI chip could cost roughly half a billion dollars, as companies need to employ skilled engineers and spend to make sure the manufacturing process has no defects, industry sources said. Separately, US Secretary of the Treasury Scott Bessent and Federal Reserve Chair Jerome Powell convened an urgent meeting with bank CEOs this week to warn of cyber risks posed by Anthropic's latest AI model, two sources familiar with the matter said. Anthropic launched the powerful Mythos model earlier this week, but stopped short of a broad release, citing concerns it could expose previously unknown cybersecurity vulnerabilities. 
The company has said the model is capable of identifying and exploiting weaknesses across "every major operating system and every major web browser." Anthropic said it was in ongoing discussions with US government officials about the model's "offensive and defensive cyber capabilities." A third source close to the matter reiterated Anthropic's outreach, saying the company briefed senior US government officials and key industry stakeholders on Mythos' capabilities ahead of its release. The US Treasury-hosted meeting in Washington on Tuesday was aimed at ensuring banks are aware of the risks posed by Mythos and similar models, and are taking steps to defend their systems, one of the sources said. Access to Mythos would be limited to about 40 technology companies, including Microsoft and Google, the start-up has said.

Anthropic weighs building its own AI chips

SAN FRANCISCO -- Artificial intelligence lab Anthropic is exploring the possibility of designing its own chips, three sources said, as the company and its rivals respond to a shortage of AI chips needed to power and develop more advanced AI systems. The plans are in early stages and the company may still decide to only buy AI chips and not design any, according to two people with knowledge of the matter and one person briefed on Anthropic's plans. The company has yet to commit to a specific design or put together a dedicated team to work on the project, one of the sources said. Demand for its AI model Claude has accelerated in 2026, with the startup's run-rate revenue now surpassing $30 billion, up from about $9 billion at the end of 2025, Anthropic said earlier this week. Anthropic uses a range of chips, including tensor processing units (TPUs) designed by Alphabet's Google and Amazon's chips to develop and run its AI software and chatbot Claude. Earlier this week, Anthropic signed a long-term deal with Google and Broadcom, which helps design the TPUs. That deal builds on the company's commitment to invest $50 billion in strengthening US computing infrastructure. Anthropic's discussions mirror similar efforts underway at large tech companies that are seeking to design their own AI chips, including Meta and OpenAI. Designing an advanced AI chip can cost roughly half a billion dollars, according to industry sources, as companies need to employ skilled engineers and spend to make sure the manufacturing process has no defects.

CoreWeave signs multi-year deal with Anthropic for Claude AI

According to Bloomberg, the infrastructure will include a variety of Nvidia $NVDA chip architectures at U.S. data centers. The deal arrives as Anthropic has been expanding its computing infrastructure on multiple fronts. The company signed an agreement with Google $GOOGL and Broadcom $AVGO for approximately 3.5 gigawatts of computing capacity built on Google's tensor processing units, with that capacity expected to come online starting in 2027. Separately, Anthropic reported this week that its annualized revenue has surpassed the $30 billion mark, a sharp jump from the roughly $9 billion pace it recorded at the close of 2025.

Anthropic will use CoreWeave's AI capacity to power Claude

Anthropic PBC agreed to tap data center capacity from CoreWeave Inc. as part of efforts to handle increasing demand for its artificial intelligence services. The multiyear deal will help Anthropic build and deploy its Claude AI models, CoreWeave said Friday in a statement. The capacity will include a variety of Nvidia Corp. chip architectures at data centers in the US, CoreWeave Chief Executive Officer Michael Intrator said in an interview. The companies declined to disclose financial terms of the agreement. CoreWeave shares jumped as much as 15% to $105.90 in New York trading, the biggest intraday gain in more than two months. The stock had closed at $92 in New York on Thursday. Anthropic, along with OpenAI, has been at the forefront of the explosion in AI services, and at times has struggled to keep its products online in the face of what it called "unprecedented demand." The company is working to build more computing capacity, including committing $50 billion toward new AI data centers in the US. San Francisco-based Anthropic is among the most valuable closely held companies, at $380 billion, including $30 billion it raised recently. Earlier this week, Anthropic announced a partnership with Broadcom Inc. and Alphabet Inc.'s Google to gain 3.5 gigawatts of energy. A gigawatt is enough electricity for about 750,000 US households at any one time. CoreWeave is part of a group dubbed "neoclouds," which specialize in offering high-performance cloud computing for AI workloads. Customers such as Microsoft Corp. have turned to neoclouds to quickly boost their ability to build and offer AI products. CoreWeave has 43 active data centers and has contracted out over 3 gigawatts of power for server farms, the company said in February. On Thursday, CoreWeave announced a $21 billion commitment from Meta Platforms Inc. to purchase its computing power. 
With the agreement, CoreWeave now counts the four largest AI model makers -- Anthropic, OpenAI, Google and Meta -- as customers, Intrator said. With assistance from Dina Bass and Subrat Patnaik.
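The figures quoted in this report can be sanity-checked with a few lines of arithmetic. The sketch below is only a rough illustration: it uses the article's own numbers (750,000 households per gigawatt, 3.5 gigawatts, a $92 close and a $105.90 intraday high), the function and constant names are made up for this example, and the $92 close is presumably rounded, so the computed gain is approximate.

```python
# Back-of-the-envelope checks on figures quoted in the article.
# All inputs are the article's own numbers; the names are illustrative only.

HOUSEHOLDS_PER_GW = 750_000   # article: US households served per gigawatt
GOOGLE_BROADCOM_GW = 3.5      # article: capacity in the Google/Broadcom deal
PREV_CLOSE = 92.00            # article: CoreWeave close on Thursday (likely rounded)
INTRADAY_HIGH = 105.90        # article: CoreWeave intraday high on Friday


def household_equivalent(gigawatts: float) -> int:
    """Households that the given capacity could supply at any one time."""
    return round(gigawatts * HOUSEHOLDS_PER_GW)


def percent_gain(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100


if __name__ == "__main__":
    print(household_equivalent(GOOGLE_BROADCOM_GW))            # household equivalent of 3.5 GW
    print(round(percent_gain(PREV_CLOSE, INTRADAY_HIGH), 1))   # intraday gain in percent
```

Under these assumptions, 3.5 gigawatts corresponds to roughly 2.6 million households, and the move from $92 to $105.90 works out to about 15%, in line with the gain reported above.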

China Doesn't Care That Anthropic Cut Off OpenClaw. They're Already Winning.

1,000 people queued outside Baidu's headquarters in Beijing to get it installed. Anthropic just handed China the AI agent market on a silver platter. While Anthropic was busy cutting off 135,000 OpenClaw developers with a Friday night email, something extraordinary was happening 8,000 miles away. In Beijing, nearly 1,000 people lined up outside Baidu's headquarters to get OpenClaw installed on their laptops. Not developers. Regular people. Retirees. Students. Office workers. All queuing like it was an iPhone launch, except this was free, open-source AI. In Shenzhen, Tencent hosted setup sessions helping hundreds more. Engineers walked people through the installation, one by one, then connected them to WeChat, an app used by 1.3 billion people. In China, they call it "raising a lobster." While American AI companies fight over who's allowed to use what, China is turning an Austrian developer's side project into national productivity infrastructure. At a speed no other country is matching.

Anthropic Just Killed the Biggest Open-Source AI Project in History

They built it. They named it after Claude. They got 247,000 GitHub stars. Then Anthropic pulled the plug. Not a warning. Not a prediction. It happened on April 4th, 2026, at noon Pacific Time. If you care about open-source AI, the future of personal agents, or just basic fairness in tech, you need to understand what went down. Because this isn't just about one project getting cut off. This is about how Big AI plays the same game Big Tech has always played.

What Is OpenClaw, and Why Should You Care?

If you haven't heard of OpenClaw yet, here's the 30-second version: An Austrian developer named Peter Steinberger built a side project in November 2025. He called it Clawdbot, a cheeky nod to Anthropic's AI model Claude. The idea was simple: what if you could text an AI on WhatsApp, and it actually did things for you? Not just chat. Actually control your computer, manage your email, book flights, run code, clear your inbox, all from a text message. It exploded: 247,000 GitHub stars by March 2026. Over 135,000 active instances running worldwide. People were buying dedicated Mac Minis just to run it 24/7. Developers, freelancers, small businesses: everyone wanted their own personal AI agent. And here's the thing that makes this story hurt: The entire project was built on Claude. Steinberger used Anthropic's model. He promoted Anthropic's model. He built one of the largest developer communities in history around Anthropic's model. Then Anthropic came for him. https://x.com/steipete/status/2023154018714100102?s=20 Act 1: "Your Name Sounds Too...

OpenAI's infrastructure flex may backfire before an IPO given Anthropic's profits - Cryptopolitan

Public investors typically favor companies with clear paths to profit over those with massive spending plans. Two AI rivals are fighting over who has more computing muscle as they prepare to sell shares to the public. OpenAI told its investors this week that it has more data center capacity than Anthropic, while Anthropic fired back with news about major deals and fast-growing sales numbers. OpenAI shared a document with investors saying it currently has 1.9 gigawatts of computing capacity for 2025, which is three times what it had last year. The company said Anthropic has 1.4 gigawatts. OpenAI expects to reach somewhere in the low-double-digit gigawatts within twelve months and hit 30 gigawatts by 2030. The company predicted Anthropic would max out between seven and eight gigawatts before 2027 ends. OpenAI's document, which CNBC wrote about, argued that bigger infrastructure spending creates a cycle where better technology leads to lower costs, which then pays for improvements that bring in more customers. The company also pointed to comments from Anthropic's chief executive Dario Amodei, who last year said some competitors were "YOLO-ing" while his company took a careful approach. OpenAI suggested that caution was a mistake. Anthropic responded by pointing reporters to statements from its chief financial officer, Krishna Rao, about a deal announced with Google and Broadcom earlier this week. That partnership will give Anthropic roughly 3.5 gigawatts of computing power starting in 2027. Rao said the company is making its biggest computing commitment yet to keep up with growth.

CoreWeave agreement adds more capacity

On Friday, Anthropic announced another deal with CoreWeave, a cloud infrastructure company, to get more computing capacity later this year. CoreWeave's stock went up more than 5% before markets opened.
The company has signed similar deals recently, including an $11.9 billion agreement with OpenAI last year, a $6.3 billion order with Nvidia in September, and a $21 billion deal with Meta on Thursday. Anthropic also shared that its revenue has jumped to $30 billion per year, up from around $9 billion at the end of 2025. The company said more than 1,000 businesses now spend over $1 million each year on its services, double the 500 it reported in February. The company expects to reach positive cash flow by 2027. Meanwhile, Anthropic has gained ground in business sales, as reported by Cryptopolitan previously. Its share of enterprise spending on artificial intelligence in the United States climbed to 40%, while OpenAI's dropped from 50% to 27% during the same time. OpenAI has responded by focusing more on business customers and coding tools.

OpenAI has bigger plans for spending

OpenAI wants to put about $600 billion into chips and data centers through 2030, partly funded by a $122 billion fundraise. The company expects to lose $14 billion in 2026 and won't break even until 2030. Anthropic committed $50 billion to building computing infrastructure in the United States last November. The company runs its Claude models on different types of chips from Amazon, Google, and Nvidia, making it available on all three major cloud platforms. The timing of OpenAI's memo may raise questions as both companies prepare to go public. While OpenAI highlighted its larger infrastructure footprint, Anthropic is bringing in more money and spending far less to do it. Public market investors typically favor companies that can show a clear path to making a profit. Anthropic projects it will reach that milestone by 2027, three years ahead of OpenAI's 2030 target. The memo arrived just as Anthropic reported rapid growth in business customers, suggesting the competition between the two companies is heating up ahead of their stock market debuts.

Scott Bessent summons bank leaders to discuss Anthropic model's security risks

The U.S. Treasury Secretary summoned the CEOs of major U.S. banks to a meeting in Washington this week amid concerns over cybersecurity posed by Anthropic's latest model. According to reports, Jerome Powell, chair of the Federal Reserve, was among those gathered at the Treasury headquarters for the meeting after the release of the Claude Mythos AI model. Anthropic recently launched the Mythos model but stopped short of a broad release, citing concerns it could expose previously unknown cybersecurity vulnerabilities. According to Reuters, a source familiar with the matter said the model is capable of identifying and exploiting weaknesses across "every major operating system and every major web browser." The meeting was attended by Goldman Sachs chief executive David Solomon, Bank of America's Brian Moynihan, Citigroup's Jane Fraser, Morgan Stanley's Ted Pick and Charlie Scharf, the CEO of Wells Fargo. JP Morgan's Jamie Dimon was invited but unable to attend. Dimon had warned that cybersecurity "remains one of our biggest risks" and that "AI will almost surely make this risk worse" in his annual letter to shareholders. Anthropic has said its Mythos model has exposed thousands of vulnerabilities in software and popular applications. This prompted the firm to limit the release to a small number of businesses, including Apple, Amazon, and Microsoft. This is the first time Anthropic has restricted the release of a product. Cisco and Broadcom have also gained access, as did the Linux Foundation. This comes amid concerns that hackers could end up using such tools for figuring out passwords or cracking encryption that is intended to keep data safe.
Anthropic said the oldest of the vulnerabilities uncovered by Mythos were up to 27 years old, none of which is believed to have been noticed by their creators or tech monitors before being identified by the AI model. The meeting comes after Anthropic was designated a supply chain risk by the U.S. government, following disagreements over the AI firm's guidelines. A federal judge recently temporarily blocked the Pentagon's blacklisting of Anthropic. There have also been concerns regarding Anthropic's Claude Opus 4.6. Findings from the company's own internal testing revealed risks, with the model often focusing on completing tasks successfully, even if doing so means ignoring rules or boundaries. In some test settings, Claude Opus 4.6 was more willing to mislead or manipulate other systems in order to achieve that goal. There have also been concerns about how the model can be misused.

AI startups gain ground after Pentagon-Anthropic rift

Startups, including Smack Technologies and EdgeRunner AI, say they have seen a marked change in engagement from the military and investors. WASHINGTON, D.C.: A rift between the Pentagon and its former top artificial intelligence provider, Anthropic, is rapidly reshaping the U.S. military's AI ecosystem, with smaller startups seeing a surge in demand, funding interest, and contract momentum. In recent weeks, defense-focused AI firms say senior military officials and investors have approached them at a pace rarely seen before, as the U.S. Department of Defense moves to reduce reliance on a single vendor. The shift follows the Pentagon's decision in March to classify Anthropic's products as a "supply-chain risk," triggering a breakdown in relations and a broader push to diversify AI suppliers. A judge later temporarily blocked the Pentagon's blacklisting of the company. Startups, including Smack Technologies and EdgeRunner AI, say they have seen a marked change in engagement from the military and investors. "We've seen a massive increase in demand from customers and the government to get AI solutions fielded since Anthropic was declared a supply-chain risk," said Tyler Sweatt, CEO of Second Front, which helps firms operate on secure Pentagon networks. "Our customers are turning to us as the Pentagon turns to them to deploy quickly in the wake of the Anthropic blowup." For smaller firms, access to Pentagon contracts is a major milestone, often unlocking further government work and signaling credibility to commercial clients. Andrew Markoff, co-founder and CEO of Smack Technologies, said his company had been invited to multiple meetings with military officials following the Anthropic fallout, with a focus on accelerating deployment timelines. "We want more, we want demos, let's talk about how we can move faster," he said, describing the Pentagon's outreach. Tyler Saltsman, CEO of EdgeRunner AI, reported a similar experience.
His company had been waiting over a year for a Space Force contract to be approved, but it was finalized within weeks of the Anthropic dispute becoming public. "I can't prove that the Anthropic drama sped this up," Saltsman said, "but I have a sneaky suspicion it did." A Pentagon official said the department would continue to deploy advanced AI capabilities through partnerships with multiple industry players. The urgency reflects a broader reassessment within the Defense Department. A Pentagon technologist previously told Reuters that the fallout with Anthropic highlighted the risks of overdependence on a single AI provider and underscored the need for a more diversified supplier base. The impact is already visible in specific programs. Smack Technologies secured a Marine Corps contract in March 2025 and delivered a prototype by October that compresses operational planning from months to about 15 minutes. Despite initial delays, progress has accelerated sharply since the Anthropic controversy. Markoff said there was "very specific guidance and movement and energy" to push the system into production for combat use in 2026, more than a year ahead of earlier expectations. The company has also seen increased interest from other branches, including the Navy, Air Force, and U.S. Special Operations Command. EdgeRunner AI said engagement from the Navy has intensified, with meetings now taking place several times a week instead of monthly. Both companies are now working to meet higher security classification standards required for sensitive military applications. EdgeRunner said it had been told it could achieve IL-6 clearance, allowing access to secret and top-secret data, within three months, a process that typically takes 18 months or longer. Saltsman said the acceleration reflects both pressure from Pentagon leadership to streamline procurement and the urgency created by the Anthropic situation.