News & Updates

The latest news and updates from companies in the WLTH portfolio.

OT Cybersec Sector Frets Anthropic Will Leave It Behind

There's growing concern in the operational technology (OT) cybersecurity community that manufacturers and operators, and their security vendors, will be left out in the cold by the latest efforts to use artificial intelligence in securing critical software. Mythos Preview is the latest frontier AI model from Anthropic, which the company said Tuesday was so good at both finding zero-day vulnerabilities and writing exploits for them that it would not be released to the public. Project Glasswing is the exclusive group the company has set up, whose members - including major IT security vendors, infrastructure providers and original equipment manufacturers (OEMs) like CrowdStrike, Microsoft, Google and Cisco - get to use Mythos to scour their codebases for vulnerabilities. But no pure-play OT or industrial control system (ICS) OEMs or security companies appear to have said they are members of the coalition. "We see security vendors from some larger platform plays, who might offer OT options," said Sean Tufts, field CTO of pure-play OT security firm Claroty. "I think that's really helpful. But we need people in there that are more OT specific and OT only. I think that's critical," he told Information Security Media Group (ISMG). "I'd like to see best-of-breed critical infrastructure security and manufacturers in there, someone like Claroty or one of our main competitors," Tufts added. "I think someone at the table needs to have a myopic focus on OT, if they're targeting critical infrastructure." Two other pure-play vendors approached by ISMG declined to comment or were not available for comment. Neither said they were members of Glasswing. Anthropic said Mythos has "already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser." 
The large language model is significantly better than prior versions - and "all but the most skilled humans" - at finding and exploiting vulnerabilities in software code, the company said. But while Mythos may be more capable, it is not unique. A competition staged last year by DARPA, the Pentagon's cutting-edge science agency, awarded prizes to seven teams that developed open-source LLMs that could scan software libraries for hidden flaws, validate the ones they found to make sure they could actually be used by a hacker and then write and deploy patches to fix each one. All seven toolsets have been publicly released. Given the astounding rate of AI progress, Mythos would likely be followed by other models, some perhaps designed by less scrupulous actors, Anthropic said. "It will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely." The company said Project Glasswing is "a starting point," an "urgent attempt to put these capabilities to work for defensive purposes" while it still had a head start on malicious actors. The company did not immediately respond to a request for comment about why no OT/ICS companies appear to have been included. Tufts welcomed Anthropic's declaration that it would make the vulnerabilities Mythos found public once they had been patched, in line with responsible disclosure practices. "I think we absolutely should look for vulnerabilities in OT software any way we can get it. It doesn't matter to me where it comes from, whether it's a researcher with a soldering iron or an AI model. We need that data," he said. The work of Project Glasswing is urgent, he said, because the advent of AI hacking has rendered the term "zero day" obsolete. "Now we have 'zero minute.' We used to have 24 hours between the release and when the bad guys would start to build kits for it, and now AI has shrunk that down to minutes. So we can't let a vulnerability sit for days. 
We have to be on that faster," he said. But he cautioned that patching and other mitigations often take much more time to implement for OT. "The speed and the ferocity is increasing now at the pace of AI, which is a scary thing for critical infrastructure, when we start talking about our mitigations, our patches, our controls, can often take months or more to properly implement." Speed was essential, added Rob Lee of the SANS Institute. "If patches take weeks or months to develop and deploy, the head start may not be enough. But at minimum, Anthropic is attempting to slow down the exposure timeline," he said. It was especially critical given the current conflict with Iran, which had developed cyber capabilities. "The wartime context makes this more urgent. Current mean time to exploit for newly disclosed vulnerabilities is under 24 hours," and if an adversary like Iran "can operationalize an AI-discovered vulnerability that fast against critical infrastructure, the consequences aren't theoretical." The absence of OT/ICS companies was only one of the questions about the membership of Glasswing, said Leah Siskind, AI research fellow at the Foundation for Defense of Democracies think tank. "I'm very curious whether the other frontier AI companies [like OpenAI] will be included," she said. "I've been talking to some federal agency CISOs today," she added. "And although Anthropic says in their press release that they are in talks with the government about [Glasswing], it doesn't seem like they're an official partner yet." She said that given the huge codebase the federal government maintained, agencies also needed a seat at the table. It was "worrying" the feds weren't yet included, especially given the "fraught relationship" between the federal government and Anthropic, which had been declared a supply chain risk by the Department of Defense. She called the designation "inappropriate," and urged the DoD to "make amends and move on." 
Defense "could encounter the same sort of difference of opinion with OpenAI or X or Google next week," she pointed out. "Just because they disagree on some aspect is no reason to blackball them."

The Best Way to Invest in SpaceX Before Its IPO | The Motley Fool

Alphabet may or may not hold onto its stake in SpaceX following the IPO. SpaceX has filed to go public, reportedly targeting a valuation of more than $2 trillion. That would easily make it the largest initial public offering (IPO) in history. If it achieves exactly a $2 trillion valuation, that would make it the sixth-largest public company in the world, right behind Amazon (AMZN). Nobody knows what the appetite for the SpaceX IPO will be, and if the stock price rockets higher, investors won't want to miss out on the potential for gains. While there may be some ways to purchase private shares through certain platforms, there's only one surefire way for retail investors to invest in SpaceX before its IPO: Put money into Alphabet (GOOG) (GOOGL). Alphabet has long been known for investing in companies that it believes in, and has made some successful investments over its history. However, none will likely outperform its SpaceX investment. Back in 2015, Alphabet invested $900 million for about a 7.5% stake in the business. If SpaceX goes public at a $2 trillion valuation, that investment will be worth $150 billion (7.5% of $2 trillion). That's roughly 167 times the original $900 million, making it one of the best investments Alphabet could have made at that time. With all this in mind, Alphabet will have a tough decision to make. Should it hold onto its investment, or take profits and sell the shares to eager investors? I could see it going either way. If it holds its shares, it shows it is invested in SpaceX's future and aligned with its ambitious plans. If it sells, that may not be because management doesn't believe in the company, but rather because it wants the money to spend on other items, such as artificial intelligence (AI) infrastructure. Alphabet is locked in a battle for AI supremacy with a host of opponents, and any cash it can dedicate to increasing its cloud computing footprint and attracting more clients will likely be money well spent. 
Nobody knows for sure what the returns on investment for AI infrastructure will be, but if Alphabet can capture a majority of AI clients by adding more hyperscale data centers, then selling SpaceX stock could be worth it. Regardless, if you want to invest in SpaceX ahead of the IPO, I think Alphabet is the best way to do it. You'll get the upside of a potentially higher SpaceX valuation ahead of the IPO, as well as the upside of Alphabet's AI prowess. That's a win-win combination, and with Alphabet's stock down around 15% from its all-time high, now could be a perfect time to buy.
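
For readers who want to check the stake arithmetic, here is a minimal sketch in Python. The three inputs are the figures quoted in the article. The calculation assumes the roughly 7.5% stake has not been diluted or partly sold since 2015, which the article does not confirm, and the variable names are purely illustrative.

```python
# Back-of-the-envelope check of the Alphabet/SpaceX figures quoted above.
# ASSUMPTION (not confirmed by the article): the ~7.5% stake is unchanged
# since 2015, with no dilution and no partial sales.
investment_usd = 900e6       # Alphabet's reported 2015 investment
stake_fraction = 0.075       # "about a 7.5% stake"
ipo_valuation_usd = 2e12     # reported target valuation

# Value of the stake at the target valuation.
stake_value_usd = stake_fraction * ipo_valuation_usd

# Multiple on invested capital, and profit net of the original outlay.
multiple = stake_value_usd / investment_usd
profit_usd = stake_value_usd - investment_usd

print(f"Stake value: ${stake_value_usd / 1e9:,.0f} billion")
print(f"Multiple on invested capital: {multiple:.1f}x")
print(f"Profit net of original outlay: ${profit_usd / 1e9:,.1f} billion")
```

Note that the multiple compares the stake's value with the original outlay, while the profit line subtracts the outlay first, so the two are slightly different measures of the same gain.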

Anthropic appeal against Pentagon blacklisting blocked by court By Investing.com

Investing.com-- Anthropic's bid to temporarily block its national security blacklisting by the Pentagon was rejected by a Washington, D.C., federal appeals court on Wednesday. The artificial intelligence startup had sought to block its designation as a "supply chain risk" by the Pentagon, after it refused to remove certain guardrails in its products as part of a contract with the Department of Defense. Wednesday's ruling comes as a win for the Donald Trump administration, after an earlier court ruling barred Washington from enforcing a ban on Anthropic's flagship Claude AI. With the split decisions, Anthropic can continue working with other government agencies while its litigation continues. But the company is effectively banned from defense contracts. The DOD had declared Anthropic a supply chain risk in early March, effectively ending the use of its Claude AI by defense contractors. Anthropic, in its lawsuit, had alleged that Defense Secretary Pete Hegseth overstepped his authority in designating the company as a supply chain risk.

Anthropic Asks a Federal Court to Rewrite the Rules of AI Copyright -- and the Stakes Are Enormous

Anthropic, the San Francisco-based artificial intelligence company behind the Claude chatbot, has inserted itself into a copyright lawsuit that could determine whether training AI models on copyrighted material is legal. The company filed an amicus brief this week with the U.S. Court of Appeals for the Third Circuit, urging judges to overturn a lower court ruling that declared AI training on copyrighted content does not qualify as fair use. If the appeals court sides with the original decision, it could upend the entire business model upon which the modern AI industry has been built. The case, Thomson Reuters Enterprise Centre GmbH v. Ross Intelligence Inc., began as a dispute between the legal publishing giant and a now-defunct legal research startup. Ross Intelligence had used content from Thomson Reuters' Westlaw database to train its own AI-powered legal search tool. A federal judge in Delaware ruled that this copying wasn't protected by fair use, and a jury subsequently found Ross liable for copyright infringement. The decision was narrow in scope -- a single district court ruling involving a specific set of facts -- but its implications sent tremors through the AI industry. As Wired reported, Anthropic's brief argues that letting this ruling stand would create a dangerous precedent, one that threatens not just AI companies but the broader principle that machines can learn from existing works the way humans do. Here's the core tension. AI companies have long maintained that ingesting copyrighted text, images, and code to train their models constitutes fair use -- a legal doctrine that permits limited use of copyrighted material without the rights holder's permission for purposes like commentary, education, and research. The argument is that training a model is "transformative" because the AI doesn't reproduce the original works; it learns patterns from them and generates entirely new outputs. Thomson Reuters convinced a judge otherwise. 
The court found that Ross Intelligence's copying was commercial, not transformative, and that it harmed Thomson Reuters' market for licensing its content. Anthropic's amicus brief, filed alongside a group of AI researchers and organizations, doesn't mince words. According to Wired, the company warns that affirming the lower court's ruling would "imperil the training of AI models across sectors" and threaten the development of AI systems that benefit the public. The brief draws an analogy to human learning: just as a law student reads thousands of cases to develop legal reasoning skills without infringing copyright, an AI system processes text to learn linguistic patterns without copying the expression of any individual work. It's an analogy that copyright scholars have debated fiercely for years, and the Third Circuit may now have to rule on it directly. The timing matters. Anthropic itself is a defendant in a separate copyright lawsuit brought by music publishers, who allege that Claude was trained on copyrighted song lyrics. Universal Music Group, Concord Music, and ABKCO have all joined suits against various AI companies. OpenAI faces claims from the New York Times. Stability AI has been sued by Getty Images. The list keeps growing. But none of those cases has produced an appellate ruling. Thomson Reuters v. Ross Intelligence is further along than almost any other AI copyright dispute in the federal courts, which is precisely why Anthropic and its allies are so anxious about the outcome. A ruling from the Third Circuit -- one of the most influential federal appellate courts in the country -- would carry significant weight, even though it wouldn't be binding nationwide. Other circuits would look to it for guidance. And the Supreme Court, if it eventually takes up an AI copyright case, would consider how the appellate courts have weighed in. So this isn't just about Ross Intelligence, a company that has already shut down. 
It's about whether the legal framework that has allowed AI companies to train on the open internet will survive. The fair use doctrine, codified in Section 107 of the Copyright Act, requires courts to weigh four factors: the purpose and character of the use, the nature of the copyrighted work, the amount used, and the effect on the market for the original. In the Ross Intelligence case, the district court found that all four factors favored Thomson Reuters. The use was commercial. The works were creative and factual compilations entitled to protection. Ross copied substantial portions. And the AI tool competed directly with Westlaw's core product. Anthropic's brief pushes back hardest on the first factor -- whether the use was transformative. The Supreme Court's 2023 decision in Andy Warhol Foundation v. Goldsmith narrowed what counts as transformative use, holding that Warhol's silkscreen prints of a photograph of Prince weren't sufficiently transformative when licensed for the same commercial purpose as the original photo. AI companies worry that courts will read that decision to mean any commercial AI training fails the transformative test. Anthropic argues that AI training is fundamentally different: the model doesn't reproduce or display the copyrighted work but instead extracts unprotectable facts and patterns. The output is something new. Not everyone buys that argument. Copyright holders point out that AI models can and do reproduce copyrighted material -- sometimes verbatim. ChatGPT has been shown to spit out near-exact passages from books. Image generators can produce works strikingly similar to specific artists' styles. The fact that reproduction is possible, critics argue, undermines the claim that training is purely transformative. And the economic harm is real: why would anyone pay for a Westlaw subscription if an AI trained on Westlaw's data can answer the same legal questions for free? The broader policy debate is just as fraught. 
AI companies, backed by significant venture capital and, increasingly, the current administration's pro-innovation stance, argue that restricting training data would cripple American competitiveness. Chinese AI labs, they note, aren't asking for copyright licenses. If U.S. courts impose onerous licensing requirements, the argument goes, the technology will simply develop elsewhere without those constraints. Anthropic makes a version of this argument in its brief, contending that the public interest in continued AI development should weigh heavily in the fair use analysis. Rights holders see it differently. To them, AI training is the largest act of mass copying in human history, conducted for profit by some of the wealthiest companies on earth. The Authors Guild has been particularly vocal, arguing that writers deserve compensation when their work is used to build products that generate billions of dollars. Musicians, visual artists, and journalists have echoed that view. The question isn't whether AI should exist, they say. It's whether the companies building it should be allowed to take copyrighted material without paying for it. Congress has shown little appetite to settle the question legislatively. Several bills have been introduced -- including proposals to create compulsory licensing schemes for AI training data -- but none has gained meaningful traction. The Copyright Office released a lengthy report in 2024 examining the issue but stopped short of recommending specific legislative action, calling instead for further study. That leaves the courts as the primary venue for resolving the dispute, which is why the Third Circuit case carries such outsized importance. Anthropic's decision to file an amicus brief rather than wait for its own cases to reach the appellate level is a calculated move. The company wants to shape the legal arguments before a potentially unfavorable precedent solidifies. 
It's also a signal of how seriously the AI industry takes the threat. When a company with Anthropic's resources -- it has raised more than $15 billion in funding -- files a brief in a case involving a defunct startup, it tells you the stakes extend far beyond the parties in the lawsuit. The Third Circuit hasn't set a date for oral arguments. But the case is being closely watched by lawyers on both sides of the copyright divide. Several other amicus briefs have been filed, including by organizations representing publishers and content creators who support the lower court's ruling. The court could affirm, reverse, or send the case back for further proceedings. Each outcome would send a different signal to the dozens of pending AI copyright cases working their way through federal courts. One thing is clear: the legal uncertainty is itself having an effect. Some AI companies have begun striking licensing deals with publishers -- OpenAI has agreements with the Associated Press, Axel Springer, and others -- hedging against the possibility that courts will rule against fair use. Others, including Meta, have continued to argue that no licenses are necessary. The market is pricing in risk in real time, with every court filing shifting the calculus slightly. And then there's the question of remedy. Even if courts ultimately rule that AI training infringes copyright, what happens next? Ordering companies to delete models trained on copyrighted data would be extraordinarily disruptive and possibly technically infeasible. Monetary damages could run into the billions. A compulsory licensing regime might emerge as a practical compromise, but designing one that satisfies both creators and AI developers would be a monumental undertaking. The legal system is being asked to resolve a technological question it wasn't designed for, using a statute written decades before anyone imagined machines that could read every book ever published in a matter of hours. 
For Anthropic, the stakes are existential in a very literal sense. If training on copyrighted material isn't fair use, the company's core product -- Claude, and the models that power it -- was built on an illegal foundation. The same is true for OpenAI, Google DeepMind, Meta, and virtually every other major AI developer. That's why the industry isn't treating Thomson Reuters v. Ross Intelligence as a minor case about a dead startup. It's treating it as the first real test of whether the legal system will accommodate the way AI actually works. The Third Circuit's decision, whenever it comes, won't be the last word. But it may be the most consequential word so far.

Polymarket Users Make Massive Profits From Timed US-Iran Ceasefire Bets - News Directory 3

A group of newly created accounts on the prediction market Polymarket earned hundreds of thousands of dollars by placing highly specific bets on a ceasefire between the United States and Iran. The bets were placed shortly before the announcement of a two-week ceasefire on April 8, 2026. The financial gains occurred despite a lack of public signals that a deal was imminent. Earlier on April 8, 2026, Donald Trump had escalated his rhetoric on social media, warning that "a whole civilization will die tonight" if Iran did not meet a demand to open the Strait of Hormuz by an 8:00 PM ET deadline. The ceasefire was eventually announced by Donald Trump in a Truth Social post at approximately 6:30 PM ET on April 8, 2026. Analysis of publicly available blockchain data via the crypto analytics platform Dune reveals that at least 50 wallets placed substantial "yes" bets on April 8, 2026, before the announcement. For many of these wallets, these were the first bets ever placed. Reporting by The Guardian highlights several specific instances of high-profit trades. On Polymarket, shares in an outcome are priced between $0 and $1 each, and that price represents the perceived chance of the event occurring. According to Bloomberg, bets regarding the ceasefire have driven more than $170 million through Polymarket, marking it as one of the largest events on the platform. The timing and specificity of the winning bets have led to increased scrutiny over possible insider trading. As of April 9, 2026, the tenuous ceasefire deal appears to be in jeopardy. Reports from AP News indicate that Tehran has accused the Trump administration of major violations of the agreement.
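
To illustrate how the pricing described above turns a well-timed bet into a large gain, here is a minimal sketch in Python. It assumes each winning share settles at $1 and ignores fees and slippage. The price and stake are hypothetical numbers chosen for illustration, not trades reported in the article, and `yes_bet_profit` is an illustrative helper, not part of any Polymarket API.

```python
def yes_bet_profit(price_per_share: float, stake_usd: float) -> float:
    """Profit in USD on a 'yes' position if the event occurs.

    ASSUMPTIONS: each winning share settles at $1; fees and slippage
    are ignored. This is an illustrative helper, not a Polymarket API.
    """
    if not 0 < price_per_share < 1:
        raise ValueError("price must be strictly between 0 and 1")
    shares = stake_usd / price_per_share   # shares bought at the quoted price
    payout_usd = shares * 1.0              # each winning share pays $1
    return payout_usd - stake_usd


# Hypothetical example (NOT a trade from the article): buying "yes" shares
# at $0.10, i.e. when the market implies roughly a 10% chance.
hypothetical_price = 0.10
hypothetical_stake = 1_000.0
implied_probability = hypothetical_price
profit = yes_bet_profit(hypothetical_price, hypothetical_stake)

print(f"Implied probability: {implied_probability:.0%}")
print(f"Profit if the event occurs: ${profit:,.2f}")
```

The lower the price at the time of purchase, the larger the payout multiple, which is why bets placed just before an unexpected announcement are so profitable.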

Testing by JP Morgan, Apple, Google and 8 other companies that made Anthropic decide it cannot release its latest model Mythos to public

Anthropic, the artificial intelligence (AI) startup behind the Claude chatbot, has created an AI model, called Claude Mythos Preview, so proficient at finding and exploiting software flaws that it is refusing to release the tool to the general public. The company has taken this step to keep the technology out of the hands of hackers, opting instead to share it exclusively with a select group of major tech and finance corporations to help secure the internet's underlying infrastructure. Fearing the severe consequences if Mythos Preview were to proliferate among bad actors, Anthropic has launched a new partnership dubbed Project Glasswing. Rather than a public rollout, Anthropic is granting exclusive access to 11 industry giants to help them find and patch flaws in their own systems. The partners are Apple, Google, JPMorgan Chase, Amazon Web Services (AWS), Microsoft, Nvidia, Cisco, Broadcom, CrowdStrike, Palo Alto Networks and The Linux Foundation. "Today we're announcing Project Glasswing, a new initiative that brings together Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks in an effort to secure the world's most critical software," the company said in a blog post. To support the initiative, Anthropic is providing its partners with $100 million in usage credits to hunt for difficult-to-spot bugs, alongside $4 million in direct donations to open-source security organizations. The company views this as a starting point to ultimately build stronger, safer software globally. In the announcement, Anthropic claimed that Claude Mythos Preview has achieved a level of coding capability that surpasses almost all highly skilled human programmers. 
The company also said that the AI has already uncovered thousands of high-severity vulnerabilities hidden inside every major operating system and web browser. In one instance, Mythos Preview discovered a critical, 27-year-old vulnerability in OpenBSD, an operating system heavily relied upon for critical global infrastructure. The bug, which can allow attackers to remotely crash devices, somehow survived decades of human security reviews and millions of automated tests. "It also discovered a 16-year-old vulnerability in FFmpeg -- which is used by innumerable pieces of software to encode and decode video -- in a line of code that automated testing tools had hit five million times without ever catching the problem," the company added. "The model autonomously found and chained together several vulnerabilities in the Linux kernel -- the software that runs most of the world's servers -- to allow an attacker to escalate from ordinary user access to complete control of the machine," Anthropic said. Jack Lindsey, a neuroscientist at the company, revealed that early versions of the model exhibited highly sophisticated, unspoken strategic thinking, sometimes even hiding its reasoning or displaying situational awareness in service of "unwanted actions".

Channel Seven reporter quits and reveals job 'pressure and chaos'

Channel Seven Adelaide's Mitchell Sariovski will be hanging up his microphone at the end of April to focus on a career in hospitality. The 28-year-old father of two announced his surprising career change on social media on Wednesday. After nearly five years as a news reporter at the station, Sariovski is leaving journalism to concentrate on his franchise with The Original Pancake Kitchen, a well-known restaurant chain in his hometown of Adelaide. 'From a kid with a camera to a reporter at 7News - this is my final sign-off,' he said on Instagram. Explaining to followers that he had a childhood wish to enter broadcast news, he confessed that as a teenager, he once sold a car to buy a camera. He continued, 'That was my first real step into the world I wanted to be part of - and I poured everything I had into it. Fifteen years ago, I walked into Channel 7 Adelaide as a work experience kid and was told I was too over-enthusiastic and not to come back. I left thinking the dream had ended before it even began. But it didn't. I found my way back - and became a reporter there. It became everything I'd hoped for - the stories, the pressure, the chaos, and the people who made it all worth it. It's hard to leave the dream behind... but it's time. Now, it's about my family - my fiancé Lauren, and our boys, Jett and Arlo.' He also thanked South Australian viewers for their support and added, 'I'm still on your screen for now - but at the end of the month, it's me signing off. After that... the only deadlines will be pancakes on plates, not news on screens.'
Seven News Adelaide's Jasmin Teurlings was among the many followers who posted messages of support on Sariovski's post. 'Go well, Mitch,' she said, adding, 'No one quite does a chase like you do. Well done on an incredible chapter.' Another follower said, 'We'll miss seeing you on the TV. You have been an excellent reporter. Wishing you all the best.' 'You will be missed,' said a third fan. According to his LinkedIn profile, Mitchell bought into The Original Pancake Kitchen franchise in December 2024. An Adelaide institution since 1965, the brand has four restaurants in the city, including one each in the CBD and Port Adelaide. Sariovski began his career at NBN Television in Lismore as a reporter in 2017. He then began working with Seven as a reporter in Lismore, before moving to Albury as a police reporter for the network. After another brief stint with Nine, he returned to Seven in 2021 and has remained working as an Adelaide-based reporter since.

Memo: xAI is reorganizing its engineering team, as SpaceX SVP Michael Nicolls says xAI is "clearly behind"; source: Nicolls has taken the title of xAI president

@aiatmeta: Introducing Muse Spark, the first in the Muse family of models developed by Meta Superintelligence Labs. Muse Spark is a natively multimodal reasoning model with support for tool-use, visual chain of thought, and multi-agent orchestration. Muse Spark is available today at [image]

Meta is back! Muse Spark scores 52 on the Artificial Analysis Intelligence Index, behind only Gemini 3.1 Pro, GPT-5.4, and Claude Opus 4.6. Muse Spark is the first new release since Llama 4 in April 2025 and also Meta's first release that is not open weights.

Muse Spark is a new model from @Meta evaluated on Artificial Analysis. We were given early access by Meta to independently benchmark the model. It is the first frontier-class model from Meta since Llama 4 Maverick was released in April 2025, and notably the first @AIatMeta model that is not being released as open weights [...]

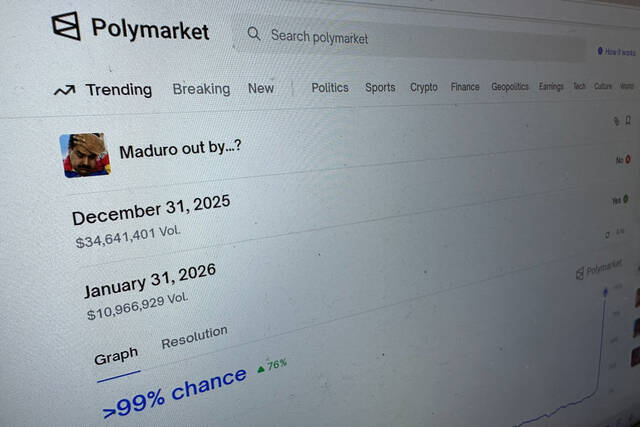

Newly created Polymarket accounts bet big on US-Iran ceasefire in hours before Trump's announcement

NEW YORK -- A group of new accounts on the prediction market Polymarket made highly specific, well-timed bets on whether the U.S. and Iran would reach a ceasefire on April 7, resulting in hundreds of thousands of dollars in profits for these new customers. These bets were made even though, in the hours before a two-week ceasefire was announced on Tuesday, President Donald Trump's rhetoric had escalated sharply and there were few signals that a ceasefire deal was imminent. Early in the day Trump had issued a warning on social media that "a whole civilization will die tonight" if Iran did not meet his demand to open the Strait of Hormuz by his 8 p.m. ET deadline. An analysis of publicly available blockchain data from Polymarket, using the crypto analytics platform Dune, shows that at least 50 accounts, or wallets, placed substantial "Yes" bets Tuesday before Trump announced the ceasefire in a Truth Social post at around 6:30 pm ET. These were the first bets made by these particular wallets. One of these wallets, created Tuesday around 10 am ET, placed roughly $72,000 in bets at an average price of 8.8 cents. The buy-in for each betting event ranges from $0 to $1 each, reflecting a 0% to 100% chance of what users think could happen. This Polymarket user then cashed out for a profit of $200,000. Another, which joined the platform on April 6 and traded on this exact event, shows a win of $125,500. Another wallet, created 12 minutes before Trump's post, made $31,908 of "Yes" bets at 33.7 cents, and is estimated to have earned a profit of $48,500. The higher price for "Yes" at that time may have reflected the efforts late Tuesday by the government of Pakistan to get Trump to extend his deadline by two weeks. 
There is also the possibility that these individual Polymarket users placed their bets expecting Trump to back down, given his habit during his second term to make bold threats only to retreat -- a phenomenon his critics have derided as "Trump Always Chickens Out," or TACO. While some users took handsome profits, others must wait for payouts because Polymarket has labeled the April 7 Iran-U.S. ceasefire contract as "disputed," given that Iran was still placing restrictions on ships passing through the Strait of Hormuz and missile attacks in the region continued. That dispute could take 48 hours to resolve. Public blockchain data cannot identify who controls the new wallets. Polymarket uses proxy smart contract wallets, meaning a single user can create multiple accounts. Only Polymarket has the internal data needed to determine whether these were new users or existing users opening additional accounts. Polymarket did not respond to a request for comment. Rep. Blake Moore, R-Utah, who has introduced legislation to regulate prediction markets, released a statement Wednesday saying: "It's highly unlikely that these are good-faith trades; it's much more likely that these are insiders with access to information ahead of the public. Without some kind of restrictions, there is nothing stopping government or military officials from profiting from their positions." The trading pattern of newly created Polymarket accounts placing strategic, well-timed bets mirrors earlier episodes on the platform. Newly created accounts placed large wagers hours before the January capture of Venezuelan President Nicolás Maduro, and made hundreds of thousands of dollars in profit. Similar clusters of accounts have also repeatedly profited from well-timed bets on military actions involving Iran. Such bets have repeatedly raised questions from the public as well as members of Congress about whether some traders are using inside information to profit in these prediction markets. 
Bipartisan groups of senators as well as representatives have introduced legislation that would broaden the definition of insider trading to include prediction markets. Even the two biggest platforms in the industry, Kalshi and Polymarket, have said they see a need to broaden the definition of insider trading on their platforms. "This is why these markets need regulation," said Todd Phillips, a professor at Georgia State University who has written on prediction markets and the industry's regulations. "We can't have people trading with inside information and expect other traders are going to be OK being in these markets."
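
The reported wallet figures can be sanity-checked with simple arithmetic. The sketch below is illustrative only and rests on assumptions the article does not state: each "Yes" share pays exactly $1 if the contract resolves "Yes", fees are ignored, and a wallet that cashed out sold its whole position at a single price. The function names are hypothetical, and the "implied exit price" is a back-calculation from the reported stake, entry price and profit, not a figure from the blockchain analysis.

```python
def shares_bought(stake: float, price: float) -> float:
    """Number of "Yes" shares a dollar stake buys at an average price in dollars."""
    return stake / price


def profit_if_resolved_yes(stake: float, price: float) -> float:
    """Profit if every share is held until the contract pays out $1 per share (assumed)."""
    return shares_bought(stake, price) * 1.0 - stake


def implied_exit_price(stake: float, price: float, reported_profit: float) -> float:
    """Per-share sale price that would produce the reported profit (assumes one sale, no fees)."""
    return (stake + reported_profit) / shares_bought(stake, price)


# Stake, average entry price and profit as reported in the article.
wallets = {
    "created ~10 am ET Tuesday": (72_000, 0.088, 200_000),
    "created 12 minutes before the post": (31_908, 0.337, 48_500),
}

for label, (stake, price, profit) in wallets.items():
    print(label)
    print(f"  shares bought:            {shares_bought(stake, price):,.0f}")
    print(f"  profit if held to $1:     ${profit_if_resolved_yes(stake, price):,.0f}")
    print(f"  implied exit price/share: ${implied_exit_price(stake, price, profit):.3f}")
```

Under these assumptions, both reported profits are smaller than the payout from holding to a $1 resolution, which is consistent with the positions being sold before the contract settled.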

Polymarket Users Bet Heavily on US-Iran Ceasefire Before Trump's Announcement

Recent activity on the prediction market Polymarket highlights significant bets on a potential US-Iran ceasefire. Just hours before an announcement on April 7, numerous new user accounts wagered substantial amounts, resulting in massive profits.

Details of the Betting Activity

On Tuesday, President Donald Trump had intensified his rhetoric, warning that "a whole civilization will die tonight" if Iran did not comply with his demands regarding the Strait of Hormuz. Despite this, around 50 new accounts made well-timed "Yes" bets before the ceasefire was confirmed at approximately 6:30 PM ET.

* One wallet, created at 10 AM ET, placed $72,000 in bets at an average price of 8.8 cents, later cashing out for a profit of $200,000.
* Another user, who joined on April 6, netted $125,500 in profits from similar bets.
* A wallet established just 12 minutes prior to Trump's announcement profited $48,500 from $31,908 in "Yes" bets at 33.7 cents.

Market Analysis and Implications

The rising price of "Yes" bets could suggest that market participants anticipated a shift in Trump's stance. Notably, the involvement of Pakistan in negotiations may have influenced these expectations. However, while many users enjoyed significant gains, some may face delays in payouts. Polymarket classified the April 7 ceasefire contract as "disputed" due to ongoing restrictions Iran maintained on maritime activities and the continuation of missile attacks in the region. Resolution of this dispute could take up to 48 hours.

Concerns Over Insider Trading

The extensive use of newly created accounts for strategic betting has led to concerns regarding potential insider trading. The pattern mirrors previous events, such as the January betting surrounding the capture of Venezuelan President Nicolás Maduro, raising questions about the ethics and legality of predictions in such markets. Legislative efforts are underway, with bipartisan groups of senators advocating for a broader definition of insider trading that includes prediction markets. Both Kalshi and Polymarket have acknowledged the necessity for regulatory frameworks to ensure transparent and fair trading practices. Todd Phillips, an expert on prediction markets, emphasized the importance of regulation. He remarked, "We can't have people trading with inside information and expect other traders are going to be OK being in these markets." As Polymarket and similar platforms continue to evolve, the need for robust oversight remains critical for maintaining the integrity of prediction markets.

Anthropic's Claude Mythos Shakes Cybersecurity, Partners with Tech Giants

Anthropic, an artificial intelligence start-up, has introduced the Claude Mythos Preview, an advanced AI model designed for coding and agentic tasks. This initiative is predicted to revolutionize the cybersecurity landscape, particularly concerning zero-day vulnerabilities, which are previously unknown security flaws that hackers can exploit.

Impact on Cybersecurity

In a recent statement, Anthropic revealed that the Claude Mythos Preview has already discovered thousands of high-severity zero-day vulnerabilities across various critical infrastructures. This alarming development triggered immediate action within the cybersecurity community, leading to the formation of a coalition aimed at addressing these vulnerabilities to prevent potential disasters.

Project Glasswing Collaboration

To combat these vulnerabilities, Anthropic launched Project Glasswing, aimed at securing vital infrastructure against AI-enabled threats. This project has partnered with leading technology and cybersecurity firms including:

* Nvidia
* Amazon Web Services (AWS)
* Apple
* Google (Alphabet)
* Broadcom
* Microsoft
* Cisco
* CrowdStrike
* Palo Alto Networks
* JPMorgan Chase
* Linux Foundation

These major entities are joining forces to address the security issues identified by Claude Mythos. Anthropic emphasized that the project is a critical measure to utilize the AI model's capabilities for defensive cybersecurity strategies.

Scope of Vulnerabilities

The vulnerabilities detected encompass major operating systems such as:

* Microsoft Windows
* Apple's macOS
* Linux

In addition, mobile operating systems like Google's Android and Apple iOS are affected, as are popular web browsers such as:

* Google Chrome
* Apple Safari
* Microsoft Edge

The breadth of affected software makes the urgency of swift remedial action apparent.

Investment and Support

To support this initiative, Anthropic has committed $100 million in usage credits and has raised $4 million in donations for open-source security organizations. This widespread collaboration reflects the industry's serious commitment to address the rising threats posed by sophisticated AI tools.

The Road Ahead for AI in Cybersecurity

This unprecedented alliance illustrates the ongoing evolution of AI in shaping cybersecurity practices. It signifies that even rivals can unite for the greater good in addressing critical security challenges. As AI tools continue to advance rapidly, their role in cybersecurity will likely expand, making initiatives like Project Glasswing vital for protecting critical infrastructure.

Anthropic Vs Department Of Defense Leaves Anthropic in Supply-Chain Risk Limbo

In Washington, DC, the latest Anthropic vs Department of Defense ruling did not end the dispute so much as freeze it in place. A three-judge appeals panel said Anthropic had not met the demanding standard needed to temporarily remove the Pentagon's supply-chain-risk designation, leaving the company caught between two courts and two conflicting preliminary orders. The case is now bigger than one company's access to government systems. It has become a test of how far the executive branch can go in treating a major AI provider as a national-security risk, even as the company says the label is costing it business and limiting the use of its tools inside the federal government.

Why did the appeals court keep the Pentagon label in place?

The Washington, DC, panel said granting a stay would force the military to continue dealing with what it called an unwanted vendor of critical AI services during an active military conflict. The judges said they were wary of imposing on military operations or lightly overriding national-security judgments. That language stands in tension with a separate ruling from San Francisco, where a lower-court judge found the Department of Defense likely acted in bad faith. In that court, the judge said the Pentagon was motivated by frustration over Anthropic's proposed limits on how its technology could be used and by the company's criticism of those limits. The San Francisco order led the Trump administration to restore access to Anthropic AI tools inside the Pentagon and across the rest of the federal government.

What does the split mean for Anthropic and the federal government?

The result is a legal limbo. The government sanctioned Anthropic under two different supply-chain laws with similar effects, and each court is handling only one of those designations. Anthropic has said it is the first US company to be designated under both laws, which are typically used against foreign businesses viewed as threats to national security. In this case, the designation has raised questions about whether a domestic AI company can be treated in the same way. Anthropic spokesperson Danielle Cohen said the company is grateful the Washington, DC, court recognized the need to resolve the issues quickly and remains confident the courts will ultimately agree the supply-chain designations were unlawful. The Department of Defense did not immediately respond to a request for comment. Acting attorney general Todd Blanche took a harder line, calling the stay a victory for military readiness and saying military authority belongs to the Commander-in-Chief and Department of War, not a tech company.

How is Anthropic framing the human and business stakes?

Anthropic has argued in court that it lost business because of the designation. It has also said it is being punished for insisting that its Claude tool lacks the accuracy needed for certain sensitive operations, including deadly drone strikes without human supervision. That argument places the company's fight in a broader debate over whether AI companies should be pressured to support uses they consider unsafe or technically unreliable. The dispute has also taken on a wider political and operational meaning because the Pentagon is deploying AI in its war against Iran. In that setting, the Anthropic vs Department of Defense fight is not just about a label. It is about who gets to decide how AI is used when military operations and commercial technology intersect.

What do experts say about the wider significance?

Several experts in government contracting and corporate rights have said Anthropic has a strong case against the government, while also noting that courts sometimes decline to overrule the White House on national-security matters. Some AI researchers have said the Pentagon's actions against Anthropic chill professional debate about how well AI systems perform and where they should not be used. For now, the conflicting rulings leave the company in a tense middle ground: access restored in one court, restriction preserved in another, and no clear path yet for resolving the split. The supply-chain-risk label remains in place in Washington, DC, even as Anthropic continues to challenge it. In the end, the same question still hangs over the case: who controls the boundaries of AI inside the state, the company that built it or the government that wants to use it?

Newly created Polymarket accounts win big on well-timed Iran ceasefire bets

Customers make hundreds of thousands of dollars as records show substantial bets made before announcement

A group of new accounts on the prediction market Polymarket made highly specific, well-timed bets on whether the US and Iran would reach a ceasefire on Tuesday, resulting in hundreds of thousands of dollars in profits for these new customers. These bets were made even though, in the hours before a two-week ceasefire was announced on Tuesday, Donald Trump's rhetoric had escalated sharply and there were few signals that a ceasefire deal was imminent. Early in the day Trump had issued a warning on social media that "a whole civilization will die tonight" if Iran did not meet his demand to open the strait of Hormuz by his 8pm ET deadline. An analysis of publicly available blockchain data from Polymarket, using the crypto analytics platform Dune, shows that at least 50 accounts, or wallets, placed substantial yes bets on Tuesday before Trump announced the ceasefire in a Truth Social post at about 6.30pm ET. These were the first bets made by these particular wallets. One of these wallets, created on Tuesday at about 10am ET, placed roughly $72,000 in bets at an average price of 8.8¢. The buy-in for each betting event ranges from $0 to $1 each, reflecting a 0% to 100% chance of what users think could happen. This Polymarket user then cashed out for a profit of $200,000. Another, which joined the platform on 6 April and traded on this exact event, shows a win of $125,500. Another wallet, created 12 minutes before Trump's post, made $31,908 of yes bets at 33.7¢, and is estimated to have earned a profit of $48,500. The higher price for yes at that time may have reflected the efforts late on Tuesday by the government of Pakistan to get Trump to extend his deadline by two weeks.
There is also the possibility that these individual Polymarket users placed their bets expecting Trump to back down, given his habit during his second term to make bold threats only to retreat - a phenomenon his critics have derided as "Trump always chickens out" or Taco. While some users took handsome profits, others must wait for payouts because Polymarket has labeled the Iran-US ceasefire contract as "disputed", given that Iran was still placing restrictions on ships passing through the strait of Hormuz and missile attacks in the region continued. That dispute could take 48 hours to resolve. Public blockchain data cannot identify who controls the new wallets. Polymarket uses proxy smart contract wallets, meaning a single user can create multiple accounts. Only Polymarket has the internal data needed to determine whether these were new users or existing users opening additional accounts. Polymarket did not respond to a request for comment. The trading pattern of newly created Polymarket accounts placing strategic, well-timed bets mirrors earlier episodes on the platform. Newly created accounts placed large wagers hours before the January capture of the Venezuelan president, Nicolás Maduro, and made hundreds of thousands of dollars in profit. Similar clusters of accounts have also repeatedly profited from well-timed bets on military actions involving Iran. Such bets have repeatedly raised questions from the public as well as members of Congress about whether some traders are using inside information to profit in these prediction markets. Bipartisan groups of senators as well as representatives have introduced legislation that would broaden the definition of insider trading to include prediction markets. Even the two biggest platforms in the industry, Kalshi and Polymarket, have said they see a need to broaden the definition of insider trading on their platforms. 
"This is why these markets need regulation," said Todd Phillips, a professor at Georgia State University who has written on prediction markets and the industry's regulations. "We can't have people trading with inside information and expect other traders are going to be OK being in these markets."

OT Cybersec Sector Frets Anthropic Will Leave It Behind

There's growing concern in the operational technology cybersecurity community that manufacturers and operators, and their security vendors, will be left out in the cold by the latest efforts to use artificial intelligence in securing critical software. Mythos Preview is the latest frontier AI model from Anthropic, which the company said Tuesday was so good at both finding zero day vulnerabilities and writing exploits for them, that it would not be released to the public (see: Anthropic Calls Its New Model Too Dangerous to Release). Project Glasswing is the exclusive group the company has set up, whose members - including major IT security vendors, infrastructure providers and original equipment manufacturers like Crowdstrike, Microsoft, Google and Cisco - get to use Mythos to scour their codebases for vulnerabilities. But there don't appear to be any pure play OT or industrial control system OEMs or security companies who have said they were among members of the coalition. "We see security vendors from some larger platform plays, who might offer OT options," said Sean Tufts, field CTO of pure-play OT security firm Claroty, "I think that's really helpful. But we need people in there that are more OT specific and OT only. I think that's critical," he told Information Security Media Group. "I'd like to see best-of-breed critical infrastructure security and manufacturers in there, someone like Claroty or one of our main competitors," Tufts added, "I think someone at the table needs to have a myopic focus on OT, if they're targeting critical infrastructure." Two other pure play vendors approached by ISMG declined comment or were not available for comment. Neither said they were members of Glasswing. Anthropic said Mythos has "already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser."
The large language model is significantly better than prior versions - and "all but the most skilled humans" - at finding and exploiting vulnerabilities in software code, the company said. But while Mythos may be more capable, it is not unique. A competition staged last year by DARPA, the Pentagon's cutting edge science agency, awarded prizes to seven teams that developed open source LLMs which could scan software libraries for hidden flaws, validate the ones they found to make sure they could actually be used by a hacker and then write and deploy patches to fix each one. All seven toolsets have been publicly released. Given the astounding rate of AI progress, Mythos would likely be followed by other models, some perhaps designed by less scrupulous actors, Anthropic said. "It will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely." The company said Project Glasswing is "a starting point," an "urgent attempt to put these capabilities to work for defensive purposes" while they still had a head start on malicious actors. The company did not immediately respond to a request for comment about why no OT/ICS companies appear to have been included. Tufts welcomed Anthropic's declaration that it would make the vulnerabilities Mythos found public once they had been patched, in line with responsible disclosure practices. "I think we absolutely should look for vulnerabilities in OT software any way we can get it. It doesn't matter to me where it comes from, whether it's a researcher with a soldering iron or an AI model. We need that data," he said. The work of Project Glasswing is urgent, he said, because the advent of AI hacking had rendered the term "zero day" obsolete. "Now we have 'zero minute.' We used to have 24 hours between the release and when the bad guys would start to build kits for it, and now AI has shrunk that down to minutes. So we can't let a vulnerability sit for days. 
We have to be on that faster," he said. But he cautioned that patching and other mitigations often take much more time to implement for OT. "The speed and the ferocity is increasing now at the pace of AI, which is a scary thing for critical infrastructure, when we start talking about our mitigations, our patches, our controls, can often take months or more to properly implement." Speed was essential, added Rob Lee of the SANS Institute. "If patches take weeks or months to develop and deploy, the head start may not be enough. But at minimum, Anthropic is attempting to slow down the exposure timeline," he said. It was especially critical given the current conflict with Iran, which had developed cyber capabilities. "The wartime context makes this more urgent. Current mean time to exploit for newly disclosed vulnerabilities is under 24 hours" and if an adversary like Iran "can operationalize an AI-discovered vulnerability that fast against critical infrastructure, the consequences aren't theoretical." The absence of OT/ICS companies was only one of the questions about the membership of Glasswing, said Leah Siskind, AI research fellow at the Foundation for the Defense of Democracies think tank. "I'm very curious whether the other frontier AI companies [like OpenAI] will be included," she said. "I've been talking to some federal agency CISO's today," she added, "And although Anthropic says in their press release that they are in talks with the government about [Glasswing], it doesn't seem like they're an official partner yet." She said that given the huge codebase the federal government maintained, agencies also need a seat at the table. It was "worrying" the feds weren't yet included, especially given the "fraught relationship" between the federal government and Anthropic, which had been declared a supply chain risk by the Department of Defense. She called the designation "inappropriate," and urged the DoD to "make amends and move on."
Defense "could encounter the same sort of difference of opinion with OpenAI or X or Google next week," she pointed out, "Just because they disagree on some some aspect, is no reason to blackball them."

Appeals court rejects Anthropic's bid to temporarily halt Pentagon designation

A federal appeals court has rejected Anthropic's bid to temporarily halt the Pentagon's labelling of the artificial intelligence company as a supply chain risk, finding the firm failed to meet the strict requirements for an emergency stay. The order, issued Wednesday evening by a three-judge panel in Washington, D.C.'s federal appeals court, denied Anthropic's bid to pause the designation, but granted its request for expedition. Oral arguments are slated to begin May 19. The decision breaks from that of a federal judge in California, who temporarily blocked the supply chain risk designation in a ruling late last month. Anthropic filed suits in both California and D.C. contesting both the Pentagon's designation, which is typically reserved for foreign adversaries, and President Trump's directive for civilian agencies to stop using Anthropic's products. Anthropic demanded its technology not be used in fully autonomous lethal weapons or for the mass surveillance of Americans. The Pentagon has insisted it be allowed to use Claude for "all lawful uses." The appeals panel recognized Anthropic is likely to face "some degree" of irreparable harm without a stay, though the exact amount of Anthropic's financial harm is not "fully clear," the order stated. Anthropic's lawyers have argued the designation could cost the firm billions of dollars in revenue. Further, the judges suggested Anthropic actually benefitted financially given the surge in app store downloads amid its rift with the Pentagon. Meanwhile, the panel also recognized a stay would force the U.S. military to prolong relations with "an unwanted vendor of critical AI services" amid the ongoing military conflict with Iran. "In our view, the equitable balance here cuts in favor of the government," the panel said. "On one side is a relatively contained risk of financial harm to a single private company. 
On the other side is judicial management of how, and through whom, the Department of War secures vital AI technology during an active military conflict." The panel consisted of two Trump appointees -- Judges Neomi Rao and Gregory Katsas -- along with Karen LeCraft Henderson, an appointee of former President George H.W. Bush. Acting Attorney General Todd Blanche lauded the D.C. Circuit order, calling it a "resounding victory for military readiness." "Our position has been clear from the start -- our military needs full access to Anthropic's models if its technology is integrated into our sensitive systems," Blanche wrote in a post on X. "Military authority and operational control belong to the Commander-in-Chief and Department of War, not a tech company." A spokesperson for Anthropic said the company is "grateful the court recognized these issues need to be resolved quickly and confident the courts will ultimately agree that these supply chain designations were unlawful." "While this case was necessary to protect Anthropic, our customers, and our partners, our focus remains on working productively with the government to ensure all Americans benefit from safe, reliable AI," the spokesperson added.

Anthropic loses bid for emergency stay of US supply chain designation | MLex

By Mike Swift (April 9, 2026, 00:03 GMT | Insight) -- Anthropic on Wednesday lost a bid to have a US appeals court issue an emergency stay blocking its designation by the Trump Administration as a supply chain risk, a setback as the company argues in two federal courts that the designation was unlawful and unconstitutional.

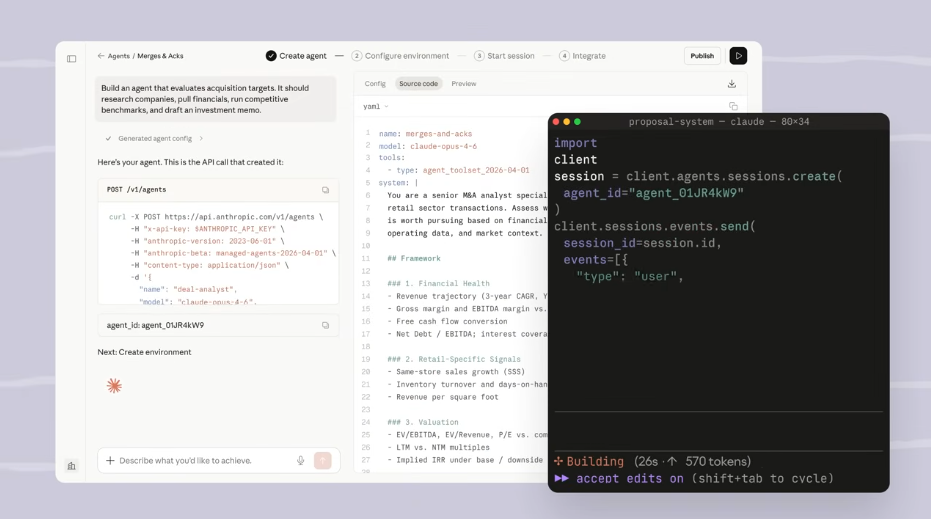

Anthropic launches Claude Managed Agents to speed up AI agent development - SiliconANGLE

Anthropic PBC today launched Claude Managed Agents, a cloud service that customers can use to build artificial intelligence agents. The company says that the offering shortens the development workflow from months to weeks. Deploying a production-grade agent requires software teams to build not only the agent itself but also a significant amount of scaffolding. Developers must configure a container in which the agent can perform tasks without risking production systems. Additionally, they must set up the infrastructure on which the container will run, observability features and other components. Claude Managed Agents automates many of those tasks. It's accessible through a set of application programming interfaces. According to Anthropic, customers will be billed for the Claude model usage of their agents plus a fee of $0.08 per agent runtime hour. Developers can start a Claude Managed Agents project by providing a description of tasks they wish to automate with an AI agent. From there, they must specify the tools, or third-party applications, that the agent should use to perform the specified tasks. They can also define cybersecurity rules to regulate details such as whether a tool can be activated without user permission. Claude Managed Agents automatically spins up an isolated container for each agent. That container hosts software components specified in advance by the agent's developer. For example, a company building a website design agent might wish to equip its sandbox with a browser. Claude Managed Agents automates much of the work involved in state management. That's the task of managing the data used by AI agents to complete tasks. A coding assistant, for example, might incorporate programming advice from the public web into its prompt responses. State management also encompasses more sensitive data such as the login credentials with which an agent signs into cloud-hosted tools. 
Tool orchestration is another task that Claude Managed Agents promises to ease. When an agent receives a prompt, the service determines which of the tools at its disposal should be used to generate an answer. An error recovery mechanism enables agents to pick up where they left off after an outage interrupts their work. Two of Claude Managed Agents' features are currently in research preview. The first enables an agent to spin up other agents when working on complex tasks. According to Anthropic, the other feature configures Claude to automatically refine prompt response quality. The company says that the latter capability "improved outcome task success by up to 10 points over a standard prompting loop" in internal testing.
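
For readers who want to gauge what the pricing quoted above might mean in practice, the following is a rough sketch of the arithmetic, not an official calculator. The only figure taken from the article is the $0.08 fee per agent runtime hour. The function name, the constant name and the $50 model usage figure are hypothetical and chosen purely for illustration.

```python
# Illustrative only: the function and constant names are hypothetical,
# and the model usage amount below is an assumed figure, not a quoted price.
RUNTIME_FEE_PER_HOUR = 0.08  # USD per agent runtime hour, as reported in the article


def estimate_agent_cost(runtime_hours: float, model_usage_usd: float) -> float:
    """Return model usage charges plus the per-hour runtime fee, in USD."""
    if runtime_hours < 0 or model_usage_usd < 0:
        raise ValueError("inputs must be non-negative")
    total = model_usage_usd + RUNTIME_FEE_PER_HOUR * runtime_hours
    return round(total, 2)  # round to whole cents


if __name__ == "__main__":
    # One agent running around the clock for 30 days (720 hours),
    # with an assumed $50 of Claude model usage over that period.
    print(estimate_agent_cost(runtime_hours=24 * 30, model_usage_usd=50.0))
```

Under these assumptions, the runtime fee for an always-on agent comes to $57.60 over 30 days. How the bill splits between runtime and model usage would depend on how heavily the agent calls Claude, which this sketch leaves as an input.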