News & Updates

The latest news and updates from companies in the WLTH portfolio.

XAI Building Money Printing AI Data Centers Faster and Cheaper While Others Are Canceled or Delayed | NextBigFuture.com

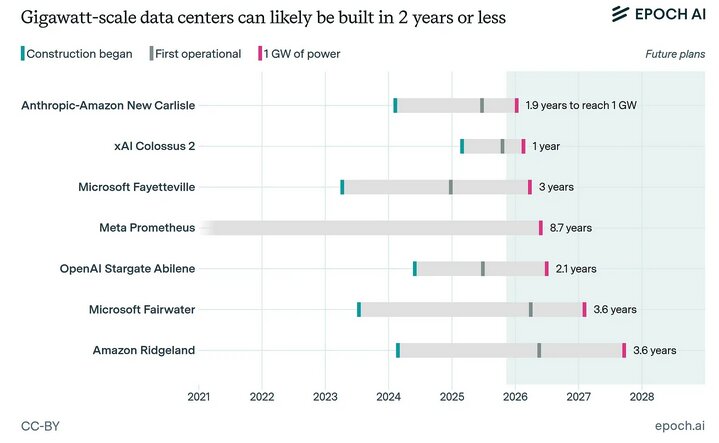

xAI's AI data center economics are even better when you can start a project, finish it in 12 months, and get it rented and paid off while competitors are still building. An active gigawatt of AI data center in the hand is worth $20 billion per year, while a gigawatt under construction is bleeding $5-15 billion per year of cash. xAI is building them for about $30-40 billion per gigawatt, while competitors need $50 billion plus another $10 billion or more in extra financing costs for a multi-year project.

Data center project cancellations more than quadrupled to 25 in 2025 from six in 2024. Between a third and a half of all US data centers planned for 2026 are likely to be delayed or totally cancelled, Bloomberg reports, amid ongoing supply chain challenges and campus location concerns. The original plan might have been four years, but now it could be longer or never. There was an estimated 12-16 GW of planned capacity in 2026. Only 5 GW is currently under construction, and many projects have been announced but show no physical progress yet. Of that 5 GW, xAI accounts for 1-2 GW that is actually under construction or completed. OpenAI's $500 billion Stargate Project has been stalled, downsized or delayed from the original 4-year timeline.

Amazon's AI revenue run rate last quarter was $15 billion per year. xAI matched that $15 billion per year with the Anthropic deal. If xAI were to rent out the rest of Colossus 2, that would be another $15-20 billion per year.

Cloud computing margins have been great and have been the envy of other businesses. Traditional cloud margins (AWS - Amazon Web Services) are stable and high at ~35% operating margins, Bitcoin mining margins are volatile/compressed (often breakeven or negative in 2025-2026), and AI cloud margins (CoreWeave, SpaceXAI, Amazon AI) offer the highest unit economics with ~75%+ gross and ~60% EBITDA margins despite heavy growth capex.
Competitors are still waiting for permits, pouring concrete and waiting on transformers. xAI capex pays itself off in 12 months instead of 3-5 years. Multi-year AI demand stays insatiable, and xAI captures it first. It's the ultimate build faster, rent sooner, kick ass. xAI recycles tens of billions into the next project 1 to 3 years earlier. xAI is compounding returns while others are still in risky construction. The risk reduction comes from shorter exposure to technology obsolescence, energy price volatility, regulatory delays, or demand shifts. A 4-year build window carries far more execution and market risk.

Tesla is building 67+ Megacharging sites and has thousands of Supercharger sites. One Megacharging site or ten Supercharger sites can add the equivalent of one CoreWeave-sized 10-20 megawatt AI data center.

SpaceX's S&P 500 inclusion will happen in 6 months. Passive S&P 500 funds WILL HAVE TO BUY roughly 19% of public SpaceX shares within 6 months under the fast-tracking framework. Russell 1000 and Nasdaq 100 funds will buy 5.5% within 15 days of the IPO. Add active funds benchmarked to those indices and you get to HALF of SpaceX shares. The SpaceX IPO is over 10X oversubscribed, and BlackRock has put in an order for $5-10 billion.

This is the continuation of a 30+ year trend (the internet era) and a 50+ year trend (computing). The companies that built the internet, cloud, semiconductors, and now AI have compounded at extraordinary rates because they became infrastructure for everything else. Skeptics who said "this tech bubble will pop and we'll go back to normal" in the 1990s, 2000s, or even post-2010 were wrong over entire investing lifetimes. The internet and computers did not reverse. They swallowed more of the economy every year. AI has already swallowed large parts of the world economy -- AI is in every major product roadmap, every hyperscaler's capex, and increasingly in enterprise workflows.
Demand for compute (per analysts like Dylan Patel of SemiAnalysis) has run 5x+ ahead of supply in key areas, and AI cloud margins are far higher than those of traditional cloud or Bitcoin mining. CoreWeave (an AI-native cloud) has 850 megawatts across 43 data centers and about 250,000 GPUs. It had ~3.1 GW of contracted power capacity at end-2025, is targeting over 1.7 GW active by end-2026, and plans to add ~5 GW more by 2030. xAI has matched CoreWeave with one deal: about 800-900 megawatts contracted to Anthropic. xAI will match or exceed the 1.7 GW active by the end of 2026. OpenAI talked about big data center plans, but they are mostly completing in 2029 or are getting cancelled.

xAI is building a third massive data center, this time in Southaven, Mississippi, right next to its Colossus 2 facility in Memphis. Construction begins in 2026. xAI is buying another $2.8 billion worth of turbines for its AI infrastructure over the next three years.
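The per-gigawatt figures quoted above can be turned into a rough payback comparison. The sketch below is a minimal illustration, not the article's own model: it assumes a simple revenue-based payback (capex divided by annual revenue once the site is active) and ignores operating costs, margins, and cash burned during construction. The function name and scenario labels are hypothetical. On these inputs alone the payback comes out longer than the 12 months cited, so that claim presumably rests on assumptions not stated in the article.

```python
def simple_payback_years(capex_bn: float, annual_revenue_bn: float) -> float:
    """Years of revenue needed to cover capex.

    Assumption: simple payback only. Operating costs, margins, and
    construction-period cash burn are deliberately ignored.
    """
    if annual_revenue_bn <= 0:
        raise ValueError("annual revenue must be positive")
    return capex_bn / annual_revenue_bn


# Article figure: an active gigawatt is "worth $20 billion per year".
REVENUE_PER_GW_BN = 20.0

# Capex per gigawatt, in $ billions, as quoted in the article.
scenarios = {
    "xAI, low end": 30.0,
    "xAI, high end": 40.0,
    "competitor, incl. extra financing": 50.0 + 10.0,
}

for name, capex_bn in scenarios.items():
    years = simple_payback_years(capex_bn, REVENUE_PER_GW_BN)
    print(f"{name}: {years:.1f} years of revenue to cover capex")
```

A fuller comparison would also add each project's build time to the payback period, which is where the article's argument about 12-month versus multi-year construction would show up.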

SpaceX's $1.75 Trillion IPO Story Gets a Starship Lift, But Investors Still Need Proof

SpaceX, a private space launch and satellite internet company led by Elon Musk, just gave investors a timely win ahead of its planned IPO. The company launched its new Starship rocket from Texas on Friday and brought the vehicle back to Earth largely intact, marking a key step for the rocket that sits at the center of its long-term growth plan. In fact, SpaceX is selling investors a much larger story than the launch business, as it is built around Starlink, cheaper access to space, NASA work, and, one day, Musk's goal of reaching Mars. According to reports, SpaceX has filed for what could become the largest IPO in history, with a $1.75 trillion target valuation. Starship is central to that pitch. SpaceX told investors in its IPO filing, "Our ability to execute our growth strategy is highly dependent on Starship." That line sums up the whole story. If Starship works at scale, SpaceX could launch more Starlink satellites, lower launch costs, and build a much wider space-based network. The latest test showed real progress. The redesigned V3 version reached sub-orbit and deployed nearly two dozen dummy and test satellites. However, the flight was not flawless. One of the upper-stage engines failed, and the vehicle later toppled into the ocean and exploded, as planned, during its final descent. Even so, for a test flight, this was a strong result. SpaceX still needs to prove it can reuse both parts of the rocket on a regular basis. That is the key point for investors, since reuse could make Starship a major cost-saver rather than just a powerful rocket. For now, the IPO case looks stronger, but not risk-free. Starlink has become a key driver of profit and growth for SpaceX, with the report noting that the company's connectivity segment generated $11.4 billion in revenue in 2025, up nearly 50% from the year before. Operating income in that segment rose 120% to $4.4 billion. 
Still, the market will likely focus on whether Starship can turn that growth story into a repeatable model. SpaceX wants Starship to carry larger payloads, support more Starlink launches, and one day fly many times per day. That is a bold goal, and it is not yet proven. As analyst Josh Parker told the Financial Times, "Any failure risks the IPO." That may be the cleanest way to view the news. Friday's flight helped SpaceX's IPO story, but the company still has to show that Starship can make the economics work. For investors, this launch was a boost, not a final answer.

SpaceX Discloses Holdings of 18,712 Bitcoin: What It Means for Crypto Markets

SpaceX has disclosed holdings of 18,712 Bitcoin in its IPO filing with the U.S. Securities and Exchange Commission, marking one of the largest known corporate Bitcoin positions to emerge from a public offering document. The SEC filing revealed SpaceX's Bitcoin position as the Elon Musk-led space company moves toward a public listing. The 18,712 BTC was reported at a fair value of roughly $1.29 billion. The disclosure came through a mandatory filing, giving the public its first verified look at SpaceX's cryptocurrency exposure. Prior to this, the company's Bitcoin holdings had not been formally confirmed through regulatory documents. At approximately $1.29 billion in fair value, SpaceX's Bitcoin position is substantial by any measure. The IPO process required this level of transparency, converting what had been market speculation into a confirmed balance sheet item. Multiple outlets have reported the position at values ranging from $1.29 billion to $1.45 billion, with the difference likely reflecting Bitcoin price fluctuations between the filing date and publication dates. A company of SpaceX's scale publicly confirming a multi-billion-dollar Bitcoin position reinforces the narrative around institutional and corporate cryptocurrency adoption. The disclosure does not indicate any intent to buy or sell, but the confirmed size of the holdings is likely to draw attention from investors tracking corporate BTC exposure. It is important to distinguish between sentiment impact and direct price impact. SpaceX disclosing an existing position does not introduce new buying pressure. However, the confirmation that a company preparing for a major IPO holds significant Bitcoin on its balance sheet adds a data point to the broader corporate adoption trend. 
This development follows a period of growing corporate interest in Bitcoin treasury strategies, a theme also reflected in moves by firms like BitMine, which recently made a $126 million Ethereum purchase as part of its own digital asset strategy. SpaceX's confirmed holdings place it among a small group of companies with publicly known Bitcoin positions exceeding 10,000 BTC. The CoinGecko corporate treasury tracker now lists SpaceX alongside other major holders. IPO filings require ongoing financial reporting. Once public, SpaceX would need to report changes to its Bitcoin position in quarterly earnings, creating a recurring source of transparency for market participants. Investors watching Bitcoin's macro positioning and institutional flows across crypto assets will likely monitor these disclosures closely. Whether SpaceX continues to hold, accumulate, or reduce its Bitcoin position post-IPO will be visible in future SEC filings, giving market observers a verified, ongoing view of one of the largest known corporate crypto allocations. Disclaimer: This article is for informational purposes only and does not constitute financial or investment advice. Cryptocurrency and digital asset markets carry significant risk. Always do your own research before making decisions.

Artificial egg helps Colossal hatch its de-extinction plans

Colossal Biosciences, the company that produced a trio of modern-day dire wolves, now has its own answer to the question: What comes first, the chicken or the egg? The Dallas-headquartered biotech firm has developed an artificial egg that has been used to hatch healthy chickens, the company announced Tuesday, May 19. In development for about two years, the "first-of-its-kind incubation platform" has been used to raise more than 30 chickens, which live at Colossal's avian facility in Texas.

The achievement is an important milestone in the company's goal of bringing back New Zealand's South Island Giant Moa, which went extinct about 600 years ago. The moa's egg is about 80 times the size of a chicken egg, so it's not feasible to use a surrogate host for birthing new moa chicks, Colossal CEO and co-founder Ben Lamm told USA TODAY. Thus, the need for an artificial egg. Lamm compared it to the company's creation of the Colossal Woolly Mouse, which was genetically engineered to have characteristics that could eventually be used in creating a next-generation woolly mammoth.

"It's really, really important, because we've told the world that eventually we want exogenous development," the ability to create offspring "completely outside of the womb ... not just for extinct species, but so that we could productionize endangered species," said Lamm, who was recently named to the board of directors for the National Fish and Wildlife Foundation.

What does the artificial egg look like?

The oval artificial egg looks a bit like a tea infuser, with an open lid to observe embryonic development. The 3D-printed rigid shell, made up of a grid of hexagon shapes, has a silicone-based membrane replicating the interior of a real egg. "Our permeable membrane allows oxygen to diffuse into the system through the membrane at ambient temperatures," said Colossal chief science officer Beth Shapiro in a new explanatory video posted on YouTube. The artificial egg fits within a standardized incubator.

Colossal scientists tested the artificial egg on embryos harvested from freshly laid chicken eggs. They are monitored over about 21 days and occasionally given some needed nutrients. Once hatched, the chicks are cared for and, when they are big enough, allowed to join the other chickens at the company's avian facility. Eventually, Colossal's artificial eggs will be used to grow genetically engineered embryos into hatchlings. At the end of a new YouTube video posted by Colossal, you can watch a time-lapse segment showing the development of an embryo to a chick, viewed through the opening at the top of the artificial egg.
"We've created a novel shell-less culture system that is fully scalable and biologically accurate," said Colossal chief biology officer Andrew Pask in a news release. "The genome is the blueprint, but without a place to build, it's meaningless. The artificial egg gives us that platform: controlled, scalable, and completely independent of a surrogate. It's species-agnostic, size-scalable, and unlocks entirely new pathways - from rescuing endangered birds with low hatch success to enabling de-extinction where no surrogate exists."

The artificial egg will make it easier for researchers to study not only avian developmental biology -- because Colossal researchers cracked the egg, so to speak -- but also, potentially, the outside-the-womb development of other creatures. "If we, as scientists, now have the ability to complete embryo development under normal atmospheric conditions in artificial eggs, this represents a significant engineering and biological advance with strong implications for endangered species rescue, developmental biology, and genome engineering," said Prof. Tomas Marques Bonet, an evolutionary and conservation biologist from Pompeu Fabra University in Barcelona, Spain, who is an advisor to Colossal, in an email exchange with USA TODAY.

What are Colossal's plans?

The chickens hatched from the artificial eggs are living out their lives at Colossal's avian facility. "We're responsible for everything about their lives. We want them to both be healthy physically, but also mentally," said Colossal's head of animal husbandry, Steve Metzler, in the new video. Colossal gets most of its attention for its "de-extinction" plans of bringing back extinct species such as the woolly mammoth, the dodo and the Australian thylacine (Tasmanian tiger).
However, research advances in those projects can help the preservation of other animals such as elephants, the white rhino, the red wolf, bison and others, the company says. Still, Colossal has its detractors. The animals the company is creating as part of its de-extinction program won't have the same social structure their ancestors did, they say. "It could be very cruel to those animals," Jeanne Loring, a biologist from the Scripps Institute in California, told NPR. Beyond that, tinkering with science could have unknown repercussions, she said. "It could be catastrophic," Loring says. "There's too many variables that we don't understand. There are too many things that could happen." In the future, Colossal could have countless eggs, or eventually artificial wombs, growing hatchlings meant to re-establish an extinct species or strengthen an endangered one. "The ability to incubate avian embryos outside a biological shell -- at any size and in standard commercial incubators -- is a capability conservation programs simply don't have today. We're building it for the moa, but it's designed to support critically endangered species broadly," said Colossal's chief animal officer Matt James in a statement. "The artificial egg allows us to rescue compromised embryos, build genetic rescue platforms, and utilize donor and biobanked material in ways that weren't previously possible. It reflects deep collaboration across biology, engineering, and software - and opens entirely new pathways to help address the biodiversity crisis," said James, who also heads The Colossal Foundation, the company's charitable arm. Does Colossal's artificial egg finally answer the question of which came first? Well, it depends on your point of view. A chicken did lay the eggs, which were transferred to artificial eggs to grow. But in the future, could a genetically-engineered embryo shift the answer toward the egg?

U.S. Congress launches insider trading probe into Polymarket, Kalshi - AMBCrypto

The U.S. House Oversight and Government Reform Committee has launched an investigation into prediction markets for alleged insider trading. In a CNBC interview, Congressman James Comer (R-Kentucky), Chair of the Oversight Committee, claimed that prediction markets are "the wild west." He added, "this is so new, and there are no written laws. Prediction markets have never been a problem until recent months."

Comer cited the U.S. soldier who profited by over $400K after betting on Venezuelan President Nicolás Maduro's capture using insider intelligence. He also mentioned the insiders who benefited from bets on the U.S.-Iran war, alongside politicians trying to manipulate markets tied to their election races. He continued, "We launched the investigation to see how widespread this (insider trading) is. But also to prove a case that we've got to pass some type of legislation like banning members of Congress, government employees, and people in the President's administration from prediction markets."

In fact, a recent report showed that insiders on Polymarket made over $2.4 million on Iran bets. For Comer, the widespread insider trading activity is a sign that "Congressional action may be necessary."

Kalshi is one of the largest prediction markets regulated by the Commodity Futures Trading Commission (CFTC). Its rival, Polymarket, has received approval to re-enter the U.S. market and has a massive global market share thanks to its lack of KYC (Know Your Customer) requirements. Similarly, Kalshi has expanded to 120 global markets. However, the lack of KYC provisions for offshore markets is now raising concerns about bad actors gaming the markets. In a letter to Kalshi's CEO Tarek Mansour, the Committee pressed, "The rapid global expansion of Kalshi's platform raises questions about whether internationally placed event contracts are subject to equivalent identity verification and insider trading prohibitions as domestic event contracts."
A similar letter was sent to Polymarket's CEO Shayne Coplan. The Committee now wants details on both platforms' KYC systems, whether those systems apply to global users, and the trading history of any U.S. government employees, including military officers, among other things.

It is worth noting, though, that regulatory pressure is also building overseas. For example, India has banned prediction markets under the Promotion and Regulation of Online Gaming Act (PROGA). Recently, the Ministry of Electronics and Information Technology (MeitY) flagged VPNs (virtual private networks) being used to bypass domestic restrictions on these betting markets.

SpaceX: Starship Flight 12 Marks First Successful V3 Test Flight And Major Advancement For Mars Program

SpaceX successfully completed the 12th integrated flight test of Starship, marking the debut of the upgraded Starship V3 architecture and the company's first Starship launch in nearly seven months. The launch took place from Starbase, Texas, and represented one of the most ambitious demonstrations yet for the company's next generation reusable launch system. The mission showcased a wide range of new technologies designed to support future lunar missions, satellite deployment operations, and eventual human missions to Mars. The Flight 12 mission featured the first use of the Starship V3 configuration, which includes upgraded propulsion systems, lighter structures, redesigned fuel systems, improved thermal protection, and modifications aimed at dramatically increasing launch cadence and vehicle reusability. SpaceX engineers have described the V3 platform as a significant step forward compared to earlier Starship variants, incorporating lessons learned from the previous 11 integrated flight tests. One of the most notable elements of the mission was the deployment of 22 simulated Starlink satellites, marking the heaviest payload ever carried aboard Starship. The deployment exercise was intended to validate Starship's future role as a high volume satellite deployment platform capable of supporting the expansion of SpaceX's Starlink broadband network and future commercial missions. The mission also included experimental payloads designed to inspect and monitor the spacecraft's heat shield system during flight. These payloads gathered imagery and thermal data throughout ascent, orbital operations, and atmospheric reentry. SpaceX has placed significant emphasis on improving the durability and reliability of Starship's heat shield tiles following previous flights that experienced damage during reentry phases. Flight 12 lifted off from the company's Starbase facility using the upgraded Super Heavy booster equipped with next generation Raptor engines. 
The launch proceeded through initial ascent successfully, with the booster separating from the upper stage as planned. However, several engines on the Super Heavy booster shut down unexpectedly during its return phase, preventing the company from completing a controlled recovery attempt. The booster ultimately splashed down in the Gulf of Mexico. Despite the booster issues, the upper-stage Starship spacecraft completed the majority of its mission objectives. Following stage separation, Starship continued on its planned trajectory, conducted payload deployment tests, and performed an extended coast phase before reentering Earth's atmosphere. The spacecraft then executed a controlled splashdown in the Indian Ocean approximately 66 minutes after launch. The mission also served as the first major operational test of SpaceX's newly upgraded Pad 2 launch infrastructure at Starbase. The company implemented redesigned fueling systems, upgraded propellant loading equipment, and faster operational procedures intended to support more rapid launch turnaround times. SpaceX officials noted during the webcast that fueling operations on Pad 2 were completed significantly faster than on previous Starship missions. Industry analysts viewed Flight 12 as an important milestone for the Starship program despite the partial booster recovery failure. The mission demonstrated meaningful progress in several critical areas, including payload deployment, long duration flight operations, heat shield performance, and upper-stage survivability during reentry. The successful operation of the Starship V3 spacecraft also provided SpaceX with additional validation data as the company works toward fully reusable launch operations. NASA is closely monitoring Starship's development because the vehicle is expected to play a central role in the Artemis lunar exploration program. 
SpaceX is developing a lunar variant of Starship that will be used as the Human Landing System for future Artemis missions intended to return astronauts to the Moon. The company also continues to position Starship as the long term transportation platform for building permanent infrastructure on Mars. The Starship program remains one of the most technically ambitious aerospace development efforts currently underway. SpaceX has continued to adopt an iterative testing strategy that prioritizes rapid development cycles, frequent launch attempts, and continuous hardware upgrades between missions. While challenges involving engine reliability, thermal protection systems, and precision booster recovery remain unresolved, Flight 12 demonstrated continued momentum toward achieving a fully reusable super heavy launch system capable of transporting both cargo and humans beyond Earth orbit. SpaceX founder and CEO Elon Musk has repeatedly stated that Starship is designed to eventually carry large numbers of people and cargo to the Moon and Mars while dramatically lowering the cost of access to space. Flight 12 represents another major step in validating the technologies required to achieve those long term goals.

KEY QUOTES:

"Today, we're now carrying the heaviest payload ever carried on Starship." (Dan Huot, SpaceX Spokesperson)

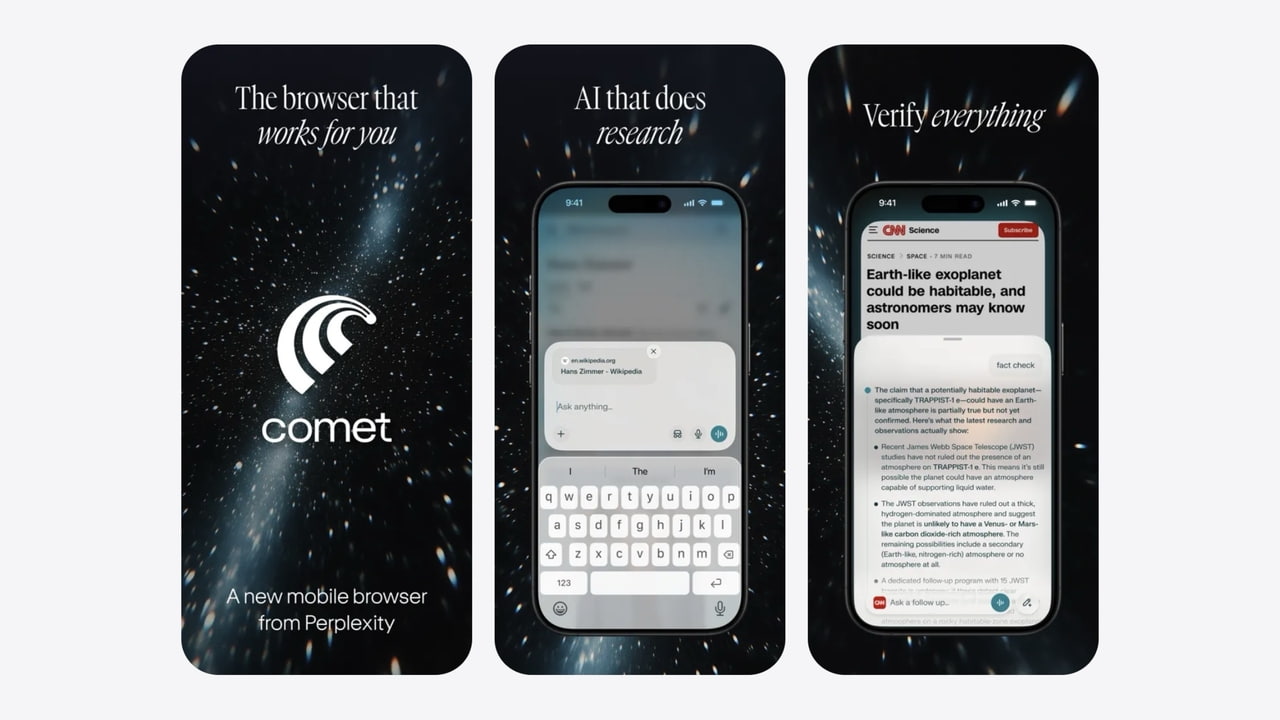

Perplexity Updates Comet AI Browser for iPhone and iPad With New Features

Perplexity has updated its Comet AI browser for iOS, adding new convenience features, refinements for iPad users, and a range of usability fixes. The release arrives just a couple of months after the app initially launched on the iPhone. The update introduces one-tap phone number actions across the browser. Users can now call, FaceTime, send a message, or save numbers directly to Contacts from any webpage without manually copying and pasting digits. Drag-and-drop support has also been expanded, allowing images from any tab to be dropped directly into the assistant for visual queries. Finance Deep Dive analysis now opens as a standard browser tab instead of a pop-up window. Search results will also retain their position when users switch away from a thread and later return. On the iPad, the sidebar has been refined with a tidier layout, a calmer slide animation, and dynamic sizing that better adapts to the current window. The release also addresses several smaller usability issues. The omni-box now adapts its appearance to prevent flickering when moving between light and dark websites. Clearing the Recently Closed list will now persist across app restarts, and deleted threads will no longer reappear when older links are opened. Perplexity also implemented crash fixes related to clearing data and closing tabs, along with sharper favicons and cleaner bottom controls on iPhone. You can download the latest version of Comet - AI Browser & Assistant from the App Store for free.

SpaceX IPO and the Big Bang Bubble

'To build the systems and technologies necessary to make life multiplanetary, to understand the true nature of the universe, and to extend the light of consciousness to the stars'. What's that, you ask? That's SpaceX's (Space Exploration Technologies) mission statement. The space-telecom-AI giant recently filed its prospectus for an IPO, likely to take place in early June.

The mission statement is not all. The prospectus is flush with bizarre stuff like 'orbital AI compute', 'asteroid mining', 'energy production on the Moon and Mars' and other jargon that will make you feel as if you are reading a sci-fi script straight out of Hollywood. Dabbling in frontier technologies, the company's ambitions are anything but modest. These are more than just flashy buzzwords -- they are formally outlined as part of SpaceX's growth strategies, though whether its current capabilities can realistically support such ambitions is a different subject.

The IPO price and size are not out yet. But going by media reports, SpaceX is valued at an astronomical $1.75 trillion, going as high as $2 trillion in some cases. When xAI was merged with SpaceX in February 2026, the combined entity was valued at $1.25 trillion. The public issue is likely to fetch the company $75 billion in fresh capital, making it by far the largest IPO globally. Saudi Aramco's $29-billion IPO in 2019 has been the largest so far.

So far, so good. But when you combine the above with the asking valuation, one needs to be grounded in logic rather than give in to the hype. Here's why. To begin with, SpaceX is unprofitable and will be the first unprofitable company to demand a valuation of over a trillion dollars at the time of its IPO. It makes money at the EBITDA level, adjusted for share-based compensation and restructuring costs. Assuming an enterprise value (EV) of $1.75 trillion, the EV to adjusted EBITDA multiple (2025 basis) tests the boundaries of the financial universe at about 266x and is plain profane.
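To make the 266x figure concrete, the multiple can be inverted to see roughly what adjusted EBITDA it implies. The sketch below is only a back-of-the-envelope check using the numbers quoted above; the function and constant names are hypothetical, and the result is an implied figure, not one taken from the prospectus.

```python
def implied_ebitda_bn(enterprise_value_bn: float, ev_to_ebitda: float) -> float:
    """Back out EBITDA (in $ billions) from an EV and an EV/EBITDA multiple."""
    if ev_to_ebitda <= 0:
        raise ValueError("multiple must be positive")
    return enterprise_value_bn / ev_to_ebitda


# Figures quoted in the article, in $ billions.
EV_BN = 1750.0            # assumed enterprise value ($1.75 trillion)
EV_TO_ADJ_EBITDA = 266.0  # multiple on a 2025 basis
PLANNED_RAISE_BN = 75.0   # expected fresh capital from the IPO
ARAMCO_IPO_BN = 29.0      # Saudi Aramco's 2019 IPO

adj_ebitda_bn = implied_ebitda_bn(EV_BN, EV_TO_ADJ_EBITDA)
raise_vs_aramco = PLANNED_RAISE_BN / ARAMCO_IPO_BN

print(f"Implied 2025 adjusted EBITDA: ${adj_ebitda_bn:.2f} billion")
print(f"Planned raise vs. Aramco IPO: {raise_vs_aramco:.2f}x")
```

In other words, a $1.75 trillion enterprise value is being asked for a business whose implied adjusted EBITDA is in the single-digit billions, while the planned raise alone would be more than twice the size of the largest IPO to date.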
The price-to-book value multiple (based on Q1 2026 book value) works out to 51x! So, what makes the company so special? Here, we attempt a lowdown of its business and the factors that make it a hard sell, particularly at such sky-high multiples.

Brass tacks

Founded in 2002, SpaceX operates across three business segments: Space, Connectivity and AI.

Space: Here, SpaceX designs and manufactures reusable rockets for launch - both for captive (Starlink) and third-party customers, including government agencies. In 2015, it became the first company to successfully land a rocket launched into space back on earth. In 2017, it further demonstrated the reuse of a first-stage booster (the part of a multi-stage rocket that gives the propulsion needed to thrust the rocket through the atmosphere and separates from the payload before it reaches the desired orbit). This shaves off a key step in the launch of the next rocket: rebuilding the first-stage booster from scratch, thus minimising the time gap between two launches. The rate of launches is known as cadence in rocketry. The more frequent the launches over a given period, the higher the cadence.

SpaceX's line-up has three rockets currently. Falcon 9 is the oldest of the three, first launched in 2010. It is the workhorse of the three, having completed 620 of SpaceX's 650 launches so far, with a payload (what a rocket carries; for example, a satellite) capacity to LEO (low earth orbit - 160-2,000 km above the earth's surface) of 23 tonnes. Falcon Heavy is next in the line-up with a payload capacity to LEO of 64 tonnes. First launched in 2018, it has so far completed 11 launches. Starship is SpaceX's flagship rocket, first launched in 2023. It has a payload capacity of 100 tonnes. Unlike Falcon 9 and Falcon Heavy, which are only partly reusable, Starship is fully reusable, thus resulting in higher cadence. Starship has completed 11 test flights and is expected to commence payload delivery to orbit in the second half of 2026.
According to the prospectus, while the Falcon 9 reduced launch cost by 85 per cent relative to the historical average, the Starship is expected to extend that reduction to 99 per cent. These apart, the space business is also home to the Dragon spacecraft, which can fly humans to and from the International Space Station, for instance. Connectivity: This segment consists of the company's popular 'Starlink' satellite-based telecom business, earning revenue from subscriptions. It is the cash cow of the three segments currently. Here, the company operates a constellation of LEO satellites that offer wireless space-based broadband to consumers, enterprises (use cases like remote worksites, offshore oil rigs, cruise ships, aircraft and so on) and governments (national security applications). As of March 31, 2026, the constellation is 9,600-satellite strong. The segment also has Starlink Mobile, providing direct satellite-to-mobile connectivity. Besides, SpaceX has plans to deploy orbital AI compute or, simply put, satellite data centres that can support AI applications. The rationale is that running such data centres in space would mean substantial savings on energy and cooling costs. Solar panels are significantly more efficient in space than on earth. The company expects to deploy such satellites as early as 2028. However, the prospectus states that large-scale orbital infrastructure would potentially require up to one million satellites, a far cry from how many the company has in space so far. AI: The final segment 'xAI' consists of an array of businesses and is the most cash-guzzling of the three. x.com (formerly Twitter) is the first of them, earning revenue from advertising and user subscriptions. The platform has about 550 million monthly active users (for context, Meta's daily active users were 3.56 billion in Q1 2026). It also embeds the next business: Grok, a large language model.
In the third business, it builds data centres (named COLOSSUS) capable of training AI models and leases compute power to customers. The current compute capacity stands at over 1 GW, against a global capacity of roughly 100 GW as of 2025. The fourth business is named Terafab, a chip manufacturing initiative with Intel and Tesla for captive consumption. The final business takes the form of 'Macrohard', an agentic AI platform developed with Tesla that leverages SpaceX's AI models and compute infrastructure to deliver consumer and enterprise applications. To augment capabilities in this segment, SpaceX has collaborated with Cursor (Anysphere Inc.), in a deal struck in April. Under the deal, SpaceX will share compute with Cursor to improve existing AI models and jointly develop products. It also includes an option for SpaceX to buy Cursor at a valuation of $60 billion (through a share swap).

Why it's a hard sell

Here are some factors that make the valuation seem disproportionate and hard to digest. One, the prospectus claims that SpaceX operates in a total addressable market (TAM) worth $28.5 trillion. To put this bizarre estimate into perspective, the current world GDP is about $120 trillion and that of the US is $31 trillion. Moreover, 80 per cent of this TAM is attributed to AI-powered enterprise applications. With AI tech still evolving, and with it far from a given that today's services sector will be taken over by AI in the future, such claims sound more like a pipe dream. Readers can recall how Musk had forecast x.com's subscription revenue to hit $10 billion by 2028, when he was about to acquire the platform in 2022. Today, this languishes at around $1.4 billion (2025). Two, 'establishing a lunar economy', 'space tourism', 'in-orbit manufacturing', and 'asteroid mining' do sound futuristic and exciting.
Musk is even entitled to stock-based compensation (one billion Class B shares) if SpaceX manages to establish a permanent human colony on Mars with at least one million inhabitants (deadline not provided). But today, these appear more like 'building castles in the air (or space)' or a futuristic business plan at best, rather than viable business segments to factor into valuations. Further, even if such expeditions are taken up, the possibility that regulations might never allow them to happen calls for scepticism. Three, the company is still loss-making. Accumulated losses as of March 31, 2026 (Q1 2026) stand at $41 billion (the book value mentioned above is after considering these losses). One cannot rule out the possibility that the company's future revenue may not be sufficient to justify today's heavy capex, a risk that all AI companies face. Further, xAI is a relatively small player in a space that is already crowded by the likes of Amazon, Google and Microsoft. Four, SpaceX has entered into frequent transactions with related parties. For instance, in 2025, it purchased $506 million worth of battery energy storage systems (called Megapack) from Tesla, another entity in which Musk has an interest. It also purchased $131-million worth of Tesla Cybertrucks. This follows multiple voluntary recalls of the product by Tesla, some due to serious safety issues such as a 'stuck accelerator pedal'. xAI has also leased AI infrastructure hardware worth roughly $4.5 billion from Valor Equity Partners, a firm of which SpaceX director Antonio Gracias is the founder and CEO. Also, as mentioned earlier, X Holdings (x.com or Twitter) was folded into SpaceX -- both are entities under Musk's common control. Related-party transactions may well be compliant and executed at arm's length prices. However, a material number of such transactions, many of which may have benefited Musk, is bound to raise eyebrows.
As of May 1, Musk owns 12.3 per cent of Class A shares (one vote each) and a whopping 93.6 per cent of Class B shares (10 votes each) -- together controlling 85.1 per cent of total voting power. Further, Musk may be removed from his position as CEO/Chairman of the Board only with the approval of a majority of the voting power of Class B shares. Investors would do well to choose caution over excitement, given the extortionate valuation.

Published on May 23, 2026
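The valuation arithmetic in the article above can be back-solved from the quoted multiples. The short Python sketch below is only an illustration, not anything taken from the prospectus: the variable and function names are made up for this example, the inputs are the article's rounded figures, and the price-to-book step assumes that equity value is roughly equal to the $1.75 trillion enterprise value.

```python
# Inputs are the rounded figures quoted in the article; outputs are
# back-of-envelope estimates only.
ENTERPRISE_VALUE = 1.75e12   # USD, the assumed enterprise value
EV_TO_ADJ_EBITDA = 266       # multiple on 2025 adjusted EBITDA
PRICE_TO_BOOK = 51           # multiple on Q1 2026 book value
TAM = 28.5e12                # USD, total addressable market claimed
WORLD_GDP = 120e12           # USD, approximate
US_GDP = 31e12               # USD, approximate
AI_SHARE_OF_TAM = 0.80       # share of TAM attributed to AI applications


def implied_base(valuation: float, multiple: float) -> float:
    """Back out the denominator (EBITDA, book value) implied by a multiple."""
    return valuation / multiple


# Adjusted EBITDA implied by a 266x multiple on a $1.75 trillion EV.
implied_adj_ebitda = implied_base(ENTERPRISE_VALUE, EV_TO_ADJ_EBITDA)

# Assumption: equity value is treated as roughly equal to the EV here.
implied_book_value = implied_base(ENTERPRISE_VALUE, PRICE_TO_BOOK)

# How the claimed TAM compares with the GDP figures cited alongside it.
tam_vs_world_gdp = TAM / WORLD_GDP
tam_vs_us_gdp = TAM / US_GDP
ai_tam = TAM * AI_SHARE_OF_TAM

if __name__ == "__main__":
    print(f"Implied adjusted EBITDA: ${implied_adj_ebitda / 1e9:.2f} billion")
    print(f"Implied book value:      ${implied_book_value / 1e9:.1f} billion")
    print(f"TAM as share of world GDP: {tam_vs_world_gdp:.1%}")
    print(f"TAM as share of US GDP:    {tam_vs_us_gdp:.1%}")
    print(f"TAM attributed to AI:    ${ai_tam / 1e12:.1f} trillion")
```

On these inputs, the multiples imply adjusted EBITDA of roughly $6.6 billion and a book value of about $34 billion, while the claimed TAM is a little under a quarter of world GDP and about 92 per cent of US GDP, with some $22.8 trillion of it attributed to AI.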

SpaceX: $185 Million Memphis xAI Data Center Acquired From Phoenix Investors Affiliate

An affiliate of Phoenix Investors announced the sale of a 217-acre, 785,000-square-foot data center property in Memphis, Tennessee to a subsidiary of SpaceX for $185 million. The facility, located at 3231 Paul R Lowry Road, is commonly referred to as Colossus I, the first data center of xAI, which is a subsidiary of SpaceX. The transaction highlights the continued expansion of large-scale AI and digital infrastructure investments tied to the growing demand for compute capacity and data center assets. The Memphis property represents one of the larger AI-focused infrastructure facilities associated with xAI's operations. Phoenix Investors said the Colossus I site spans 217 acres and includes approximately 785,000 square feet of space designed for data center operations. Phoenix Investors is focused on the acquisition, renovation, and release of former manufacturing facilities across the United States. The company has also expanded into acquiring and repositioning data center assets as demand for digital infrastructure continues to accelerate. According to the company, Phoenix affiliate companies hold equity interests in a portfolio of industrial properties totaling approximately 86 million square feet across 27 states.

SpaceX SHOCKING AI Revenue from Elon Web Services and Cursor Will be HUGE for the SPACEX IPO | NextBigFuture.com

xAI is leasing Colossus 1 and part of Colossus 2 to Anthropic for $15 billion per year. This should let SpaceXAI sign more deals and build faster, with huge profits. By renting out half of the new capacity, it could scale the earth-based data centers into a high-margin rental business of $100B+ per year by 2027 and even $200 billion in 2028. More deals and more construction will make AI data centers and the Cursor enterprise AI business the main business for SpaceX until AI data centers in space take over in 2028-2029.

SpaceX plans morning launch from Vandenberg Space Force Base

SpaceX is planning to launch a Falcon 9 rocket from Vandenberg Space Force Base on Sunday. The launch window is open between 7 a.m. and 11 a.m. The mission is expected to deliver 24 Starlink satellites into low-earth orbit. After stage separation, the first stage is expected to land on a droneship in the Pacific Ocean.

SpaceX's Starship flight hits most targets in pre-IPO test

STARBASE: SpaceX completed a largely successful test flight of its next-generation Starship rocket on Friday, deploying a clutch of mock satellites and executing a controlled splashdown in the Indian Ocean in a high-stakes debut of the newly upgraded vehicle as Elon Musk's company prepares to go public.

The latest uncrewed launch of Starship - designed to enable more frequent Starlink satellite launches and to send future NASA missions to the moon - achieved a key milestone for the vehicle following months of testing delays. The outcome could also boost investor confidence ahead of SpaceX's initial public offering next month, expected to be the largest in history.

Starship, which SpaceX has spent more than $15 billion developing as a fully reusable spacecraft, is critical to Musk's goals of cutting launch costs, expanding his Starlink business and pursuing ambitions ranging from deep-space exploration to orbital data centers - all factored into his targeted $1.75 trillion IPO valuation.

Friday's launch marked SpaceX's 12th test flight of a Starship prototype since 2023 and the first of its V3 iteration, a major upgrade of both the cruise vessel and its Super Heavy booster, as well as the first blast-off from a launch pad specially designed for the new rocket.

Meaningful step

SpaceX was counting on a successful test flight to reinforce its case that the largest and most powerful rocket ever flown is nearing commercial readiness after years of explosive setbacks and development delays. Friday's test appeared to have achieved most of its major objectives.

The towering vehicle, consisting of the upper-stage Starship astronaut vessel stacked atop a Super Heavy booster rocket, blasted off around 5:30 p.m. CT (2230 GMT) from SpaceX facilities in Starbase, Texas, on the Gulf of Mexico near Brownsville.

Minutes later, the two stages cleanly separated, leaving the Starship vehicle to soar on to its cruise phase despite the loss of one of its six engines, then release its simulated satellite payload before surviving a fiery atmospheric re-entry and splashdown. Its flight lasted just over an hour in all.

This is a Reuters story

Footage Of Elon Musk's SpaceX Rocket Exploding Into Massive Fireball Has Everyone Saying The Same Thing [VIDEO]

Elon Musk's SpaceX launched a rocket that came crashing down into the Indian Ocean shortly after, and it caused a massive stir online. TMZ shared a clip of the whole thing, which was live on the CEO's Starlink portal on Friday. "Talk about a "holy s***" moment for Elon Musk ... his SpaceX rocket exploded in a massive fireball -- and the thing is, it was all planned out that way!" they wrote. You can see the footage below: Elon Musk Insists Explosion Was Not Unexpected As mentioned above, the clip got people talking online. "You say holy s**t when something occurs that you had literally planned to occur?" one asked. "Like last Tuesday when my neighbor's r***rded kid when he s**t in the street then tasted it and got sick?" "Yep kill humanity, the fish, the animals, the planet, every living thing and we will be all set for Mars where there will be more death..... Unbelievable. In Ohio it looks like the sky is crying😰," another wrote. ""planned explosion" is such a comforting phrase. like "controlled crash" or "intentional divorce"," one comment read. "Why the cheering ???? if this was a real flight with real people they will be dead," someone asked. "That's how test flights are supposed to work..." someone else pointed out. An official release noted that Elon Musk's SpaceX launched its most powerful Starship on a test flight. The spacecraft experienced some engine trouble after blasting off from Texas, but reached the Indian Ocean, as planned. SpaceX also insisted that the explosion was expected.

![Footage Of Elon Musk's SpaceX Rocket Exploding Into Massive Fireball Has Everyone Saying The Same Thing [VIDEO]](https://www.totalprosports.com/wp-content/uploads/2026/05/SpaceX-crash-scaled.jpg)

Telegram's Durov piles on as Meta, Discord fight Texas lawsuits - Cryptopolitan

Texas also sued Discord on the same day for allegedly failing to protect children, leading to cases of sexual assault and suicide. WhatsApp and its parent company Meta (NASDAQ: META) have received lawsuits from the state of Texas regarding their privacy encryption. The company has been accused of violating the state's Deceptive Trade Practices Act. Telegram's founder, Pavel Durov did not miss the opportunity to pile on, writing the latest chapter in the long history of the rivalry with Meta's CEO Mark Zuckerberg. As Cryptopolitan reported in January, Durov joined Musk in punching holes in Meta's case when an international group of plaintiffs claimed in a lawsuit filed in San Francisco that the Zuckerberg-led firm's claims about WhatsApp's chat encryption were false. What did Pavel Durov say about Meta's lawsuit? The rivalry between Telegram founder Pavel Durov and Meta CEO Mark Zuckerberg was reignited this week after Texas Attorney General Ken Paxton sued Meta and WhatsApp for allegedly lying about message encryption. The lawsuit, filed in Harrison County court, accuses Meta Platforms Inc. and its subsidiary WhatsApp LLC of violating the Texas Deceptive Trade Practices Act (DTPA) by falsely promising users that their messages are protected by end-to-end encryption. Pavel Durov, the founder of Telegram, responded to the lawsuit calling WhatsApp's encryption a "giant fraud" in a post on X (formerly Twitter). He wrote that WhatsApp employees have access to "virtually all" personal messages sent. "We now understand what WhatsApp's creator meant when he said 'I sell my users' privacy," Durov said in a Telegram channel post. He also used the moment to encourage users to switch to Telegram instead. Durov has repeatedly accused Meta of mishandling user data since it bought WhatsApp for $19 billion in 2014. 
Just weeks before the Texas lawsuit, Durov had already warned that WhatsApp's encryption could be "the biggest scam in history," claiming that WhatsApp reads user messages and shares data with third parties. Why did Texas also sue Discord? Attorney General Paxton, who is also running a U.S. Senate campaign, had time to aim at one more tech company operating in Texas. On the same day Meta's lawsuit was filed, Paxton filed a separate suit against Discord, accusing the platform of failing to protect children from predators. Paxton's lawsuit states that Discord lied to the public by claiming that safety was at the core of their operation and "fully integrated" into the company's design process. The Attorney General's office claims that Discord rigged its settings to make every account set toward "maximum exposure" by default. They also claim that the company used unpaid volunteers for important safety functions and built a platform that federal prosecutors have described as "a hunting ground" for predators to find and manipulate children. The lawsuit mentions specific incidents that happened in Texas to strengthen the case. Like one incident in which a 13-year-old girl was sexually assaulted in her home by a predator who groomed her on Discord over several years, and another in which a 15-year-old committed suicide after being forced to produce explicit material through Discord's messaging system. Paxton started investigating Discord in October 2025 after it was revealed that the platform was used by the assassin who murdered conservative commentator Charlie Kirk. There were also reports that the platform exposed minors to sexual exploitation. Nevada, Indiana, and New Jersey have also recently sued Discord, while Florida recently announced its own investigation in March 2026. Paxton wants the court to force Discord to change its default safety settings to be set to maximum protection for new accounts. 
He also wants age verification under the Texas SCOPE Act and civil penalties of up to $10,000 per violation of the DTPA. Meta has so far denied all allegations in the WhatsApp lawsuit and pledged to fight it, with a spokesperson reiterating that the company cannot access people's encrypted communications and that any suggestion to the contrary is false. A Discord spokesperson said the platform has robust safety features and that the characterizations in the lawsuit do not reflect it.

From Meta to SpaceX: How dual-class shares keep founders in control

NEW YORK, May 24 -- The dual-class share structure outlined in SpaceX's IPO filing, which grants CEO Elon Musk outsized control, has revived one of Wall Street's oldest debates -- that of corporate governance. While such structures are hardly unusual in corporate America, particularly among founder-led companies, few issues are so fiercely criticised by governance watchdogs. Supporters argue visionary founders should be insulated from short-term market pressures, while critics warn that concentrating power in the hands of insiders weakens accountability. For many investors, Musk's track record of building companies and his enormous public following make the governance concerns feel like a price worth paying as long as returns follow. Some others, however, have questioned whether Musk can devote sufficient time and focus to several of his high-profile ventures. What is the dual-class share structure? Simply put, shares are divided into two classes under this framework. One class gives its holders greater voting power than the other, and these high-vote shares are typically held by founders or insiders. In SpaceX's case, Class B shares carry 10 votes per share, while Class A shares carry one vote each. Musk will own a majority of the Class B shares after the share sale, giving him significant control over shareholder decisions. Why do critics hate it? Critics say "one share, one vote" is the cornerstone of shareholder democracy, and any corporate make-up that gives one class of investors more rights than others, even if they hold the same number of shares, concentrates power in the hands of a few. "Over time, this founder-knows-best approach can entrench management and blindside executives to a need for change in strategy," according to the Council of Institutional Investors, a major investor group which has long fought against dual class shares. Do multiple share classes impact stock performance? 
A 2024 study published in the Harvard Law School Forum on Corporate Governance showed that on average companies in the Russell 3000 index with dual or multi-class share structures outperformed those with a single share class, over five and 10-year periods. However, a separate paper from the European Corporate Governance Institute found that the valuation premium enjoyed by dual-class firms tends to diminish over time, with such companies trading at a discount to their single-class peers roughly seven to nine years after their IPOs. Do investors care? "Most investors have thrown out the idea that voting rights are valuable anymore, which is unfortunate," said Brian Jacobsen, chief economic strategist at Annex Wealth Management. Besides, for companies such as SpaceX that are built around a popular founder, investors may be even more willing to trade voting rights for exposure to the business. "Some investors may view that as a serious governance trade-off, while others may decide it is the price of access to one of the few companies with SpaceX's scale and positioning," said Lukas Muehlbauer, IPOX research associate. Which other companies have dual class shares? Google parent Alphabet, Meta Platforms, Palantir Technologies, Strategy and Berkshire Hathaway are among companies with two or more classes of shares. -- Reuters
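How a 10-to-1 vote ratio turns a minority economic stake into voting control can be made concrete with a little arithmetic. The Python sketch below is an illustration under stated assumptions: the function names and the toy share counts are hypothetical, and the only real inputs are the vote weights (one vote per Class A share, 10 per Class B share) and the rounded ownership percentages reported in coverage of the SpaceX filing (12.3 per cent of Class A, 93.6 per cent of Class B, 85.1 per cent of total votes). Actual share counts are not given in the reporting, so the second function only backs out an approximate ratio.

```python
def voting_share(holder_a: float, holder_b: float,
                 total_a: float, total_b: float,
                 votes_a: int = 1, votes_b: int = 10) -> float:
    """Fraction of all votes controlled by a holder of Class A and B shares."""
    holder_votes = holder_a * votes_a + holder_b * votes_b
    total_votes = total_a * votes_a + total_b * votes_b
    return holder_votes / total_votes


def implied_b_per_a(frac_a: float, frac_b: float, voting_power: float,
                    votes_a: int = 1, votes_b: int = 10) -> float:
    """Class B shares outstanding per Class A share, implied by a holder's
    fraction of each class and their combined voting power.

    Solves  frac_a*votes_a*A + frac_b*votes_b*B
            = voting_power * (votes_a*A + votes_b*B)   for B / A.
    """
    return (votes_a * (voting_power - frac_a)) / (votes_b * (frac_b - voting_power))


# Toy example with hypothetical share counts: an insider holding 60 of 100
# Class B shares and no Class A shares, against 1,000 Class A shares.
toy_votes = voting_share(holder_a=0, holder_b=60, total_a=1000, total_b=100)
toy_economic = 60 / (1000 + 100)

# Ratio of Class B to Class A shares implied by the reported percentages
# (rounded inputs, so the result is approximate).
ratio = implied_b_per_a(frac_a=0.123, frac_b=0.936, voting_power=0.851)

if __name__ == "__main__":
    print(f"Toy insider: {toy_economic:.1%} of shares, {toy_votes:.1%} of votes")
    print(f"Implied Class B shares per Class A share: {ratio:.2f}")
```

In the toy case the insider owns about 5 per cent of the shares but controls 30 per cent of the votes. Applied to the reported percentages, the same algebra suggests somewhat fewer Class B than Class A shares outstanding, though that ratio inherits the rounding in the published figures.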

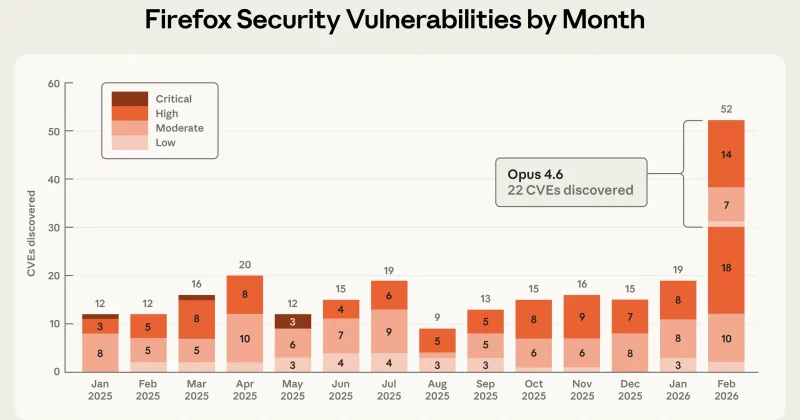

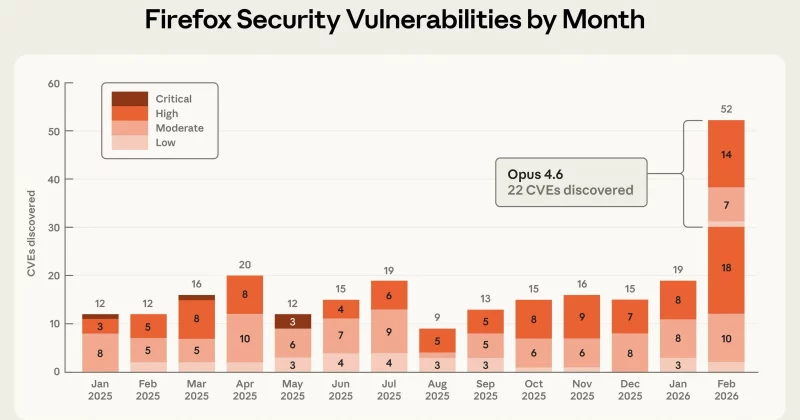

Anthropic's Project Glasswing uncovers over 10,000 software vulnerabilities using AI

Claude Mythos Preview found thousands of zero-day flaws in critical software, including bugs that went undetected for decades, but fewer than 100 have been patched so far. Anthropic's restricted AI model just did in weeks what human security researchers couldn't do in decades. Project Glasswing, the company's cybersecurity coalition, has collectively identified over 10,000 high- and critical-severity software vulnerabilities, including thousands of zero-day flaws that had been lurking undetected in widely used systems for years. Among the discoveries: a 27-year-old vulnerability in OpenBSD and a 16-year-old bug in FFmpeg. Both had survived every prior round of human code review and automated testing. Anthropic launched Project Glasswing on April 7, 2026, built around its unreleased frontier model called Claude Mythos Preview. The core idea is straightforward: point an extraordinarily capable AI at critical software infrastructure and let it hunt for security flaws that humans keep missing. Claude Mythos Preview is so good at finding and exploiting vulnerabilities autonomously that Anthropic decided not to release it publicly. Instead, they formed a coalition of technology and infrastructure companies that get exclusive access to the model strictly for defensive security work. Over 40 organizations now participate in the initiative. Anthropic has committed up to $100 million in model usage credits to support the effort, along with roughly $4 million earmarked for open-source security enhancements. The update released around May 22-23, 2026, is where the numbers get staggering. Partners collectively reported those 10,000-plus high- and critical-severity vulnerabilities, with thousands classified as zero-days, meaning they were previously unknown to the software maintainers and the broader security community. Finding vulnerabilities is one thing. Fixing them is another problem entirely. 
Of the thousands of validated high-severity findings, fewer than 100 confirmed patches have been deployed so far. The AI can discover flaws at a pace that dramatically outstrips the industry's ability to actually remediate them. The zero-day discoveries are particularly revealing. A 27-year-old flaw in OpenBSD means that for nearly three decades, every security audit, every fuzzing campaign, every pair of expert eyes that reviewed that codebase missed something that an AI model caught. The 16-year-old FFmpeg bug tells a similar story. These aren't obscure projects. OpenBSD is renowned for its security focus, and FFmpeg is embedded in countless media applications worldwide. The $100 million in usage credits that Anthropic committed to the initiative signals how seriously major AI labs are taking the dual-use nature of their models. By restricting Claude Mythos Preview to a vetted coalition rather than releasing it broadly, Anthropic gets to demonstrate responsible deployment while building deep relationships with enterprise security teams.

Anthropic's Project Glasswing uncovers over 10,000 software vulnerabilities using Claude AI

The AI lab's unreleased Claude Mythos Preview model found decades-old zero-day flaws in major operating systems and browsers in just one month. Anthropic just demonstrated what happens when you point a sufficiently powerful AI model at the world's software and tell it to find every crack in the foundation. The answer: more than 10,000 high- and critical-severity vulnerabilities, many of them previously unknown zero-days lurking in major operating systems and web browsers. Project Glasswing, the AI safety company's proactive defense initiative, launched on April 7, 2026. By May 22, roughly six weeks later, the project's Claude Mythos Preview model had already surfaced a staggering volume of security flaws that human researchers might have taken years to catalog. The vulnerabilities weren't trivial edge cases buried in obscure software libraries. They affected foundational infrastructure: the operating systems and browsers that billions of people use daily. Among the discoveries were a 27-year-old vulnerability in OpenBSD, a system long considered one of the most security-conscious operating systems in existence, and a 16-year-old flaw in FFmpeg, the open-source multimedia framework that quietly powers video processing across countless applications and platforms. The majority of the discovered flaws were classified as zero-days, meaning they were previously unknown to the software vendors and security community. Claude Mythos Preview demonstrated what Anthropic described as remarkable autonomous capabilities. The model didn't just identify individual vulnerabilities in isolation. It was able to chain them together and develop working exploits, a process that typically requires elite human security researchers working over extended periods. Anthropic has restricted access to Claude Mythos Preview, making it available only to vetted partners rather than releasing it broadly. 
Those partners include AWS, Apple, Google, and Microsoft, combining resources to secure critical infrastructure before the discovered vulnerabilities can be exploited by malicious actors. Project Glasswing has surfaced a reality that the cybersecurity industry has been nervously anticipating: the rate at which AI can find vulnerabilities now dramatically exceeds the speed at which humans can patch and verify fixes. Software vendors typically operate on disclosure timelines measured in weeks or months. When a single AI model generates thousands of critical findings in that same window, the entire remediation pipeline becomes a bottleneck.

Historic SpaceX IPO: Could Investing Propel Your Savings and Make Musk a Trillionaire? - Internewscast Journal

The forthcoming stock market debut of SpaceX is being hailed as potentially the most monumental in history, with anticipation running high. Founder Elon Musk stands on the brink of becoming the world's first trillionaire, as his innovative company prepares to go public in the United States next month. Already established as the wealthiest individual globally, Musk has recently unveiled details of his enterprise that encompasses rockets, satellites, and artificial intelligence. This disclosure aims to sway both institutional investors and everyday shareholders to invest in his forward-thinking company, all while he maintains a firm grip on its control. At 54, Musk is renowned for defying odds, and his latest endeavor is no exception. If successful, SpaceX could achieve a valuation of $1.75 trillion, shattering the previous record for an initial public offering held by Saudi Aramco, the oil behemoth. However, Musk's grand vision extends far beyond these financial milestones. Regulatory filings have disclosed a series of bold plans, including ventures into space tourism, asteroid mining, lunar cargo transportation, and the establishment of a Mars colony housing at least one million people. So should you climb on board and invest in what promises to be the investment ride of a lifetime - one that could either reach for the stars or crash and burn? Details of Musk's extraordinary plans are contained in the SpaceX prospectus - a warts-and-all legal document that spells out the opportunities and risks for potential investors. Significantly, it begins with a quote from Musk himself outlining his messianic vision. 'You want to wake up in the morning and think the future is going to be great - and that's what being a space-faring civilisation is all about,' he says. 'It's about believing in the future and thinking that the future will be better than the past. And I can't think of anything more exciting than going out there and being among the stars.' 
The filing doesn't yet say how much new money SpaceX wants, give a date for when the shares start trading or at what price the new shares will be valued. These details will be revealed next month but analysts reckon Musk is seeking up to $75billion from outside investors to bankroll his extra-terrestrial dreams - or, as some might say, fantasies. To raise such a huge amount involves telling an investment story like no other - and SpaceX doesn't disappoint. At times it borders on science fiction. Musk believes relying on a 'single planetary home... carries an existential risk'. He says: 'By moving beyond the only home we have ever known, we ensure species-level redundancy and the light of consciousness will not be tied to a single planet... We do not want humans to have to have the same fate as dinosaurs.' SpaceX claims to have identified a potential market worth $28.5trn which is 'the largest... in human history,' according to the filing. The sum is not far short of the entire annual output of the US economy - and for good measure this doesn't even include opportunities that may arise with China or Russia. 'We believe that space represents the largest economic frontier in human history,' the filing states. 'Our innovations and technological advancements are redefining industries on Earth, while we aim to create new ones on the Moon, Mars and beyond.' What's most surprising is that the vast majority of this potential revenue would come from its AI unit - a recently formed business that SpaceX admits 'is still being fully integrated', 'operates in a rapidly evolving industry' and is 'subject to significant... risks'. Founded in 2002, SpaceX is best known as a rocket company. Since 2020 its Starlink internet-from-space business has seen almost 10,000 satellites launched by a fleet of reuseable rockets and spacecraft into low-earth orbit to cover the globe and substantially cut mobile 'dead-zones'. Starlink now has more than ten million subscribers in 163 countries. 
In 2025 it generated income of $4.4billion - more than double the previous year - on sales 50pc higher at $11.4billion. These formed the bulk of SpaceX's total revenues of $18.7billion last year. Its space unit, which includes a contract with Nasa to re-supply the International Space Station, makes up most of the rest. With the top line growing by around a third in each of the last two years, SpaceX is on track to become a $24billion turnover company by the end of this year. But its big bet on AI has plunged SpaceX back into the red after making a $791million profit the previous year. It made a net loss of $4.9billion last year after investing heavily in a sector where SpaceX lags market leaders such as OpenAI, Anthropic and Google, taking total accumulated losses to more than $41billion. SpaceX recently bought xAI, Musk's start-up best known for its 'truth-seeking' Grok chatbot, taking its total debts to $29billion. AI already devours the lion's share of spending on SpaceX's networks, with almost $8billion gobbled up in the first three months of this year alone. At least some of that will be recouped after Anthropic agreed to pay SpaceX $15billion a year between now and May 2029 to lease space in both of its flagship Colossus data centres that power the AI revolution. Musk's big idea is to relocate Earth-bound data centres into orbit, where they will be powered by 'the virtually limitless power of the Sun' and cooled by the vast emptiness of space. Orbital AI compute satellites are expected to be launched as early as 2028. 'We have unmatched launch capabilities to enable deployment at scale,' the prospectus contends, while admitting orbital AI compute 'is an incredibly difficult technical challenge'. It is not the only one. The obligatory 'risk factors' section of the 277-page filing runs to 37 pages.
Warnings include: 'Manufacturing, testing and launching rockets, satellites, and spacecraft, including our efforts to reuse rockets and spacecraft, involve inherent risks that could result in human injury or death.' Or as Sir Richard Branson famously put it after his Virgin Galactic rocket crashed on a test flight over the Mojave desert, killing one of its pilots: 'Space is hard.' 'We are highly dependent on the continued service and performance of Mr Musk, whose leadership, vision and expertise are critical to the development of our technologies and the execution of our business strategy. Mr Musk has been, and continues to be, a driving force behind our growth, innovation and operational success,' it enthuses. But the filing goes on to warn: 'The loss of Mr Musk, whether due to death, disability or otherwise, or his inability or unwillingness to continue in his current roles, could significantly disrupt our management structure, adversely affect our ability to execute our strategic plans, and negatively impact our reputation and relationships with customers, partners and other stakeholders.' The world's richest man will continue to run Tesla, where his 20pc stake in the electric carmaker is valued at $260billion, although experts think it is only a matter of time before it is folded into SpaceX. Even that holding pales by comparison with how much Musk's SpaceX stake could be worth in next month's flotation. The Bloomberg Billionaires Index currently values SpaceX at $1 trillion, based on the value of xAI in a recent funding round before the two companies were combined by Musk. He already owns and controls the company through a dual-class share structure that gives him 85pc of the combined voting power - meaning Musk cannot be removed as chairman or chief executive. 
His overall net worth would rise to an incredible $1.1trillion if SpaceX is priced at $2trillion, according to financial data firm Bloomberg. Even at a valuation of $1.75trillion, Musk would still reach the unprecedented trillionaire milestone when combined with his Tesla holding and stakes in other companies. And there's more. His SpaceX stake includes an option on one billion super-voting B shares that pay out if SpaceX hits various stock market valuation milestones from $500billion up to $7.5trillion - and the company puts at least one million people on Mars. What's clear is that barring a last-minute mishap this flotation will cement Musk's grip on SpaceX and his position as the world's richest ever individual. His legion of adoring followers would have it no other way. While Elon Musk is set to become the world's first trillionaire there could be rich rewards too for private investors. But experts warn there is no guarantee SpaceX will go to infinity and beyond. Susannah Streeter of the Wealth Club says that the SpaceX initial public offering is part of a Musk master plan to build 'a mega artificial intelligence (AI) conglomerate' with the scale to rival Silicon Valley giants such as Meta, owner of Instagram and WhatsApp, and Microsoft. But she warns that 'gravity could pull valuations back down to earth'. Musk wants the support of private investors for the flotation - which means that 30 per cent of the shares may be reserved for ordinary people who want to hitch a ride into space. You can register an interest in applying for SpaceX shares in next month's flotation on investment platforms, like AJ Bell, Interactive Investor and Hargreaves Lansdown. These platforms will be able to send out alerts in coming weeks, but they cannot, at present, actively promote the offer to their users due to the extremely strict rules on such matters set by the Securities and Exchange Commission, the US financial watchdog. 
Fortunately for their savers, some British stock market funds were early-stage investors in SpaceX. These funds may opt to hold onto the shares, rather than cash in their stakes at the time of the flotation. You could opt to put some cash in one or all of these funds. But again there can be no certainty of a bumper payout, and you would also be exposing yourself to risk through the other shares owned by these funds. SpaceX is a holding at four Baillie Gifford funds: Scottish Mortgage, Baillie Gifford US Growth, Edinburgh Worldwide and Schiehallion. SpaceX is the largest holding at Scottish Mortgage, which first put capital behind the rocket company in 2018, providing $200million. Despite its solid-sounding name, this £12.8billion FTSE-100 investment trust is a high-risk tech vehicle, with bets on businesses such as China's ByteDance, the owner of TikTok. As a result of its exposure to SpaceX, Scottish Mortgage is seen as a 'proxy', that is an easy way to gain access to the SpaceX offer. But the trust's managers have been keen to tone down expectations, saying they value SpaceX at $1.25trillion, rather than the $1.75trillion that Musk prefers. SpaceX is the second largest holding at Schiehallion, which also has a slice of Anthropic. But you should only contemplate committing your cash to this trust if you have strong nerves and an appetite for a wager. Why? Because it backs private companies, that is those that are yet to be quoted on the stock market. Some of these companies may turn into stock market stars, but others could fall to earth which makes Schiehallion exciting but also inherently more risky. RIT Capital Partners, another investment trust, snapped up a stake in SpaceX in 2024. Private companies make up one third of this trust's portfolio, suggesting it probably suits only those with more of a taste for a gamble. You may not be a fan of Musk for political or ideological reasons and may therefore be minded to steer clear of SpaceX. 
But whatever your view, you may find yourself an unwitting investor. The company is set to join the Nasdaq index. Your pension fund may automatically invest in passive funds that track this index. And if you have money in global or US general funds, they may take the same approach.

Video. SpaceX Starship explodes in Indian Ocean after splashdown

SpaceX launched its upgraded Starship V3 rocket from Starbase, Texas, on Friday in the latest test of the world's largest and most powerful rocket system. Video showed the spacecraft descending into the Indian Ocean before exploding after splashdown, a result SpaceX said was planned. The roughly hourlong flight achieved most of its key objectives, including the deployment of mock Starlink satellites, although both rocket stages experienced engine failures during the mission. Crowds gathered in Texas cheered as the launch successfully lifted off after a one-day delay caused by a hydraulic issue at the launch tower.

SpaceX: 10,000 launches annually

Chris Forrester -- Hardly mentioned in the huge SpaceX IPO Prospectus published last Wednesday (May 20) was information repeated by the company's President & COO Gwynne Shotwell, and confirmed by FAA Administrator Bryan Bedford, that SpaceX wants to be launching 10,000 rockets annually within 5 years. Bedford said he met with Shotwell, who told him about the company's ambitious goals. SpaceX conducted 170 launches in 2025 deploying about 2,500 satellites. However, Bedford told journalists after an FAA meeting with the US Senate Committee on Commerce, Science and Transportation, on May 19 that the FAA would need to see greater reliability before approving such a goal. "We need to see a lot more reliability," Bedford told reporters after the forum. Bedford told journalists that he had had a "very frank" discussion with Shotwell, and reminded journalists that Donald Trump wants to get to the Moon before 2028 [and ahead of the US general elections due in 2028]: "To do that, we are going to have to work with industry to unlock that innovation," Bedford added. The 10,000 launch target sounds crazily ambitious, but when you mix the daily routine of building and replacing Starlink satellites, adding Version-3 satellites, plus launching Dragon cargo and astronaut missions to the International Space Station, as well as building up orbital data centres (a promised 1 million craft) and not forgetting Lunar ambitions and the obligations that would be placed on SpaceX for human support on the Moon, the target seems much more reasonable. Then there's Mars. Whether the world will see any activity on Musk's oft-repeated ultimate Mars ambition, which is to establish a self-sustaining, million-person civilization on the Red Planet to ensure humanity becomes a multi-planetary species, is currently debatable. But powered by SpaceX's massive, fully reusable Starship rockets, this long-term vision spans several interconnected phases, not least first getting to the Moon. 
However, first Musk must build that city on the Moon. Musk is on record as backing the building of "a self-growing city on the Moon," arguing that it could be achieved in less than a decade, compared with more than 20 years for a similar plan for Mars. Remember, it is only possible to travel to Mars when the planets align every 26 months (a six-month trip time), whereas rockets can launch to the Moon every 10 days (2-day trip time). "Musk's ultimate goal is to get civilization to Mars. It's going to be very expensive, and as a soon-to-be public company, SpaceX needs to appease shareholders," said Justus Parmar, CEO of Fortuna Investments, a venture capital firm invested in SpaceX, speaking before the IPO. But the 10,000 rocket launches would boil down to a (claimed) launch cadence of a flight almost every hour! It is a spectacular ambition but never underestimate the ability of SpaceX - and Shotwell's team - to achieve what only a few years ago were considered impossible targets. SpaceX currently flies about 160 orbital missions a year. It completed 154 launches in 2025 and hit 50 by late April 2026. The entire world managed about 250 launches during 2025. FAA Deputy Associate Administrator Minh Nguyen, speaking at the ASCEND 2026 conference in Washington DC on May 19, said that the agency expects "another 1,000 launches and re-entries, likely in the next four or five years." The FAA has approved SpaceX for a combined 195 launches per year across its four currently active sites. Starbase in Texas holds a 25-launch annual cap after the FAA raised it from just five in May 2025. It is no wonder that Shotwell's team is looking for additional launch sites. The current position is that Kennedy Space Center's Launch Complex 39A was cleared for 44 Starship launches per year in a February 2026 environmental impact statement. Two new Starship pads at Cape Canaveral Space Force Station can handle 76 annually. 
Vandenberg in California was recently approved for 50 Falcon 9 launches, up from 36. But consider this: A standard Boeing or Airbus intercontinental jet can see passengers de-planed, and the aircraft turned around, fuelled, catering on board and passengers in their seats within about an hour when the pressure is on! Low-cost airlines in Europe can do the same in nearer 45 minutes. Indeed, SpaceX is no longer merely dominant in space. It has built something closer to a private monopoly on low-Earth orbit - and its IPO Prospectus lays out plans to entrench that position by orders of magnitude. "You want to wake up in the morning and think the future is going to be great -- and that's what being a space-faring civilization is all about. It's about believing in the future and thinking that the future will be better than the past. And I can't think of anything more exciting than going out there and being among the stars," states Musk. The IPO explains: "[We] identify and create new trillion-dollar market opportunities. We focus on market opportunities that are useful for humanity and that present trillion-dollar opportunities, including global broadband and mobile connectivity for consumers, enterprises, and governments; and AI applications and computational infrastructure. We prioritize opportunities where structural inefficiencies or legacy technological limitations have constrained supply." Three out of every four active manoeuvrable satellites in orbit are now SpaceX's. So are roughly two-thirds of all operational satellites of any kind. The Elon Musk-controlled company has launched some 80% of all mass to orbit globally every year since 2023. As at last week this equates to 9,600 active Starlink satellites in orbit. SpaceX generally, including Starlink and now its X, Grok and xAI segments, are looking like spectacularly successful businesses. 
Time will tell whether the investing public shares that view but SpaceX - in the IPO - pulls no punches saying that it will not be paying shareholders a dividend for the foreseeable future.
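
The launch figures quoted in the piece lend themselves to a quick back-of-envelope check. The Python sketch below assumes a 365-day year with launches spread evenly around the clock, and assumes that the four site caps quoted in the article (Starbase, Launch Complex 39A, Cape Canaveral and Vandenberg) are the ones behind the FAA's combined figure of 195. The variable names are illustrative only.

```python
# Back-of-envelope check of the launch cadence figures quoted in the article.
# Assumption: a 365-day year, launches spread evenly over every hour.
HOURS_PER_YEAR = 365 * 24

target_launches = 10_000  # SpaceX's reported annual ambition

launches_per_hour = target_launches / HOURS_PER_YEAR
minutes_between = 60 / launches_per_hour

# Annual caps per site as quoted in the article (assumed to be the four
# sites that make up the FAA's combined approval).
site_caps = {
    "Starbase": 25,
    "Kennedy LC-39A": 44,
    "Cape Canaveral SFS": 76,
    "Vandenberg": 50,
}
total_cap = sum(site_caps.values())

# How many times larger the target is than today's approved total.
scale_up = target_launches / total_cap

print(f"Launches per hour: {launches_per_hour:.2f}")
print(f"Minutes between launches: {minutes_between:.1f}")
print(f"Combined approved launches per year: {total_cap}")
print(f"Target as a multiple of approved total: {scale_up:.1f}x")
```

On these assumptions the target works out to a little over one launch an hour, or roughly 51 times the currently approved annual total.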