News & Updates

The latest news and updates from companies in the WLTH portfolio.

OpenAI, Anthropic, Google unite to combat AI model copying in China

The firms are sharing information through the Frontier Model Forum, an industry non-profit that the three tech firms founded with Microsoft Corp. in 2023, to detect so-called adversarial distillation attempts that violate their terms of service, according to people familiar with the matter. The rare collaboration underscores the severity of a concern raised by US AI firms that some users, especially in China, are creating imitation versions of their products that could undercut them on price and siphon away customers while posing a national security risk. US officials have estimated that unauthorised distillation costs Silicon Valley labs billions of dollars in annual profit, according to a person familiar with the findings who described them on the condition of anonymity. OpenAI confirmed it's part of the information sharing effort on adversarial distillation through the Frontier Model Forum and pointed to a recent memo it sent to Congress on the practice, where it accused Chinese firm DeepSeek of trying to "free-ride on the capabilities developed by OpenAI and other US frontier labs". Google, Anthropic, and the Frontier Model Forum declined to comment. Distillation is a technique where an older "teacher" AI model is used to train a newer "student" model that replicates the capabilities of the earlier system -- often at a much lower cost than producing an original model from scratch. Some forms of distillation are widely accepted and even encouraged by AI labs, such as when companies create smaller, more efficient versions of their own models, or allow outside developers to use distillation to build non-competitive technology. Yet distillation has been controversial when used by third parties -- particularly in adversary nations like China or Russia -- to replicate proprietary work without authorisation.
Leading US AI labs have warned that foreign adversaries could use the technique to develop AI models stripped of safety guardrails, such as limits that would prevent users from creating a deadly pathogen. Most models made by Chinese labs are open weight, meaning that parts of the underlying AI system are publicly available for users to freely download and run on their own platforms, and therefore cheaper to use. That poses an economic challenge for US AI companies that have kept their models proprietary, betting that customers will pay for access to their products and help offset the hundreds of billions of dollars they've spent on data centres and other infrastructure. Distillation first drew significant scrutiny in January 2025 in the weeks after DeepSeek's surprise release of the R1 reasoning model that took the AI world by storm. Soon after, Microsoft and OpenAI investigated whether the Chinese startup had improperly exfiltrated large amounts of data from the US firm's models to create R1, Bloomberg previously reported. In February, OpenAI warned US lawmakers that DeepSeek had continued to use increasingly sophisticated tactics to extract results from US models, despite heightened efforts to prevent misuse of its products. OpenAI claimed in its memo to the House Select Committee on China that DeepSeek was relying on distillation to develop a new version of its breakthrough chatbot. Information-sharing by US AI companies about adversarial distillation echoes a standard practice in the cybersecurity industry, where firms regularly swap data on attacks and adversaries' tactics as a way to strengthen network defenses. By working together, the AI firms are similarly seeking to more effectively detect the practice, identify who's responsible and try to prevent unauthorized users from succeeding. Trump administration officials have signaled their openness to fostering information sharing among AI companies to rein in adversarial distillation. 
The AI Action Plan unveiled by President Donald Trump last year called for the creation of an information sharing and analysis center, in part for this purpose. For now, information sharing on distillation remains limited due to AI companies' uncertainty about what can be shared under existing antitrust guidance to counter the competitive threat from China, according to people familiar with the matter. The firms would benefit from greater clarity from the US government, the people said. Distillation has ranked as a top concern among American AI developers since DeepSeek rattled global markets in early 2025 with its R1 release. Highly capable open-source models continue to proliferate in China, and many in the industry are watching closely for a major upgrade to DeepSeek's model. Last year, Anthropic blocked Chinese-controlled companies from using its Claude chatbot model, and in February it identified three Chinese AI labs -- DeepSeek, Moonshot, and MiniMax -- as illicitly extracting the model's capabilities via distillation. This year, Anthropic said the threat "extends beyond any single company or region" and poses a national security risk, since distilled models often lack safety guardrails designed to prevent bad actors from using AI tools for malicious activities. Google has published a blog post saying it identified an increase in model extraction attempts. The three US AI labs have not yet provided evidence showing how much of China's model innovation is reliant on distillation, but they note that the prevalence of attacks can be measured based on volumes of large-scale data requests.

Anthropic revenue surges to USD 30 billion; Secures major TPU deal with Google and Broadcom

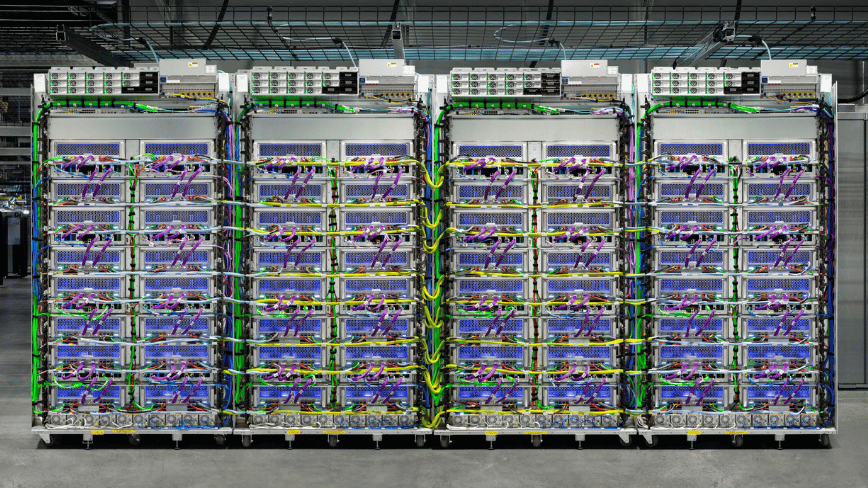

New Delhi [India], April 7 (ANI): Anthropic's run-rate revenue surpassed the USD 30 billion threshold, marking a substantial increase from the approximately USD 9 billion reported at the close of 2025, according to the company. 'Demand from Claude customers has accelerated in 2026. Our run-rate revenue has now surpassed $30 billion--up from approximately $9 billion at the end of 2025,' Anthropic said in a statement. The company noted that the surge in revenue followed an acceleration in demand from Claude customers throughout 2026. As per the company, the number of business clients spending over USD 1 million on an annualized basis doubled. While Anthropic reported 500 such customers during its Series G fundraising in February, 'today that number exceeds 1,000, doubling in less than two months.' This financial growth coincided with the signing of a new agreement with Google and Broadcom to secure multiple gigawatts of next-generation Tensor Processing Unit (TPU) capacity. 'This significant expansion of our compute infrastructure will power our frontier Claude models and help us serve extraordinary demand from customers worldwide,' the company said. 'This groundbreaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure: we are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development,' said Krishna Rao, CFO of Anthropic. 'We are making our most significant compute commitment to date to keep pace with our unprecedented growth.' The vast majority of the new compute capacity was slated for placement within the United States. This move represented an expansion of the company's November 2025 commitment to invest USD 50 billion in American computing infrastructure.
The arrangement also deepened existing collaborations with Google Cloud, building on TPU capacity increases previously announced in October. Despite the expanded deal with Google and Broadcom, Anthropic maintained its multi-platform hardware approach. The firm continued to train and run Claude on a range of AI hardware, including AWS Trainium, Google TPUs, and NVIDIA GPUs. The company stated that this diversity of platforms allowed for better performance and greater resilience for customers who depended on the model for critical work. 'Amazon remains our primary cloud provider and training partner, and we continue to work closely with AWS on Project Rainier,' the company said. Claude also maintained its position as the only frontier AI model available to customers across the three largest cloud platforms: Amazon Web Services (Bedrock), Google Cloud (Vertex AI), and Microsoft Azure (Foundry). (ANI)

Anthropic lands billion-dollar AI chip deals with Google, Broadcom

The deal will help Anthropic secure computing resources to meet AI demand

Anthropic has announced plans to invest hundreds of billions of dollars in chip deals with tech giant Google and Broadcom. Under the deal, the US-based AI company will spend the money on Google's chips and cloud services, aiming to secure critical computing resources to meet surging demand in the midst of the AI boom.

Taking to X, Anthropic announced a partnership with Google and Broadcom "for multiple gigawatts of next-generation TPU capacity, coming online starting in 2027, to train and serve frontier Claude models." Besides using Google's TPU, a chip that competes with Nvidia's GPU, Anthropic will also get access to the search giant's cloud services. Broadcom will deliver around 3.5GW of capacity on Google's hardware. In total, the deal will give the company access to close to 5GW of new computing capacity over the coming years.

In recent months, Anthropic executives have been racing to secure resources related to computing power to meet surging demand for coding agents, helping to fund and train them. "We are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development," said Krishna Rao, Anthropic's chief financial officer.

Broadcom also announced its intention to keep supplying custom TPUs for Google as part of a long-term agreement through 2031. The continued supply of chips will help Google boost sales of its in-house chips to compete with Nvidia.

Circular deals

Last year, OpenAI, Anthropic's rival, also struck deals with Broadcom, AMD, and Nvidia to expand AI tools and infrastructure. Google has also invested billions in Anthropic, giving it a 14 per cent stake as of March last year. In November, Anthropic committed to purchase $30 billion of additional capacity from Nvidia and Microsoft and spend $50 billion on new data centers in New York and Texas.

SpaceX IPO Details Release With June Roadshow Planned

The long-anticipated public debut of SpaceX is no longer a matter of speculation but of timing. According to recent reporting, the private aerospace giant is preparing for an initial public offering, with an early June roadshow now on the table. For years, founder Elon Musk resisted the idea of taking SpaceX public, citing the short-term pressures of quarterly earnings and the long arc required for interplanetary ambitions. So what changed? More importantly, what does this shift reveal -- not just about SpaceX, but about the broader relationship between innovation, capital markets, and the political economy shaping both? From its inception, SpaceX has operated as something of a paradox. It is a private company undertaking what are effectively public missions: satellite deployment, national security launches, and even NASA partnerships. It has disrupted legacy aerospace players while relying heavily on government contracts. It is both a symbol of free-market ingenuity and a beneficiary of federal spending. For years, Musk argued that remaining private insulated SpaceX from the distortions of public markets. And he was not wrong. Quarterly earnings expectations often force companies into incremental thinking, sacrificing long-term breakthroughs for short-term stock performance. That tension is especially acute for a company whose ultimate goal -- colonizing Mars -- is measured in decades, not quarters. Yet even a company as successful as SpaceX cannot escape the gravitational pull of capital markets forever. The sheer scale of its ambitions -- Starship development, global satellite networks via Starlink, and deep-space exploration -- requires funding on a level that private markets alone struggle to sustain indefinitely. The IPO, then, is not a betrayal of principle. It is a concession to reality. But let's ask the uncomfortable question: why now? The answer likely lies as much in Washington as in Hawthorne. 
The modern American economy is increasingly shaped by a fusion of government policy, regulatory frameworks, and corporate strategy. SpaceX operates at the center of that nexus. Federal contracts -- particularly through NASA and the Department of Defense -- have provided billions in revenue. At the same time, regulatory battles over satellite deployment and spectrum allocation have intensified. Starlink's global footprint places SpaceX squarely in the crosshairs of geopolitical tensions, from Ukraine to Taiwan. Going public may provide not just capital, but political insulation. A publicly traded SpaceX becomes less of a singularly controlled entity and more of a broadly held national asset. Institutional investors, pension funds, and retail shareholders all become stakeholders. In an era when political scrutiny often follows concentrated power, dispersing ownership can be a strategic advantage. In other words, the IPO is not merely financial -- it is defensive. Here is where the irony sharpens. For decades, progressives have argued that capitalism must be restrained, regulated, and redirected toward social goals. Yet it is precisely the discipline of markets -- competition, efficiency, and risk-taking -- that enabled SpaceX to succeed where government-run programs stagnated. NASA itself increasingly relies on private contractors like SpaceX because they deliver results faster and cheaper. The very institution that once symbolized state-driven innovation now outsources its most ambitious missions to a private firm. And now, that firm turns to public markets -- the same markets often maligned by the political left -- for its next phase of growth. The contradiction is difficult to ignore. If markets are so inherently flawed, why do even the most ambitious technological endeavors ultimately depend on them? SpaceX's IPO also signals something deeper: a cultural shift in how America approaches innovation. 
The 20th century was defined by large-scale, government-led projects -- the Manhattan Project, the Apollo program, the interstate highway system. These were collective efforts, driven by national urgency and centralized planning. The 21st century, by contrast, increasingly relies on private actors to achieve similar scale. Companies like SpaceX, Tesla, and others operate with a speed and flexibility that government agencies struggle to match. But this shift comes with trade-offs. Private companies are accountable to shareholders, not voters. Their priorities, while often aligned with national interests, are ultimately driven by profitability and strategic positioning. The IPO will only intensify that dynamic. Once SpaceX answers to Wall Street, its decisions will be scrutinized not just for their technological merit, but for their financial returns. Will Mars still matter if margins shrink? Will long-term exploration survive short-term market pressures? These are not hypothetical questions. They are the central tension of modern capitalism. There is also a philosophical layer to consider. Human exploration has always carried a moral dimension. From the biblical mandate in Genesis to "have dominion" over the earth, to the age of discovery, to the space race, exploration reflects a fundamental aspect of human nature: the desire to push beyond known boundaries. SpaceX embodies that impulse. Its mission is not merely commercial; it is civilizational. Yet when such a mission becomes entangled with market incentives, the risk is that higher purposes are subordinated to lower ones. Profit is not inherently immoral, but it is an insufficient guide for endeavors that shape the future of humanity. As Scripture reminds us, "Where there is no vision, the people perish" (Proverbs 29:18, KJV). The question facing SpaceX -- and its future shareholders -- is whether that vision can endure in a system that rewards immediate returns over distant horizons. 
For investors, the SpaceX IPO will undoubtedly be one of the most significant market events in years. Demand will be immense. The company's track record, combined with its dominance in both launch services and satellite internet, makes it a rare asset. But for citizens, the implications are broader. SpaceX is not just another tech company. It is a central player in national security, global communications, and the future of space exploration. Its transition to a public company raises questions about accountability, governance, and the role of private enterprise in shaping public outcomes. Will public ownership democratize its mission, or dilute it? Will market pressures sharpen its efficiency, or constrain its ambition? These are not questions with easy answers. But they are questions worth asking -- precisely because the stakes extend far beyond any stock ticker. In the end, SpaceX's IPO represents both an opportunity and a test. It is an opportunity to bring one of the most transformative companies of our time into the public sphere, allowing broader participation in its success. But it is also a test of whether America's market-driven model can sustain truly long-term, visionary projects. Can a company dedicated to reaching Mars remain faithful to that mission while answering to quarterly earnings calls? Or will the same forces that drive innovation ultimately constrain it? The answer will not come from Wall Street alone. It will come from the interplay of markets, policy, culture -- and the willingness to prioritize vision over convenience. Reaching the stars has always required sacrifice. The question now is what kind of sacrifice we are willing to make.

Elon Musk's shocking SpaceX IPO demand raises eyebrows

Elon Musk is making headlines again, this time for an unconventional demand tied to the highly anticipated SpaceX IPO. According to reports, Musk is requiring banks and advisers involved in the deal to purchase subscriptions to Grok, an AI chatbot developed by his company xAI, News.Az reports, citing foreign media. The SpaceX IPO is expected to be one of the largest in history, potentially valuing the company at over $1 trillion. That scale gives Musk significant leverage over Wall Street firms eager to participate. As part of the arrangement, banks, law firms, and auditors working on the IPO must commit to using Grok, turning a financial deal into a major boost for Musk's AI business. Some institutions have reportedly already agreed to spend tens of millions of dollars annually on the tool and are integrating it into their internal systems. Major banks including Morgan Stanley, Goldman Sachs, JPMorgan Chase, Bank of America, and Citigroup are reportedly serving as lead advisers on the IPO. Their willingness to accept the condition highlights just how competitive and lucrative the deal is expected to be. The strategy suggests Musk is using the massive visibility of the SpaceX listing to accelerate adoption of Grok in the enterprise world. By tying access to one of the most anticipated IPOs to AI subscriptions, Musk is effectively turning Wall Street demand into a growth engine for his broader tech ecosystem. The move reflects a growing trend where tech leaders leverage flagship events to promote adjacent businesses -- but Musk's approach stands out for its scale and boldness. If successful, it could reshape how major deals are negotiated in the future, blending finance and technology in new ways.

Anthropic revenue tops $30 billion as Claude AI demand surges

Anthropic's run-rate revenue surpassed the USD 30 billion threshold, marking a substantial increase from the approximately USD 9 billion reported at the close of 2025, according to the company. "Demand from Claude customers has accelerated in 2026. Our run-rate revenue has now surpassed $30 billion--up from approximately $9 billion at the end of 2025," Anthropic said in a statement. The company noted that the surge in revenue followed an acceleration in demand from Claude customers throughout 2026. As per the company, the number of business clients spending over USD 1 million on an annualized basis doubled. While Anthropic reported 500 such customers during its Series G fundraising in February, "today that number exceeds 1,000, doubling in less than two months."

Partnership with Google, Broadcom to expand compute capacity

This financial growth coincided with the signing of a new agreement with Google and Broadcom to secure multiple gigawatts of next-generation Tensor Processing Unit (TPU) capacity. "This significant expansion of our compute infrastructure will power our frontier Claude models and help us serve extraordinary demand from customers worldwide," Anthropic said in a statement.

Massive infrastructure push to support AI growth

"This ground breaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure: we are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development," said Krishna Rao, CFO of Anthropic. "We are making our most significant compute commitment to date to keep pace with our unprecedented growth."

US investment expands; multi-cloud strategy continues

The vast majority of the new compute capacity was slated for placement within the United States. This move represented an expansion of the company's November 2025 commitment to invest USD 50 billion in American computing infrastructure. The arrangement also deepened existing collaborations with Google Cloud, building on TPU capacity increases previously announced in October. Despite the expanded deal with Google and Broadcom, Anthropic maintained its multi-platform hardware approach. The firm continued to train and run Claude on a range of AI hardware, including AWS Trainium, Google TPUs, and NVIDIA GPUs. The company stated that this diversity of platforms allowed for better performance and greater resilience for customers who depended on the model for critical work. "Amazon remains our primary cloud provider and training partner, and we continue to work closely with AWS on Project Rainier," the company said. Claude also maintained its position as the only frontier AI model available to customers across the three largest cloud platforms: Amazon Web Services (Bedrock), Google Cloud (Vertex AI), and Microsoft Azure (Foundry).

Published on April 7, 2026

Anthropic revenue surges to USD 30 billion; Secures major TPU deal with Google and Broadcom

New Delhi [India], April 7 (ANI): Anthropic's run-rate revenue surpassed the USD 30 billion threshold, marking a substantial increase from the approximately USD 9 billion reported at the close of 2025, according to the company. 'Demand from Claude customers has accelerated in 2026. Our run-rate revenue has now surpassed $30 billion--up from approximately $9 billion at the end of 2025,' Anthropic said in a statement. The company noted that the surge in revenue followed an acceleration in demand from Claude customers throughout 2026. As per the company, the number of business clients spending over USD 1 million on an annualized basis doubled. While Anthropic reported 500 such customers during its Series G fundraising in February, 'today that number exceeds 1,000, doubling in less than two months.' This financial growth coincided with the signing of a new agreement with Google and Broadcom to secure multiple gigawatts of next-generation Tensor Processing Unit (TPU) capacity. 'This significant expansion of our compute infrastructure will power our frontier Claude models and help us serve extraordinary demand from customers worldwide,' Anthropic said in a statement. 'This ground breaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure: we are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development,' said Krishna Rao, CFO of Anthropic. 'We are making our most significant compute commitment to date to keep pace with our unprecedented growth.' The vast majority of the new compute capacity was slated for placement within the United States. This move represented an expansion of the company's November 2025 commitment to invest USD 50 billion in American computing infrastructure. 
The arrangement also deepened existing collaborations with Google Cloud, building on TPU capacity increases previously announced in October. Despite the expanded deal with Google and Broadcom, Anthropic maintained its multi-platform hardware approach. The firm continued to train and run Claude on a range of AI hardware, including AWS Trainium, Google TPUs, and NVIDIA GPUs. The company stated that this diversity of platforms allowed for better performance and greater resilience for customers who depended on the model for critical work. 'Amazon remains our primary cloud provider and training partner, and we continue to work closely with AWS on Project Rainier,' the company said. Claude also maintained its position as the only frontier AI model available to customers across the three largest cloud platforms: Amazon Web Services (Bedrock), Google Cloud (Vertex AI), and Microsoft Azure (Foundry). (ANI)

SpaceX launches Falcon 9 rocket, creating bright plume in SoCal skies

VANDENBERG SPACE FORCE BASE, Calif. (KABC) -- You may have seen something bright light up the night sky on Monday. It was another successful SpaceX rocket launch from Vandenberg Space Force Base in Santa Barbara County. The Falcon 9 rocket carrying Starlink satellites into low-Earth orbit lifted off around 7:50 p.m., creating a dazzling display that was seen over a large area. Eyewitness News viewers across Southern California spotted the rocket as it left behind a bright plume in the skies. SpaceX's previous launch date was set for Sunday, but it was pushed back due to weather conditions. SpaceX said residents in San Luis Obispo, Santa Barbara and Ventura counties may have heard one or more sonic booms during the launch.

Anthropic Locks In Multi-Gigawatt AI Compute Deal With Google And Broadcom

Anthropic has signed a multi-gigawatt compute agreement with Google and Broadcom, securing access to next-generation tensor processing units (TPUs) expected to come online in 2027. The deal shows the intensifying competition in the AI infrastructure race, where access to high-performance computing capacity is increasingly becoming a key differentiator. Anthropic said the TPUs will be used to train and deploy its flagship Claude models, as demand for generative AI tools continues to surge globally. Alongside the announcement, the company disclosed a sharp jump in its annualised revenue run rate, which has crossed $30 billion -- more than tripling from $9 billion at the end of 2025. The growth reflects accelerating enterprise adoption, with Anthropic noting that over 1,000 business customers are now spending more than $1 million annually on its services, doubling in less than two months. "We are building the capacity necessary to serve the exponential growth we have seen in our customer base," CFO Krishna Rao said, adding that the investments will help Claude "define the frontier of AI development." For Broadcom, the agreement marks a deepening of its strategic role in AI infrastructure. In a separate filing, the company confirmed a long-term deal with Google to supply future generations of TPUs -- custom chips designed specifically for machine learning workloads in data centres. The partnership has also been expanded to provide Anthropic access to approximately 3.5 gigawatts of TPU-based compute capacity. The majority of this new infrastructure will be located in the United States, aligning with Anthropic's earlier commitment to invest $50 billion in domestic computing capacity. The move also reflects broader industry trends toward localising critical AI infrastructure amid geopolitical and regulatory pressures.
The development comes as Anthropic prepares for a potential public listing and navigates regulatory scrutiny in the US. The company is currently engaged in a dispute with the federal government after pushing back against the use of its technology in mass surveillance and autonomous weapons systems. In March, the Trump administration designated Anthropic as a "supply-chain risk," a classification the company is challenging in court.

Fluor signs contract with X-Energy for Texas nuclear project

Fluor Corporation has entered into a contract with X-energy to support the company's proposed advanced nuclear project at Dow's UCC Seadrift Operations in south Texas. Under the agreement, Fluor will initially deliver Front-End Loading Stage 2 (FEL-2) services. FEL-2 focuses on project definition, strategic planning, feasibility assessment, cost control and risk mitigation. Fluor will recognise the undisclosed contract value for this initial portion of work in the first quarter of 2026. The X-energy project proposes to develop four 80-megawatt small modular reactor (SMR) units to supply Dow's Seadrift site with safe, reliable, carbon-free electricity and industrial steam, replacing aging energy and steam infrastructure. The project is supported by the US Department of Energy's (DOE) Advanced Reactor Demonstration Program (ARDP), which accelerates the commercialisation of advanced nuclear technologies through cost-shared partnerships with industry. A construction permit application was submitted in March 2025 and is currently being reviewed by the US Nuclear Regulatory Commission. "X‑energy's technology offers a powerful pathway for small modular reactors to deliver safe, reliable and fit‑for‑purpose baseload power in an industrial setting," said Pierre Bechelany, Fluor's Business Group President of Energy Solutions. "With eight decades of nuclear experience, Fluor brings the proven expertise and disciplined execution required to help advance this landmark project." X-energy was selected by the DOE in 2020 to develop, license and build its Xe-100 advanced SMR and a first TRISO-X fuel fabrication facility. Since then, the company has completed engineering and preliminary reactor design and has advanced the development and licensing of its fuel facility in Oak Ridge, Tennessee. The Seadrift project is expected to become the first grid-scale advanced nuclear reactor deployed to serve an industrial facility in North America.
Dow's UCC Seadrift Operations span 4,700 acres and produce more than 4 billion pounds of materials annually for applications including food packaging, footwear, wire and cable insulation, solar cell components, and medical and pharmaceutical packaging. -OGN/TradeArabia News Service

Anthropic signs biggest compute deal yet with Google and Broadcom as run rate hits $30bn | TNW

In short: Anthropic has agreed to access approximately 3.5 gigawatts of next-generation Google TPU compute capacity via Broadcom from 2027, its largest infrastructure commitment to date -- while simultaneously disclosing that its revenue run rate has surpassed $30bn, more than tripling from roughly $9bn at the end of 2025. Anthropic has announced it is securing multiple gigawatts of next-generation compute capacity through a new agreement with Google and Broadcom, while disclosing revenue growth figures that underscore why the AI lab now requires infrastructure at a scale that would have seemed implausible two years ago. The deal, announced on 6 April 2026, gives Anthropic access to approximately 3.5 gigawatts of Google tensor processing unit (TPU) capacity via Broadcom starting in 2027, building on the 1 gigawatt already being supplied to the company in 2026. Krishna Rao, Anthropic's chief financial officer, described it as "our most significant compute commitment to date," adding that the agreement represents a continuation of the company's "disciplined approach to scaling infrastructure." The majority of the new capacity will be located in the United States, extending Anthropic's November 2025 commitment to invest $50bn in American AI computing infrastructure. The announcement is as much about Broadcom as it is about Anthropic or Google. Under the new arrangement, Broadcom acts as the intermediary layer between Google's custom silicon and Anthropic's training and inference workloads. In parallel, Broadcom has signed a separate long-term agreement with Google to design and supply future generations of custom TPU chips, and a supply assurance agreement to provide networking and other components for Google's next-generation AI data racks through 2031. This makes Broadcom an increasingly indispensable node in the AI infrastructure graph. 
The chipmaker, led by CEO Hock Tan, is not building AI models; it is building the silicon and the interconnects on which AI models are built. Broadcom shares rose approximately 3% in extended trading on the announcement, a reaction that reflects investor appetite for companies positioned at the physical layer of the AI stack rather than the application layer on top of it. Analysts at Mizuho, led by Vijay Rakesh, estimated that Broadcom would record $21bn in AI revenue from Anthropic in 2026 alone, rising to $42bn in 2027, figures that, even as projections, illustrate the financial weight of what is being committed. Broadcom had first signalled the scale of its Anthropic relationship in September 2025, when Hock Tan disclosed during an earnings call that a mystery customer had placed a $10bn order for custom TPU racks. In December 2025, he confirmed the customer was Anthropic, and that an additional $11bn order had since followed. The April 2026 announcement is the third act of the same story: a partnership that has now graduated from a reported $21bn commitment to multi-gigawatt infrastructure with a defined delivery timeline. The compute deal is intelligible only against the backdrop of Anthropic's commercial growth. The company says its run-rate revenue has now exceeded $30bn, up from approximately $9bn at the end of 2025. That trajectory, more than a threefold increase in roughly three months, is the result of a compounding enterprise sales motion that accelerated sharply after Anthropic closed its Series G funding round on 12 February 2026. That round raised $30bn at a post-money valuation of $380bn, led by GIC and Coatue, and co-led by D.E. Shaw Ventures, Dragoneer, Founders Fund, ICONIQ, and MGX. When the Series G closed, Anthropic reported that more than 500 business customers were each spending over $1m on an annualised basis. As of the April announcement, that number has exceeded 1,000, doubling in less than two months.
The pace of enterprise adoption is the proximate cause of the compute expansion: more revenue requires more inference capacity, more inference capacity requires more training compute, and more training compute requires more gigawatts. What distinguishes Anthropic's infrastructure approach from many of its peers is an explicit multi-vendor chip strategy. Claude is trained and served across three hardware platforms: Amazon's Trainium chips, Google's TPUs, and Nvidia GPUs. Anthropic says Claude is the only frontier model available on all three major cloud platforms, AWS, Google Cloud, and Microsoft Azure, a claim that carries commercial as well as technical significance. The multi-vendor stance gives Anthropic both resilience and negotiating leverage. If capacity is constrained on any single platform, workloads can shift. If one chipmaker faces supply disruption, export controls, or pricing pressure, Anthropic is not exposed to the full force of that shock. The strategy has precedent: Microsoft's own AI models reflect a similar instinct to hedge against single-vendor dependence, though in Microsoft's case the hedge is against a partner rather than a hardware supplier. The AWS relationship remains foundational. In late 2024, Anthropic named Amazon its primary cloud and training partner, with total Amazon investment reaching $8bn. Project Rainier, an Anthropic supercomputer cluster running roughly 500,000 Amazon Trainium 2 chips in Indiana, is expected to scale beyond one million Trainium 2 chips by the end of 2025. The Google relationship, which now extends through the new Broadcom deal to multi-gigawatt scale in 2027, sits alongside this rather than replacing it. 
The April deal is framed explicitly as an extension of Anthropic's November 2025 domestic infrastructure pledge: a $50bn commitment to American AI computing infrastructure, developed initially in partnership with Fluidstack, the UK-based neocloud operator, with data centre sites in Texas and New York coming online through 2026. The new Broadcom capacity, the majority of which will be US-based, expands that footprint into 2027 and beyond. This domestic emphasis is not incidental. The Trump administration's AI Action Plan has explicitly targeted US-based compute capacity as a strategic priority, and Anthropic, like its peers, has positioned its infrastructure investments accordingly. Whether that alignment reflects sincere strategic conviction or tactical regulatory positioning -- or both -- the practical effect is the same: a substantial share of the world's next-generation AI training capacity is being locked into American geography. The Anthropic-Google-Broadcom announcement is a data point in a pattern that has been building for 18 months. SoftBank's $40bn bridge loan to fund its OpenAI commitment reflected the same underlying dynamic: AI labs have grown so fast that their compute requirements now exceed what can be financed from revenue alone, requiring financial engineering at a scale once reserved for infrastructure utilities. Meta's $27bn infrastructure deal with Nebius reflects a parallel logic at the hyperscaler level. The compute arms race is also reshaping how AI companies manage their relationships with the services built on top of their models. Anthropic has been attentive to this: the company recently moved to restrict access to Claude via certain third-party frameworks, a decision that illustrated how the cost dynamics of frontier model inference are forcing AI labs to make difficult choices about which use cases they subsidise and which they price explicitly. 
For Broadcom, the trajectory is simpler: a chipmaker that was not widely discussed in the context of AI two years ago is now a load-bearing element of the infrastructure on which two of the world's most consequential AI models, Google's Gemini and Anthropic's Claude, are built and served. That position, cemented through 2031 for Google's custom silicon and through the new multi-gigawatt agreement for Anthropic's TPU access, is the real story beneath the headline numbers. Nvidia remains the dominant force in AI accelerators, and its enterprise AI platform continues to expand its reach. But Broadcom's rise as the custom silicon partner of choice for hyperscale AI compute is one of the defining semiconductor industry shifts of this decade.

Anthropic Revenue Soars to $30B, Signs Major Google TPU Deal

Anthropic's run-rate revenue surpassed the USD 30 billion threshold, marking a substantial increase from the approximately USD 9 billion reported at the close of 2025, according to the company. "Demand from Claude customers has accelerated in 2026. Our run-rate revenue has now surpassed $30 billion--up from approximately $9 billion at the end of 2025," Anthropic said in a statement. The company noted that the surge in revenue followed an acceleration in demand from Claude customers throughout 2026. As per the company, the number of business clients spending over USD 1 million on an annualized basis doubled. While Anthropic reported 500 such customers during its Series G fundraising in February, "today that number exceeds 1,000, doubling in less than two months." This financial growth coincided with the signing of a new agreement with Google and Broadcom to secure multiple gigawatts of next-generation Tensor Processing Unit (TPU) capacity. "This significant expansion of our compute infrastructure will power our frontier Claude models and help us serve extraordinary demand from customers worldwide," Anthropic said in a statement. "This ground breaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure: we are building the capacity necessary to serve the exponential growth we have seen in our customer base while also enabling Claude to define the frontier of AI development," said Krishna Rao, CFO of Anthropic. "We are making our most significant compute commitment to date to keep pace with our unprecedented growth." The vast majority of the new compute capacity was slated for placement within the United States. This move represented an expansion of the company's November 2025 commitment to invest USD 50 billion in American computing infrastructure. The arrangement also deepened existing collaborations with Google Cloud, building on TPU capacity increases previously announced in October. 
Despite the expanded deal with Google and Broadcom, Anthropic maintained its multi-platform hardware approach. The firm continued to train and run Claude on a range of AI hardware, including AWS Trainium, Google TPUs, and NVIDIA GPUs. The company stated that this diversity of platforms allowed for better performance and greater resilience for customers who depended on the model for critical work. "Amazon remains our primary cloud provider and training partner, and we continue to work closely with AWS on Project Rainier," the company said. Claude also maintained its position as the only frontier AI model available to customers across the three largest cloud platforms: Amazon Web Services (Bedrock), Google Cloud (Vertex AI), and Microsoft Azure (Foundry).