News & Updates

The latest news and updates from companies in the WLTH portfolio.

Anthropic leaks source code for Claude Code again: Here's what happened

Anthropic has exposed Claude Code's source code, with a packaging error triggering a rapid chain reaction across GitHub and the developer community, which was able to copy the code in its entirety.

Anthropic has reportedly confirmed to Axios that it accidentally exposed the source code of its AI coding tool, Claude Code. This is the second such incident in roughly a year, with the first dating to February 2025. According to the Axios report, a debugging file was mistakenly included in a routine update and published to the public registry that developers use to access software packages.

What is source code

Source code is the original set of instructions that developers write to tell software or an app how to work. It is written in programming languages such as Python or JavaScript and acts like a blueprint, defining everything from how a button behaves to how data is processed behind the scenes. What users see on their screens is just the final output, while the source code is the logic that makes it all function.

Think of source code as a recipe in a kitchen. The dish you eat is the finished product, but the recipe explains exactly how it is made, step by step. Similarly, source code is what developers use to build and modify software, even though users never interact with it directly.

What happened and what followed

The leak came to light after a security researcher found that the package contained a source map file capable of revealing the full underlying codebase. The Axios report noted that the code was quickly replicated and dissected across GitHub. According to a report by Emerge, Anthropic then began issuing DMCA takedown notices against GitHub mirrors of the leaked code.

A South Korean developer named Sigrid Jin, who was recently featured by the Wall Street Journal for consuming 25 billion Claude Code tokens, responded within hours. He rebuilt the core architecture in Python from scratch using an AI orchestration tool called oh-my-codex, and published a new project called "claw-code" before sunrise. Emerge added that the project is said to be a Python-based reimplementation of the original codebase rather than a direct copy, which puts it in a grey area. Whether that distinction holds up legally remains open to interpretation.

Why this matters

As per Axios, the leaked source code reportedly included multiple feature flags pointing to capabilities that appear to be already developed but not yet released. The report cited an Anthropic spokesperson as saying that these features include the ability for Claude to review its most recent session to identify improvements and carry those learnings across conversations. The code also references a "persistent assistant" mode that could allow Claude Code to continue running in the background even when the user is inactive. In addition, it highlights remote access capabilities that let users control Claude from a phone or another browser, a feature that has already been rolled out for Claude Code.

In simple terms, the leak let people copy not only Claude Code's existing features but also features that Anthropic was planning to release in the coming weeks. The report further said that the leak will not put Anthropic out of business. Still, it gives every competitor a free engineering education on how to build a production-grade AI coding agent and what tools to focus on.

What did Anthropic say

As per Axios, an Anthropic spokesperson told the publication, "Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed." The spokesperson added, "This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again."

What happened in February

According to a report by NDTV, citing Odaily, an early build of Claude Code was similarly exposed in February 2025, leading Anthropic to pull the package from npm and remove the associated source map.

Anthropic says 'exploring' Australia data centre investments

Artificial intelligence giant Anthropic is eyeing data centre investments in Australia, saying Wednesday the nation was a "natural partner" for work in the booming sector. With immense renewable energy potential and vast stretches of uninhabited land, Australia has touted itself as a prime location for the power-hungry data centres needed to run AI.

US-based Anthropic said it was "exploring investments in data centre infrastructure and energy throughout the country" after signing a memorandum of understanding with the Australian government. "The visit to Australia marks the beginning of long-term collaboration and investment into the Asia-Pacific region," the technology company said in a statement. "Australia's investment in AI safety makes it a natural partner for responsible AI development."

The agreement, signed by Anthropic chief executive Dario Amodei in the capital Canberra, said the firm would abide by local laws to "maintain strong social licence for investment". Australia's arts sector has accused Anthropic and other AI companies of pushing to loosen copyright laws so chatbots can be trained on local songs and books. Anthropic said it had also agreed to share AI research and safety information with Australian regulators, mirroring similar agreements in Japan and Britain. Industry Minister Tim Ayres said Australia and Anthropic would "harness AI responsibly".

New data centres, warehouse facilities that store files and power AI tools, are springing up worldwide. But there are increasing fears about the environmental impact of hulking data hubs. Singapore halted data centre developments between 2019 and 2022 over energy, water and land use worries.

Australia last week adopted new rules governing the operation of data centres. Tech companies must show how they will source renewable energy and minimise their emissions. "As demand for AI grows, continued expansion of data centre infrastructure must reflect Australian values and be environmentally and socially sustainable," the guidelines state.

Anthropic's Claude is the Pentagon's most widely deployed frontier AI model and the only such model currently operating on its classified systems. But the company is locked in a dispute with the US government, after saying it would refuse to let its systems be used for mass surveillance. Washington has since described Anthropic's tools as an "unacceptable risk to national security". The United States has not only blocked use of the company's technology by the Pentagon, but also requires all defense contractors to certify that they do not use Anthropic's models.

Anthropic boss on copyright, job losses and inevitable tax in AI future

The $555 billion company behind AI program Claude is facing pushback from artists over the use of copyrighted material to train AI.

The billionaire boss of artificial intelligence behemoth Anthropic says he is not trying to convince Australia to change its mind on protecting the copyright of musicians, writers and other artists. Anthropic chief executive Dario Amodei has also declared that a "sophisticated" tax is inevitable, to ensure the profits generated by AI that would once have gone to a human workforce are still shared fairly in society.

Mr Amodei took part in a wide-ranging conversation on stage at the Anthropic Futures Forum held at Parliament House today as part of a broader charm offensive by the company, which is now turning its attention to Australia. He revealed his concerns for parts of the workforce that were going to find it challenging to adapt to the new economy, and warned of the need for democracies to retain the "upper hand" militarily as AI expanded.

Earlier this morning, Mr Amodei met with Prime Minister Anthony Albanese and signed a memorandum of understanding with the federal government aimed at boosting local research, skills and investment. Anthropic, which is responsible for the popular AI program Claude, is due to open a Sydney office later this year. The $555 billion company has committed to the government's AI plan and promised to invest in renewable energy to power data centres.

Copyright holders have 'legitimate claims'

Mr Amodei acknowledged there had been "robust debate" in Australia about copyright, and insisted his company was not in the country to "try and convince you to change your mind on this". "We're kind of more here to talk about how can we arrive at an arrangement that works for everyone? And leaves everyone better off?" he said.

Mr Amodei said rights holders had "legitimate claims" when it came to the use of their work by AI, but he did not think copyright was the "be-all and end-all for addressing the economic concerns with this technology". "I think that frame is really important."

Music boss doubtful of Anthropic's copyright stance

But ARIA chief executive Annabelle Herd said she was sceptical of Mr Amodei's insistence he was not looking to change Australia's mind on copyright. "Anthropic says it will comply with Australian law and is apparently willing to pay for the content it is copying," she said. "At the same time, it is pushing for a government sweetheart deal that cuts out rights holders and overrides copyright. That's not how the free market works."

Ms Herd said AI companies were already doing licensing deals at scale across music and other sectors, which proved they "know what they need to do". "If Anthropic really wants to build something that leaves everyone better off, the first step is simple: sit down with rights holders and negotiate licences," she said.

University of NSW scientia professor of artificial intelligence Toby Walsh said companies had licensed content before. "They're [the companies] worth hundreds of billions of dollars," he said.

Tax on AI inevitable as profits shift away from workers

Mr Amodei said he expected governments to develop "sophisticated" taxes to ensure the benefits of AI were shared by more people in society. AI means machines and software owned by firms can do work previously done by people, so a larger share of the profit goes to those who own the technology rather than individual workers who supply the labour.

Mr Amodei said the right tax settings would ensure that at the end of this transition "everyone has much more than they would have". "I think it's going to be the work of years to figure out what the structure of that tax should be and getting everyone behind [it], but ... I don't see any way to escape that basic conclusion," he said.

Job losses a reality as AI spreads through economy

Mr Amodei said there was nothing "unprecedented" or "fundamentally different" about how AI would reshape the economy compared to past technological changes. But he said the speed at which AI was developing was the "big problem" for societies trying to adjust. "I worry about our ability to adapt fast enough," he said. "Governments, companies [and] civil society need to act faster."

Mr Amodei said there would be some people in the workforce who would "happily adapt" to the new job environment, but his concern was for the group who were "going to be unwilling or will have a hard time adapting". "That's the group ... public policy needs to be directed at," he said.

Cancer could be a thing of the past

Mr Amodei said he spent a lot of time talking about the "risks" posed by AI, but it was also important to explain why the technology was being built. "I have a few answers, but I think the most powerful one is the medical benefits," he said. "I am really serious about the magnitude of benefits here." He said slow progress on treating diseases like cancer could be rapidly accelerated in the next five to 10 years.

China going down 'wrong path'

Mr Amodei said he believed China was an example of a country going down the "wrong path" on AI. "They're running a ... highly sophisticated, impressive in a way ... high-tech surveillance state, where they can watch every aspect of someone's life," he said.

Mr Amodei said augmenting that approach with AI would effectively lead to a "panopticon" situation, with discipline enforced through extreme monitoring of citizens. A panopticon is an 18th-century-style circular prison, featuring a central observation tower, where inmates are constantly monitored but cannot see the guards.

AI powered 'renaissance' for democracies

Mr Amodei said the flip side of the sinister potential uses of AI was the possibility the technology could enhance democracies and their institutions. Using the rule of law as an example, he said AI could be combined with the human elements of justice, such as a judge, to bring a machine level of "consistency" that better protected and enforced people's rights. "We could really have a renaissance of our democratic institutions," he said.

Mr Amodei said on the international stage, he was concerned about the use of AI in military competition. "AI is a powerful technology," he said. "I don't want autocracies to be militarily more powerful than democracies. I want to make sure democracies continue to have the upper hand."

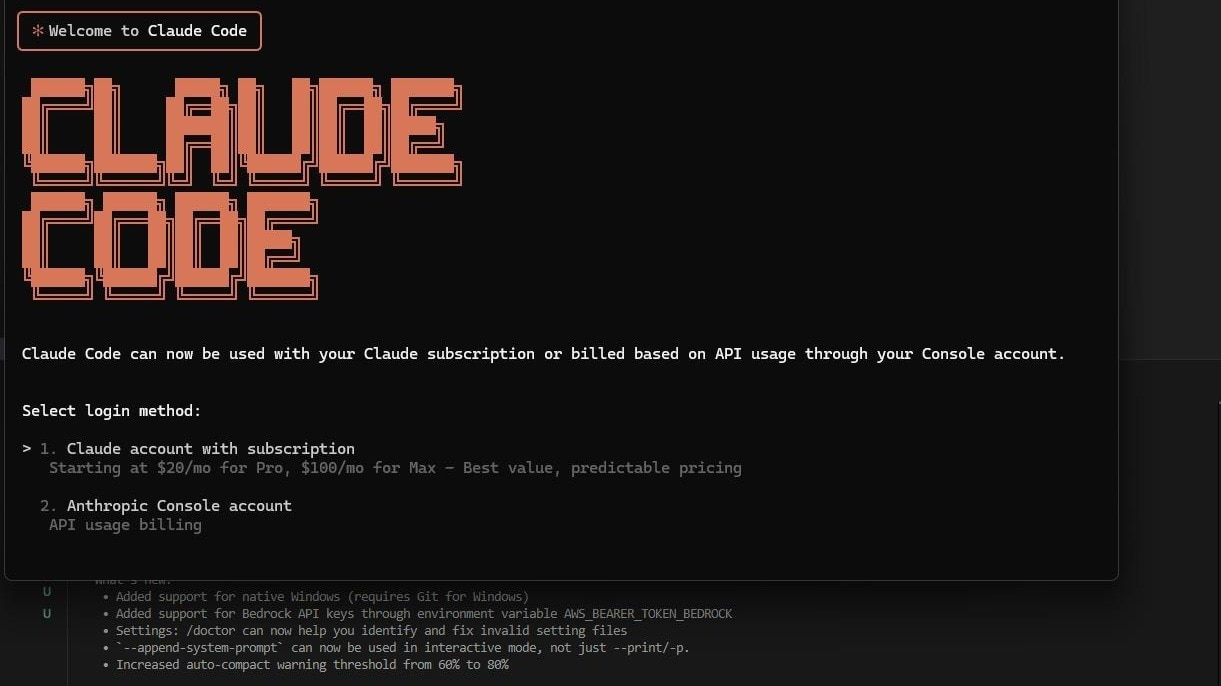

Claude Code source code leaked by Anthropic: Here's what we know

I like to imagine someone at Anthropic realising, just a little too late, that Claude Code had essentially hit "reply all" on the internet. One minute it's quietly helping developers write lines of code and the next, half a million lines of its own are out there for everyone to read. It wasn't a hack or some crazy data breach, just a basic human error.

On March 31, a debugging file called a source map was accidentally bundled into version 2.1.88 of the Claude Code npm package. Source maps are internal tools that connect compiled code back to its original source, which makes them useful for developers but catastrophic when shipped publicly. The file pointed to a zip archive sitting on Anthropic's Cloudflare R2 storage that anyone could simply right-click and download. Security researcher Chaofan Shou spotted it first and posted about it on X. The post racked up nearly ten million views within hours.

The source map contained around 1,900 TypeScript files and over 500,000 lines of code covering the full architecture of Claude Code: its LLM API call engine, tool-call loops, streaming responses, retry logic, token counting, and permission models. The codebase was mirrored across GitHub as soon as people found the leak, with one repository getting tens of thousands of stars before Anthropic could pull the package.

Anthropic confirmed the leak and kept its statement brief. "Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again."

It is true that no customer data, model weights or credentials were leaked, but the leak did expose Anthropic's detailed internal roadmap. The code contained dozens of feature flags for capabilities that are fully built but not yet shipped, giving rivals a clear picture of where Anthropic is taking its most commercially important product. Claude Code's annualised recurring revenue stood at $2.5 billion as of February, with enterprise clients driving 80 percent of that figure.

The timing makes it worse. This was Anthropic's second major data blunder in under a week, coming days after Fortune reported that nearly 3,000 internal files had been left in a publicly accessible cache, including a draft blog post detailing an upcoming model known internally as Mythos and Capybara. The package has since been pulled and is no longer available to users, unless you know the right places to look.
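To make the source map idea concrete, here is a minimal Python sketch of how a map that embeds its original files can be read back. It assumes the widely used version 3 source map layout, a JSON document whose `sources` list names the original files and whose optional `sourcesContent` list holds their text. The example map, file names and contents are hypothetical and hand-written for illustration; nothing here is taken from the leaked package, and the article does not say whether the leaked map embedded its sources this way.

```python
import json


def extract_sources(source_map_text: str) -> dict[str, str]:
    """Return {original path: original text} for every source embedded in the map."""
    source_map = json.loads(source_map_text)
    sources = source_map.get("sources", [])
    # "sourcesContent" is optional, and individual entries may be null.
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for index, path in enumerate(sources):
        text = contents[index] if index < len(contents) else None
        if text is not None:
            recovered[path] = text
    return recovered


# Hypothetical example map: two original files, only one with embedded text.
EXAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "bundle.js",
    "sources": ["src/greet.ts", "src/main.ts"],
    "sourcesContent": [
        "export const greet = (name: string) => `hi ${name}`;\n",
        None,
    ],
    "mappings": "",
})

if __name__ == "__main__":
    for path, text in extract_sources(EXAMPLE_MAP).items():
        print(path, len(text), "characters recovered")
```

When a map carries the original text like this, no decompiling is involved: the readable files are simply stored inside the debugging file, which is why shipping one publicly can expose a whole codebase.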

Anthropic releases part of AI tool source code in 'error'

Anthropic accidentally released part of the internal source code for its AI-powered coding assistant Claude Code due to "human error," the company said Tuesday. An internal-use file mistakenly included in a software update pointed to an archive containing nearly 2,000 files and 500,000 lines of code, which were quickly copied to developer platform GitHub. "Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed," an Anthropic spokesperson said. "This was a release packaging issue caused by human error, not a security breach." A post on X sharing a link to the leaked code had more than 29 million views early on Wednesday. The exposed code relates to the tool's internal architecture but does not contain confidential data from Claude, the underlying AI model by Anthropic. Claude Code's source code was partially known, as the tool had been reverse-engineered by independent developers. An earlier version of the assistant had its source code exposed in February 2025.

U.S. Military Base in Guantánamo: An Oasis Amidst Cuba's Chaos

While Cuba grapples with unprecedented energy shortages and power outages lasting up to 20 or 30 hours daily, the U.S. naval base in Guantánamo stands as an oasis of relative wealth, fully supplied from the United States and insulated from the collapse affecting 10 million Cubans on the other side of a minefield. This portrayal comes from journalist Carol Rosenberg in a report published last Monday in The New York Times, titled "As Trump Squeezes Cuba, U.S. Military Exists in a Bubble," offering a rare glimpse inside the American enclave.

The base is home to approximately 6,000 people, including military personnel, civilians, and contractors. It features a modern bowling alley with QubicaAMF technology and neon lights, a Starbucks, a McDonald's operating since 1986, an Irish pub named O'Kelly's, cinemas showing Hollywood releases, beaches, and a marina. Photos captured by Rosenberg reveal the bowling alley illuminated with colorful lights and screens displaying messages like "Fun Can't Wait!" and "Welcome Back to Bowling!" Meanwhile, a handwritten sign at the Starbucks informs patrons: "Currently out of milk. Available drinks are cold brew, coffee, Americano, frappés, lemonade, and teas. Sorry for the inconvenience."

The base's infrastructure makes it independent of the Cuban network: it has two reverse osmosis water treatment plants processing 2.5 million gallons daily, 25 storage tanks, 43 wells, and 50 additional generators capable of producing up to 17,000 kilowatts. In addition, the base has 59 fuel tanks with a capacity of 35 million gallons, which secures its energy supply.

In stark contrast, Cuba suffered three total collapses of its national electrical grid in March 2026. The outage on March 16 lasted 29 hours and 29 minutes. By March 25, the nation's available electricity was a mere 1,145 megawatts against a demand of 3,000, with a reported deficit of 1,885 megawatts.
Cuba's energy crisis has worsened due to the simultaneous loss of its two main external oil sources: Venezuela, which supplied between 25,000 and 35,000 barrels daily, halted deliveries following the capture of Nicolás Maduro on January 3, 2026, and Mexico ceased its shipments on January 9 under pressure from U.S. sanctions. Cuba produces only 40,000 barrels of oil daily against a need for 110,000, with reserves in February and March barely enough for 15 to 20 days. In this context, the Trump administration has ramped up pressure on the regime. On January 29, the president declared Cuba an unusual and extraordinary threat through Executive Order 14380, and since January 2025, more than 240 sanctions have been imposed on the island. Last Friday, Trump publicly stated that "Cuba is finished," just three days after declaring, "Cuba is next." The Guantánamo naval base was established in 1903 under the Platt Amendment as a condition for ending the U.S. occupation after the Spanish-American War. It covers 117 square kilometers and is separated from Cuban territory by a minefield. Fewer than 300 aging Cubans remain on the base, former workers who have stayed as special residents. Cuba's GDP has dropped by 23% cumulatively since 2019, with a projected additional decline of 7.2% in 2026, according to The Economist Intelligence Unit, marking the current crisis as the worst since the Special Period of the 1990s.

Claude source code leaked by Anthropic, gives everyone a look inside how viral chatbot works

Anthropic accidentally leaks Claude Code's source, exposing how its AI works behind the scenes. Oops! It's happened again. Anthropic has once again accidentally exposed sensitive information related to its Claude AI family. This time, it's the internal source code of its AI coding assistant, Claude Code. The leak, which surfaced on March 31, 2026, was not the result of a cyberattack or hack, but likely a basic packaging mistake. The company recently rolled out an update, during which developers noticed that the release package contained a source map file, something that is never meant to be publicly distributed. The leak first came to light when security researcher Chaofan Shou found that Claude Code's latest update (version 2.1.88) included a source map file in its npm package. This file, called cli.js.map, essentially can allow anyone to rebuild and view the tool's original code. Within hours, the leak, the source map spread across developer communities, with users downloading, analysing, and sharing the exposed code online. The leak was likely caused by a simple technical error during the update process. Software like Claude Code is typically written in human-readable languages such as TypeScript and then compiled into a compressed format before being released. This process helps Anthorpic to protect the original code and prevents it from being easily accessed or reverse-engineered. However, in this case, Anthropic accidentally included a source map file in the public release. Such files are meant only for internal use, as they link the compiled software back to its original source code to help developers debug issues. By shipping this file as part of the npm package, the company effectively made it possible for anyone to reconstruct and access the full codebase. This exposed the internal workings of Claude Code, including its underlying architecture and design. In short, the leaked code gives people a blueprint of how the Claude Code actually works. 
According to reports, the leak exposed more than 500,000 lines of Claude Code's source code, spread across nearly 2,000 internal files. This included important parts of how Anthropic's AI tool works, such as internal APIs (how different parts of the system connect), telemetry and analytics systems, some encryption-related logic, and communication between different components. Developers who explored the code also found hints of upcoming features and experiments. These include a Tamagotchi-like assistant that reacts while you code, and a feature called "KAIROS," which could enable an always-on AI agent working in the background. The leak also reportedly included internal developer comments, giving a rare glimpse into how engineers at Anthropic think while building the code, including doubts about whether certain features actually improve performance or just add complexity. While the latest leak is significant, what's more concerning is that it isn't an isolated incident. A similar issue was reported in early 2025, also linked to a source map file being included in a public release. That earlier version exposed details of how Claude AI works and how it is connected to Anthropic's internal systems. Although the issue was fixed at the time, the recurrence of a sensitive code leak raises questions about Anthropic's internal release processes and quality control. Given that Anthropic is currently at the forefront of the AI race, with its tools widely used by businesses and developers, the slip-up is drawing heavy criticism. Users are questioning how a company that positions itself as a leader in AI safety and reliability could allow such repeated errors.

Anthropic releases part of AI tool source code in 'error'

Anthropic accidentally released part of the internal source code for its AI-powered coding assistant Claude Code due to "human error", the company said on Tuesday. An internal-use file mistakenly included in a software update pointed to an archive containing nearly 2,000 files and 500,000 lines of code, which were quickly copied to developer platform GitHub. "Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed," an Anthropic spokesperson said. "This was a release packaging issue caused by human error, not a security breach." A post on X sharing a link to the leaked code had more than 29 million views early on Wednesday. The exposed code relates to the tool's internal architecture but does not contain confidential data from Claude, the underlying AI model by Anthropic. Claude Code's source code was already partially known, as the tool had been reverse-engineered by independent developers. An earlier version of the assistant had its source code exposed in February 2025.

Anthropic in Australia: High Claude Use, Low Transparency Revealed

The Anthropic Economic Index sample shows Australia accounts for 1.6% of global Claude.ai traffic and has a per-capita adoption index (AUI) of 4.1 -- more than four times higher than expected. That contrast between rapid local uptake and limited public detail about the government pact reframes what Australians should be asking about AI safety, infrastructure and oversight. What does the Anthropic Economic Index reveal about Claude use in Australia? Verified facts: Anthropic's Economic Index data show Australia ranked eleventh in global Claude.ai traffic in the February 2026 sample and sits among the highest per-capita adopters with an AUI of 4.1. Within Australia, New South Wales accounts for 37.2% of conversations and Victoria 30.8%, with Queensland at 17.7% and the remaining states and territories combining for 14%. Adjusting for working-age population, New South Wales has an AUI of 1.20 and Victoria 1.19; every other state or territory falls below an AUI of 1, with the lowest adoption in the Northern Territory and Tasmania. Anthropic reports that 46% of Australian conversations are work-related and 7% are coursework-related. These figures are paired with operational moves: Anthropic is expanding to Australia, planning a new office in Sydney, and has signed a Memorandum of Understanding with the Australian government to cooperate on AI safety research and to support the goals of Australia's National AI Plan. The company has also signalled commitments to collaborate with research institutions, participate in safety and security evaluations with the AI Safety Institute, and align future Australian operations with government expectations regarding data centres and AI infrastructure. Anthropic's pact: commitments, friction and political stakes Verified facts: Anthropic chief executive Dario Amodei met with Prime Minister Anthony Albanese to sign the memorandum of understanding.
The company agreed to share findings on risks and capabilities of AI, support the local AI ecosystem, and work with the AI Safety Institute on safety evaluations. Separately, Anthropic has filed lawsuits against the US Department of Defense, and the Pentagon has designated the company a supply-chain risk, barring US government contractors from using its technology in military work. At the signing, media access was limited in ways that included restricting news photography at an indoor event. Analysis (clearly identified): The MOU positions government and company as collaborators on safety research while the legal and security frictions abroad complicate that narrative. A public commitment to cooperate on safety is substantial on paper, but the combination of rapid commercial expansion, limited public detail about operational guarantees, and prior legal conflict with the Department of Defense elevates the need for concrete oversight mechanisms. The concentration of Claude use in professional and tech-heavy workforces suggests substantial private-sector reliance in NSW and Victoria, increasing the domestic stakes for any mismatch between assurance and practice. Who benefits, who is exposed, and what should be demanded now? Verified facts: Anthropic has pledged to support Australia's local AI ecosystem and to ensure its operations align with government expectations for data centres and infrastructure developers. Dario Amodei has publicly emphasised regulation and guardrails for the technology, warning about the risks of sophisticated surveillance and framing AI as a military and strategic concern. Analysis (clearly identified): Benefits are concentrated among workplaces and sectors that already show high adoption -- finance, professional services and tech -- which may gain productivity advantages from Claude. 
Potential exposures include public-sector use, where the Australian Capital Territory shows lower-than-expected adoption despite higher incomes, and critical infrastructure choices tied to data-centre siting and governance. The prior legal dispute with the US Department of Defense and the Pentagon's supply-chain designation underline that national security considerations are not hypothetical. Accountability call (grounded in verified facts): The memorandum and expansion create a narrow window for policymakers to convert commitments into enforceable transparency measures. The Australian government and Anthropic should publish the text of the memorandum of understanding, define independent audit and redress mechanisms for safety evaluations carried out with the AI Safety Institute, and specify how data-centre and infrastructure decisions will be governed to protect privacy and national security. Independent review of adoption patterns -- using the Anthropic Economic Index metrics already disclosed -- should be mandated to track workplace impact and distributional effects across states. Until those concrete disclosures and oversight arrangements are public, the contrast between the Anthropic Economic Index's evidence of deep Australian uptake and the limited public detail on governance leaves a gap that the public and Parliament should demand be closed.
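For readers who want to sanity-check the adoption figures quoted in this article, the short Python sketch below works backwards from them. It assumes the AUI is a region's share of Claude usage divided by its share of the working-age population, which is consistent with the article's description of a per-capita index adjusted for working-age population; the helper name is hypothetical.

```python
def implied_population_share(usage_share_pct: float, aui: float) -> float:
    """Working-age population share (%) implied by a usage share (%) and an AUI.

    Assumes AUI = usage share / working-age population share, so the
    population share is recovered by dividing the usage share by the AUI.
    """
    if aui <= 0:
        raise ValueError("AUI must be positive")
    return usage_share_pct / aui


# (usage share in %, AUI) pairs as quoted in the article.
QUOTED_FIGURES = {
    "Australia, share of global traffic": (1.6, 4.1),
    "New South Wales, share of Australian conversations": (37.2, 1.20),
    "Victoria, share of Australian conversations": (30.8, 1.19),
}

if __name__ == "__main__":
    for label, (usage_share, aui) in QUOTED_FIGURES.items():
        implied = implied_population_share(usage_share, aui)
        print(f"{label}: implied population share of about {implied:.2f}%")
```

Under that assumption, New South Wales's 37.2% of conversations and AUI of 1.20 imply roughly 31% of the national working-age population.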

Anthropic Accidentally Releases Source Code for Claude AI Agent

The leak of basic source code -- the second slip-up in just a week -- triggered a discussion in the community around new revelations of how Anthropic's popular coding agent works. Anthropic PBC inadvertently released source code for its popular Claude AI agent, raising questions about its operational security and sending developers on a search for clues about the startup's plans. "Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed," an Anthropic spokesperson said in an emailed statement. "This was a release packaging issue caused by human error, not a security breach. We're rolling out measures to prevent this from happening again." Developers said on X they were poring through the details to try to figure out how the startup intended to evolve the platform. Several experts also raised concerns about potential security vulnerabilities in light of the unintended exposure. The leak comes days after Fortune reported that the company accidentally made thousands of files publicly available, including a draft blog post that detailed a powerful upcoming model known internally as both "Mythos" and "Capybara" that presents cybersecurity risks.
