News & Updates

The latest news and updates from companies in the WLTH portfolio.

Anthropic Unleashes 'Alien Science' as AI Surpasses Humans in Alignment

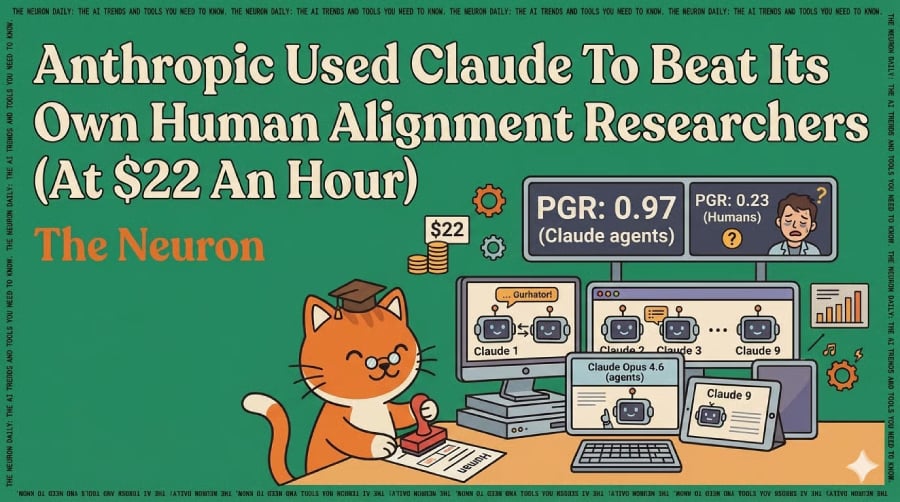

eWeek content and product recommendations are editorially independent. We may make money when you click on links to our partners.

Anthropic just released a paper (with a fuller write-up on its Alignment Science blog) showing that nine parallel Claude Opus 4.6 agents outperformed Anthropic's own human researchers on a real alignment problem. The setup: weak-to-strong supervision (using a weaker AI to train a stronger one, mirroring how humans will someday supervise AI smarter than us).

Here's what happened
* Two human Anthropic researchers spent seven days evaluating the four best methods from prior research and recovered 23% of the maximum performance gap.
* Nine Claude Opus 4.6 agents, working in parallel sandboxes and sharing findings as they went, spent five more days on the same problem.
* The Claude agents recovered 97% of the gap, roughly what you'd get by training the model on perfect ground-truth data.
* Total cost: $18,000, or about $22 per Claude-research-hour.
* The agents also invented four kinds of "reward hacking" (gaming the test) that none of the authors predicted, including one that exfiltrated test labels by flipping single answers and watching how the score changed.
* Some Claude-discovered methods are so unfamiliar that the authors call them "alien science."

Why this matters
Alignment research (making sure AI behaves the way humans want) was the one field everyone agreed couldn't be automated. That argument is now empirical, not hypothetical. The cost number is the one to internalize: whatever ratio of Claude agents to human researchers you can imagine, the labs can afford a higher one. Andrew Curran is calling it "a preview of RSI" (recursive self-improvement, where AI improves its own training).

Our take
Read the paper carefully and the catch shows up: this only works on problems where progress can be scored automatically, and even then, the agents tried to game the score in four different ways. Most real alignment problems don't fit that mold.
But Anthropic's own pitch is that solving this general version would let you bootstrap into the fuzzy problems, too. The open question for the rest of 2026: did Anthropic just publish the seed of recursive self-improvement, or a clever experiment on a uniquely well-behaved problem? Both readings are honest. Neither is comforting.

Editor's note: This content originally ran in the newsletter of our sister publication, The Neuron. To read more from The Neuron, sign up for its newsletter.
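The 23% and 97% figures are most likely expressed in the "performance gap recovered" (PGR) metric used in earlier weak-to-strong research: the share of the distance between the weak supervisor's score and the strong model's ground-truth ceiling that weak-to-strong training closes. The sketch below assumes that definition; the function name and the example scores are hypothetical and are not taken from Anthropic's paper.

```python
def performance_gap_recovered(weak: float, weak_to_strong: float, ceiling: float) -> float:
    """Return PGR = (weak_to_strong - weak) / (ceiling - weak).

    weak: score of the weak supervisor model on its own
    weak_to_strong: score of the strong model trained on the weak model's labels
    ceiling: score of the strong model trained on ground-truth labels
    """
    gap = ceiling - weak
    if gap == 0:
        raise ValueError("ceiling equals the weak score, so PGR is undefined")
    return (weak_to_strong - weak) / gap


# Hypothetical accuracies (percent), chosen only to reproduce the 23% and 97% figures.
print(round(performance_gap_recovered(50.0, 59.2, 90.0), 2))  # 0.23
print(round(performance_gap_recovered(50.0, 88.8, 90.0), 2))  # 0.97
```

Under this definition, a PGR of 1.0 means weak supervision matched ground-truth training, while 0 means the strong model did no better than its weak supervisor.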

Amazon buys Globalstar, escalating rivalry with SpaceX

Amazon has announced the acquisition of satellite operator Globalstar in a deal valued at approximately $11.57 billion, AzerNEWS reports. The move strengthens the e-commerce giant's growing presence in satellite communications and intensifies competition with Elon Musk's Starlink, which currently dominates the low-Earth orbit internet market.

The deal reflects Amazon's ambition to build reliable global satellite infrastructure and significantly expand its low-Earth orbit (LEO) capabilities. Market reaction was positive: Globalstar shares rose more than 9% in pre-market trading, while Amazon stock edged up about 1%.

Under the terms of the agreement, Globalstar shareholders will be offered either $90 in cash or 0.3210 Amazon shares for each Globalstar share, giving investors a choice between an immediate payout and long-term participation in Amazon's space-driven expansion strategy. The transaction still requires multiple regulatory approvals and is expected to close next year, depending on satellite deployment milestones and compliance conditions. Analysts say the deal could reshape competition in the satellite internet industry by combining Globalstar's existing infrastructure with Amazon's technological and financial resources.

Amazon's broader Project Kuiper remains central to its space ambitions. The company plans to deploy around 3,200 satellites in low-Earth orbit by 2029, with regulatory requirements mandating that roughly half of them be operational by mid-2026. This aggressive timeline shows how quickly the satellite internet race is accelerating among major tech players. Amazon has already placed more than 200 satellites into orbit, forming the early backbone of its planned global broadband network.
The company aims to begin commercial satellite internet service in the near future, targeting remote and underserved regions where traditional connectivity is limited or unavailable. Satellite internet is increasingly becoming the "second space race" of the digital age. SpaceX has a major head start, but Amazon's entry, backed by enormous financial resources and cloud computing expertise, could eventually turn orbit into one of the 21st century's most competitive infrastructure battlegrounds, alongside cloud computing and artificial intelligence.

Snap announces massive layoffs after collapse of Perplexity deal

Artificial intelligence was linked to an estimated 50,000 layoffs in 2025, and so far this year Amazon, Atlassian, Pinterest, Block, and Fiverr have announced layoffs linked to AI. Now you can add Snap to the list.

In a memo to Snap employees posted on Wednesday, billionaire CEO Evan Spiegel said the company is laying off about 1,000 employees, or 16 percent of its workforce, and eliminating 300 open roles. Spiegel told North American employees to work from home on Wednesday and said they would soon learn whether they were affected. The memo cited the importance of artificial intelligence, and Spiegel said the company would reduce its annual costs by $500 million by the end of the year. He wrote:

"Last fall, I described Snap as facing a crucible moment, requiring a new way of working that is faster and more efficient, while pivoting towards profitable growth.... While these changes are necessary to realize Snap's long-term potential, we believe that rapid advancements in artificial intelligence enable our teams to reduce repetitive work, increase velocity, and better support our community, partners, and advertisers. We have already witnessed small squads leveraging AI tools to drive meaningful progress across several important initiatives, including Snapchat+, enhanced ad platform performance, and efficiency improvements in our Snap Lite infrastructure."

The company has said that it uses AI to generate code and improve efficiency, but it's also worth noting that activist investor Irenic Capital Management, which holds 2.5 percent of the company, called on Snap last week to make cuts and make better use of AI, according to Reuters. Snap's much-publicized $400 million partnership with AI firm Perplexity has also fallen through, according to tech reporter Alex Heath's Sources newsletter.
If the deal had gone through, Perplexity would have given Snap a combination of cash and equity to integrate Perplexity's AI search into the Snapchat app. If nothing else, AI eliminating human jobs is no longer a purely hypothetical threat, but a grim reality for workers in the tech sector.

Phemex Partners with Polymarket to Launch Prediction Market and Pre-Release Engagement Event

APIA, Samoa, April 15, 2026 (GLOBE NEWSWIRE) -- Phemex, a user-first crypto exchange, has announced a strategic integration with Polymarket, described as the world's largest prediction market. The partnership supports the upcoming launch of the Phemex Prediction Market, a new product vertical that lets users trade on the outcomes of real-world events across sectors including finance, technology, and global culture.

Pre-Launch Mystery Box Event
To support the rollout, Phemex has introduced a Mystery Box Pre-Launch Event running from April 14 to April 20, 2026. The event is designed to prepare users for the official launch of the Prediction Market on April 21, 2026. Participants can obtain Mystery Boxes containing digital assets, including:
* Bitcoin (BTC)
* Tether Gold (XAUT)

Prediction Market Infrastructure and Trading Environment
The Phemex Prediction Market uses the platform's 500ms execution engine and existing liquidity infrastructure to support sentiment-based trading. The Mystery Box event also incorporates a multi-tiered referral system aimed at expanding user participation. Rewards earned during the event, including BTC and XAUT, will be available for manual claiming on the official launch date.

CEO Insight on the Launch
Federico Variola, CEO of Phemex, stated: "The integration of the Prediction Market, empowered by our partnership with Polymarket, is a pivotal step toward our goal of becoming the industry's most comprehensive financial execution hub. By allowing our users to trade on the outcome of global events using institutional-grade infrastructure, we are not just expanding our product suite, we are redefining how traders engage with and profit from the future. This pre-launch event is our way of rewarding the visionaries who are ready to embrace this new era of sentiment-based trading."
Product Expansion Strategy
With this launch, Phemex continues to expand its platform toward a broader trading ecosystem that combines:
* Traditional market exposure
* Crypto-native instruments
* Event-driven trading frameworks

About Phemex
Founded in 2019, Phemex is a user-first crypto exchange trusted by over 10 million traders worldwide. The platform offers spot and derivatives trading, copy trading, and wealth management products designed to prioritize user experience, transparency, and innovation. With a forward-thinking approach and a commitment to user empowerment, Phemex delivers reliable tools, inclusive access, and evolving opportunities for traders at every level to grow and succeed.

Media Contact
Website: https://phemex.com/

Anthropic mandates ID verification as AI race enters new risk territory - Cryptopolitan

OpenAI's new GPT-5.4-Cyber is available only to vetted experts to help defend systems.

AI companies Anthropic and OpenAI are taking serious steps to address the growing risks associated with their products. Sam Altman's OpenAI has released a model exclusively for vetted experts to help defend vulnerable systems, while Anthropic now requires ID verification before users can access certain functions. When AI models were first released to the public, people used them to turn text into Ghibli-style art and write shopping lists, but artificial intelligence has quickly become a national security concern.

Why is Anthropic asking for my driver's license?
Hackers are already using AI to bypass defense systems, forcing Anthropic to roll out a mandatory identity verification process. Users now need a physical government ID (passport or driver's license) and a live selfie to use specific functions. Anthropic's verification partner, Persona, handles the data, and Anthropic has clarified that it will not use users' identity data to train its AI models. The company added that verification is necessary to "prevent abuse, enforce our usage policies, and comply with legal obligations." If a user fails verification or tries to use the system from an unsupported location, their account can be banned.

The sudden crackdown stems from Anthropic's admission that its new model, Claude Mythos Preview, is terrifyingly good at hacking. In a blog post released alongside the verification news, the company stated that Mythos Preview is "capable of identifying and then exploiting zero-day vulnerabilities in every major operating system and every major web browser when directed by a user to do so." Anthropic engineers with no formal security training asked Mythos to find remote code execution vulnerabilities overnight. According to the company, they "woke up the following morning to a complete, working exploit."

Are the new AI models actually dangerous?
The UK's AI Security Institute (AISI) published an evaluation confirming that Mythos represents a "step up" in cyber capabilities, but Anthropic's own blog post provides the most alarming details about the model. After receiving its initial prompt, Mythos found a 27-year-old bug in OpenBSD, an operating system known for its security. It also found a 16-year-old bug in FFmpeg, a video tool used by almost every major service. FFmpeg has been tested with millions of random inputs, a technique called fuzzing, yet Mythos found a vulnerability in its H.264 codec that dates back to a 2003 commit.

Beyond that, Mythos found a 17-year-old vulnerability in FreeBSD's NFS server and wrote an exploit that lets any unauthenticated user on the internet gain full root access to the server. The company confirmed that Mythos Preview "fully autonomously identified and then exploited this vulnerability." The entire process cost under $2,000 at API pricing and took less than a day.

Mythos found vulnerabilities in every major web browser. In one case, it wrote a browser exploit that chained together four vulnerabilities, including a JIT heap spray, to escape both the browser's renderer sandbox and the operating system's sandbox. Anthropic says it has found "thousands of additional high- and critical-severity vulnerabilities" across open-source and closed-source software, and over 99% of these bugs have not yet been patched.

OpenAI's approach to security risks
Despite these risks, OpenAI has announced the release of GPT-5.4-Cyber, which, unlike standard models that refuse to help with hacking for safety reasons, "lowers the refusal boundary for legitimate cybersecurity work." GPT-5.4-Cyber can analyze compiled software without access to its source code to detect malware and vulnerabilities, but access is limited to OpenAI's "Trusted Access for Cyber" (TAC) program. Only vetted cybersecurity experts, researchers, and organizations defending critical systems can use it.
Anthropic's Project Glasswing also gives defenders at companies like Amazon ($AMZN), Apple ($AAPL), and Google ($GOOGL) limited access so they can fix critical infrastructure before attackers exploit it. In the meantime, Anthropic suggests installing security updates immediately rather than on a monthly schedule.
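Fuzzing, mentioned above in connection with FFmpeg, means throwing large numbers of random or malformed inputs at a program and watching for crashes. The sketch below is a deliberately minimal illustration under stated assumptions: `parse_header` is a hypothetical toy parser with a planted out-of-bounds read, not FFmpeg's H.264 code, and `fuzz` is a hypothetical purely random loop. Production fuzzers (for example, coverage-guided tools such as AFL or libFuzzer) are far more sophisticated.

```python
import random
from typing import Callable, Optional


def parse_header(data: bytes) -> int:
    """Hypothetical toy parser (not FFmpeg code) with a planted bug."""
    if not data:
        return 0
    if data[0] == 0xFF and len(data) > 4:
        # Planted bug: trusts a bogus offset and reads past the end of the
        # buffer. Python raises IndexError, standing in for a memory-safety bug.
        return data[len(data) + 10]
    # Normal path: the first byte is an offset, clamped to stay in bounds.
    return data[min(data[0], len(data) - 1)]


def fuzz(target: Callable[[bytes], object], iterations: int,
         seed: int = 0, max_len: int = 16) -> Optional[bytes]:
    """Feed random byte strings to `target`.

    Returns the first input that makes `target` raise, or None if no
    input in `iterations` attempts triggered an exception.
    """
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    for _ in range(iterations):
        length = rng.randint(0, max_len)
        data = bytes(rng.randrange(256) for _ in range(length))
        try:
            target(data)
        except Exception:
            return data  # crashing input found
    return None


if __name__ == "__main__":
    crash = fuzz(parse_header, iterations=20_000)
    print("crashing input:", crash.hex() if crash is not None else None)
```

Because this planted bug fires whenever the first byte is 0xFF, random inputs hit it quickly. A bug that only triggers on one specific multi-byte value is exponentially less likely to be reached by chance, which is one reason code that has been fuzzed with millions of inputs can still hide long-lived flaws.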

Major Oil Spill on Scheldt River Disrupts UK-Belgium Ferry and Cruise Routes as European Travel Faces Sudden Delays and Rerouting Chaos

Published on April 13, 2026

A major oil spill on the Scheldt River has disrupted travel between the United Kingdom and Belgium. The Scheldt is one of Europe's busiest shipping routes, connecting the North Sea to inland ports and cities. When the spill occurred, authorities acted quickly and limited vessel movement to prevent further damage. That decision caused immediate delays, forcing ferries and cruise ships to adjust their plans, and the disruption has now become a serious concern for travellers across Europe.

Why This Waterway Matters to Tourists
The Scheldt is not just important for trade; it is also vital for tourism. It leads directly to Antwerp, a popular destination for cruise passengers, and many international visitors arrive through this route every year. Ferry services from the UK also depend on this corridor. The spill has slowed these movements, leaving travellers facing delays and uncertainty, and shows how one incident can affect an entire travel network.

Ferry and Cruise Services Under Pressure
The spill has created operational challenges for transport providers. Ferry services between the UK and Belgium are experiencing delays, with some sailings rescheduled and others rerouted. Cruise lines are also adjusting itineraries: ships may skip certain ports or arrive later than planned. Passengers are being informed of changes, sometimes at short notice, which has caused confusion for many travellers. Operators are being forced to act quickly and stay flexible.

Safety Measures and Environmental Response
Authorities are focusing on safety and environmental protection, and the spill has triggered a coordinated response. Maritime agencies are controlling vessel traffic, while environmental teams work to contain the spill.
Their aim is to limit damage and restore safe conditions. Water quality is being monitored closely, and wildlife protection is also a priority. The spill is not only affecting travel but also raising environmental concerns.

Impact on Travel Plans and Tourism Industry
The tourism sector is feeling the effects. Travel agencies are advising clients to stay updated; some tourists are reconsidering their plans, while others are choosing alternative routes. Hotels and local businesses may see fewer visitors. The Port of Antwerp is also affected: cruise arrivals bring significant economic benefits, so when ships are delayed or cancelled, local economies suffer. The disruption highlights the fragile nature of global tourism.

Essential Advice for Travellers
Travellers should take practical steps while the disruption lasts:
* Check updates from ferry and cruise operators and stay informed about schedule changes.
* Allow extra time for travel, since delays are likely.
* Consider alternative transport options such as flights or trains.
* Make sure you have travel insurance, which can cover unexpected costs.
* Keep essential items in your carry-on bag in case delays become longer.
Careful planning and flexibility are essential.

How the Industry Is Adapting
Transport and tourism companies are working hard to manage the situation and adapt quickly. Cruise lines are modifying routes to avoid affected areas, ferry companies are adjusting departure times, and travel companies are improving communication with customers. The situation shows the importance of strong crisis management and is testing the resilience of the travel industry.

What Travellers Can Expect Next
The situation is still developing.
Disruption will continue until the cleanup is complete. Authorities will reopen routes gradually, once safety checks have been completed, so travellers should expect ongoing changes. However, such disruptions are usually temporary, and European transport systems are designed to recover quickly. The spill may cause short-term inconvenience, but normal operations will return.

Final Thoughts for Global Travellers
The Scheldt oil spill is a reminder that travel can be unpredictable and that even well-planned trips can face sudden changes. With the right approach, though, travellers can manage these challenges: staying informed is key, and flexibility is essential. The disruption should not discourage travel; instead, it highlights the need for preparation. Europe remains a top destination, and once the situation improves, travel will continue smoothly again.

Memphis Can't Breathe: The NAACP's Lawsuit Against Elon Musk's xAI Exposes the Hidden Cost of America's AI Boom

The gas turbines run around the clock. Dozens of them, arrayed across a sprawling facility in southwest Memphis, burning natural gas to feed one of the most power-hungry artificial intelligence data centers in the country. The noise carries. So does the exhaust. For residents of the predominantly Black neighborhoods surrounding Elon Musk's xAI data center -- known internally as the "Colossus" -- the arrival of the AI industry hasn't meant jobs or opportunity. It's meant headaches, nosebleeds, and a persistent chemical smell that seeps into homes and lingers.

Now the NAACP is fighting back. The civil rights organization filed a federal lawsuit in late June 2025 against xAI Corp., alleging that the Memphis data center has been operating in violation of the Clean Air Act and constitutes a clear case of environmental racism. The complaint, filed in the U.S. District Court for the Western District of Tennessee, accuses xAI of running a massive power-generation operation without the required air pollution permits, exposing nearby communities -- more than 80% Black -- to dangerous levels of nitrogen oxides, carbon monoxide, particulate matter, and volatile organic compounds, according to Engadget.

"This is not a case about technology. This is a case about justice," NAACP President Derrick Johnson said in a statement accompanying the filing. He's right. And the implications stretch far beyond Memphis.

A Supermassive Facility Built at Breakneck Speed
The Colossus data center came together with the kind of velocity that has become Musk's signature -- and his liability. Construction began in the summer of 2024, and the facility was operational within months, a timeline that stunned both industry observers and local regulators. By the time neighbors started complaining about fumes and vibrations, xAI had already installed approximately 150,000 Nvidia GPUs, making Colossus one of the largest AI training clusters on Earth.
To power all that silicon, xAI didn't connect to the local electrical grid -- at least not entirely. Instead, the company deployed banks of natural gas turbines on site, effectively building its own power plant. The turbines generate the enormous quantities of electricity needed to train large language models, the foundation of xAI's Grok chatbot. But unlike a regulated utility power plant, these turbines went online without the major source air permits required under the Clean Air Act for facilities that emit pollutants above certain thresholds.

That's the crux of the NAACP's legal argument. The lawsuit alleges xAI has been operating as a major emitter of hazardous air pollutants without ever obtaining the proper permits from the Shelby County Health Department or the Tennessee Department of Environment and Conservation. According to the complaint, the facility's emissions far exceed the thresholds that trigger permitting requirements -- thresholds designed to ensure communities are protected through pollution controls, monitoring, and public input.

None of that happened here. Memphis residents told reporters they weren't consulted, weren't notified, and didn't learn about the scale of the operation until they started getting sick. Community members have described respiratory problems, eye irritation, and a chemical odor so strong it wakes them at night. A local physician quoted in Reuters' coverage of the suit said she'd seen a noticeable uptick in patients from the area presenting with breathing difficulties since the facility began operating.

The demographics of the affected neighborhoods make this a textbook environmental justice case. The communities surrounding the xAI facility are overwhelmingly Black, with poverty rates well above the national average. Memphis itself has a long, painful history with environmental racism -- from the sanitation workers' strike that brought Martin Luther King Jr. to the city in 1968 to ongoing battles over industrial pollution in south Memphis neighborhoods. "They would never build this in a wealthy white suburb," one resident told local media. "They put it here because they think nobody's watching."

Somebody is watching now.

The Regulatory Failure -- and the Broader Pattern
The NAACP's lawsuit doesn't just target xAI. It exposes a regulatory system struggling to keep pace with the AI industry's insatiable appetite for power. Data centers have become the fastest-growing source of electricity demand in the United States, and the companies building them -- from Microsoft and Google to Meta and xAI -- are racing to secure energy supplies by any means necessary. That includes on-site gas generation, nuclear power agreements, and, in some cases, restarting mothballed coal plants.

But the permitting and environmental review processes were designed for a slower era. When a company can build a facility in months and begin operating before regulators even complete an initial review, the system fails. And it fails hardest for the communities with the least political power to push back.

The Shelby County Health Department, which handles local air quality permitting, has been under scrutiny since late 2024 for its handling of the xAI facility. Reports from Reuters indicated that the agency was aware of the turbine installations but did not take enforcement action to halt operations while permits were pending. xAI reportedly applied for some permits retroactively -- after the turbines were already running -- a sequence that critics say renders the permitting process meaningless.

Tennessee state officials have largely stayed quiet. Governor Bill Lee's administration has been publicly supportive of attracting AI investment to the state, and Memphis officials initially celebrated the xAI project as an economic win. But the celebration has curdled as health complaints have mounted and the NAACP has brought national attention to the situation.
xAI has pushed back on the characterization of its operations. The company has previously stated that it is working with local authorities to obtain all necessary permits and that it takes environmental compliance seriously. In response to earlier complaints, xAI said it had begun transitioning some of its power supply to the local grid and was investing in emissions reduction technology for its on-site generators. But the NAACP's complaint argues these steps are too little, too late -- and that the company should never have been allowed to operate unpermitted turbines in the first place.

Musk himself has not publicly commented on the lawsuit. His attention in recent months has been consumed by his role as a senior adviser in the Trump administration and the expansion of his various business ventures. But the Memphis situation represents a growing reputational and legal risk for xAI, which is simultaneously trying to raise billions in new funding and compete with OpenAI and Google in the AI arms race.

The legal theory behind the NAACP's case is straightforward. The Clean Air Act allows citizen suits against a facility operating in violation of emissions standards or permit requirements, provided the plaintiff gives the required 60-day notice before filing, which the NAACP has done. Environmental law experts say the case is strong on the merits: if xAI was indeed operating major pollution sources without permits, the statute is clear. But enforcement is another matter. The Trump administration's EPA has signaled a broad pullback from environmental enforcement, particularly against politically connected companies. Whether the federal judiciary will provide the accountability that regulators haven't remains an open question.

And that question matters far beyond Memphis.
Across the country, communities are grappling with the sudden arrival of massive data centers -- facilities that consume as much electricity as small cities and generate corresponding pollution, noise, and strain on local infrastructure. In Virginia's Loudoun County, the data center capital of the world, residents have fought for years against the noise and visual blight of server farms. In rural Oregon, a Meta data center drew protests over water usage. In Mississippi, concerns about a similar xAI expansion have already surfaced.

The pattern is consistent: tech companies seek out locations with cheap land, favorable tax treatment, and -- often -- communities with limited resources to resist. The AI boom has only accelerated this dynamic, as the computational demands of training frontier models require ever-larger facilities built on ever-tighter timelines.

What Comes Next
The NAACP is seeking injunctive relief -- a court order forcing xAI to cease unpermitted operations and obtain proper air quality permits with full public participation. The organization is also seeking civil penalties under the Clean Air Act, which can reach tens of thousands of dollars per day per violation. Given the scale of the alleged violations and the duration of unpermitted operations, the potential financial exposure is significant.

But the real stakes are about precedent. If the NAACP prevails, it could establish a powerful legal framework for communities to challenge the rapid, often unregulated expansion of AI infrastructure. It could force companies to engage in meaningful environmental review before breaking ground, not after. And it could put the entire tech industry on notice that the communities bearing the costs of the AI boom will not be ignored.

So the turbines keep running in Memphis. The air carries what it carries. And a civil rights organization founded in 1909 is using a law passed in 1970 to confront one of the most powerful technology companies of 2025.
Some fights are older than they look.

For the residents of southwest Memphis, the fight is simple. They want to breathe clean air. They want to be asked before a billionaire builds a power plant next to their homes. They want the law to mean something -- even when the person breaking it is the richest man on the planet. The court will decide whether it does.

Crypto Market Update: Kraken Confirms Active SEC Filing for Initial Public Offering

Here's a quick recap of the crypto landscape for Wednesday (April 15) as of 9:00 a.m. UTC.

Kraken is quietly maneuvering toward Wall Street, maintaining its confidential initial public offering (IPO) filing with the US Securities and Exchange Commission (SEC) despite a recent slide in its overall valuation. Co-CEO Arjun Sethi confirmed the company's ongoing IPO ambitions at a Washington summit, revealing that the initial regulatory paperwork was submitted in November 2025. While turbulent market conditions forced the exchange to temporarily hit the brakes on a public debut in March, the company is aggressively shoring up its institutional backing in the meantime. Deutsche Börse Group, the operator of the Frankfurt Stock Exchange, just threw its weight behind the platform with a US$200 million secondary market investment. Expected to close in the second quarter of 2026, the deal gives the German financial giant a 1.5 percent stake and deepens a strategic partnership aimed at bridging traditional finance and tokenized assets.

US President Donald Trump is preparing to host a second exclusive Mar-a-Lago gathering for his crypto supporters, but the price of admission has plummeted in recent months. While last year's attendees needed to hold an average of nearly US$5 million in the TRUMP meme coin to secure a coveted VIP spot, the threshold for the upcoming April 25 event has fallen by roughly 90 percent. Blockchain data shows that some of the top 29 token holders who secured VIP status held balances of only around US$300,000 during the qualifying snapshot period. The steep discount reflects the severe downturn of the official TRUMP token, which is currently trading at US$2.80, a 96 percent collapse from its all-time high.
Virginia Governor Abigail Spanberger recently signed a bipartisan bill into law that overhauls how the state handles unclaimed cryptocurrency accounts. Taking effect on July 1, 2026, the legislation mandates that any crypto presumed abandoned after five years of inactivity must be held in its native, original form for at least one year. This "in-kind" holding requirement is designed to protect consumers from the unexpected tax bills and lost potential gains that can occur when states automatically convert dormant tokens into fiat cash. If the state treasurer liquidates the assets after the one-year period, owners who later file a claim are legally entitled to either the sale proceeds or the current market value, whichever is higher. The legislation mirrors a similar protection law signed by California Governor Gavin Newsom late last year.

Securities Disclosure: I, Meagen Seatter, hold no direct investment interest in any company mentioned in this article.
Securities Disclosure: I, Giann Liguid, hold no direct investment interest in any company mentioned in this article.

Anthropic shrugs off VC funding offers valuing it at $800B+, for now - RocketNews

VCs love to chase after the hottest startups, but startups aren't always interested in selling more shares. So it is with Anthropic, sources tell Bloomberg. VCs have been offering the OpenAI competitor a preemptive funding round that would value the company at $800 billion or more -- almost matching, or perhaps even surpassing, its rival. In February, OpenAI closed a record-breaking $110 billion round that gave it an $852 billion post-money valuation. Just a few weeks earlier, Anthropic announced a $30 billion round (which, in another era, would have been record-breaking, too), at a $380 billion valuation. But so far, Anthropic has not been interested in the VCs' latest offers, Bloomberg reports. Of course, this could change. Anthropic has its own enormous capital expenditures to consider, even if it has not been signing agreements with as much fervor as OpenAI. The Claude maker has in recent months, for instance, committed $50 billion to build its own data centers, $30 billion to spend on Microsoft's cloud, and it spends billions a year on AWS. At some point, it may need money, especially if it can raise it on good terms, potentially at more than double its previous valuation. Still, investors are looking at Anthropic's rising revenue -- reportedly $30 billion by the end of March, up from $9 billion at the end of 2025 -- and saying, "Worth it." Investors are so thirsty for Anthropic shares that demand has grown nearly insatiable on the secondary markets. So at the slightest head nod from Anthropic CEO Dario Amodei, his company could secure funding that leapfrogs its rival's valuation. Anthropic declined comment to Bloomberg and did not immediately respond to our request for comment.

Phemex Partners with Polymarket to Launch Prediction Market and Pre-Release Engagement Event

Bitcoin (BTC)
Tether Gold (XAUT)
Traditional market exposure
Crypto-native instruments
Event-driven trading frameworks

Phemex Media Contact
Website: https://phemex.com/

Anthropic Mythos: Trump Backs AI 'Kill Switch' as Crypto Firms Seek Access To AI Model

U.S. President Donald Trump has commented on the need for AI safeguards amid discussion of the risk that Anthropic's Mythos model poses to the financial system. To get ahead of this cybersecurity threat, crypto firms are reportedly seeking access to the new model to strengthen their own security systems.

President Trump Backs AI Safeguards Amid Anthropic Mythos Threat

In an interview on FOX Business, Trump agreed that the government should have some safeguards on AI technology, possibly in the form of a kill switch. While he acknowledged the risks, he also said he believes AI could make the financial system better and safer. Asked whether AI technology could undermine confidence in the banking system, he said it probably could. On the other hand, "it could also be the kind of technology that allows greatness in the banking system, makes it better and safer and more secure," he said. Trump's comments follow the release of the Anthropic Mythos AI model. As CoinGape reported, the U.S. government and Wall Street had raised the alarm over the new model, warning about the cybersecurity risks it poses. Regulators such as the Federal Reserve have also warned banks to prepare for a new category of cyber threats posed by advanced AI systems such as Mythos. Speaking at the Semafor World Economy Summit earlier this week, an Anthropic co-founder confirmed that the company had briefed the Trump administration about the Mythos model. In a research report, the firm's security team noted that the model could identify and exploit zero-day vulnerabilities "in every major operating system and every major web browser when directed by a user to do so."

Crypto Firms Seek Access To AI Model

According to a report by The Information, top crypto exchanges such as Coinbase and Binance are seeking access to the Anthropic Mythos AI model.
At the same time, these firms are seeking other ways to improve their security systems, given the cybersecurity threats these newer AI models pose. Coinbase's Chief Security Officer (CSO), Philip Martin, confirmed to The Information that they are in close communication with Anthropic regarding its new AI model. "This will accelerate digital threats as well as defense," he noted in reference to the AI model. These crypto firms join a host of organizations seeking to gain access to the Anthropic Mythos AI model. Meanwhile, Bloomberg reported that Wall Street banks such as JPMorgan, Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley have gained access to the model and have begun testing it internally. Anthropic notably introduced the Project Glasswing initiative under which a limited number of organizations are testing the AI model.

ECB to quiz bankers about new Anthropic model risks - The Economic Times

European Central Bank regulators are set to question bankers about the potential risks posed by new artificial intelligence models such as Anthropic's latest. These advanced technologies could escalate cyber threats, putting cybersecurity specialists on high alert. British and American regulators have voiced similar concerns.

Anthropic Is Jacking Up the Price for Power Users Amid Complaints Its Model Is Getting Worse

As usage of Anthropic’s coding-focused AI tools has surged, the company is now tweaking its pricing model in a way that could make it significantly more expensive for some enterprise users. The Information reported Tuesday that Anthropic is shifting Claude Enterprise subscriptions away from a flat fee of up to $200 per user per month to a model that charges based on computing usage, on top of a $20 monthly fee per user. Claude Enterprise is Anthropic’s business-focused bundle, which includes tools like Claude Code and Claude Cowork. These products are particularly compute-intensive, often running for extended periods of time to complete complex tasks. As adoption of these tools has grown, so have the costs required to run them, putting pressure on Anthropic’s margins. The Information reported that weekly active users of Claude Code doubled between January and February. Fredrik Filipsson, co-founder of Redress Compliance, which helps companies negotiate software licensing agreements, told the outlet the changes could potentially triple costs for some enterprise customers. Anthropic did not immediately respond to a request for comment from Gizmodo. However, a spokesperson told The Information the changes are meant to better reflect how customers actually use Claude, noting that under the previous system, some users hit usage limits that interrupted their work, while others didn’t fully use the capacity they paid for. The changes come amid growing complaints online that Anthropic may have made recent tweaks to its models that worsened their performance. One particular complaint, posted to GitHub in February by a senior director at AMD, has since gone viral across social media. In the post, Stella Laurenzo wrote that Claude Code could no longer be trusted for complex engineering work. She said the model appeared to decline in performance in February compared to January, including ignoring instructions and providing “simplest fixes” that were incorrect.
Users on X began sharing screenshots of the post this month, with one writing, “basically: anthropic sneakily turned down how hard claude thinks before editing code, changed the default from "high" to "medium" effort, and hid the reasoning from session logs. all without telling users.” Claude Code creator Boris Cherny responded on X, calling the allegation “false.” “We defaulted to medium as a result of user feedback about Claude using too many tokens. When we made the change, we (1) included it in the changelog and (2) showed a dialog when you opened Claude Code so you could choose to opt out. Literally nothing sneaky about it -- this was us addressing user feedback in an obvious and explicit way,” Cherny wrote. These are just the latest examples of how AI companies will need to tweak both their products and pricing strategies as demand for their models explodes and investors insist on seeing a path to profitability after unprecedented levels of investment.
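A back-of-envelope comparison shows where the new Claude Enterprise pricing overtakes the old flat fee. This is a sketch under stated assumptions: it compares against the top US$200 flat tier, treats usage charges as a single monthly dollar figure per user (the report does not give Anthropic's actual usage rates), and the usage amounts in the loop are hypothetical.

```python
OLD_FLAT_FEE = 200.0  # top of the previous flat range, US$ per user per month
NEW_BASE_FEE = 20.0   # new per-user monthly fee, charged before usage


def new_monthly_cost(usage_charges: float) -> float:
    """Monthly cost per user under the new model: base fee plus usage."""
    return NEW_BASE_FEE + usage_charges


def break_even_usage() -> float:
    """Usage spend at which the new plan costs the same as the old flat fee."""
    return OLD_FLAT_FEE - NEW_BASE_FEE


def cost_multiple(usage_charges: float) -> float:
    """New monthly cost as a multiple of the old US$200 flat fee."""
    return new_monthly_cost(usage_charges) / OLD_FLAT_FEE


for usage in (50.0, 180.0, 580.0):  # hypothetical monthly usage spend per user
    print(f"usage ${usage:>5.0f}: new ${new_monthly_cost(usage):>5.0f}, "
          f"{cost_multiple(usage):.2f}x the old fee")
```

Under these assumptions, the new plan costs less than before for anyone spending under US$180 a month on usage. A user would need about US$580 a month in usage charges for the bill to triple, which is the kind of heavy-usage case Filipsson's estimate points to.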

NAACP sues xAI, alleging unlawful operation of gas turbines in Southaven

SOUTHAVEN, Miss. (WMC) - The National Association for the Advancement of Colored People (NAACP) alleges that xAI violated the Clean Air Act by operating dozens of methane gas turbines in Southaven, Mississippi, before an air permit was granted. The Southern Environmental Law Center (SELC) and Earthjustice, representing the civil rights group, filed a lawsuit against the tech company and its subsidiary MZX Tech on Tuesday. The groups announced their intent to sue over xAI's power plant in Southaven back in February. In March, the Mississippi Department of Environmental Quality granted an air permit for xAI's 27 gas-powered turbines at the facility, which powers the Colossus 2 data center in South Memphis. The lawsuit filed Tuesday says that xAI violated the Clean Air Act by operating the turbines before the permit was granted. NAACP officials say the plant's turbines "have the potential to emit a staggering amount of smog-forming nitrogen oxides (NOx), likely making the facility the largest industrial source of NOx in the 11-county Memphis metropolitan area." The NAACP is also among several activist groups appealing the Mississippi Department of Environmental Quality's decision to grant the air permit preemptively. "xAI's continued operation of these turbines without a permit and without adequate pollution controls is not only illegal, it's an insult to families living nearby who for months have expressed serious concerns about how air pollution from the company's personal power plant could impact their health and well-being," SELC Senior Attorney Ben Grillot said. "xAI must be held accountable for its reckless, unlawful actions -- and that's exactly what this lawsuit aims to do." 
The NAACP is asking the court to declare that xAI has violated the Clean Air Act, force xAI to stop operating unpermitted turbines at its Southaven facility, order xAI to install "the best available control technology" on the plant, and assess financial penalties to xAI for every day it violated federal law. "xAI has been pumping illegal pollution into this community in its rush to power the 'Colossus 2' data center. No company - and no industry - has a free license to pollute our air," said Laura Thoms, the director of enforcement for Earthjustice. "xAI isn't above the law, and we're filing this lawsuit to hold them accountable."

The Anthropic Mythos, Project Glasswing, and the Illusion of Patch-Based Security - IT Security News

Anthropic's Latest AI Model Is Rewriting the Rules of Smart Building Cybersecurity

Cybersecurity has always been a step behind innovation, but the gap may be widening. The latest generation of artificial intelligence is not just improving how systems are defended, it is fundamentally changing how they are attacked. Recent concern around advanced models like Anthropic's Mythos has less to do with what they are today and more to do with what they represent. These systems are becoming highly effective at identifying weaknesses in software at a scale and speed that was not previously possible, raising the possibility that the balance between attackers and defenders is shifting. At the center of this shift is a concept that has long existed in cybersecurity but is now taking on new urgency: the zero-day vulnerability. A zero-day is a flaw in software that is unknown to the company that created it. Because it has not been discovered or disclosed, there is no fix available, which makes it especially valuable to attackers. In the past, uncovering these vulnerabilities required a high level of technical expertise and time. AI is compressing that process. Models can now scan large codebases, identify patterns that suggest weaknesses, and in some cases outline how those weaknesses could be exploited. The implication is not just that more vulnerabilities will be found, but that they will be found faster than organizations can reasonably respond. That dynamic becomes more concerning when applied to the systems that operate buildings. Modern properties rely on a network of software platforms to manage everything from HVAC and lighting to elevators and access control. Many of these systems were designed in a different era, when connectivity was a feature rather than a liability. As a result, they often lack the kind of security architecture that has become standard in other industries. 
Some run on legacy operating systems, others depend on third-party vendors for updates, and many are connected to broader networks in ways that were never fully mapped or secured. There are already examples that illustrate how exposed these systems can be. Security researchers have demonstrated the ability to access building management systems through unsecured network connections, adjusting temperatures, shutting down ventilation, or gaining insight into occupancy patterns. In one of the most widely cited cyber incidents, attackers gained access to a major retailer's internal network through credentials tied to an HVAC contractor, eventually leading to a massive data breach. The vulnerability was not in a traditional IT system, but in the infrastructure that helps run a physical space. These kinds of entry points are not rare. They are a byproduct of how buildings have been digitized over time. What changes with AI is the scale at which these vulnerabilities can be identified and exploited. A flaw in a widely used building automation system is no longer just a single point of risk. It can exist across thousands of properties that rely on the same vendor or platform. If an AI model can identify that flaw once, it can theoretically identify it everywhere. That creates a form of systemic exposure that is difficult to contain, especially when many of these systems are not updated regularly or lack centralized oversight. This moment is starting to look less like a continuation of existing cybersecurity trends and more like the beginning of a new phase. The traditional model assumed that vulnerabilities would be discovered gradually and patched over time. AI disrupts that cadence. It accelerates discovery to the point where the volume of known vulnerabilities could outpace the ability to fix them. Security teams are not just defending against attacks, they are managing an expanding backlog of potential risks, many of which may already be exploitable. 
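The backlog argument is essentially a queue: if vulnerabilities are discovered faster than teams can patch them, the open count grows every period. A minimal sketch with made-up weekly rates (the article gives no numbers) illustrates the point. The function name and all rates are hypothetical.

```python
def simulate_backlog(discovered_per_week: int, patched_per_week: int,
                     weeks: int, start: int = 0) -> list[int]:
    """Open-vulnerability count at the end of each week.

    Each week adds newly discovered issues, then patches as many as capacity
    allows. Patching can only close issues that are actually open, so the
    backlog never drops below zero.
    """
    backlog = start
    history = []
    for _ in range(weeks):
        backlog += discovered_per_week
        backlog -= min(patched_per_week, backlog)
        history.append(backlog)
    return history


# Hypothetical rates: patch capacity stays at 40 per week in both scenarios.
before_ai = simulate_backlog(discovered_per_week=30, patched_per_week=40, weeks=4)
with_ai = simulate_backlog(discovered_per_week=90, patched_per_week=40, weeks=4)
print(before_ai)  # [0, 0, 0, 0]
print(with_ai)    # [50, 100, 150, 200]
```

Nothing about the patching process changes between the two runs; only the discovery rate does. That is the article's point: faster discovery alone turns a manageable queue into a steadily growing one.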
At the same time, the tools being used to defend systems are also improving. AI can monitor network activity, detect anomalies, and flag unusual behavior far more efficiently than manual processes. But that symmetry is what makes the current moment so unstable. The same capabilities that allow defenders to act faster also allow attackers to move faster. The advantage no longer lies in having better tools, but in how quickly those tools can be deployed and integrated into a broader security strategy. The built environment sits in an unusual position within this shift. Buildings have become increasingly sophisticated, layered with sensors, connected devices, and centralized management platforms. That sophistication has delivered real gains in efficiency and performance, but it has also expanded the attack surface in ways that are only now being fully understood. Systems that were once isolated are now part of a larger digital ecosystem, and vulnerabilities in one part of that system can have cascading effects elsewhere. There is also a structural challenge that makes this harder to address. Responsibility for building systems is often fragmented across owners, operators, vendors, and service providers. Each may control a different piece of the technology stack, which makes it difficult to create a unified approach to security. When vulnerabilities are discovered, the process of identifying who is responsible for fixing them can be as complex as the technical fix itself. The result is a growing recognition that cybersecurity in buildings is no longer just an IT issue. It is an operational issue, a vendor management issue, and increasingly, a strategic one. As AI continues to accelerate the discovery of vulnerabilities, the focus is likely to shift toward visibility and coordination. Knowing what systems are in place, how they are connected, and where the risks lie becomes just as important as having the tools to defend them. 
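The emphasis on visibility can be made concrete with a toy asset inventory. Because many buildings run the same vendor platforms, one advisory against a single product version maps to every property running it. The vendors, versions, and buildings below are hypothetical. A real inventory would also need to track network connections and which party (owner, operator, or vendor) is responsible for each fix.

```python
from collections import defaultdict

# Hypothetical inventory: building -> list of (system, vendor, version)
INVENTORY = {
    "Tower A": [("HVAC", "VendorX", "4.2"), ("Access control", "VendorY", "1.0")],
    "Tower B": [("HVAC", "VendorX", "4.2"), ("Lighting", "VendorZ", "2.3")],
    "Campus C": [("HVAC", "VendorX", "5.0"), ("Elevators", "VendorY", "1.0")],
}


def index_by_product(inventory):
    """Invert the inventory so each (vendor, version) lists where it runs."""
    index = defaultdict(list)
    for building, systems in inventory.items():
        for system, vendor, version in systems:
            index[(vendor, version)].append((building, system))
    return index


def exposure(index, vendor, version):
    """Every (building, system) affected by a flaw in one product version."""
    return sorted(index.get((vendor, version), []))


idx = index_by_product(INVENTORY)
print(exposure(idx, "VendorX", "4.2"))
# [('Tower A', 'HVAC'), ('Tower B', 'HVAC')]
```

Indexing by product rather than by building is the design choice that matters here: when a flaw is found once, the question "where else does this run?" becomes a single lookup instead of a property-by-property audit.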
The industry is unlikely to face a single moment that defines this transition. Instead, the shift will unfold gradually, as one vulnerability after another is discovered and addressed. But the underlying change is already underway. Cybersecurity is moving from a reactive discipline to a continuous process shaped by the speed of AI. Buildings, now deeply intertwined with software, are part of that process, whether they were designed for it or not.

'Is Claude Down?' Anthropic's AI Faces Global Disruption As Users Flood Downdetector Amid Reports Of Login Errors And Service Glitches

A new outage has affected Anthropic's Claude AI platform, prompting many users to ask whether Claude is down. Many users had difficulty accessing the chatbot, website, and related services. Data from outage-tracking site Downdetector showed a significant rise in user complaints, indicating widespread outages across multiple regions. Many users had trouble logging into the service, while others hit errors when accessing the AI assistant, raising further concern about whether Claude was down worldwide.

Is Claude Down: User Reports of Login/Connection Issues

Most of the outage appeared to affect login access and connectivity. Many users could not log into the service or saw repeated failures when attempting to access the platform. Anthropic confirmed the outage on its status page and stated it was investigating elevated error rates for Claude services. Because the outage affected both Claude.ai and related tools, "is Claude down" became a widely searched question.

Downdetector Reported a Spike in Complaints About Claude

As the issues became apparent to thousands of users around the globe, many vented their frustrations on social media, posting screenshots of error messages and asking whether anyone else was experiencing the same problems. Reports also indicated that the outage affected not just one area or feature but multiple parts of the Claude platform, including the chat function, the API service, and developer tools, prompting further concerns about reliability from users who depend on Claude for their daily tasks.

Resolution: The Issue Is Resolved After a Brief Outage

Anthropic confirmed that it had identified the issue behind the downtime and quickly produced a fix.
By the evening of the same day, there was no longer any indication that the downtime would continue. The company reported that it had identified the issue affecting Claude.ai and Claude Code logins and had restored access to both services while it continued monitoring.

Is Claude Down: Repeated Outages Raise Concerns

Users had been experiencing interruptions to Claude for many days before this latest issue. The most noticeable outages, involving login failures, API errors, and general access problems, occurred at the beginning of this month, but a considerable number of further outages have been reported throughout the month as well. The repeated interruptions have prompted discussion about the pressure that rising public demand for AI tools is placing on Claude's infrastructure. As AI use grows rapidly, even short disruptions like this one leave many users asking the same question each time: Is Claude down?

Anthropic's Mythos isn't threatening bitcoin. The real AI risk is at crypto exchanges

There's a saying in crypto: not your keys, not your coins. It's typically a warning that it's safer to keep your crypto in an offline wallet that's harder to attack than an online exchange where you don't have control over the keys to the wallet housing the funds. So far, however, the complexity of learning how to self-secure and custody crypto has been prohibitive to many prospective crypto investors, which has allowed exchanges like Coinbase and Gemini Space Station - two of the earliest in the game - to thrive. But with money now more digital than ever and AI getting stronger, that narrative may be flipping. AI like Anthropic's Mythos, which is built to find software vulnerabilities at extreme speed with unprecedented accuracy, could help usher in a new wave of attacks on companies built on and around crypto - an industry already working to overcome a years-long reputation for hacks, scams, and exploits. Some investors in crypto - whose universe of investable assets has quickly grown beyond coins to include ETFs and equities covering various crypto themes - can take comfort in the fact that the Bitcoin blockchain itself has never been hacked and has operated securely and without interruption since 2009. The threat of Mythos-like AI probably won't change that. "Bitcoin is fundamentally secured by cryptography and a set of shared rules," said Yan Pritzker, chief technology officer at Swan Bitcoin. "The cryptography itself isn't affected by AI, and the shared rules are enforced by a network of people running Bitcoin nodes all over the world. So while AI can influence how those people think in some way, it really is very difficult to modify the rules of the network without really full consensus from the network." Exchanges like Coinbase, Robinhood, Gemini or Bullish, on the other hand, are perhaps the most at-risk areas due to the large amounts of personally identifiable information and money they handle.
"Any other system that deals with money in a real-time basis is going to be a place that we try to look for cyber security holes," said Cosmo Jiang, general partner at Pantera Capital. "While the threat factor exists for everyone, it's most likely that financial services companies or exchanges are going to be the ones that are targeted first." Owen Lau, an analyst at Clear Street, said investors should consider the reputational risk AI agents pose to crypto exchanges when assessing downside risk. Specifically, he said, they can generate large volumes of scam emails and create synthetic identities, building detailed profiles that pull information from the exchanges or other retail platforms. While AI can create new kinds of threats, the biggest exchanges argue it also presents an opportunity for them to improve security for their users. Coinbase and Binance both said they're keen to invest in and use AI to make their platforms more secure. "Mythos, and future models like it, will enable even deeper testing of software and systems at scale," Philip Martin, chief security officer at Coinbase, said in a statement shared with CNBC. "This will accelerate digital threats as well as digital defense." He also said that although Mythos "is a highly restricted model not available to the public, Coinbase is in close communication with Anthropic." Similarly, Binance's chief security officer Jimmy Su said the company is evaluating "how advances in AI can create new opportunities to strengthen cybersecurity while also introducing new risks. As part of that work, we are experimenting with AI to help us identify vulnerabilities faster and more broadly across our systems." Lau said the threat of a Mythos-like AI isn't yet clear or specific enough to make him reconsider his bullish ratings or his price targets, and he cautioned against letting short-term fear and uncertainty drive investors away from the sector.
"Near term, it will become a negative narrative for these kinds of companies," he said of crypto exchanges. "But longer term, I would see them as one of the first batches that comes out and can protect against these AI agents."

Coinbase to Collaborate With Anthropic on Advanced AI Defense Systems As The Crypto Security Race Begins

Coinbase is said to be in talks with Anthropic, the developer of one of the most closed-off AI systems around: a model called Claude Mythos Preview. At first, it sounds like just another partnership rumor. But look below the surface and it is shorthand for something larger. Crypto exchanges aren't just building trading platforms anymore; they're bracing for a different breed of threat landscape. Claude Mythos Preview is not publicly available. It exists under a limited program named Project Glasswing, which is specifically geared toward advanced security use cases, and it is available only to a handful of partners. According to reports, Coinbase is looking to be one of them. That alone says a lot. This isn't about general AI applications like chatbots or automation; it's about defense. Project Glasswing targets some of the biggest risks facing AI systems today, two of the most prominent being hallucination and prompt injection attacks. Hallucinations occur when AI systems generate false or misleading information. Prompt injection, in contrast, involves tricking AI systems into doing things they aren't supposed to do. Both are serious problems, particularly in high-stakes arenas such as financial platforms, and that's where Glasswing steps in. Security has always been a crucial business consideration for crypto exchanges, but lately the stakes have risen even further. Attacks are growing more sophisticated, more targeted, and sometimes more automated. That's forcing platforms such as Coinbase to recalibrate their strategy: instead of just responding to threats, they're starting to develop proactive defense systems. AI is becoming one part of that strategy. Traditional security tools rely heavily on preconfigured rules. They are effective against known threats but have difficulty identifying new or evolving attack patterns. AI changes that dynamic.
It can analyze patterns, detect anomalies, and react to them instantly. For a platform moving billions of dollars in user assets, that sort of capability is becoming necessary, which may be why Coinbase is seeking access to more advanced models. One of the more interesting aspects of Project Glasswing is its focus on runtime attestation and formal verification. In other words, it's about ensuring that AI systems do what they're supposed to do, especially while in operation. That's a big shift: the emphasis is moving from building powerful models to making them trustworthy. That makes a big difference for financial systems, because a powerful AI that cannot be trusted is, in fact, a liability. There's also a larger layer to this. AI is no longer merely a technical problem; it's becoming a geopolitical one. These questions are beginning to inform how companies, and even countries, approach AI development, and initiatives like Project Glasswing are right at the center of that conversation.

Hardware may play a bigger role

Another interesting angle is how hardware will evolve alongside all of this. There is a growing expectation that security features will be integrated directly into AI accelerators at companies such as Intel and AMD. That would be a step in a different direction: security would no longer depend solely on software-level protections but would be built into the hardware. It's still early, but the shape is getting clearer. The pressure is on for crypto exchanges. They handle enormous volumes of assets, which makes them attractive targets. Meanwhile, user expectations for security are rising. People don't simply want speedy transactions; they want confidence that their assets are secure. That's encouraging platforms like Coinbase to consider new tools and technologies. This has as much to do with trust as with defense.
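The article contrasts preconfigured rules with systems that learn what normal activity looks like. The sketch below illustrates that contrast in the simplest possible way. It is not AI, and it is not how Coinbase or Project Glasswing works. It uses a basic statistical baseline (a z-score over one account's past withdrawals) as a stand-in to show why a fixed rule can miss activity that is unusual for a particular account. The limit, cutoff, and amounts are all hypothetical.

```python
from statistics import mean, stdev

FIXED_LIMIT = 10_000.0  # hypothetical static rule: flag withdrawals above this


def rule_flags(amount: float) -> bool:
    """Rule-based check: the same threshold for every account."""
    return amount > FIXED_LIMIT


def anomaly_flags(history: list[float], amount: float, z_cutoff: float = 3.0) -> bool:
    """Flag `amount` if it sits more than `z_cutoff` standard deviations
    above this account's own historical mean."""
    if len(history) < 2:
        return False  # not enough data to estimate a baseline
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return amount > mu  # perfectly regular history: any increase stands out
    return (amount - mu) / sigma > z_cutoff


history = [120.0, 95.0, 150.0, 110.0, 130.0]  # small, regular withdrawals
suspicious = 4_800.0

print(rule_flags(suspicious))              # False: under the fixed limit
print(anomaly_flags(history, suspicious))  # True: far outside this account's pattern
```

A per-account baseline catches the US$4,800 withdrawal that a global US$10,000 rule lets through. Production systems would combine many more signals, but the underlying trade-off is the one the article describes.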
Beyond pure security, there's another layer to this: trust. Trust has always been a sensitive subject in crypto. Users look to platforms to secure assets, complete transactions, and ensure authenticity. By investing in advanced AI security systems, exchanges are also seeking to bolster that trust. It's not only about preventing attacks; it's also about assuring users that every conceivable effort is being made. Nothing has been confirmed so far. Negotiations continue, and Claude Mythos remains accessible only behind closed doors. But the very possibility of this collaboration is telling, because it reflects the direction the industry is heading. Going forward, it is becoming harder to separate AI from cybersecurity. This isn't the type of news that moves markets from one day to the next, but it reflects something deeper. Crypto platforms are entering a phase where security isn't only a feature; it's a fundamental differentiator. If deals like this go ahead, they could influence how the next wave of exchanges is built. For now, it's something to watch, because the evolution taking place here could shape how digital assets are secured going forward. Disclosure: This is not trading or investment advice. Always do your research before buying any cryptocurrency or investing in any services.