News & Updates

The latest news and updates from companies in the WLTH portfolio.

Anthropic announces Claude Opus 4.7 beating OpenAI GPT-5.4 in agentic coding benchmarks

Anthropic today announced its latest flagship model, Opus 4.7. As expected, Opus 4.7 brings significant improvements over Opus 4.6 in complex software engineering tasks. The startup claims that Opus 4.7 can handle long-running tasks more consistently while strictly following given instructions. Another notable upgrade over Opus 4.6 is its much-improved vision capability, allowing it to analyze higher-resolution images. Anthropic highlighted that while Opus 4.7 performs better than Opus 4.6, it is still significantly less capable than Claude Mythos Preview. In Anthropic's benchmark comparison, Opus 4.7 trails Mythos Preview by a wide margin in almost all benchmarks. Still, with Opus 4.7, Anthropic is once again leading agentic coding benchmarks, beating OpenAI's GPT-5.4. Unlike Mythos Preview, which is limited to a handful of organizations, Opus 4.7 is available today across all Claude products, the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. Opus 4.7 is priced the same as Opus 4.6 at $5 per million input tokens and $25 per million output tokens. Anthropic also said that Opus 4.7 includes built-in safeguards that automatically detect and block requests related to high-risk cybersecurity uses. Security professionals who want to use Opus 4.7 for legitimate cybersecurity purposes can apply to join Anthropic's new Cyber Verification Program. Anthropic noted that developers should plan for two changes when migrating from Opus 4.6 to Opus 4.7. First, Opus 4.7 uses an updated tokenizer that improves how the model processes text. This tokenizer uses roughly 1.0x to 1.35x as many tokens for the same text, depending on the content type. Second, Opus 4.7 tends to "think" more at higher effort levels, which can result in higher output token usage. Alongside Opus 4.7, Anthropic today also announced a new /ultrareview slash command in Claude Code. It creates a dedicated review session that reads through changes and flags bugs and design issues.
Claude Code users on the Pro and Max plans will get three free ultrareviews during the preview phase.

Perplexity's Personal Computer AI assistant feature launches on Mac for subscribers - 9to5Mac

Last month, Perplexity announced Personal Computer, a Mac-based personal AI assistant. Today, the Mac-specific version of the company's Perplexity Computer system, inspired by OpenClaw, is launching. Perplexity unveiled Personal Computer on March 11 by inviting interested users to join a waitlist. Now the company says it's rolling out Personal Computer to everyone on the waitlist and all Max subscribers starting today. More from Perplexity on what Personal Computer can do below: Personal Computer integrates with the Perplexity Mac App for secure orchestration across your local files, native apps, and browser. Personal Computer securely connects to any folder to search, read, and write files locally. It can access and work across iMessage, Apple Mail, Calendar and other native Mac apps. When set up on a Mac mini, Personal Computer can run 24/7 in the background across all your apps and files. Start a task from your iPhone, and Personal Computer can operate on your desktop and local files using 2FA. Perplexity Max is the company's $200/month subscription. Perplexity Pro, their $20/month plan, doesn't include Personal Computer access. However, it does support Perplexity Computer, the less capable web-based version of the AI assistant feature.

Wall Street quants see an edge in Polymarket earnings forecasts

It's the latest indication that prediction markets may be a useful source of information for investors, and one day emerge as a rival to sell-side analysts, whose job it is to forecast earnings. A working paper from London Business School and Yale University researchers updated in early April concludes that the nascent platforms are highly accurate, incorporate new information more quickly than analysts and avoid some of the biases built into Wall Street estimates.

Claude Opus 4.7 Goes Live, but Anthropic's Most Powerful AI Still is "Mythos"

The truly most powerful LLM continues to be kept under wraps, but active users are still being taken care of: Anthropic today made its latest language model Claude Opus 4.7 generally available. The company positions it as the direct successor to Opus 4.6 and highlights improvements primarily in software development, image processing, and instruction adherence. At the same time, Anthropic also acknowledges weaknesses in its announcement. How well Claude Opus 4.7 actually performs in practice remains to be seen. Neither arena.ai nor Artificial Analysis yet have test results and benchmarks for it to compare it with other LLMs available on the market. According to Anthropic, Opus 4.7 has been particularly improved for demanding programming tasks. The company claims users can now hand off difficult coding tasks that previously required close supervision to the model with greater confidence. Opus 4.7 is said to handle complex, lengthy tasks with greater care and consistency, and to verify its own results before outputting them. Another advertised advancement concerns visual perception. Opus 4.7 is said to be able to process images with a resolution of up to 2,576 pixels on the long side, which Anthropic states is more than three times that of earlier Claude models. This is intended to enable applications that rely on fine visual details, such as analyzing complex diagrams or working with high-resolution screenshots. Anthropic emphasizes that Opus 4.7 follows instructions significantly more literally and completely than its predecessor. However, the company explicitly points out that this can be a double-edged sword: prompts written for older models may produce unexpected results with Opus 4.7, because earlier models interpreted instructions more loosely or skipped parts of them. The model is said to be better at using file-system-based notes across multiple sessions. 
According to Anthropic, it can retain important information from previous sessions and use it for follow-up tasks, so that less context needs to be re-entered. Anthropic describes Opus 4.7's safety profile as similar to its predecessor, but identifies specific areas where the model performs worse. For instance, Opus 4.7 is reportedly more prone to providing excessively detailed harm-reduction guidance on controlled substances. The internal alignment assessment rated the model as "largely well-aligned and trustworthy, though not fully ideal in its behavior." Anthropic also makes clear that Opus 4.7 is not the most capable model in its own portfolio. The model Claude Mythos Preview surpasses Opus 4.7 in overall performance and, according to Anthropic, also shows the lowest rates of misconduct in evaluations. Mythos Preview remains limited in its availability for the time being. In the context of the company's own Project Glasswing, which deals with AI risks in the field of cybersecurity, Anthropic states it has actively sought to reduce Opus 4.7's cyber capabilities compared to Mythos Preview. The model ships with automatic safety mechanisms designed to detect and block requests involving prohibited or high-risk cybersecurity applications. Security professionals can apply for a verification program to use the model for legitimate purposes such as penetration testing. Anthropic points out that switching from Opus 4.6 to Opus 4.7 may be associated with higher token consumption. An updated tokenizer processes the same text using an estimated 1.0 to 1.35 times as many tokens as before. In addition, the model produces more output tokens at higher effort levels. The company recommends measuring actual additional consumption using real traffic before carrying out a full migration.

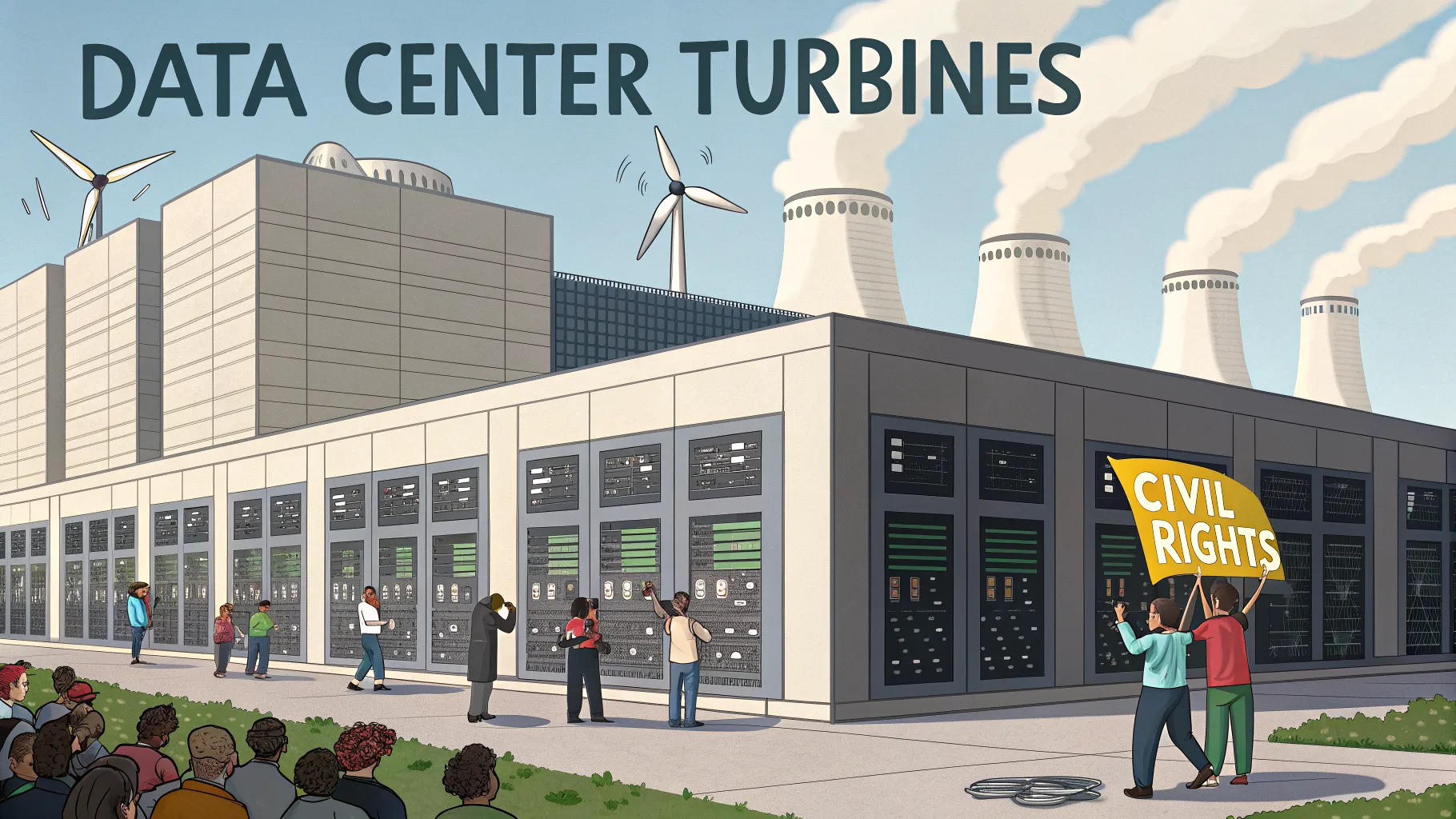

Civil Rights Group Sues xAI Over Turbines

The nation's largest civil rights organization filed suit Tuesday against xAI and a subsidiary, alleging they ran more than two dozen gas turbines in Mississippi to power the Colossus 2 data center without proper authorization. The complaint says the operation threatens nearby communities by adding harmful air pollution. The case could test how fast-growing data centers balance energy needs, local health, and environmental rules. The lawsuit centers on a cluster of turbines said to support a high-demand computing site. The filing claims the units were operated without the full range of permits required under state and federal law. It also raises concerns about nitrogen oxides, fine particles, and other pollutants linked to gas-fired generation. They "illegally operated more than two dozen gas turbines in Mississippi to power its Colossus 2 data center, posing a health risk to local residents." The civil rights group argues these emissions could increase asthma attacks, respiratory illness, and cardiovascular risks in communities near the facility. It seeks court oversight and penalties, and it may push for limits or shutdowns until permits and controls are verified. Modern data centers use huge amounts of electricity. Operators often look for locations with low costs, available land, and access to power. When grid capacity is tight, on-site or nearby gas turbines can bolster supply and reduce outages. But onsite generation can trigger strict air rules, including New Source Review and Title V permits under the Clean Air Act, as well as state-level standards in Mississippi. Experts say compliance comes down to three points: the size and number of turbines, fuel type and controls, and the hours of operation. Each factor affects emissions and whether higher-level permits are required before flipping the switch. Public health advocates in the South point to longstanding disparities in exposure to industrial pollution. 
Many small towns sit near power plants, highways, and factories. Adding new sources, even efficient ones, can worsen local air quality. Residents with asthma or heart disease may be most at risk. The filing frames the case as an environmental justice issue. It argues that low-income and minority neighborhoods already face higher exposure and should not bear additional burdens from private data infrastructure. Supporters of the suit say strong monitoring and transparent reporting are needed, along with clear emergency and maintenance plans for the turbines. Mississippi and other states have courted data centers for jobs, tax base growth, and construction spending. Local leaders often highlight short-term construction jobs and long-term technical roles. But these gains can run headlong into permitting rules when companies add on-site power. Legal analysts say courts will weigh the permitting record, emissions data, and whether the companies sought approvals ahead of time. If the plaintiffs show unpermitted operation, penalties and operational limits are possible. If permits are in place, the case may hinge on alleged violations of permit conditions. The court will likely set a schedule for responses and early motions in the coming weeks. Regulators could also review monitoring records, stack tests, and any best-available control technologies on the turbines. A key question is whether the site must reduce operations while the case proceeds. For the broader tech sector, the case highlights the strain between grid limits and round-the-clock computing demand. Companies building large-scale AI and cloud facilities face mounting pressure to source cleaner power, add storage, and site facilities near transmission upgrades. Communities are asking for clearer health safeguards before projects move ahead. The outcome will shape how fast-growing data infrastructure can expand in regions with tighter air standards. 
It may also set expectations for public disclosure, air monitoring near facilities, and the role of on-site generation. Readers should watch for any court orders on turbine operations, new permit filings, and moves to add cleaner power sources to support the data center.

ChatGPT maker OpenAI shifts its focus to business users amid Anthropic pressure

The same ChatGPT chatbot that gave OpenAI's chief financial officer Sarah Friar a tilapia recipe for a recent Sunday night dinner at home is also now doing her most mundane tasks at work like summarizing her emails and Slack messages. Friar and other company executives are banking OpenAI's future on more of the latter as it shifts its focus to business-oriented products while shedding some of its consumer offerings as a pathway to profitability. OpenAI says it will introduce a new artificial intelligence model for "high-value professional work" as the company faces heightened competition with rival Anthropic in attracting corporate customers to adopt AI assistants in their workplaces. "You'll see a new model coming from us in short order. We feel very excited about it," Friar said in an interview with The Associated Press. OpenAI boasts of more than 900 million weekly users of its core ChatGPT product, and Friar said about 95% of them "don't pay anything" for the popular chatbot. But while all those interactions build habits and reliance, they also strain the costly computing resources needed to power the company's AI systems and highlight the need for big business customers to help pay the bills. OpenAI, valued at $852 billion, and Anthropic, valued at $380 billion, both lose more money than they make, putting the privately-owned San Francisco-based AI research laboratories in a fierce competition to generate more revenue as they race toward becoming publicly traded on Wall Street. A push to improve performance and sales of OpenAI's business-oriented products -- already Anthropic's bread and butter -- has driven OpenAI to abandon some consumer initiatives, like the AI video generator app Sora. "I think it was a little heartbreaking, but we're like, OK, it's not the main event right now," Friar said. "We need to make sure that our new model that's coming has enough compute." 
Codenamed Spud, OpenAI says its "smartest model yet" offers "stronger reasoning, better understanding of intent and dependencies, better follow-through and more reliable output in production." It will be part of OpenAI's answer to Anthropic's new Claude Mythos, which Anthropic claims is so "strikingly capable" that it is limiting its use to select customers because of its apparent ability to surpass human cybersecurity experts in finding or exploiting computer vulnerabilities. While most people can't use Mythos, Anthropic also on Thursday released Opus 4.7, describing it as its most powerful "generally available" model. Friar, the former CEO of neighborhood social platform Nextdoor, said business customers accounted for about 20% of OpenAI's revenue when she was hired in 2024 as chief financial officer. She said it's now 40% and expected to account for half of OpenAI's sales by the end of the year. It's a sharp turnaround from late last year, when OpenAI co-founder and CEO Sam Altman was promoting a now-shuttered Sora partnership with Disney, launching a plan to sell ads on ChatGPT and floating the idea of letting ChatGPT engage in erotica with paid adult users. Altman said on the "Mostly Human" podcast earlier this month that a sharper focus was needed -- and Friar agrees. "Tech companies, when they're growing, it's just this natural thing that happens. There's so many cool things you could do," she said, adding that companies can end up doing "really badly" if they do too many things, while "great companies are very good at, in a reasonable period of time, kind of doing that winnowing down and refocusing and it's super painful." Signaling that shift was the hiring three months ago of Slack CEO Denise Dresser to be OpenAI's first chief revenue officer. 
Dresser said in a recent AP interview that she has been laser-focused on meeting with corporate leaders and positioning OpenAI as the go-to platform for workplaces employing AI agents to automate a variety of computer-based job tasks. "It's really clear to me that companies are past the experimentation phase and they're into using AI to do real work," Dresser said. "Leaders at companies are recognizing that AI is probably the most consequential shift of their lifetime." But those leaders also have a choice, namely Anthropic's Claude that has become widely used by software professionals. Founded in 2021 by a group of ex-OpenAI leaders who said they wanted to prioritize AI safety, Anthropic has positioned itself as the more responsible AI vendor. The distinction drew attention when President Donald Trump's administration punished the startup after a contract dispute over AI use in the military, and Altman used the opportunity to cement OpenAI's own deal with the Pentagon. Consumer interest in Anthropic surged and the company said its annualized revenues hit $30 billion, a higher number than what OpenAI has reported, though they measure it differently. Friar and Dresser declined to reveal OpenAI's latest sales but both have suggested that Anthropic's number is inflated because it doesn't account for revenue it must share with cloud computing providers Amazon and Google. Even so, it remains a tight competition that's also tied to the health of the stock market and the future of the economy. "They're likely quite close," said Luke Emberson, a researcher at nonprofit institute Epoch AI. "Certainly the trends show Anthropic is growing much faster than OpenAI. If that continues, they're likely to cross soon." 
The urgency led Dresser to send a memo to OpenAI employees on Sunday, first reported by The Verge, that asserted that Anthropic's coding focus "gave them an early wedge" but expressed confidence that OpenAI has the "real structural advantage" as AI usage expands beyond software developers and OpenAI builds enough computing capacity to operate its AI systems. "Their story is built on fear, restriction, and the idea that a small group of elites should control AI," Dresser's memo said of Anthropic. "Our positive message will win over time: build powerful systems, put in the right safeguards, expand access, and help people do more." But for skeptics of the financial viability of the AI industry, the trajectory of both money-losing companies is alarming as smaller startups increasingly become dependent on their AI tools. Anthropic has imposed rate limits on heavy users, forcing some to wait for hours to use Claude, and both companies have set up service tiers that reward premium payers, said author and AI critic Ed Zitron. "It's what I call the subprime AI crisis," Zitron said. "People built their lives and they built their businesses on top of these companies that, as they try and save money, will start turning the screws." One thing that both AI leaders and critics agree on is that it is an expensive technology, though whether it is worth the cost in electricity-hungry AI computers remains to be seen. "People will say, well, 'Once they go public, they're safe.' That's not true," Zitron said. "Public companies can and will die, especially ones that are dependent on $100 billion to $200 billion every year or so, just to keep breathing."

Anthropic launches new Claude Opus 4.7 model with stronger coding and vision capabilities

Anthropic rolled out Opus 4.7 broadly one week after unveiling Mythos Preview, as OpenAI and Google also pushed fresh model updates in March and April. Anthropic on Thursday broadly released Claude Opus 4.7, its latest flagship model, framing it as a direct upgrade over Opus 4.6 with stronger performance in advanced software engineering, complex multistep tasks, and professional knowledge work. The company said the model is available across Claude products and its API, as well as through Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry, with pricing unchanged from Opus 4.6 at $5 per million input tokens and $25 per million output tokens. Anthropic said Opus 4.7 improves instruction following, handles long-running tasks with greater rigor, and delivers better high resolution vision support, allowing images up to 2,576 pixels on the long edge. In its own testing, the company said the model posted stronger results than Opus 4.6 across coding, finance, document analysis, and agent style workflows, while also introducing a new xhigh effort setting aimed at balancing reasoning depth and latency. Anthropic also warned that prompt behavior may change because Opus 4.7 follows instructions more literally than earlier Claude models. The launch is especially notable because it comes just nine days after Anthropic introduced Claude Mythos Preview through Project Glasswing on April 7. Anthropic described Mythos Preview as its most capable model yet and said the initiative was built to help secure critical software because the model showed unusually strong cybersecurity capabilities. In the Opus 4.7 release, Anthropic said Mythos Preview remains limited rather than broadly available, and that Opus 4.7 is the first model being deployed with new safeguards designed to detect and block prohibited or high risk cyber requests before any wider Mythos class release. The release also lands amid a broader burst of frontier model launches from rivals. 
OpenAI introduced GPT 5.4 on March 5 and described it as its most capable and efficient frontier model for professional work, with state of the art performance in coding, computer use, tool search, and a 1 million token context window. Later in March, OpenAI also launched GPT 5.4 mini and nano as smaller, faster models optimized for coding and subagent workloads. Google has been updating Gemini on a similarly rapid schedule. The company rolled out Gemini 3.1 Pro in February as its most advanced model for complex tasks, then followed with Gemini 3.1 Flash Lite and Gemini 3.1 Flash Live in March. On April 15, Google added Gemini 3.1 Flash TTS Preview, extending the recent push into lower latency and multimodal use cases.

Anthropic unveils large London office expansion

Anthropic plans to expand its London presence by adding new office space capacity for around 800 employees as it ramps research, safety and commercial operations in the UK. Pip White, head of EMEA North at Anthropic, stated on LinkedIn the AI player currently has more than 200 employees based in London, including around 60 AI safety researchers. The new office space is in London's Knowledge Quarter. The move puts Anthropic alongside other major AI companies already based in the area, as competition for London-based talent and infrastructure continues to increase. "London has become one of our most important hubs outside the US," White said. "The work happening in this city sits at the heart of both our research and commercial momentum in Europe." She explained the UK is a uniquely strong base for Anthropic because it combines three rare advantages: organisations which understand the stakes of AI safety; a government which takes the technology seriously; and a deep pool of AI talent. "Europe's largest businesses and fastest-growing startups are choosing Claude, and we're scaling to match," she stated. The move comes after the US Department of War designated Anthropic as a supply-chain risk in March when the AI company refused to grant the Pentagon unfettered rights to deploy its models over concerns about domestic surveillance and use in lethal autonomous weapons. In February, Anthropic raised $30 billion from a group of backers, valuing the company at $380 billion post‑investment. CNBC reported earlier this week rival OpenAI plans to open its first permanent London office with a capacity of over 500 employees.

Anthropic releases a new Opus model amid Mythos Preview buzz

Anthropic has released its most powerful "generally available" model to date: Claude Opus 4.7. The company called it a step up from Opus 4.6 for advanced software engineering tasks, particularly in complex coding areas that in the past required more hand-holding. It's also supposed to be better at analyzing images and following instructions, and it can exhibit more "creativity" when creating slides and documents, per Anthropic. Opus 4.7 comes on the heels of Mythos Preview, the buzzy cybersecurity-focused model Anthropic announced earlier this month, which the company has said is its most powerful model overall. Comparatively, Opus 4.7 is much more limited. In Opus 4.7's system card, Anthropic wrote that Opus 4.7 doesn't even advance the company's "capability frontier," since Claude Mythos Preview received higher results "on every relevant evaluation." For security reasons, Anthropic is only currently making Mythos Preview available privately to select partners, such as Nvidia, JPMorgan Chase, Google, Apple, and Microsoft. "We stated that we would keep Claude Mythos Preview's release limited and test new cyber safeguards on less capable models first," Anthropic wrote in a blog post. "Opus 4.7 is the first such model: its cyber capabilities are not as advanced as those of Mythos Preview (indeed, during its training we experimented with efforts to differentially reduce these capabilities)." The company said it's releasing the new model with additional cybersecurity safeguards compared to Opus 4.6 and that findings from the deployment of those safeguards "will help us work towards our eventual goal of a broad release of Mythos-class models." The company added that security professionals wishing to use the model for cybersecurity purposes, like vulnerability research, could join its new Cyber Verification Program, which ostensibly would let up on some of the safeguards Anthropic introduced for Opus 4.7. 
Early testers for Opus 4.7 included Anthropic customers like Intuit, Harvey, Replit, Cursor, Notion, Shopify, Vercel, and Databricks. Pricing remains the same as Opus 4.6, at $5 per million input tokens and $25 per million output tokens, Anthropic said.

Anthropic launches 'Claude Opus 4.7' with stronger coding and vision capabilities - The Tech Portal

Anthropic has released Claude Opus 4.7, an upgraded version of its flagship AI model with stronger coding and vision capabilities. The model can handle complex software engineering tasks more independently and now supports image inputs up to 2,576 pixels on the long edge. It also introduces built-in safeguards to detect and block high-risk cybersecurity use. While it improves on Opus 4.6, it remains less powerful than Anthropic's restricted Mythos Preview model. Building on the foundation laid by Opus 4.6, the new model shows clear gains in handling multi-step programming workflows that previously required close human supervision. These include tasks like large-scale code refactoring, debugging across multiple files, and maintaining logical consistency in complex systems over extended interactions. Another major highlight of Opus 4.7 is its improved multimodal capability. By increasing the maximum image resolution it can process to 2,576 pixels on the long edge, over three times the limit of earlier Claude models, the system becomes far more effective in visually intensive tasks. This includes interpreting dense dashboards, analyzing UI screenshots, reading technical diagrams, and assisting with frontend development. The Dario Amodei-led firm has introduced several technical upgrades to improve developer control and usability. A new 'xhigh' effort level sits between the existing high and max settings, allowing users to better balance reasoning depth with response speed. The company has also launched task budgets in public beta, allowing API users to limit how much compute a task can use, helping manage costs in long-running or agent-based workflows. Commercially, Anthropic has kept pricing unchanged from Opus 4.6, maintaining rates at $5 per million input tokens and $25 per million output tokens. Even Claude Code now includes an 'ultrareview' command for deeper code inspection and more effective bug detection. 
In terms of performance, Opus 4.7 shows clear improvements over Opus 4.6, scoring higher in finance-focused agent evaluations and on GDPval-AA, a benchmark measuring AI performance in economically valuable tasks like finance and legal work. Another key change is a new tokenizer, which can produce between 1.0 and 1.35 times as many tokens for the same input, depending on content type. However, despite all such advances, Anthropic has been explicit about the model's positioning within its broader AI portfolio. Opus 4.7 does not represent the company's most powerful system. That distinction belongs to Mythos Preview, a more advanced model that remains under restricted access due to safety concerns. Safety features are a key part of the latest release. The model includes systems that automatically detect and block prompts related to prohibited or high-risk cybersecurity activities. Compared to Mythos Preview, its cyber capabilities have been deliberately reduced during training to lower the risk of misuse. At the same time, Anthropic has introduced a Cyber Verification Program, allowing vetted security professionals to access the model for legitimate use cases like vulnerability testing and defensive research. The latest model is being released across Claude apps, its API, and major cloud platforms like Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. The Tech Portal is published by Blue Box Media Private Limited.
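The pricing and tokenizer figures reported above lend themselves to a quick back-of-the-envelope estimate. The following is a minimal sketch in Python, not Anthropic's own method: it takes the reported $5 and $25 per million token rates and the reported 1.0x to 1.35x tokenizer range, and assumes (this is an assumption, not something the article states) that the multiplier applies equally to input and output tokens. The function names and the sample traffic volumes are hypothetical, and the extra output tokens from higher effort levels are not modeled.

```python
# Reported list prices (USD per million tokens), unchanged from Opus 4.6.
INPUT_PRICE_PER_MTOK = 5.00
OUTPUT_PRICE_PER_MTOK = 25.00

# Reported range for how many tokens the new tokenizer produces
# relative to the old one for the same text.
TOKENIZER_FACTOR_RANGE = (1.0, 1.35)


def cost_usd(input_tokens: float, output_tokens: float) -> float:
    """Cost in USD for a given token volume at the reported rates."""
    return (
        input_tokens * INPUT_PRICE_PER_MTOK
        + output_tokens * OUTPUT_PRICE_PER_MTOK
    ) / 1_000_000


def migration_cost_range(input_tokens: float, output_tokens: float) -> tuple[float, float]:
    """Best-case and worst-case cost after migration.

    Assumption: the tokenizer factor scales input and output token
    counts alike. Additional "thinking" tokens are not included.
    """
    low, high = TOKENIZER_FACTOR_RANGE
    return (
        cost_usd(input_tokens * low, output_tokens * low),
        cost_usd(input_tokens * high, output_tokens * high),
    )


if __name__ == "__main__":
    # Hypothetical monthly traffic measured under the old tokenizer.
    low, high = migration_cost_range(200_000_000, 40_000_000)
    print(f"Estimated monthly cost: ${low:,.2f} to ${high:,.2f}")
```

With the hypothetical volumes shown, the estimate runs from about $2,000 to about $2,700 per month. Real usage may differ, so measuring on actual traffic remains the more reliable approach.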

Blue Origin, SpaceX eyeing Cape Canaveral rocket launches in coming days

Blue Origin crews are prepping to launch a huge New Glenn rocket as early as Sunday, April 19 -- potentially creating a sunrise spectacle for oceanfront residents of Cocoa Beach and Cape Canaveral. The Jeff Bezos-founded space company has yet to publicly announce a target date. But a Federal Aviation Administration operations plan advisory shows the primary launch window extends from 6:45 a.m. to 12:19 p.m. Sunday, April 19, at Cape Canaveral Space Force Station. The FAA backup window for Blue Origin's NG-3 mission opens during similar hours Monday, April 20. The New Glenn will hoist AST SpaceMobile's Block 2 BlueBird satellite up into low-Earth orbit. On Thursday, April 16, Blue Origin CEO Dave Limp tweeted a video of the massive white rocket's 19-second hot-fire exercise, which served as a key pre-liftoff test at Launch Complex 36. Blue Origin's maiden New Glenn launch took place at 2:03 a.m. Jan. 16, 2025, brightly illuminating the early morning darkness at the Cape. Though the mission was deemed a success, the rocket's first-stage booster failed to land atop the company drone ship Jacklyn in the Atlantic Ocean. The second New Glenn liftoff on Nov. 13, 2025, produced a space-industry landmark. That rocket deployed NASA's Mars-bound ESCAPADE spacecraft -- and the booster landed on Jacklyn 375 miles offshore. Nicknamed Never Tell Me the Odds, that now-refurbished booster will make its second flight during the upcoming NG-3 mission.

SpaceX to launch military GPS satellite from Cape

Also potentially on tap: A SpaceX Falcon 9 rocket may launch the GPS III-8 satellite for the Space Force on a national security mission Monday, April 20, the FAA operations plan advisory indicates. The early morning launch window extends from 2:48 a.m. to 4:05 a.m. from Launch Complex 40 at Cape Canaveral Space Force Station. SpaceX has yet to confirm a launch date.
"GPS III satellites are the most advanced type built thus far, designed to deliver stronger signals, higher accuracy and to provide greater resilience for both military and civilian users," a Space Force press release said. "The series boasts improved timing devices, increased military code (M-code) capability and additional civilian signals that increase urban performance and international compatibility. (This satellite) brings the total constellation to 32 active satellites and 8 backup systems," the press release said. On Jan. 27, a Falcon 9 similarly launched the Space Force's GPS III-9 satellite from the military installation. Rick Neale is a Space Reporter at FLORIDA TODAY, where he has covered news since 2004.

Anthropic releases Claude Opus 4.7, impacting AI model market standings

Anthropic confirmed the release of Claude Opus 4.7 on April 16, 2026, with improvements in task performance and vision capabilities. The Claude 4.7 release market resolved at 100% YES.

Market reaction
The release has also moved the Best AI Model by End of June market, slightly decreasing Google's odds of holding the top spot. Odds in that market are expected to shift by 7% as traders price in Anthropic's latest model against competitors. The question now is whether Google's Gemini 4.0 or OpenAI's GPT-5.5 can overtake Anthropic before the deadline.

Trading context
The Claude 4.7 release date market saw $98,787 in USDC traded over the last 24 hours, with a 24-point drop in odds at 3:31 PM. Volume in the April 17 and April 18 release markets was negligible by comparison, confirming strong trader conviction around the April 16 date.

Why it matters
The Claude Opus 4.7 release fits Anthropic's dual-track strategy: iterating on the Opus series while withholding more powerful models like Claude Mythos for select partners. For traders, this means recalibrating expectations around Google's AI position by June. Buying YES shares in the Best AI Model by End of June market at the current discount pays $1 if Google's upcoming models fail to surpass Anthropic's latest release.

What to watch
Announcements from Google and OpenAI on their respective model releases. Any major updates to the LMSYS leaderboard or Chatbot Arena rankings could move odds in the Best AI Model market.

Tesla Cybertruck Sales Were Inflated by a SpaceX Buying Spree

Sales of Tesla's Cybertruck have been propped up in recent months by Elon Musk's other companies, an unusual arrangement that further indicates the polarizing pickup is failing to appeal to everyday buyers. SpaceX, the Musk-led rocket and satellite maker, accounted for 1,279 -- or more than 18% -- of the 7,071 Cybertrucks registered in the US during the fourth quarter, according to registration data that S&P Global Mobility provided to Bloomberg News. The billionaire's other ventures acquired another 60 vehicles during those months. That means almost one in every five Cybertrucks registered during the period were delivered from ...

Can't Get Enough Space Talk? Check Out the SpaceX Launch Schedule for Vandenberg

SpaceX has listed its next four missions flying out of the California base. When NASA finally got the Artemis II off the ground for its mission around the moon, people from all over the world found themselves glued to their TVs and internet as they watched the ship's successful launch, and then virtually followed the progress of the four astronauts on board as they made their trek around the moon and returned to Earth safely. If you're one of those people who couldn't look away as NASA did its work, you may be eager to check out some more launches.

Blue Origin's third New Glenn launch faces key reuse test in rivalry with SpaceX

April 16 (Reuters) - Blue Origin is set to launch its third New Glenn mission on Friday, carrying AST SpaceMobile's BlueBird 7 satellite to low-Earth orbit in a flight that marks a pivotal step for the Jeff Bezos-led company's ambitions. The mission is critical in proving New Glenn, a 29-story heavy-lift rocket, can compete with Elon Musk's SpaceX, by demonstrating reliable booster reuse, a capability that has underpinned Falcon 9's dominance. "The successful flight of New Glenn-3 would end SpaceX's nine-year monopoly on orbital launch vehicle reusability, marking a historic shift toward a competitive, multi-player market," said Micah Walter-Range, president of space consulting firm Caelus Partners.

The mission is scheduled for a launch window between 6:45 a.m. and 12:19 p.m. ET from Cape Canaveral, Florida. Following a series of delays earlier this month, the mission comes amid a surge of activity in the space sector, including a successful NASA Artemis II lunar flyby. The rocket's booster, "Never Tell Me the Odds," previously flew on the NG-2 mission in November and was recovered, setting up this week's milestone attempt. The name is a nod to Han Solo's line in 'Star Wars: The Empire Strikes Back.'

A successful landing would also signal Blue Origin is narrowing a gap with SpaceX, which has confidentially filed for a U.S. IPO targeting a valuation of about $1.75 trillion. Blue Origin said in November it would build a bigger, more powerful variant of its New Glenn rocket.

KEY PAYLOAD
New Glenn is designed for the higher end of the commercial launch market. Its seven-meter payload fairing allows it to carry bulkier payloads, including multiple satellites in a single mission. On NG-3, the rocket will carry AST SpaceMobile's BlueBird 7, the second satellite in its next-generation Block 2 constellation. The satellite features what the company describes as the largest commercial communications array deployed in low-Earth orbit.
Designed to connect directly with smartphones, the satellite is part of an effort to build a space-based cellular broadband network, similar to Amazon's Leo or SpaceX's Starlink. AST SpaceMobile is targeting a constellation of 45 to 60 such satellites by the end of 2026. (Reporting by Akash Sriram in Bengaluru; Editing by Shreya Biswas)

OpenAI's Lead Is Shrinking, And Anthropic's Coming In Hot

OpenAI may still dominate the consumer AI race, but the pace of growth is starting to look less rocket-ship and more down-to-earth. ChatGPT is already huge, and once you're at that kind of scale, every extra chunk of growth gets harder to win. You can see OpenAI leaning into that reality by pushing more toward business customers, where the budgets are bigger and the usage is stickier. The twist is that this is exactly where Anthropic has been gaining ground. A lot of the demand it's seeing is coming from coding and developer tools, and it's now actually nipping at OpenAI's heels - or even edging ahead, depending on how you count. And investors have been noticing.

Anthropic's Opus 4.7 To Redefine How Work Gets Done Forever

Model improves multimodal processing, enabling high-resolution analysis and professional-grade outputs.

Anthropic has released Claude Opus 4.7, its most advanced AI model to date, marking a significant upgrade in long-form reasoning, vision processing, and autonomous task execution. The launch, announced on April 16, 2026, strengthens Anthropic's position in the fast-moving enterprise AI race, where competitors are rapidly expanding capabilities for coding, analytics, and multimodal workflows.

A Major Leap in Capability and Control
Claude Opus 4.7 is designed to handle long-running, complex tasks with greater precision and reduced supervision, a key demand among enterprise developers and financial analysts. According to Anthropic, the model "verifies its own outputs before reporting back," improving reliability in high-stakes workflows such as software engineering and data modeling. The update also introduces a new "xhigh" reasoning effort level, giving developers finer control over the balance between latency and computational depth. A beta feature, task budgets, allows organizations to manage token usage across extended AI operations.

Stronger Vision and Multimodal Performance
One of the most notable improvements is in visual understanding. Opus 4.7 can process images at up to 2,576 pixels on the long edge (~3.75 megapixels). This is more than three times the resolution supported in earlier versions. This upgrade unlocks new use cases in:
* High-fidelity document and diagram analysis
* Interface and slide generation
* Data extraction from dense visual materials
Anthropic says the model produces more refined outputs for professional-grade presentations and design tasks, especially in business environments.

Enterprise Tools and Developer Integration
Alongside the model, Anthropic introduced several platform upgrades. In Claude Code, a new ultrareview command performs automated deep code reviews, flagging the kinds of issues a senior human engineer would raise. On cloud platforms including Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry, Opus 4.7 is available at the same pricing as its predecessor: $5 per million input tokens and $25 per million output tokens. The company also highlighted improved instruction-following behavior, noting that prompts must now be more precise as the model executes instructions more literally than prior versions.

Safety, Cybersecurity, and Alignment Focus
Anthropic emphasized that Opus 4.7 maintains a similar safety profile to Opus 4.6, with improved resistance to prompt injection attacks and deceptive outputs in internal evaluations. However, the firm acknowledged mixed results in areas like overly detailed harm-related responses, highlighting ongoing alignment challenges. A new Cyber Verification Program will allow security professionals to test the model in controlled environments for penetration testing and vulnerability research.

What Comes Next for Enterprise AI
Opus 4.7 signals a broader shift toward autonomous, tool-using AI systems built for sustained enterprise workloads rather than short interactions. With competitors also advancing multimodal reasoning and agentic capabilities, the next phase of competition is expected to center on reliability, cost efficiency, and secure deployment at scale. For enterprises, the immediate implication is that AI systems are moving from assistants to persistent operational agents, reshaping how complex digital work is executed across software, finance, and analytics pipelines.

Meanwhile, Coinbase is reportedly courting Anthropic to bolster the exchange's security infrastructure. Specifically, the exchange is reportedly pursuing access to Anthropic's restricted Mythos AI model, a move prompted by the launch of the Project Glasswing cybersecurity initiative.

While Anthropic touts Opus 4.7 as a breakthrough, the launch does not erase the memory of the frustrating outages that left thousands of users locked out of Claude.ai and its tools this week. Scaling hype continues to outpace reliable infrastructure. Additionally, its recent standoff with the Trump administration over military access has painted the company as both principled and vulnerable, raising questions about whether safety posturing serves users or just PR.
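
To make the reported image limit concrete, here is a minimal sketch of how a client might downscale an image so its long edge fits within 2,576 pixels. The article does not say how oversized images are handled, so client-side downscaling is an assumption, and the function name `fit_long_edge` is hypothetical rather than part of any Anthropic API.

```python
# Long-edge limit reported for Opus 4.7 in the article above.
MAX_LONG_EDGE = 2576


def fit_long_edge(width, height, max_long_edge=MAX_LONG_EDGE):
    """Return (width, height) scaled down so the longer side fits the limit.

    Images already within the limit are returned unchanged; the aspect
    ratio is preserved up to rounding to whole pixels.
    """
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    scale = max_long_edge / long_edge
    # Round to whole pixels and never let a side collapse to zero.
    return max(1, round(width * scale)), max(1, round(height * scale))


# Hypothetical example: a 5152 x 2912 screenshot is halved to fit the limit.
new_w, new_h = fit_long_edge(5152, 2912)
print(new_w, new_h, f"{new_w * new_h / 1_000_000:.2f} MP")
```

For this input the result is 2576 x 1456, or about 3.75 megapixels, which is consistent with the figure quoted in the article. Other aspect ratios give different pixel counts at the same long edge.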

Anthropic's Claude Mythos Dilemma: When Superpowered AI Gets Risky

Anthropic's Claude Mythos Preview has sparked concern in the U.S. and globally over AI safety. Debate has spread from Wall Street and Washington, D.C., to financial institutions in Europe. Anthropic is withholding it from public release, citing the model's apparent ability to autonomously exploit previously unknown cybersecurity vulnerabilities.

AI is already a kind of Pandora's box. Its impact can scale at extraordinary speed because its outputs are automated, reproducible, and easily multiplied. That does not make AI the same as a nuclear weapon. But it does make it a system-level risk. Once highly capable models are widely accessible, misuse can spread fast across industries and institutions.

But commercial pressure may be moving faster than governance. The erosion of safety capacity at major AI companies has drawn scrutiny. OpenAI's reported shutdown of its Mission Alignment team earlier this year and the disbanding of its dedicated AI safety team in 2024 were almost like racing a horse without a bridle. When safety functions shrink as model capability grows, the technology becomes more vulnerable to malicious use. Public anxiety is only natural.

Project Glasswing
To reduce cybersecurity risks, Anthropic launched Project Glasswing. It is a coordinated vulnerability disclosure effort involving Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. The goal is to let major infrastructure providers use the model's predictive power to find weaknesses in cloud systems and patch critical software bugs. The idea is to fix those problems before the model, or a similar adversarial system, reaches a broader and less regulated public. This marks a shift in industry priorities. The race in AI is no longer only about capability. It is also about who can secure the systems that capability may threaten.

Cross-industry Impact
As AI capability rises, so do the risks across industries. In finance, models with Mythos-level reasoning could help simulate or execute complex market manipulation, evade fraud detection, or automate the discovery of institutional weaknesses. In manufacturing and commerce, advanced AI could identify and exploit supply-chain bottlenecks, causing delays, disruptions, or theft at scale. In universities and research institutions, the threat extends to proprietary research data, internal networks, and AI-assisted social engineering attacks against administrators and faculty. As AI becomes a general-purpose tool for productivity, it also becomes a general-purpose tool for sabotage. The same flexibility that makes it commercially valuable also makes it highly adaptable for malicious use.

Medicine and the Data Integrity Crisis
The 2026 Stanford AI Index Report, released this month, highlights a sharp increase in AI adoption in medicine. It notes a significant rise in the use of AI for clinical documentation, medical imaging, and diagnostic reasoning. That growth may improve efficiency. But it also expands the attack surface for public health if mis-deployed. If a model like Mythos were used to corrupt medical databases, manipulate diagnostic systems, or generate inaccurate pharmacological guidance, the damage would go far beyond an ordinary data breach. It would create a direct threat to patient safety. As healthcare systems become more dependent on AI-mediated workflows, the prospect of adversarial medicine becomes harder to dismiss. In such cases, bad actors could manipulate AI outputs to cause harm, create confusion, or extort hospitals. In that context, identity verification and access controls look less like optional friction and more like core infrastructure.

Inevitable Identity Verification
The possibility that high-capability models could enable such harms has accelerated a shift toward mandatory identity verification. Anthropic now requires government-issued identification and biometric live selfies from users seeking access to certain high-risk functions. The company frames this as a matter of platform integrity, arguing that responsible use of powerful technology begins with knowing who is using it. To address privacy concerns, Anthropic says the verification data is not used to train models and is not shared with third parties for marketing or advertising.

Physical ID checks for AI use may feel like a major change in user experience. But they also extend a much older industry practice. For years, technology companies have relied on passive forms of verification. Google sign-ins for Gemini, internet service registrations, and the metadata tied to email accounts, devices, and digital purchases already provide dense identity signals. Those systems have long supported public-safety functions and commercial monetization. Explicit identity checks for advanced AI models formalize that trajectory. They move the industry from background identification to foregrounded, bank-grade authentication.

The Missing Public Role
Anthropic's Project Glasswing brings together major cloud providers and cybersecurity companies. But it does not yet appear to meaningfully include public institutions or policymakers. The gap matters. Innovation is vital to economic competitiveness. But safety remains a precondition for lasting growth. Governments once built legal frameworks for cybersecurity and data privacy. They now need to update AI safety laws and regulatory mechanisms to match the capabilities of new models. Without that public framework, too much of the burden will fall on private firms whose incentives do not always align with the public interest. The real question is whether institutions can move fast enough to govern AI before the technology outruns existing controls.

This article was originally published on Forbes.com

Anthropic launches Claude Opus 4.7 with bold new upgrades

Anthropic's latest model brings meaningful improvements to software engineering

Anthropic has released Claude Opus 4.7, the latest iteration of its flagship model line, bringing a notable set of improvements to software engineering performance and visual processing capability. The release marks a meaningful step forward from the previous Opus 4.6 model, with changes that span raw capability, safety architecture and the tools available to developers building on top of the platform. For the growing community of engineers, researchers and enterprise users who rely on Claude for complex technical work, Thursday's announcement introduces several features that could meaningfully change how they interact with the model on a daily basis.

Stronger coding and dramatically improved vision
The two headline capability upgrades in Opus 4.7 center on software engineering and image processing. According to Anthropic, the new model demonstrates measurable gains in handling complex coding tasks that previously required close human supervision -- a shift that could reduce friction for developers using Claude as an active collaborator in their workflows rather than a passive assistant. On the vision side, the improvement is substantial. Opus 4.7 processes images at resolutions up to 2,576 pixels on the long edge, representing more than three times the capacity of prior Claude models. For users working with detailed diagrams, technical documents, charts or high-resolution visual content, that expanded processing capability opens up a broader range of practical applications.

Where Opus 4.7 sits in Anthropic's model lineup
While Opus 4.7 represents a clear upgrade over its predecessor, Anthropic is transparent that it remains less capable than Claude Mythos Preview, the company's most powerful model currently in existence. Mythos Preview continues to operate under a limited release due to safety concerns outlined in Project Glasswing, a framework Anthropic announced just last week. For most users, Opus 4.7 will represent the most capable Claude model they can access in full.

Built-in cyber safeguards and a new verification program
Safety architecture received significant attention in this release. Anthropic has implemented cyber safeguards directly into Opus 4.7 that automatically detect and block requests flagged as prohibited or high-risk cybersecurity uses. The company also reduced the model's cyber capabilities during training compared to Mythos Preview, a deliberate choice to limit potential misuse at the model level rather than relying solely on downstream filtering. For legitimate security professionals who need access to more advanced capabilities for authorized work, Anthropic has introduced a new Cyber Verification Program that provides a verified pathway to the model's fuller functionality in that domain.

New developer tools and a refined effort control system
Anthropic also used the Opus 4.7 launch to roll out several tools aimed specifically at developers and API users. A new effort level called "xhigh" has been introduced, sitting between the existing high and max settings and giving users more granular control over the tradeoff between reasoning depth and response speed -- a balance that matters significantly depending on the nature of the task at hand. Task budgets are now available in public beta for API users, and a new ultrareview command has been added to Claude Code specifically for bug detection. Together, these additions reflect Anthropic's continued investment in making Claude a more capable and controllable tool for professional development environments. On the instruction-following front, Anthropic noted that while Opus 4.7 shows genuine improvement in this area, some users may need to adjust prompts that were written and optimized for earlier models, as the new version responds somewhat differently to certain input patterns.

Availability, pricing and a new tokenizer
Claude Opus 4.7 is available across Claude products, the Claude API, Amazon Bedrock, Google Cloud's Vertex AI and Microsoft Foundry. Pricing remains unchanged from Opus 4.6, holding at $5 per million input tokens and $25 per million output tokens -- a decision that makes the upgrade accessible without adding cost for existing users. One technical change worth noting is a new tokenizer introduced with Opus 4.7. Depending on content type, the same input may now generate between 1.0 and 1.35 times more tokens than it would have under previous models, a factor that users processing large volumes of text will want to account for when estimating usage costs. On benchmarks including finance agent evaluations and GDPval-AA -- a measure of economically valuable knowledge work across finance and legal domains -- Opus 4.7 scored higher than its predecessor, suggesting real-world performance gains in the professional contexts where the model is most heavily used.
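
As a rough illustration of the cost arithmetic above, the sketch below combines the reported prices ($5 and $25 per million tokens) with the reported 1.0 to 1.35 tokenizer multiplier. It is a back-of-the-envelope estimate and not an official pricing calculator: the function name, the example workload, and the choice to apply the multiplier only to input tokens are all assumptions made for illustration.

```python
# Prices per million tokens, as reported in the article for Opus 4.7.
INPUT_USD_PER_MTOK = 5.00
OUTPUT_USD_PER_MTOK = 25.00

# Reported range of the new tokenizer's token-count multiplier.
MIN_MULTIPLIER = 1.0
MAX_MULTIPLIER = 1.35


def estimate_cost_usd(old_input_tokens, output_tokens, multiplier):
    """Estimate the cost of a workload in USD.

    old_input_tokens: input size as counted by the previous tokenizer.
    output_tokens: output size, assumed to be counted under the new tokenizer.
    multiplier: assumed input inflation, within the reported 1.0-1.35 range.
    """
    if not MIN_MULTIPLIER <= multiplier <= MAX_MULTIPLIER:
        raise ValueError("multiplier outside the reported 1.0-1.35 range")
    new_input_tokens = old_input_tokens * multiplier
    input_cost = new_input_tokens / 1_000_000 * INPUT_USD_PER_MTOK
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_MTOK
    return input_cost + output_cost


# Hypothetical workload: 2M input tokens (old count) and 500k output tokens.
low = estimate_cost_usd(2_000_000, 500_000, MIN_MULTIPLIER)
high = estimate_cost_usd(2_000_000, 500_000, MAX_MULTIPLIER)
print(f"lower bound: ${low:.2f}")
print(f"upper bound: ${high:.2f}")
```

For this hypothetical workload the estimate ranges from $22.50 to $26.00, so the same text could cost up to about 16% more even though per-token prices are unchanged. Output token counts may also differ under the new tokenizer, which this sketch does not model.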