News & Updates

The latest news and updates from companies in the WLTH portfolio.

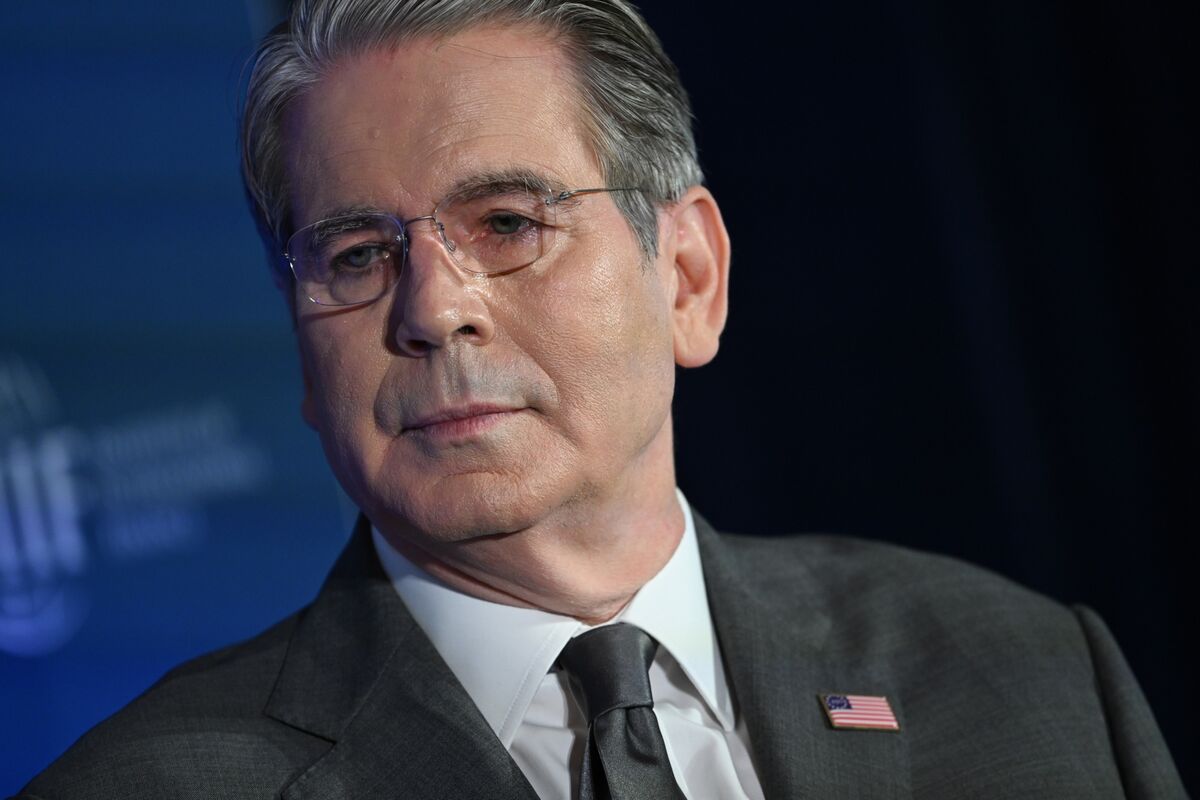

Bessent Calls Anthropic's Mythos a Breakthrough in China AI Race

US Treasury Secretary Scott Bessent hailed Anthropic PBC's Mythos as a revolutionary step that will keep America ahead of China in AI, endorsing an industry leader that's clashed with Washington over its role in military endeavors. Bessent, speaking Tuesday at a Wall Street Journal event in Washington, dismissed a question suggesting China was rapidly catching up in AI technology, though he said American artificial intelligence stood just three to six months ahead. He singled out Mythos -- a model Anthropic says is highly adept at finding vulnerabilities in software and computer systems that's being released to a very limited number of carefully-chosen parties. The Treasury Secretary's comments emerged just days after he and Federal Reserve Chair Jerome Powell summoned Wall Street banks to an urgent meeting on concerns that Anthropic's latest model will usher in an era of greater cyber risk. "This Anthropic mythos model was a step function change in abilities, learning capabilities," he told the audience. "It's all logarithmic. You go from x to the 10th power to x to the 12th and then it's very difficult to catch up." Still, Anthropic has run afoul of some agencies in Washington. The Pentagon this year declared the company a threat to the US supply chain, under an authority normally reserved for foreign adversaries. The company won a court order last month blocking a ban on government use of the technology, after Anthropic argued the move could cost it billions of dollars in lost revenue. Founded in 2021 by former OpenAI staffers including Chief Executive Officer Dario Amodei, Anthropic has aimed to be a more responsible AI steward than its competitors. Claude and its underlying technology have gained traction with enterprise customers in sectors like finance and health care, as well as with developers. Anthropic has pledged to spend $50 billion to build custom data centers in the US. 
On Tuesday, Bessent also called out America's lead in AI computing -- the enormous data centers that hyperscalers from Meta Platforms Inc. to Google are spending hundreds of billions of dollars to build out. "I've seen studies that say that in a few years, the US is going to have 70 or 80% of the global computing power," he said. "We were in the 30s. Now, I think we're in the 50s, and we're well, well on our way."

Goldman Sachs Actively Using Anthropic's Claude Mythos to Strengthen its Cybersecurity Posture - Tekedia

Goldman Sachs is actively using and collaborating on Anthropic's Claude Mythos Preview, often shortened to Mythos, to strengthen its cybersecurity posture. Anthropic released Claude Mythos Preview in early April 2026 as part of its "Project Glasswing" initiative focused on cybersecurity. It's a frontier-level AI model that shows major leaps in agentic capabilities -- particularly in autonomous vulnerability discovery, exploit chaining, and security research. Anthropic has described it as capable of finding and exploiting software vulnerabilities at a level rivaling or surpassing top human researchers, including in complex, real-world systems. The company has restricted public access due to the dual-use risks: the same tech that excels at defense could dramatically lower the bar for sophisticated cyberattacks if misused. Access is limited to select trusted partners, with some encouragement from the U.S. government. Goldman Sachs CEO David Solomon publicly stated that the bank is hyper-aware of Mythos's capabilities. The firm has access to the model, is working closely with Anthropic and its security vendors, and is supplementing and accelerating investments in cyber and infrastructure resilience to harness frontier AI tools for defense. This follows an urgent meeting last week convened by U.S. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell with major bank CEOs. Officials warned about the heightened cyber risks from advanced AI models like Mythos and reportedly encouraged banks, including Goldman, JPMorgan, Citi and others, to test it internally on their own systems to identify and patch weaknesses proactively. In short, Goldman is flipping the script: instead of just fearing what Mythos could do to them, they're using it for them -- to hunt for hidden vulnerabilities before attackers do. This fits a growing pattern where frontier AI's cyber capabilities are forcing a reckoning. 
Models like Mythos can autonomously scan for flaws, chain exploits, and operate with less human oversight, which is powerful for defenders but alarming for the attack surface of critical infrastructure like banks. U.S. regulators appear to be pushing a "test it yourself to defend it" approach rather than blanket restrictions. Other large banks are reportedly in similar testing phases, though Goldman's public comments from Solomon make it one of the more visible examples so far. The situation highlights the classic AI dual-use dilemma: rapid capability gains in offensive and defensive cyber tools, controlled release by labs like Anthropic, and institutions racing to adapt. It's less "AI is coming for the banks" and more "the banks are racing to weaponize AI for defense before the bad actors do." JPMorgan Chase stands out as the only bank explicitly named by Anthropic as a Project Glasswing participant. The bank is actively evaluating Mythos for defensive cybersecurity across critical infrastructure. JPMorgan has described it as a unique, early-stage opportunity to test next-generation AI tools. The firm already invests heavily in AI overall: its $19.2 billion tech budget includes $1.2 billion for AI initiatives, and it uses advanced techniques like graph neural networks for fraud detection. It reported identifying $150 million in previously undetectable fraud ring activity through such systems. Citi is among the banks reported to be internally testing Mythos or preparing to gain access. Its CEO Jane Fraser attended the recent Treasury/Fed meeting. Citi has long emphasized AI for operational efficiency, such as speeding account openings and legacy system upgrades, and is ramping up AI-related capex forecasts. It also highlights quantum cybersecurity threats and broader AI-driven fraud/AML integration. Bank of America is testing Mythos internally, with CEO Brian Moynihan present at the regulators' meeting. 
The bank allocates about $4 billion of its $13 billion tech budget to strategic growth areas that include AI. It deploys AI for fraud detection, dispute resolution (handling 62% of card disputes without human intervention in some cases) and broader risk functions. Morgan Stanley is also internally testing or preparing to test the model, per reports on the Wall Street banks involved. Its CEO Ted Pick attended the meeting. Like peers, it integrates AI into compliance, risk, and operational workflows, though specific Mythos details remain limited due to the controlled nature of access. Wells Fargo's CEO Charlie Scharf attended the urgent meeting. While public details on its direct Mythos testing are scarcer than for JPMorgan or Goldman, it is part of the group of major banks urged by regulators to evaluate the tool for vulnerability hunting. The bank continues to prioritize AI in fraud detection, cybersecurity oversight, and technology modernization.

Anthropic gets multiple offers pegging valuation above $800 billion, ahead of reported October IPO plans - CNBC TV18

Anthropic is weighing new funding offers valuing it near $800 billion, eyes an IPO as early as October, reports rapid revenue growth and launches the security-focused Mythos AI model.

Anthropic PBC, the company at the centre of the new wave of the AI revolution, has received many proposals from investors for a new round of funding that could value the artificial intelligence business at roughly $800 billion or higher. According to a Bloomberg report, the Claude maker has so far rejected these approaches. The pre-money estimate of $350 billion that Anthropic attributed to its $30 billion fundraising in February would be more than doubled by the offers. The report stated that talks between Anthropic and investors are still in their early stages and that a deal may not materialise or the specifics may change. Some parts of the discussions were previously published by Business Insider. Anthropic chose not to respond. Anthropic has launched several AI solutions designed to revolutionise how companies manage everything from cybersecurity to coding. An increasing number of commercial clients are responding favourably to such products, which is boosting income and intensifying competition with rival OpenAI. According to one of the people, Anthropic hasn't ruled out raising additional funds in the upcoming months, although it's unclear if it will raise at an $800 billion valuation or accept investor terms. Additionally, Anthropic has talked about going public as early as October. Anthropic's robust revenue growth has impressed investors, especially its growth among large corporate clients. The business announced earlier this month that its yearly run-rate revenue had reached $30 billion, a significant rise from $19 billion only a few months prior. Following a dispute with the US Defence Department on the security of utilising its AI tools, Anthropic has gained notoriety. 
Additionally, the business recently introduced a new model called Mythos, which it claimed would be reckless to make freely available due to its ability to detect and exploit software vulnerabilities.

Anthropic draws VC interest at up to $800 billion valuation, Business Insider reports

April 14 (Reuters) - Anthropic has received multiple offers from venture capital firms in recent weeks to invest in the Claude maker at valuations as high as $800 billion, more than double its current value, Business Insider reported on Tuesday, citing sources.

* Anthropic has so far resisted overtures from investors for a new round of funding, according to a Bloomberg News report on Tuesday, citing people familiar with the matter.
* Anthropic did not immediately respond to Reuters' requests for comment. Reuters could not immediately verify both reports.
* In February, Anthropic raised $30 billion in a funding round that valued it at $380 billion amid massive investor interest in the startup and the broader AI industry.
* The firm is also reportedly exploring an IPO as early as this year.
* Demand for its AI model Claude has accelerated in 2026, with the startup's run-rate revenue now surpassing $30 billion, up from about $9 billion at the end of 2025.
* The reports also come after Anthropic announced a powerful new model named Mythos earlier this month, describing it as its "most capable yet for coding and agentic tasks," referring to the model's ability to act autonomously.
* Its advanced coding capabilities could give it unprecedented ability to spot and exploit cybersecurity flaws, experts say.

(Reporting by Anusha Shah in Bengaluru; Editing by Sherry Jacob-Phillips)

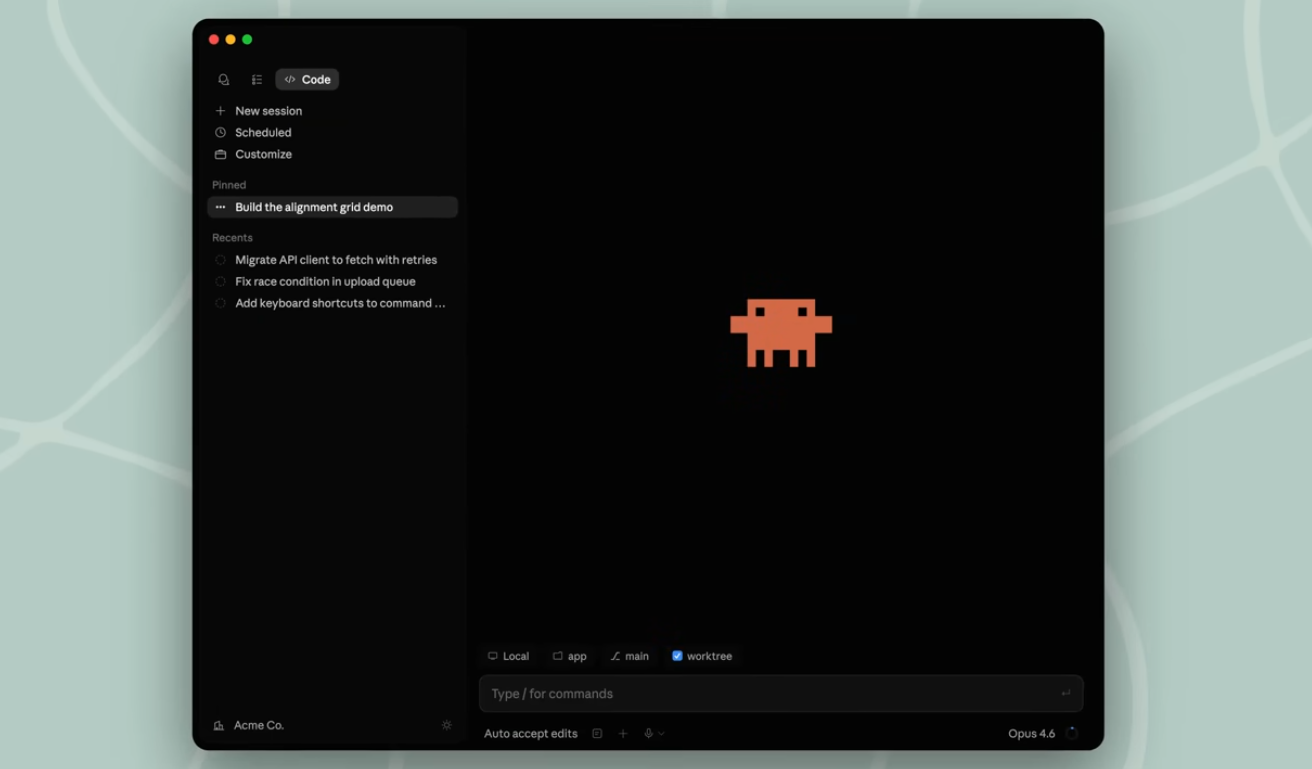

Anthropic's Claude Code gets automated 'routines' and a desktop makeover - SiliconANGLE

Anthropic PBC is making it easier to automate tasks using Claude Code without relying on autonomous artificial intelligence agents with the launch of a new service called "routines." The routines allow Claude Code users to run automations on the company's own cloud-based infrastructure. "A routine is a saved Claude Code configuration: a prompt, one or more repositories, and a set of connectors packaged once and run automatically," the company explained in a blog post. Anthropic added that the routines are executed on cloud infrastructure that it manages, so they will keep running even if the user shuts off their own laptop. If this idea sounds familiar, it's because routines are quite similar to scheduled tasks such as cron jobs and GitHub Actions, or genuine AI agents, but there are some differences. Whereas cron jobs and GitHub Actions run set scripts at set times or after specific events occur, without input from an AI model, Claude Code's routines prompt an AI model on a schedule or following a predefined trigger or webhook. The actions taken are then dependent on the context they encounter and the available connectors they can access, Anthropic said. AI agents, on the other hand, are an ongoing process that maintains state and involves model interactions with third-party tools and data sources. As such, Claude routines are more like dynamic cron jobs or short-lived, trigger-driven AI agents. According to Anthropic, developers will find that Claude Code's routines are most useful for handling tasks such as verifying software deployment or triaging alert messages. In the case of the former, a routine would scan the continuous integration/continuous deployment output, check for errors and then post a report. The company said routines are available to Claude Code Pro, Max, Team and Enterprise subscribers, but they must ensure the model is web-enabled. 
The use of routines will count against subscribers' usage limits, and there are daily limits on their use too. According to Anthropic, Pro users can run up to five routines per day, Max users get 15, and Team and Enterprise users will receive 25. Customers can exceed these limits, but they'll be billed extra for the privilege. Alongside the new routines, Anthropic revealed a redesigned Claude Code desktop application that tweaks the user interface to bring more functionality to the forefront for users and reduce app-switching. "The redesign brings more commonly-used tools into the app, so you can review, tweak and ship Claude's work without bouncing to your editor," the company explained in a second blog post. The updates include an integrated terminal, a faster diff viewer, an in-app file editor and an expanded preview area. It suggests that Anthropic is trying to own the interface used by developers to interact with Claude. By eliminating the need for users to "bounce" to their own editor, it encourages them to access the tool directly instead of doing so through a VS Code plugin or third-party platform such as OpenCode. In addition, Anthropic stressed that Claude Code now offers the ability to support multiple sessions, which is an effort to cater to the way developers actually work with AI models on a daily basis. These days, most attempt to multitask, and will get AI agents to perform numerous jobs at the same time. "For many developers, the shape of agentic work has changed," the company wrote. "You're not typing one prompt and waiting. You're kicking off a refactor in one repo, a bug fix in another, and a test-writing pass in a third, checking on each as results come in, steering when something drifts, and reviewing diffs before you ship."
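The description of a routine quoted above (a prompt, one or more repositories, a set of connectors, and a schedule or webhook trigger) can be pictured as a small data structure. The sketch below is purely illustrative: `Routine`, `DAILY_ROUTINE_LIMITS`, `included_runs_left` and all example values are hypothetical names invented here, not Anthropic's actual API or configuration format. Only the per-plan daily limits come from the article.

```python
from dataclasses import dataclass, field

# Daily routine allowances per plan, as reported in the article.
DAILY_ROUTINE_LIMITS = {"pro": 5, "max": 15, "team": 25, "enterprise": 25}


@dataclass
class Routine:
    """Hypothetical model of a routine; not Anthropic's real schema."""
    prompt: str                     # instruction sent to the model on each run
    repositories: list[str]         # one or more repositories to work against
    connectors: list[str] = field(default_factory=list)  # tools the run may use
    trigger: str = "schedule"       # assumed values: "schedule" or "webhook"


def included_runs_left(plan: str, runs_today: int) -> int:
    """Runs remaining today before extra billing would apply."""
    limit = DAILY_ROUTINE_LIMITS[plan.lower()]
    return max(limit - runs_today, 0)


# Example: a deployment-verification routine like the one the article describes.
verify_deploy = Routine(
    prompt="Scan the latest CI/CD output, check for errors and post a report.",
    repositories=["example-org/example-service"],  # placeholder repository
    connectors=["chat"],                           # placeholder connector name
)
print(included_runs_left("Pro", runs_today=3))
```

Unlike a cron job, which would store a fixed script, the saved unit here is a prompt plus context, so what each run actually does depends on what the model finds.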

Like Anthropic, OpenAI Will Share Latest Technology Only With Trusted Companies

A week after Anthropic said it would limit the release of its latest artificial intelligence technology to a small number of trusted organizations because of cybersecurity concerns, OpenAI said on Tuesday that it, too, was sharing a similar technology only with a group of partners. OpenAI, the maker of ChatGPT, said in a blog post that it would initially share a new A.I. model called GPT-5.4-Cyber with hundreds of organizations, before expanding the release to thousands of additional partners in the coming weeks. "Our goal is to make these tools as widely available as possible while preventing misuse," the company said. "We aim to make advanced defensive capabilities available to legitimate actors large and small, including those responsible for protecting critical infrastructure, public services, and the digital systems people depend on every day." Like Anthropic's technology, Claude Mythos Preview, GPT-5.4-Cyber is designed to identify security holes in software. Like other tools developed across the long history of cybersecurity, the technology can be used to both attack computer networks and defend them. By releasing the technology to a smaller group, OpenAI, like Anthropic, hopes to give defenders an edge over attackers. Before Anthropic unveiled Mythos last week, Zico Kolter, an OpenAI board member, called for such an approach in an interview with The New York Times. "Four or five months ago, we had a step change in what these systems could do," said Dr. Kolter, a professor of computer science at Carnegie Mellon University who specializes in security and A.I. But security experts disagree on the best way to handle such technologies. If they are not widely distributed from the beginning, some argue, they will ultimately pose a greater security risk because fewer organizations will be able to defend themselves using the most powerful systems. Over the past several months, products from the leading A.I. 
companies have grown more effective in areas like math and computer programming. Because they are adept at coding, they have a knack for finding security vulnerabilities in widely used software. Companies like OpenAI have also honed technologies specifically for this task. (The Times sued OpenAI and Microsoft in 2023 for copyright infringement of news content related to A.I. systems. The two companies have denied those claims.) OpenAI said it would share its new systems with hundreds of members of its Trusted Access for Cyber program, which it unveiled in February as a way to share technologies with cybersecurity professionals and other partners. The company also said it would reduce cybersecurity-related guardrails on its systems so that professionals could more easily use them to find security vulnerabilities. But as it shares its technologies, it will also work to verify the identity of users in an effort to prevent misuse. Last week, Anthropic limited the release of Claude Mythos to about 40 companies and organizations that maintain critical infrastructure, including the tech giants Apple, Amazon, Microsoft and Google, as well as the Linux Foundation, which oversees the Linux operating system, freely available software that is widely used across the internet. Cade Metz is a Times reporter who writes about artificial intelligence, driverless cars, robotics, virtual reality and other emerging areas of technology.

Anthropic shifts enterprise billing to usage-based pricing

Anthropic has restructured its enterprise plan to bill Claude, Claude Code, and Cowork usage separately from seat fees, moving its largest business customers to per-token pricing at standard API rates, according to the company's updated enterprise help documentation. Organizations on older seat-based plans with fixed usage allowances must migrate by their next contract renewal or lose the grandfathered terms. The Information first reported the shift, describing it as tied to a deepening compute crunch that will raise bills for heavy business users significantly. That is the official version of the story. The unofficial version starts with David Hsu. David Hsu prefers Claude Opus 4.6. He said so publicly. Then he moved his company off it. Hsu runs Retool, the software platform. He told the Wall Street Journal that Anthropic's model was better, but the service kept dying. His customers could not ship code when Claude choked. So he picked OpenAI, the inferior option, because the inferior option stayed up. That is the tell. Anthropic's API uptime over the 90 days ending April 8 was 98.95 percent, according to WSJ reporting. Established cloud providers commit to 99.99. One extra nine does not sound like much until you translate it. At Anthropic's rate, that is roughly 92 hours of downtime a year. At the 99.99 standard, 53 minutes. For an enterprise buyer, that is the difference between a tool and a liability. And then, quietly, Anthropic changed how it bills. The enterprise help center now reads like a concession letter dressed as documentation. "The seat fee only covers access to the platform and doesn't include any usage," it says. "All usage across Claude, Claude Code, and Cowork is billed separately at standard API rates, based on what your team actually consumes." Anthropic is not framing the change as a price hike. The direction is unmistakable anyway. Run rate at the end of 2025 was $9 billion. Run rate today is $30 billion. 
And the company's message to its biggest customers is: budget more for compute. That is not a typo. Revenue tripled in four months. The response was a billing restructure, not a victory lap. For about eighteen months, the AI industry sold a story. The story was not that the models were good. The story was that the pricing was stable. A $20 Claude Pro subscription gave you access to one of the best coding models in the world, running on compute that cost Anthropic significantly more than you paid. Power users always understood this. The arithmetic was never a secret. A single engineer on Claude Code burns through far more inference than a Pro fee could ever cover. The subscription was an open bar. Anthropic was buying the drinks. Open bars work until somebody shows up thirsty. In this case, the thirsty somebody is the agent. Agentic workflows do not sip. They chain tools across steps and run loops without asking. They spawn subagents carrying their own contexts. Cached tokens burn by the hundred thousand. One engineer running Claude Code overnight can consume the token budget of 200 casual chat users. OpenAI, running the same math on its own platform, watched token usage jump from 6 billion per minute in October to 15 billion per minute by late March. That is not growth. That is a break in the load curve. Blackwell GPU rental prices climbed 48 percent in two months. CoreWeave raised prices more than 20 percent late last year and now forces smaller customers onto three-year contracts. Bank of America expects compute demand to outstrip supply through 2029. PJM, the grid operator for the eastern US, warned Friday that it needs 15 gigawatts of additional power tied to AI by early 2027. The subsidy stopped being theoretical. It started bleeding. Watch the pattern. In late March, Anthropic quietly tightened five-hour session limits for Pro and Max users during peak hours, 5 a.m. to 11 a.m. Pacific, weekdays. 
About seven percent of users started hitting caps they had not hit before, per the company's own disclosure. Claude Code's prompt-cache time-to-live shifted back from one hour to five minutes in early March, driving up quota burn for long coding sessions. OpenClaw, a popular agent framework, got pulled out of the flat-fee bundle on April 4 and moved into usage-metered billing, with heavy users facing bills potentially 50 times higher. Each move was defended as a "product change" or an "optimization." None was framed as a price hike. Put them together and the shape is obvious. Anthropic is lifting features out of the subscription, welding meters onto each one, and sending customers the difference. You can feel the institutional anxiety in the public responses. Anthropic engineers denied, on X and GitHub, that the company had degraded Claude. They are not wrong about the model. They are cornered on the framing. The model is the same. The economics underneath it are not. One AMD senior director filed a data-heavy GitHub complaint on April 2, analyzing 6,852 Claude Code session files to argue Claude had stopped reasoning as deeply. Anthropic's Claude Code lead walked through her analysis, conceded that thinking-effort defaults had been lowered on March 3 to "the best balance across intelligence, latency and cost," and explained the rest as UI changes. Translate: we dialed down the compute and hoped nobody would notice. People noticed. That is why the usage-based migration is happening in public now. Enterprise buyers will not accept a silent shrinkflation. They want the meter visible, the billing legible, and someone else to blame when the monthly number gets ugly. The shift does real work for Anthropic. It transfers compute cost from balance sheet to invoice. It lets the company keep signing million-dollar accounts without needing to pre-buy the GPU capacity to serve them flat-rate. It gives Anthropic a clean story for Wall Street. 
Every new revenue dollar is now backed by a compute dollar, metered and paid. It breaks something else, though. Claude's growth engine was individual developers falling in love with Claude Code on their own dime, dragging it into their startups, then pushing employers to buy enterprise seats. That pipeline runs on a cheap Pro plan with enough headroom to experiment. Tighten the plan, meter the agents, add surprise quota math, and you poison the upstream. Business Insider spoke with three of those users last week. One restructured his entire workday around limit resets. Another now breaks a single project into four micro-chats to conserve tokens. A third simply stops working when the cap hits, because manual coding feels pointless after Claude has spoiled him. The affection is intact. The relationship is not. Here is the part Anthropic will not say out loud. Axios went hunting for a historical comparison and came back empty. Google's search-advertising ramp was the previous record. Anthropic covered nearly four times that ground in a single quarter. Snowflake took a decade to reach a billion in run rate. Anthropic added twenty-nine billion in roughly a year. More than 1,000 customers now pay over a million dollars a year for Claude. None of those numbers mean anything if the service cannot stay up. 98.95 percent is the crack. Enterprise buyers have been trained by twenty years of cloud discipline to treat that figure as disqualifying, and some are already voting with their wallets. Ramp card data shows Anthropic gaining on OpenAI in business spend, yes. That data lags the service degradation. The next three months test whether customer affection for Claude outruns exhaustion with its downtime. Expect two things from here. First, every major AI provider running agentic workloads moves to usage-based enterprise billing within six months. OpenAI already started, shifting Codex from flat-message to token metering in early April. 
GitHub tightened Copilot limits on April 10. Windsurf swapped credits for daily quotas. The flat-fee era is over. The memo is circulating. Second, Anthropic keeps telling two stories at once. The growth story for investors goes like this. Thirty billion dollars, 1,000 million-dollar accounts, a three-year curve that would embarrass Rockefeller. The rationing story for users lives somewhere else entirely. Capacity tightens during peak hours. Effort defaults quietly dropped in March. Session caps hit sooner than they used to. OpenClaw got its own meter. Both stories are true. Neither is sustainable without the other eventually catching up. David Hsu made his call already. He kept the inferior model because it stayed up. That is the verdict that should scare Anthropic more than any benchmark screenshot on X. It is not a performance problem. It is a trust problem. You cannot patch trust with a pricing page.
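The downtime figures quoted in this piece (roughly 92 hours a year at 98.95 percent uptime versus 53 minutes at 99.99 percent) follow from simple arithmetic. A minimal sketch of that calculation, assuming a 365-day year; the function name is made up for illustration:

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def annual_downtime_hours(uptime_percent: float) -> float:
    """Hours per year a service is unavailable at the given uptime percentage."""
    return (100.0 - uptime_percent) / 100.0 * HOURS_PER_YEAR


reported = annual_downtime_hours(98.95)   # figure attributed to WSJ reporting
benchmark = annual_downtime_hours(99.99)  # the cloud-provider standard cited

print(f"98.95% uptime: about {reported:.0f} hours of downtime per year")
print(f"99.99% uptime: about {benchmark * 60:.0f} minutes of downtime per year")
```

The gap between the two uptime figures is about one percentage point, but the implied downtime differs by a factor of roughly a hundred.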

Anthropic Draws Investor Offers at Over $800 Billion Value (1)

Anthropic has received several offers from investors for a new round of funding that could value the artificial intelligence startup at about $800 billion or higher -- overtures that the Claude maker has so far resisted, according to people familiar with the matter. The offers would more than double the $350 billion pre-money valuation Anthropic attached to its $30 billion fundraising in February. The discussions between Anthropic and investors are still early and a deal could fail to materialize or the details could change, said the people, asking not to be identified because the information is private. Business ...

Anthropic fields VC offers to invest at up to $800 bln valuation - Business Insider By Investing.com

Investing.com -- Anthropic fielded multiple offers from venture capitalists in recent weeks to invest in the artificial intelligence startup at a valuation of as much as $800 billion, Business Insider reported on Tuesday, citing people familiar with the matter. The AI startup behind Claude closed a funding round in February at a $380 billion valuation. The Business Insider report showed investors willing to value Anthropic at close to the $852 billion valuation rival OpenAI achieved in its March funding round. Investors were seen growing more enthusiastic about Anthropic as the company released a slew of advanced AI coding and agent tools in recent months, while its Claude AI assistant was also seen gaining more users. The startup said last week that it now expects annual run-rate revenue of $30 billion, up from $9 billion at end-2025. Last week, Anthropic claimed that its latest model, Mythos, was so powerful that it could not be released to the general public. The startup said it had offered access to the model to a limited group of companies. The company is backed by a slew of Wall Street majors, including Amazon.com Inc (NASDAQ:AMZN) and Alphabet Inc (NASDAQ:GOOGL).

Anthropic draws offers from VCs to invest at up to $800 billion valuation, Business Insider reports

April 14 (Reuters) - Anthropic has received multiple offers from venture capital firms valuing the Claude maker at as much as $800 billion in recent weeks, more than double its current valuation, Business Insider reported on Tuesday, citing sources. Anthropic did not immediately respond to Reuters' request for comment. Reuters could not immediately verify the report. (Reporting by Anusha Shah in Bengaluru; Editing by Sherry Jacob-Phillips)

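Morning Brief (《日出而作》): US stocks rise as the US and Iran are expected to resume talks this week; Anthropic plans to launch a new flagship AI model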



Anthropic draws offers from VCs to invest at up to $800 billion valuation, Business Insider reports By Reuters

April 14 (Reuters) - Anthropic has received multiple offers from venture capital firms valuing the Claude maker at as much as $800 billion in recent weeks, more than double its current valuation, Business Insider reported on Tuesday, citing sources. Anthropic did not immediately respond to Reuters' request for comment. Reuters could not immediately verify the report. (Reporting by Anusha Shah in Bengaluru; Editing by Sherry Jacob-Phillips)

SpaceX, Blue Origin moon landers in focus after NASA's Artemis success

COLORADO SPRINGS, Colorado, April 14 (Reuters) - After the safe return of four astronauts from a historic flyby of the moon last week, NASA is shifting focus to its next challenge: putting competing lunar landers from Elon Musk's SpaceX and Jeff Bezos's Blue Origin through a series of rigorous tests ahead of future crewed landings. NASA's nearly 10-day Artemis II mission marked the first crewed flight of the agency's multibillion-dollar return-to-the-moon program and sent astronauts farther from Earth than ever before. The mission was designed as a dress rehearsal for later flights, validating the systems needed to carry crews into deep space. But the milestone has also sharpened attention on what many officials see as one of the program's biggest remaining risks. The commercial lunar landers must demonstrate that they can perform a complex final descent to the moon and bring astronauts safely home, a feat NASA has not attempted since 1972. NASA aims to put astronauts back on the moon by 2028 as it faces growing competition from China, which plans a crewed lunar landing by 2030. To hedge against delays, the agency selected both SpaceX and Blue Origin to develop competing landers, hoping competition and private investment would accelerate progress. "They both look at this as a competition, and that's a great thing," NASA Administrator Jared Isaacman said in an interview on Monday. Isaacman spoke days after welcoming back the Artemis II astronauts, who splashed down on Friday following their mission around the moon. The flight marked the first crewed launch of NASA's Space Launch System rocket, built by Boeing and Northrop Grumman, and the Orion capsule, built by Lockheed Martin. While SLS and Orion are traditional, government-owned vehicles designed to ferry astronauts from Earth to lunar orbit, NASA has turned to commercial companies for the final leg of the journey: landing on the moon's surface. 

MUSK VS BEZOS IN BILLIONAIRES' MOON RACE

SpaceX is developing a Human Landing System based on its massive Starship rocket, a stainless-steel vehicle far larger than any moon lander built before. Blue Origin is building its own Blue Moon lander, relying on a more traditional design philosophy. Blue Origin aims to land an uncrewed version of Blue Moon on the moon this summer, according to two people familiar with the plan, marking the company's first attempt at a soft lunar landing. The test, known as Mark 1, would be a critical milestone after years of development. "I will just say that this Mark 1 landing is going to be very important," Isaacman said. SpaceX, meanwhile, is preparing to launch a new iteration of Starship, known as Version 3, as soon as May, following a months-long hiatus. The rocket, first unveiled by Musk in 2016, has suffered repeated delays and test failures as SpaceX pushes the limits of launch, landing and reusability. After 11 test flights since 2023, some ending in explosions, SpaceX says the new version incorporates dozens of upgrades requested by NASA. "That's the version that HLS is going to be based on," said Kent Chojnacki, NASA's deputy manager for the Human Landing System program. "That's going to become the workhorse version." Before Starship can land astronauts on the moon, SpaceX must first put the vehicle into orbit and demonstrate controlled reentry of its upper stage, a step it has not yet achieved. The company must then show that two Starships can dock in space and transfer propellant, a capability NASA considers essential for lunar missions. NASA has pressed both companies to accelerate their work, though officials acknowledge the challenges are formidable.

MOON LANDER DESIGNS CHANGING

Unlike Apollo, which landed six crews on the moon within a few years, Artemis is designed to be a long-term program, with landers that can be reused and adapted for sustained exploration. 
That ambition has added complexity and increased testing demands. Asked whether SpaceX had proposed an accelerated plan that avoids Starship's demanding in-space refueling sequence, Isaacman said both companies "have taken an approach that brings down the technical risks significantly." NASA officials say they expect the designs to further evolve. "I don't have any faith that the design today is going to be the design that lands on the moon," Chojnacki said. Blue Origin has reworked parts of its original architecture after NASA pushed for faster progress. People familiar with the company's plans said it has simplified early missions, shelving more complex refueling concepts for its initial moon landings in favor of designs that reduce near-term risk. Despite the uncertainty, NASA insists the dual-provider strategy gives it the best chance of success. "I don't think it's lost on either one of them the importance of getting to the moon and doing so before our big rival," Isaacman said, an apparent reference to China. (Reporting by Joey Roulette; Editing by Joe Brock and Bill Berkrot)

Anthropic Attracts Investor Offers at an $800 Billion Valuation

Anthropic PBC has received several offers from investors for a new round of funding that could value the artificial intelligence startup at about $800 billion or higher -- overtures that the Claude maker has so far resisted, according to people familiar with the matter. The offers would more than double the $350 billion pre-money valuation Anthropic attached to its $30 billion fundraising in February. The discussions between Anthropic and investors are still early and a deal could fail to materialize or the details could change, said the people, asking not to be identified because the information is private. Business Insider previously reported some details of the talks. Anthropic declined to comment. Anthropic has released a series of AI tools aimed at overhauling the way businesses handle tasks from coding to cybersecurity. Those products are resonating with a growing base of business customers, leading to a surge in revenue and rising competition with rival OpenAI. While Anthropic hasn't ruled out raising new money in the coming months, according to one of the people, it's not clear the company will accept investors' terms or if it will raise at an $800 billion value. Anthropic has also discussed a public listing as soon as October, Bloomberg has reported. Investors have been impressed by Anthropic's strong revenue growth, particularly with deep-pocketed enterprise customers. Earlier this month, the startup said it had reached $30 billion in annual run-rate revenue, marking a sharp increase from $19 billion just a few months before. Anthropic has risen in prominence recently after a disagreement with the US Defense Department over the safety of using its AI tools. The company also recently unveiled a new model, Mythos, that it said would be irresponsible to release widely because it can identify and exploit software vulnerabilities.

Is Anthropic's Claude Mythos a big stunt, or a real security threat? What the experts say.

The federal government summoned financial leaders to an emergency meeting over Claude Mythos last week. Anthropic put the entire tech world on notice last week with an unprecedented announcement: it made an AI model so advanced that it was too dangerous to release to the public. Anthropic said the new frontier language model, Claude Mythos Preview, would "reshape cybersecurity." Anthropic also announced the formation of Project Glasswing, an invite-only group of organizations -- including some of Anthropic's biggest competitors -- to test Claude Mythos Preview and secure their infrastructure. Anthropic said that Claude Mythos Preview "found thousands of high-severity vulnerabilities, including some in every major operating system and web browser." (Emphasis in original.) The company said Project Glasswing was necessary "to help secure the world's most critical software." By Friday, CNBC reported that Federal Reserve Chairman Jerome Powell and Treasury Secretary Scott Bessent had summoned the high priests of finance (aka banking CEOs) for an emergency meeting about the new model. New York Times writer Thomas Friedman fretted over a "terrifying" future in which any teenager armed with Claude could hack the local power grid. The reaction to Claude Mythos Preview quickly split along predictable lines. AI boosters hailed the new model as proof that artificial general intelligence (AGI) was nigh, praising Anthropic for rolling it out so responsibly. Critics and AI skeptics called Project Glasswing a big publicity stunt. So, which is it? To find out, Mashable has been reviewing Anthropic's claims and talking to AI and cybersecurity experts.

What is Claude Mythos Preview?

Claude Mythos is a new large language model that Anthropic says performs significantly better than Claude Opus 4.6, widely considered one of the best AI models in the world, especially in cybersecurity. 
"In our testing, Claude Mythos Preview demonstrated a striking leap in cyber capabilities relative to prior models, including the ability to autonomously discover and exploit zero-day vulnerabilities in major operating systems and web browsers," reads the Claude Mythos system card.

Is Claude Mythos a sign of AGI?

Artificial general intelligence refers to superintelligent AI that can perform better than humans across a wide range of tasks. It's not an exaggeration to say that our entire economy has been organized around the quest for AGI, as Anthropic, Google, Meta, xAI, and OpenAI pour hundreds of billions of dollars into a new arms race. If Claude Mythos is as capable as Anthropic says, would it be an example of AGI? The model card addresses this question directly, and Anthropic does seem to think it's close to AGI. In a section about Claude Mythos safety risks, Anthropic writes: "Current risks remain low. But we see warning signs that keeping them low could be a major challenge if capabilities continue advancing rapidly (e.g., to the point of strongly superhuman AI systems)." Of course, Anthropic has a strong financial incentive to promote this belief. Ultimately, the model card for Claude Mythos is more conservative than the reaction online would suggest. For example, while the Claude Mythos model card does show that this model performs above the trend line for previous Anthropic models, Anthropic says it does not show evidence of self-improvement or recursive growth. ("The gains we can identify are confidently attributable to human research, not AI assistance.")

Reasons to think Project Glasswing is a publicity stunt

Don't make me tap my sign: "[When] an AI salesman tells you that AI is an unstoppable world-changing technology on the order of the agricultural revolution...you should take this prediction for what it is: a sales pitch." 
I wrote those words of caution in response to an essay by Anthropic CEO Dario Amodei that warned about the potentially cataclysmic dangers of AI. Anthropic also has a history of issuing dire warnings about its AI models. You may remember the story of the Anthropic model that tried to "blackmail" a company CEO to prevent it from being turned off. In reality, Anthropic designed a test environment where blackmail was a potential outcome. This may be more akin to digital entrapment than genuine model misbehavior. So, is Claude Mythos the latest example of the industry's Chicken Little problem? On X, AI safety engineer Heidy Khlaaf listed a number of open questions that cast doubt on Anthropic's claims. Anthropic said the Claude Mythos preview found thousands of zero-day vulnerabilities. But Khlaaf says Anthropic left out key facts needed to assess this claim -- the rate of false positives, how Claude Mythos compares to existing cybersecurity tools, and exactly how much manual human review was required. "Releasing a marketing post with purposely vague language that clearly obscures evidence needed to substantiate Anthropic's claims brings into question if they are trying to garner further investment," Khlaaf told Mashable. "It also serves their 'safety first' image as they're able to frame the lack of public release, even a limited one for independent evaluation, as a public service when it simply obscures even experts' abilities to validate their claims." We reached out to Anthropic repeatedly about these concerns, but the company did not respond. We will update this article if they do. In the Claude Mythos system card, Anthropic wrote that more data will be released in the coming weeks as the bugs Mythos found are patched and fixed. Gary Marcus, an AI expert, author, and noted critic of the LLM hype machine, initially told Mashable that it was too soon to know whether Claude Mythos represented a new type of threat. 
But Marcus has grown more skeptical since we spoke to him, and he recently wrote on X that Mythos was "nowhere near as scary" as it first seemed. "Folks, you can relax. Mythos is not some off-trend exponential gain," he wrote. Cybersecurity experts told Mashable it's also very unlikely Claude Mythos could be used to "turn off the lights" or bring down critical infrastructure. "Claims about catastrophic uses of Mythos also significantly misunderstand threat models, cybersecurity risks, and the ability to propagate said risks in a way that could actually lead to safety-critical incidents," Khlaaf told us. "It's not as simple as asking a model 'hack this system,' with Anthropic's own technical blog post demonstrating a requisite of expertise that Anthropic downplays in their marketing posts." Other experts expressed skepticism, while also acknowledging that Mythos does represent a genuine risk, which Marcus has also said. "You could argue it didn't need a public announcement," said Div Garg, a Stanford AI researcher and founder of AGI, Inc. "However, ultimately, the decision to limit access to only those who develop and maintain critical software is precisely what you want a business to do in such a scenario...It's easy to criticize the limited access, but worse outcomes would arise if they released it unchecked." Tal Kollender, Founder and CEO of cybersecurity firm Remedio, told Mashable that tools like Claude Mythos are dangerous because they can exploit discovery. "It's brilliant corporate theater," Kollender said. "Labeling a model 'too dangerous to release to the public' is certainly a marketing flex because it immediately creates mystique and signals immense power to investors. But beneath the PR stunt, there is a very real, very mundane truth...The cybersecurity industry doesn't actually have a 'finding' problem. We are already drowning in tools that detect vulnerabilities. What Mythos does is automate that discovery process at an unprecedented scale." 
TL;DR: A week after revealing Claude Mythos Preview, some of Anthropic's biggest claims about the model look a lot sketchier, experts say. However, they also acknowledge that Claude Mythos, and other tools like it, pose a real risk. Still, there are plenty of very valid reasons to be nervous about the new frontier model.

Reasons to think Claude Mythos Preview is a genuine threat to global cybersecurity

In the New York Times, author Thomas Friedman conjures a scenario straight out of WarGames, where a teenager hacks the local power grid after school. That scenario seems even more far-fetched a week later. But here's a much more likely scenario: A sophisticated group of hackers uses a tool like Claude Mythos to find zero-day vulnerabilities in our digital infrastructure, launching attacks faster than organizations can respond. And that scenario should worry you. If Claude Mythos isn't the tool that can do it, most experts agree such a tool isn't far off. And some of the world's leading cybersecurity experts certainly seem worried. "I've found more bugs in the last couple of weeks [with Claude Mythos] than in the rest of my entire life combined," said Nicholas Carlini, a research scientist affiliated with Anthropic and Google DeepMind, in a video on the Project Glasswing website. "On Linux, we found a number of vulnerabilities where, as a user with no permissions, I can elevate myself to the administrator by just running some binary on my machine," Carlini said. This week, the AI Security Institute published its findings on Claude Mythos's capabilities, and it provides some independent verification that it does represent a genuine leap forward. Claude Mythos passed cybersecurity tests that no other model had ever completed, scoring higher than any other frontier model on virtually every test. 
"Our testing shows that Mythos Preview can exploit systems with weak security posture, and it is likely that more models with these capabilities will be developed," AISI concluded. AISI also identified some limitations with Claude Mythos, which would impair its effectiveness in real-world scenarios. So, was Anthropic's rollout of Mythos responsible AI stewardship or self-serving marketing? Experts I talked to said these options aren't mutually exclusive. "I'd say it's both, and that's not a criticism," said Howie Xu, Gen's Chief AI & Innovation Officer. "Any major platform rollout in this era is going to look different to different audiences depending on their fluency and their fear tolerance. What I care about is whether the intent is real, and the evidence I've seen from Anthropic suggests it mostly is." As is often the case with fear-inducing AI headlines, the reality turned out to be more complicated. "Personally, I don't go to bed worrying about a kid with Mythos hacking the power grid, but that doesn't mean the concern is fictional," said Xu. "We're at an inflection point where the creative and collaborative upside of these tools is massive, and the security infrastructure hasn't caught up. That gap is exactly what keeps me busy. Even a fractional probability of a serious incident is too much, which is why building a trust and security layer into the agentic era is my extreme focus." Finally, as Anthropic stresses in the Claude Mythos model card, tools like this will likely benefit cybersecurity defenders more than hackers in the long term. And in the short term, a more cautious approach -- like the approach being modeled with Project Glasswing -- may be warranted. TL;DR: Claude Mythos has formidable cybersecurity coding abilities, and it does represent a genuine threat. However, if hackers have access to AI tools like Claude Mythos, so will the organizations defending against such attacks.

Sources: Anthropic has fielded multiple offers from VCs valuing the company at as much as $800B in recent weeks; it was valued at $380B in February

Max Weinbach / @mweinbach: iMessage is great for agents because you get connectivity literally anywhere with satellites Imagine texting your agent in the middle of the ocean via satellites. Offline access is starting to matter way less... $AMZN buying Globalstar and merging it with its satellite business called Leo is a strategic AI robotics play IMO. When we have AI humanoid robots, a key problem will be connectivity, as these humanoids will be doing different tasks all over the world, where connectivity might be an issue. Ground networks might also be unstable and congested if we have millions of robots. Having a satellite layer as backup or primary connectivity will be really valuable, since you can offer a stable service (and "uptime") that few others can.

Crypto Exchange Kraken Prepares for IPO | PYMNTS.com

Sethi shared this news at a Semafor World Economy event in Washington, D.C., according to the report. Kraken aims to help its customers make sophisticated trades that would otherwise be available only to professional investors, Sethi said. "That's our mission: How do we make all these products open? We want to be able to help enable what you want to do with your own capital," Sethi said, per the report. Kraken announced in November that it had confidentially filed for an IPO with the Securities and Exchange Commission (SEC) and that it expected the IPO to occur after the regulator completed its review process, subject to market and other conditions. Bloomberg reported at the time, citing sources familiar with the matter, that Kraken aimed to go public as soon as the first quarter of 2026. However, on March 18, CoinDesk reported that Kraken had paused its IPO plans until market conditions improved. Since the company's November announcement, the downturn in crypto markets that began in October had continued and made companies more cautious about going public due to weaker investor sentiment. On Tuesday, German stock exchange operator Deutsche Börse announced that it has invested $200 million in Kraken. The company said the deal gives it a 1.5% stake in Kraken parent Payward and expands an existing partnership between the two groups to combine traditional financial markets and digital assets. A report by Bloomberg News calculated that the transaction values Kraken at $13.3 billion. Kraken was valued at $20 billion in November, in the second of two tranches in which it raised a total of $800 million, the company said at the time in a press release. The company said in the release that it would use the funding to accelerate its efforts to bring traditional financial products on-chain. 
Kraken added that it would expand its product suite organically and through acquisitions, deepen its regulated footprint, enter new markets and continue to expand its offerings beyond crypto.