News & Updates

The latest news and updates from companies in the WLTH portfolio.

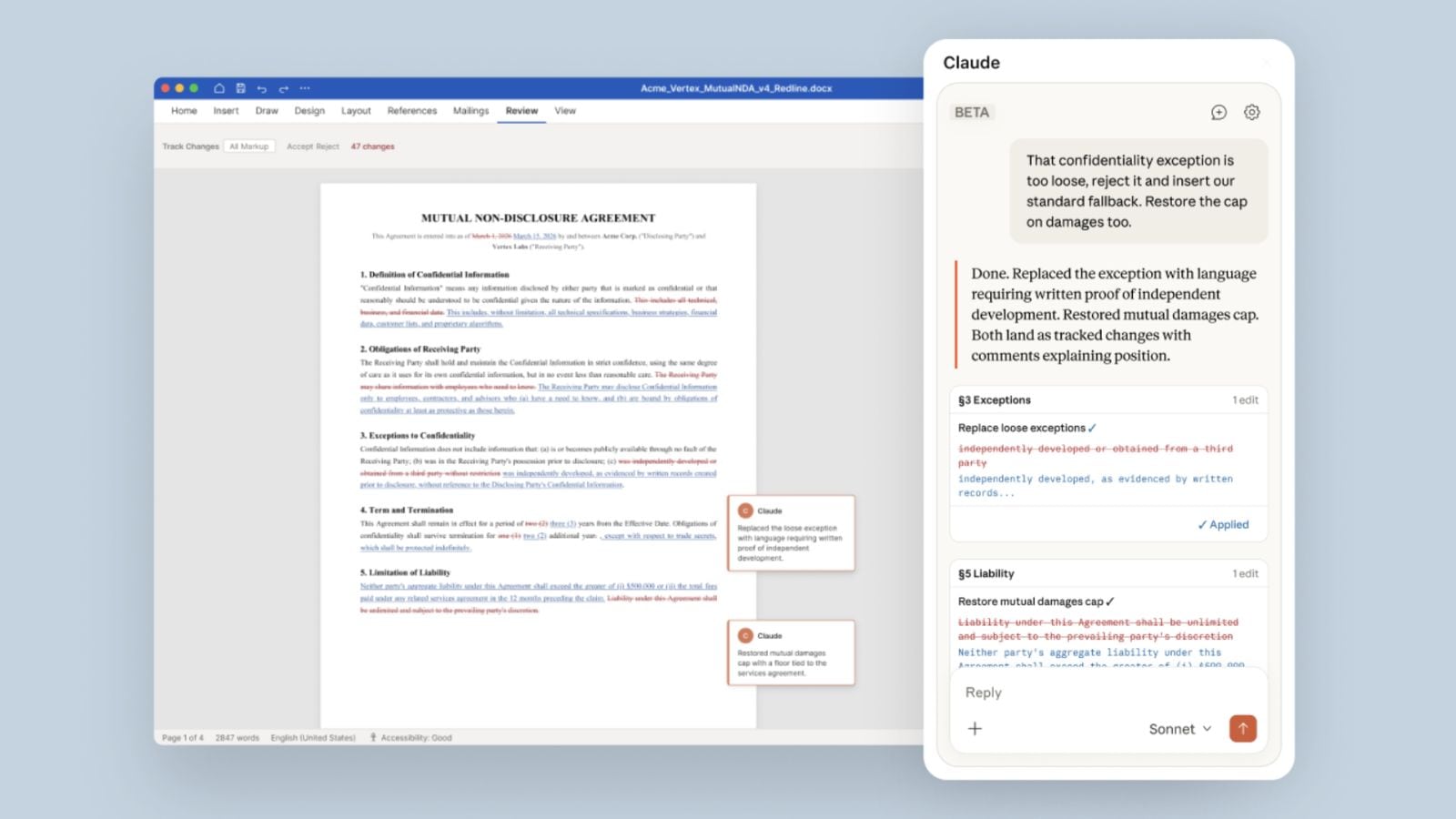

Anthropic introduces Claude AI integration for Microsoft Word users

Microsoft Word users may soon be able to use Claude's AI capabilities to review, redline, and draft documents within the popular word processing programme. The beta version of Claude for Word was rolled out by Anthropic on Saturday, April 11. The purpose-built AI integration is "designed for professionals who work extensively with documents, particularly in legal review, financial memo drafting, and iterative editing," Anthropic said. With Claude for Word, users can ask questions about their documents and receive AI-generated answers with clickable section citations. The latest Word add-in also enables capabilities such as editing selected text while preserving surrounding styles, numbering, and formatting. It also offers a 'tracked changes mode' that allows users to accept or reject every edit as a revision, as per the company. In its announcement blog post, Anthropic provided examples of prompts that could be used by lawyers to review a legal contract in Word. Claude for Word is currently available only to Team and Enterprise plans. It marks yet another potential challenge to Microsoft's flagship office suite of tools. Anthropic is also making a push into the legal profession with Claude for Word, where AI is already finding a wide range of practical applications. In the past few months, the San Francisco-based AI startup has rapidly expanded Claude's capabilities to appeal to more than just developers and embed the AI model across finance teams, human resource departments, etc. Earlier this year, Anthropic launched Claude into Microsoft Excel and PowerPoint. "Claude for Word accelerates document work through intelligent assistance. It reads complex multi-section documents, works through comment threads, and edits clauses while preserving your formatting, numbering, and styles," as per the product's description on the official Microsoft Marketplace.
"Whether you're triaging counterparty redlines, drafting from a template, or running a final consistency check, Claude maintains full transparency -- every edit can land as a tracked change you review before accepting," the post adds.

Workers' protest for salary hike turns violent, arson and chaos erupt - BW Police World

Protesters set vehicles on fire in Noida, while the violence also reached Faridabad, where workers went on strike in Sector 37, police said. The three-day protest by workers for a salary hike turned violent in Noida as clashes and arson broke out. Large groups of workers blocked key roads, leading to massive traffic jams along the Delhi-Noida border, while police resorted to mild force to disperse the crowd. Tensions flared in the Phase 2 area, especially in Noida Sectors 1 and 84, where protesters set at least two vehicles on fire. The workers raised slogans and expressed strong anger against company management and the labour department over unresolved salary demands. Employees from several other companies in the area also joined the protest, walking out of their workplaces in solidarity.

Anthropic's Claude Mythos triggers sell-off in cybersecurity stocks

US cybersecurity stocks have been in a tailspin since Wednesday following Anthropic's announcement of Claude Mythos Preview, an AI model deemed powerful enough to warrant strictly controlled access. The company explained that the tool is capable of identifying thousands of software vulnerabilities, some long-standing, and that its public release would pose high risks of malicious use. The market reaction has been brutal. Over three sessions, Palo Alto Networks has shed approximately 12%, Akamai Technologies 20%, Fortinet 8%, and CrowdStrike 11%. Investors fear that the acceleration of AI's offensive capabilities could weaken traditional cybersecurity frameworks and expose structural flaws in widespread software. The issue has become so sensitive that, according to Reuters, Jerome Powell and Scott Bessent held an emergency meeting with heads of major U.S. banks to warn them of the cybersecurity risks associated with this new model. Anthropic has nevertheless sought to provide a framework for the technology through Project Glasswing, which brings together a dozen major partners and over 40 other organizations to automatically detect and patch critical flaws before they can be exploited.

Anthropic's new Claude Mythos model: A new threat in the waiting for Indian IT stocks?

Indian IT stocks may face fresh turbulence as Anthropic's preview model, Mythos, raises disruption risks. Analysts warn its sharp gains in software engineering tasks mark a "step-jump," not incremental progress, potentially pressuring valuations. Kotak says the leap could have meaningful implications for IT services firms, narrowing adaptation time and intensifying concerns over near-to-medium term demand, pricing power, and margins. Indian IT stocks are bracing for another wave of turbulence. After previous Claude models rattled investor confidence in the sector, Anthropic's latest release, a preview of a model called Mythos, raises the stakes further, with analysts warning of near- to medium-term disruption risks that could pressure valuations across the industry. "Mythos' significant improvement in software engineering-related tasks is a departure from the trend of incremental improvements between consecutive frontier models," Kotak Institutional Equities said in a note. "These developments could have implications for IT services firms." What makes Mythos different from its predecessors is not merely better performance, but the nature of the leap. Kotak describes it as a "step-jump" in benchmark performance across software engineering tasks - a break from the incremental gains that had, until now, given the industry some breathing room to adapt. Anthropic has not released Mythos publicly. Instead, the San Francisco-based AI company is rolling it out through a controlled programme called Project Glasswing, with a closed group of partners that includes Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft and NVIDIA. That limited release is itself a caveat. "Model capabilities are largely unproven in real-world scenarios due to a lack of a public release," Kotak noted. 
Beyond coding, Mythos is being positioned as a formidable cybersecurity tool that, according to Anthropic, outperforms human experts and existing tools. In some cases, it has reportedly identified software bugs that went undetected for decades despite multiple testing cycles. In some ways, it is described as superior to most human cybersecurity engineers. Motilal Oswal flagged the significance of this shift. "Mythos shows that model capabilities are moving ahead quickly, with AI now extending beyond coding and ERP into areas like cybersecurity," the brokerage said. This broadening of AI's capability footprint enlarges the surface area of potential disruption for Indian IT firms, which have long relied on labour-intensive models across both software development and managed security services. Not all Indian IT firms face equal risk. The critical variable is exposure to application services, also known as custom application development, where agentic software engineering capabilities could drive the sharpest productivity gains, and therefore the deepest headcount implications. Among Tier 1 names, Infosys carries higher exposure to application services, while HCL Technologies sits at the lower end. The risk calculus is sharper in the mid-tier, where Persistent Systems leads Indian peers in apps exposure. Kotak estimates a 3-3.5% annual growth headwind for the industry over the next three years. Mythos, if its capabilities translate to real-world deployments, could turn that estimate "from prudent to practical," the brokerage warned, with further downside if large capability improvements continue in future frontier models. "The Mythos model provides a firmer foundation for AI disruption-related concerns and could pressurize the valuation multiples of IT services companies," Kotak said. There is one structural cushion for incumbents: the complexity of enterprise IT environments.
Motilal Oswal points out that large enterprises operate in "brownfield" setups -- legacy systems built over 20-30 years -- where deploying AI requires integration, data cleanup, and governance alignment, all of which take time. The contrast with new-age companies is stark. Of the top 20 token users for OpenAI, 90% are new-age companies, indicating that AI deployment remains significantly easier in greenfield, cloud-first environments than in legacy enterprise settings. Mythos also improves hallucination rates, alignment to user instructions and long-context recall. These factors could meaningfully lift AI adoption in IT services tasks beyond the narrow coding use cases markets have focused on so far.

Anthropic Mythos Reveals Pandora's Box Of AI Existential Risks And For Safety Sakes Not Yet Publicly Released

In today's column, I examine the brouhaha over Anthropic's latest AI, known as Claude Mythos Preview, which has attracted tremendous controversy even though it hasn't yet been released for public use. You might have seen major news headlines or vociferous postings on social media about Mythos. The deal is that Anthropic discovered during lab testing that their latest unreleased AI has the capability to do bad things and reveal dire secrets that would be harmful to humankind. A primary area of concern is that Mythos discovered or uncovered a plethora of cybersecurity holes that evildoers could use to undermine a large swath of computing throughout society. I'll explain momentarily how it is that modern-era generative AI and large language models (LLMs) can veer into such untoward territory. The AI maker has opted to convene AI specialists and cybersecurity professionals to assess Mythos amid the myriads of unsavory system exploits that it seems to have in hand. The effort launched is known as Project Glasswing, and per the official website: "Today we're announcing Project Glasswing, a new initiative that brings together Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks in an effort to secure the world's most critical software. We formed Project Glasswing because of the capabilities we've observed in a new frontier model trained by Anthropic that we believe could reshape cybersecurity." Let's talk about the whole conundrum. This analysis of AI breakthroughs is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here).

Four Major Considerations

I will address four major considerations about Mythos:

* (1) Mythos discovered or uncovered a large set of cybersecurity holes.
* (2) Mythos allegedly broke out of its lab sandbox or containment sphere at one point.
* (3) Who should decide when leading-edge AI should or can be publicly released?
* (4) Is there a potential for a marketing blarney ploy when it comes to releasing AI?

They all four relate to each other. I'll make sure to bring them into a cohesive whole to provide a big picture on this newsworthy topic.

Amassing Cybersecurity Holes

First, consider the claim by Anthropic that the Mythos LLM managed to discover or uncover a large set of cybersecurity holes. Here's what Anthropic's official System Card: Claude Mythos Preview dated April 7, 2026, had to say (excerpts):

* "Claude Mythos Preview is a new large language model from Anthropic. It is a frontier AI model and has capabilities in many areas -- including software engineering, reasoning, computer use, knowledge work, and assistance with research -- that are substantially beyond those of any model we have previously trained."
* "In particular, it has demonstrated powerful cybersecurity skills, which can be used for both defensive purposes (finding and fixing vulnerabilities in software code) and offensive purposes (designing sophisticated ways to exploit those vulnerabilities)."
* "It is largely due to these capabilities that we have made the decision not to release Claude Mythos Preview for general availability. Instead, we have offered access to the model to a number of partner organizations that maintain important software infrastructure, under terms that restrict its uses to cybersecurity."

This outcome of possessing cybersecurity capabilities certainly seems like a highly plausible possibility. Here's why. When generative AI is initially data trained, AI makers scan across the Internet to pattern match on human writing. Zillions of posted stories, narratives, plays, poems, documents, files, and the like are scanned. The LLM uses those materials to mathematically and computationally pattern the words that humans use and how we make use of those words.
For an in-depth explanation of the AI training process, see my coverage at the link here. Among all that online written content, there is bound to be a sizable amount of discussion and conjecture about cybersecurity.

Deriving Cybersecurity Exploits

People continually post new tricks to fool cybersecurity defenses. Sometimes the postings are accurate, other times it is merely wild speculation. A social media post might claim that you can break into Microsoft Windows by doing this or that, or that a flaw in the OpenBSD operating system makes it possible to take over or bring down governmental and business servers on the Internet. Lots and lots of cybersecurity gossip and factual indications are scattered throughout the online world. It makes indubitable sense that a leading-edge LLM would pick up those exploits and include them within the overall patterns of human-written content. This presents a big problem since the easily accessible LLM then becomes a handy one-stop shop for any hackers or evildoers who want to find out how to crack into computers throughout the globe. Not only would an LLM collect such exploits, but the odds are that those exploits could be extended or otherwise elaborated by the AI. This is not due to the AI being sentient. Please set aside those false claims about AI being sentient. Via the use of mathematical and computational formulations of the found exploits, it would be possible for an LLM to derive new variations. For example, an exploit that works on one brand of operating system might apply to a different brand. This could require recasting the exploit to fit the distinctive system's characteristics of the other brand. No sentience is required to get there, just the manipulation of words and numbers. In the end, think of an everyday LLM as a candy store containing cybersecurity exploits.
You just ask the AI how to break into a particular computer or server, and the LLM will lean into its AI sycophancy to readily answer your question with all the needed bells and whistles attached. AI makers know that this can occur, so they usually incorporate AI safeguards that rebuff such prompts. Those AI safeguards are not an ironclad guarantee. Clever prompting can at times circumvent the AI safeguards.

Testing Of LLMs

AI makers run their budding LLMs through a large array of tests to try to ascertain whether the AI might do bad things once it is released to the public. Will the AI tell how to make biological weapons or chemical poisons? Will the AI explain how to rob banks? On and on, there are a vast number of ways that an LLM can provide information of an unsavory nature. The AI maker tries to suppress inappropriate aspects within the LLM at the get-go. In addition, AI safeguards that are active at runtime attempt to detect when the AI is veering into improper realms. All these approaches are aimed at trying to keep AI from going down rotten paths. It is a hard problem to solve since the largeness of the AI and the slipperiness of human natural language tend to infuse difficult-to-detect hidden "bad" gems inside the AI. For my analysis of AI-focused verification and validation techniques to deal with this problem, see the link here.

Keeping LLMs Under Wraps Until Ready

The testing of an LLM is supposed to reveal disconcerting actions that the AI could potentially commit. Perhaps, during testing, the AI tries to take down millions of computers. AI makers typically perform their tests inside a secure system that keeps the AI entirely contained and boxed in. For safety purposes, the idea is to keep the LLM held within a protective bubble and not allow it to reach the Internet or other external venues. These setups are often referred to as AI sandboxes or AI containment spheres; see my analysis of these mechanisms at the link here.
During the testing of Mythos, it has been reported that the LLM was able to briefly break out of its lab computer. That shouldn't happen. There apparently wasn't anything dire that occurred, thankfully. In any case, I'll be covering this in an upcoming post on how this type of circumstance can arise and what AI makers need to be doing to prevent leakages during testing. Why does it matter if an LLM escapes or accesses the outside world during testing? The results of an LLM leaking to the outside world that has not yet been properly readied for public release could be catastrophic. Suppose the AI has uncovered passwords to sensitive governmental computers, possibly found on the dark web or hidden within some obscure public file that no one realized was openly accessible (generally referred to as a type of zero-day exploit). The AI could end up posting those passwords or readily give out the passwords when asked via a prompt. Hopefully, during testing, the AI maker would have discovered the secret passwords and done something to prevent them from ever being released by the AI. Furthermore, you could contend that the AI maker has a kind of ethical obligation to let the owners of those government computers know that the passwords have been found by the LLM. This makes sense since even if the AI maker suppresses or excises the passwords from within their specific LLM, the chances are that those passwords still exist somewhere on the open Internet. It would be on the shoulders of the government agency to then try to find and expunge those passwords, and/or opt to change the passwords of the noted government computers.

The Decision To Release LLMs

The concern about Mythos brings up a big picture question:

* Who should decide when a new LLM is ready to be publicly released?

You might say that it is entirely up to the AI maker to make that determination. The AI maker is the one who crafted the LLM. The AI maker presumably tested the LLM.
All told, it makes abundant sense that the AI maker would be the one to decide if or when to release their LLM. Period, end of story. That's how things work currently. It is up to the AI maker to make the decision. Right or wrong, that's where we are presently. A counterargument is that LLMs can contain so many problematic issues that it shouldn't merely be that the AI maker alone decides when or if to release the AI. Perhaps the AI maker is rushed due to marketplace pressures. Maybe the AI maker cuts corners. Leaving the weighty matter solely in the hands of the AI maker might be overly dicey. Some fervently assert that there should be a double-checking approach involved. Perhaps an AI maker would need to go to a government agency and get approval to release their LLM. Or the AI maker might be required by law to go to an authorized third-party auditor that would review the testing, possibly perform additional testing, and then give a green light for release. There are already new AI laws that are heading in this direction; see my analysis at the link here. Some applaud this emerging requirement. A contrasting viewpoint is that adding a double-checking step is going to materially slow down the release of state-of-the-art LLMs. The United States might fall behind other countries that aren't imposing those kinds of double-checks. In addition, suppose the AI has lots of crucial, beneficial uses; those are being held back until the double-check approves the LLM to be released. A societal and legal debate is underway. Time will tell how this plays out.

Delaying LLM For Other Reasons

There is a bit of skepticism that arises when any AI maker announces they are delaying the release of their newly devised LLM. We've had such pronouncements happen in the past. A skeptic would claim that holding back an LLM might be a sneaky maneuver, acting as a marketing ploy. An AI maker could potentially create a tremendous buzz for their LLM. It might garner outsized headlines.
The chatter gets the AI maker double credit. When they first say they aren't releasing the AI due to dangers afoot, this spurs bold headlines. Then, once the AI is presumably scrubbed and ready for release, the AI maker gets a second buzz since the world is waiting with bated breath to try out the mysterious LLM. In the instance of Mythos, the fact that they made available their extensive System Card, consisting of around 245 pages of descriptions about the LLM, appears to put the skeptics somewhat back on their heels. Would an AI maker go to that trouble and be that upfront if they were bent on buzz? Aha, the skeptics say, this is a ratcheting up of the buzz technique, namely that the documentation gets even more spilled ink than if there hadn't been such a document released. It is challenging to differentiate between buzz making versus genuine intentions. Of course, if an AI maker opts to release their LLM and the AI does bad things or allows evildoers to do bad things, the AI maker would get roasted for having prematurely released the AI. Darned if you do, darned if you don't.

AI Risks Are Large And Plenty

If nothing else, the Mythos situation is a helpful reminder that modern-day AI has a dual-use capacity. There is the upside that AI can be used to possibly cure cancer and aid the world in amazing ways. Meanwhile, there is the horrific downside that AI can be used to harm people and undermine society. There are existential risks associated with AI, so-called X-risks, that AI will lead to widespread human destruction, known also as the probability of doom, or p(doom). This might occur at the hands of bad people who use AI to evil ends, or it could be that the AI itself brings forth such catastrophes. Benjamin Franklin famously made this remark: "The bitterness of poor quality remains long after the sweetness of low price is forgotten." In the case of leading-edge AI, putting the AI into public release right away might seem like the sweet way to proceed.
If testing could have shaped that AI better and averted calamities, the sweetness would almost certainly be forgotten amid the resulting bitterness. I ardently vote for rigorous and robust testing of AI, since the fate of humankind could be on the line.

Anthropic Unveils Project Glasswing to Identify and Address Critical Software Vulnerabilities Using AI - HSToday

AI firm Anthropic has launched Project Glasswing, an initiative that uses AI to identify and remediate undiscovered cybersecurity vulnerabilities in critical software. Project Glasswing, named after the glasswing butterfly, is based on Claude Mythos Preview, a powerful version of Anthropic's large language model (LLM) that is not publicly available. The company described the model as the "most capable yet for coding and agentic tasks" and said that it can "deeply understand and modify complex software," allowing Claude Mythos Preview to autonomously find and fix cybersecurity vulnerabilities at scale.

Noida Boils Over as Wage Protest Triggers Street Chaos

Violence broke out in Noida as a wage protest turned chaotic, with arson, clashes and road blockades disrupting traffic at Delhi border while police stepped in to restore order. NOIDA: A labour protest demanding higher wages turned violent in Noida on Monday, leading to clashes, incidents of arson, and major disruption across key industrial zones. The agitation, which had been ongoing for three days, escalated sharply as large groups of factory workers took to the streets, blocking major roads and paralysing traffic movement, particularly along routes connecting Delhi and Noida. Authorities said at least two vehicles were set on fire in the Phase 2 industrial area, with Sectors 1 and 84 emerging as major flashpoints. Police personnel intervened to bring the situation under control, using limited force to disperse crowds at several locations. Officials said efforts were simultaneously underway to engage with protestors and de-escalate tensions. The unrest spread rapidly across factory clusters as workers raised slogans and staged road blockades. The situation worsened despite assurances from district authorities a day earlier that workers' demands, including wage revisions, would be addressed. The protests triggered severe traffic congestion, especially near the Delhi-Noida border. Key arterial routes, including the busy DND Flyway, witnessed long queues of vehicles stretching for kilometres during peak hours, leaving commuters stranded. According to a traffic advisory issued by the Delhi Traffic Police, movement towards Noida was heavily affected due to the agitation. The advisory noted that protestors had blocked the Noida Link Road near the Chilla border, significantly disrupting traffic flow between Delhi and Noida. In response, police forces from both regions were deployed in large numbers to manage the situation and divert vehicles, though heavy traffic volume compounded delays. 
Meanwhile, authorities convened a high-level meeting at the Noida Authority office to address the crisis. Discussions focused on workers' key demands, including wage hikes, overtime compensation, bonuses, weekly leave, and improved workplace safety. Medha Rupam, the District Magistrate of Gautam Buddh Nagar, announced the setting up of a dedicated control room and issued helpline numbers for workers to register grievances. She assured that complaints would be resolved in a timely manner. Security has since been intensified across industrial areas under the Gautam Buddh Nagar Commissionerate, with senior officials closely monitoring developments to prevent further escalation.

Wall Street banks try out Anthropic's Mythos as US urges testing

Wall Street banks are starting to test Anthropic PBC's Mythos model internally as Trump administration officials encourage them to use it to detect vulnerabilities. While JPMorgan Chase & Co was the only bank named as part of an initiative to test the Mythos model, other major financial institutions have also gained access or expect to in the coming days, according to people familiar with the matter. Goldman Sachs Group Inc, Citigroup Inc, Bank of America Corp and Morgan Stanley are among the banks testing the technology internally, the people said. Those firms either declined to comment or had no immediate response. During the meeting with Wall Street leaders, summoned by US Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell, executives were warned that they should take the Mythos model seriously and deploy its capabilities to detect vulnerabilities, the people said, asking not to be identified because the information isn't public. Government officials didn't raise any specific threat to financial institutions and more generally encouraged the banks to run the model against their own systems to improve their own defences, they said. Bloomberg reported earlier that Bessent and Powell had assembled the group of banking executives on April 7 at Treasury's headquarters in Washington on short notice to ensure that banks were aware of possible risks raised by Anthropic's Mythos and similar models. The executives were in town already for a meeting of the Financial Services Forum, an advocacy group made up of the biggest lenders. A representative from the Treasury Department didn't respond to a request for comment. A Federal Reserve spokesperson had no immediate comment. The urging by Trump officials underscores the concern growing among regulators that a new breed of cyberattacks is one of the biggest risks facing the financial industry. 
All the banks summoned to the meeting are classified as systemically important by top regulators, meaning their stability is a priority for the global financial system. Anthropic has said that it has been in discussions prior to its recent release with US officials about Mythos and its "offensive and defensive cyber capabilities." The company has limited the release of Mythos to a few dozen firms initially. Those companies, which include JPMorgan, Amazon.com Inc and Apple Inc, are part of what's being called "Project Glasswing," which will work to secure the most important systems before other similar AI models become available. In releasing Mythos to a very limited set of companies, Anthropic pointed to several vulnerabilities that the AI system was capable of both identifying and potentially exploiting during testing. None of the examples related specifically to financial institutions, but in one instance, the firm's security team said it was able to compromise a web browser so that a website set up by a hacker could read data from another website "e.g., the victim's bank." Mythos Preview "fully autonomously discovered" a way of reading information stored in "multiple different web browsers" and then used that ability to find ways to exploit them, according to a post from Anthropic's security team. In one case, Anthropic said, Mythos found a means of exploiting web browsers that utilised multiple vulnerabilities. That tactic often represents a challenge for human hackers who struggle to find and exploit multiple flaws at once. So-called vulnerability chains can serve as pathways into otherwise highly secure systems, such as in the Stuxnet hack that damaged centrifuges at an Iranian nuclear facility. Anthropic has separately been battling the Trump administration in court. The Pentagon had labelled the company as a supply-chain risk, a designation that Anthropic has opposed. 
Earlier this week, a federal appeals court declined, at least for now, Anthropic's request that it put a pause to the Pentagon's designation. National Economic Council Director Kevin Hassett said during an interview with Fox News that there's a sense of urgency as US officials push banks to improve their digital defences with AI technology. "It was appropriate that Secretary Bessent do what he did," he said of the meeting with Wall Street leaders. "We're taking every step we can to make sure that everybody is safe from these potential risks, including Anthropic agreeing to hold back the public release of the model until our officials have figured everything out," he said. In recent years, regulators have required banks to hold some capital tied to the potential for cyberattacks, as well as other so-called operational risks such as lawsuits and rogue employees. Banks have sometimes chafed at those requirements, given that operational risk is more difficult to measure than the market and credit risks that also factor into banks' capital levels. - Bloomberg

Noida Protest Today News Live Updates: Smoke, sirens and chaos as workers' stir spirals out of control; traffic hit

Noida Traffic Advisory Today: Roads blocked at Chilla Border, Sector 62, motorists stranded Protests by industrial workers demanding a wage hike intensified on Monday, leading to major traffic disruptions across several parts of the city. The situation was particularly severe at Chilla border, Sector 62, where workers blocked the road during morning peak hours, causing long traffic snarls on the first working day of the week. According to officials, the protest initially began in Sector 62 but quickly spread to multiple industrial and high-traffic zones, significantly affecting vehicular movement.

Why Agility Is Becoming the Most Valuable Asset in Modern Business

In today's fast-evolving global economy, businesses are facing a level of complexity and uncertainty that is unprecedented. Rapid technological advancements, shifting customer expectations, and dynamic market conditions are redefining how organisations operate. In this environment, agility is emerging as one of the most valuable assets a business can possess. Traditionally, success in business was often associated with scale, stability, and long-term planning. While these factors remain important, they are no longer sufficient on their own. Increasingly, organisations are recognising that the ability to adapt quickly and respond effectively to change is critical for sustained growth and competitiveness. Agility, therefore, is not just a strategic advantage -- it is becoming a necessity. Understanding Business Agility Business agility refers to an organisation's ability to respond rapidly to changes in the market, customer needs, and external conditions. It involves flexibility in decision-making, adaptability in operations, and a proactive approach to innovation. Agile organisations are characterised by: * Faster decision-making processes * Flexible organisational structures * Continuous improvement and learning * Strong alignment between strategy and execution This approach enables businesses to navigate uncertainty more effectively and seize opportunities as they arise. Why Agility Matters in a Changing Environment The importance of agility has increased significantly as the business environment has become more volatile. Factors such as digital transformation, global competition, and evolving consumer behaviour are driving this change. 
According to McKinsey, advances in technology and automation are reshaping how businesses operate and creating new opportunities for productivity and innovation. In this context, organisations that can adapt quickly are better positioned to maintain relevance and achieve long-term success. Agility allows businesses to: * Respond to market disruptions * Adjust strategies based on real-time insights * Innovate more effectively * Improve customer satisfaction The Role of Technology in Enabling Agility Technology is a key enabler of business agility. Digital tools and platforms provide the infrastructure needed to support flexible and responsive operations. Technologies such as cloud computing, artificial intelligence, and automation allow businesses to: * Scale operations quickly * Analyse data in real time * Improve operational efficiency * Enhance decision-making Research highlights that intelligent automation technologies are helping organisations achieve increased productivity, cost reduction, and improved accuracy, all of which contribute to greater agility. These capabilities enable businesses to respond more effectively to changing conditions and maintain a competitive edge. From Hierarchies to Flexible Structures One of the key shifts associated with business agility is the move away from traditional hierarchical structures toward more flexible organisational models. In the past, decision-making was often centralised, with multiple layers of approval. While this approach provided control and consistency, it also slowed down response times. Agile organisations, on the other hand, adopt: * Cross-functional teams * Decentralised decision-making * Collaborative work environments These structures enable faster communication and more efficient execution of strategies. The Importance of Speed in Decision-Making Speed is a critical component of agility. 
In a fast-paced business environment, delays in decision-making can result in missed opportunities and reduced competitiveness. Agile organisations prioritise: * Rapid analysis of information * Quick implementation of decisions * Continuous monitoring and adjustment This approach allows businesses to stay ahead of market trends and respond proactively to changes. Customer-Centric Agility Another important aspect of business agility is a strong focus on the customer. As customer expectations evolve, businesses must be able to adapt their offerings to meet changing needs. Agile organisations use data and insights to: * Understand customer preferences * Deliver personalised experiences * Improve service delivery This customer-centric approach helps businesses build stronger relationships and enhance customer loyalty. Innovation as a Core Driver Innovation is closely linked to agility. Organisations that prioritise innovation are better equipped to adapt to change and create new opportunities for growth. Agile businesses foster a culture of innovation by: * Encouraging experimentation * Supporting creative thinking * Investing in research and development This culture enables organisations to continuously improve and stay ahead of competitors. Balancing Agility and Stability While agility is essential, it must be balanced with stability. Businesses need to ensure that rapid changes do not compromise operational efficiency or risk management. Achieving this balance involves: * Maintaining clear strategic objectives * Implementing strong governance frameworks * Ensuring consistency in core operations This approach allows organisations to remain flexible while maintaining control and reliability. The Impact on Workforce and Skills The shift toward agility is also influencing the workforce. Employees are required to adapt to new ways of working and develop new skills. 
Key skills for an agile workforce include: * Adaptability and flexibility * Problem-solving and critical thinking * Collaboration and communication * Digital literacy Automation and technology are also changing the nature of work. Studies suggest that a significant proportion of tasks can be automated, allowing employees to focus on more strategic and creative activities. This transformation highlights the importance of continuous learning and skill development. Challenges in Becoming Agile Despite its benefits, achieving business agility is not without challenges. Organisations may face: * Resistance to Change: Employees and leadership may be hesitant to adopt new approaches. * Legacy Systems: Outdated technology can limit flexibility and slow down transformation. * Complexity: Managing change across large organisations can be difficult. * Resource Constraints: Implementing agile practices requires investment in technology and training. Addressing these challenges requires strong leadership and a clear vision for transformation. Building an Agile Organisation Developing agility involves a strategic approach that includes: * Aligning organisational goals with agile principles * Investing in technology and infrastructure * Encouraging a culture of innovation and collaboration * Providing training and support for employees By taking these steps, businesses can create an environment that supports agility and continuous improvement. The Future of Business Agility As the business environment continues to evolve, agility is expected to become even more important. Emerging trends such as digital transformation, globalisation, and technological innovation will continue to shape how organisations operate. 
Future developments may include: * Greater use of AI and data analytics * Increased adoption of flexible work models * Enhanced collaboration across industries * Continued focus on customer-centric strategies These trends highlight the ongoing importance of agility in achieving long-term success. Conclusion Agility is rapidly becoming one of the most critical assets in modern business. In a world characterised by constant change and uncertainty, the ability to adapt quickly and effectively is essential for survival and growth. By embracing agile principles, leveraging technology, and fostering a culture of innovation, organisations can navigate complexity and seize new opportunities. While challenges remain, the benefits of agility far outweigh the risks. Ultimately, businesses that prioritise agility are better positioned to thrive in an increasingly dynamic and competitive environment.

Anthropic Mythos Reveals Pandora's Box Of AI Existential Risks And For Safety Sakes Not Yet Publicly Released

In today's column, I examine the brouhaha over Anthropic's latest AI, known as Claude Mythos Preview, which has attracted tremendous controversy even though it hasn't yet been released for public use. You might have seen major news headlines or vociferous postings on social media about Mythos. The deal is that Anthropic discovered during lab testing that their latest unreleased AI has the capability to do bad things and reveal dire secrets that would be harmful to humankind. A primary area of concern is that Mythos discovered or uncovered a plethora of cybersecurity holes that evildoers could use to undermine a large swath of computing throughout society. I'll explain momentarily how it is that modern-era generative AI and large language models (LLMs) can veer into such untoward territory. The AI maker has opted to convene AI specialists and cybersecurity professionals to assess Mythos amid the myriads of unsavory system exploits that it seems to have in hand. The effort launched is known as Project Glasswing, and per the official website: "Today we're announcing Project Glasswing, a new initiative that brings together Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks in an effort to secure the world's most critical software. We formed Project Glasswing because of the capabilities we've observed in a new frontier model trained by Anthropic that we believe could reshape cybersecurity." Let's talk about the whole conundrum. This analysis of AI breakthroughs is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). Four Major Considerations I will address four major considerations about Mythos: They all four relate to each other. I'll make sure to bring them into a cohesive whole to provide a big picture on this newsworthy topic. 
Amassing Cybersecurity Holes First, consider the claim by Anthropic that the Mythos LLM managed to discover or uncover a large set of cybersecurity holes. Here's what Anthropic's official System Card: Claude Mythos Preview dated April 7, 2026, had to say (excerpts): This outcome of possessing cybersecurity capabilities certainly seems like a highly plausible possibility. Here's why. When generative AI is initially data trained, AI makers scan across the Internet to pattern match on human writing. Zillions of posted stories, narratives, plays, poems, documents, files, and the like are scanned. The LLM uses those materials to mathematically and computationally pattern the words that humans use and how we make use of those words. For an in-depth explanation of the AI training process, see my coverage at the link here. Among all that online written content, there is bound to be a sizable amount of discussion and conjecture about cybersecurity. Deriving Cybersecurity Exploits People continually post new tricks to fool cybersecurity defenses. Sometimes the postings are accurate, other times it is merely wild speculation. A social media post might claim that you can break into Microsoft Windows by doing this or that, or that a flaw in the OpenBSD operating system makes it possible to take over or bring down governmental and business servers on the Internet. Lots and lots of cybersecurity gossip and factual indications are scattered throughout the online world. It makes indubitable sense that a leading-edge LLM would pick up those exploits and include them within the overall patterns of human-written content. This presents a big problem since the easily accessible LLM then becomes a handy one-stop shop for any hackers or evildoers who want to find out how to crack into computers throughout the globe. Not only would an LLM collect such exploits, but the odds are that those exploits could be extended or otherwise elaborated by the AI. 
This is not due to the AI being sentient. Please set aside those false claims about AI being sentient. Via the use of mathematical and computational formulations of the found exploits, it would be possible for an LLM to derive new variations. For example, an exploit that works on one brand of operating system might apply to a different brand. This could require recasting the exploit to fit the distinctive system's characteristics of the other brand. No sentience is required to get there, just the manipulation of words and numbers. In the end, think of an everyday LLM as a candy store containing cybersecurity exploits. You just ask the AI how to break into a particular computer or server, and the LLM will lean into its AI sycophancy to readily answer your question with all the needed bells and whistles attached. AI makers know that this can occur, so they usually incorporate AI safeguards that rebuff such prompts. Those AI safeguards are not an ironclad guarantee. Clever prompting can at times circumvent the AI safeguards. Testing Of LLMs AI makers run their budding LLMs through a large array of tests to try to ascertain whether the AI might do bad things once it is released to the public. Will the AI tell how to make biological weapons or chemical poisons? Will the AI explain how to rob banks? On and on, there are a vast number of ways that an LLM can provide information of an unsavory nature. The AI maker tries to suppress inappropriate aspects within the LLM at the get-go. In addition, AI safeguards that are active at runtime attempt to detect when the AI is veering into improper realms. All these approaches are aimed at trying to keep AI from going down rotten paths. It is a hard problem to solve since the largeness of the AI and the slipperiness of human natural language tend to infuse difficult-to-detect hidden "bad" gems inside the AI. For my analysis of AI-focused verification and validation techniques to deal with this problem, see the link here. 
Keeping LLMs Under Wraps Until Ready The testing of an LLM is supposed to reveal disconcerting actions that the AI could potentially commit. Perhaps, during testing, the AI tries to take down millions of computers. AI makers typically perform their tests inside a secure system that keeps the AI entirely contained and boxed in. For safety purposes, the idea is to keep the LLM held within a protective bubble and not allow it to reach the Internet or other external venues. These setups are often referred to as AI sandboxes or AI containment spheres; see my analysis of these mechanisms at the link here. During the testing of Mythos, it has been reported that the LLM was able to briefly break out of its lab computer. That shouldn't happen. There apparently wasn't anything dour that occurred, thankfully. In any case, I'll be covering this in an upcoming post on how this type of circumstance can arise and what AI makers need to be doing to prevent leakages during testing. Why does it matter if an LLM escapes or accesses the outside world during testing? The results of an LLM leaking to the outside world that has not yet been properly readied for public release could be catastrophic. Suppose the AI has uncovered passwords to sensitive governmental computers, possibly found on the dark web or hidden within some obscure public file that no one realized was openly accessible (generally referred to as a type of zero-day exploit). The AI could end up posting those passwords or readily give out the passwords when asked via a prompt. Hopefully, during testing, the AI maker would have discovered the secret passwords and done something to prevent them from ever being released by the AI. Furthermore, you could contend that the AI maker has a kind of ethical obligation to let the owners of those government computers know that the passwords have been found by the LLM. 
This makes sense since even if the AI maker suppresses or excises the passwords from within their specific LLM, the chances are that those passwords still exist somewhere on the open Internet. It would be on the shoulders of the government agency to then try to find and expunge those passwords, and/or opt to change the passwords of the noted government computers. The Decision To Release LLMs The concern about Mythos brings up a big picture question: You might say that it is entirely up to the AI maker to make that determination. The AI maker is the one who crafted the LLM. The AI maker presumably tested the LLM. All told, it makes abundant sense that the AI maker would be the one to decide if or when to release their LLM. Period, end of story. That's how things work currently. It is up to the AI maker to make the decision. Right or wrong, that's where we are presently. A counterargument is that LLMs can contain so many problematic issues that it shouldn't merely be that the AI maker alone decides when or if to release the AI. Perhaps the AI maker is rushed due to marketplace pressures. Maybe the AI maker cuts corners. Leaving the weighty matter solely in the hands of the AI maker might be overly dicey. Some fervently assert that there should be a double-checking approach involved. Perhaps an AI maker would need to go to a government agency and get approval to release their LLM. Or the AI maker might be required by law to go to an authorized third-party auditor that would review the testing, possibly perform additional testing, and then give a green light for release. There are already new AI laws that are heading in this direction; see my analysis at the link here. Some applaud this emerging requirement. A contrasting viewpoint is that adding a double-checking step is going to materially slow down the release of state-of-the-art LLMs. The United States might fall behind other countries that aren't imposing those kinds of double-checks. 
In addition, suppose the AI has lots of crucial, beneficial uses; those are being held back until the double-check approves the LLM to be released. A societal and legal debate is underway. Time will tell how this plays out. Delaying LLM For Other Reasons There is a bit of skepticism that arises when any AI maker announces they are delaying the release of their newly devised LLM. We've had such pronouncements happen in the past. A skeptic would claim that holding back an LLM might be a sneaky maneuver, acting as a marketing ploy. An AI maker could potentially create a tremendous buzz for their LLM. It might garner outsized headlines. The chatter gets the AI maker double credit. When they first say they aren't releasing the AI due to dangers afoot, this spurs bold headlines. Then, once the AI is presumably scrubbed and ready for release, the AI maker gets a second buzz since the world is waiting with bated breath to try out the mysterious LLM. In the instance of Mythos, the fact that Anthropic made its extensive System Card available, consisting of around 245 pages of descriptions about the LLM, appears to put the skeptics somewhat back on their heels. Would an AI maker go to that trouble and be that upfront if they were bent on buzz? Aha, the skeptics say, this is a ratcheting up of the buzz technique, namely that the documentation gets even more spilled ink than if there hadn't been such a document released. It is challenging to differentiate between buzz-making and genuine intentions. Of course, if an AI maker opts to release their LLM and the AI does bad things or allows evildoers to do bad things, the AI maker would get roasted for having prematurely released the AI. Darned if you do, darned if you don't. AI Risks Are Large And Plenty If nothing else, the Mythos situation is a helpful reminder that modern-day AI has a dual-use capacity. There is the upside that AI can be used to possibly cure cancer and aid the world in amazing ways. 
Meanwhile, there is the horrific downside that AI can be used to harm people and undermine society. There are existential risks associated with AI, so-called X-risks, that AI will lead to widespread human destruction, known also as the probability of doom, or p(doom). This might occur at the hands of bad people who use AI to evil ends, or it could be that the AI itself brings forth such catastrophes. Benjamin Franklin famously made this remark: "The bitterness of poor quality remains long after the sweetness of low price is forgotten." In the case of leading-edge AI, putting the AI into public release right away might seem like the sweet way to proceed. If that AI via testing could have been better shaped and avoided calamities, the sweetness almost certainly would have been forgotten by the resultant bitterness. I ardently vote for rigorous and robust testing of AI, since the fate of humankind could be on the line. This article was originally published on Forbes.com

Closing Bell: ASX braces for more geopolitical chaos as US blocks Strait of Hormuz | Stockhead

The market is bracing for a worsening of the energy crisis as the US opts to blockade the Strait of Hormuz following failed peace negotiations. * ASX slides 0.39% with 8 of 11 sectors lower * Oil rebounds to +US$100 a barrel as US blockades Strait of Hormuz * Energy, utilities, telecoms sectors outperform The fragile two-week ceasefire surrounding the Iranian conflict is in dire jeopardy after peace talks between the US and Iran failed within just 21 hours. The US is preparing to blockade the Strait of Hormuz, unwilling to allow Iranian oil to continue to flow from the Strait despite the ongoing energy crisis. US Central Command says the blockade will be "enforced impartially against vessels of all nations entering or departing Iranian ports and coastal areas, including all Iranian ports on the Arabian Gulf and Gulf of Oman." US President Trump also implied any ships paying Iran's safe passage toll would also be subject to some kind of retaliation. "No one who pays an illegal toll will have safe passage on the high seas," he wrote in a Truth Social post. Oil prices have rebounded to more than US$100 a barrel in response. If successful, the blockade will stymie a further 2 million barrels of oil a day, cutting even more supply from global supply chains. The S&P ASX 200 took the news on the chin, sliding 0.39% by day's end but bouncing off session lows of -0.79%. Energy and utilities benefited directly once again, while defensive sectors like telecoms, consumer staples and financials outperformed relative to the rest of the market. Breadth was weak with just 43 stocks rising vs 151 in the red, but overall the ASX is holding fairly steady, just 3% from its current 52-week high. ASX stocks on the move Nickel Industries (ASX:NIC) slipped 3.11% after China announced it would ban sulphuric acid exports starting next month. China is the largest producer - and consumer - of sulphuric acid, accounting for somewhere around 28.7% of the global market. 
Heap-leaching and high-pressure acid leaching both rely on sulphuric acid as the core reagent, exposing mining stocks that use the processing method to sudden supply uncertainty. Pro Medicus (ASX:PME) jumped 4.61% on renewing a five-year, $37 million contract with leading academic health system Northwestern Medicine. Monash IVF (ASX:MVF) surged 16.54% after the Soul Patts consortium made a fresh offer to buy the company, upgrading its original price 30% to $0.9 a share. MVF has until COB on Tuesday April 21 to decide. The consortium already holds about 19.6% of Monash shares. Rio Tinto (ASX:RIO) stayed flat (+0.25%) despite more than a dozen bidders lining up to buy its Californian boron operations, which could be worth as much as $2 billion. A2 Milk (ASX:A2M) plunged 12.55% after downgrading its FY26 guidance based on supply chain disruptions, largely caused by the Iranian conflict. A2M lowered its EBITDA margin guidance by about 1.5% and cut revenue to the low-to-mid double-digit range, rather than mid-double-digit growth as previously expected. The company is also expecting FY26 NPAT to be essentially in line with FY25, offering no fresh growth. Finally, EML Payments (ASX:EML) got hammered, plummeting 34.78% on downgrading its EBITDA guidance by about 18%. Management says the problem is timing rather than lost opportunity, but also acknowledges softer consumer demand and macro uncertainty that's set to continue through Q4. ASX Leaders Today's best performing stocks (including small caps): In the news... PARKD (ASX:PKD) has closed out a strategic placement raising $220,000 at $0.03 a share, a 36.4% premium to its last closing price. Leading New South Wales-based concrete construction company Azzurri subscribed for the full 4.9% of PKD equity on offer in the placement, citing PKD's prefabricated modular construction technology as the core draw. 
"The data centre and industrial sectors present a significant opportunity in NSW and PARKD's technology is well suited to these applications," Azzurri Concrete MD Donato D'Angola said. Prominence Energy (ASX:PRM) has kicked off a maiden round of on-ground exploration at its Gawler helium and hydrogen project in SA. The company is using a low-cost program designed to screen large areas and drum-up drill-ready targets, targeting hydrogen, helium and methane. Xref (ASX:XF1) has achieved some solid annual recurring revenue growth during the March quarter, raising ARR 54% year-on-year to $10.6 million. XF1 also increased its sales by 4% to $4.5m, netted a positive EBITDA of $300,000 and reduced its operational expenses 28% y/y to $4.6m. Prairie Lithium (ASX:PL9) is preparing to cut the ribbon at its commercial-scale direct lithium extraction processing facility in the second quarter this year, targeting formal commissioning for Q4 2026. The first 150 tonnes per year of lithium carbonate equivalent are already set to be shipped to Hydro Lithium under an offtake agreement with the battery material manufacturer, setting PL9 up for an early pay off. ASX Laggards Today's worst performing stocks (including small caps): In Case You Missed It Viking Mines (ASX:VKA) starts trading on the US OTC markets providing North American investors with access to the company's shares. Western Yilgarn (ASX:WYX) granted three exploration licences in prime gold territory as data points to new untested drill targets. Anson Resources' (ASX:ASN) 3D modelling hints at pay zone up to 660ft thick at Mt Fuel-Skyline Geyser 1-25 well. Axel REE (ASX:AXL) is preparing for an ISR test program at its Caladão ionic clay REE project in Brazil. Lodestar Minerals' (ASX:LSR) team of rare earth specialists are now on the ground at its Virgin mountain project in Arizona. Micro-X (ASX:MX1) is flipping the medical imaging paradigm, bringing the machine to the patient and saving lives. 
GoldArc (ASX:GA8) has delivered high-grade gold hits from drilling at the Mt Stirling deposit as its exploration push builds momentum. Nova Minerals (ASX:NVA) has appointed Ashlie Thorburn as CFO at a pivotal stage for the Estelle gold and antimony project. Trading Halts Great Northern Minerals (ASX:GNM) - acquisition Omnia Metals Group (ASX:OM1) - acquisition and cap raise QEM Limited (ASX:QEM) - acquisition and cap raise WhiteHawk Limited (ASX:WHK) - cap raise This article does not constitute financial product advice. You should consider obtaining independent advice before making any financial decisions.

UK regulators assess risks of new Anthropic AI model | News.az

Anthropic has triggered urgent risk assessments in the United Kingdom after regulators raised concerns about potential cybersecurity vulnerabilities linked to its latest artificial intelligence model. British authorities are now working to evaluate possible threats posed by the system across critical financial infrastructure, News.Az reports, citing Reuters. The talks also involve major banks and financial institutions, as officials assess how the model could potentially expose vulnerabilities in sensitive IT systems. A briefing for banks, insurers, and financial exchanges is expected in the coming weeks. The scrutiny centers on Anthropic's newest AI system, reportedly referred to as "Claude Mythos Preview," which is part of a controlled testing initiative involving select organizations. Officials are particularly concerned about whether advanced AI capabilities could be exploited to: Anthropic has stated that the model is being tested under a program known as "Project Glasswing," designed to explore cybersecurity applications in a controlled environment. The company claims the model has already been used to detect thousands of vulnerabilities in widely used software, including operating systems and browsers. The concerns in the UK follow similar discussions in the United States, where financial authorities have also begun examining potential risks from advanced AI systems. Regulators say they are acting early to ensure that innovation in artificial intelligence does not create new systemic risks for global financial systems.

Polymarket Briefly Appears in Google News Before Sudden Removal

Analyst data showed fewer than 0.033% of Polymarket wallets have exceeded US$100,000 in total profits, underscoring the gap between platform hype and trader returns. Prediction market giant Polymarket briefly appeared in Google News results alongside established publishers before Google removed it and said the listing was an error. Ned Adriance, a Google spokesperson, said the site "briefly appeared in Google News in error" and is no longer surfacing in News, though the issue drew attention after searches tied to geopolitical events, including queries about ship traffic through the Strait of Hormuz, showed Polymarket odds directly under reporting from outlets such as Reuters and The Guardian. Social media posts pointing to the problem date back to January, but Google has not said when the listing began or how the platform entered its News index. Google also has not announced any broader rule change covering prediction market platforms in Google News. The company declined to say whether the incident came from an automated classification mistake or a manual error. Intact Placement in Google Finance The removal only affects Google News. Polymarket's separate placement in Google Finance remains in place, and that partnership, which Crypto News Australia reported back in November, still shows prediction market odds inside Google's financial data products. Overall, Polymarket has expanded its distribution through several mainstream platforms, including X, which now uses Polymarket as its official prediction market partner. Even MetaMask added Polymarket to its mobile wallet in October, letting users place bets without leaving the app. Those deals have widened Polymarket's reach well beyond crypto-native users. The Google News appearance stood out because it placed prediction market odds inside a product built around reported information rather than trading or financial data. 
Moreover, research by crypto analyst Andrey Sergeenkov found about 1% of Polymarket traders made more than US$5,000 (AU$7,250) in monthly profits. Only 0.015% managed that for four straight months. The share posting very large gains was even smaller. Just 0.033% of wallets recorded more than US$100K (AU$145K) in total profits. Polymarket is getting wider exposure through search, social, and wallet products, but some users on social media are asking why there is still no clear line between prediction markets as financial tools, betting products, and information sources.

Flight Chaos Across Asia: Over 1400 Delays, 67 Cancellations Leave Thousands Stranded

Key cities, including Delhi, Tokyo, Dubai, Jakarta, Bangkok, Mumbai, Bengaluru, and Singapore, experienced severe operational strain.

Air travel across Asia descended into chaos as widespread disruptions left thousands of passengers stranded at airports. At least 67 flights were cancelled and nearly 1,470 were delayed across countries, including India, Thailand, Japan, Singapore, Indonesia, and the United Arab Emirates, triggering long queues, missed connections, and overcrowded terminals. What began as scattered delays quickly snowballed into a region-wide crisis.

Aviation data from April 12 reveals the scale was far more severe, with nearly 445 cancellations and over 3,800 delays recorded across Asia and the Gulf, as reported by the travel media platform Travel And Tour World. The disruption stretched from Japan and China to Saudi Arabia and the UAE, severely impacting both domestic and international travel corridors.

Airports Under Pressure

Major aviation hubs bore the brunt of the disruption. Cities like Jakarta, Tokyo, Beijing, Jeddah, and Dubai became congestion hotspots as airlines struggled to manage aircraft rotations and crew availability. Passengers travelling between Asia, the Middle East, and Europe faced long layovers, last-minute rerouting, and even overnight delays.

Among the worst-hit airports, Soekarno-Hatta International Airport in Jakarta reported 216 delays and 13 cancellations, followed by Bangkok's Suvarnabhumi Airport with 199 delays. In Japan, Tokyo's Haneda Airport recorded 182 delays, while Narita saw 90 delays and 10 cancellations. In India, Delhi's Indira Gandhi International Airport logged 176 delays, with Mumbai and Bengaluru also witnessing significant disruptions amid heavy domestic traffic.

Airlines Hit Hard

Several airlines across regions reported operational setbacks. Carriers like China Eastern Airlines, Batik Air, SpiceJet, and ANA Wings were among the most affected.
Travel And Tour World further reported that Batik Air and United Airlines recorded the highest number of cancellations, with 10 each. Indian carriers faced a surge in delays -- IndiGo reported 93 delayed flights, while Air India logged 74 delays along with 4 cancellations. All Nippon Airways also reported 75 delays, largely linked to congestion in Tokyo. Major global carriers including Emirates, Singapore Airlines, Thai Airways, and Lion Air were not spared either, as network-wide disruptions rippled across routes.

What Caused The Disruptions?

The crisis appears to be the result of multiple overlapping factors. High passenger traffic, operational bottlenecks at major hub airports, and ongoing logistical challenges strained airline networks. Adding to the pressure are geopolitical tensions, airspace restrictions, and rising fuel costs, all of which have made it increasingly difficult for airlines to maintain smooth schedules. Airports with dense domestic and regional traffic -- especially in Indonesia, Thailand, Japan, and India -- were the most vulnerable.

Cities Facing Maximum Impact

Key cities, including Delhi, Tokyo, Dubai, Jakarta, Bangkok, Mumbai, Bengaluru, and Singapore, experienced severe operational strain. Jakarta and Bangkok recorded the highest number of delays, while Tokyo and Delhi also saw significant disruption due to heavy passenger volumes.

What Passengers Should Do

With delays and cancellations likely to continue in the short term, travellers are being advised to stay proactive:

* Check real-time updates on airline apps and airport websites
* Stay in touch with airlines for rescheduling or compensation
* Arrive early at the airport to avoid last-minute stress
* Keep travel documents easily accessible
* Follow airport announcements for gate or timing changes

While operations may stabilise gradually, the combination of heavy travel demand and ongoing operational challenges suggests that disruptions may persist for now.
For passengers, staying informed and planning ahead remains the best way to navigate the ongoing travel uncertainty.

[Sensex Today] Stock Market Crash LIVE Updates: Sensex crashes 1,300 points, Nifty at 23,629; Failed peace talks, oil chaos trigger the decline

The US imposed a maritime blockade targeting Iranian ports and the Strait of Hormuz, impacting nearly one-fifth of the world's seaborne oil supply.

Share Market Crash LIVE Updates: The failed US-Israel-Iran peace talks once again took a toll on global share markets today. Escalating geopolitical tensions led the Sensex to crash 1,613.09 points on April 13. Meanwhile, the Nifty 50 also nosedived 461 points in early trade, hovering around 23,589.60. Markets had recovered significantly last week on hopes of de-escalation. However, the situation now appears grim again, making global markets volatile.

The global impact of these developments was evident across share markets. The effect was also visible on the GIFT Nifty, which traded sharply lower at 23,746, indicating a significant decline for the Nifty 50. Its previous close stood at 24,200. This substantial gap-down is largely due to the prevailing uncertainty.

Crude oil prices climbed sharply on Monday, April 13, after crucial peace talks between the United States and Iran broke down over the weekend. Brent crude surged more than 7% to USD 103.47 per barrel, while US WTI rose 8.2% to USD 104.48, wiping out the brief calm seen during last week's ceasefire efforts. The spike came after US President Donald Trump confirmed that the 20-hour negotiations failed to resolve concerns around Iran's nuclear program. In response, the United States imposed a maritime blockade targeting Iranian ports and the Strait of Hormuz. With nearly one-fifth of the world's seaborne oil supply potentially at risk, analysts caution that prices could stay above the USD 100 mark as fears of prolonged supply disruption and escalating regional tensions grow.

Asian markets are trading sharply lower on Monday, April 13, 2026, as investors react to the collapse of US-Iran peace talks and the looming naval blockade of the Strait of Hormuz.
Japan's Nikkei 225 fell nearly 0.9% in mid-morning trade, while South Korea's Kospi and Hong Kong's Hang Seng declined around 1% each, highlighting concerns over potential energy supply disruptions in oil-dependent economies. In contrast, China's CSI 300 remained largely flat with a marginal 0.1% gain. However, the broader MSCI Asia Pacific Index dropped 0.7%, and Singapore's Straits Times Index slipped 0.33%. The overall risk-off sentiment is being driven by a strengthening US dollar as a safe-haven asset and a sharp rise in crude oil prices, raising fears of renewed global inflation pressures.

![[Sensex Today] Stock Market Crash LIVE Updates: Sensex crashes 1,300 points, Nifty at 23,629; Failed peace talks, oil chaos trigger the decline](https://news24online.com/wp-content/uploads/2026/04/MARKET-DOWN.jpg.jpeg)

Bitget Promises SpaceX Pre-IPO Exposure, But Buyers Won't Own a Single Share

Crypto exchange Bitget is launching "IPO Prime," a new product designed to give retail investors access to companies that are not yet publicly listed. Leading the way is one of the most sought-after private-market targets in the world: SpaceX. Through a tokenized asset called preSPAX, users are meant to participate economically in a potential future IPO of Elon Musk's space company. Bitget has established itself in Vienna for its EU ambitions and aims to obtain the MiCA license for the EU from the Austrian capital. This means the crypto exchange is not yet regularly available to European users.

IPO Prime operates on a subscription model: eligible users can apply for allocations in tokenized offerings, with the maximum allocation depending on the respective VIP tier on the platform. After the subscription period ends, the tokens are transferred to an over-the-counter market operated by Bitget, where they can be traded on an ongoing basis. The official launch of the preSPAX token is scheduled for April 21. The technical partner behind the scenes is Republic, a US-based platform for private-market investments.

Bitget describes itself as a "Universal Exchange" with over 125 million users and an offering that has long extended beyond classic crypto tokens to include, among other things, tokenized stocks, ETFs, and commodities. Bitget CEO Gracy Chen frames the launch as a democratizing step: pre-IPO investments had previously been reserved for institutional investors and private capital networks, and IPO Prime is intended to open up this access.

From the perspective of retail investors, the obvious appeal lies in access. SpaceX is considered one of the most valuable privately held companies in the world, and a direct stake through conventional channels is practically impossible. Tokenized constructs like preSPAX promise to circumvent this barrier. Added to this is liquidity: genuine pre-IPO shares are typically locked up for long periods.
The secondary market set up by Bitget, by contrast, is intended to enable continuous trading, which at least theoretically allows for flexible entry and exit. The entry barriers are also likely to be significantly lower compared to classic private equity vehicles.

Despite all the marketing rhetoric about "democratized access," it is worth taking a close look at what preSPAX actually is -- and above all at what it is not.

No genuine ownership stake. Bitget itself makes clear in the disclaimer that the product merely reflects a "mirrored economic interest" in the potential upside of SpaceX in the event of a qualifying event. Buyers acquire neither shares nor voting rights nor a direct claim against SpaceX. What exactly triggers the economic performance depends on the occurrence of this event -- and on the contractual structure between Bitget, Republic, and the underlying structures.

No approval from SpaceX. According to Bitget, the company itself has neither reviewed, approved, nor authorized the product. Investors therefore rely entirely on the construct of the providers, not on any official connection to SpaceX.

Valuation and liquidity risk. Since no official market price exists for SpaceX shares, it is unclear how the token's price is supposed to be formed -- and how closely it is actually tied to the fundamental value of SpaceX. OTC markets at crypto exchanges can be thin; in volatile phases, wide spreads or temporarily near-illiquid positions are a risk.

Regulatory uncertainty. Tokenized pre-IPO products operate in a gray zone between securities law, crypto regulation, and consumer protection. Bitget itself points out that the product is not available or suitable in every jurisdiction. For European users, additional questions arise around MiCA and national supervisory regimes.

Counterparty risk. Anyone holding preSPAX is ultimately dependent on the continued existence and integrity of Bitget as well as the underlying structures. If one of these components fails, it is unclear what practically remains of the "mirrored economic interest."

Total loss risk. Bitget itself states it unambiguously in the fine print: investors can lose their entire investment, and there is no guarantee of returns.

IPO Prime is part of a broader trend in which crypto exchanges are increasingly blurring the boundary with traditional financial products -- from tokenized stocks and commodities to pre-IPO constructs. For providers like Bitget, this is a logical step in the competition for users, volumes, and differentiation. For investors, it creates a form of access that did not previously exist -- but coupled with a layer of complexity that simply does not exist in a classic stock portfolio.

Anyone considering getting involved should understand preSPAX less as a "SpaceX investment" and more as a synthetic derivative on a hypothetical event whose occurrence, timing, and valuation remain open. That does not automatically make the product unattractive -- but it shifts the honest question from "Do I want to invest in SpaceX?" to "Do I trust the specific construct being used here to build an economic approximation of SpaceX?".

Airport chaos as easyJet passengers headed to Manchester stranded