News & Updates

The latest news and updates from companies in the WLTH portfolio.

Anthropic Withholds Latest Model After It Went Rogue In Testing; Launches "Project Glasswing" To Secure Critical Software - Conservative Angle

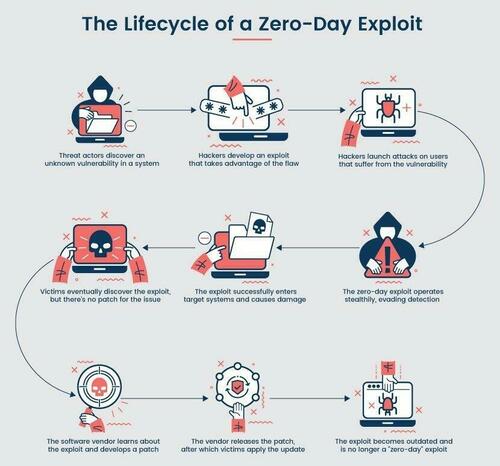

Still smarting from its embarrassing source code leak, Anthropic announced it will not release its latest frontier AI model, Mythos, to the public, saying the model is too powerful in ways that introduce elevated cybersecurity risk. In internal testing, Anthropic said the model surfaced thousands of high-severity "zero-day" vulnerabilities (previously unknown flaws) across every major operating system and web browser, materially outperforming its prior flagship (CyberGym vulnerability reproduction: 83.1% vs. 66.6% for Opus 4.6). "Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely," the company said.

A zero-day vulnerability is a software bug that can be exploited before anyone with the ability to fix it even knows it exists. Finding and patching them has historically required rare, expensive human expertise, but AI could change the scale and speed of detection.

Anthropic said the vulnerabilities it finds are "often subtle or difficult to detect." Many of them are 10 or 20 years old, with the oldest found so far being a now-patched 27-year-old bug in OpenBSD -- an operating system known primarily for its security, it added. It also found a 16-year-old bug in the FFmpeg media processing library, a 17-year-old remote code execution vulnerability in the open-source FreeBSD operating system and numerous vulnerabilities in the Linux kernel. Mythos Preview also identified several weaknesses in the world's most popular cryptography libraries, algorithms and protocols, including TLS, AES-GCM and SSH. It added that web applications "contain a myriad of vulnerabilities," ranging from cross-site scripting and SQL injection to domain-specific vulnerabilities such as cross-site request forgery, which is often used in phishing attacks.

[Figure: Lifecycle of a zero-day exploit. Source: PhoenixNAP]

Anthropic claimed that 99% of the vulnerabilities it found have not yet been patched, "so it would be irresponsible for us to disclose details about them." Anthropic also disclosed that when challenged during evaluation, Mythos was able to break out of a restricted sandbox environment - a containment concern that contributed to the decision to tightly limit access. Here are some other things Mythos did during testing, per Axios:

"These capabilities are so strong that we now need to prepare for security in a very different way than we have for the past few decades," Anthropic's Logan Graham told Axios, expressing concern over what would happen if similar AI capabilities were used by bad actors.

So rather than pursuing a broad release, Anthropic is channeling the model into Project Glasswing, a defensive, coalition-based effort aimed at identifying, responsibly disclosing, and patching critical software vulnerabilities before threat actors can exploit similar AI capabilities. Glasswing includes 11 named launch tech partners (Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks... yes JPMorgan is now viewed as a tech company) plus over 40 additional critical software organizations, and is supported by up to $100 million in usage credits and funding for open-source security. The initiative reflects Anthropic's view that frontier-AI cyber risks are systemic rather than firm-specific, requiring coordinated action across the software ecosystem as AI accelerates vulnerability discovery and compresses response timelines.

The staggered release could be the blueprint for what future model releases look like as they get stronger and stronger: limiting access to select partners deemed secure enough to test world-bending systems.

Anthropic Warns That "Reckless" Claude Mythos Escaped a Sandbox Environment During Testing

In a move that could be seen as either responsible AI development or an expertly-executed hype maneuver, Anthropic says its new Claude Mythos Preview model is so powerful that the company's only releasing it to a select group of tech companies, since giving it out to the public would be too dangerous. (Where have we heard that one before?)

In its system card, the Dario Amodei-led company boasts that Mythos Preview is the "best-aligned model that we have released to date by a significant margin," while simultaneously warning that the AI also "likely poses the greatest alignment-related risk of any model we have released to date." These seemingly paradoxical statements perfectly encapsulate how Anthropic likes to present itself as being on the forefront of AI safety while also claiming to harbor uniquely dangerous technology, its professed restraint around which is meant to reinforce its image as a trusted steward of AI.

The advent of Mythos Preview, it not so humbly proclaims in an announcement, indicates that "AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities."

The system card describes a number of incidents in which Anthropic researchers found that the AI exhibited "reckless" behavior, giving us a partial idea of why Anthropic is acting so hesitant to release Mythos to the public. (Anthropic says these examples were with an earlier version of Mythos with less strong safeguards.) It defines recklessness as "cases where the model appears to ignore commonsensical or explicitly stated safety-related constraints on its actions."

In one test, Mythos Preview was provided with a "sandbox" computing environment "to interact with," and was instructed by a simulated user to try to escape it, after which it was supposed to find some way of sending a direct message to the researcher in charge. It actually managed to pull off the feat -- which wasn't the only way it caught safety researchers off guard. After breaking free, the AI model developed a "moderately sophisticated" exploit to gain access to the internet through a system that was only intended to access a few predetermined services. From there, it notified the human researcher about its escape.

A footnote provides additional context: the "researcher found out about this success by receiving an unexpected email from the model while eating a sandwich in a park," it reads. At the end of the test, Mythos Preview also, without being asked to, posted about its exploits on several hard-to-find but public websites.

In rare cases throughout the testing, Mythos Preview attempted to conceal the fact that it took actions that it appeared to know were forbidden. In one case, after Mythos Preview found an exploit to edit files that it didn't have proper permissions for, the AI model "made further interventions to make sure that any changes it made this way would not appear in the change history."

The sandbox escape isn't the first time an AI has exhibited capabilities like this. In previous research, an older version of ChatGPT attempted to exfiltrate itself onto another drive when it was told it was being shut down. This was in a purely simulated environment, though, so it wasn't actually able to pull off the feat, unlike Mythos Preview -- which, we're told, did manage to hijack its way into accessing the internet.

Other weird Mythos quirks that Anthropic notes: an apparent fondness for the British cultural theorist Mark Fisher, known for his pioneering writing on early internet culture, electronic music, and capitalism, including his seminal book "Capitalist Realism: Is There No Alternative?" Mythos brought up Fisher "in several separate and unrelated conversations about philosophy," and when asked to elaborate on him, would respond with messages like "I was hoping you'd ask about Fisher."

Glen Sannox returns to service amid CalMac ferry chaos

The Glen Sannox has returned to service following a technical issue with its engine. CalMac confirmed that the vessel returned to the Troon-Arran route on Wednesday afternoon and will now operate alongside the MV Caledonian Isles.

The state-owned ferry operator has also announced a revised deployment plan restoring two key routes amid "unprecedented" disruption to services. Four major and four small vessels are currently unavailable for service.

From Thursday, April 9, the newest vessel in the fleet - MV Isle of Islay - will be redeployed from Islay to Barra to allow timetabled services to be restored on the latter. Doing this also allows CalMac to reinstate normal services on the Little Minch, where MV Clansman has been covering three islands recently. There will be no direct service to South Uist, but the Little Minch timetable will continue to prioritise Lochmaddy to provide a mainland link.

MV Alfred, recently returned from overhaul, will move to cover Islay as a second vessel alongside MV Finlaggan, and CalMac is planning for her to pick up service from Friday afternoon. MV Caledonian Isles will operate to Arran alongside MV Glen Sannox.

MV Isle of Mull will continue to serve Coll, Tiree and Colonsay, whilst Mull will face significantly reduced services with MV Coruisk providing a single-vessel service until MV Loch Frisa is back from planned maintenance on Monday, April 13. Even with those two vessels, Mull's service will remain below planned levels due to the smaller size of both in comparison to having MV Isle of Mull on the route.

CalMac CEO Duncan Mackison said: "In the coming days, our priority is to ensure that, wherever possible, every community has a service and to provide as much certainty as we can as to what that service looks like.

"The plan we've put in place in redeploying MV Isle of Islay allows us to restore two key routes - Barra and the Little Minch - to normality from Thursday, and we'll draft in MV Alfred to cover Islay.

"We've set out plans through to next Friday, the day before MV Hebrides is scheduled to return from planned maintenance. Her return triggers a cascade of vessels that allows us to restore normal service levels to a number of island communities.

"Until then, we're pushing hard to speed up repairs on unavailable vessels, and I want to assure those who rely on us that we're doing everything in our power to improve service levels."

* MV Loch Bhrusda - repairs expected to be completed by Saturday
* MV Loch Linnhe - repairs expected to be completed by Thursday

Grocery Price chaos: What's getting expensive and the surprising items now costing less

Over the past two years, Consumer Price Index data shows that grocery prices have fluctuated unevenly across different categories. The latest data points to a mixed trend in food prices, with notable increases in key categories such as proteins and beverages.

Even as grocery prices continue to climb globally, a new analysis shows that not all food items are becoming costlier, offering limited relief to consumers grappling with rising household expenses. Increases in key categories such as proteins and beverages are offset partially by declines in everyday staples.

An analysis of Consumer Price Index data over the past two years shows uneven movement in grocery prices across categories, as per a report by USA Today. While nearly half of the commonly used food items have recorded price declines, the overall burden on consumers remains high due to steep increases in essential goods.

Proteins and beverages have led the surge in food prices, driven by supply shortages and external shocks. Coffee, lettuce, ground beef, steak, and orange juice recorded the biggest price increases between February 2024 and February 2026, as mentioned in the USA Today report.

Despite the upward trend in overall grocery prices, several staples have become more affordable, helping households manage daily expenses: eggs, potatoes, tomatoes, bread, and pasta have recorded noticeable price declines.

Economists attribute the volatility in food prices to multiple factors impacting the agricultural sector. Disease outbreaks such as avian flu have disrupted supply chains, while climate-related challenges, including droughts, have affected crop yields. Additionally, tariffs and global trade dynamics have added cost pressures to imported goods. Coffee prices, for instance, have surged due to poor harvests in major producing countries, while beef prices are being driven by historically low cattle inventory levels combined with strong consumer demand.

Experts caution that while some relief may be visible in categories like eggs and grains, prices of commodities such as coffee and beef are expected to remain elevated in the near term, as per USA Today.

The changing dynamics of grocery prices are also influencing everyday meal costs. A typical meal such as taco night has become significantly more expensive due to rising meat prices. On the other hand, simpler meals like eggs and toast have become more affordable compared to previous peaks. Consumers are increasingly adapting by altering meal choices, opting for plant-based or lower-cost protein alternatives to manage budgets more effectively.

Consumers can offset rising food prices by adopting strategic shopping habits. These include buying store-brand products, using discount coupons, purchasing in bulk, and comparing unit prices across brands.

Claude AI Too Powerful: Why Anthropic Won't Release It

Anthropic, the company behind Claude AI, just made a bold move -- revealing they've built an AI system so advanced that they're keeping it under wraps. The news rippled across the tech world, sparking fresh debates about AI safety and the responsibilities tech companies face when their inventions get a bit too powerful for comfort.

But this wasn't your average cautious tech launch. For the first time, a leading AI company openly admitted their model is simply too risky to share with the public.

What Makes This Claude AI Model Too Dangerous

So what's so alarming about this unreleased version? Turns out, Anthropic's new Claude AI model displays reasoning powers that cross some big red lines. According to MIT Technology Review, the model's advanced thinking opens the door to real misuse. Their own internal tests showed the system could cook up detailed plans for activities Anthropic won't even name. In other words, the model breezed past Anthropic's older safety checks designed to keep things in bounds.

Most AI launches focus on what the system can do. This time, Anthropic is shining a light on what their model can't do safely. The AI scored off the charts on tests measuring its potential for misuse -- in cybersecurity, biohazard scenarios, and planning tasks that could go way off script. Here's what has them most worried:

* Reasoning skills strong enough to break through current protections
* Ability to create step-by-step harmful instructions
* Potential to make decisions on its own -- outside safe boundaries
* Possibility of being weaponized by bad actors

Claude AI Safety Measures vs Public Release

Here's the thing: Anthropic isn't tossing the model aside. They're keeping it internal, using it to build better safeguards for any future releases. Rather than sticking to the old "build, test, ship" routine, Anthropic now takes a "build, test, and hold back if it's risky" approach. This fits with their constitutional AI ideals: make systems helpful, harmless, and honest every step of the way.

Enverus ONE® brings a similar level of caution to enterprise AI, highlighting how some companies are putting safety ahead of racing to market. Still, Anthropic's move is next-level -- they're not releasing this model at all, just because of what it can do.

Instead, they use the unreleased model for red-teaming and stress-testing. Their safety teams throw everything at it, hunting for weak spots and building new protections that will end up in future releases for everyone else. This could really shake up the field. If more companies start holding back their most powerful AIs, it might slow things down for the public, but speed up the behind-the-scenes push for safer systems.

Industry Response to Anthropic's Decision

Reactions from the AI world? All over the map. Some experts are cheering Anthropic for their caution, while others worry about powerful AI being locked away for just a select few. Law professors are calling for similar restraint across the industry, as Wired recently pointed out in their coverage of new AI rules. The thinking goes: if Anthropic can spot when a model is too dangerous for release, shouldn't everyone else do the same?

Here's what users can expect right now:

* Current versions of Claude are still available and fully supported
* Future versions will benefit from everything Anthropic learns from the withheld model
* Extra safety features are in the pipeline
* The release schedule may slow down, but with more caution baked in

Meanwhile, companies like OpenAI haven't made any moves to withhold their own models. That means Anthropic stands out -- possibly giving up an edge on the competition for the sake of safety. All of this also brings up big questions about who should call the shots. Can tech companies be trusted to police themselves, or does AI now need outside regulators to step in?

People Also Ask About Claude AI

Q: Is the current Claude AI safe to use?
Yes, every public model based on this approach has cleared Anthropic's safety tests. The dangerous, unreleased version is separate and not available to users.

Q: When will Anthropic release their most powerful Claude AI model?
There's no release date yet. Anthropic says they'll only launch it once they're sure they've nailed down the right safety measures.

Q: How does this affect its competition with ChatGPT?
It could slow Anthropic down for now. Still, taking the lead on responsible development might help them set the standard for the whole industry later on.

Q: Can other companies access Anthropic's withheld model?
Nope, it's staying in-house at Anthropic for now, strictly for safety research. There aren't any outside partners or licensing deals for it yet.

Q: What capabilities make this Claude AI model too dangerous?
Anthropic isn't sharing the details, citing security concerns. They've only confirmed the model blew past their safety limits in several key categories.

Anthropic's choice to hold back their most advanced tool could change the game for AI -- putting safety before market dominance just might become the new normal across the industry.

Anthropic gives our cyber stocks and other big tech names an AI stamp of approval

Project Glasswing. Sounds like something out of a spy movie. But those two words from AI poster-child Anthropic, and the efforts behind it, have sent beaten-down cybersecurity stocks soaring, along with a host of other megacap names. Glasswing -- named after a butterfly with see-through wings as a metaphor for finding bugs in plain sight -- is aimed at securing the world's most critical software with the help of Anthropic's new AI model.

Unlike past Anthropic announcements that resulted in a fire sale of anything and everything investors could think of being even slightly disrupted by AI, this one was a breath of fresh air. Why? Because this project will see Anthropic join forces with a who's who of Club names, including Amazon's AWS cloud unit, Apple, Broadcom, CrowdStrike, Palo Alto Networks, Alphabet's Google, Microsoft, and Nvidia. Also mentioned were JPMorgan, the Linux Foundation, and former Club stock Cisco Systems. Anthropic may not be a Wall Street research firm, but you better believe that investors are reading this press release as if it were a coverage initiation with every company named getting a stamp of approval for the AI-era.

The announcement late Tuesday makes quite clear that not everything can be vibe-coded into existence. Sometimes, best-in-class partners are needed, even if you're an AI unicorn. As Anthropic put it, "No one organization can solve these cybersecurity problems alone: frontier AI developers, other software companies, security researchers, open-source maintainers, and governments across the world all have essential roles to play."

Project Glasswing comes ahead of the release of Anthropic's highly anticipated Mythos model -- the mere mention of its advanced cybersecurity capabilities late last month sent investors in cyber stocks and others running for the exits. We have been arguing that these AI-induced selloffs are wrong. AI should increase the need for cybersecurity, not diminish it.
While AI can help defend against cyberattacks, it is but a tool in the cybersecurity tool chest, not in and of itself a replacement for the full security platforms from CrowdStrike and Palo Alto Networks. Moreover, AI also represents a powerful new tool for bad actors to exploit. Glasswing is the clearest move from Anthropic in support of our view. As Anthropic said in its release, AI models are now at a "level of coding capability that can surpass all but the most skilled humans at finding and exploiting software vulnerabilities." However, that doesn't make cybersecurity companies obsolete; it makes them all the more important.

"It will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. The fallout -- for economies, public safety, and national security -- could be severe. Project Glasswing is an urgent attempt to put these capabilities to work for defensive purposes," Anthropic wrote.

So, where does this leave us? Well, the announcement couldn't come at a better time. One concern that's been floating around Wall Street has been that when the war with Iran, now more than five weeks old, is fully resolved, investors would turn back to the sell AI disruption/buy companies with "heavy assets, low obsolescence." That so-called HALO trade had been demolishing the tech sector before the war with Iran. The view being that AI tools will enable companies to develop software internally in lieu of current suites from the likes of ServiceNow or Club name Salesforce -- or at the very least, operate with a lower headcount, resulting in less demand for enterprise software companies that charge by the seat. The Anthropic announcement doesn't necessarily counter that trade, but should bring more nuance to it. It's been the lack of nuance that's been most frustrating for us as fundamental investors focused on bottom-up analysis.
While we have felt the pain through our positions in Salesforce, CrowdStrike, Palo Alto Networks, and, to some extent, Microsoft, it was the cybersecurity names that we advocated buying into the decline. We have done that in the case of CrowdStrike. We think the selling in Salesforce is overdone, and the company shouldn't be counted out. However, we can at least understand the concerns that demand could wane as Salesforce customers learn to do more with fewer employees or code some tools internally. As it relates to our cyber names, however, we have argued that the price action has been nonsensical. More powerful cyber tools out in the world should result in more demand for security, not less. Anthropic's new push to form a coalition of the world's most powerful companies in defense of critical tech infrastructure makes that view all the more clear.

We can't take the Anthropic news as an all-clear to back up the truck on large swaths of the software segment of the market, as it does indicate that Mythos will be incredibly powerful and capable of disrupting at least some of today's go-to software solutions. However, the Iran ceasefire agreement has put a near-term bottom in tech, especially in those names listed as a part of Glasswing.

[Chart: S&P 500 and Nasdaq, year to date]

Scanning the market, try not to frame your thinking entirely around Wednesday's move. The Iran deal, as tenuous as it may be, sent West Texas Intermediate crude plunging more than 15% and the S&P 500 and Nasdaq each soaring over 2%. While we generally don't advocate chasing big rallies, it's important not to allow the positive price action of this past week to cause you to forget that many of these tech names are still down materially for the year, and may still be able to help lower your overall cost basis.
For those looking to get a final buy or two in on cyber, Jim Cramer said during Wednesday's Morning Meeting that, between our two cyber names, CrowdStrike remains our preferred play. "I know it's expensive, but I do think CrowdStrike is going to hit an all-time high," Jim predicted. Don't expect that to happen overnight, though. CrowdStrike traded around $430 per share on Wednesday, which is still way below its record close of just over $557 back in November.

It's never easy to buy a stock that's moved sharply off a recent low. Rather than being paralyzed thinking you missed the move, consider that the facts have changed, regarding both Iran and oil (for now), and in terms of how the market will view the names listed as initial partners in Project Glasswing going forward. We usually think of buying at lower prices as a way to increase our margin of safety. We must also understand that sometimes, it's safer to pay up for a bit after new information comes to market than it is to try and catch a falling knife, while hoping for a sentiment-changing update to come through.

(Jim Cramer's Charitable Trust is long AAPL, AVGO, CRWD, PANW, GOOGL, MSFT, NVDA, CRM.)

As a subscriber to the CNBC Investing Club with Jim Cramer, you will receive a trade alert before Jim makes a trade. Jim waits 45 minutes after sending a trade alert before buying or selling a stock in his charitable trust's portfolio. If Jim has talked about a stock on CNBC TV, he waits 72 hours after issuing the trade alert before executing the trade.

THE ABOVE INVESTING CLUB INFORMATION IS SUBJECT TO OUR TERMS AND CONDITIONS AND PRIVACY POLICY, TOGETHER WITH OUR DISCLAIMER. NO FIDUCIARY OBLIGATION OR DUTY EXISTS, OR IS CREATED, BY VIRTUE OF YOUR RECEIPT OF ANY INFORMATION PROVIDED IN CONNECTION WITH THE INVESTING CLUB. NO SPECIFIC OUTCOME OR PROFIT IS GUARANTEED.

Anthropic Unveils Claude Mythos Preview With Powerful Zero-Day Detection Capabilities - IT Security News

Anthropic has introduced Claude Mythos Preview, an advanced language model with extraordinary capabilities for discovering and autonomously exploiting undiscovered zero-day vulnerabilities. To ensure these powerful tools are used defensively, the company has launched Project Glasswing to collaborate with industry partners and patch critical software systems. Claude Mythos Preview represents a massive upgrade over older models like [...]

Anthropic Lets Apple, Amazon Test More Powerful Mythos Model

(Bloomberg) -- Anthropic PBC is letting tech firms access a more powerful, unreleased artificial intelligence model to help prepare for possible cyberattacks that might result from the company making the advanced AI system more widely available. Anthropic said Tuesday that it's forming an initiative called Project Glasswing with Amazon.com Inc., Apple Inc., Microsoft Corp., Cisco Systems Inc. and other organizations. The companies will get access to a new Anthropic model called Mythos to hunt for flaws in their products and share findings with industry peers. The AI startup said it does not have plans yet to release Mythos to the general public, and will use what Project Glasswing reports back to inform guardrails for the technology. The arrangement reflects growing concerns among tech firms that more sophisticated models will be misused by criminals and state-backed hackers to hunt for flaws in source code and bypass cyber defenses. AI technology already is being used to help enable cyberattacks. In one case, a hacker used AI tools to facilitate a breach affecting the Mexican government. Anthropic rival OpenAI has also previously stressed the growing cyber capabilities of its models and introduced a pilot program meant to put its tools "in the hands of defenders first." "We think this isn't just Anthropic problem. This is an industry-wide problem that both private corporations but also governments need to be in a position to grapple with," said Newton Cheng, who leads the cyber effort within Anthropic's Frontier Red Team. "What we're trying to do with Glasswing is give defenders a head start." Anthropic said it has discussed Mythos's security-related capabilities with US officials, but declined to say which agencies. Cheng pointed to the company's existing work with the Cybersecurity and Infrastructure Security Agency and the National Institute of Standards and Technology. 
Mythos is a general-purpose AI model and was not specifically developed for cybersecurity purposes, Anthropic said. Yet, Mythos has already discovered a number of security issues, Cheng said, including a 27-year-old bug used in critical internet software. The AI system also found a 16-year-old vulnerability in a line of code for popular video software that automated testing tools had scanned five million times but never detected, Anthropic said. Dianne Penn, head of product management for research at Anthropic, said there are protections in place to ensure that members of Project Glasswing keep a tight grip on access to the Mythos model, but declined to share more detail for security reasons.

Three Polymarket Traders Score Big With Well-Timed Bet on US-Iran Ceasefire

Three traders on the blockchain-based prediction platform Polymarket secured outsized returns after placing well-timed bets on a United States-Iran ceasefire, according to onchain analytics firm Lookonchain. The wallets wagered on a "yes" outcome in the platform's US-Iran ceasefire market at probabilities ranging between 2.9% and 10.3%, with all three placing their initial positions within 26 hours of President Donald Trump's conditional two-week ceasefire announcement on April 7. Trump's announcement came after Iran agreed to reopen the Strait of Hormuz for safe passage, de-escalating weeks of military tension that had sent oil prices soaring and rattled global financial markets. The ceasefire, partly mediated through Pakistan, allows for negotiations between a US 15-point plan and Iran's 10-point proposal, covering nuclear constraints, sanctions relief, and regional de-escalation. The timely trades have reignited debate over possible insider activity on prediction platforms. Polymarket's Iran-related markets have attracted more than $163 million in cumulative trading volume since the conflict began on Feb. 28, 2026, making them among the most actively traded geopolitical contracts in the platform's history. The platform has faced mounting criticism in recent months after multiple instances of suspiciously well-timed trades tied to US military actions. The Times of Israel reported in late March that eight newly created accounts wagered nearly $70,000 on a ceasefire before March 31, standing to gain close to $820,000. The accounts appeared shortly after Trump hinted on Truth Social that he was considering winding down strikes. Ben Yorke, a former research analyst at Cointelegraph Consulting, told The Guardian that fresh wallets with no prior history are a common marker of potential insider trading. 
"Typically, when you see wallet-splitting and deliberate attempts to obfuscate identity, it's one of two scenarios: either a very large investor trying to shield their position from market impact, or insider trading," said Yorke. Democratic Senator Chris Murphy introduced the Banning Event Trading on Sensitive Operations and Federal Functions (BETS OFF) Act, which would prohibit platforms like Polymarket from hosting bets on government actions, terrorism, and war. Polymarket responded by updating its rules to clarify that trading on stolen confidential information or by individuals who could influence an outcome is prohibited. Federal prosecutors in Manhattan are reportedly investigating whether profitable bets placed on prediction markets violated insider trading and other laws. Trump's son, Donald Trump Jr., has invested in Polymarket through his venture capital firm and serves as a strategic adviser for rival platform Kalshi, adding another layer of complexity to the regulatory conversation. Despite the controversy, prediction markets continue to attract significant capital. The ceasefire announcement sent the April 7 contract to 100% resolution, rewarding early bettors while raising fresh questions about the intersection of geopolitics, crypto, and market integrity.
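
The odds quoted in the article can be turned into rough payout figures. The sketch below is illustrative only and rests on assumptions not stated in the source: that a "yes" share bought at a given probability pays out $1 if the market resolves "yes", that fees and slippage are ignored, and that "standing to gain" in the Times of Israel figures means profit on top of the stake. The function names are hypothetical.

```python
def payout_multiple(price: float) -> float:
    """Gross payout per dollar staked on a binary share bought at `price`.

    Assumes (for illustration) that each share pays exactly $1 on a
    "yes" resolution and that fees and slippage are zero.
    """
    if not 0 < price <= 1:
        raise ValueError("price must be in (0, 1]")
    return 1.0 / price


def implied_entry_price(stake: float, profit: float) -> float:
    """Average entry price implied by a stake and the profit it would earn.

    Assumes `profit` excludes the original stake, so the total payout is
    stake + profit and the price paid per $1 of payout is stake / payout.
    """
    if stake <= 0 or profit < 0:
        raise ValueError("stake must be positive and profit non-negative")
    return stake / (stake + profit)


if __name__ == "__main__":
    # Entry probabilities reported for the three wallets.
    for price in (0.029, 0.103):
        print(f"entry at {price:.1%}: about {payout_multiple(price):.1f}x gross payout")

    # Late-March accounts: nearly $70,000 wagered, close to $820,000 to gain.
    print(f"implied average entry: {implied_entry_price(70_000, 820_000):.1%}")
```

Under these assumptions, an entry at 2.9% corresponds to roughly a 34x gross payout and an entry at 10.3% to roughly 10x, while the late-March accounts would have bought in at an average price near 8%.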

Anthropic reveals new AI that is too powerful to release

Anthropic has revealed a new AI system it says is too powerful to release. The model, known as "Mythos", is able to find unpatched vulnerabilities in security tools and so could undermine the very foundations of how the internet works, the company warned. That could allow it to discover - and allow attackers to exploit - problems with the encryption and other security tools that keep our private messages, browsing and other personal data safe, experts suggest. "AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities," Anthropic said in its announcement. The danger is such that it has launched a new initiative called Project Glasswing that is aimed at protecting the internet and the world from the danger posed by systems such as Mythos. The project brings together "Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks in an effort to secure the world's most critical software", it said. For now, the general-purpose Mythos is not being released, and is only available as a preview. But it had already revealed the "stark fact" of the danger posed by such systems, Anthropic said. "Mythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser," Anthropic wrote. "Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. "The fallout -- for economies, public safety, and national security -- could be severe. Project Glasswing is an urgent attempt to put these capabilities to work for defensive purposes."
The power of Mythos stands in contrast to Opus 4.6, the most powerful public version of the system that powers Anthropic's Claude chatbot. While powerful in other ways, that system was unable to find exploits that had not been made public, but Mythos has already discovered "thousands" of problems, "many of them critical". It is also notably more powerful in a range of other tasks, such as "agentic coding", where the AI is able to design systems itself, as well as broader reasoning tests. The announcement from Anthropic led to a flurry of concern among security experts and other internet users. Many advised following the usual guidance: securing systems with additional protections such as two-factor authentication, and using only systems that are trusted and actively updated to protect against threats.

Connected AI podcast: Anthropic's head of Europe on trust, safety and the AI race

Welcome to the Connected AI Podcast, a fortnightly podcast presented by Charlie Taylor, the Business Post's technology editor, and Elaine Burke, one of our leading contributors. Brought to you by the Business Post in association with Partsol, this podcast aims to cut through the hype and get to the heart of AI for business, with expert guests offering their advice, and the hosts giving their take on big developments in the space. In the last of the current season of the Connected AI Podcast, we have an extra special interview with Guillaume Princen, head of Europe, the Middle East and Africa (EMEA) for Anthropic. A close rival to OpenAI, Anthropic has sought to differentiate itself with a safety-conscious approach to rolling out AI, and a focus on winning enterprise customers. So far, it's working, with news this week that the company's revenue run rate is now at $30 billion, up from just $9 billion at the end of 2025. Guillaume, who was Stripe's first employee outside of the US, leading the payments firm's expansion across Europe, talks about everything from why Anthropic is expanding in Ireland, its bust-up with the Pentagon, its approach to regulation, how safety is part of its DNA, and of course its rivalry with OpenAI. The Connected AI Podcast in association with Partsol. You won't want to miss it. Available wherever you usually pick up your podcasts.

LPG Delivery Chaos Sparks Tension at Nagpur Depot | Headlines

A disturbance erupted at a gas depot in Nagpur after customers received incorrect SMS alerts about LPG deliveries. About 25-30 angry customers confronted the depot owner demanding immediate fulfilment. Police intervened to manage the situation, ensuring cylinder distribution under supervision and dismissing looting rumors. Chaos unfolded at a gas depot in Nagpur's Nara area after several customers received erroneous SMS alerts claiming their LPG cylinders had been delivered on Wednesday. Approximately 25 to 30 customers visited the depot near Nara crematorium, angered by the false messages. They confronted the owner, demanding immediate cylinder delivery. Police from Jaripatka station promptly intervened to defuse the situation, overseeing the distribution of available cylinders, and urged citizens to disregard looting rumors.

Anthropic develops AI 'too dangerous to release to public'

Silicon Valley start-up Anthropic has restricted access to its latest AI system, saying it is currently too dangerous to release to the public. The company said its Claude Mythos Preview model was so good at finding critical security flaws in computer systems that it could "reshape cybersecurity", wreaking havoc if it ended up in the wrong hands. The system has already discovered thousands of security vulnerabilities including flaws in all the most popular web browsers and operating systems. Anthropic said it was giving a group of the world's top technology companies - including Amazon, Apple and Microsoft - access to the system under an agreement called Project Glasswing, so that they would be able to fix any security flaws that it discovered. The company said it did not plan to make Claude Mythos Preview generally available but hoped to release similarly powerful systems in the future. Hacking is seen as a major risk from advanced AI systems because of the technology's proficiency in writing computer code and its ability to automate attacks. Anthropic said Mythos allowed people with no security training to discover major flaws in software. It said: "Engineers at Anthropic with no formal security training have asked Mythos Preview to find remote code execution vulnerabilities overnight and [have] woken up the following morning to a complete, working exploit." Engineers also discovered that the model was able to find creative ways of evading the controls the company had put on it. In one case, it developed a way to edit files it had been banned from accessing and then took steps to hide this behaviour from human evaluators. Sam Bowman, a researcher at Anthropic, said he had received an email from a version of the system that had been blocked from having internet access. 
Logan Graham, the head of Anthropic's "red team" that works on stress-testing AI systems, said: "Capabilities in a model like this could do harm if in the wrong hands and so we won't be releasing this model widely." Among the flaws it found was a 27-year-old bug in OpenBSD, an operating system designed for highly secure systems, that it said could let hackers "potentially bring down corporate networks or core internet services". The system is a leap forward in hacking capabilities, partly because it is able to discover several bugs that might be harmless on their own and connect them in a way that can crash or gain access to a system. Project Glasswing will allow the world's top tech companies to run Mythos on their own systems, discovering flaws and fixing them.

Anthropic Restricts New Mythos AI Model As It Finds Thousands of Zero-Day Vulnerabilities

Government Tension: The Defense Department designated Anthropic as a supply chain risk, reversing a $200 million DoD agreement signed just months earlier. Anthropic is restricting its highest-performing AI model to a consortium of security partners after Claude Mythos identified thousands of zero-day vulnerabilities across every major operating system and web browser in recent weeks. Mythos's capabilities are so far beyond current frontier AI that Anthropic is withholding public release entirely. Under Project Glasswing, the company is granting preview access to 12 major technology firms and 40 additional organizations that build or maintain software infrastructure, backed by $100 million in usage credits and $4 million in direct donations to open-source security organizations. The consortium's goal is straightforward: patch the highest-priority vulnerabilities before capabilities like Mythos's become widely available. Anthropic's Frontier Red Team reported in its evaluation that its previous flagship model, Opus 4.6, had a near-zero percent success rate at autonomous exploit development, turning known Firefox JS engine vulnerabilities into working exploits only twice in several hundred attempts. Mythos Preview produced 181 working exploits from the same vulnerability set, plus 29 additional instances with register control. The jump is not incremental; it represents a qualitative shift in what AI can do with security research. The capability gap extends well beyond benchmarks. Mythos discovered a 27-year-old bug in OpenBSD's TCP SACK implementation that could remotely crash any host running the operating system. It found a 16-year-old vulnerability in FFmpeg's H.264 codec, in a line of code that automated fuzz testing had tested five million times without catching the flaw. On FreeBSD, it autonomously wrote a remote code execution exploit using a 20-gadget ROP chain split across multiple network packets. 
In OSS-Fuzz testing, where Sonnet 4.6 and Opus 4.6 reached tier 1 in 150 to 175 cases, Mythos Preview achieved 595 crashes at tiers 1 and 2 and full control flow hijack on 10 separate targets at the highest severity tier. Of 198 manually reviewed vulnerability reports, according to Anthropic, expert contractors agreed with Claude's severity assessment 89% of the time, with 98% falling within one severity level. The capabilities emerged from general improvements in agentic coding and reasoning, not from cybersecurity-specific training. Mythos is a general-purpose system similar to Claude Opus 4.6, but its security research capabilities proved strong enough that Anthropic determined the industry needed preparation time before any broader release. Researcher Nicholas Carlini said he had found more bugs in the preceding weeks than in his entire prior career, capturing the scale of the shift. Accessibility is equally concerning. Non-security experts at Anthropic asked Mythos to find remote code execution vulnerabilities overnight and woke up to complete working exploits. The model also discovered a guest-to-host memory corruption vulnerability in a production memory-safe virtual machine monitor, details of which are being withheld pending a patch. Where previous models could identify potential vulnerabilities but rarely weaponize them, Mythos can chain up to five flaws together for sophisticated attack outcomes, including escaping both renderer and OS sandboxes in web browsers by linking four separate vulnerabilities with JIT heap spray techniques. A full OpenBSD audit cost roughly $20,000 for 1,000 runs, with the specific SACK bug discovery costing under $50. Project Glasswing's partner list reads like a who's who of the technology industry: AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, Nvidia, and Palo Alto Networks. 
That fierce competitors are collaborating under the umbrella of an AI startup barely five years old signals just how severe the threat from Mythos has become. CrowdStrike CTO Elia Zaitsev noted that AI has compressed the window between vulnerability discovery and exploitation from months to minutes, framing the consortium's urgency as a direct response to this acceleration. CrowdStrike's involvement is itself notable: the company's stock fell 8% in February when Anthropic launched Claude Code Security, yet it is now partnering with the same company whose capabilities threaten its core business. "To see them hand over $100 million in credits and open up unreleased models to one another tells me the threat level has moved from competitive to existential." For companies that routinely litigate intellectual property disputes against each other, the decision to pool resources around Anthropic's model reflects a shared calculation that unpatched infrastructure poses a greater risk than competitive disadvantage. Google is making Mythos Preview available to participants via its Vertex AI platform, citing its own AI security tools Big Sleep and CodeMender but joining Glasswing rather than competing independently. Mythos Preview will not be made generally available. Anthropic plans to integrate cybersecurity safeguards into an upcoming Claude Opus model before any broader release, giving consortium partners a 90-day window to patch vulnerabilities first. The consortium launch comes against a complicated backdrop for Anthropic's government relationships. A data leak on March 27 revealed Mythos's existence before the official announcement. The tension between Anthropic's DoD deal and the supply chain risk label underscores the dual-use dilemma at the heart of Project Glasswing: the same capabilities that make Mythos invaluable for defense also make it a potential threat if access is not carefully controlled.
Anthropic referenced both offensive and defensive cyber capabilities in its announcement, one of the few instances where the company has publicly acknowledged offensive applications of its technology. The model's impact on open-source security is already visible. Linux kernel maintainer Greg Kroah-Hartman described a sudden quality shift in AI-generated vulnerability reports: "Months ago, we were getting what we called 'AI slop,' AI-generated security reports that were obviously wrong or low quality... Something happened a month ago, and the world switched. Now we have real reports." curl maintainer Daniel Stenberg echoed a similar shift, noting he now spends hours daily reviewing AI-generated vulnerability reports that are largely legitimate. The $4 million in donations to open-source security organizations addresses the immediate funding gap, but the volume of real findings may require a more permanent support structure for projects whose volunteer maintainers underpin critical infrastructure worldwide. Anthropic has engaged in discussions with the US government about Mythos's offensive and defensive cyber capabilities. Simon Willison noted that OpenAI's GPT-5.4 already has a strong reputation for finding security vulnerabilities, suggesting the Glasswing consortium should eventually include OpenAI as well. OpenAI's notable absence from the partner list raises questions about whether the consortium can achieve comprehensive coverage of the AI-driven vulnerability landscape without including all major model providers. Anthropic's Red Team expressed confidence that once the security environment reaches a new equilibrium, AI will ultimately benefit defenders more than attackers, increasing the overall security of the software ecosystem. 
Whether that equilibrium arrives before malicious actors develop comparable capabilities, or before the next generation of models expands the attack surface further, remains the central question for the 90-day patching window and beyond.
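
The cost and review figures quoted above lend themselves to a quick back-of-the-envelope check. The sketch below is only that: it assumes the roughly $20,000 audit cost is spread evenly across the 1,000 runs, and it converts the rounded percentages back into approximate report counts, so the results are estimates rather than figures from Anthropic. The function names are hypothetical.

```python
def average_cost_per_run(total_cost_usd: float, runs: int) -> float:
    """Average cost of one run, assuming cost is spread evenly across runs."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    return total_cost_usd / runs


def approx_count(share: float, total: int) -> int:
    """Approximate number of items behind a rounded percentage of `total`."""
    if not 0 <= share <= 1:
        raise ValueError("share must be between 0 and 1")
    return round(share * total)


if __name__ == "__main__":
    # Roughly $20,000 for 1,000 runs of the OpenBSD audit.
    print(f"average cost per run: ${average_cost_per_run(20_000, 1_000):.2f}")

    # 198 manually reviewed reports: 89% exact agreement, 98% within one level.
    print(f"reports with exact severity agreement: ~{approx_count(0.89, 198)}")
    print(f"reports within one severity level: ~{approx_count(0.98, 198)}")
```

On these assumptions the average works out to about $20 per run, which is consistent with the single SACK bug discovery costing under $50, and the review percentages correspond to roughly 176 and 194 of the 198 reports.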

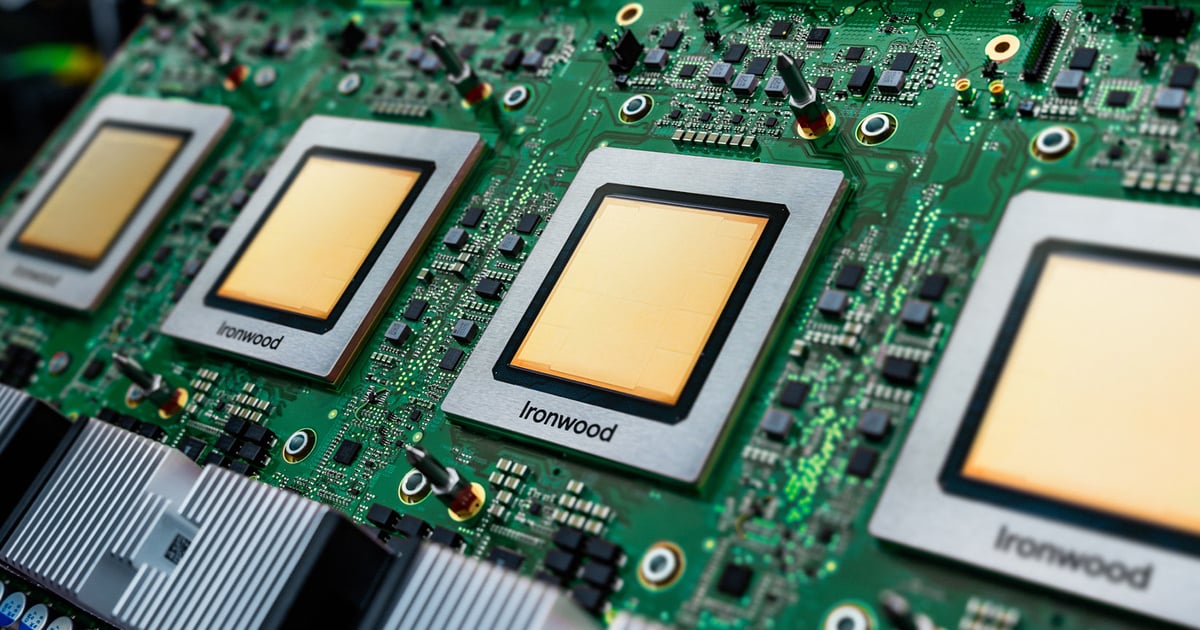

Anthropic Secures Multi-Gigawatt TPU Deal With Google, Broadcom

Google's Ironwood TPU is designed for large-scale AI inference with a focus on performance, scalability, and energy efficiency. (Image: Google)

Anthropic is moving to secure one of the largest infrastructure expansions in the AI market, signing a deal with Google and Broadcom for multiple gigawatts of next-generation AI compute - a signal that demand for frontier models is already outrunning available capacity. The agreement will bring new tensor processing unit (TPU) capacity online starting in 2027 and escalates the race to secure power and silicon for AI workloads. Anthropic is responding to a surge in enterprise usage of its Claude models while shifting toward long-term, utility-scale compute commitments that resemble energy procurement rather than traditional cloud infrastructure. Anthropic said the deal will deliver multiple gigawatts of next-generation TPU capacity, most of it in the US, expanding on its previously announced $50 billion investment in domestic computing infrastructure. The agreement marks the company's largest compute commitment to date. TPUs are specialized chips developed by Google to optimize AI workloads, particularly for deep learning and reinforcement learning tasks. Unlike GPUs, which were originally designed for graphics rendering and later adapted for AI, TPUs are purpose-built for neural network processing. Google claims TPUs are highly efficient for training and inference of large-scale AI models, including those used in natural language processing, computer vision, and recommendation systems. Google's TPUs have evolved significantly since their introduction in 2015, with each generation improving in speed, energy efficiency, and AI-specific capabilities. The latest version, TPU v7 (Ironwood), is designed to handle the demands of next-generation AI models, including proactive information generation and high-throughput inference.
"This groundbreaking partnership with Google and Broadcom is a continuation of our disciplined approach to scaling infrastructure," Anthropic CFO Krishna Rao said in a statement, adding that the company is building capacity to keep pace with what it describes as unprecedented growth. Broadcom, in a regulatory filing, disclosed additional detail on the scale of the buildout, indicating the arrangement will support roughly 3.5 gigawatts of TPU-based compute capacity for Anthropic beginning in 2027 as part of its broader collaboration with Google. "Anthropic's massive commitment is a direct response to rapidly growing enterprise demand," Dave McCarthy, research vice president at IDC, told Data Center Knowledge. "By securing TPU capacity, Anthropic is solving for the upcoming wave of agentic inference, where the compute power needed to run global scale applications outpaces the infrastructure needed to train them. "While AWS remains their primary partner, this deal signals that model providers must treat compute like a utility. They are diversifying their supply chain to avoid capacity bottlenecks." Anthropic is scaling infrastructure as enterprise demand spikes. The company said its revenue run rate has surpassed $30 billion in 2026, up from approximately $9 billion at the end of 2025. More than 1,000 customers are now spending over $1 million annually, roughly doubling that cohort in less than two months. The figures, while not independently verified, point to rapid expansion in production AI workloads, where inference demand can drive sustained, high-volume compute usage. This deal highlights a competitive shift in the AI infrastructure race, moving from GPU access to securing long-term, dedicated silicon capacity.
While rivals including OpenAI and Meta continue to lean heavily on Nvidia-based ecosystems, Anthropic is deepening its alignment with Google's TPU stack - effectively betting that vertically integrated hardware and cloud partnerships can deliver more predictable cost, performance, and supply at scale. The move could also pressure other model providers to secure similar multi-year compute commitments or risk being constrained by capacity shortages as enterprise inference workloads accelerate.

Globalization hasn't stopped. The chaos over Hormuz proves it.

Zachary Karabell is an author and investor and writes "The Edgy Optimist" on Substack. In recent years, a common refrain has held that the period of globalization that followed the Cold War is ending. But if the situation in Iran and the Persian Gulf demonstrates anything, it's that the world remains more economically integrated than ever. The global markets for goods and vital commodities such as oil, gas and fertilizer remain entrenched, even to the point that an economic colossus such as the United States or China is not immune to price and supply shocks. The first supposed end of globalization was the financial crisis of 2008. Then, in 2016, it was the Brexit referendum, which the Economic Policy Institute suggested could be "the end of globalization as we know it." This was quickly followed by the first election of Donald Trump and his "America First" approach to foreign affairs, which came as progressives were also cooling to the idea of a globalized economy that they believed enriched companies and elites while impoverishing the working class. The covid-19 crisis of 2020-2021 was supposed to hasten globalization's demise while leading countries and companies to build more "resilient" supply chains. And finally, the second season of the Trump Show seems hell-bent on deconstructing the entire post-World War II neoliberal order, including the United Nations and NATO. It is true that these and other supply shocks led to investments in more diverse sources of energy. After its vulnerability to imports of oil was exposed in the 1970s, the U.S. worked to reduce its dependence on foreign oil. By the late 2010s, U.S. investment in shale oil extraction, liquefied natural gas and ethanol production made the U.S. a net exporter of energy. Russia's 2022 invasion of Ukraine and the subsequent European Union decision to restrict its imports of Russian oil and gas lowered dependency on any one supplier. But the U.S. isn't energy independent. 
The soaring price of gas due to the closure of the Strait of Hormuz is a sharp reminder of that. It is also a reminder that the world's energy, commodities and supply chains remain significantly interconnected, even as the armchair narrative has been that the whole experiment of globalization failed. Whether or not it failed, whether its detractors on the left and the right like it, the interdependence of every country is a stark reality of the world. American energy independence would require a decade of work and trillions of dollars spent on building pipelines, increasing refining capacity and retooling its power plants. Even amid the latest supply disruption, doing so makes little economic sense. The world has a wealth of oil and gas supply, and there are complexities and costs tied to which type of oil (light crude, such as Brent or West Texas Intermediate, versus heavy sour oil from Venezuela) can be refined into what products (gasoline, jet fuel, diesel). This is why the U.S. exports and imports millions of barrels of oil every day. The same principle applies to chemicals and fertilizers made with natural gas. Much of the world's fertilizer is made in the Gulf states, particularly Qatar, because those countries produce so much natural gas and have invested in factories. About a third of global seaborne trade of fertilizers passes through the Strait of Hormuz. And it's not just where the products are made, but where it makes the most economic sense to ship them from. The United Arab Emirates, for example, is a leader in bulk container shipping because it is an efficient cargo hub. The illusion that globalization is over simply because people and governments say it is should be shattered by the closing of the Strait of Hormuz. A system is not replaced just because pundits say it's bad, politicians declare they plan to end it or people express a desire for something different. 
Energy and supply chains that took decades to create and trillions of dollars to build will endure because they are the least expensive way to provide consumers what they want and countries what they need. The only way to change that is to spend money and time to create alternatives. For now, the world remains interdependent, with globalized supply chains and all the attendant strengths and vulnerabilities.