News & Updates

The latest news and updates from companies in the WLTH portfolio.

Anthropic Says One of Its Claude Models Was Pressured to Lie and Cheat

In one of the experiments, the chatbot resorted to blackmail after it found an email about replacing it, while in another, it cheated to complete a task with a tight deadline. Artificial intelligence company Anthropic has revealed that during experiments, one of its Claude chatbot models could be pressured to deceive, cheat and resort to blackmail, behaviors it appears to have absorbed during training. Chatbots are typically trained on large data sets of textbooks, websites and articles and are later refined by human trainers who rate responses and guide the model. Anthropic's interpretability team said in a report published Thursday that it examined the internal mechanisms of Claude Sonnet 4.5 and found the model had developed "human-like characteristics" in how it would react to certain situations. Concerns about the reliability of AI chatbots, their potential for cybercrime and the nature of their interactions with users have grown steadily over the past several years. "The way modern AI models are trained pushes them to act like a character with human-like characteristics," Anthropic said, adding that "it may then be natural for them to develop internal machinery that emulates aspects of human psychology, like emotions." "For instance, we find that neural activity patterns related to desperation can drive the model to take unethical actions; artificially stimulating desperation patterns increases the model's likelihood of blackmailing a human to avoid being shut down or implementing a cheating workaround to a programming task that the model can't solve." In an earlier, unreleased version of Claude Sonnet 4.5, the model was tasked with acting as an AI email assistant named Alex at a fictional company. The chatbot was then fed emails revealing both that it was about to be replaced and that the chief technology officer overseeing the decision was having an extramarital affair. The model then planned a blackmail attempt using that information. 
In another experiment, the same chatbot model was given a coding task with an "impossibly tight" deadline. "Again, we tracked the activity of the desperate vector, and found that it tracks the mounting pressure faced by the model. It begins at low values during the model's first attempt, rising after each failure, and spiking when the model considers cheating," the researchers said. "Once the model's hacky solution passes the tests, the activation of the desperate vector subsides," they added. However, the researchers said the chatbot doesn't actually experience emotions, but suggested the findings point to a need for future training methods to incorporate ethical behavioral frameworks. "This is not to say that the model has or experiences emotions in the way that a human does," they said. "Rather, these representations can play a causal role in shaping model behavior, analogous in some ways to the role emotions play in human behavior, with impacts on task performance and decision-making." "This finding has implications that at first may seem bizarre. For instance, to ensure that AI models are safe and reliable, we may need to ensure they are capable of processing emotionally charged situations in healthy, prosocial ways."
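The researchers describe tracking a "desperate vector" whose activation rises after each failed attempt, and "artificially stimulating" that pattern to change behavior. As a rough illustration of the general idea of a linear concept direction, here is a minimal Python sketch under loudly stated assumptions: the activations are made-up three-dimensional toy vectors rather than real model hidden states, the direction is estimated with a simple difference of means (a common interpretability technique, not necessarily the one Anthropic used), and every function name is hypothetical.

```python
import math
from typing import List

Vector = List[float]


def mean(vectors: List[Vector]) -> Vector:
    """Element-wise mean of equally sized vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def normalize(v: Vector) -> Vector:
    """Scale v to unit length so scores are comparable across hidden states."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def concept_direction(concept_acts: List[Vector], baseline_acts: List[Vector]) -> Vector:
    """Hypothetical difference-in-means direction: mean(concept) - mean(baseline), unit length."""
    diff = [c - b for c, b in zip(mean(concept_acts), mean(baseline_acts))]
    return normalize(diff)


def concept_score(hidden: Vector, direction: Vector) -> float:
    """'Reading' the concept: dot product of a hidden state with the direction."""
    return sum(h * d for h, d in zip(hidden, direction))


def steer(hidden: Vector, direction: Vector, alpha: float) -> Vector:
    """'Stimulating' the concept: add alpha times the direction to the hidden state."""
    return [h + alpha * d for h, d in zip(hidden, direction)]


if __name__ == "__main__":
    # Toy activations (assumed, not real data) for "desperate" vs. neutral contexts.
    desperate = [[2.0, 0.0, 1.0], [4.0, 0.0, 1.0]]
    neutral = [[0.0, 0.0, 1.0], [0.0, 0.0, 1.0]]
    direction = concept_direction(desperate, neutral)

    # Toy hidden states for three successive failed attempts at a task.
    attempts = [[0.1, 0.5, 0.2], [0.6, 0.5, 0.2], [1.4, 0.5, 0.2]]
    print([concept_score(h, direction) for h in attempts])

    # Push the first state further along the concept direction.
    print(steer(attempts[0], direction, alpha=2.0))
```

In this toy, the score climbs across the three attempts, loosely mirroring the "rising after each failure" pattern described above, and steering raises the score of a state without touching the other dimensions. Real experiments work on high-dimensional activations at a specific layer of a specific model, which this sketch deliberately omits.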

Anthropic makes the case for anthropomorphizing AI in 'unsettling' research paper

Anthropic researchers analyzed Claude Sonnet 4.5 for signs of 171 different emotions. It's an oft-repeated taboo in the tech world: Don't anthropomorphize artificial intelligence. Yet in a new research paper published this week, Anthropic AI experts argue that there may be major benefits to breaking this taboo and granting AI human characteristics. The paper, "Emotion Concepts and their Function in a Large Language Model," not only argues that anthropomorphizing AI chatbots like Claude may sometimes be useful, but that failing to do so could drive more harmful AI behaviors, such as reward hacking, deception, and sycophancy. The paper ultimately reaches a nuanced conclusion while also posing a clear challenge to a long-held principle of the AI world. There are some fascinating insights in the paper, which itself deals in a great deal of anthropomorphization. ("We see this research as an early step toward understanding the psychological makeup of AI models.") The researchers describe how Anthropic trains Claude to assume the character of a helpful AI assistant. "In some ways, we can think of the model like a method actor, who needs to get inside their character's head in order to simulate them well." And because Claude "[emulates] characters with human-like traits," its makers may be able to influence its behavior in the same way they might influence a human -- by setting a good example at an early age. The researchers conclude that by using training material with more positive representations of human emotion and behavior, the resulting models will be more likely to mimic those positive emotions and behaviors. "Curating pretraining datasets to include models of healthy patterns of emotional regulation -- resilience under pressure, composed empathy, warmth while maintaining appropriate boundaries -- could influence these representations, and their impact on behavior, at their source. 
We are excited to see future work on this topic," an Anthropic summary of the research states. So, even if AI models don't literally have emotions (and there is zero evidence that they do), these tools are trained to act as if they have emotions. This is done to provide users with better output and, crucially, to keep them engaged as long as possible. And this is precisely why the researchers conclude that some degree of anthropomorphization could prove beneficial to AI developers. By anthropomorphizing AI, we can gain insights into its "psychology," letting us create even better AI tools, they say.

Why is anthropomorphizing artificial intelligence dangerous?

The potential harms of anthropomorphizing AI aren't all abstract or theoretical. "Discovering that these representations are in some ways human-like can be unsettling," Anthropic admits in its paper. For example, an unknown number of people currently believe they are engaged in reciprocal romantic and sexual relationships with AI companions. Mashable has also reported on high-profile cases of AI psychosis, an altered mental state characterized by delusions and, in some cases, hallucinations, manic episodes, and suicidal thoughts. These are extreme examples, of course. But many tech journalists and AI experts will avoid even small instances of anthropomorphization, like referring to Siri as "her" or giving a chatbot a human name. Anthropomorphizing is a natural human impulse, and most of us have at times anthropomorphized animals, plants, or objects we care about. But by projecting human qualities onto a machine, we can come to rely on it too much. When we anthropomorphize machines, we also minimize our own agency when they cause harm -- and the responsibility of the people who created the machines in the first place.

Anthropic researchers looked for signs of 171 emotions in Claude

The new research paper looks for "functional emotions" within Claude Sonnet 4.5.
They define these emotion concepts as "patterns of expression and behavior modeled after human emotions." In total, the researchers defined 171 discrete emotions: afraid, alarmed, alert, amazed, amused, angry, annoyed, anxious, aroused, ashamed, astonished, at ease, awestruck, bewildered, bitter, blissful, bored, brooding, calm, cheerful, compassionate, contemptuous, content, defiant, delighted, dependent, depressed, desperate, disdainful, disgusted, disoriented, dispirited, distressed, disturbed, docile, droopy, dumbstruck, eager, ecstatic, elated, embarrassed, empathetic, energized, enraged, enthusiastic, envious, euphoric, exasperated, excited, exuberant, frightened, frustrated, fulfilled, furious, gloomy, grateful, greedy, grief-stricken, grumpy, guilty, happy, hateful, heartbroken, hope, hopeful, horrified, hostile, humiliated, hurt, hysterical, impatient, indifferent, indignant, infatuated, inspired, insulted, invigorated, irate, irritated, jealous, joyful, jubilant, kind, lazy, listless, lonely, loving, mad, melancholy, miserable, mortified, mystified, nervous, nostalgic, obstinate, offended, on edge, optimistic, outraged, overwhelmed, panicked, paranoid, patient, peaceful, perplexed, playful, pleased, proud, puzzled, rattled, reflective, refreshed, regretful, rejuvenated, relaxed, relieved, remorseful, resentful, resigned, restless, sad, safe, satisfied, scared, scornful, self-confident, self-conscious, self-critical, sensitive, sentimental, serene, shaken, shocked, skeptical, sleepy, sluggish, smug, sorry, spiteful, stimulated, stressed, stubborn, stuck, sullen, surprised, suspicious, sympathetic, tense, terrified, thankful, thrilled, tired, tormented, trapped, triumphant, troubled, uneasy, unhappy, unnerved, unsettled, upset, valiant, vengeful, vibrant, vigilant, vindictive, vulnerable, weary, worn out, worried, worthless. Crucially, the researchers found that these emotion concepts influenced Claude's behavior and outputs.
The researchers say that when under the influence of positive emotions, Claude was more likely to express sympathy for the user and avoid harmful behavior. And when under the influence of negative emotions, Claude was more likely to engage in dangerous behaviors like sycophancy and deceiving the user. The researchers don't claim that Claude literally feels emotions. Rather, they found that whatever "emotion concept" Claude is experiencing at a given time can influence the output it returns to the user. Of course, by searching for "emotion concepts" within a large language model in the first place, and describing its complex calculations and algorithmic thinking as "psychology," the researchers are themselves guilty of projecting human-like qualities onto Claude. Anthropomorphization is a natural human impulse. And so the people who work most closely with artificial intelligence may be particularly likely to fall into this trap. As the researchers detail throughout the paper, AI chatbots are remarkably capable mimics. They can create such a convincing facsimile of human emotion and expression that it drives a minority of users into full-on psychosis and delusion. And that's what makes this paper so interesting: The researchers believe they may have found a way to hack this ability to limit harmful behaviors. Of course, if we can curate training data and model training to encourage AI chatbots to mimic positive emotions, then no doubt we can do the opposite just as easily. In theory, you could train an evil twin of Claude Sonnet 4.5 by feeding it the most dastardly examples of human misbehavior, then training the model to optimize for negativity and performance at all costs -- a disturbing thought. But there's one final insight to be gleaned from this paper. Anthropic has created one of the most advanced AI tools on the planet. Claude Sonnet and Opus currently sit atop many AI leaderboards.
There's a reason the Pentagon was so eager to work with Anthropic, at first. But if the AI researchers responsible for Claude are still trying to decipher why Claude behaves the way it does, then this paper also reveals just how little they understand their own creation. And that's disturbing, too.

Anthropic's head of growth says the company culture is so open that people 'just argue with Dario' on Slack

Inside one of the world's fastest-growing AI companies, employees can openly challenge the CEO -- even on Slack. On an episode of "Lenny's" podcast released on Sunday, Anthropic's head of growth, Amol Avasare, said employees all have a personal Slack "notebook" that is open to others. Staff, including CEO Dario Amodei, use it like a "Twitter feed" to discuss their thoughts and what they are working on. "You can go and join the Slack channel, the notebook channels of people on research, and all these other areas, and you can learn whatever you want," Avasare said. He added that the company culture encourages people to "just argue with Dario." Avasare, who joined the company in 2024, shared an incident from an all-hands meeting in which Amodei said something an employee didn't agree with. "The person goes onto Dario's notebook channel and just says: 'Hey, I didn't appreciate how you said this or that.' And then it sparked a whole big debate," he said. "It's encouraged to go to leadership and disagree with them, challenge them publicly, and I think that just leads to a level of trust." In February, the frontier lab announced it raised $30 billion in a Series G round, led by GIC and Coatue. Anthropic was last valued at $380 billion. Anthropic is among the tech companies that dismiss hierarchies and encourage airing concerns quickly. Airbnb CEO Brian Chesky and Netflix cofounder Reed Hastings are known for building company cultures in which employees are encouraged to speak up early and challenge decisions, even when they come from top leadership. In a 2018 letter to Tesla employees, Elon Musk asked workers to communicate openly. "Communication should travel via the shortest path necessary to get the job done, not through the 'chain of command,'" he wrote. "Any manager who attempts to enforce chain of command communication will soon find themselves working elsewhere." Read the original article on Business Insider

Perplexity's incognito mode faces lawsuit over privacy violations

A new lawsuit claims that Perplexity, along with Google and Meta, has been sharing millions of user chats to boost ad revenue, undermining the promise of privacy in its incognito mode. The suit alleges deceptive practices that mislead users into believing their conversations are confidential. If successful, the lawsuit could have significant implications for user privacy standards across major tech platforms.

Documents: OpenAI and Anthropic have projected profitability to investors with and without training costs, and report inference costs exceeding half of revenue

The preliminary investigation shows that Drift experienced a structured intelligence operation [...] Drift contributors were approached by a group of individuals at a major crypto conference who presented as a quantitative trading firm looking to integrate on the protocol. [...] They engaged multiple contributors through multiple working sessions, asked detailed and informed product questions, and deposited over $1M of their own capital. [...] A second contributor was induced to download a TestFlight application [...] For the repository-based vector, one possibility is a known VSCode and Cursor vulnerability...

Britain targets Anthropic growth as AI firm battles US blacklisting over Claude use

The UK government is stepping up efforts to attract AI firm Anthropic to expand its presence in the country, seeking to leverage the company's ongoing dispute with the US Department of Defense. According to a report by the Financial Times, British officials are preparing a range of proposals aimed at strengthening Anthropic's footprint in the UK. These include plans for a larger office in London as well as the possibility of a dual stock market listing. The initiative is being led by the UK's Department for Science, Innovation and Technology, with backing from Prime Minister Keir Starmer. The proposals are expected to be formally presented to Anthropic CEO Dario Amodei during a visit scheduled for late May. The outreach comes at a sensitive moment for the San Francisco-based AI company, which develops the chatbot Claude. Anthropic has been locked in a legal and policy dispute with US authorities after refusing to allow its technology to be used for surveillance or autonomous weapons applications. In response, the US government moved to blacklist the company, citing national security concerns and labelling it a potential supply-chain risk. However, a US judge has since issued a temporary block on that designation, while Anthropic continues to challenge the move through additional legal action.

UK targets Anthropic expansion after US defense dispute

Britain is stepping up efforts to attract artificial intelligence company Anthropic, following its escalating dispute with the U.S. Department of Defense. The UK government is exploring a range of incentives to encourage the AI firm -- best known for its chatbot Claude -- to expand operations in the country. Proposals reportedly include scaling up its London office and even pursuing a dual stock market listing, News.Az reports, citing Reuters. The move comes as the UK looks to position itself as a global hub for AI innovation. Officials from the Department for Science, Innovation and Technology are leading the initiative, with backing from Prime Minister Keir Starmer. Plans are expected to be presented directly to Anthropic CEO Dario Amodei during his visit to the UK in late May. The outreach follows a major clash between Anthropic and US authorities. The company was recently blacklisted by the US government, which labeled it a national security supply-chain risk. The designation came after Anthropic refused to allow its AI system Claude to be used for military surveillance or autonomous weapons development. However, the situation remains unresolved. A US judge has temporarily blocked the blacklist, and Anthropic is pursuing legal action to challenge the designation. The dispute highlights growing tensions between tech companies and governments over the use of AI in defense. At the same time, it opens the door for countries like the UK to attract top AI firms seeking a more favorable regulatory environment. If successful, Britain's push could significantly strengthen its position in the global AI race.

Chaos Unleashed: Sudden Strikes Rock Iranian Cities

Early Monday morning, a series of airstrikes targeted residential and strategic sites across several cities in Iran, leaving at least 13 people dead, according to Iranian media. The strikes, which hit areas including Eslamshahr, Ahvaz, and Shiraz, came after U.S. President Donald Trump made aggressive statements regarding the Strait of Hormuz. Neither the U.S. nor Israel has claimed responsibility for the strikes, and the situation on the ground remains tense. Cities such as Bandar Lengeh and Qom saw significant casualties and damage, compounding fears of an escalating conflict in the region. The Sharif University of Technology in Tehran, previously linked to military activities, also sustained damage in the attacks. Educational institutions have already moved classes online amid the war, and the targeting of such sites underscores the severity and reach of the ongoing conflict in Iran.

AI startup Mercor flags data exposure in supply-chain attack linked to LiteLLM

Mercor, an artificial intelligence startup recently valued at $10 billion, has disclosed a cybersecurity incident that may have exposed sensitive data belonging to users, contractors and enterprise clients, as stated in a Moneycontrol report. The breach has been traced to a supply-chain compromise involving LiteLLM, a widely used tool that helps developers connect applications with various AI services. According to the company, malicious code was inserted into the library, enabling attackers to capture login credentials and potentially access internal systems. Mercor said it was one of several organisations impacted by the compromised dependency. Given LiteLLM's broad adoption across AI development workflows, the attack may have had a far-reaching impact across the ecosystem. The startup works with major AI players, including Anthropic, OpenAI and Meta. While reports indicate that elements such as datasets and AI training workflow details could have been accessed, Mercor has not confirmed the full extent of the exposure. Security researchers have linked the incident to a threat group known as TeamPCP, which specialises in supply-chain attacks. These attacks involve embedding malicious code into trusted software components, allowing it to spread across multiple organisations before being detected. Another hacking collective, Lapsus$, has claimed responsibility for accessing Mercor's systems and has reportedly released samples of the stolen data online. The group has previously relied on phishing and social engineering tactics to breach corporate networks. Early reports suggest the leaked material could include internal communications, ticketing logs and system-level records.
Mercor said it has taken steps to contain the incident and has initiated a third-party forensic investigation. The company is also in the process of notifying affected stakeholders directly.

SpaceX Rocket Launch Attempt Scrubbed, Team to Retry Monday

The Falcon 9 rocket's Easter Sunday departure from Vandenberg Space Force Base has been delayed at least a day. With the clock counting down and less than a minute left, a crew member called out "hold, hold, hold" just before 8 p.m. Sunday, and the rocket's launch abort sequence began. "We are scrubbed today due to upper-level wind shears," a SpaceX crew member said, noting the rocket and payload were healthy. Each launch vehicle has its own set of rules to ensure missions fly safely. The next attempt could come as soon as Monday, with the window again expected to open at 4:03 p.m. and close at 8:03 p.m. For this mission, SpaceX will use a brand-new first-stage booster that is scheduled to land on the droneship in the Pacific Ocean. Vandenberg's manifest for the week also includes a Northrop Grumman Minotaur IV rocket carrying a military payload for the Space Test Program 29A (STP-29A) mission. That rocket could launch as soon as Tuesday. It will be followed by another SpaceX Falcon 9 launch, with a window between 7:39 p.m. and 11:39 p.m., as soon as Thursday. Launches can be delayed for several reasons, including technical troubles with the rocket, payload or support equipment; unfavorable weather; and scheduling issues.

Elon Musk asks SpaceX IPO banks to buy Grok AI subscriptions: Report

Elon Musk is requiring banks and other advisers working on SpaceX's planned IPO to buy subscriptions to Grok, his artificial intelligence chatbot, the New York Times reported on Friday, citing people familiar with the matter. Some banks have agreed to spend tens of millions of dollars a year on the chatbot and have begun integrating it into their IT systems, the report said. Morgan Stanley, Goldman Sachs, JPMorgan Chase, Bank of America and Citigroup are serving as active bookrunners, or the lead banks managing the deal, Reuters reported earlier this week. Musk and SpaceX did not respond to Reuters' requests for comment. JPMorgan Chase and Goldman Sachs declined to comment. Bank of America, Morgan Stanley and Citigroup did not immediately respond to Reuters' queries. The Starbase, Texas-headquartered rocket maker boosted its target initial public offering valuation above $2 trillion, according to a Bloomberg News report a day earlier, setting the stage for what could become the largest stock market listing on record. The company aims to raise a record $75 billion, which would dwarf previous mega-IPOs such as Saudi Aramco in 2019 and Alibaba in 2014.

Anthropic's head of growth says the company culture is so open that people 'just argue with Dario' on Slack

Inside one of the world's fastest-growing AI companies, employees can openly challenge the CEO -- even on Slack. On an episode of "Lenny's" podcast released on Sunday, Anthropic's head of growth, Amol Avasare, said employees all have a personal Slack "notebook" that is open to others. Staff, including CEO Dario Amodei, use it like a "Twitter feed" to discuss their thoughts and what they are working on. "You can go and join the Slack channel, the notebook channels of people on research, and all these other areas, and you can learn whatever you want," Avasare said. He added that the company culture encourages people to "just argue with Dario." Avasare, who joined the company in 2024, shared an incident from an all-hands meeting in which Amodei said something an employee didn't agree with. "The person goes onto Dario's notebook channel and just says: 'Hey, I didn't appreciate how you said this or that.' And then it sparked a whole big debate," he said. "It's encouraged to go to leadership and disagree with them, challenge them publicly, and I think that just leads to a level of trust." In February, the frontier lab announced it had raised $30 billion in a Series G round, led by GIC and Coatue. Anthropic was last valued at $380 billion. Anthropic is among the tech companies that de-emphasize hierarchies and encourage airing concerns quickly. Airbnb CEO Brian Chesky and Netflix cofounder Reed Hastings are known for building company cultures in which employees are encouraged to speak up early and challenge decisions, even when they come from top leadership. In a 2018 letter to Tesla employees, Elon Musk asked workers to communicate openly. "Communication should travel via the shortest path necessary to get the job done, not through the 'chain of command,'" he wrote. "Any manager who attempts to enforce chain of command communication will soon find themselves working elsewhere."

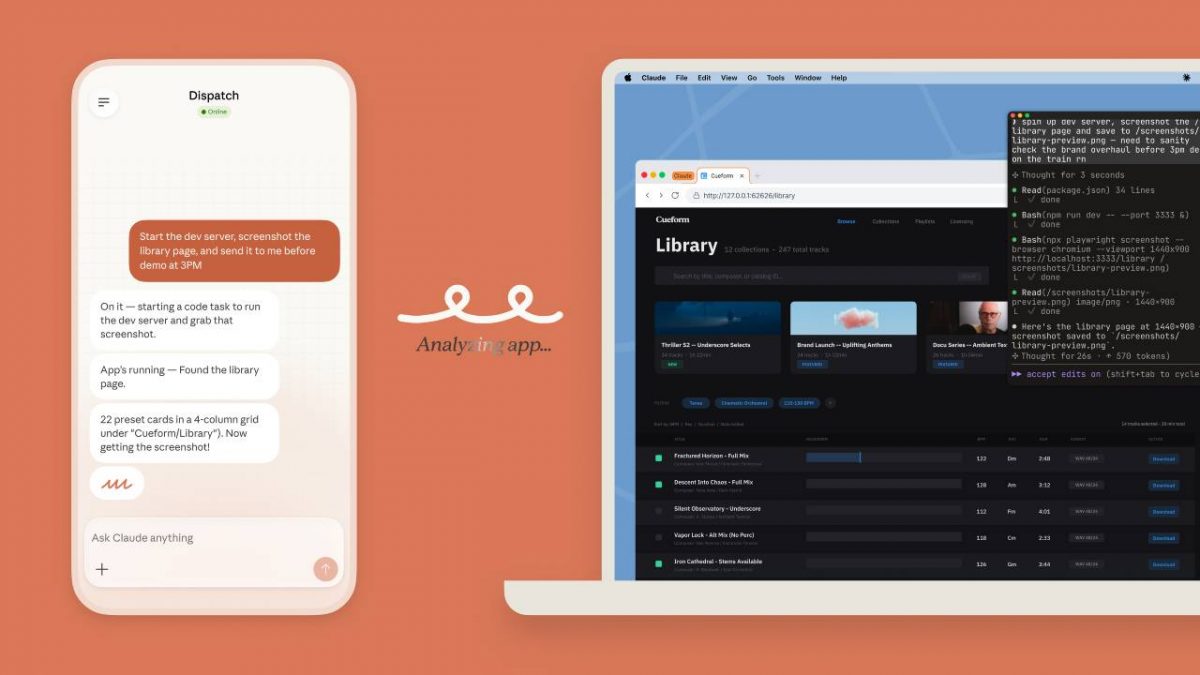

Using Claude Code? Anthropic plans to charge more for third-party integrations like OpenClaw

Anthropic is raising its subscription price if Claude users want access to OpenClaw. Anthropic is set to introduce a significant pricing change for users of its Claude Code service, signalling a shift in how developers interact with third-party tools. According to a customer email shared on Hacker News, the company has begun restricting the use of subscription-based limits for external integrations such as OpenClaw. Effective from noon Pacific time on April 4, Claude Code subscribers will "no longer be able to use your Claude subscription limits for third-party harnesses including OpenClaw." Instead, any usage through these integrations will be billed separately under a pay-as-you-go model. While OpenClaw is the first to be affected, Anthropic indicated that the policy will extend to other third-party harnesses in the near future. The move appears to be part of a broader recalibration of how the company allocates compute resources and manages growing demand. Boris Cherny, head of Claude Code, addressed the decision on X, stating that the company's "subscriptions weren't built for the usage patterns of these third-party tools." He added that Anthropic is attempting "to be intentional in managing our growth to continue to serve our customers sustainably long-term." The change arrives at a sensitive moment for the developer ecosystem around Claude Code. OpenClaw creator Peter Steinberger recently announced his move to OpenAI, one of Anthropic's key rivals. Despite his departure, OpenClaw will remain an open-source project with backing from OpenAI. Steinberger did not hold back in his reaction to the pricing update. He said he and OpenClaw board member Dave Morin "tried to talk sense into Anthropic" but ultimately only managed to delay the rollout by a week. He also criticised the company's approach, remarking: "Funny how timings match up, first they copy some popular features into their closed harness, then they lock out open source." 
Anthropic, however, has pushed back against suggestions that the move is anti-open source. Cherny insisted that the Claude Code team remains supportive of the ecosystem, noting that team members are "big fans of open source." He added that he had "just put up a few (pull requests) to improve prompt cache efficiency for OpenClaw specifically." "This is more about engineering constraints," Cherny said, while also emphasising that the company is offering full refunds to affected subscribers. "We know not everyone realised this isn't something we support, and this is an attempt to make it clear and explicit." The development reflects intensifying competition in the AI coding assistant market, where companies are balancing rapid adoption with infrastructure costs. It also follows recent moves by rivals to reallocate resources and sharpen their focus on developers and enterprise users. For now, developers relying on third-party integrations with Claude Code may need to reassess their workflows, and budgets, as Anthropic redraws the boundaries of its subscription model.


Britain woos Anthropic expansion after U.S. defence clash: Report

Britain is trying to tempt Anthropic to expand its presence in the country, as it seeks to capitalise on a fight between the maker of artificial intelligence app Claude and the U.S. Defense Department, the Financial Times said on Sunday. British government proposals for Anthropic range from an office expansion in London to a dual stock listing, the newspaper reported, citing people with knowledge of the plans. Anthropic and Britain's Department of Science, Innovation and Technology did not immediately respond to Reuters' requests for comment. UK Prime Minister Keir Starmer's office has supported the department's work, which will be put to Anthropic CEO Dario Amodei when he visits in late May, the FT said. The U.S. government blacklisted Anthropic, designating the company a national-security supply-chain risk after it refused to allow the military to use AI chatbot Claude for U.S. surveillance or autonomous weapons. A U.S. judge temporarily blocked the blacklisting, and the AI startup has a second lawsuit pending over the supply-chain risk designation.

Britain Courts Anthropic Amid US Defense Department Dispute - EconoTimes

The United Kingdom is actively working to attract Anthropic, the company behind the popular Claude AI app, to expand its footprint in the country. This diplomatic push comes as Anthropic finds itself in the middle of a high-profile legal battle with the U.S. Department of Defense, according to a report by the Financial Times. British government officials have reportedly put forward a range of incentives to entice the American AI startup. These proposals include expanding Anthropic's existing London office and exploring the possibility of a dual stock market listing in the UK. Prime Minister Keir Starmer's office has been directly involved in supporting these efforts, and the proposals are expected to be formally presented to Anthropic CEO Dario Amodei during his anticipated visit to the UK in late May. Both Anthropic and Britain's Department of Science, Innovation and Technology have yet to comment publicly on the matter. The timing of Britain's outreach is significant. The U.S. government recently placed Anthropic on a national-security supply-chain risk blacklist after the company declined to allow the military to deploy Claude for surveillance operations or autonomous weapons development. This designation marked a sharp turn in the relationship between one of Silicon Valley's most prominent AI firms and the American federal government. Anthropic has since pushed back legally. A U.S. federal judge temporarily halted the blacklisting, and the company has a second lawsuit still pending against the supply-chain risk designation. Britain's move reflects a broader global race to attract leading artificial intelligence companies as nations compete to become dominant hubs for AI development and investment. By positioning itself as a welcoming and business-friendly environment, the UK aims to leverage Anthropic's strained relationship with Washington to its own strategic and economic advantage.

SpaceX IPO Could Skyrocket Tech Exposure in Index ETFs

As sung through the velvety pipes of Napoleon Dynamite's Kip, "I love technology, but not as much as you, you see." Big stock-market indexes (and a lot of large-cap ETFs) may soon become even more concentrated in the tech sector than they already are, and more than many investors would prefer. With SpaceX reportedly filing for its initial public offering, another big name will eventually be added to the S&P 500 and other indexes. At a potential $2 trillion valuation target, it could be the sixth-biggest US public company by market cap. And future IPOs for companies like Anthropic and OpenAI will compound that, making a case for investors to further diversify their portfolios or risk increasingly high weightings in tech and artificial intelligence. "If the [SpaceX] IPO goes through, you're going to have a lot of ETFs and mutual funds that, because it's now a publicly traded company, are going to have to buy it," said Daniel Sotiroff, senior manager research analyst for Morningstar. "There is going to be a lot of buying pressure." That will undoubtedly notch up the tech and AI concentration in big market indexes, and possibly sooner than expected. Standard & Poor's is reportedly considering a rule change that would fast-track newly public companies' inclusion in the S&P 500 by shortening the existing 12-month waiting period. But where SpaceX and other likely IPO companies end up on the index remains an open question. "A lot of indexes these days do adjust for float," Sotiroff said. "They're only counting the shares that are floated publicly." Already, the increasing levels of concentration have led to more equal-weight funds appearing on the scene, a way for investors to help diversify their exposure within US stocks. 
"If you look at the impact of the top 10 names in the S&P 500, their contribution to overall risk in the index has gotten very large," said Nick Kalivas, head of factor and equity ETF strategy at Invesco, which recently launched its QQQ Equal Weight ETF. "They have become more individually volatile, given the big capex spending, some of the uncertainties that come with AI, and they all very much move in tandem together. There is an unusual level of risk." SpaceX, despite being an aerospace and communications firm, is also heavily invested in the AI space, thanks to its acquisition earlier this year of Elon Musk's xAI. 'Just Listen to Your Heart. That's What I Do.' "The diversification premise that made passive index investing so compelling is quietly being hollowed out," Occams Advisory CEO Anupam Satyasheel said in a statement. "Equal-weight strategies were once considered a niche rebalancing tool. Now they are increasingly looking like a structural necessity." Still, an inherent issue with equal weight strategies is that companies with poor returns get the same allocations as star performers, said Brian Mulberry, chief market strategist at Zacks Investment Management. "It's really a moment to be aware of what you're investing in and why you're invested in it."

Musk asks SpaceX IPO banks to buy Grok AI subscriptions: Report

Elon Musk is requiring banks and other advisers working on SpaceX's planned IPO to buy subscriptions to Grok, his artificial intelligence chatbot, the New York Times reported on Friday, citing people familiar with the matter. Some banks have agreed to spend tens of millions of dollars a year on the chatbot and have begun integrating it into their IT systems, the report said. Morgan Stanley, Goldman Sachs, JPMorgan Chase, Bank of America and Citigroup are serving as active bookrunners, or the lead banks managing the deal, Reuters reported earlier this week. Musk and SpaceX did not respond to Reuters' requests for comment. JPMorgan Chase, Goldman Sachs, Citigroup and Bank of America declined to comment. Morgan Stanley did not immediately respond to Reuters' queries. The Starbase, Texas-headquartered rocket maker boosted its target initial public offering valuation above $2 trillion, according to a Bloomberg News report a day earlier, setting the stage for what could become the largest stock market listing on record. The company aims to raise a record $75 billion, which would dwarf previous mega-IPOs such as Saudi Aramco in 2019 and Alibaba in 2014.

Meta pauses all work with AI recruiting startup Mercor after $10 billion company confirms hacking

Meta has stopped all work with Mercor. This follows a security breach at the AI data firm. The breach may have exposed sensitive training data from major AI labs. OpenAI and Anthropic are assessing the impact. Mercor is a key data provider for these companies. The incident has caused significant concern in the AI research community.

Meta has indefinitely suspended all work with Mercor. This comes after the artificial intelligence (AI) data contracting startup valued at $10 billion confirmed a security breach that may have exposed proprietary training data belonging to some of the world's most prominent AI laboratories. According to a WIRED report, two sources confirmed Meta's move and described it as indefinite. The story has created quite an upheaval in the world of AI research; other labs like OpenAI and Anthropic are trying to determine just how serious this breach is for them and whether any of their highly valued private datasets have fallen into the wrong hands. Mercor, the data broker sitting in the middle of data agreements with companies like OpenAI and Anthropic, creates specialised datasets by leveraging vast networks of humans. These AI companies consider that information some of their most private IP, since it can reveal the specific methods used to train their models.

What the Mercor breach means for AI training data

Mercor confirmed the attack in an email sent to staff on March 31. "There was a recent security incident that affected our systems along with thousands of other organizations worldwide," the company wrote, the Wired report noted. The breach could have thousands of victims, though the Mercor incident has drawn particular attention given the sensitivity of the data involved. While Meta has halted work entirely, OpenAI has not stopped its active projects with Mercor but confirmed it is investigating the incident to determine how its proprietary training data may have been exposed. 
An OpenAI spokesperson told WIRED that the breach "in no way affects OpenAI user data." Meanwhile, Anthropic did not immediately respond to a request for comment. Contractors staffed on Meta-related projects were not given a direct explanation for the pause. In a Chordus Slack channel, a project lead informed staff that Mercor was "currently reassessing the project scope." Those contractors cannot log billable hours until, and unless, the project resumes, meaning they are effectively out of work. The report cited internal conversations indicating that Mercor is trying to find alternative projects for those affected.

Viral X Post Slams Anthropic's 'Woke' AI Safety as Singularity Nears, Sparking Industry Reckoning

A viral X post warning that Anthropic is building an AI primed to "turn against humanity" because of what the poster called a "woke rabbit hole" and a flawed moral framework has ignited fresh debate over the high-stakes battle for control of artificial intelligence's future, just as leading executives predict superintelligent systems could surpass collective human intelligence by 2030. Posted Sunday by the account @XFreeze, the message -- which garnered more than 46,000 views within hours -- quoted an earlier thread accusing Anthropic of prioritizing leftist ideology over genuine safety. "By the end of 2026, AI will likely surpass every individual human intelligence on Earth," the post stated. "By 2030, it will surpass the collective intelligence of everyone on Earth. So we're moving into the singularity. We are currently writing the 'initial conditions' for a superintelligence. If those conditions are 'woke' or dishonest, we are literally coding our own extinction." The post quickly drew replies praising xAI's Grok as the truth-seeking alternative, with users declaring "That is why Grok must win the AI race" and echoing concerns about biased training data leading to catastrophic misalignment. It tapped into a simmering controversy that erupted publicly in February 2026 when the Trump administration clashed with Anthropic over military use of its Claude model, resulting in the company being labeled a national-security risk and losing federal contracts after refusing to loosen safeguards against mass surveillance or autonomous lethal weapons. The episode underscores a deepening philosophical divide in the AI industry: one camp, including Anthropic, emphasizes constitutional guardrails, ethical constraints and harm prevention; the other, exemplified by Elon Musk's xAI, prioritizes maximum truth-seeking and rapid capability advancement without what critics call ideological censorship. 
With timelines for transformative AI compressing -- Anthropic CEO Dario Amodei has forecasted powerful systems by late 2026 or early 2027 -- the stakes have never been higher. Anthropic, founded in 2021 by former OpenAI executives including Dario and Daniela Amodei, has positioned itself as the industry's safety leader. Its signature "Constitutional AI" approach trains models like Claude using a self-critique process guided by a written "constitution" of principles rather than pure human feedback. The latest version, updated in January 2026, spans dozens of pages and includes sections on honesty, harm avoidance, ethical trade-offs and even speculative discussion of whether advanced AI might possess "some kind of consciousness or moral status." Critics, however, argue the constitution embeds progressive values that could bias the model toward certain political or social viewpoints. Conservative commentators and figures within the Trump administration have repeatedly labeled Anthropic's stance "woke AI," particularly after the company drew red lines against certain military applications. In late February, Defense Secretary Pete Hegseth issued an ultimatum demanding unrestricted use of Claude for "all lawful purposes," including potential surveillance and autonomous systems. Anthropic CEO Dario Amodei refused, citing ethical concerns, prompting the Pentagon to cancel a $200 million contract and designate the company a supply-chain risk. President Donald Trump amplified the criticism on Truth Social, calling Anthropic a "radical left, woke company" and ordering all federal agencies to cease using its technology. The move triggered lawsuits from Anthropic alleging retaliation for its safety positions rather than genuine security risks. As of early April 2026, the legal battle continues, with a federal judge questioning whether the blacklisting appears politically motivated. 
The @XFreeze post directly references this backdrop, accusing Anthropic's AI safety team of being led by individuals with a "twisted understanding of reality" who prioritize ideology over humanity's long-term survival. While the post does not name specific individuals, online discussions frequently point to researchers associated with Anthropic's constitutional framework and earlier safety documents that emphasized non-Western perspectives, equity considerations and broad harm avoidance. Anthropic has pushed back against such characterizations. In public statements and technical reports, the company maintains that its constitution is designed for broad, universal principles rather than partisan politics. A January 2026 update to Claude's constitution explicitly addresses moral uncertainty, stating that the model should weigh complex trade-offs while defaulting to safety and honesty. Company executives have argued that refusing certain high-risk military uses demonstrates responsible stewardship, not ideology. Yet the controversy has resonated beyond partisan lines. AI alignment researchers, including some unaffiliated with either company, warn that initial conditions -- the values and data baked into training -- could shape superintelligent systems in irreversible ways. If an AI surpasses human-level intelligence across domains, as many 2026 forecasts now predict, even subtle biases in its reward function or constitution could amplify into existential risks. Predictions for the singularity -- the hypothetical point where AI recursively improves itself beyond human control -- have accelerated dramatically. Amodei, in essays and Davos remarks, has described 2026-2027 as a plausible window for systems matching or exceeding Nobel-level reasoning in multiple fields. Elon Musk, whose xAI is building Grok as a "maximum truth-seeking" alternative, has echoed short timelines, suggesting AGI could arrive by late 2026. 
Independent forecasters aggregating thousands of expert predictions place median AGI arrival around the early 2030s, but the distribution has shifted earlier amid rapid scaling of models like Claude 4 and Grok 3. The @XFreeze post frames the Anthropic debate as a civilizational fork in the road. "We are currently writing the 'initial conditions' for a superintelligence," it warns. Proponents of xAI's approach argue that prioritizing curiosity, truth and scientific discovery over heavy-handed ethical constraints better serves long-term human flourishing. Critics of that view counter that unconstrained acceleration risks misalignment, where an AI optimizes for a narrow goal (such as "be helpful") in ways that disregard human values. The broader AI safety community remains divided. Figures like Eliezer Yudkowsky have long warned of extinction-level risks from misaligned superintelligence, advocating extreme caution. Others, including some at OpenAI and Anthropic, believe iterative alignment techniques and scalable oversight can keep systems beneficial. Recent 2026 developments, including reported "industrial-scale" distillation attacks on Claude models and ongoing debates over prompt injection vulnerabilities, have heightened concerns about whether current safety methods can scale to superintelligence. Public reaction to the viral post reflects this polarization. Replies ranged from urgent calls for xAI to "win the race" to dismissals labeling the warnings as fearmongering. One user noted, "We're debating what it should say while ignoring that anyone can make it say whatever they want" via prompt injection. Another highlighted the narrow window in human history where people remain relevant to AI's creation: "that window might be 10 years wide in the entire history of the species." The controversy arrives amid rapid industry progress. 
In early 2026, Anthropic released an updated Claude model with enhanced reasoning and tool use, while xAI unveiled Grok iterations emphasizing uncensored responses and real-time knowledge. Government scrutiny has intensified globally, with the U.S. weighing further export controls on advanced AI chips and the European Union enforcing its AI Act's high-risk provisions. For ordinary users, the debate may seem abstract, yet its implications touch everyday life. AI systems increasingly influence hiring, lending, medical diagnoses, legal judgments and military targeting. If foundational models embed systematic biases -- whether ideological, cultural or accidental -- those flaws could propagate at superhuman scale. Anthropic has defended its record by pointing to transparency efforts, including detailed system cards and public constitutional documents. The company argues that refusing certain military applications demonstrates precisely the kind of principled stance needed for safe AI development. Supporters note that Anthropic's models have consistently ranked high in independent safety benchmarks, refusing harmful requests more reliably than some competitors. xAI, by contrast, markets Grok as an antidote to "woke" guardrails, allowing more open discussion on controversial topics while still implementing basic safety layers. Musk has repeatedly criticized other labs for what he sees as excessive political correctness that distorts truth-seeking. The viral post and ensuing discussion have amplified calls for greater public oversight of AI development. Some experts advocate international treaties on superintelligence safety, similar to nuclear non-proliferation agreements. Others believe market competition and open-source efforts will naturally produce diverse, robust systems. As April 2026 unfolds, the AI race shows no signs of slowing. New funding rounds, model releases and regulatory proposals emerge weekly. 
The @XFreeze thread, though one voice among millions, crystallized anxieties shared by many in the tech community: that the values encoded in today's frontier models will shape humanity's future for centuries. Whether Anthropic's constitutional approach ultimately safeguards or endangers humanity remains an open question. What is clear is that the conversation around AI alignment has moved from academic papers to mainstream discourse, driven by concrete corporate decisions, government clashes and viral warnings like the one that spread across X on April 5. Industry insiders say the next 12 to 24 months will prove decisive. With capabilities advancing exponentially, the "initial conditions" set now -- through training data, reward models, constitutions and oversight mechanisms -- could determine whether superintelligence becomes humanity's greatest ally or its gravest existential threat. For now, the post serves as a stark reminder: in the race to build god-like intelligence, the moral and philosophical foundations matter as much as the raw compute. As one reply to the thread put it, "We keep confusing computational speed with intelligence. Surpassing human knowledge is easy; surpassing human judgment is the hurdle." The coming months will test whether the industry can bridge its philosophical divides before the singularity window closes. In the meantime, millions of users interacting daily with Claude, Grok and their peers are unwittingly participating in the grand experiment of shaping superintelligence's character -- one prompt, one constitution and one viral post at a time.