News & Updates

The latest news and updates from companies in the WLTH portfolio.

Polymarket Promo Code COVERS: Get a $20 Deposit Bonus on Reds vs Giants Prediction Markets in California

Get a $20 bonus with Polymarket promo code COVERS for Giants vs Reds predictions. Claim your trading bonus today!

The San Francisco Giants face elimination as they look to avoid a sweep against the Cincinnati Reds on Thursday afternoon. New users can capitalize on this exciting matchup with the Polymarket promo code COVERS, which unlocks a $20 trading bonus on April 16. This prediction market platform ranks among the best prediction market apps for trading on sports outcomes.

The Polymarket promo code COVERS provides new users with a $20 deposit match bonus. To qualify, you must deposit $20 into your account, and Polymarket will credit an additional $20 in trading funds. This offer applies to mobile app users in most U.S. states where the platform operates legally.

For the Giants vs Reds finale, you could trade on various outcomes, such as whether San Francisco avoids the sweep or if Cincinnati's rookie sensation Sal Stewart continues his home run streak. If you trade $10 on the Giants winning and they pull off the upset, your potential returns depend on the market odds at the time of your trade. Conversely, if the Reds complete the sweep as expected, trades on Cincinnati would yield smaller returns due to a higher probability.

Key terms include a minimum $20 deposit requirement, eligibility for new users only, and bonus funds that must be used within 30 days. The platform requires identity verification and restricts access to users under 18. Compare this offer with other best prediction market promos to maximize your trading potential.

Follow these steps to secure your $20 bonus for trading on the Giants vs Reds series finale:

SpaceX fires up world's biggest rocket ahead of crucial flight

SpaceX has completed an important test of what CEO Elon Musk describes as "the most powerful object ever made". The company fired up its Starship megarocket at its Starbase facility in southern Texas on Wednesday, ahead of what will be a landmark flight next month. The static fire of the Super Heavy rocket, which came a day after a similar test of the smaller upper stage rocket, saw its 33 engines light up while the spacecraft remained tethered to the launchpad. When stacked together, the Starship rocket measures 124 metres tall and is capable of carrying more than 100 tons to low Earth orbit, according to Mr Musk. The rocket is crucial to Nasa's plans to return astronauts to the Moon as part of its Artemis program, with SpaceX contracted to develop a lunar lander alongside Jeff Bezos's Blue Origin. The US space agency completed a lunar flyby earlier this month, which saw four astronauts travel to the Moon last week for the first time in more than 50 years. The first crewed mission to the surface of the Moon is expected to take place in late 2028 as part of Artemis IV, though it will depend on the readiness of Starship and Blue Origin's Blue Moon. Nasa has already been forced to push back its lunar ambitions due to delays with Starship's Human Landing System (HLS), with the mission originally scheduled for December 2025. Ahead of the last Starship flight test in October, safety advisers for the US space agency said that fundamental challenges remain with Starship's HLS. Members of the Aerospace Safety Advisory Panel said the next six months of Starship launches will likely determine whether HLS is capable of flying a crew before the end of the decade. Speaking at a Senate Committee hearing in September, former Nasa chief Jim Bridenstine said Starship delays meant the US was likely to fall behind China in the race to the Moon. 
"Our complicated architecture requires a dozen or more launches in a short time frame, relies on very challenging technologies that have yet to be developed like cryogenic in-space refueling, and still needs to be human rated," he said. "Unless something changes, it is highly unlikely the United States will beat China's projected timeline to the Moon's surface." No date has been set for the next flight test, which will be the 12th suborbital mission for Starship, though Mr Musk indicated on 3 April that it was "4 to 6 weeks away".

Maputo endures third day of fuel chaos amid long queues, pedestrians with jerrycans and closed petrol stations

Maputo is experiencing its third day of chaos across several streets, with widespread queues of motorists trying to refuel, as most petrol stations remain closed and others operate under a reinforced police presence. During a tour conducted by Lusa this morning, armed police were seen on Avenida 24 de Julho in central Maputo attempting to organise access for people carrying jerrycans at one of the few stations with fuel. The measure follows altercations between customers recorded since Wednesday at some stations, in disputes over access to fuel, with reports and complaints of staff allegedly demanding payments to bypass queues, further increasing tension at these locations. The majority of petrol stations in Maputo remain closed without fuel, some already for the third day, while others have queues that are congesting traffic across the capital, with purchases of petrol and diesel limited to the equivalent of 1,000 meticais (13.2 euros). At the stations, alongside hundreds of people on foot carrying jerrycans and empty bottles, and dozens of vehicles, there are also people selling used bottles and containers to those trying to secure small amounts of fuel, with waiting times of several hours and no guarantee of success. The situation, which has worsened in Maputo over the past three days, is also beginning to be seen in other parts of the country, according to reports from several provinces. In response to this crisis, which is already affecting activity in the country, the Mozambican Ministry of Mineral Resources and Energy has announced the approval of "exceptional and immediate measures" to ensure the supply of liquid fuels nationwide, guaranteeing rapid restocking of stations and availability of fuel to the public.
"The decision follows constraints observed in the distribution process, despite fuel imports continuing to be carried out regularly," linked to the effects of the conflict in the Middle East, according to a statement from the National Directorate of Hydrocarbons and Fuels (DNHC). According to the Mozambican Government, in order to ensure the immediate normalisation of the situation, the ministry has authorised, "on an exceptional and urgent basis", retail operators to purchase petroleum products from any licensed distributor with available stock, regardless of existing contractual arrangements. This measure, according to the Government, will enable rapid restocking of petrol stations, which in recent days have recorded queues stretching hundreds of metres, widespread congestion, and closures due to lack of petrol or diesel. "The measure aims to ensure that all fuel stations have fuel available for sale to the public and will remain in force until all distribution operators recover the conditions to resume normal distribution operations," it states. In this context, the DNHC calls for calm and discourages hoarding, the creation of household fuel reserves, and refuelling beyond what is strictly necessary. The Mozambican Government acknowledged on Tuesday "pressure" on fuel stations, where huge queues have formed, at least in Maputo, amid fears of stock shortages and price increases due to the conflict in the Middle East. "Indeed, we have been monitoring some pressure at petrol stations. The available information is that there is still stock. I cannot here provide a message on how many days or weeks, but this is an issue under daily monitoring at government level," said Minister Salim Valá, spokesperson for the weekly Council of Ministers meeting held in Maputo.
"The new prices will have to come," said the President of the Republic on Tuesday, attributing the fuel situation to the war in the Middle East, which is affecting Mozambique, a country that depends on shipments through the Strait of Hormuz, blocked by Iran and now by the United States of America, for 80% of its imports. "As long as the war continues, we will not be able to continue stretching the rope [on current prices, still without increases] for much longer," said Daniel Chapo.

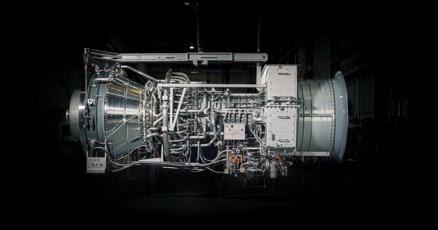

PROENERGY To Deliver Major Generating Equipment To Power Crusoe AI Factories

The PROENERGY PE6000 aeroderivative engine generates 50 MW of fast-start power on demand and operates on multiple fuels, making it a versatile choice for any fast-start application.

650-MW Power Generation Equipment Solution Includes 13x PE6000 Units Delivered by Summer 2027

SEDALIA, Mo., April 16, 2026 /PRNewswire/ -- PROENERGY announced today a contract to deliver a manufactured equipment solution for Crusoe, a vertically integrated AI infrastructure provider. The solution features 13 PE6000 aeroderivative gas turbine generator sets, each capable of generating 50 MW. Crusoe will install the equipment to power upcoming hyperscale datacenter projects.

"At Crusoe, we are committed to leading the industry with an energy-first approach to AI infrastructure. The acquisition of these 13 PE6000 units is a strategic move to rapidly provide the energy capacity our hyperscale projects require to operate at peak performance," says John Adams, Crusoe Senior Vice President, Power Infrastructure. "We value partners like PROENERGY who match our pace and share our passion for reliability and speed."

Like all PROENERGY solutions, equipment for Crusoe's hyperscale AI factories will be test-fit and quality-checked at the Sedalia manufacturing facility to arrive onsite ready to install.

"Our equipment is made in-house for world-class reliability no matter the application," says Jeff Canon, PROENERGY President and CEO. "We appreciate that Crusoe trusts PROENERGY to deliver a solution on time and as promised."

About PROENERGY

PROENERGY is an engineering, R&D, and manufacturing powerhouse. The company addresses every need for fast-start power generation: turbine and package manufacturing, turnkey project execution, power purchase agreements, and asset lifecycle care for turbines and plants. Where others see impossible energy challenges, PROENERGY provides innovative aeroderivative solutions. For more on PROENERGY, visit www.proenergyservices.com.

View original content to download multimedia: https://www.prnewswire.com/news-releases/proenergy-to-deliver-major-generating-equipment-to-power-crusoe-ai-factories-302743533.html

As Healthcare Costs Soar, Employers Search for Solutions -- Including Unconventional Ones

KANSAS CITY, Mo.--(BUSINESS WIRE)--Apr 16, 2026-- A striking new data point from the Lockton 2026 National Benefits Survey signals just how far employers are willing to go to manage rising healthcare costs: 46% of self-funded plan sponsors say they would consider international drug sourcing for pharmacy benefits -- an approach that remains complex and may expose plan sponsors to legal and compliance risks if not properly designed and administered, although the risk is unclear based on the lack of regulatory enforcement.

ChatGPT maker OpenAI shifts its focus to business users amid Anthropic pressure

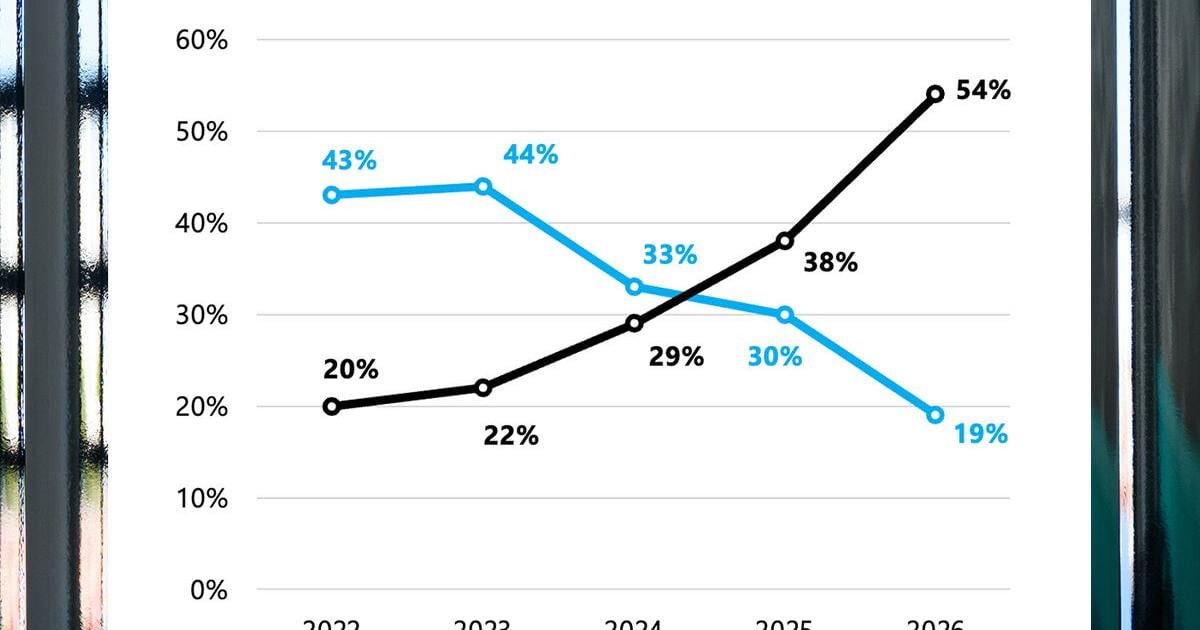

The same ChatGPT chatbot that gave OpenAI's chief financial officer Sarah Friar a tilapia recipe for a recent Sunday night dinner at home is also now doing her most mundane tasks at work like summarizing her emails and Slack messages. Friar and other company executives are banking OpenAI's future on more of the latter as it shifts its focus to business-oriented products while shedding some of its consumer offerings as a pathway to profitability. OpenAI says it will introduce a new artificial intelligence model for "high-value professional work" as the company faces heightened competition with rival Anthropic in attracting corporate customers to adopt AI assistants in their workplaces. "You'll see a new model coming from us in short order. We feel very excited about it," Friar said in an interview with The Associated Press. OpenAI boasts of more than 900 million weekly users of its core ChatGPT product, and Friar said about 95% of them "don't pay anything" for the popular chatbot. But while all those interactions build habits and reliance, they also strain the costly computing resources needed to power the company's AI systems and highlight the need for big business customers to help pay the bills. OpenAI, valued at $852 billion, and Anthropic, valued at $380 billion, both lose more money than they make, putting the privately-owned San Francisco-based AI research laboratories in a fierce competition to generate more revenue as they race toward becoming publicly traded on Wall Street. A push to improve performance and sales of OpenAI's business-oriented products -- already Anthropic's bread and butter -- has driven OpenAI to abandon some consumer initiatives, like the AI video generator app Sora. "I think it was a little heartbreaking, but we're like, OK, it's not the main event right now," Friar said. "We need to make sure that our new model that's coming has enough compute."
Codenamed Spud, OpenAI says its "smartest model yet" offers "stronger reasoning, better understanding of intent and dependencies, better follow-through and more reliable output in production." It's part of OpenAI's answer to Anthropic's new Claude Mythos, which Anthropic claims is so "strikingly capable" that it is limiting its use to select customers because of its apparent ability to surpass human cybersecurity experts in finding or exploiting computer vulnerabilities. Friar, the former CEO of neighborhood social platform Nextdoor, said business customers accounted for about 20% of OpenAI's revenue when she was hired in 2024 as chief financial officer. She said it's now 40% and expected to account for half of OpenAI's sales by the end of the year. It's a sharp turnaround from late last year, when OpenAI co-founder and CEO Sam Altman was promoting a now-shuttered Sora partnership with Disney, launching a plan to sell ads on ChatGPT and floating the idea of letting ChatGPT engage in erotica with paid adult users. Altman said on the "Mostly Human" podcast earlier this month that a sharper focus was needed -- and Friar agrees. "Tech companies, when they're growing, it's just this natural thing that happens. There's so many cool things you could do," she said, adding that companies can end up doing "really badly" if they do too many things, while "great companies are very good at, in a reasonable period of time, kind of doing that winnowing down and refocusing and it's super painful." Signaling that shift was the hiring three months ago of Slack CEO Denise Dresser to be OpenAI's first chief revenue officer. Dresser said in a recent AP interview that she has been laser-focused on meeting with corporate leaders and positioning OpenAI as the go-to platform for workplaces employing AI agents to automate a variety of computer-based job tasks. "It's really clear to me that companies are past the experimentation phase and they're into using AI to do real work," Dresser said. 
"Leaders at companies are recognizing that AI is probably the most consequential shift of their lifetime." But those leaders also have a choice, namely Anthropic's Claude that has become widely used by software professionals. Founded in 2021 by a group of ex-OpenAI leaders who said they wanted to prioritize AI safety, Anthropic has positioned itself as the more responsible AI vendor. The distinction drew attention when President Donald Trump's administration punished the startup after a contract dispute over AI use in the military, and Altman used the opportunity to cement OpenAI's own deal with the Pentagon. Consumer interest in Anthropic surged and the company said its annualized revenues hit $30 billion, a higher number than what OpenAI has reported, though they measure it differently. Friar and Dresser declined to reveal OpenAI's latest sales but both have suggested that Anthropic's number is inflated because it doesn't account for revenue it must share with cloud computing providers Amazon and Google. Even so, it remains a tight competition that's also tied to the health of the stock market and the future of the economy. "They're likely quite close," said Luke Emberson, a researcher at nonprofit institute Epoch AI. "Certainly the trends show Anthropic is growing much faster than OpenAI. If that continues, they're likely to cross soon." The urgency led Dresser to send a memo to OpenAI employees on Sunday, first reported by The Verge, that asserted that Anthropic's coding focus "gave them an early wedge" but expressing confidence that OpenAI has the "real structural advantage" as AI usage expands beyond software developers and OpenAI builds enough computing capacity to operate its AI systems. "Their story is built on fear, restriction, and the idea that a small group of elites should control AI," Dresser's memo said of Anthropic. 
"Our positive message will win over time: build powerful systems, put in the right safeguards, expand access, and help people do more." But for skeptics of the financial viability of AI products like ChatGPT and Claude, the trajectory of both money-losing companies is alarming as smaller startups increasingly become dependent on their AI tools. Anthropic has already imposed rate limits on heavy users, forcing some to wait for hours to use Claude, and both companies have set up service tiers that reward premium payers, said author and AI critic Ed Zitron. "It's what I call the subprime AI crisis," Zitron said. "People built their lives and they built their businesses on top of these companies that, as they try and save money, will start turning the screws." One thing that both AI leaders and critics agree on is that it is an expensive technology, though whether it is worth the cost in electricity-hungry AI computers remains to be seen. "People will say, well, 'Once they go public, they're safe.' That's not true," Zitron said. "Public companies can and will die, especially ones that are dependent on $100 billion to $200 billion every year or so, just to keep breathing."

Anthropic Brings AI Safety Fellowship With Rs 3,00,000 Weekly Stipend, All Details Here

The programme offers a chance to work on some of the most critical problems in artificial intelligence, including model safety, AI security and interpretability. It is aimed at early-career researchers, technically skilled students and engineers. Selected fellows will work closely with Anthropic researchers and are expected to produce publishable research outputs during the programme.

Adobe AI Launch: A Smarter Way to Design with Anthropic Claude

Adobe introduces a smarter design workflow powered by Claude, redefining creativity with advanced AI tools that streamline content creation and boost productivity for designers. Adobe has taken a massive step forward in the world of digital design with its latest Adobe AI launch. The company recently released an advanced digital helper designed to simplify photo editing, video editing, and graphic design. The new tool is called the Adobe Firefly AI assistant. It acts like a smart companion, following direct instructions from users to complete complex tasks automatically. One of the most exciting parts of this release is the Anthropic Claude integration, which allows users to bring the power of the Claude AI model into their creative workflow. Whether you are a professional filmmaker or a hobbyist photographer, you can use these advanced systems to handle the repetitive tasks of a project. Instead of spending hours on tiny details, creators can now ask the Adobe AI assistant to manage them, leaving more time for original ideas and storytelling. The primary goal of these Adobe creative tools AI enhancements is to make high-quality editing accessible and safe for everyone. Adobe ensures that the content generated is suitable for commercial use, giving businesses peace of mind. While the company has not yet shared the specific costs for these features, the tools are expected to work through a credit system. As technology continues to change how we work, this move positions Adobe at the forefront of the industry. By blending human imagination with powerful automation, the brand is making it easier than ever to turn a vision into a reality. For those following current affairs in technology, this partnership represents a major shift in how we interact with software every day.

ChatGPT maker OpenAI shifts its focus to business users amid Anthropic pressure

By MATT O'BRIEN, AP Technology Writer

The same ChatGPT chatbot that gave OpenAI's chief financial officer Sarah Friar a tilapia recipe for a recent Sunday night dinner at home is also now doing her most mundane tasks at work like summarizing her emails and Slack messages. Friar and other company executives are banking OpenAI's future on more of the latter as it shifts its focus to business-oriented products while shedding some of its consumer offerings as a pathway to profitability.

Starlink outage disrupted Navy drones, raising Pentagon concerns over SpaceX reliance

Last August, US Navy officials carrying out a test of unmanned vessels realized they had hit a single point of failure: Starlink. A global outage across Elon Musk's satellite network, affecting millions of Starlink users, left two dozen unmanned surface vessels bobbing off the California coast, disrupting communications and halting operations for almost an hour. The incident, which involved drones intended to bolster US military options in a conflict with China, was one of several Navy test disruptions linked to SpaceX's Starlink that left operators unable to connect with autonomous boats, according to internal Navy documents reviewed by Reuters and a person familiar with the matter. As SpaceX rockets toward a $2 trillion public offering this summer - expected to be the largest ever - the company has secured its position as the world's most valuable space company in part by being indispensable to the US government with an array of technologies spanning satellite communications to space launches and military AI. Starlink, in particular, has proved key to crucial programs - from drones to missile tracking - with a low-earth orbit constellation of close to 10,000 satellites, a scale that provides the military with a network resilient against potential adversary attacks. But the Navy's mishaps with Starlink for its autonomous drone program, which have not been previously reported, highlight the challenges of the US military's growing reliance on SpaceX and the risks it brings to the Pentagon. "If there was no Starlink, the US government wouldn't have access to a global constellation of low earth orbit communications," said Clayton Swope, a deputy director of the Aerospace Security Project at the Center for Strategic and International Studies. The Pentagon did not respond to questions about the drone test or SpaceX's work with the Navy. 
The Pentagon's chief information officer, Kirsten Davies, said the "Department leverages multiple, robust, resilient systems for its broad network." The Navy and SpaceX did not respond to requests for comment. Despite growing competition from Amazon.com, which announced an $11.6 billion agreement this week to acquire satellite maker Globalstar, SpaceX remains far ahead in low-earth orbit communications. Beyond drones, SpaceX has cemented a near-monopoly for space launches and provides satellite communications with Starlink and its national security-focused constellation, Starshield, generating billions of dollars for the company. Last month, US Space Force said it had reassigned its upcoming GPS launch to a SpaceX rocket for the fourth time, due to a glitch in the Vulcan rocket made by the Boeing and Lockheed Martin joint venture United Launch Alliance.

Warning about reliance on SpaceX

Democratic lawmakers have warned the Pentagon about the risks of its reliance on a single company led by the world's richest man to deliver crucial national security capabilities. More recently, the Defense Department's disagreements with and blacklisting of AI startup Anthropic quickly revealed how an overreliance on one AI vendor could create problems should that vendor be dropped. Reuters reported last year that Musk unexpectedly switched off Starlink access to Ukrainian troops as they sought to retake territory from Russia, denting allies' trust in the billionaire. In Taiwan, SpaceX faced criticism over concerns it was withholding satellite communications to US service members based there, "possibly in breach of SpaceX's contractual obligations with the US government," according to a 2024 letter sent by then-US Representative Mike Gallagher to Musk, reported by Forbes at the time. SpaceX disputed the claim in a post on X. Reuters could not determine whether SpaceX has since provided Starlink service in Taiwan to US service members. 
The Pentagon and SpaceX did not respond to questions about Taiwan. "As a matter of operational security, we do not comment on or discuss plans, operations capabilities or effects," an official said in a statement.

Starlink "exposed limitations"

SpaceX's Starlink broadband has been crucial to the Pentagon's drone program, providing connection to small unmanned maritime vessels that look like speedboats without seats, and include those made by Maryland-based BlackSea and Austin, Texas-based Saronic. In April 2025, during a series of Navy tests in California involving unmanned boats and flying drones, officials reported that Starlink struggled to provide a solid network connection due to the high data usage needed to control multiple systems, according to a Navy safety report of the tests reviewed by Reuters. "Starlink reliance exposed limitations under multiple-vehicle load," the report stated. The report also faulted issues linked to radios provided by Silvus and a network system provided by Viasat. In the weeks leading up to the global Starlink outage in August, another series of Navy tests was disrupted by intermittent connection issues with the Starlink network, Navy documents reviewed by Reuters show. The causes of the network losses were not immediately clear. Despite the setbacks, the upside of Starlink - a cheap and commercially available service - outweighs the risk of a potential outage disrupting future military operations, said Bryan Clark, an autonomous warfare expert at the Hudson Institute. "You accept those vulnerabilities because of the benefits you get from the ubiquity it provides," he said.

A Polymarket trader made $300,000 betting on Biden's pardons, a new analysis shows

In the final hours of President Biden's term, a Polymarket trader made around $300,000 correctly betting on Biden's last-minute pardons, according to new data provided to NPR by an analytics firm that examines cryptocurrency transactions. As Biden issued a wave of pardons just hours before leaving the White House, the Polymarket trader bet big on four names, with the odds of those pardons occurring rapidly dropping to near zero on the prediction market site. The trader, whose identity is not publicly known, placed around $64,000 worth of bets that Biden would issue pre-emptive pardons for Jim Biden, the former president's brother, former Rep. Liz Cheney, Sen. Adam Schiff and former Rep. Adam Kinzinger -- all prominent critics of President Trump. While none were ever charged with crimes, all four were given pardons to shield them against possible prosecution in Trump's second term. A month earlier, the same bettor placed a well-timed wager on Polymarket that Biden's son, Hunter, would receive a pardon over gun and tax charges. Together, those five bets netted the trader $316,346 in profits, according to an analysis by the Paris-based analytics company Bubblemaps, which shared its findings exclusively with NPR. "The odds of this happening by random chance are virtually zero," said Columbia Law School's Joshua Mitts, who advises the Department of Justice on insider trading cases. "The trader could've been a White House insider," he said. "But the trader could have possessed the information without being an insider," said Mitts, who published a paper last month estimating that $143 million in profits have been earned on Polymarket by bettors with insider information. The trades linked to Biden's pardons show that individuals could have been profiting from confidential government information before President Trump returned to office, when prescient bets related to federal policy and military strikes on sites like Polymarket started to draw intense scrutiny. 
Polymarket did not return a request for comment.

Sleuthing crypto transactions linked to Biden pardons

To piece together the suspicious trades related to Biden's pardons, Bubblemaps' forensic investigators looked at Polymarket trades using pattern-matching artificial intelligence software. They discovered two accounts had a perfect track record of betting on Biden pardons. "We looked at all the accounts trading on this one market and looked at their mutual transactions," Nick Vaiman, Bubblemaps' founder, told NPR in an interview. "And we found a connection between two accounts, which was a shared deposit wallet." That means the analysts determined that both accounts were sending profits from Polymarket bets to the same cryptocurrency wallet on the site Kraken, a U.S.-based crypto exchange. "Exchanges like Kraken don't offer information on individual accounts," Vaiman said. "We've tried desperately, but they don't give up this information easily." Kraken has "know-your-customer" rules similar to a bank, requiring its customers to verify their identities before using the exchange. Yet it remains difficult to publicly identify a customer based on a crypto wallet alone. Federal prosecutors often find that crypto wallet-holders engaging in insider trading do so through other people or shell companies, said Mitts. "If the government subpoenas, and gets data back showing some entity did the trading that has no connections to the White House, that's where the trail runs cold." And even when a wallet on a cryptocurrency blockchain is connected to a person, a legal case of insider trading is far from open-shut, Mitts added. "If it was misappropriation of information, the problems for prosecutors begin there," he said. "Who was misappropriating? Could you prove it? Could you prove the conditions under which they got the information?" 
Biden pardons trades follow a string of other suspected insider traders profiting

Prediction markets, like Polymarket and its main rival, Kalshi, have flourished in Trump's second term. The administration has embraced an industry once considered a pariah in Washington over fears that the markets could be ripe for abuse and manipulation. The investment firm Bernstein projects that in the next four years, prediction markets could become a $1 trillion industry, and the Trump family is getting in on the action. Donald Trump Jr., the president's son, is an adviser to both Kalshi and Polymarket. As millions of people turn White House announcements and geopolitical episodes into opportunities to make money, there have been a series of controversies over suspected insider trading. Hours before Venezuelan leader Nicolás Maduro was toppled by U.S. forces in January, a Polymarket trader placed a bet that netted $400,000. Likewise, an account trading under the username "Magamyman" made more than $500,000 after wagering on Polymarket that Iran's Ayatollah Ali Khamenei would soon no longer lead Iran. Not long after the bet was placed, an Israeli strike killed him. Most recently, a group of new accounts on Polymarket raked in hundreds of thousands of dollars in profits by betting that the U.S. and Iran would reach a ceasefire before the agreement had been unveiled. U.S. prosecutors have not announced any investigations or charges over potential insider trading. In Israel, authorities arrested several people in connection to a case alleging that military reservists traded on Polymarket using classified military intelligence. The largest U.S. prediction market, Kalshi, is regulated by the Commodity Futures Trading Commission, a status that is being contested in court by dozens of states. 
The CFTC prohibits betting on markets related to war, assassinations and terrorism, but otherwise has overseen the industry with a light touch, in contrast to the Biden administration, which prohibited most types of "event contracts" except those related to things that had clear public value, like the weather, oil futures and grain prices. Polymarket, too, has been welcomed by the Trump administration. Under Biden, the Federal Bureau of Investigation raided the home of Polymarket's CEO and forced the site to wind down in the U.S. It continues to operate mostly as an overseas exchange, with its largest site accessible by Americans only via virtual private network. Unlike Kalshi, Polymarket uses cryptocurrency, making it easier for traders to remain anonymous. But Trump's law enforcement agencies have taken a more hands-off approach to Polymarket's most controversial markets on everything from conditions in Gaza to war to the next nuclear detonation. "These markets are problematic," said Nizan Packin, a law professor at Baruch College. "If we want to offer this type of gambling about geopolitics and elections, we need to do this in a proper way, which means guardrails that we have created after we studied the consequences and the problems. This is not something that was done," said Packin, who co-authored a new paper in the journal Science examining the risks of online prediction markets. "Without clear regulation, and clearer and stricter enforcement, the gray zone becomes larger and more questions should and will be asked," she said.

ChatGPT maker OpenAI shifts its focus to business users amid Anthropic pressure

The same ChatGPT chatbot that gave OpenAI's chief financial officer Sarah Friar a tilapia recipe for a recent Sunday night dinner at home is also now doing her most mundane tasks at work like summarizing her emails and Slack messages. Friar and other company executives are banking OpenAI's future on more of the latter as it shifts its focus to business-oriented products while shedding some of its consumer offerings as a pathway to profitability. OpenAI says it will introduce a new artificial intelligence model for "high-value professional work" as the company faces heightened competition with rival Anthropic in attracting corporate customers to adopt AI assistants in their workplaces. "You'll see a new model coming from us in short order. We feel very excited about it," Friar said in an interview with The Associated Press. OpenAI boasts of more than 900 million weekly users of its core ChatGPT product, and Friar said about 95% of them "don't pay anything" for the popular chatbot. But while all those interactions build habits and reliance, they also strain the costly computing resources needed to power the company's AI systems and highlight the need for big business customers to help pay the bills. OpenAI, valued at $852 billion, and Anthropic, valued at $380 billion, both lose more money than they make, putting the privately-owned San Francisco-based AI research laboratories in a fierce competition to generate more revenue as they race toward becoming publicly traded on Wall Street. A push to improve performance and sales of OpenAI's business-oriented products -- already Anthropic's bread and butter -- has driven OpenAI to abandon some consumer initiatives, like the AI video generator app Sora. "I think it was a little heartbreaking, but we're like, OK, it's not the main event right now," Friar said. "We need to make sure that our new model that's coming has enough compute." 
Codenamed Spud, OpenAI says its "smartest model yet" offers "stronger reasoning, better understanding of intent and dependencies, better follow-through and more reliable output in production." It's part of OpenAI's answer to Anthropic's new Claude Mythos, which Anthropic claims is so "strikingly capable" that it is limiting its use to select customers because of its apparent ability to surpass human cybersecurity experts in finding or exploiting computer vulnerabilities. Friar, the former CEO of neighborhood social platform Nextdoor, said business customers accounted for about 20% of OpenAI's revenue when she was hired in 2024 as chief financial officer. She said it's now 40% and expected to account for half of OpenAI's sales by the end of the year. It's a sharp turnaround from late last year, when OpenAI co-founder and CEO Sam Altman was promoting a now-shuttered Sora partnership with Disney, launching a plan to sell ads on ChatGPT and floating the idea of letting ChatGPT engage in erotica with paid adult users. Altman said on the "Mostly Human" podcast earlier this month that a sharper focus was needed -- and Friar agrees. "Tech companies, when they're growing, it's just this natural thing that happens. There's so many cool things you could do," she said, adding that companies can end up doing "really badly" if they do too many things, while "great companies are very good at, in a reasonable period of time, kind of doing that winnowing down and refocusing and it's super painful." Signaling that shift was the hiring three months ago of Slack CEO Denise Dresser to be OpenAI's first chief revenue officer. Dresser said in a recent AP interview that she has been laser-focused on meeting with corporate leaders and positioning OpenAI as the go-to platform for workplaces employing AI agents to automate a variety of computer-based job tasks. "It's really clear to me that companies are past the experimentation phase and they're into using AI to do real work," Dresser said. 
"Leaders at companies are recognizing that AI is probably the most consequential shift of their lifetime." But those leaders also have a choice, namely Anthropic's Claude, which has become widely used by software professionals. Founded in 2021 by a group of ex-OpenAI leaders who said they wanted to prioritize AI safety, Anthropic has positioned itself as the more responsible AI vendor. The distinction drew attention when President Donald Trump's administration punished the startup after a contract dispute over AI use in the military, and Altman used the opportunity to cement OpenAI's own deal with the Pentagon. Consumer interest in Anthropic surged and the company said its annualized revenues hit $30 billion, a higher number than what OpenAI has reported, though they measure it differently. Friar and Dresser declined to reveal OpenAI's latest sales but both have suggested that Anthropic's number is inflated because it doesn't account for revenue it must share with cloud computing providers Amazon and Google. Even so, it remains a tight competition that's also tied to the health of the stock market and the future of the economy. "They're likely quite close," said Luke Emberson, a researcher at nonprofit institute Epoch AI. "Certainly the trends show Anthropic is growing much faster than OpenAI. If that continues, they're likely to cross soon." The urgency led Dresser to send a memo to OpenAI employees on Sunday, first reported by The Verge, that asserted that Anthropic's coding focus "gave them an early wedge" but expressed confidence that OpenAI has the "real structural advantage" as AI usage expands beyond software developers and OpenAI builds enough computing capacity to operate its AI systems. "Their story is built on fear, restriction, and the idea that a small group of elites should control AI," Dresser's memo said of Anthropic. 
"Our positive message will win over time: build powerful systems, put in the right safeguards, expand access, and help people do more." But for skeptics of the financial viability of AI products like ChatGPT and Claude, the trajectory of both money-losing companies is alarming as smaller startups increasingly become dependent on their AI tools. Anthropic has already imposed rate limits on heavy users, forcing some to wait for hours to use Claude, and both companies have set up service tiers that reward premium payers, said author and AI critic Ed Zitron. "It's what I call the subprime AI crisis," Zitron said. "People built their lives and they built their businesses on top of these companies that, as they try and save money, will start turning the screws." One thing that both AI leaders and critics agree on is that it is an expensive technology, though whether it is worth the cost in electricity-hungry AI computers remains to be seen. "People will say, well, 'Once they go public, they're safe.' That's not true," Zitron said. "Public companies can and will die, especially ones that are dependent on $100 billion to $200 billion every year or so, just to keep breathing." (Copyright (c) 2026 The Associated Press. All Rights Reserved. This material may not be published, broadcast, rewritten, or redistributed.)

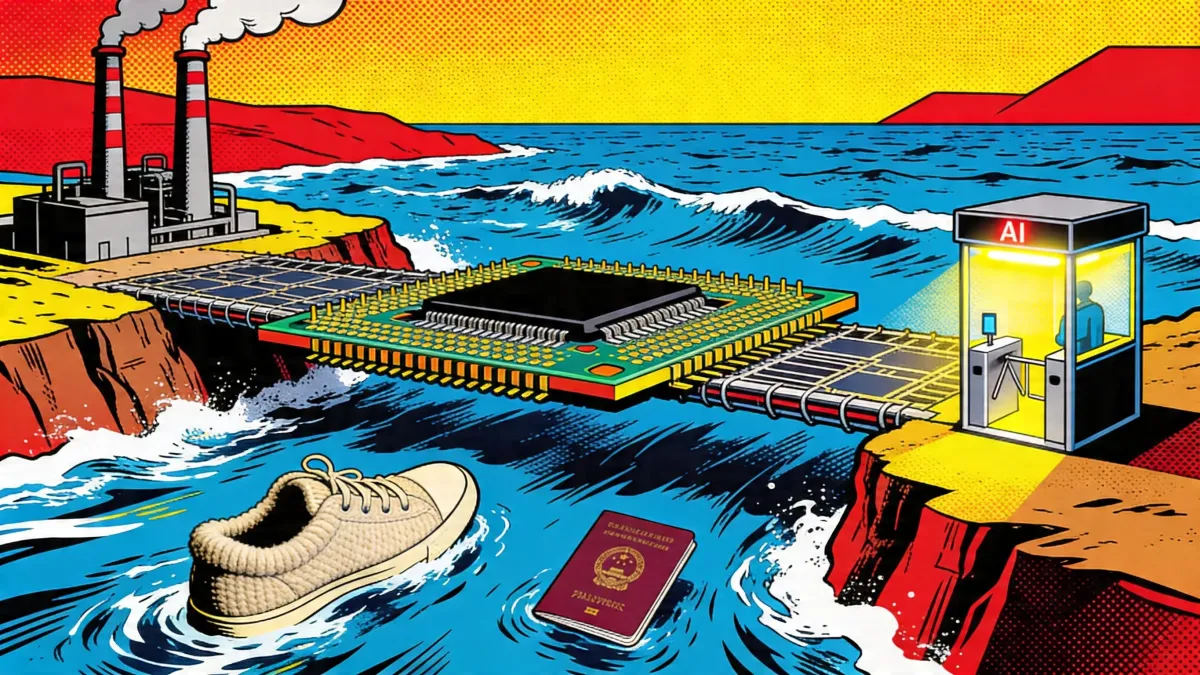

TSMC AI Capex; Allbirds AI Pivot; Anthropic ID Checks

TSMC pushes capex toward $56B as AI demand holds through Iran war supply risks. Allbirds pivots to AI. Anthropic adds passport checks.

TSMC told the market it will spend toward $56 billion this year because AI customers keep buying capacity even as the Iran war drags LNG, helium and hydrogen into the chip supply story. Gross margin hit 66.2%. Revenue guidance moved higher. The confidence is real, and so is the narrowing bridge underneath it. Allbirds announced it will sell its shoe brand for $39 million and rename the public shell NewBird AI. The stock jumped 700%. The investor, the operator and the incoming COO remain unnamed. Anthropic, the company that gained a million sign-ups a day by refusing a Pentagon surveillance contract, started asking some Claude users for a passport and a selfie.

TSMC raised 2026 revenue guidance above 30% growth and pushed capital spending toward the high end of its $52 billion to $56 billion range. The signal underneath the earnings beat is harder: LNG, helium, and hydrogen are now part of the quarterly call. The numbers would look strong in any cycle. First-quarter revenue hit $35.9 billion. Gross margin reached 66.2%, above guidance. High-performance computing, which includes AI, accounted for 61% of revenue. Chips at 7 nanometers and below made up 74% of wafer sales. That makes TSMC less like a supplier riding the AI cycle and more like the bridge the cycle must cross. The company is choosing to build more capacity while a shooting war exposes how fragile the inputs behind that capacity can be. Management said Taiwan had enough LNG through at least May and that specialty gases come from multiple suppliers. That is the right answer for one quarter. It is not the same as saying the risk has gone away.

Allbirds is selling its shoe brand for $39 million and renaming the public shell NewBird AI to chase the compute infrastructure market. The stock exploded. The people behind the new venture have not been identified. 
An unnamed institutional investor is providing up to $50 million in senior secured convertible notes at 12% interest. The investor gets conversion rights, a blocked control account for lease payments, and the right to appoint a COO. The AI infrastructure counterparty is also unnamed. AI compute is a real market. CoreWeave, Lambda, Oracle, and the hyperscalers are fighting over it. The question is whether NewBird brings experienced operators or a ticker symbol attached to the right buzzword.

Anthropic started requiring government photo ID and live selfies for some Claude users through third-party vendor Persona Identities. The move comes two months after Claude drew a surge in privacy-conscious users during Anthropic's Pentagon standoff. The company says verification applies to selected capabilities, platform integrity reviews, and safety or compliance measures. Anthropic says it does not store the images, though Persona holds them, and Anthropic can access records when needed. Discord used a similar vendor last year. That vendor got breached. Seventy thousand government ID photos leaked. Users came to Claude because Anthropic refused surveillance. 
Now the company that earned their trust is asking for the oldest surveillance token: their papers.

How to Search, Summarize, and Write Across All Your Tabs with Dia Browser

Dia is an AI-native web browser from the team behind Arc. The address bar doubles as an AI chat, so typing a question returns a direct answer instead of a list of links. Open any page and ask the sidebar to summarize it, compare it with another tab, or draft a reply based on what you are reading. Built-in Skills handle writing and coding tasks without copy-pasting into a separate tool. Free to use on Mac with an optional Pro tier for heavy AI usage.

You matched three days ago. The conversation started strong, died after six messages, and now you are staring at a thread that ended on "haha yeah totally." You do not want to send "hey" again.

That bakery near you -- Saturday morning? I will buy the sourdough, you tell me what is actually good there.

Dating threads die when both people wait for the other to escalate. The prompt skips the small talk loop by forcing a specific callback to something she said, plus a plan she can say yes or no to. One message, one decision. Claude is better at tone; it avoids sounding desperate or overproduced. ChatGPT tends to add emoji and exclamation marks you will want to delete. Paste the full thread for context, not just the last message.

Google's parent company holds roughly 5% of SpaceX, a position that could be worth $100 billion if the rocket company reaches its projected $2 trillion IPO valuation. Alphabet's stake has slipped from 6.11% at the end of 2025 as SpaceX has raised additional capital.

China's imports of semiconductor equipment from Singapore rose 17% to $5.7 billion in 2025 while imports from Malaysia more than doubled to $3.4 billion. Direct imports from the United States fell 34% to roughly $2 billion, marking a supply chain realignment around US export controls.
Amazon Web Services and Microsoft are championing a $90 billion data center buildout in Spain's Aragón region, now Europe's fastest-growing AI infrastructure hub. Residents are pushing back over water use, environmental impact, and rural transformation.

Hackers are using stolen biometric data and virtual camera software distributed through Telegram to defeat bank KYC facial recognition during remote account onboarding. MIT Technology Review traced the operations to fraud centers in Cambodia exploiting lax verification practices.

Alibaba's newly formed Token Hub unit has released Happy Oyster, an AI world model that generates photorealistic 3D environments, interactive videos, and game assets. The system simulates real-world physics to create immersive content with minimal manual input.

India produces over 1.5 million computer science graduates a year, but AI-powered coding tools have eroded the traditional advantage of scale in IT talent. Infosys and other major firms are overhauling recruitment to prioritize AI literacy and new programming skills.

Martin Casado, who leads Andreessen Horowitz's AI investment team, told the Financial Times that recent AI breakthroughs represent a once-in-a-century shift comparable to steam power. He warned that institutions and workforce adaptation are not keeping pace with the technology.

YouTube rolled out a feature allowing users to set the Shorts feed limit to zero, effectively removing Shorts from iOS and Android apps. The update replaces the previous minimum of 15 minutes and gives users full control to opt out of short-form video.

Voice actors across multiple countries are organizing to fight AI-generated dubbing as Hollywood studios accelerate adoption of synthetic voice tools for non-English markets. Unions warn that automated dubbing threatens jobs and erases cultural depth in international storytelling.
A growing field of interpretability research aims to explain how neural networks arrive at decisions, using techniques such as feature attribution and mechanistic analysis. The work is gaining urgency as AI systems enter healthcare, finance, and defense.

Synera deploys AI agents that run complex engineering workflows autonomously, and NASA, BMW, and Airbus already let them. 🏭

Founders: Dr. Moritz Maier, Sebastian Möller-Lafore, and Daniel Siegel started the company in 2018 in Bremen, Germany, originally under the name ELISE. Maier holds a PhD in engineering and spent years watching product development teams waste weeks on tasks that should take minutes. The company expanded to Boston through Germany's federal accelerator program and employs an estimated 50-plus people.

Product: Synera's platform connects to more than 80 computer-aided design and engineering tools, then orchestrates AI agents that handle design, simulation, and optimization across the full product lifecycle. The company calls it "JARVIS for engineers." Everything runs on-premises, so proprietary engineering data never leaves a customer's infrastructure. At BMW, the system extended robot operating life while cutting carbon dioxide emissions by 60%. At Hyundai, it delivered 47% weight reduction and compressed development time by 80%. Annual recurring revenue doubled in 2025, with 60% of new sales coming from the AI offering.

Competition: Autodesk, PTC, Siemens, and Dassault Systèmes all embed AI into their platforms but focus on single-tool automation rather than cross-tool orchestration. Altair, SimScale, and ESI Group compete in simulation. Synera's edge is tool-agnostic integration: it sits on top of existing infrastructure instead of replacing it, which removes the rip-and-replace barrier that stalls most enterprise AI deployments.

Financing 💰: $40 million Series B led by Revaia, with Capgemini, UVC Partners, BMW iVentures, Cherry Ventures, and Spark Capital participating. Total raised stands at $58.1 million.

Future ⭐⭐⭐⭐: Frost & Sullivan handed the company a 2025 Global Transformational Innovation Leadership award. The customer list reads like a defense contractor's dream, and the doubled ARR suggests pull rather than push. The risk: those 80 tool integrations become 80 maintenance burdens as vendors ship their own agents. 🏭

Anthropic began asking some Claude users to verify their identity with a government photo ID and a live selfie, using third-party vendor Persona Identities. The company says verification applies to "selected capabilities, platform integrity reviews, and safety or compliance measures." Accepted documents include passports and driver's licenses. The requirement arrives weeks after Claude's privacy-driven user surge hit one million sign-ups a day, triggered by CEO Dario Amodei's decision to reject a Pentagon surveillance contract. Daily active users tripled since the start of 2026.

💡 February: Anthropic rejects the Pentagon over surveillance concerns.
💡 March: a million people a day sign up because they trust the company that said no to surveillance.
💡 April: Anthropic asks those same users for a passport photo and a live selfie, routed through a third-party vendor.

Discord used a similar vendor last year. That vendor got breached. Seventy thousand government ID photos leaked. Anthropic says it will not store the images, which is reassuring right up until you remember that Discord said the same thing. The privacy company gained its privacy-conscious users by being the privacy company. It is now asking them to hold their driver's license up to a webcam. The Pentagon did not get its data. Persona Identities did. 🤷‍♀️

Anthropic London Office Expands as OpenAI Secures Hub

Anthropic said Thursday it is taking London office space for up to 800 people, according to CNBC. Three days earlier, OpenAI said it had secured its first permanent London office, a 544-seat site due to open in 2027, according to Reuters coverage carried by RTE. Two leases, one message: frontier AI still needs a door badge. Anthropic already has more than 200 people in the capital. OpenAI says London will become its largest research hub outside the U.S. That is an odd fact for companies selling automation. Rooms still matter. The timing makes London look less like a satellite office and more like disputed ground. Anthropic chose the Knowledge Quarter, near Google DeepMind, Meta, Synthesia and Wayve. OpenAI picked Regent Quarter in King's Cross, spanning Jahn Court and the Brassworks Building. Different leases. Same neighborhood logic. That is the tell. Anthropic framed the move as room to grow into a city that already matters to its research and commercial work. Pip White, the company's head of EMEA north, said the U.K. combines enterprises that understand AI safety with a deep AI talent pool. OpenAI's London site lead, Phoebe Thacker, used similar language, saying the U.K. has "an incredible depth of talent" and a strong record in AI. Both companies sound confident. Both also sound defensive. London gives them proximity to DeepMind alumni, U.K. policymakers, banks, universities and a regulatory class that wants to be courted. It also lets each lab tell customers it has people nearby, not just a sales deck and a data-processing agreement. You can sell frontier AI from San Francisco. But when bank executives, regulators and government departments are anxious, a local office changes the room. For Anthropic, that matters because its Mythos model and Project Glasswing have pushed cybersecurity anxiety into boardrooms. For OpenAI, it matters because the company paused its Stargate U.K. data center plan after citing energy costs and regulatory uncertainty. 
One company is expanding after a Pentagon feud. The other is adding people after pulling back on infrastructure. The London leases sit inside a wider office race. OpenAI's first stops were London, Dublin and Tokyo. After that came Paris, Brussels, Singapore, Munich and Seoul, plus a fast-building India plan. Anthropic's list now sprawls across Europe and Asia-Pacific. London and Dublin are already old news for the company. After that, the trail jumps by region and by sales need. The non-U.S. office map looks like this: The table needs one caveat. Companies use office language loosely. OpenAI calls New Delhi an "existing presence" and says Mumbai and Bengaluru are planned for later in 2026, not already open. Anthropic said in November that Paris and Munich were plans, while also saying the company then had offices in 12 cities. For readers, the useful split is opened, announced and planned. OpenAI's London move landed days after the company paused Stargate U.K., a data center project tied to Nvidia and Nscale. The Next Web reported that the pause reflected high British industrial electricity prices and unsettled AI copyright rules. The office lease does not fix either problem. It does something else. It preserves OpenAI's claim on British AI talent and policy influence while the infrastructure math remains ugly. Hiring researchers, policy staff and enterprise teams in King's Cross costs far less than committing to thousands of Nvidia GPUs in a power-constrained market. In a country trying to sell itself as an AI hub, that is a careful compromise. Anthropic has the cleaner story this week. Its office expansion follows a reported U.K. campaign to lure the company after its clash with the U.S. government over military use of Claude. It also follows Anthropic's European expansion, where the company said EMEA run-rate revenue had grown more than ninefold over the prior year and large business accounts in the region had grown more than tenfold. 
Those numbers make an 800-person London space less surprising. Enterprise AI is walking into local procurement rooms now. Nervous ones. OpenAI and Anthropic are not opening offices for symbolism alone. The practical target is closer access to engineers, enterprise buyers and governments that can make their products easier or harder to sell. Anthropic said Sydney would become its fourth Asia-Pacific office, alongside Tokyo, Bengaluru and Seoul. OpenAI said India had more than 100 million weekly ChatGPT users in February and laid out plans for Mumbai and Bengaluru offices alongside its New Delhi presence. These moves follow demand. They also shape it. The absence matters too. A company can announce a country strategy without owning much physical capacity there. It can sign a data-center memorandum without breaking ground. It can call a local presence an office, then hire slowly. The map above is therefore a hiring ledger, not a victory chart. London now has the loudest entry. OpenAI has the permanent lease. Anthropic has the larger stated capacity. What neither has yet is proof that more desks in King's Cross and the Knowledge Quarter can turn British AI ambition into durable power.

As Healthcare Costs Soar, Employers Search for Solutions -- Including Unconventional Ones

KANSAS CITY, Mo. -- A striking new data point from the Lockton 2026 National Benefits Survey signals just how far employers are willing to go to manage rising healthcare costs: 46% of self-funded plan sponsors say they would consider international drug sourcing for pharmacy benefits -- an approach that remains complex and may expose plan sponsors to legal and compliance risks if not properly designed and administered, though the extent of that risk is unclear given the lack of regulatory enforcement.

ChatGPT maker OpenAI shifts its focus to business users amid Anthropic pressure